Shuran Song

@SongShuran

Followers: 8,507

Following: 470

Media: 31

Statuses: 246

Assistant Professor @Stanford University working on #Robotics #AI #ComputerVision

California

Joined July 2016

Check out UMI! 3 things I learned in this project:

1. Wrist-mount cameras can be sufficient for challenging manipulation tasks with the right hardware design.

2. Cross-embodiment policy is possible with the right policy interface.

3. BC can generalize if the data is right.

Can we collect robot data without any robots?

Introducing Universal Manipulation Interface (UMI)

An open-source $400 system from @Stanford designed to democratize robot data collection

0 teleop -> autonomously wash dishes (precise), toss (dynamic), and fold clothes (bimanual)

45

356

2K

3

31

246

More robots do not always lead to higher productivity if they don’t collaborate ;) Check out our latest work in #CORL2020.

Despite being trained on static tasks with 1-4 arms, the system generalizes to 5-10 arms with dynamic targets

w/ Huy Ha, Jingxi Xu

4

45

217

UMI was named an Outstanding System Paper finalist at #RSS2024. Congratulations, team!! 🥳

Hope to see more UMI running around the world 😊 !

6

8

198

DextAIRity: Deformable Manipulation Can be a Breeze! #RSS2022

A different way to manipulate objects using controlled airflow that reaches beyond contact 🤖

w. @Zhenjia_Xu, @chichengcc, @Ben_Burchfiel, @eacousineau, Siyuan Feng @CAIR_lab + @ToyotaResearch 🦾

5

54

194

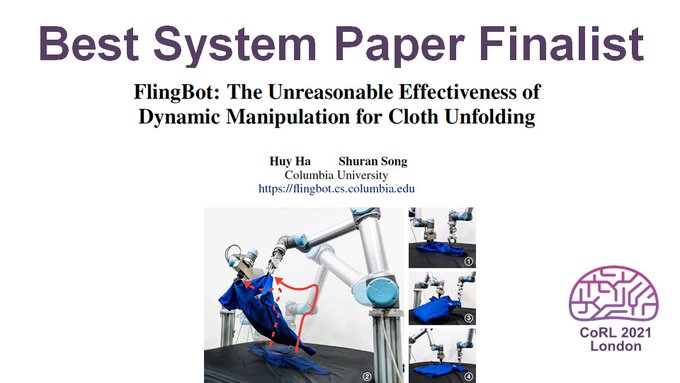

Congratulations to Huy for winning the best system paper at CORL @corl_conf. It was so much fun building FlingBot and seeing the system work :)

9

8

178

Honored to be selected as a Sloan Fellow. I am grateful to my mentors, collaborators, and most importantly my awesome students!! Thank you all!

The @SloanFoundation picked five @Columbia scientists for research fellowships this year. Congrats @jcolinhill, @GKaragiorgi, @KaczaLab, @SongShuran + @henryquantum! @CUSEAS @ColumbiaQuantum @ColumbiaCompSci @NevisLabs

1

6

40

25

3

170

Diffusion Policy for robots!

The most impressive thing to me is how fast we can deploy a new skill with this framework -- and we just keep adding more and more.

Cheng has made the framework really easy to use, so you can try it out too. Colab & Github:

0

23

162

Universal Manipulation Policy Network – a single policy learns to manipulate a diverse set of articulated objects (e.g., fridge, laptop, or drawers) regardless of their joint types or # links.

w. @zhenjia @zhanpeng_he

Things we learned 🧵⬇️1/n

3

32

163

Honored to be a Microsoft Research Faculty Fellow!

5

0

148

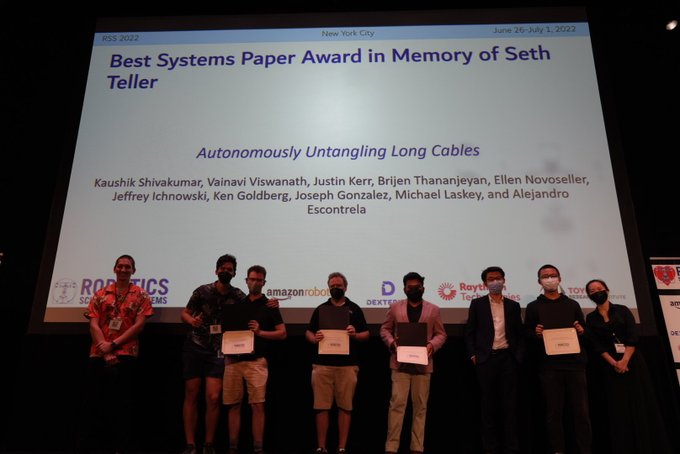

Congratulations to @chichengcc for winning the Best Paper Award and @Zhenjia_Xu for being a Best Systems Paper Finalist at #RSS2022!! 🥳🎉

Big congrats to the winners (and the finalists!) of the #RSS2022 awards:

- Best paper award:

- Best systems paper award:

- Best student paper award:

4

18

100

11

8

143

Excited to receive the NSF CAREER award! I'm grateful to all my students @CAIRLab, mentors, and collaborators for making this possible 😊

and thank you, Holly and Bernadette, for writing this nice article that summarizes our research. 🤖

Congrats to our @ColumbiaCompSci Prof Shuran Song @SongShuran, who's won an @NSF CAREER award to enable #Robots to learn on their own and adapt to new environments. @ColumbiaScience @Columbia

3

5

41

10

13

148

Embodiment is such a critical component of Embodied Intelligence but often gets overlooked.

Can robots learn to generate different embodiments (i.e., hardware designs) for different tasks that drastically simplify perception, planning, and control?

Check it out ⬇️

Can we automate task-specific mechanical design without task-specific training?

Introducing Dynamics-Guided Diffusion Model for Robot Manipulator Design, a data-driven framework for generating manipulator geometry designs for given manipulation tasks.

w. Huy Ha, @SongShuran

7

46

257

1

14

140

Dynamic manipulation turns out to be so much more effective for cloth unfolding! Check out FlingBot -- unfold your shirt in 3 steps! 😉

Code for both simulation & real robots is available!

#CORL2021

w/ Huy Ha

6

20

140

Real2Code -- translating real-world articulated objects to sim using code generation! With the code representation, this method scales well wrt the number of object parts, check out the 10-drawer table it reconstructed 😉

1

17

138

Don't want to collect hundreds of demonstrations for every object and scenario? Check out EquiBot from @yjy0625, which leverages equivariance in diffusion policy to make it sample-efficient and generalizable!

0

17

132

By plugging a $5 contact microphone 🎤 into UMI, we can now "hear" 👂 all the critical contact events during manipulation and "feel" ☝️ the subtle differences on the contact surface.

Check out @Liu_Zeyi_'s new work on ManiWav: Manipulation from In-the-Wild Audio-Visual Data!

1

18

123

Struggling with your 2D visual predictive models that keep losing track of objects? Time to try out this 3D dynamic scene representation (DSR) at #CORL2020.

w. zhenjia_xu @zhanpeng_he @jiajunwu_cs

1

23

105

One of the common questions I get about UMI is how to apply it to mobile robots, especially when we don't have a precise IK solver. Check out UMI-on-legs!

With a manipulation-centric whole-body controller, we can put any UMI skills on a legged robot🐕

Video:

0

8

99

This robot is having a lot of fun!

Check out @ruoshi_liu's PaperBot, a robot that learns to design, fold, and throw a paper airplane 😊✈️, and many other things!

1

9

87

The tastiest robot demo 🤩 !!

Introducing Mobile ALOHA 🏄 -- Hardware!

A low-cost, open-source, mobile manipulator.

One of the most high-effort projects in my past 5yrs! Not possible without co-lead @zipengfu and @chelseabfinn.

At the end, what's better than cooking yourself a meal with the 🤖🧑🍳

237

1K

5K

1

9

83

Position control can only go so far. For contact-rich tasks, robots must master both position and force – that’s where compliance comes in!

But what’s the right compliance? 🤔 Hint: being always compliant in all directions won’t cut it.

Check out @YifanHou2’s solution 😉⤵️

1

8

76

Thank you, Deepak! I'm honored to be in such great company :)

1

2

66

What if your robot hand suddenly lost a finger? 🤕🤖

Wouldn’t it be great if the same policy could still be effective?

Check out "Get-Zero": by representing the embodiment as a directed graph, a single trained policy can generalize across new designs without retraining 🪄

0

8

65

UMI's pretrained weights are released.

We have tested the policy on three different robots: UR5, Franka, and ARX. Time to try it on your robot!!

Buy any "espresso cup with saucer" on Amazon, and it should work -- or let @chichengcc know if it doesn't 😉

1

3

61

Amazing work on collaborative cooking. The interaction between humans and robots is so natural and smooth. See the subtle things, like how the robot pauses and waits for the human to pour soup. Very impressive!

1

4

55

Check out @chichengcc's step-by-step tutorial on building the UMI gripper. We really hope to see more UMIs running in the wild. 😊

We made a step-by-step video tutorial for building the UMI gripper! Please leave comments on @YouTube if you have any questions

9

25

190

0

3

49

One grasping policy for many (and new!) grippers. The code is available. Try it out, and let us know if your favorite gripper is missing!

w. Zhenjia, Beichun, @submagr

3

4

49

#RSS2022 is happening next week @Columbia! @chichengcc and @Zhenjia_Xu are presenting Iterative Residual Policy and DextAIRity

Join us for a tour of our lab on Thursday! Our robots are getting dressed for demos 😜

0

6

43

@haqhuy’s new project:

*Scaling up* robot data collection using LLMs for ✅ task decomposition ✅ reward formulation

*Distill down* into visuomotor policies that ✅ operate from raw sensory input ✅ improve over time.

Check out the engaging Q&A here 😉

0

6

45

Also, I forgot to mention: UMI is always evolving! If you're adding new sensors or making hardware tweaks, please share them as well! 🙌

Even when the data is not directly transferable to the current UMI, it can still power pretraining or other creative applications. 🚀

1

2

42

Just as for us humans, failures are inevitable for robots, and it is important to "REFLECT" on them!

Check out @Liu_Zeyi_ and @ArpitBahety's new project on failure reasoning for robots.

The new dataset (RoboFail) and code are out too!

2

5

38

dense 3D tracking for deformables 👗

1

3

25

TRI's effort on Scaling up Diffusion Policies!

Hats off to @ToyotaResearch for exciting results with “Large Behavior Models” and Diffusion Policies:

2

19

136

0

0

26

sweet demo 🍬🍭🥳

@andyzengtweets

0

0

24

#CVPR2022 We are looking for volunteers from the CVPR community (graduate students, university faculty, and researchers) to help us organize *in-person* outreach events!

1

3

21

It is a Hollywood-level demo. So cool!

Excited to share our latest progress on legged manipulation with humanoids. We created a VR interface to remote control the Draco-3 robot 🤖, which cooks ramen for hungry graduate students at night. We can't wait for the day it will help us at home in the real world!

#humanoid

7

67

322

0

1

19

4/5 It is amazing teamwork from 5(!) different universities!! Thank you all @Zhenjia_Xu, Zhou Xian, @Xingyu2017, @chichengcc, @huang_zhiao, @gan_chuang

1

1

15

Also, check out @yihuai's documentation on how to run the UMI-on-Legs system on physical hardware!

@haqhuy

We spent a lot of effort on the documentation and hope that people can easily reproduce our work (including hardware!). We discussed our hardware choices and how we fixed all kinds of hardware problems so you don't have to. Please check it out!

1

0

14

0

0

15

The deadline for #RSS2022 Workshops & Tutorials is approaching (Feb 18)! Remember to submit your proposal. 🤖

0

2

14

Looking forward to it 🤖

TOMORROW: Spring 2022 GRASP SFI: Shuran Song (@SongShuran), Columbia University, “The Reasonable Effectiveness of Dynamic Manipulation for Deformable Objects” 3/16 @ 3:00 - 4:00pm - Levine 512 & Zoom. See you there!

0

4

26

0

0

13

Grasping dynamic and moving objects, the #IROS2021 talk is today! w. Iretiayo Akinola, @xu_jingxi, and Peter Allen

0

1

11

Code:

Paper:

Website:

#RSS2022 w. @chichengcc, @Ben_Burchfiel, @eacousineau, Siyuan Feng @CAIRLab + @ToyotaResearch

0

0

10

nice summary of ManiWav!

0

0

10

It was great having you here! The talk was great, learned a lot!! 😊

I had the pleasure of speaking at @Columbia’s vision seminar, kindly hosted by @SongShuran, @sy_gadre.

My talk focused on using Transformer explainability algorithms to improve performance of downstream tasks (e.g. image editing).

Check it out :)

0

2

33

1

0

8

and environments beyond BusyBoard, like kitchens in AI2 THOR Home. (3/n)

code & paper:

w/ @Liu_Zeyi_ @Zhenjia_Xu #CoRL 2022

0

1

4

@animesh_garg

@UofTRobotics

Love your gif, gonna steal it for my next talk :)) Thank you for inviting me, it was really fun!!

0

0

4

@shahdhruv_

@chris_j_paxton

Thank you @shahdhruv_ @chris_j_paxton! @Zhenjia_Xu is really the magician who made all the magic happen 🥷

0

0

3

@danfei_xu

@kevin_zakka

@andyzengtweets

@photoneo

Some of the images are indeed grey-scale images. Photoneo doesn't have a color camera 🙃

0

0

2

Remember to submit your workshop and tutorial proposals to 🤖 @RSS_Foundation 2022. Less than a week left ⌛️

The deadline for #RSS2022 Workshops & Tutorials is approaching (Feb 18)! Remember to submit your proposal. 🤖

0

2

14

0

0

1

@MahnaSakshay

@crazy_sanguine

@zhenjia

@zhanpeng_he

The policy does need to take the position into account (PositionNet). Most of the time it learns to apply force away from the joint axis, but not "furthest".

1

0

1