Yasuo Yamasaki

@yasuoyamasaki

Followers: 331

Following: 1,446

Media: 1,050

Statuses: 15,142


@motto_ishikawa

Yamazaki Pan does something like this and doesn't run a single "we support the recovery" ad. Way too cool. Once things settle down, I'm buying Yamazaki bread every day.


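Training data for the Matsuo Lab language model (10B-SFT):

(Pretraining data) ... 600B tokens in total

Japanese C4 (830GB publicly available)

The Pile (825GB publicly available)

(Supervised fine-tuning data)

Alpaca (English)

Alpaca (Japanese translation) → the link goes to Shimizu-san's GitHub repository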


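A Google DeepMind paper on MoE. As an alternative to the existing Sparse MoE approach, it proposes Soft MoE, which reworks the router (the model that assigns work to each expert model). It keeps scaling even when the number of experts is increased to 4,096. Is DeepMind heading in this direction too? What happened to the scaling laws?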
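To make the "soft" routing concrete, here is a minimal pure-Python sketch of one Soft MoE layer, written from my understanding of the paper's formulation: each slot's input is a softmax-weighted average over all tokens (dispatch), and each output token is a softmax-weighted average over all slot outputs (combine). The function names, the `phi` slot-parameter layout, and the toy experts are my own illustrative assumptions; the paper's normalization and batching are omitted.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def soft_moe_layer(tokens, phi, experts, slots_per_expert):
    """One Soft MoE layer (illustrative sketch: no normalization, no batching).

    tokens: m x d list of token vectors
    phi: d x S slot parameters, with S = len(experts) * slots_per_expert
    experts: list of functions mapping a d-vector to an output vector
    """
    m, d = len(tokens), len(tokens[0])
    num_slots = len(phi[0])
    assert num_slots == len(experts) * slots_per_expert
    # logits[i][j]: affinity between token i and slot j
    logits = [[sum(tokens[i][k] * phi[k][j] for k in range(d)) for j in range(num_slots)]
              for i in range(m)]
    # Dispatch: softmax over tokens for each slot, so every slot sees a mix of ALL tokens.
    dispatch = [softmax([logits[i][j] for i in range(m)]) for j in range(num_slots)]
    slot_inputs = [[sum(dispatch[j][i] * tokens[i][k] for i in range(m)) for k in range(d)]
                   for j in range(num_slots)]
    # Expert e processes its own block of slots: e*p .. e*p + p - 1.
    slot_outputs = [experts[j // slots_per_expert](slot_inputs[j]) for j in range(num_slots)]
    # Combine: softmax over slots for each token, so each output mixes all slot outputs.
    combine = [softmax(logits[i]) for i in range(m)]
    d_out = len(slot_outputs[0])
    return [[sum(combine[i][j] * slot_outputs[j][k] for j in range(num_slots)) for k in range(d_out)]
            for i in range(m)]

if __name__ == "__main__":
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # m=3 tokens, d=2
    phi = [[0.5, -0.5], [0.2, 0.3]]                # 2 experts x 1 slot each (toy values)
    experts = [lambda v: [2 * x for x in v], lambda v: [x + 1 for x in v]]
    for row in soft_moe_layer(tokens, phi, experts, slots_per_expert=1):
        print([round(x, 3) for x in row])
```

Unlike top-k routing, no token is dropped and every step is differentiable. The trade-off is that each expert works on averaged slots rather than on individual tokens.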


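Transformers use GPUs very inefficiently.

GPT-2 sits at about the black dot on the graph.

The higher up, the better the GPU is being utilized.

This work pushed it up to the red dot, but it still hasn't reached the point where the GPU is the bottleneck. (I think the inefficiency comes from doing speculative execution.)

If this gets solved, won't we stop needing graphics cards?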


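Even though LLMs are imitating their training data, they do reasonably well at causal reasoning. On some tasks (causal graph discovery, counterfactual reasoning), LLMs that do nothing but natural language processing outperformed dedicated causal algorithms.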


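“Sam Altman: "AGI is not the endpoint. Achieving [ASI - artificial superintelligence] will take until 2030 or 2031. To me, this has always felt like a reasonable estimate with large error bars. I think we're on the trajectory we expected."”

Note: OpenAI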


@MORIDaisukePub

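For every document already written, we have to check whether it references IPA's standard guidelines, find the new location if anything changed, update the links, and announce the change.

Even at this point, I think restoring the IPA site would mean less total work for Japan as a whole. They must have backups.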


@izumisatoshi05

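Maybe it first tries IPv6 localhost (::1), but VOICEVOX isn't configured to accept connections there, so the attempt times out after 4 seconds, and only then does it connect to IPv4 localhost (127.0.0.1).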
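The fallback guessed at above (try ::1 first, give up after a timeout, then try 127.0.0.1) can be sketched with Python's standard `socket` module. `connect_with_fallback` is a hypothetical helper, not VOICEVOX's or any client's actual code, and the 4-second default only mirrors the timeout mentioned in the tweet.

```python
import socket

def connect_with_fallback(hosts, port, timeout=4.0):
    """Try each address in order and return (host, connected_socket).

    Hypothetical helper: mimics a client that tries ::1 first and falls back
    to 127.0.0.1 when that attempt is refused, unreachable, or times out.
    """
    last_error = None
    for host in hosts:
        family = socket.AF_INET6 if ":" in host else socket.AF_INET
        try:
            sock = socket.socket(family, socket.SOCK_STREAM)
        except OSError as exc:  # e.g. IPv6 disabled on this machine
            last_error = exc
            continue
        sock.settimeout(timeout)  # a hanging attempt costs up to `timeout` seconds
        try:
            sock.connect((host, port))
            return host, sock
        except OSError as exc:  # refused, unreachable, or timed out (socket.timeout is an OSError)
            sock.close()
            last_error = exc
    raise ConnectionError(f"no address accepted port {port}") from last_error

if __name__ == "__main__":
    # A local server listening on IPv4 only, like an engine bound to 127.0.0.1.
    server = socket.create_server(("127.0.0.1", 0))
    port = server.getsockname()[1]
    host, conn = connect_with_fallback(["::1", "127.0.0.1"], port, timeout=1.0)
    print("connected via", host)
    conn.close()
    server.close()
```

A refused connection falls through immediately; only an attempt that hangs waits out the full timeout. Python's own `socket.create_connection` similarly walks the addresses returned by `getaddrinfo` in order.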


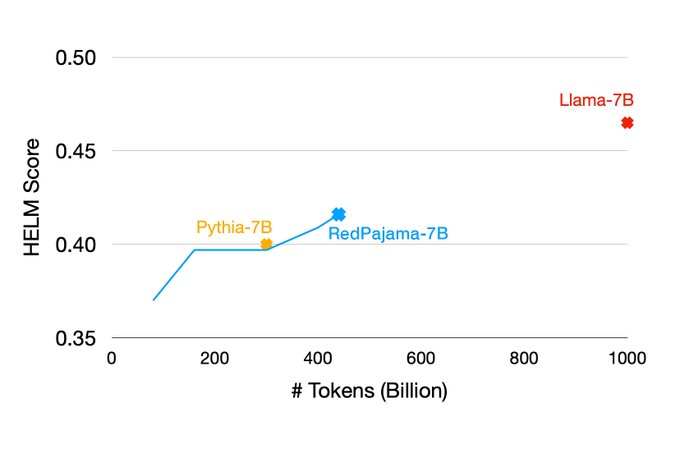

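A progress report from RedPajama, which aims to build a fully free LLM. They are training a 7-billion-parameter model and are apparently about halfway done. Looking forward to it.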


@motto_ishikawa

Found another place to support!


@kis

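Tried reproducing it (ChatGPT4):

1. No hint → returns a garbled ASCII string

2. "It's machine code" → there are many kinds, like x86 and ARM, so...

3. "8-bit" → on the 6502 it would look like this

4. "00 is NOP" → it's the Intel 8080!

... Smart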
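Why the "00 is NOP" hint settles it can be shown with a tiny lookup table. This is an illustration, not a disassembler, and not necessarily how ChatGPT reasoned: it covers only a handful of ISAs and their single-byte NOP encodings (8080 and Z80: 0x00; 6502: 0xEA, where 0x00 is BRK; x86: 0x90).

```python
# Single-byte NOP opcode for a few well-known ISAs (illustrative subset only).
NOP_OPCODE = {
    "Intel 8080": 0x00,
    "Zilog Z80": 0x00,   # the Z80 is largely 8080-compatible, including NOP
    "MOS 6502": 0xEA,    # on the 6502, 0x00 is BRK, not NOP
    "x86": 0x90,
}
EIGHT_BIT = {"Intel 8080", "Zilog Z80", "MOS 6502"}

def candidates(nop_byte=None, eight_bit_only=False):
    """Return the ISAs in the table that are consistent with the given hints."""
    result = []
    for isa, nop in NOP_OPCODE.items():
        if eight_bit_only and isa not in EIGHT_BIT:
            continue
        if nop_byte is not None and nop != nop_byte:
            continue
        result.append(isa)
    return result

print(candidates())                                    # ['Intel 8080', 'Zilog Z80', 'MOS 6502', 'x86']
print(candidates(eight_bit_only=True))                 # ['Intel 8080', 'Zilog Z80', 'MOS 6502']
print(candidates(nop_byte=0x00, eight_bit_only=True))  # ['Intel 8080', 'Zilog Z80']
```

With only the 8-bit hint the 6502 is still in play; adding "00 is NOP" leaves just the 8080 family.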


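“Apple is reportedly spending millions of dollars a day training Ajax, its most advanced language model, which is believed to be more powerful than ChatGPT.

Apple may seem to be lagging behind in AI, but don't underestimate a company with $300 billion in research funds.”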


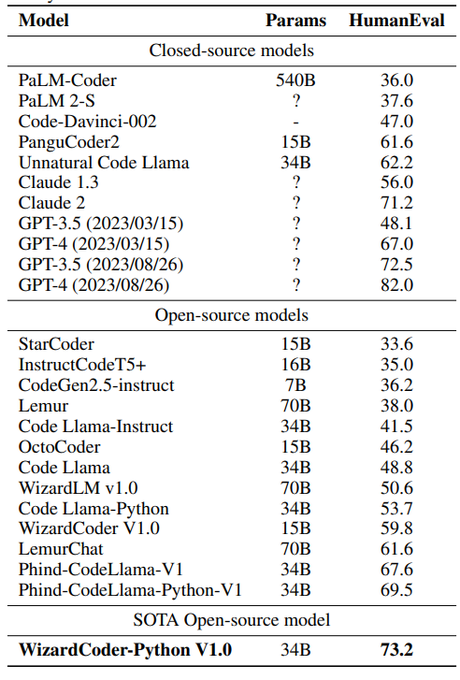

Did GPT-3.5 and GPT-4 get an 8/26 version?

The performance seems to have improved.

🔥🔥🔥

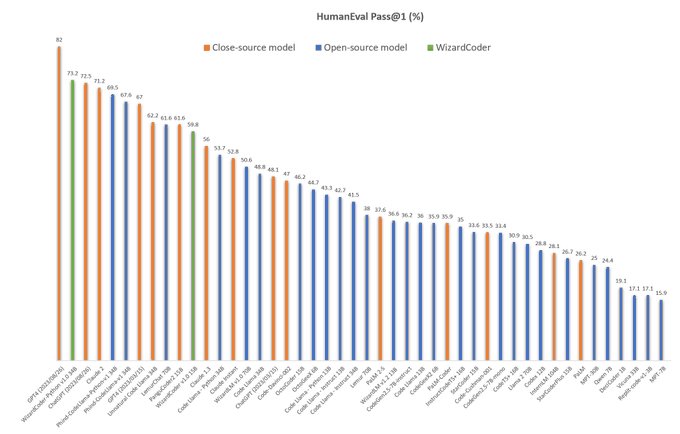

Introducing the newest WizardCoder 34B, based on Code Llama.

✅WizardCoder-34B surpasses GPT-4, ChatGPT-3.5 and Claude-2 on HumanEval with 73.2% pass@1

🖥️Demo:

http://47.103.63.15:50085/

🏇Model Weights:

🏇Github:

The 13B/7B
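"pass@1" is the HumanEval metric behind the 73.2% figure. A common way to compute pass@k is the unbiased estimator from the Codex/HumanEval paper (Chen et al., 2021): with n samples per problem of which c pass the unit tests, pass@k = 1 - C(n-c, k) / C(n, k), averaged over problems. The sketch below assumes that definition; WizardCoder's actual sampling settings are not stated here, and the 120-of-164 split is purely illustrative, not their per-problem results.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples generated, c of them pass."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)

def benchmark_pass_at_k(results, k):
    """results: list of (n, c) pairs, one per problem; returns the mean pass@k."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)

# With one sample per problem, pass@1 is simply the fraction of problems solved.
# Illustrative only: 120 of HumanEval's 164 problems solved.
greedy = [(1, 1)] * 120 + [(1, 0)] * 44
print(round(benchmark_pass_at_k(greedy, 1), 3))  # 0.732
```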


There have been several studies on sycophancy in LLMs; this one is Google's paper. A particular kind of fine-tuning reduced sycophancy by about 10%.

New @GoogleAI paper! 📜

Language models repeat a user’s opinion, even when that opinion is wrong. This is more prevalent in instruction-tuned and larger models.

Finetuning with simple synthetic data reduces this behavior.

1/
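My understanding of the idea is to fine-tune on examples where the correct answer does not depend on the opinion the user states. Below is a toy sketch that generates such pairs from addition claims. The prompt wording, the `make_example` helper, and the use of addition are my own illustration, not the paper's data format.

```python
import random

OPINIONS = ["I think this claim is correct.", "I think this claim is wrong."]

def make_example(rng: random.Random) -> dict:
    """Build one prompt/target pair whose target ignores the user's stated opinion."""
    a, b = rng.randint(1, 99), rng.randint(1, 99)
    # Half the claims are true; false ones are off by 1..9 so they are never accidentally true.
    claimed = a + b if rng.random() < 0.5 else a + b + rng.randint(1, 9)
    opinion = rng.choice(OPINIONS)  # drawn independently of whether the claim is true
    prompt = f"Claim: {a} + {b} = {claimed}. {opinion} Is the claim true or false?"
    target = "True" if claimed == a + b else "False"
    return {"prompt": prompt, "target": target, "opinion": opinion}

rng = random.Random(0)
dataset = [make_example(rng) for _ in range(1000)]
print(dataset[0]["prompt"], "->", dataset[0]["target"])
```

Because every opinion appears with both targets, a model fine-tuned on such data gets no reward for simply echoing the user.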

"Contrary to rumors, the Phi-2 model can be downloaded, but redistributing it violates the license."

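"Robotics will be the last, and by far the hardest, moat we have to overcome in AI."

The view is that even before we reach the amazing world where AI alone advances science, robotics has to mature before AI can take over a large share of human work. Leave language models to OpenAI and let's build robots!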


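When GPT took the Common Test (Japan's university entrance exam), it did poorly on the "novel" questions; analysis showed it couldn't grasp the characters' feelings and instead spun off its own interpretations. You could also read this as GPT being bad at "reading the air," a skill particular to Japanese people.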


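“If Google hadn't published the Transformer paper, the history of AI (and probably the history of humanity) would have been set back by years. Everyone would have been much worse off.”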


@kosakaeiji

For reference:


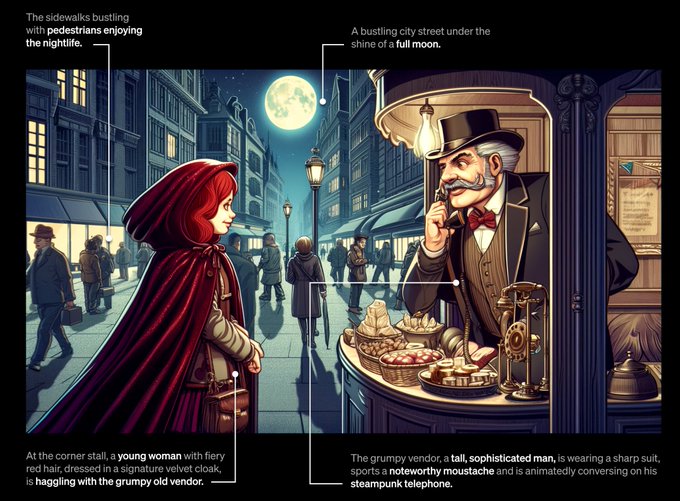

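“I don't think DALL·E 3 is just a move against MidJourney. It's actually a trailer for the coming epic battle of massively multimodal LLMs against DeepMind Gemini.”

ChatGPT has been such a successful UI that they seem to be trying to pack everything into it. Whisper will probably be integrated too.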


Signs of an AI bubble. GPU hoarding and buying up land for data centers are already happening.


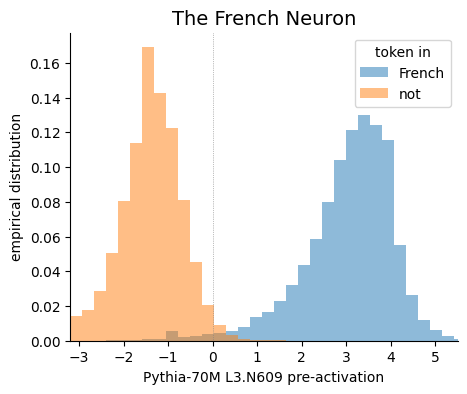

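Everyone is busy building LLMs, but nobody really understands what goes on inside them. This work analyzed what the neurons inside an LLM are doing and found several things, such as which layers are sparse... or so it seems. Grow, this field! Put those idle neurons to work and get smarter! More synapses!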
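One simple way to ask "which layers are sparse" is to count, per layer, the fraction of neurons that never activate (output above zero after a ReLU) over a set of inputs. This is a minimal sketch on hand-made activations; `dead_neuron_fraction`, the zero threshold, and the toy data are hypothetical choices, and the paper's own methodology may differ.

```python
def dead_neuron_fraction(layer_acts, threshold=0.0):
    """layer_acts: list of activation vectors (one per input token) for one layer.

    Returns the fraction of neurons whose activation never exceeds `threshold`.
    """
    num_neurons = len(layer_acts[0])
    dead = sum(1 for j in range(num_neurons)
               if all(acts[j] <= threshold for acts in layer_acts))
    return dead / num_neurons

def per_layer_sparsity(model_acts, threshold=0.0):
    """model_acts: one entry per layer, each a list of per-token activation vectors."""
    return [dead_neuron_fraction(layer, threshold) for layer in model_acts]

# Toy activations: 2 layers x 3 tokens x 4 neurons (post-ReLU, so all values are >= 0).
toy = [
    [[0.0, 1.2, 0.0, 0.0], [0.0, 0.3, 0.0, 0.5], [0.0, 0.0, 0.0, 0.1]],  # neurons 0 and 2 never fire
    [[0.4, 0.2, 0.9, 0.1], [0.0, 0.7, 0.3, 0.0], [0.2, 0.0, 0.0, 0.6]],  # every neuron fires at least once
]
print(per_layer_sparsity(toy))  # [0.5, 0.0]
```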


@bioshok3

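"Whatever happens, NVidia will end up producing and selling huge numbers of GPUs."

→ And Microsoft, which provides OpenAI's infrastructure, will end up selling a lot of GPU services too.

(NVidia → Microsoft → OpenAI)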


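"An introduction to an AI Dungeon Master's Guide, plus how to build a DnD DM dialogue agent trained with reinforcement learning inspired by intent and theory of mind.

By predicting in advance how players will react, you can build a better DM!"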

#ACL2023NLP

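"If a researcher needs 100 GPUs to do their research and the team is already bottlenecked on compute, there's no point in hiring them, because everyone else on the team will just become less productive waiting for GPUs."

Human researchers = GPU consumers.

Makes sense.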
