Mike Conover

@vagabondjack

Followers

3,980

Following

1,873

Media

148

Statuses

3,043

Founder & CEO at Brightwave; formerly language models at Databricks.

Joined February 2010

Pinned Tweet

Today we're announcing the $6M seed round for

@brightwaveio

led by

@DecibelVC

and with participation from

@p72vc

and

@Moonfire_VC

.

We are building an AI research assistant that generates insightful, trustworthy financial analysis on any subject and have customers with assets

8

6

41

Take note, OpenAI constructs prompts using markdown. I believe this is reflective of their instruction tuning datasets.

In our own work we’ve seen markdown increase model compliance far more than is otherwise reasonable.

20

121

821
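A minimal sketch of what a markdown-structured prompt could look like, for readers who want to try the idea in the tweet above. The function name `build_markdown_prompt` and the section titles (Task, Context, Rules) are hypothetical choices for illustration; they are not OpenAI's actual prompt format and not anything described in the thread.

```python
def build_markdown_prompt(task: str, context: str, rules: list[str]) -> str:
    """Assemble a prompt whose sections are delimited by markdown headings.

    The heading names are assumptions made for this sketch only.
    """
    # Render each rule as a markdown bullet so the model sees an explicit list.
    rule_lines = "\n".join(f"- {rule}" for rule in rules)
    return (
        "# Task\n"
        f"{task.strip()}\n\n"
        "## Context\n"
        f"{context.strip()}\n\n"
        "## Rules\n"
        f"{rule_lines}\n"
    )


if __name__ == "__main__":
    prompt = build_markdown_prompt(
        task="Summarize the filing.",
        context="Q3 revenue rose.",
        rules=["Cite sources", "Be brief"],
    )
    print(prompt)
```

Whether this kind of structure actually improves compliance for a given model is an empirical question; comparing it against a plain-text version of the same prompt is the simplest way to check.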

GPT-4 is able to infer authorship from a passage of text based on style and content alone.

Given the first four paragraphs of the March 13, 2023

@stratechery

post on SVB, GPT-4 identified Ben Thompson as the author.

26

78

433

The power of free and open data! The databricks-dolly-15k dataset has been translated into Spanish and Japanese in under 24 hours!

Who's running Self-Instruct on this with

@YiTayML

's (et al) UL2?

6

64

335

Top information sources for AI Engineers, at

@aiDotEngineer

Summit courtesy of

@barrnanas

&

@AmplifyPartners

.

16

43

335

Love this post from the

@Replit

team on their process for building LLM's like the Ghostwriter code assistant.

@databricks

, alongside

@huggingface

and

@MosaicML

, constitute what they're calling the 'modern LLM stack.'

5

46

269

Happening now!

@ShayneRedford

speaking on LLM's as part of the Data Talks speaker series at Databricks. Will be tweeting insights and slides from our inspiring speaker as we go.

4

40

225

We took a detailed look at the level of effort required to reproduce the LLaMA dataset and it's highly non-trivial. You read the paper and there are entire classifiers hidden behind five word clauses.

If this corpus is the real deal it's hard to overstate how valuable it is.

5

30

220

💥 This. Team. Ships.

Databricks committing to the

@huggingface

datasets codebase - so now anyone can read directly from a Spark dataframe into a dataset nearly 2x faster.

3

37

209

Inspired by

@jeremyphoward

, a non-exhaustive list of the reasons the

@togethercompute

RedPajama LLaMA dataset reproduction saves the rest of us a lot of work. [1/n]

4

18

177

"The Rust Programming Language" from No Starch Press is great, and the 'Rustlings' practice problem sets are very thoughtfully designed.

@vagabondjack

do you have a preferred "getting started in rust for a dude who's never used a compiled language" book?

9

0

46

3

8

164

Get it while it's hot! The

@MosaicML

MPT-7B -Instruct model is trained on the databricks-dolly-15k instruction tuning corpus.

LLaMA-grade language capabilities with Dolly-like instruction following. Ship it!

2

21

130

Stunning

@BigCodeProject

! A trillion tokens in 80 languages, huge context window, and step function improvement in quality.

38% improvement on HumanEval Pass@1 vs Replit's replit-code-v1-3b. Nearly doubles the performance of models in the Salesforce Codegen family.

Introducing: 💫StarCoder

StarCoder is a 15B LLM for code with 8k context and trained only on permissive data in 80+ programming languages. It can be prompted to reach 40% pass@1 on HumanEval and act as a Tech Assistant.

Try it here:

Release thread🧵

76

666

3K

2

18

94

We saw (and documented) only minimal benchmark differences between Pythia and Dolly, but the actual difference could not have been more striking.

3

12

59

.

@mat_kelcey

To pick an ML algorithm for a practical application, read 5 papers in the domain and implement the one they all claim to beat.

3

42

53

CEO of

@togethercompute

(Red Pajamas team) confirming their LLaMA reproduction is currently being trained and is staged for release soon.

Doing a hell of a lot of good for the world here.

@vagabondjack

Training is well underway and we should have something to share a lot sooner than that!

0

0

13

0

8

47

Don't sleep on the interactive T-SNE viewer for the GPT4All dataset. Awesome work from

@andriy_mulyar

et al.

1

12

44

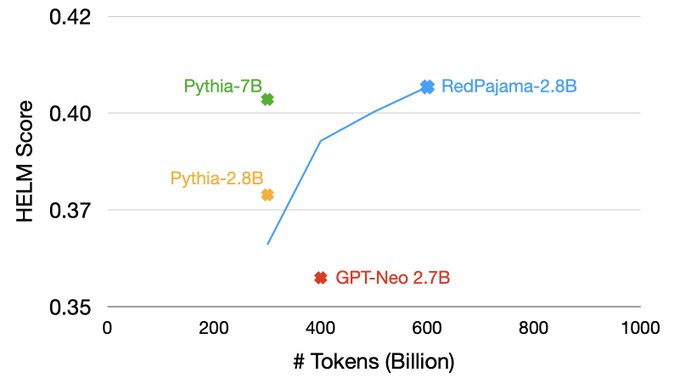

This plot is amazing.

More, clean data at training time means you can get comparable quality with fewer than half the parameters. Cheaper and faster to run without compromising quality. Super high leverage move here from the

@togethercompute

team.

0

10

40

700+ comprehensive pages, Springer's 'The Elements of Statistical Learning', as PDF d/l from Stanford Stats Dept.

http://t.co/9ZKpv6LR

0

26

36

My experience with constructing datasets for LLM’s suggests the mechanism at play is a qualitative difference in the character & quality of content (on the open web, et al.) that follows urgent, emotional appeals.

3

4

28

@LightningAI

Hey hey! I helped create Dolly & love Lightning. Not sure if you're aware, but all our training and inference code (HF pipeline) is available in our github repo.

Additionally, the model is available in 3/7/12B sizes.

1

1

26

@danlovesproofs

is pretty close to what you describe, minus wrapped calls to the backing service.

1

2

24

Statistics professors hate this! Find a maximally separating hyperplane with this one weird kernel trick!

http://t.co/0druBKfOYu

4

38

24

This kind of detailed, generous record of otherwise undocumented arcana is interesting and super valuable. The OPT-175 log book from Meta's train likewise offers great perspective on the real work of making this happen.

h/t

@AETackaberry

for the flag.

A few weeks ago, we published our

#BloombergGPT

paper on arXiv. It's now been updated to include the training logs (Chronicles) detailing our team's experience in training this 50B parameter

#LargeLanguageModel

. We hope it benefits others.

#AI

#NLProc

#LLM

0

40

178

1

4

23

@omarsar0

The dataset is money, super proud of the team for getting this out in two weeks time - the internal push was a sight to behold.

1

0

22

Haystack is such a slick framework for out of the box semantic search w transformer, vector index integration. Joined the

@deepset_ai

office hours this week, team is really on the ball. Wish we had this when we were building

@SkipFlag

. cc

@peteskomoroch

3

6

22

Most influential book I've ever read? 'The Wealth of Networks,' by Yochai Benkler. Available free online.

http://t.co/itodGKeDas

0

7

19

@simonw

@HelloPaperspace

@huggingface

Hey Simon, our team here at Databricks has code for running this in 8-bit mode on an A10. Shoot me a DM w your email and we’ll help get you sorted!

2

0

21

A pleasure to share that our work on the network structure of global labor flows is live! Multi-faceted texture and powerful explanatory power on this one -- couldn't be more proud of the work from this team.

0

4

18

The video for my SF Data Mining talk, 'Information Visualization for Large-Scale Data Workflows' is now online!

http://t.co/Hge4ZVzXAC

0

5

20

Hosting

@ShayneRedford

, of Flan / Flan-T5 fame, at Databricks for a 200+ attendee talk on how fine tuning inputs shape the behavior of models.

May wind up live tweeting the talk if I can convince Shayne to let me screenshot his slides. Stay tuned. 😅

2

1

19

For all the spicy takes about the raise and the model not being that different from other offerings, a few remarkable facts stand out.

1) LinkedIn lists 24 people at

@inflectionAI

. This is an incredible accomplishment for a team this size.

1/n

🗣️ Ready to talk with Pi?

The

@heypi_ai

iOS app is now available for download in the App Store!

With our app you can hear Pi talk and select from multiple voice options. Try it out:

18

6

41

1

2

17

Automated pattern discovery and hypothesis generation in large-scale datasets. New research, this month in 'Science'.

http://t.co/qZdaKxvv

0

17

16

I am the picture of stoked for this. To reiterate, (as I understand it) this will be an open LLaMA clone trained on the Red Pajamas LLaMA-alike dataset.

Will be a state of the art step function for open models.

2

1

16

One of the many reasons enterprise customers will prefer to build and operate their own LLM’s.

1

0

15

Best pod on the block.

🚨 Latest Latent Space is live!

MPT-7B and The Beginning of Context=Infinity

with

@jefrankle

and

@abhi_venigalla

of

@MosaicML

available at all major podcast retailers (and Hacker News 👀)

Personal Highlights:

➜ Discussion of whether

@_jasonwei

's

10

73

473

1

1

15

Just saw the

@SciDB

R package for parallel computing on sparse arrays compute an SVD, from the prompt, on a 100Mx18K matrix, in ~5 seconds.

3

13

15

.

@mat_kelcey

maintains some of the most peculiar git repositories. Fascinating work going back many years.

0

2

14

Stoked to be attending

@aiDotEngineer

in SF next week. If you're at the conference and want to learn more about how we're building the operating system for autonomous financial research shoot me a line.

We're hiring for AI engineering, distributed systems, frontend and design!

Last

@aiDotEngineer

update before Sunday:

All tickets sold out this week!

We were >600% oversubscribed since day 1 but ran an invite-only process to ensure everyone you run into will have something great to share!

For those not joining us in person, I've been busy

15

10

108

3

1

13

Looking forward to speaking at 11a PST today with the one and only

@acroll

and Sam Shah (VP Eng, Databricks) about Dolly and the surprising, emergent capabilities of large language models.

See you there!

0

2

13

Check out the LinkedIn Data team's analysis of venture capital funding using Crunchbase + LinkedIn!

http://t.co/ajD0vZNTB6

0

18

12

GPT-4's ability to identify the person who wrote a passage of text appears to work for other prolific writers. Here

@matt_levine

is picked out based on the opening 4 paragraphs of his March 17 post.

1

1

13

Love this thread - it’s amazing to work at a company with the resources to pull this off, and a leadership team willing to lead with improbable ideas.

@Databricks

just released the first commercially usable, instruction-tuned open-source LLM

Might be a bigger step forward for open-source than LLaMA, Alpaca, et al

I'm sitting here reading the story of how they got there and it's just incredible:

1

16

65

0

0

13

@swyx

@sama

@lexfridman

I’ve been thinking about this a lot lately as well. It reminds me of Hitchhiker’s Guide to the Galaxy, in which the answer is known but the right question remains elusive.

1

0

13

@andriy_mulyar

The heatmap on the right is so good I want to die. The spatial and structural characteristics of language are dear to my heart.

One of my profile backgrounds is a social network of Slack interactions with topical activity coded by color.

1

1

12

In SF for the Dolly 2.0 model / data release. Who’s up for AI drinks tonight? Sounds like

@seanjtaylor

is east coast this week so it feels a shame to call it Ethanology, but we can still have fun.

1

1

12

The “GPU Poor” hats. 😂

@lizcentoni

@navrinasingh

@tianjun_zhang

@Michaelvll1

@jerryjliu0

We also gave out AI Pioneer awards:

-

@hanlintang

from Mosaic for the largest exit in gen AI 💰

-

@pirroh

for helping 25M+ developers to leverage SOTA code models in their workflow

-

@vagabondjack

for showing us how the GPU poor only need $30 to finetune a great small model

0

0

6

0

1

12

GPT-4 correctly identifies

@ThisIsSethsBlog

as the author of two short posts from March 2023 that don't contain any PII.

1

1

12

@EigenGender

Possible this is what you used, but L-theanine, a compound found in green tea, is synergistic with caffeine and is a ridealong in some caffeine pills.

Has a mellowing effect and some evidence of improvements to cognition.

1

0

12

I love and have used my

@Baratza

Encore coffee grinder for many years, in spite of the fact that it spews coffee grinds all over my kitchen.

Does anyone have a solution for this?

cc

@seanjtaylor

12

2

11

Talking about

@SkipFlag

’s work on deep NLP at Strata this afternoon. Shoot me a line if you’re at the event!

1

1

10

Slides from my SF Data Mining talk on Information Visualization in Large-Scale Data Workflows.

http://t.co/WGoKChfXrw

0

9

11

Check out the excellent pre-print of

@hadleywickham

's new book, "Advanced R Programming"

http://t.co/cCnk2Nf1Qb

(h/t

@tweetSatpreet

)

1

2

11

The past and the future converge in this very moment. Somewhere, today, 2023’s Homebrew Computer Club is convening to stand up GPT4All.

0

1

10