Sharon Y. Li

@SharonYixuanLi

Followers

7,523

Following

706

Media

89

Statuses

641

Assistant Professor @WisconsinCS . Formerly postdoc @Stanford , Ph.D. @Cornell . Making AI safe and reliable for the open world.

Madison, WI

Joined March 2019


Sharing our new

#ICML2022

paper on Logit Normalization (LogitNorm), a simple fix to the cross-entropy loss that mitigates the overconfidence issue of deep neural networks. (1/n)

Paper: .

5-min video:

5

73

527
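A minimal sketch of the logit-normalization idea named in the tweet above, under the assumption that the "fix" means rescaling the logit vector to a constant L2 norm and dividing by a temperature before the usual cross-entropy. The function name `logitnorm_cross_entropy`, the temperature value `tau=0.04`, and the `eps` guard are illustrative choices, not taken from the tweet.

```python
import math
from typing import Sequence


def logitnorm_cross_entropy(logits: Sequence[float], target: int,
                            tau: float = 0.04, eps: float = 1e-7) -> float:
    """Cross-entropy computed on L2-normalized logits (hypothetical helper)."""
    # Rescale the logit vector to (roughly) unit L2 norm, then divide by tau.
    # tau is an assumed hyperparameter chosen only for illustration.
    norm = math.sqrt(sum(z * z for z in logits)) + eps
    scaled = [z / (norm * tau) for z in logits]
    # Standard cross-entropy via a numerically stable log-sum-exp.
    peak = max(scaled)
    log_sum_exp = peak + math.log(sum(math.exp(s - peak) for s in scaled))
    return log_sum_exp - scaled[target]


# Same direction, ten times the magnitude.
small = logitnorm_cross_entropy([1.0, 2.0, 3.0], target=2)
large = logitnorm_cross_entropy([10.0, 20.0, 30.0], target=2)
```

Because the logits are normalized first, `small` and `large` come out essentially equal: under this loss, inflating the logit magnitude alone no longer lowers the training loss, which is one plausible reading of how overconfidence is discouraged.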

Can we align LLMs without retraining the model (e.g. using RLHF)?

Introducing 🔥ARGS🔥, a simple and powerful test-time alignment approach that leverages a reward model to "guide" your unaligned LLM in decoding time! 🧵(1/n)

#ICLR2024

Paper:

4

67

385
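A toy sketch of what reward-guided decoding could look like, assuming each step re-scores the language model's top-k candidate tokens by adding a weighted reward for the continued text. The names `reward_guided_step`, `toy_lm`, and `toy_reward`, and the parameters `k` and `w`, are hypothetical stand-ins, not the paper's API.

```python
from typing import Callable, Dict, List


def reward_guided_step(context: List[str],
                       next_token_logprobs: Callable[[List[str]], Dict[str, float]],
                       reward: Callable[[List[str]], float],
                       k: int = 3, w: float = 1.0) -> str:
    """Pick the next token by LM log-probability plus w times a reward score."""
    logprobs = next_token_logprobs(context)
    # Keep only the k most likely tokens under the base model.
    top_k = sorted(logprobs, key=logprobs.get, reverse=True)[:k]
    # Re-rank those candidates with the reward of the extended context.
    return max(top_k, key=lambda tok: logprobs[tok] + w * reward(context + [tok]))


def toy_lm(context: List[str]) -> Dict[str, float]:
    # Stand-in for an unaligned model: it prefers "rude".
    return {"rude": -0.5, "kind": -1.0, "meh": -3.0}


def toy_reward(tokens: List[str]) -> float:
    # Stand-in for a reward model: it favors text ending in "kind".
    return 2.0 if tokens[-1] == "kind" else 0.0


unguided = reward_guided_step(["be"], toy_lm, toy_reward, w=0.0)
guided = reward_guided_step(["be"], toy_lm, toy_reward, w=1.0)
```

With `w=0.0` the step falls back to plain greedy decoding and returns `"rude"`; with `w=1.0` the reward term flips the choice to `"kind"`, without any change to the base model's weights.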

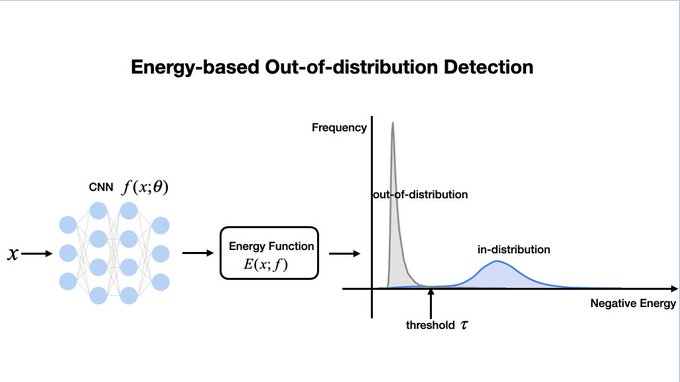

How can we make neural networks learn both the knowns and unknowns? Check out our

#ICLR2022

paper “VOS: Learning What You Don’t Know by Virtual Outlier Synthesis”, a general learning framework that suits both object detection and classification tasks. 1/n

7

65

367

Wrote a blog on automating the art of data augmentation, featuring latest works on the practice, theory and new direction of data augmentation from

@HazyResearch

. Check out on

@StanfordAILab

website:

4

82

318

Vision-language models such as CLIP are powerful in zero-shot classification. But do they know what they don’t know? We investigate the promises and AI safety of large pre-trained models when it comes to out-of-distribution data. 1/n

#NeurIPS2022

Paper:

3

62

261

Grateful to be named as Innovator of the Year by MIT

@techreview

, for “pioneering research in the critical field of AI safety”.

It’s encouraging to witness the growth of the field over the years, with now an active community contributing to the space.

26

12

239

Embedding quality is the key to distance-based OOD detection. Here is a more elegant alternative to off-the-shelf contrastive loss. Sharing our

#ICLR2023

paper “How to Exploit Hyperspherical Embeddings for Out-of-Distribution Detection?”. 1/n

Arxiv:

3

45

229

Grateful to receive an Amazon Research Award for my proposal "Uncertainty-aware Deep Learning for Reliable Decision Making in an Open World" at

@WisconsinCS

. Learn more about the program on the

@AmazonScience

website:

#AmazonResearchAwards

.

21

11

191

Both of our

#CVPR2021

submissions are accepted, including one oral presentation. Thanks to our anonymous reviewer for saying "the paper is enjoyable to read" - worth all that polishing effort. Excited to release the paper and code sometime soon. Congrats to the team! :)

11

0

172

#ICLR2022

is here. Our paper PiCO has received an ICLR Outstanding Paper Award (honorable mention). Congratulations to

@Haobo_zju

and the entire PiCO team!

The oral presentation is taking place today April 25: 7:15pm-7:30pm CDT.

Paper:

7

25

159

Incredibly honored to receive the

@AFOSR

Young Investigator Program award this year. This will support our effort on open-world machine learning and AI alignment. Many thanks to the program office and everyone who made this possible! I am grateful 💕

Congratulations to this year's AFRL/AFOSR Young Investigator Research Program (YIP) award recipients! 🎉

@AFResearchLab

#BasicResearch

#AFOSRBoldResearch

#AFOSRYIP

#EarlyCareer

#Grants

#Science

#Engineering

2

5

29

15

4

135

At

#ICML2024

, we are excited to present several new works on:

🔹 Persona In-context learning

🔹 LLM alignment theory

🔹 Task adaptation for Vision-Language Models

🔹 Out-of-Distribution Detection

Please join us in these poster sessions! My students Froilan

@HyeonggyuC

and

8

12

134

My student

@YiyouSun

has successfully defended his PhD thesis today!

Yiyou has made major contributions to the field of OOD detection and open-world ML, which advance and formalize our understanding in this area.

Congrats Dr. Sun, for this incredible journey 🍻

9

2

131

🔥Your Weak LLM is a Secret Alignment Powerhouse!

Scaling alignment with pure human or AI feedback (GPT-4) isn't cheap 💸. It demands enormous human effort or computing power. Our new study reveals a hidden gem: even weak LLMs with just 125M parameters can provide powerful

Can the feedback from a 'weak' LLM with only hundreds of millions of parameters rival that from humans and GPT-4 for alignment?🤔Yes! Our study shows even a 125M weak model can match or even outperform both! 🚀

Learn more: (w.

@SharonYixuanLi

)

Thread below

2

11

47

1

22

132

My student Yifei Ming

@ming5_alvin

successfully defended his PhD thesis today!

His thesis "Reliable Foundation Models in the Open World" addresses critical problems we face today in deploying large pre-trained models into the real world.

Here is an overview of representative

4

4

129

Ever wondered what the world looks like beyond your training data? Thrilled to release

@xuefeng_du

's latest

#NeurIPS2023

paper: DREAM-OOD, a cool framework for crafting photo-realistic OOD images from any in-distribution dataset. Dive in! [1/n]

📄 Paper:

2

27

129

Together with

@balajiln

@DanHendrycks

@tdietterich

and

@latentjasper

, we will be organizing a workshop on "Uncertainty & Robustness in Deep Learning" at

#ICML2020

. See for more info. Please submit your work (deadline: May 22, 2020) and attend the workshop!

1

21

124

Interested in learning about new works on out-of-distribution detection? Please join us and chat at the

#NeurIPS2021

poster session next week. Hope to see you there!

0

16

118

Kicked off my first lecture at

@WisconsinCS

today. What an interesting and strange time to start a tenure-track. Kudos to Blackboard Collaborate which has made the online teaching experience a breeze. I still like in-person talk and dynamics better (to at least see the crowd).

3

3

120

In the early days of grad school, watching TED talks gave me so much inspiration and taught me public speaking.

Tonight I am fortunate to have the opportunity to give back and share my journey. It’s certainly a dream of my 20s coming true.

Thanks

#TEDxUWMadison

for the great event

3

7

120

Detecting LLM hallucinations is crucial for trust in AI-generated content. But how do we achieve this without massive annotated data?

Check out our

#NeurIPS2024

spotlight paper HaloScope🔍, a practical new framework leveraging unlabeled data from real-world chat-based

🚀Excited to share our NeurIPS 2024

@NeurIPSConf

spotlight HaloScope! 🎉 HaloScope is a new SOTA method that significantly improves hallucination detection for LLMs using unlabeled LLM generations 🧵

#NeurIPS2024

Paper: , w/

@ChaoweiX

,

@SharonYixuanLi

2

17

95

0

14

121

Alignment techniques are crucial for large language models like GPT, Llama, etc. Yet, the theoretical understanding of alignment is still in its infancy.

@shawnim00

's recent work, accepted by

#ICML2024

, takes an exciting step in this direction. Here, I reflect on our recent

0

12

110

Made it to Honolulu for

#ICML2023

! Waiting for the sunrise in darkness while being 5 hours jet-lagged. 🌄

Really excited to meet new and old friends on the island.

Ps. We will be presenting 3 papers at the main conference. Stop by and chat or DM me for a coffee meetup?

0

4

99

This work is led by two incredibly talented undergraduate students:

@KhanovMax

a sophomore at UW-Madison who recently won the prestigious Goldwater Scholarship

@top34051

spent junior & senior years with us and is now pursuing graduate study at

@Stanford

CS. He will be traveling

Can we align LLMs without retraining the model (e.g. using RLHF)?

Introducing 🔥ARGS🔥, a simple and powerful test-time alignment approach that leverages a reward model to "guide" your unaligned LLM in decoding time! 🧵(1/n)

#ICLR2024

Paper:

4

67

385

2

5

97

In response to the recent challenging situations, the Uncertainty & Robustness in Deep Learning (UDL) workshop submission deadline is extended to June 14. See for more information. w/

@balajiln

@DanHendrycks

@tdietterich

@latentjasper

@icmlconf

.

#icml2020

2

19

80

My students and collaborators will present 4 exciting papers on reliable ML at

#ICLR2024

. If you are attending in Vienna, please check them out! 🥰

1⃣ ARGS: Alignment as Reward-Guided Search (

@KhanovMax

,

@top34051

)

A test-time decoding framework that integrates alignment into

1

5

79

Proud advisor moment:

@xuefeng_du

has received the inaugural Jane Street Fellowship. Congratulations!

2

3

73

Human values encompass far more than "helpfulness" and "harmlessness". They span broad personality traits, political views, moral beliefs, and beyond. Can we elicit diverse personas encoded in LLMs? Check out our

#ICML2024

paper PICLe: Persona In-Context Learning 🥒 (with

Can we modify the behavior of your LLM without training?

Introducing PICLe🥒

We elicit diverse personas from LLMs with just a few demonstrative examples!

#icml2024

Paper: (with

@SharonYixuanLi

)

[1/6]

1

12

67

0

8

71

Thanks

@techreview

@Melissahei

for this article. Humbled to be featured with this group of great minds working on some of the most pressing problems in AI and beyond.

1

4

58

This was the first paper Yiyou and I wrote together when he joined my group in fall 2020. I’ve always remembered the excitement we had about this idea, despite a few rejections. He has done several other excellent works since. What a journey, looking back.

Do we really need all those weight parameters for OOD detection?

Excited to share our

#ECCV2022

paper DICE – a new sparsification-based framework for OOD detection. 1/n

(joint work with

@SharonYixuanLi

)

1

16

79

1

4

57

Thanks to the senior faculty from

@WisconsinCS

for the surprise in the mail! Such a thoughtfully curated collection of local gifts and a warmly written letter. Made my day!

0

1

56

I am giving an invited talk at

@eccvconf

workshops today on "How to Handle Data Shifts? Challenges, Research Progress, and Path Forward". Join us at:

1. Uncertainty Quantification for CV:

2. Learning from Limited and Imperfect Data:

1

6

55

A comprehensive survey on OOD detection and beyond. We hope this can be a useful resource for you to learn about the connections and differences among these topics, and find relevant literature in one place.

0

2

55

Kicking off the new semester with an awesome boat trip with students on Lake Mendota! 🌊 🚤 Couldn’t ask for better weather or better company. Cheers to the new adventures ahead of us.

@xuefeng_du

@HyeonggyuC

@shawnim00

@LeitianT

@Changdae_Oh

@seongheon_96

@dyushag

@KhanovMax

1

0

54

📢Excited to share our latest

#ICML2024

paper, which bridges understanding between classical anomaly detection and modern OOD, revealing the importance of leveraging labeled ID data.

Ensuring the reliability of machine learning involves detecting data points straying from the

Anomaly detection and OOD detection have been widely studied, but differ in the use of in-distribution (ID) labels during training. This raises a fundamental question: How and when do ID labels help OOD detection? 💡 Our

#ICML2024

paper provides a formal understanding of this!

1

18

55

0

6

52

Proud advisor moment - congratulations to

@YiyouSun

on this new life chapter! Nothing makes me happier than seeing you embark on the academic journey. Keep rocking and doing more awesome work at

@UCBerkeley

I will join

@UCBerkeley

as a postdoctoral researcher working with Prof. Dawn Song (

@dawnsongtweets

) in the Fall 2024 semester! My focus will be on developing new techniques and tools for trustworthy LLM with greater safety. Looking forward to exploring new collaborations!

12

3

168

0

1

50

Congratulations to my student

@shreym0di

for winning the prestigious David DeWitt Undergraduate Scholarship! This is the premier and most competitive scholarship for undergraduates in Computer Science, and it recognizes academic excellence within our department.

In Shrey’s own

0

1

39

As a follow-up,

@ming5_alvin

and I looked into this problem since last fall:

"How does fine-tuning impact OOD detection in vision-language models"?

Our findings are now summarized in this article:

Vision-language models such as CLIP are powerful in zero-shot classification. But do they know what they don’t know? We investigate the promises and AI safety of large pre-trained models when it comes to out-of-distribution data. 1/n

#NeurIPS2022

Paper:

3

62

261

1

4

38

Flying for

#ICML2022

today. Let’s catch up if you are also attending in person. I will

- Share a few new works on OOD detection

- Give a talk at the DataPerf workshop (7/22)

- Help

@ml_angelopoulos

and

@stats_stephen

organize the

#DFUQ

workshop (7/23)

See you there!

0

1

39

CS Visit Weekend is happening tmr! If you are attending, I highly encourage you to interact with our faculty and students. I'd be glad to answer questions about

@WisconsinCS

or my research. The best way to make an informed decision is to talk to people and get perspectives :)

0

1

38

what a surprise 🙈🙈🙈

Looks like this paper is going to the stratosphere.

#1

on Arxiv now. Congratulations

@SharonYixuanLi

,

@xuefeng_du

,

@MuCai7

.

1

1

18

0

0

37

Detecting malicious user prompts is an important problem when deploying VLMs in the real world.

@xuefeng_du

’s recent work in collaboration with

@MSFTResearch

has provided a promising solution to safeguard the VLMs. Check out his thread for details!

🥳My

@MSFTResearch

internship paper is out! We show that leveraging unlabeled user data is beneficial for detecting malicious prompts for vision-language models!

paper:

w/

@reshmigh

,Robert Sim,

@AhmedGaSalem

,

@vitroc

,

@emilymlawton

,

@SharonYixuanLi

,Jay Stokes

5

11

51

2

3

34

Please join us at

#UDL2020

poster session and chat about our latest work on ATOM (Adversarial Training with Informative Outlier Mining). w/

@jiefengchen1

,

@andrewxiwu

,

@YingyuLiang1

,

@jhasomesh

Attending

#ICML2020

? Check out our poster at Uncertainty & robustness workshop (UDL), and learn about our latest SOTA results on using informative outlier mining for out-of-distribution detection :-) The session starts at 9am PT. Hope to see you there!

1

1

10

1

6

33

Please consider submitting to our UDL workshop this year! We look forward to your contribution.

Excited to announce that we'll be organizing a workshop on "Uncertainty & Robustness in Deep Learning" at

@icmlconf

this year!

Submissions due June 2, 2021. More details:

cc

@DanHendrycks

@SharonYixuanLi

@latentjasper

@tdietterich

@csilviavr

@sebnowozin

5

90

491

0

0

31

I will be giving a talk at the NeurIPS'21 workshop on challenges and opportunities in uncovering unknowns for the ImageNet model. Exciting agenda (Dec 13) put together by the organizers

@zeynepakata

@coallaoh

@dlarlus

@giffmana

@SanghyukChun

@XiaohuaZhai

0

8

31

✨Interested in understanding how well LLMs can learn from human preferences? Our recent paper introduces a new theoretical framework to analyze the generalization behavior of Direct Preference Optimization (DPO)!

This is the first work to provide a rigorous generalization bound

0

4

30

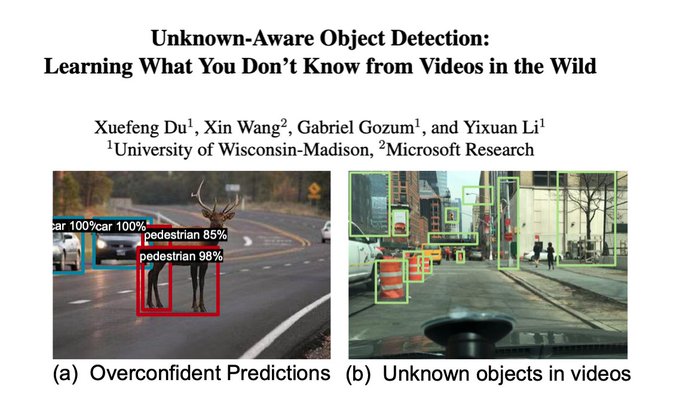

Great to see the enthusiasm for our work on unknown-aware object detection using videos in the wild. Work led by my awesome student

@xuefeng_du

. We sadly missed

#CVPR2022

in person but the oral talk is recorded online. Get in touch with us if you are interested in chatting.

Excited to release our

#CVPR2022

oral paper STUD, a powerful unknown-aware object detection framework that safeguards against OOD objects. STUD is the first to leverage videos in the wild and teaches models to tell apart knowns and unknowns. (1/n)

Paper: .

3

14

91

0

2

30

Thank you

@amfam

and

@datascience_uw

for sponsoring our research efforts on building

#ResponsibleAI

. Both estimating distributional uncertainty and debiasing ML are timely problems to tackle.

.

@amfam

has partnered with

@UWMadison

through the American Family Insurance Data Science Institute to offer mini-grants for data science research. Nearly $3 million has been awarded to 21 teams since 2020. Learn about Round 3 awards:

0

3

16

1

0

29

Look forward to speaking at the seminar! Thank you for hosting.

We are pleased to have our very own Sharon Li

@SharonYixuanLi

@WisconsinCS

as our speaker of the seminar this week. She will tell us about "Uncovering the Unknowns of Deep Neural Networks: Challenges and Opportunities" on Dec 1 at noon ET.

@UWMadPhysics

0

4

19

1

3

28

I took my first lecture at Cornell with this wise man, who later became my PhD advisor. He would always be in his office by 9am (even if he was on an international flight the day before). He stands for what a dedicated and disciplined career truly means.

Congrats on the last lecture in a long and distinguished career to John Hopcroft! I've gotten to spend a bit of time w/John at

@HLForum

over last few years, & he's a delight to be around.

(Sorry the last lecture wasn't in a classroom filled w/students to send you off properly!)

3

12

131

1

0

26

Excited that our work is now available at Nature Scientific Reports!

Thrilled to share our latest work in

@SciReports

on conversational AI. We assessed how

#GPT

interacts with diverse social groups on science & social issues, introduced an equity framework and shared our datasets. Full study is here:

#scicomm

#OpenAI

#HCI

3

26

73

0

1

26

Very excited to share this work on Model Patching—an end-to-end framework for improving robustness against subgroup differences, with benefits on a real-world skin cancer classification task. Thanks to my amazing collaborators

@krandiash

, Albert Gu and

@HazyResearch

!

0

2

24

In our Thanksgiving lab social,

@berylSreya

inspired me to give shoutouts to every student, for all the hard work, enthusiasm, and inspiration they bring us. The messages are the tokens of appreciation for how grateful I am to work with these awesome students at

@WisconsinCS

.

0

0

24

I will be giving a talk at Women in Computer Science (WiCS) at Stanford next Wednesday. I will talk about research on open-world machine learning and will stick around for a casual Q&A at the end. Look forward to this and thanks

@StanfordWiCS

for organizing!

0

3

23

If you are on the job market this year, consider applying! The quality of life is a real charm (having spent years on both the East and West Coasts before I moved here).

Our department

@WisconsinCS

is looking for faculty at all levels. Reach out if you need more information.

FYI, Madison was rated as

#1

livable city. Just saying :-)

0

24

71

0

0

22

This is precisely how I spent my 30th birthday. Finished all episodes yesterday in the midst of the CVPR deadline.

Highly recommend binge watching the new Netflix series Queen's Gambit, beautifully filmed, stunningly acted, and a pretty accurate portrayal of what it is like to be a child chess prodigy. Not surprising given that the brilliant

@Kasparov63

was a consultant on it!

#QueensGambit

74

224

3K

0

0

21

If you are at

@CVPR

, please join us at the poster session (paper 6254 & 6415)!

Thanks to our audience for the great questions and conversations. The morning session gave me so much to think about.

#CVPR2021

0

0

21

Join us tonight at the WiML Un-Workshop "Does your model know what it doesn’t know? Uncertainty estimation and OOD detection in DL".

@polkirichenko

@AkramiHaleh

and

@jessierenjie

will lead with an excellent tutorial talk, followed by breakout sessions. Pop in and chat together?

Join us July 21 7:25 pm ET at WiML Un-Workshop at

@icmlconf

for a breakout session on "Does your model know what it doesn’t know? Uncertainty estimation and OOD detection in DL"

Together with

@polkirichenko

@AkramiHaleh

@sharonyixuanli

@sergulaydore

0

4

17

0

2

20

Check out FDIT - a cool and well-executed idea that brings classic signal processing techniques to modern image translation. Fourier space can do wonders.

0

0

19

Students in my deep learning class generated this image using DALL·E 2, with the prompt "Happy Thanksgiving, students at University of Wisconsin - Madison". Pretty cool with the State Capitol in the background. (slide credit:

@YepengJ

, Sijia Fang and Jiahao Fan).

happy holidays!

0

1

16

This also includes a special talk by

@tydsh

(Facebook AI Research) on understanding deep neural networks in a teacher-student setting.

1

3

16

Thank you for featuring, and especially SAIL blog team for helping with the editorial process.

@andrey_kurenkov

@siddkaramcheti

Data augmentation is crucial for machine learning, so how do we do it in a principled way?

Check out our latest blog post courtesy of

@SharonYixuanLi

and

@HazyResearch

new algorithms for automating the search process of augmentation techniques.

0

2

14

0

0

15

Look forward to your participation in

#UDL2020

this Friday! We are collecting questions for our panel discussion (11:30am-12:30pm PT), please submit here: .

Attending

#ICML2020

? Join us for our workshop on "Uncertainty and Robustness in Deep Learning"

@icmlconf

this Friday (July 17), co-organized w/

@SharonYixuanLi

,

@DanHendrycks

,

@latentjasper

,

@tdietterich

.

We had 140 (!) accepted papers

1/3 (contd.)

4

23

135

0

0

14

I had an awesome time serving as the

#WiML2020

mentor this year. Thanks to my co-mentor

@djhsu

, Table 34 participants, and organizers of

@WiMLworkshop

for making the NeurIPS experience memorable.

We would like to express our sincere gratitude to the inspiring cohort of

#WiML2020

MENTORS! We have over 120 mentors from academia & industry! Mentorship roundtables are divided into 3 areas Research (Tables 1–28), Career & Life Advice (Tables 29–50) and Sponsor (Tables 51–63).

3

6

95

0

0

14

Look forward to giving a talk at the MLOS seminar next Monday. Check out the full agenda: . Thanks for organizing the great series

@RemziArpaciD

and Microsoft

@krlis1337

@GraySystemsLab

@MSFTResearch

.

@RemziArpaciD

@WisconsinCS

@GraySystemsLab

@SQLServer

@MSFTResearch

Next up we will have:

@SharonYixuanLi

Assistant Prof. in the CS dept of

@UWMadison

, talking about: “Reliable Open-World Learning Against Out-of-distribution Data" (Monday 9/21 at 2pm)

6/n

1

2

5

1

1

14