Kostas Daniilidis

@KostasPenn

Followers

4,353

Following

1,075

Media

127

Statuses

1,038

Ruth Yalom Stone Professor @Penn @PennEngineers @PennCIS @GRASPlab

Joined December 2012


#CVPR2022

Motion 3: "Any reviewer who has accepted an invitation to review but violates the reviewing guidelines set forth by the conference will be prohibited from submitting any papers to CVPR for up to two years." My vote is a resounding NO! 1/n

12

28

288

@ylecun

++: "damage to the language system within an adult human brain leaves most other cognitive functions intact." There is ample evidence that reasoning can happen after language ability disappears:

16

40

197

Best Fall present ever: the new book by Tristan Needham. Thanks to

@ch402

for tweeting about it.

@CSProfKGD

Wish we had more diffgeom in the past so that we understand faster

@TacoCohen

's recent papers :-)

9

20

199

#CVPR2024

Such a great effort by my wonderful students. Excellent finish. Good luck to all of you. What a great feeling, 31 years of CVPR deadlines :-)

2

6

186

This is really fascinating. Nina Miolane

@ninamiolane

showing how biological brains encode Lie groups!

#CVPR

Equivision workshop

5

29

192

Congratulations to my amazing student Georgios Pavlakos

@geopavlakos

for receiving the Best Computer Science Dissertation Award at Penn, the 2021 Morris and Dorothy Rubinoff Award.

7

8

161

TRAM recovers 3D human pose in a global world frame with 60% root trajectory error reduction wrt SotA!

Watch the video on our project page

arxiv:

Thanks to my amazing coauthors:

@YufuWang_

,

@ZiyunClaudeWang

,

@LingjieLiu1

.

2

32

160

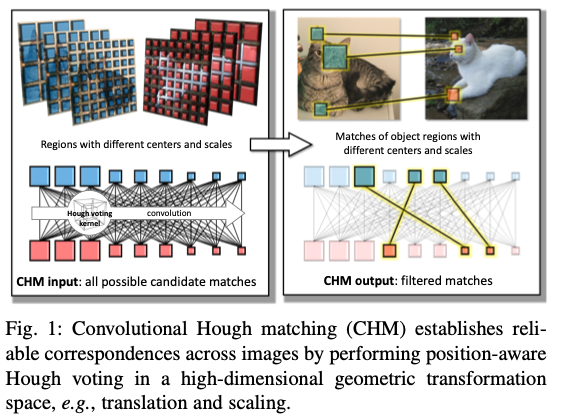

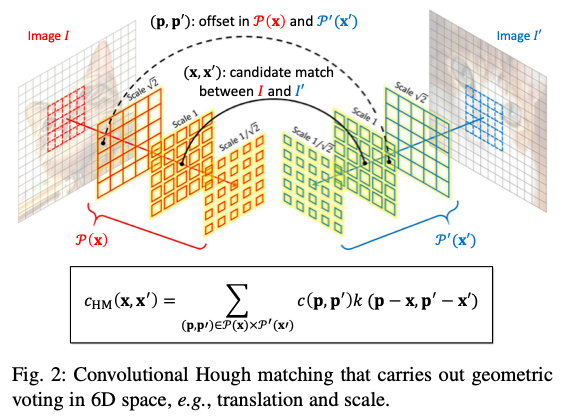

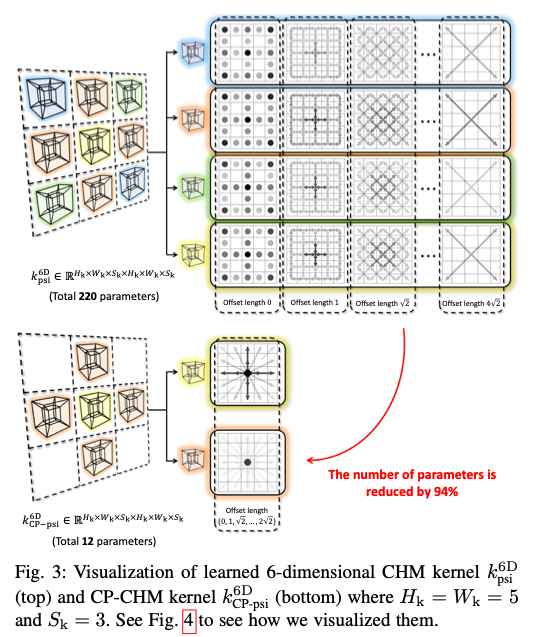

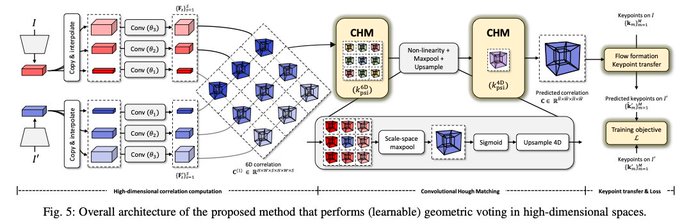

Student: "What are the three most important problems in computer vision?"

Takeo Kanade: "Correspondence, correspondence, correspondence!"

The first question that I ask when I read a DeepX geometry paper is which step solves the correspondence problem.

2

20

131

Our

#CVPR2022

paper introduces a representation of canonical shape for deformed humans, animals and articulated objects via neural homeomorphisms. Very proud of first paper with my student

@Jiahui77036479

:

arxiv:

Project:

4

16

118

Incorporating stronger geometric bias within the architectural design and taking advantage of the inductive bias provided by the vision foundation models enables really fast and robust tracking: Yunzhou Song,

@JiahuiLei1998

@ZiyunClaudeWang

@LingjieLiu1

!

2

15

117

Guess the dance! Produced by the amazing notebook developed by

@nikoskolot

@geopavlakos

based on our

#ICCV2021

ProHMR. (So easy to run that even I, an old professor, can run it. Video cut to hide the name of the dance.)

4

12

109

There is progress in linear algebra education. I always ask this question in the vision class: how many of you have never heard of SVD? It was 1-2 out of approx. 120 students (40 undergrad + 80 grad) this semester. 10 years ago, it was half of the class.

@CSProfKGD

5

4

106

In this

#ICML2022

paper, we exploit the sparsity in the spectrum of tensor field networks to derive a novel nonlinearity/activation and provide an alternative derivation of TFN kernels.

@_onionesque

@TacoCohen

@maurice_weiler

@wellingmax

@mariogeiger

3

20

99

Congratulations to our continuous source of inspiration, Ruzena Bajcsy, for receiving the 2021 Rosenfeld Lifetime Achievement Award

@iccv2021

,

@PennEngineers

@GRASPlab

1

9

92

Birds of a Feather, our CVPR'21 paper; 3D vision in the service of biology (

@akanazawa

,

@Michael_J_Black

,

@FuaPv

,

@silvia_zuffi

,

@KordingLab

);

5

15

84

So blessed to have had Dr. Kolotouros

@nikoskolot

as a student! Look at his widely cited SPIN and ProHMR results. Thank you

@Michael_J_Black

@dineshjayaraman

@pratikac

and Jianbo Shi for the very educational questions.

@GRASPlab

@CIS_Penn

3

6

82

Our post

#eccv2020

workshop on equivariance and data augmentation has an incredible line-up of speakers and is free. Register at

1

21

78

Congratulations to Dr. Karl Schmeckpeper. Sooo proud of you! Thank you

@chelseabfinn

, for mentoring and working with Karl and being on his committee.

5

3

77

Today England will face Germany in a match with a history in computer vision and not only. Reid and Zisserman

@oxford_VGG

wrote one of the most elegant papers in geometric vision at ECCV'96. I devote one of my course lectures to this pearl:

1

5

76

Four generations of my academic tree at U of Minnesota, Maryland, and Drexel! And Vishnu PhD student at UMD. Could not be prouder!

@AgriRobot

@ptokekar

@Lifeng917

3

5

74

Our equivariant transformer for 3D-reconstruction in function space presented by

@EChatzipantazis

,

@stefanos_pert

,

@EdgarDobriban

at

#ICLR2023

.

0

15

72

Congratulations to Program Chairs of

#CVPR2022

@richasingh26

@KristinJDana

@ganghua1

and Samaras. This CVPR has set a new standard of checks and balances across AC decisions and reviews with greater granularity and maximum consistency. It felt like unit tests in decision making.

2

7

69

Our Equivariant Vision workshop features five great speakers

@erikjbekkers

@HaggaiMaron

@ninamiolane

@_machc

, and Leo Guibas, spotlight talks, posters, and a tutorial prepared for the vision audience. Come tomorrow, Tuesday, at 8:30am in Summit 321! Thank you

@CongyueD

for

0

15

67

How nature performs dense pose annotation :-) Watch it in full screen.

@silvia_zuffi

@akanazawa

@Michael_J_Black

@CSProfKGD

2

5

63

Congratulations to the organizers of

@neur_reps

for an inspiring workshop full of geometry, topology, and neuroscience!

2

4

62

The culmination of my first NeurIPS could not have been more rewarding: The Neural Wave Machines talk by

@wellingmax

. We started working on equivariance inspired by Max and

@TacoCohen

6 years ago. Thank you and your group (

@maurice_weiler

and all).

1

5

58

At

#CPAL2024

with

@YiMaTweets

! Thank you for being the inspiration and the host of the first

@cpalconf

. I remember sitting next to Yi during the summer of 1998 at Berkeley when

@jana_kosecka

and Yi conceived the self-calibration for SfM that received the ICCV'99 Marr Prize!

0

3

59

Hey hey, my my

The essential matrix can never die

There's more to the picture

Than meets the eye.

Hey hey, my my.

#eccv2022

2

7

58

Would you like to know how to define and realize an equivariant convolution and attention on a light field / feature field? Come to listen to

@xu_yinshua86846

and

@JiahuiLei1998

presenting our spotlight at 10:45am at poster

#309

@NeurIPSConf

1

7

56

This is an amazing dataset showing the triumph of COLMAP "COLMAP, a mature photogrammetry framework, provides 3D annotations that are treated as ground truth"

@mapo1

@SattlerTorsten

1

3

56

More ICCV nostalgia

#ICCV2021

: I had the privilege to do the clerical work at the in-person meeting of the PCs of ICCV 1993. Hans-Hellmut Nagel, Yoshiaki Shirai, and the late Tom Huang met on a very cold weekend in Karlsruhe to make paper decisions. 1/3

2

3

53

How does convolution or attention work on feature fields defined on the space of rays?

@xu_yinshua86846

@Jiahui77036479

revised our paper and made a great case for generalized convolution/attention

@TacoCohen

@maurice_weiler

@mario1geiger

@_onionesque

2

15

52

#cvpr2022

I am very grateful to all in-time reviewers but also to all late reviewers who informed the AC about an ETA or even that they are unable to do it. And to all emergency reviewers who are stepping in this week.

Reviewing load this year is insane. 🙏🙏🙏

0

0

51

@JiahuiLei1998

and

@WenJiang_PL

finishing intrinsic calibration. Ready to capture the cowbird mating season in 4D with 8 cameras and 24 microphones in our smart aviary, a project with Marc Schmidt from

@PennSAS

Biology/Neuroscience and

@PennCIS

@GRASPlab

.

0

3

49

What a delight to see Professor Sugihara performing geometric mastery again! In the first year of my PhD, my advisor passed on to me his book on the algebraic approach to the recovery of three-dimensional shapes from single images. It was an eye opener to realize how constraint

0

6

48

I am filled with a sense of wonder and admiration when I think about this incredible computer engineering accomplishment of NASA. It is still one of the most fulfilling places to work and my answer when people ask me what I would do if I were not in academia. I experience this

0

1

45

So proud to be advisor together with

@vijay_r_kumar

of Wenxin Liu who defended today with an amazing presentation! Check her TLIO inertial neural odometry! Thank you

@davsca1

,

@loiannog

,

@dineshjayaraman

, Jianbo and CJ!

1

2

45

Many students and colleagues ask me why I am so obsessed with teaching (and publishing on) optical flow: I now have the right answer: Because a $69.99 COSTCO drone uses it !!!

@Michael_J_Black

@davsca1

@CSProfKGD

2

2

43

We can't wait to welcome Lingjie in 2023! An amazing success for

@CIS_Penn

and the Penn vision community/graphics community!

Thrilled to announce that I will be joining the University of Pennsylvania as an Assistant Professor in January 2023! I'm extremely grateful to all those who have supported me all the way and I look forward to working with students and colleagues at Penn!

@PennEngineers

@CIS_Penn

54

21

514

1

0

41

The experience of suggesting reviewers for

#cvpr2022

was so rewarding. I spent hours and hours visiting more than 100 GS pages, reading papers by potential reviewers, and getting to know our exponentially growing community better. Back to writing letters!

0

0

40

@Michael_J_Black

@geopavlakos

@nikoskolot

Today SPIN reached 1000 citations. A very powerful idea to leverage geometric model optimization in the training loop. It can be applied to other geometric problems natively solvable using optimization, too.

3

2

39

Do we really need scene supervision? Not really! Equivariant shape priors suffice for segmenting 3D objects. Great work,

@Jiahui77036479

@CongyueD

, and Karl S. !!!

Happy to introduce our new

#CVPR2023

paper EFEM with

@CongyueD

, Karl Schmeckpeper ,

@GuibasLeonidas

,

@KostasPenn

:

- Learn Equivariant object shape prior on ShapeNet;

- Directly Inference for scene object segmentation!

- A new dataset "Chairs and Mugs"!

2

12

65

2

2

38

Mitch Marcus hired Benjamin Pierce,

@RajeevAlur

and me, among many others between 1996-2001. But most importantly, he created the Penn Treebank more than 30 years ago, the first golden annotation of a large corpus.

0

2

38

Thank you

@rodneyabrooks

for the in-person talk at

@GRASPlab

and for sharing your views on computation. It is such a pleasure to listen to something different!

2

5

38

#ECCV2020

How can you predict video from both interaction and observation? Karl Schmeckpeper's paper with

@_oleh

Annie Xie Steven Tian

@svlevine

@chelseafinn

will be presented at 9a and 7p ET.

1

8

36

@NikolaiMatni

and I could not have been prouder today!

@KendallJQueen21

(now Dr. Kendall Queen) has defended his dissertation on the first event-vision-only lane following ground vehicle.

@GRASPlab

@PennEngineers

4

9

37

This was an amazing presentation by

@erikjbekkers

on equivariant neural fields and neural ideograms at the Equivision Workshop. I want to go and read all his 2024 papers now!

Looking forward to this! I'll talk about

Neural Ideograms and Geometry-Grounded Representation Learning

... and cats and dogs and owls!

Thank you

@KostasPenn

@CongyueD

and co for organizing this! Excited to be part of the program!

1

10

94

2

7

39

Come to poster

#73

at the NW corner of the hall to listen to

@Jiahui77036479

. Point clouds do not need supervision.

0

2

37

"Cross-Domain 3D Equivariant Image Embeddings" accepted at

#ICML2019

! Regression-free intra-class “mental rotation”.

1

7

35

Proud to announce the workshop that

@EdgarDobriban

and I are organizing on Sep 4. If you are excited about invariance, symmetry, equivariance, data augmentation register here:

0

15

34

Why we teach optical flow in computer vision. In the moving cubes illusion, take the middle row for 100 first frames and plot it as xt-slice: optical flow is orientation in space-time. Speed is inverse of slope. Rest of the illusion motions will be homework

#CIS580

.

2

2

35
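
The xt-slice idea in the post above can be tried on synthetic data. The sketch below is only an illustration under stated assumptions: it does not use the moving cubes illusion, but a single Gaussian blob translating at constant speed along one image row. The function names (`make_xt_slice`, `speed_from_trace_slope`, `speed_from_gradients`) and all parameter values are hypothetical. It stacks the row over 100 frames into an xt-slice, then recovers the speed in two ways: as the inverse of the slope dt/dx of the trace, and from the brightness constancy constraint I_x v + I_t = 0 solved in a least-squares sense.

```python
import numpy as np

def make_xt_slice(num_frames=100, width=200, speed=1.5, sigma=4.0):
    """Synthetic xt-slice: one image row of a Gaussian blob moving at `speed` px/frame.

    Rows index time t, columns index position x (an assumed layout).
    """
    t = np.arange(num_frames)[:, None]
    x = np.arange(width)[None, :]
    center = 20.0 + speed * t  # blob position in each frame
    return np.exp(-0.5 * ((x - center) / sigma) ** 2)

def speed_from_trace_slope(xt):
    """Fit a line t = slope * x + b to the brightest pixel per frame; speed = 1 / slope."""
    t = np.arange(xt.shape[0])
    x_peak = np.argmax(xt, axis=1)
    slope_dt_dx = np.polyfit(x_peak, t, 1)[0]
    return 1.0 / slope_dt_dx

def speed_from_gradients(xt):
    """Least-squares solution of I_x * v + I_t = 0 over the whole slice."""
    I_t, I_x = np.gradient(xt)  # axis 0 is time, axis 1 is space
    return -np.sum(I_x * I_t) / np.sum(I_x ** 2)

xt = make_xt_slice(speed=1.5)
v_slope = speed_from_trace_slope(xt)
v_grad = speed_from_gradients(xt)
print(f"speed from trace slope: {v_slope:.3f} px/frame")
print(f"speed from gradients:   {v_grad:.3f} px/frame")
```

Both estimates should land close to the simulated 1.5 px/frame. The gradient-based one is slightly biased by the finite differences, which is one reason practical optical flow methods smooth the images and work coarse-to-fine.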

We knew that you can implement convolution with optics. Now ReLUs with optics:

0

5

32