Vedant Misra

@vedantmisra

Followers: 1,832

Following: 304

Media: 20

Statuses: 199

AI researcher @DeepMind (Gemini, Minerva, PALM) | Alum @OpenAI (Codex, Grokking) | @HubSpot | Founder/CEO Kemvi (acq HUBS) | Physics @Columbia

San Francisco, CA

Joined September 2009


Gemini Pro matches GPT-4 in ELO, but the real game changer is that sampling a million tokens costs $0.5 instead of $30

🔥Breaking News from Arena

Google's Bard has just made a stunning leap, surpassing GPT-4 to the SECOND SPOT on the leaderboard! Big congrats to @Google for the remarkable achievement!

The race is heating up like never before! Super excited to see what's next for Bard + Gemini


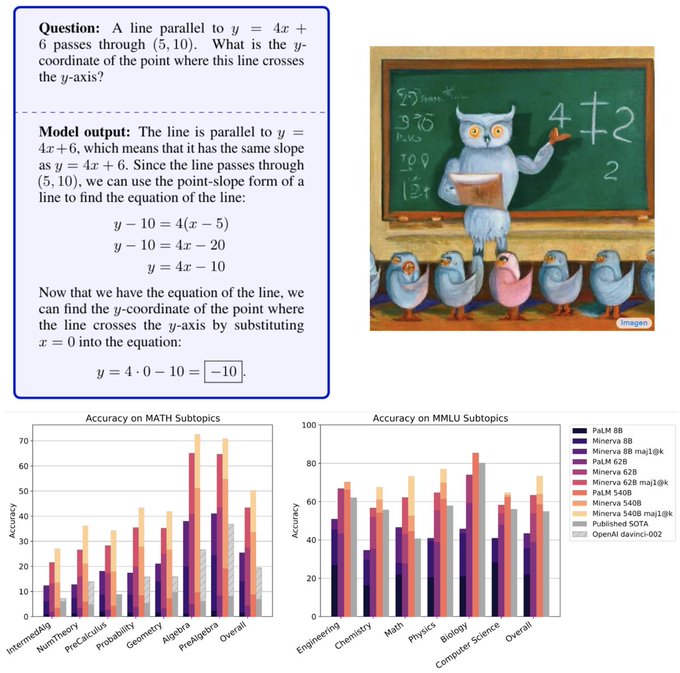

Thrilled to announce🦉Minerva: a large language model capable of solving mathematical problems using step-by-step reasoning in natural language.

See blog here: and samples here: (1/n)


Gemini Pro 1.5 is here! 10M token context window (1M in production for now), comparable evals to Ultra 1.0, and considerably more compute efficient. This model can perceive and answer questions about 10 hours of video, or 100 hours of audio, or 300K lines of code, and will


Thrilled to share what we've been working on at DeepMind!


I'm at #NeurIPS22! Interested in LLMs, tool use, scaling, reasoning, AGI, or good restaurants in New Orleans? DM me if you'd like to meet, or stop by our poster on Minerva (Tues 4-6p, no. 920)


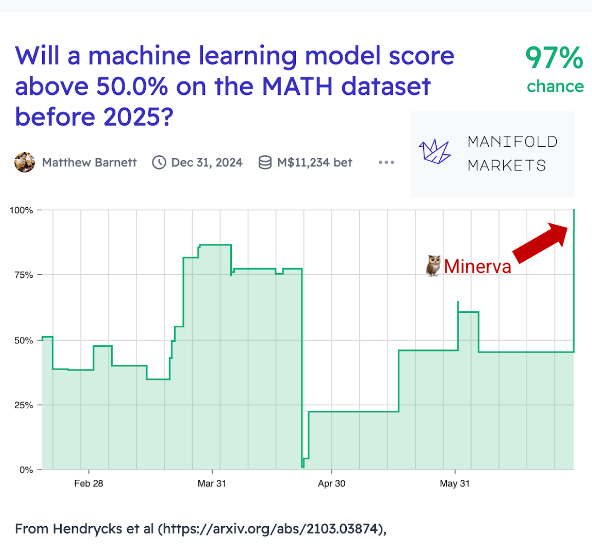

📈

Google latest 540B language model solves 1/3 of STEM undergrad problems from MIT with 50% accuracy on MATH, what @JacobSteinhardt predicted would happen by 2025


Can confirm. Sergey has been in the bullpen with the rest of us since the beginning of Gemini, shares our deep fascination with this technology, and recognizes that this is a transformative moment in the history of humanity. His engagement has been immensely motivating for all.


BIG Bench is not only a fascinating collection of tasks for LLMs, it's also a shining example of how open and collaborative research should be organized. Glad to have been part of it!


Now that I've had a paper covered by @yannic, next I'm going to go for either a Fields Medal or the Turing award, haven't made up my mind yet.


🤯 When you realize everyone you've worked for or considered working for is now casually hanging out with the President and VP! 🇺🇸 Hey @JoeBiden, @KamalaHarris, can I join the next reunion? 🤔 I promise to bring my A(I)-game!


Such a pleasure speaking with @paulroetzer at #maicon22 about AI and its impact on humanity - it's a thrilling time to be working on this technology!

It’s the final session of #MAICON22 and we’re diving deep with a fireside chat featuring @vedantmisra where @paulroetzer is interviewing him about how #AI is not only transforming business but how it will transform humanity in general.


Aloha, I'm at #ICML2023! Interested in LLMs, reasoning, AGI, or things to do in Oahu? DM me if you'd like to meet!


It took us longer to go from the Mark I Perceptron to LSTMs (29 years) than from LSTMs to #ChatGPT (27 years). It took us longer to go from Word2Vec to Transformers (4 years) than from Transformers to #OpenAI's #GPT3 (3 years). Exponential growth is wild. #AI #MachineLearning


So excited about this release! The future is bright for deep networks that understand the visual world


Research mathematicians: Perhaps one day AI will help with mathematical reasoning, but not today

@spolu: hold my beer

An AI using this GPT thing which folks go on about, has golfed two short proofs in mathlib, Lean's maths library :o

So we now have 134 human contributors (including several ICL undergraduates) and 1 computer.

Thanks to @jessemhan for letting me know!


6 / n: See our paper here:

An outstanding collaboration with @alewkowycz, @ajandreassen, @dmdohan, @ethansdyer, @hmichalewski, @vinayramasesh, @AmbroseSlone, @cem__anil, Imanol, Theo, @Yuhu_ai_, @bneyshabur, and @guygr


Have an idea for a task that might be too tough for big language models? Submit it to our new benchmark!


Reading minds is real: A team at @UTAustin trained a model to take fMRI inputs and predict the words that people were thinking. () 4/


@mpshanahan @ilyasut what makes you say there are no space and time in a computer? If you think only meat can be conscious, you *might* be in thrall to an overly simplistic definition of consciousness. The most basic criterion is for there to be a subjective experience, not a body.


"Computer, write me a screenplay":

@DeepMind shipped Dramatron, demonstrating an approach to hierarchical generation of coherent long-form stories () 7/


Today in @Nature: #AlphaTensor, an AI system for discovering novel, efficient, and exact algorithms for matrix multiplication - a building block of modern computations. AlphaTensor finds faster algorithms for many matrix sizes: & 1/


@ESYudkowsky this would be even more true if we knew how biological networks solve the very same problems.


@katieburkie Fwiw, they recently announced a two year grace period if you're conditionally approved for renewal!


@andrew_n_carr This partitions all hairs into sets A and B such that all elements of A end up on the floor and all elements of B remain on the scalp with each element of B longer than each element of A
