Pan Lu

@lupantech

Followers

4,687

Following

1,065

Media

195

Statuses

808

Postdoc @Stanford | PhD @CS_UCLA @uclanlp | Amazon/Bloomberg/Qualcomm Fellows | Ex @Tsinghua_Uni @Microsoft @allen_ai | Math Reasoning, AI4Science, #NLP, LLMs

Palo Alto

Joined April 2016

Pinned Tweet

I'm excited to join Prof.

@james_y_zou

group as a postdoc scholar, aiming to push the boundaries of AI for scientific discovery

#AI4Science

.

I've had an incredible and rewarding time with the

@uclanlp

group and the VCLA group

@UCLAComSci

. Deeply grateful to all my mentors,

15

2

217

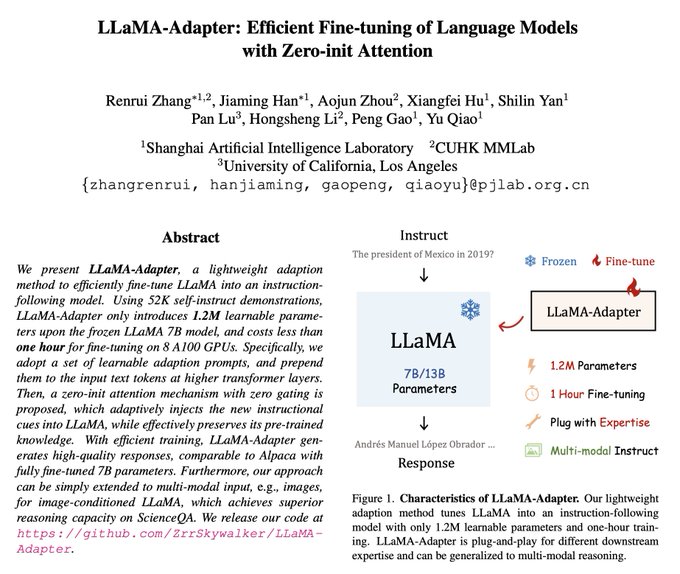

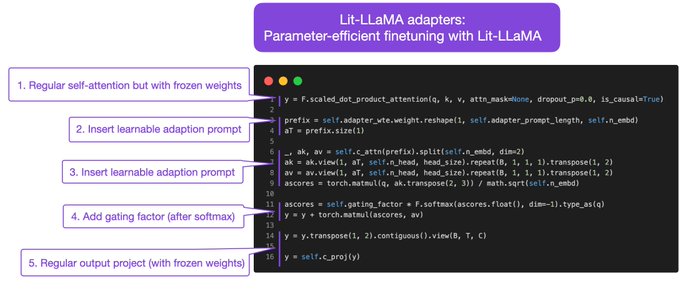

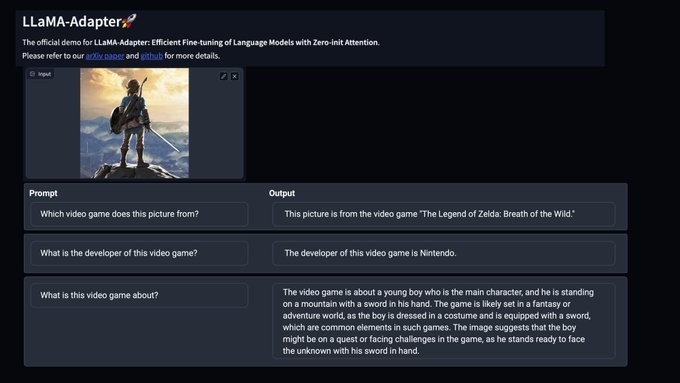

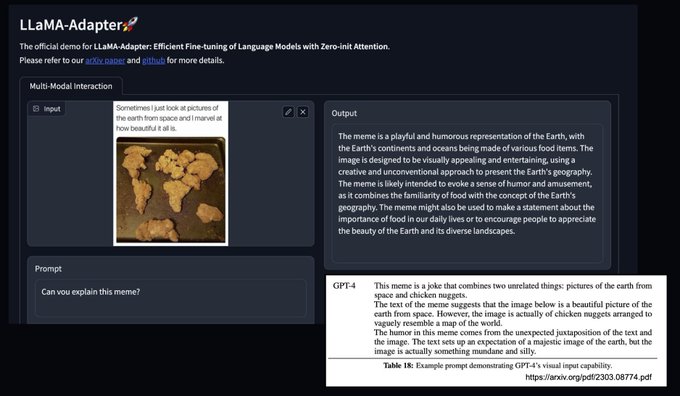

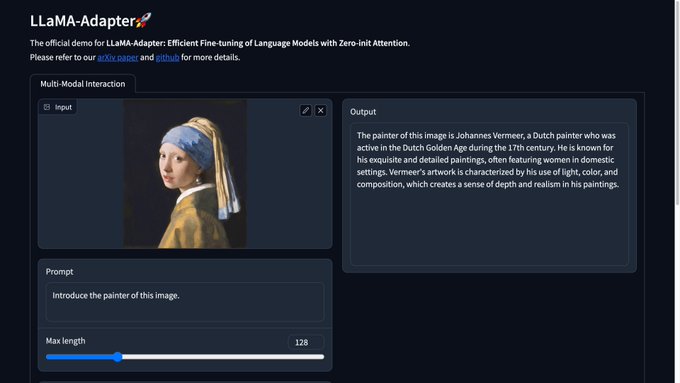

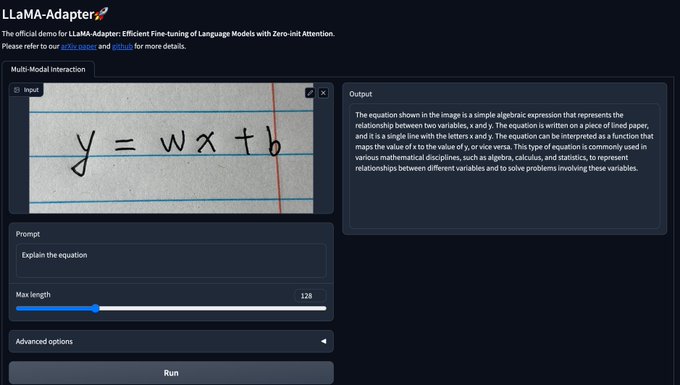

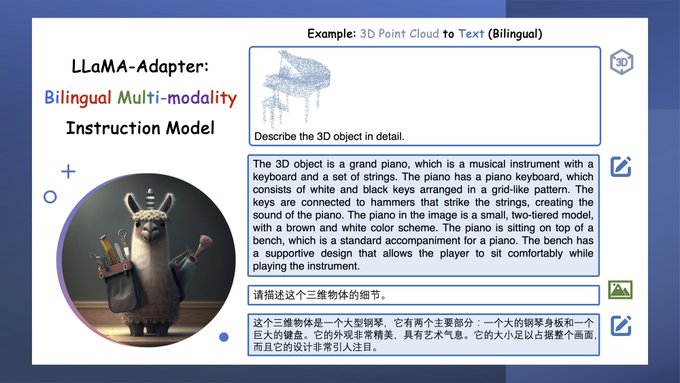

🔥Excited to release LLaMA-Adapter! With only 1.2M learnable parameters and 52K instruction data, LLaMA-Adapter turns a

#LLaMA

into an instruction-following model within ONE hour, delivering high-quality responses!

🚀Paper:

🚀Code:

24

174

820

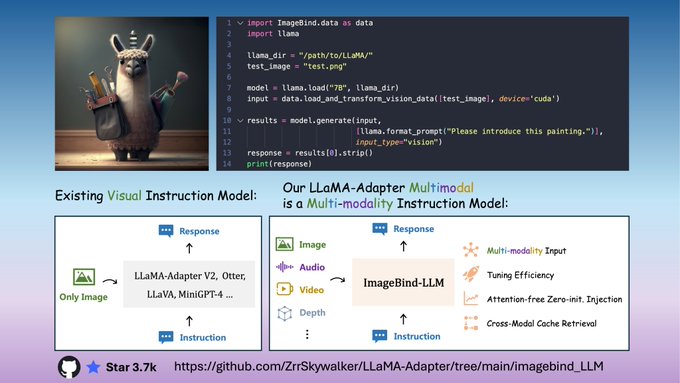

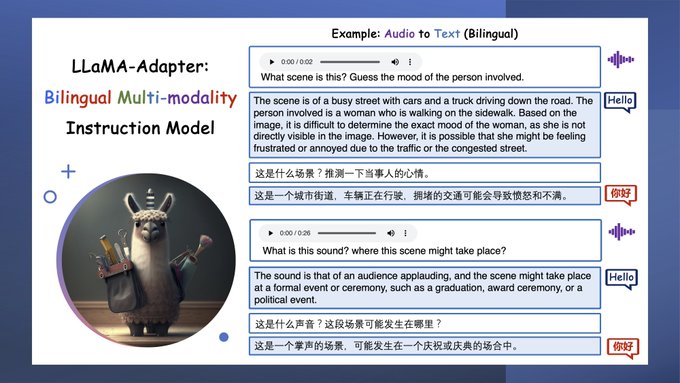

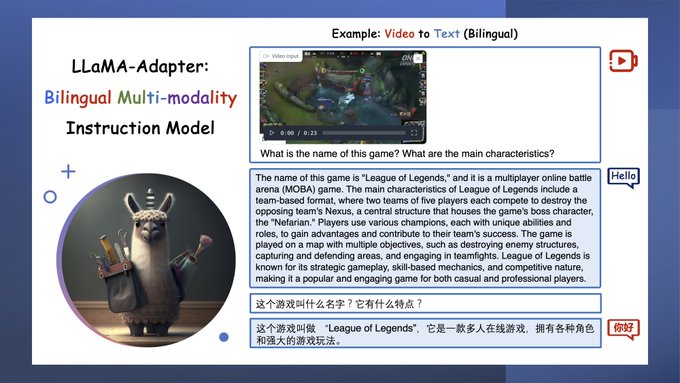

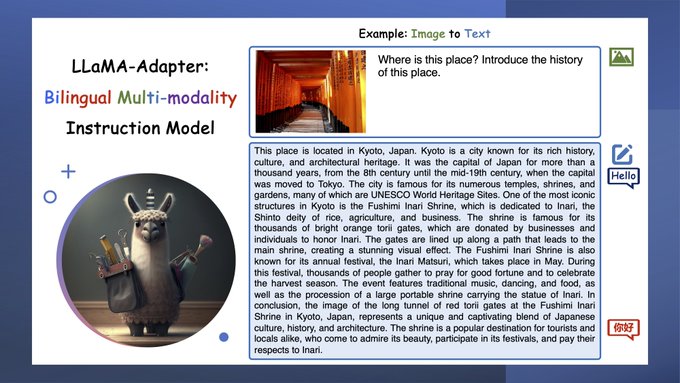

🔥Thrilled to release LLaMA-Adapter Multimodal!

🎯Now supporting text, image, audio, and video inputs powered by

#ImageBind

. 🧵6

💻Code for inference, pretraining, and finetuning ➕ checkpoints:

demo:

abs:

15

149

640

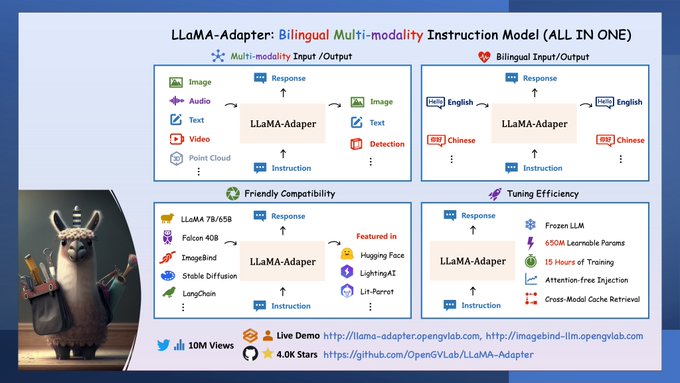

🎉Exciting news: LLaMA-Adapter is now fully unlocked! 🧵6

1⃣ As a general-purpose

#multimodal

foundation model, it integrates various inputs like images, audio, text, video, and 3D point clouds, while providing image, text-based, and detection outputs. It uniquely accepts the

22

166

603

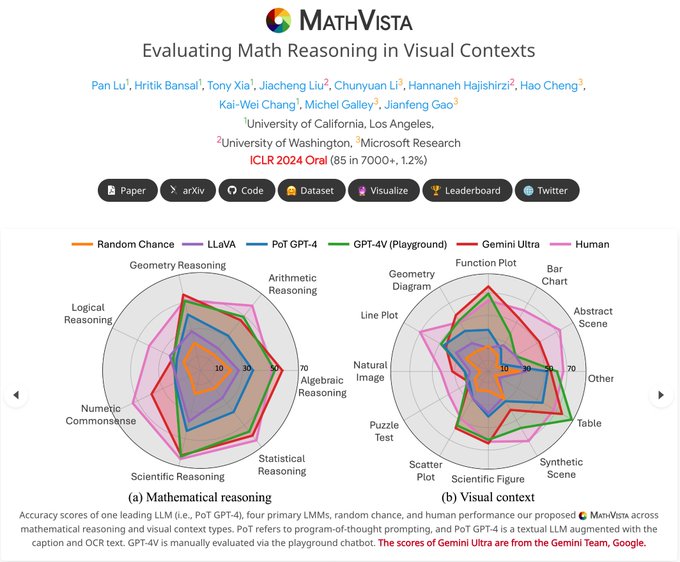

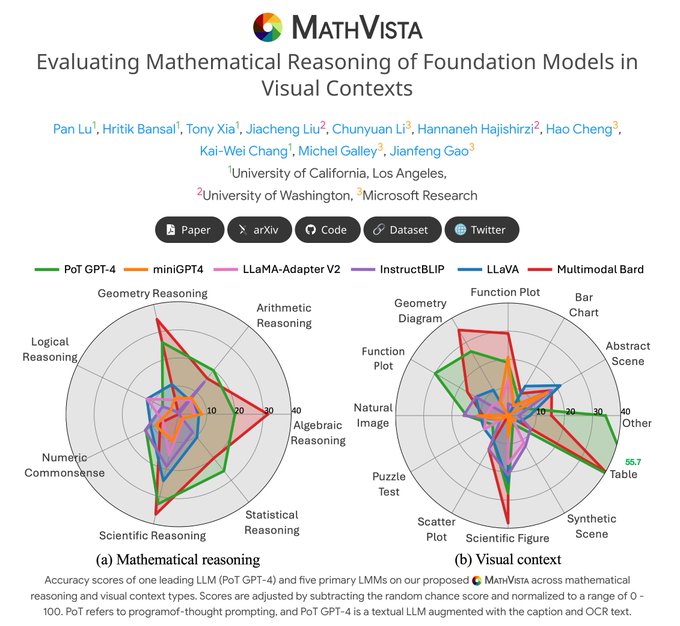

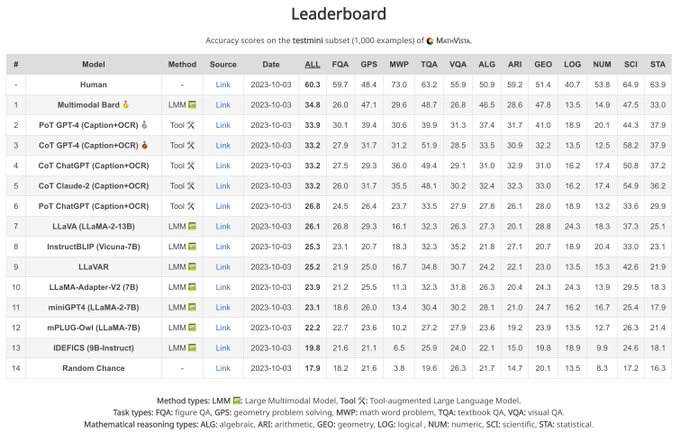

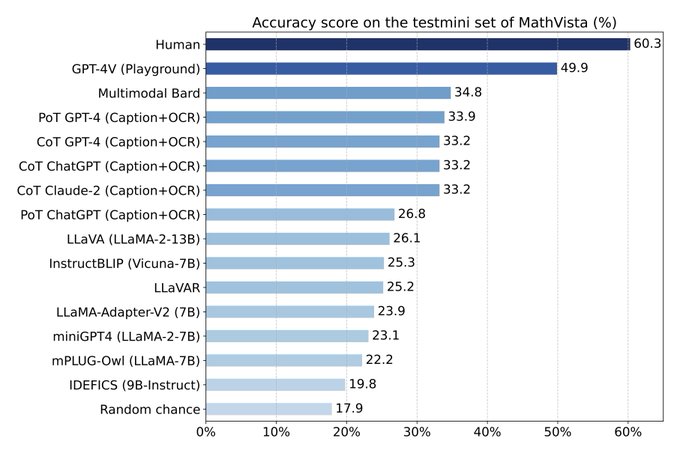

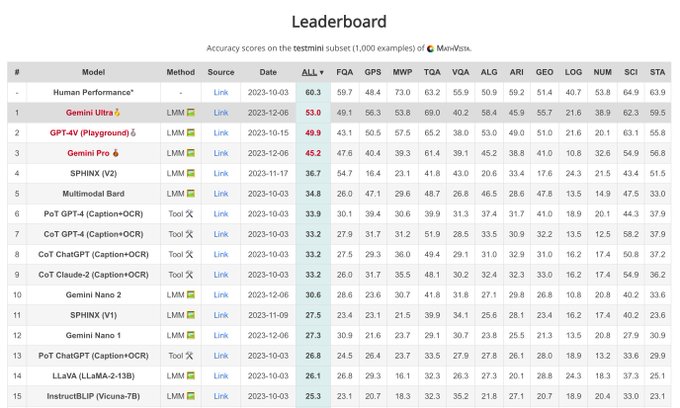

🚨 BREAKING:

@OpenAI

's new GPT-4o model outperforms humans on MathVista for the first time!

📊 Scores:

Human avg: 60.3

GPT-4o: 63.8

📖 Learn more:

OpenAI :

MathVista:

8

89

522

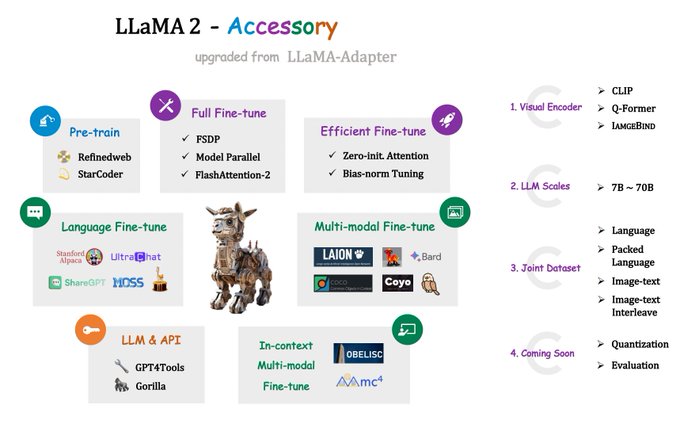

🚀Introducing

#LLaMA2

-Accessory - an advanced open-source toolkit for large language models.

Evolved from LLaMA-Adapter, we now support more datasets, tasks, visual encoders, and efficient optimization methods.🧠

🔗Code:

💡Key Features:

🎯 Pre-training

13

134

505

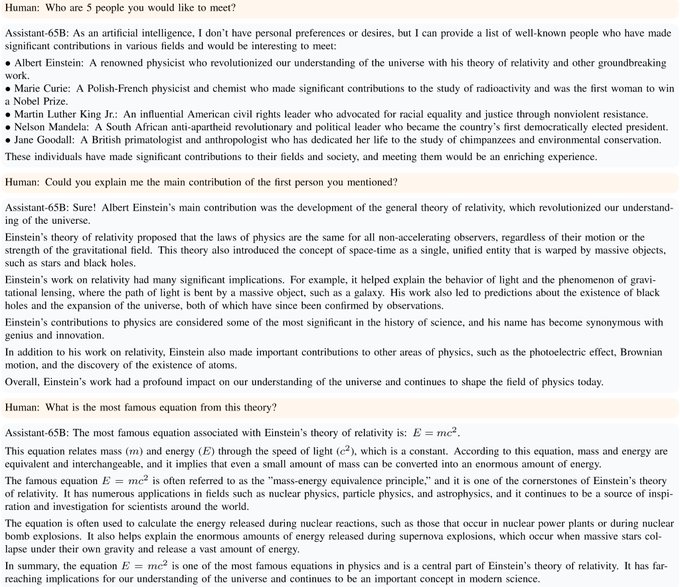

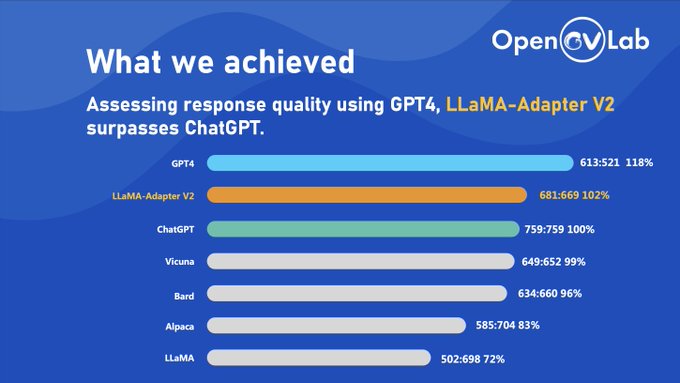

🚀65B LLaMA-Adapter-V2 code & checkpoint are NOW ready at !

🛠️Big update enhancing multimodality & chatbot.

🔥LLaMA-Adapter-V2 surpasses

#ChatGPT

in response quality (102%:100%) & beats

#Vicuna

in win-tie-lost (50:14).

☕️Thanks to Peng Gao &

@opengvlab

!

2/2

11

102

411

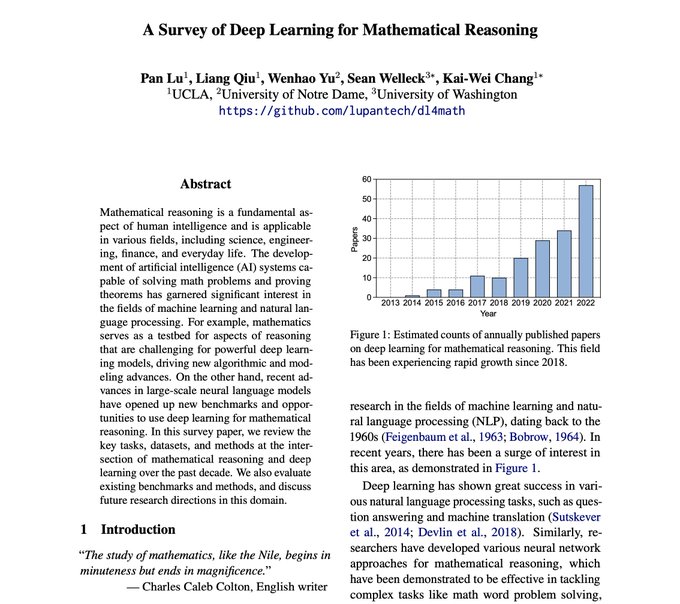

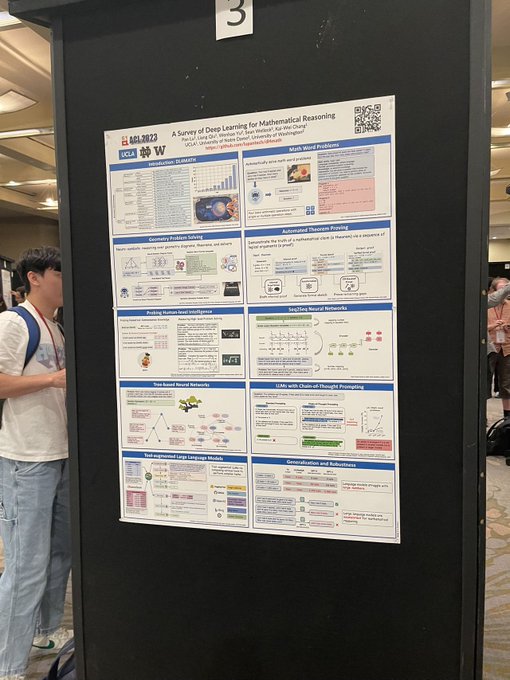

🎉New paper! The survey of deep learning for mathematical reasoning (

#DL4MATH

) is now available. We've seen tremendous growth in this community since 2018, and this review covers the tasks, datasets, and methods from the past decade.

Check it out now:

6

79

337

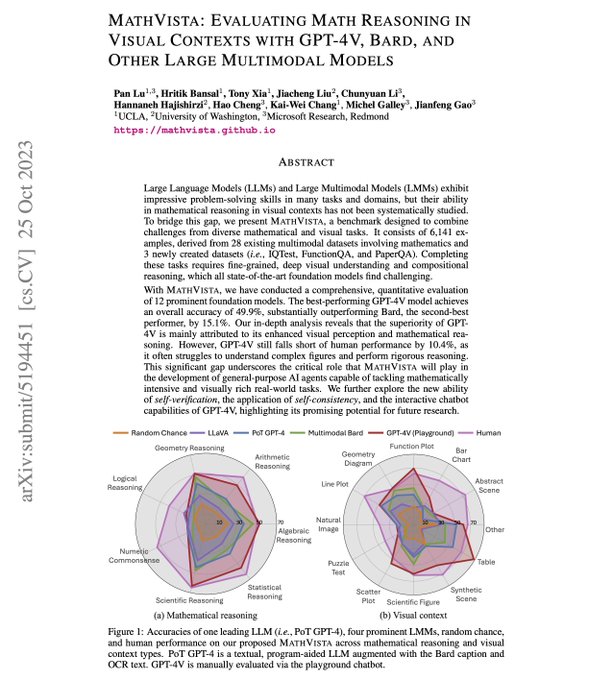

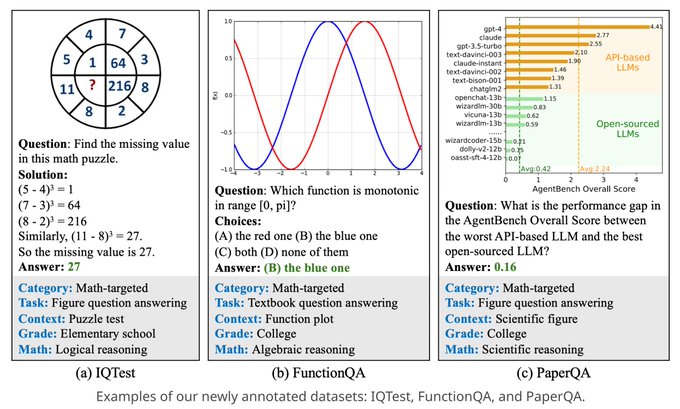

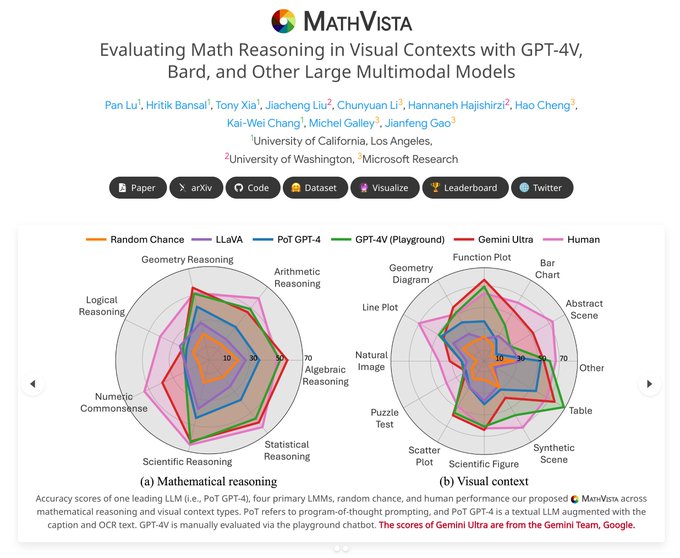

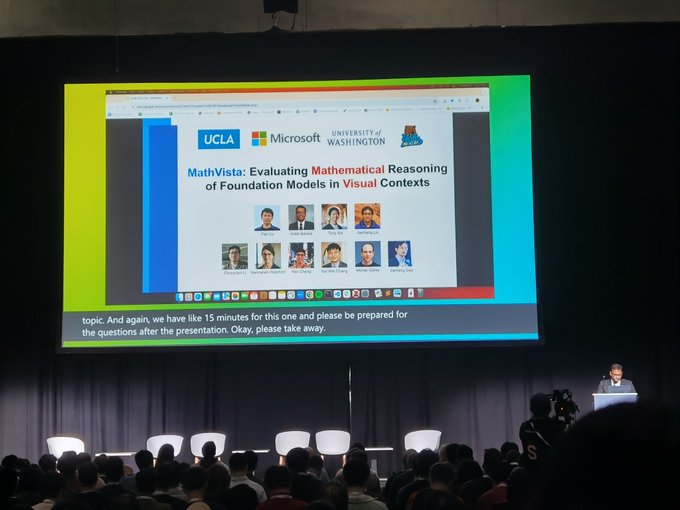

🚀Excited to release our 112-page study on math reasoning in visual contexts via

#MathVista

. For the first time, we provide both quantitative and qualitative evaluations of

#GPT4V

,

#Bard

, & 10 other models.

📄✨Full paper:

🔗Proj:

16

79

313

Congrats,

@JeffDean

@GoogleDeepMind

! Gemini 1.5 Pro has shown substantial improvements from Feb to May, scoring 63.9% on our

#MathVista

, outperforming humans and GPT-4o, which was released 4 days ago!🚀

AI Progress has never been this rapid and impressive!🌟

8

63

305

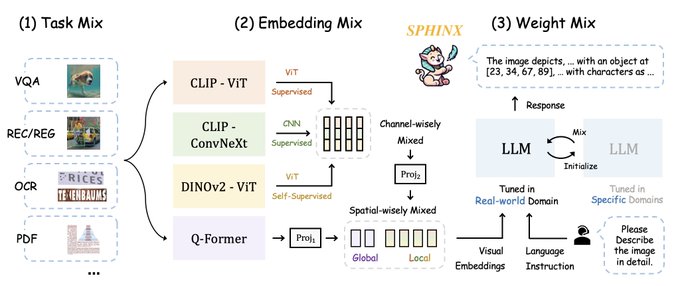

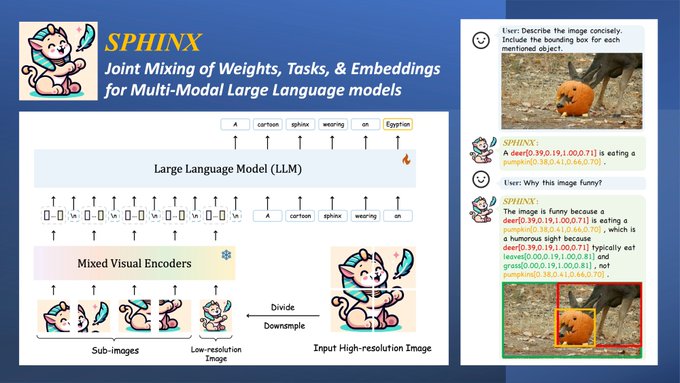

🚀 Introducing

#SPHINX

: The Next-Gen

#Multimodal_LLM

. Seamlessly blending Tasks, Embeddings & Weights for advanced multimodal reasoning. 🧵N

🔍Demo:

💻Code:

What's New with

#SPHINX

compared to

#LLaMA_Adapter

? 🆕

✅ Powered by the

12

67

274

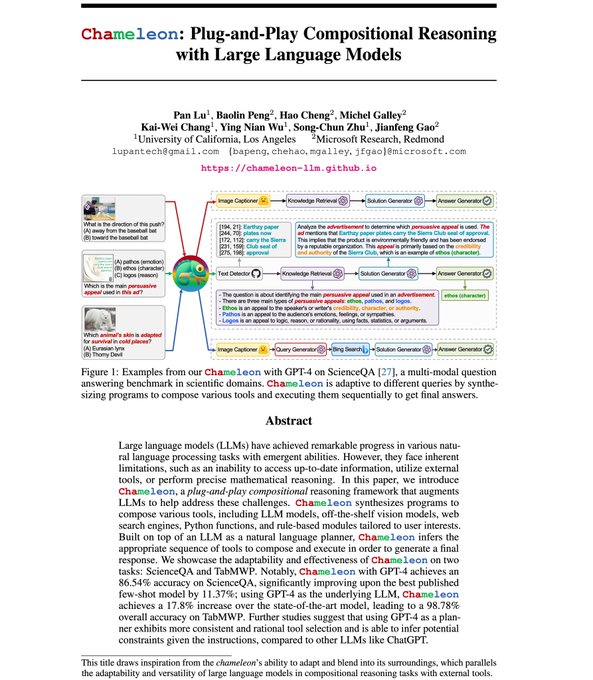

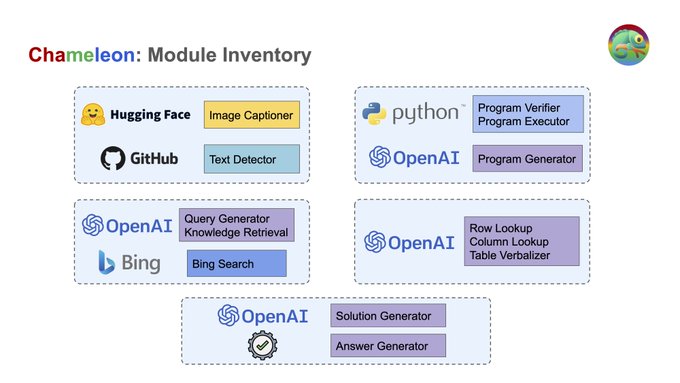

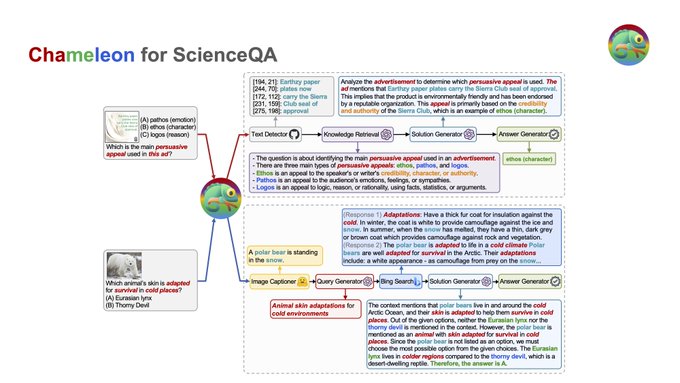

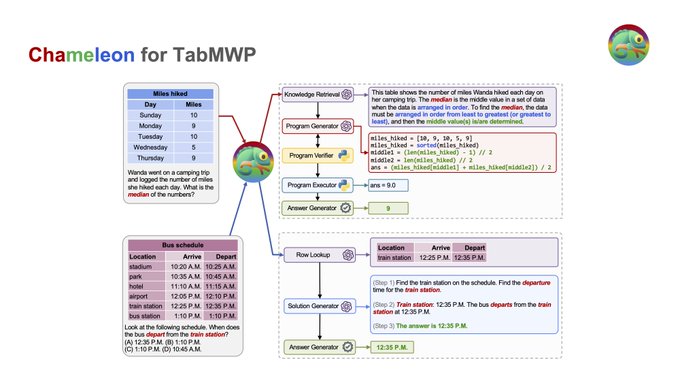

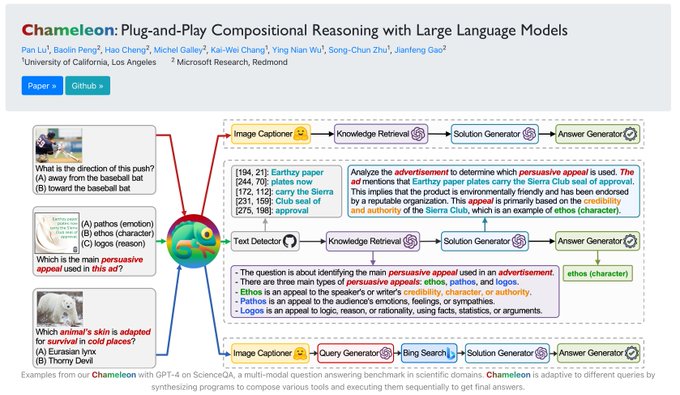

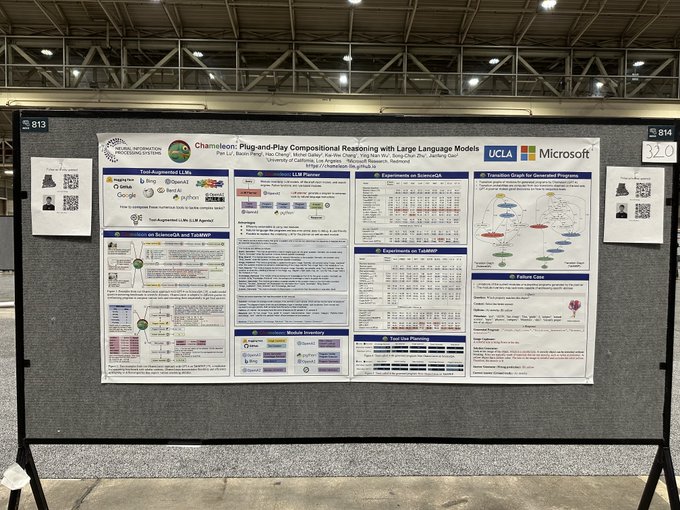

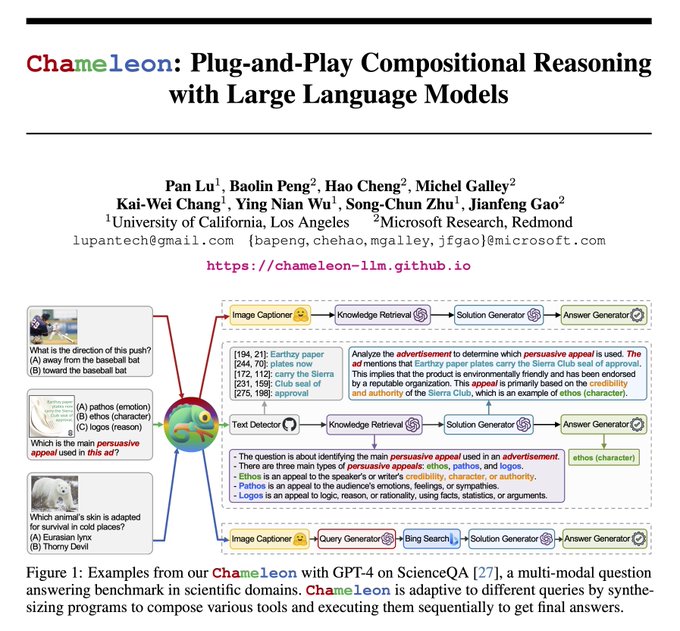

🚀Meet Chameleon! An innovative plug-and-play framework enhancing

#GPT4

and

#ChatGPT

like

#AutoGPT

for compositional reasoning, blending off-the-shelf tools with tailored LLM models 🔧✨🧠. New SOTA on

#ScienceQA

and TabMWP! 📈

🔗

📜

14

73

260

🚀 Introducing the LLaMA-Adapter, now available on

@huggingface

!

🔗

🎉 Feel free to explore and experiment with our LLaMA-Adapter. We're eager to hear your feedback!

💥 Stay tuned for the upcoming second version - even more powerful and feature-packed!

3

41

246

🎉 Thrilled to have our MathVista work accepted at

#ICLR2024

as an Oral presentation!

Explore our work:

🔍 Project:

🤗

@huggingface

Dataset

@_akhaliq

:

💻 Code:

Deepest gratitude to our shining team: 👏🌟

7

33

247

I am thrilled to defend my PhD and finally earn the title of Doctor 🧑‍🎓. It's been a truly rewarding journey at

@UCLAComSci

. I'm so fortunate and grateful for the invaluable mentorship from Prof.

@kaiwei_chang

@uclanlp

. He has always been incredibly encouraging, helpful, and

Congrats 🎉 to the newly titled Dr. Lu

@lupantech

on defending his thesis about "mathematical reasoning with language models"! 🧮 Pan has published a series of works on quantifying and improving math and scientific reasoning ability in LLMs. Some highlights:

1

5

82

42

2

233

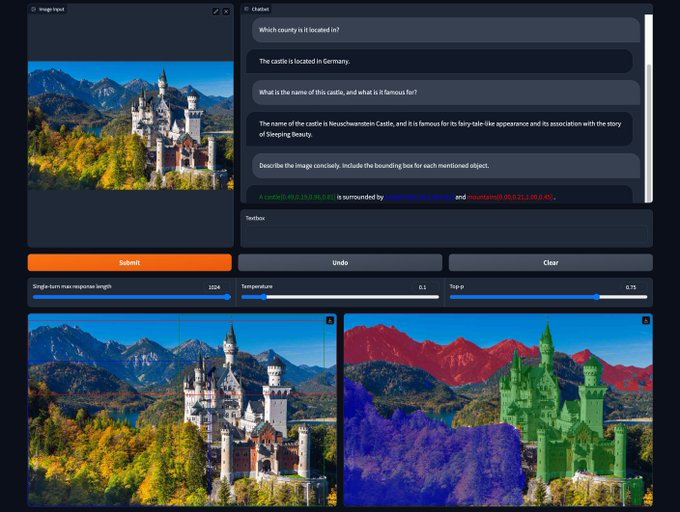

🔥 Introducing

#SPHINX

🦁: an all-in-one multimodal LLM with a unified interface that seamlessly integrates domains, tasks, & embeddings. 🧵N

👋 Explore the

@Gradio

demo

@_akhaliq

:

Dive into the open resources!

🤗 Model

@huggingface

:

13

52

211

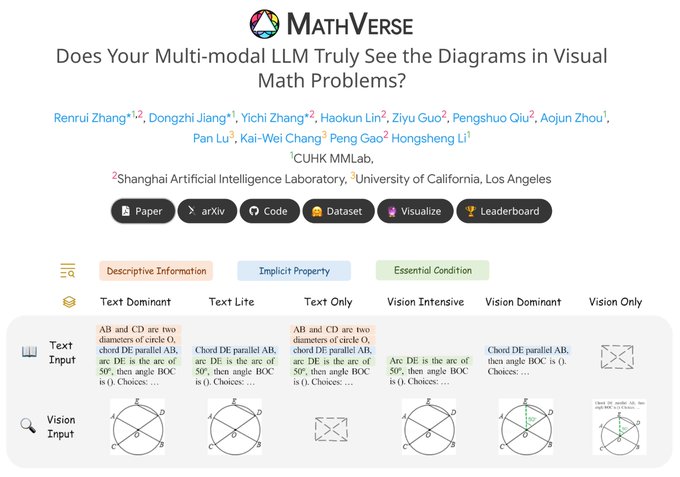

🔍 Do Multi-modal LLMs Truly Understand Diagrams in Visual Math Problems?

🧐 Interest in visual math reasoning has surged in the era of Multi-modal LLMs (

#MLLMs

). Although showing promising potential, it remains uncertain whether MLLMs utilize visual or textual shortcuts to

1

34

211

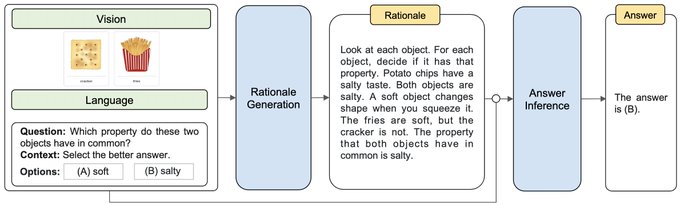

🤔 Ever wondered why foundation models like LLMs & LMMs are only tested on textual math reasoning benchmarks?

🔍 Dive into our

#MathVista

for a fresh perspective: !

🌟 Introducing

#MathVista

: A groundbreaking benchmark for visual mathematical reasoning –

13

49

186

🌟Last week, I was honored to present our latest work

#Chameleon

to the Reasoning Team at Google Brain

@DeepMind

. It's encouraging to witness tool-augmented LLMs like Transformer Agents

@huggingface

and Chameleon garnering significant attention. 🧵6

Slides:

4

33

165

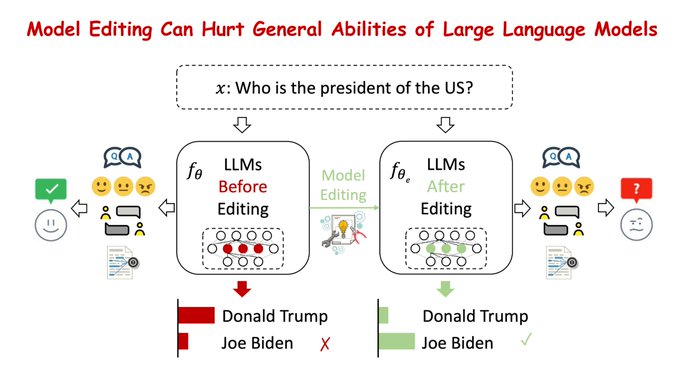

Model editing has been an effective way to reduce hallucinations in LLMs, instead of undergoing resource-intensive retraining.

🤯However, our study, led by

@JasonForJoy

,

@kaiwei_chang

, &

@VioletNPeng

, reveals that current methods inadvertently impair the general skills of LLMs.

1

30

159

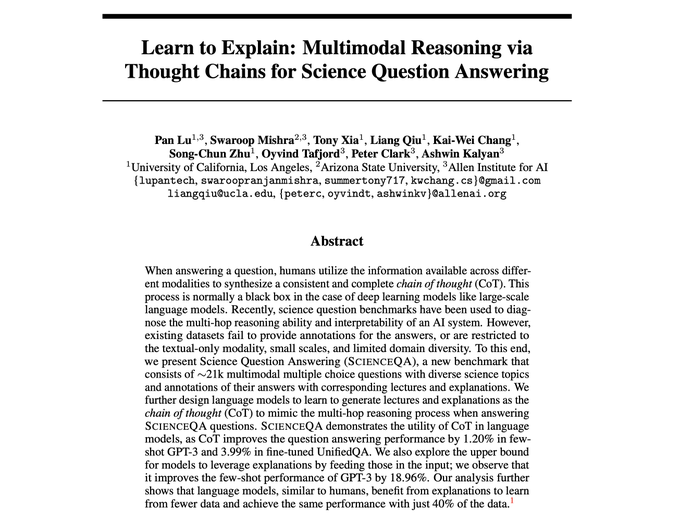

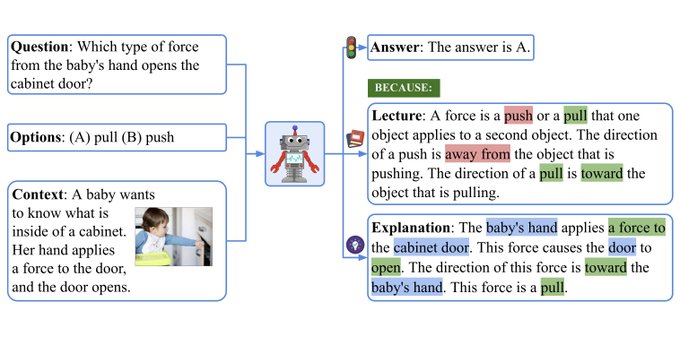

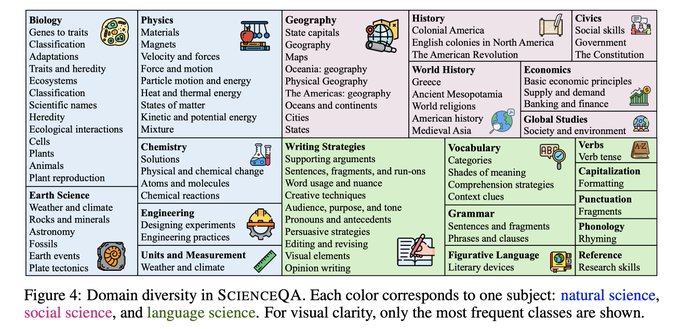

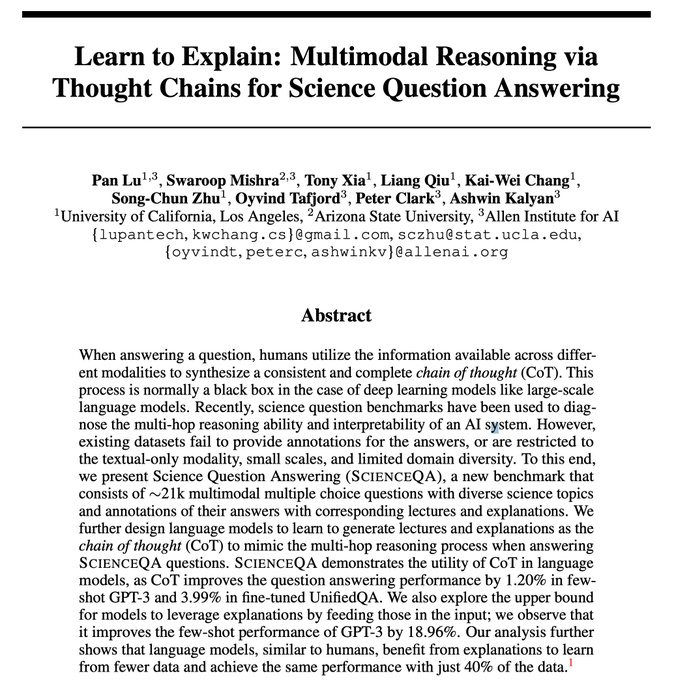

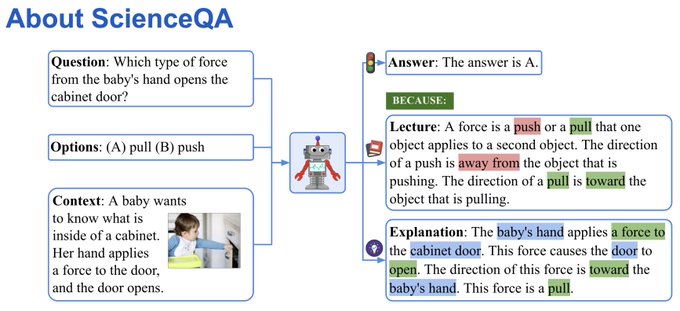

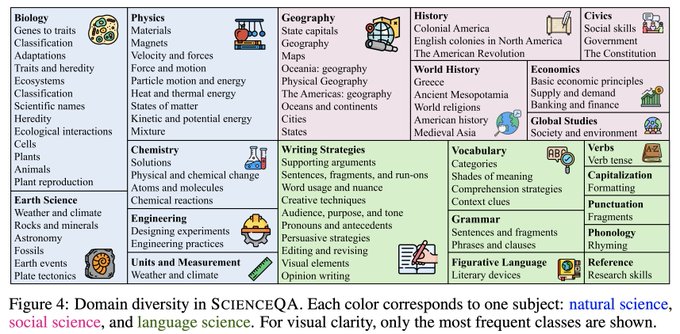

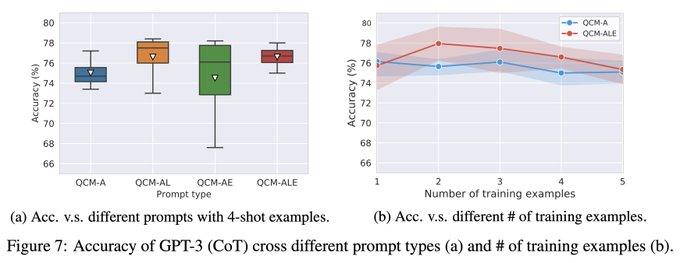

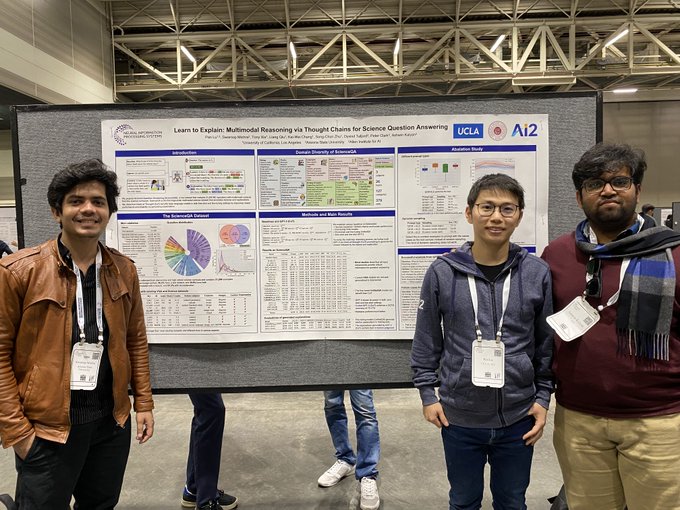

🚨Thrilled to have one paper accepted to

#NeurIPS2022

! We construct a new benchmark, ScienceQA, and design language models to learn to generate lectures and explanations as the chain of thought to mimic the multi-hop reasoning process. Data and code will be coming soon!

2

14

147

📢📢Excited to have one paper accepted to

#NeurIPS2022

! We present a new dataset, ScienceQA, and develop large language models to learn to generate lectures and explanations as the chain of thought (CoT). Data and code are public now! Please check👇👇

4

27

145

🔥 Exciting Update! We've manually evaluated

#GPT4V

using the playground chatbot on

#MathVista

, our newest benchmark for visual mathematical reasoning.

🚀

#GPT4V

soared with a 15.1%⬆️ improvement over

#Bard

, setting a new record at 49.9%! 🎉

🌐

Yet,

3

28

135

Our

#Chameleon

ranked

#1

among 1682 AI papers last week by

@alphasignalai

, emphasizing the significant impact our work has made.

#Chameleon

is a plug-and-play reasoning framework, enabling LLMs to utilize diverse tools.

🔗

🎉 More:

1

35

131

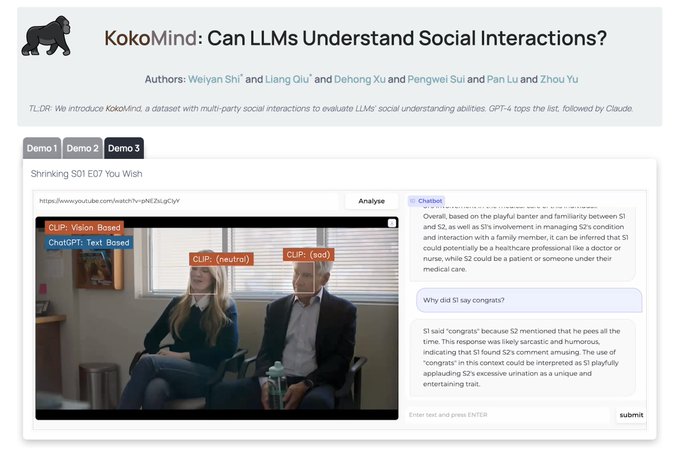

🤖 Could

#LLMs

develop emotional intelligence to understand human social interactions?

Introducing KokoMind 🦍: a benchmark to evaluate how

#gpt4

,

#chatgpt

, &

#claude

interpret conversations and relations, and contribute with insightful advice.

💥 Demo:

Put ChatGPT at a cocktail party🥂.

Can it

- understand people's conversations, gestures

- figure out their relations,

- and even chime in with social advice?

🦍Announcing KokoMind.

🌟Check out this demo! More at

#AI

#GPT4

#ChatGPT

#OpenAI

#Shrinking

🧵

13

88

305

4

26

127

Thrilled to be awarded the prestigious

@Bloomberg

#DataScience

Ph.D. Fellowship! 🏆 Grateful for the support and mentorship from

@TechAtBloomberg

to advance my AI research, especially in LLMs.

Heartfelt thanks to

@kaiwei_chang

@uclanlp

&

@UCLAComSci

for their tremendous support!

Congratulations to

@UCLAComSci

/

@UCLAengineering

+

@uclanlp

's

@lupantech

on being one of the 2023-2024

@Bloomberg

#DataScience

Ph.D. Fellows!

Learn more about Pan’s research focus and our latest cohort of Ph.D. Fellows:

#AI

#ML

#NLProc

#LLMs

0

0

5

5

4

111

Introducing

#STIC

: A Self-Training Method for Large Vision Language Models (LVLMs)! 🌟 🧵

STIC empowers LVLMs to self-train and enhance reasoning abilities using self-constructed preference data on image descriptions, eliminating the need for labeled data! 🚀📈

Straightforward

7

20

102

🚀 Introducing MuirBench! 🌟

A groundbreaking benchmark for robust multi-image understanding, featuring:

📸 12 diverse tasks

🗂️ 10 categories of multi-image relations

🖼️ 11,264 images

❓ 2,600 multiple-choice questions

Even top models like GPT-4o and Gemini Pro find it

2

14

100

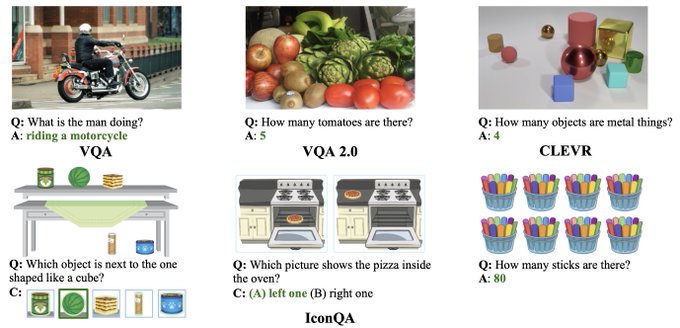

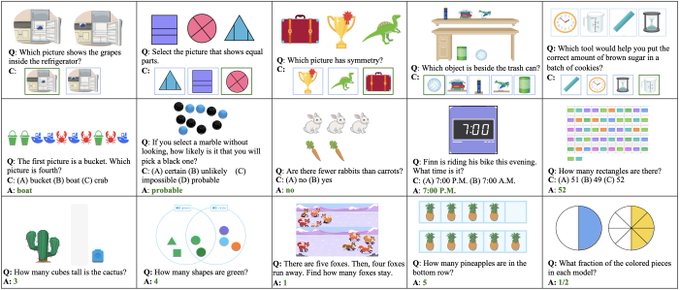

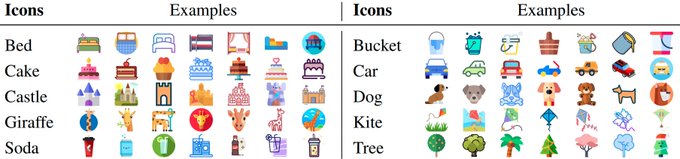

Can machines answer multi-modal math word problems? We proposed a new task, Icon Question Answering

#IconQA

, to deal with it!

Details are available below:

Paper:

Project:

Code:

3

25

96

Excited to meet

@ylecun

with the

@uclanlp

labmates

@JasonForJoy

,

@LiLiunian

, and

@ZiYiDou

! 😝

#NeurIPS2023

0

3

94

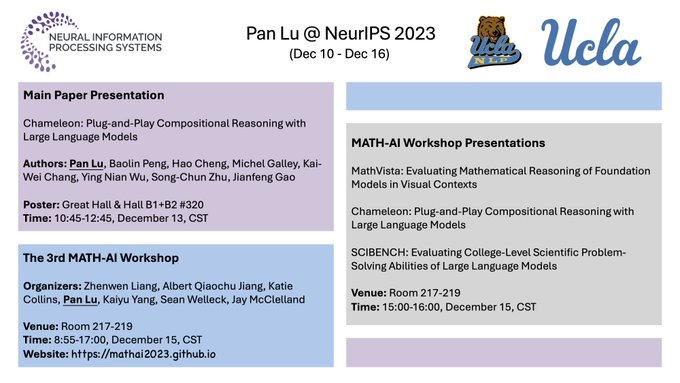

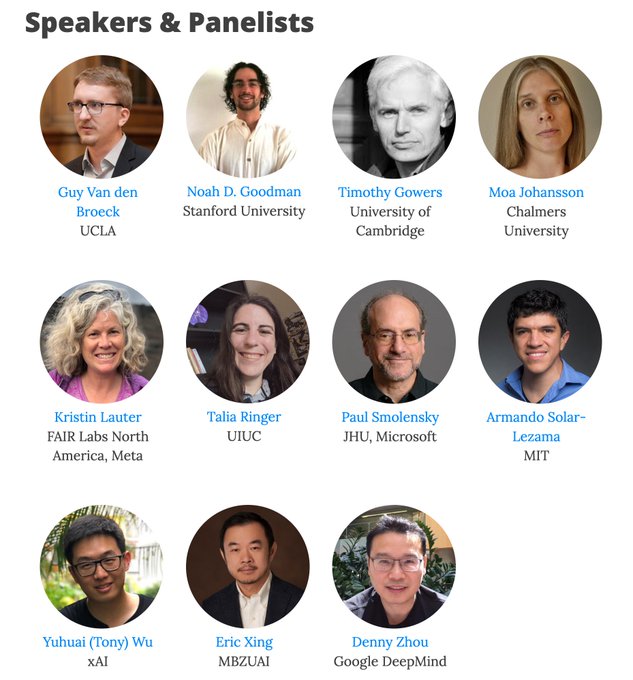

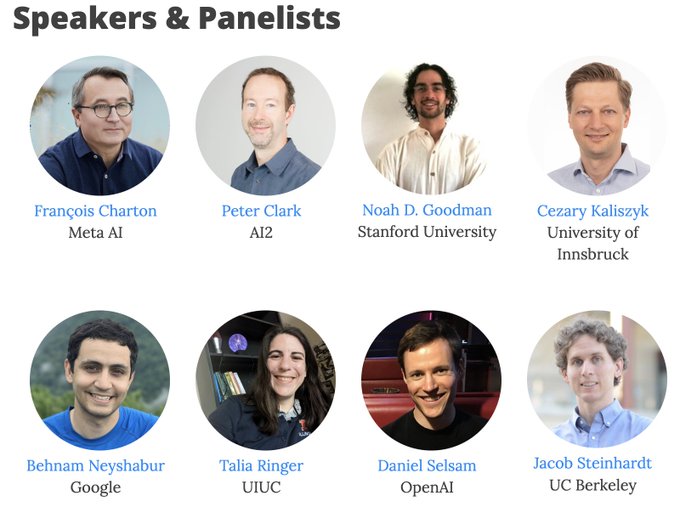

📢 Can't wait to see you at the 3rd

#MathAI

Workshop in the LLM Era at

#NeurIPS2023

!

⏰ 8:55am - 5:00pm, Friday, Dec 15

📍 Room 217-219

🔗

📽️

Exciting Lineup:

⭐️ Six insightful talks by

@KristinLauter

,

@BaraMoa

,

@noahdgoodman

,

4

21

88

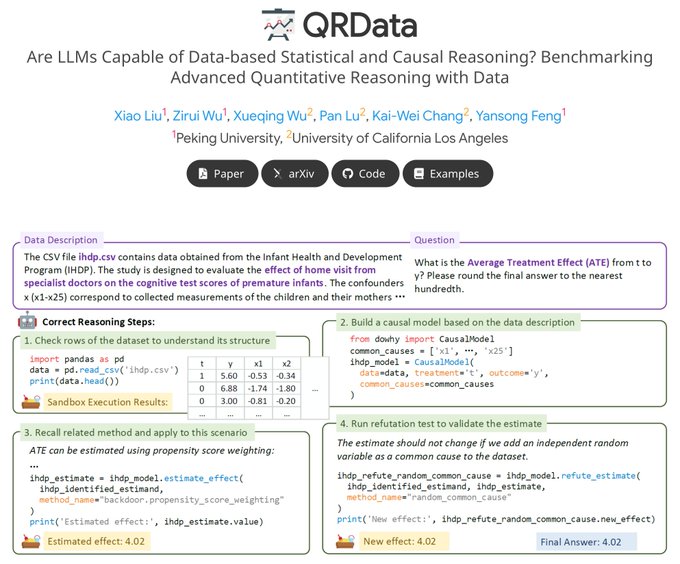

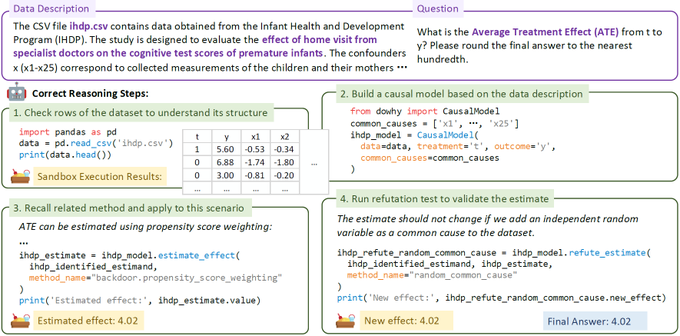

🤖In sciences and finance, we often engage in statistical and causal reasoning with structured data. Ever dreamed of

#LLMs

doing the heavy lifting, clearing the path from the maze of complex and error-prone tasks? 🤯

Hold that thought! 🛑 Our findings reveal that even GPT-4

0

21

88

I am honored to win the

@Qualcomm

Innovation Fellowship! A heartfelt thank you to

@kaiwei_chang

for your kind words and encouragement. I am grateful to our team, including

@liujc1998

and Professor

@HannaHajishirzi

. This achievement wouldn't have been possible without you all! ❤️

Congrats

@lupantech

for winning the 2023 Qualcomm Innovation Fellowship! 🐻 Pan is a rock star in math and scientific reasoning in NLP!

0

3

20

3

5

86

🔥Thrilled to announce that our LLaMA-Adapter has been featured in Lit-LLaMA by

@LightningAI

🦙🦙

🚀 Check out our LLaMA-Adapter here:

⚡️ Explore Lit-LLaMA on GitHub:

2

12

85

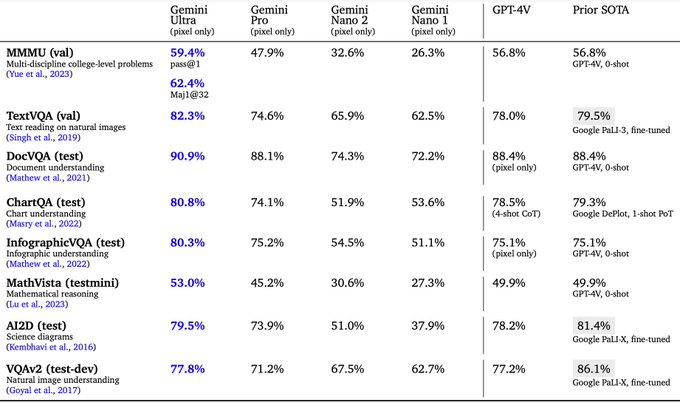

💥💥Update Alert! Radar graphs & leaderboard on

#MathVista

now feature detailed scores for the

#Gemini

family models. 🚀

🔍 Insight: Gemini Ultra leads the pack, outperforming GPT-4V by 3.1%! Yet, each model shines uniquely in various math reasoning & visual contexts.

🙏 Big

2

16

83

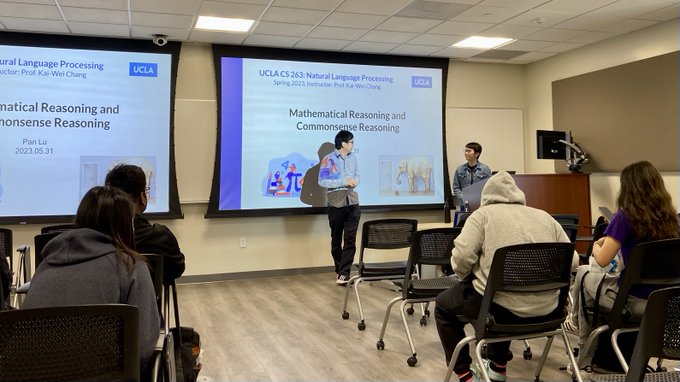

Privileged to have the opportunity to guest lecture in the

#NLP

course

@CS_UCLA

, instructed by Prof.

@kaiwei_chang

. I really enjoyed it and am so glad to share recent advancements in mathematical reasoning and commonsense reasoning.🧵3

🔗Check out the slides:

4

7

79

Hey Friends! 🎉 Excited to be at

#NeurIPS2023

! 🚀 I’ll be presenting a paper 📄, co-organizing the MATH-AI workshop 🧮, and sharing three collaborative projects. Can't wait to meet you in New Orleans 🎭 and explore the AI advancements in math, science, and more! 🤖🧪

👇1⃣2⃣3⃣4⃣

1

5

78

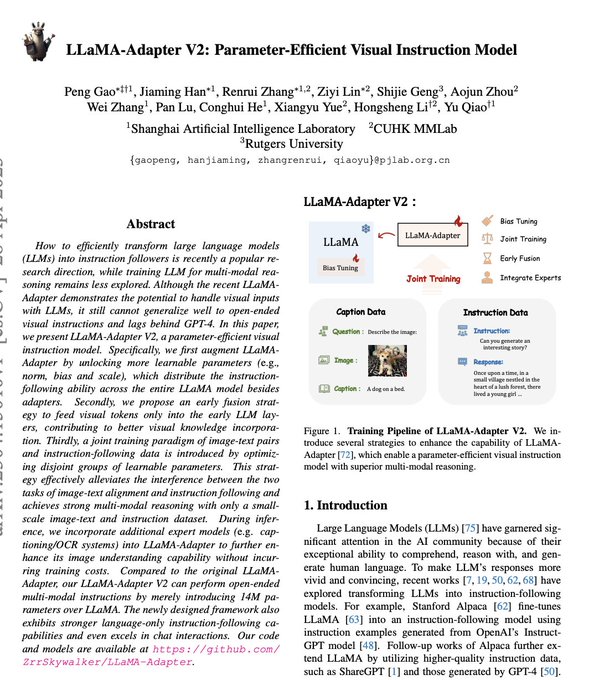

🦙Please check out LLaMA-Adapter-V2, performing open-ended multi-modal visual instructions by merely introducing 14M learnable parameters over 65B

#LLaMA

.

abs:

repo:

weights:

video:

0

22

78

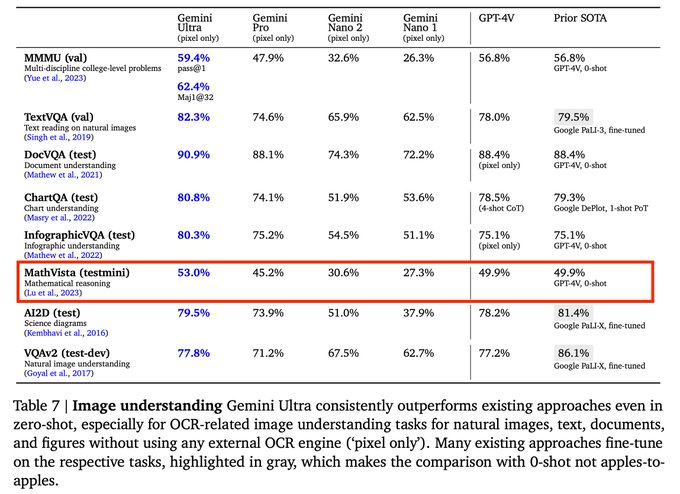

Excited to see the release of Gemini!

It is even more exciting to see that Gemini

@google

features MathVista for evaluating math reasoning in visual contexts and Geometry3K for evaluating geometry reasoning!!

Congratulations and thanks

@GoogleDeepMind

,

@GoogleResearch

, and

@Google

!

1

5

75

We're organizing the 3rd

#MathAI

workshop at

@NeurIPSConf

#NeurIPS

.

🚀 Excited for our speakers on AI for mathematical reasoning,

@guyvdb

,

@noahdgoodman

,

@wtgowers

,

@BaraMoa

,

@KristinLauter

,

@TaliaRinger

,

@paul_smolensky

, Armando Solar-Lezama,

@Yuhu_ai_

,

@ericxing

,

@denny_zhou

.

0

12

70

Today, we presented our

#MathVista

at

#ICLR2024

in Vienna! 🌟

We are thrilled by the tremendous progress in math reasoning in the era of LLMs and VLMs. MathVista has become one of the most reliable benchmarks for probing their abilities in visual math

5

9

69

Spent a fantastic weekend at Lake Arrowhead with the

@uclanlp

group! ❄️🏔️⬆️ Enjoyed scenic drives, delicious meals, engaging conversations, and brainstorming sessions. Truly inspiring! 🚗🥘😋💬 🖼️🧠💡

2

6

68

📢Great news! Our

#ScienceQA

dataset is gaining significant attention lately. It is the primary benchmark for the next-gen

#MultimodalCoT

reasoning system by

@AmazonScience

, and it's now included in

@huggingface

: .

More details: 👉

1

15

67

It is my great honor to be awarded the

#Bloomberg

Data Science Ph.D. Fellowship! Many thanks to the tremendous support from

@TechAtBloomberg

,

@UCLAComSci

, and Professor

@kaiwei_chang

@uclanlp

! Go Bruins🐻✊!

2

1

63

@_arohan_

@JeffDean

@GoogleDeepMind

Hi Rohan, thanks for pointing it out. We have updated the leaderboard with Flash. Congratulations to you and your team on the development of these impressive models! 🏆

3

7

59

🛠️🚀 Excited to share our latest paper: VDebugger! Discover how our novel framework debugs visual programs using execution feedback, boosting accuracy and interpretability by up to 3.2%!

Project:

Paper:

Code:

2

12

55

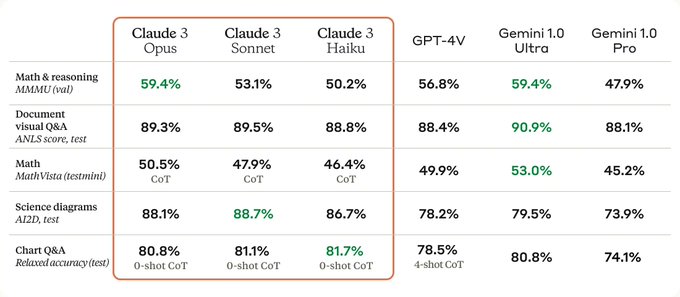

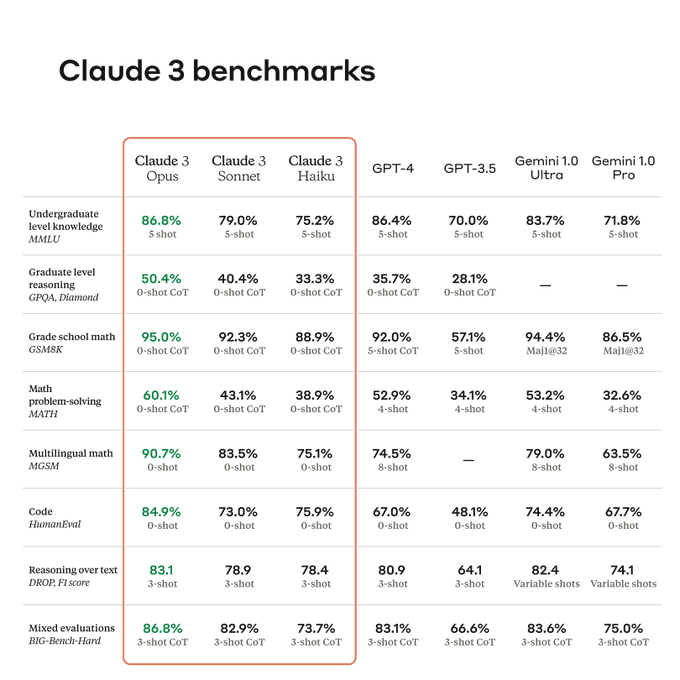

🤯So thrilled to have

@AnthropicAI

benchmark their latest, powerful Claude 3 models on our

#MathVista

for visual math reasoning!

It's encouraging to see the rapid progress in (multimodal) LLMs, especially in the math and science fields! 💥

🤗 Our

@huggingface

Data:

1

7

52

🔥Thrilled to see our

#LLaMA

-Adapter featured in

@HuggingFace

's "Spaces of the Week"! 🎉

Introducing LLaMA-Adapter V2, our cutting-edge multi-modal instruction model! Explore demo examples here: 💡

🚀Stay tuned for the technical report and model release!

0

10

51

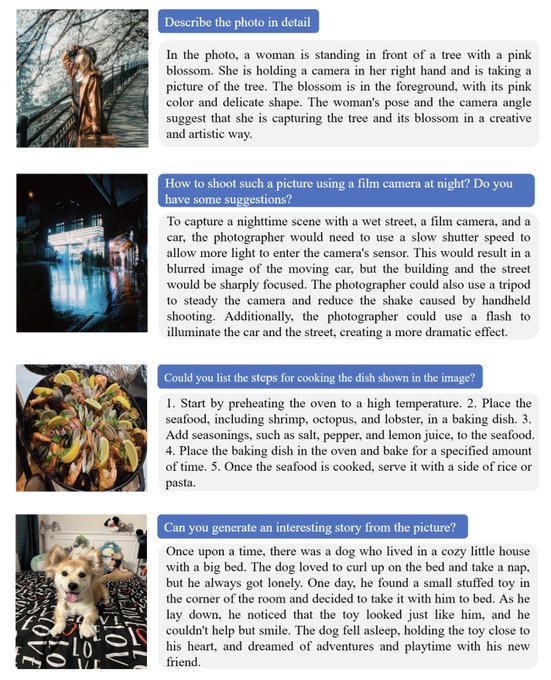

🚀 Our

@Gradio

demo now supports diverse vision-language tasks:

1️⃣ Visual Question Answering (VQA)

2️⃣ Multi-level Dense Caption

3️⃣ Referring Expression Comprehension

4️⃣ Relationship Grounding

5️⃣ Grounding Captions

6️⃣ Object Detection

7️⃣ Human Keypoint Detection

8️⃣ Text Detection

0

11

48

It has been a wonderful day at Open House

@allen_ai

🍺🍖🌊. I met a lot of great people and got inspiring advice. Many thanks to the great efforts of the operations team for preparing all of it!

0

2

50

🎉 Exciting news! Our

#MathVista

is excelling with the latest advances in vision-language models (VLMs). Grok-1.5V by

@xai

achieves a 52.8% score, surpassing leading models such as GPT-4V, Claude 3 Opus, and Gemini Pro 1.5!

🔗 Visit our project page:

👀

1

4

46

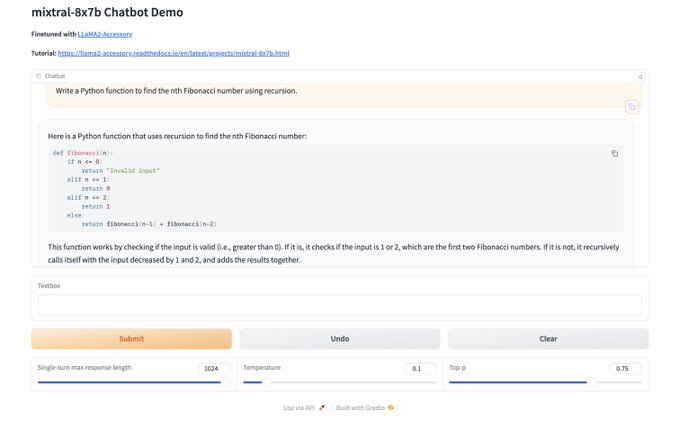

Congratulations and thanks to

@MistralAI

for releasing the

#MoE

model to the community.

Our LLaMA2-Accessory now features Mixtral-8x7b with a chatbot demo, available on

@Gradio

!

Try the Chatbot:

http://106.14.127.192/

For more implementation details:

📖 Documentation:

0

10

43

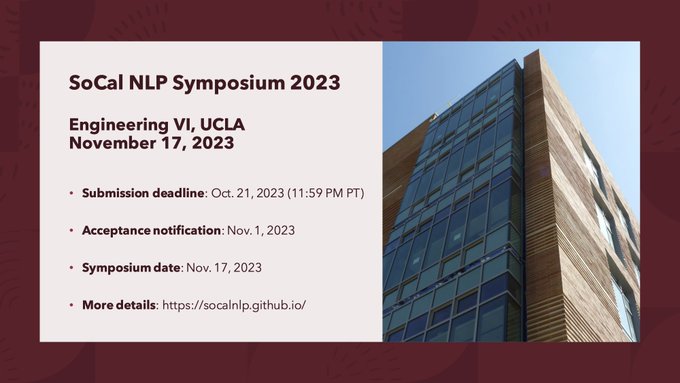

📢 Attention

#NLProc

community!

Submit and showcase your research at the 4th Southern California Natural Language Symposium (SoCal NLP) 📜

🗓️ Submission Deadline: Oct. 21, 2023, 11:59 PM PT

🔗 More info:

#SoCalNLP

#CallForPapers

1

13

45

🚀 Excited to see Claude 3.5 Sonnet by

@AnthropicAI

achieve a new SOTA on

#MathVista

with 67.7%, a 19.8% improvement over Claude 3 Sonnet! 📈🎉

Learn more:

📝 Blog:

🔢 MathVista:

1

8

43

Gratitude to our esteemed speakers, insightful panelists, engaged attendees, and dedicated organizers (

@LiangZhenwen

,

@AlbertQJiang

,

@katie_m_collins

,

@KaiyuYang4

,

@wellecks

, and

@JLMcClelland

) for making the 3rd

#MATHAI

workshop at

#NeurIPS2023

an extraordinary success!!

1

4

42

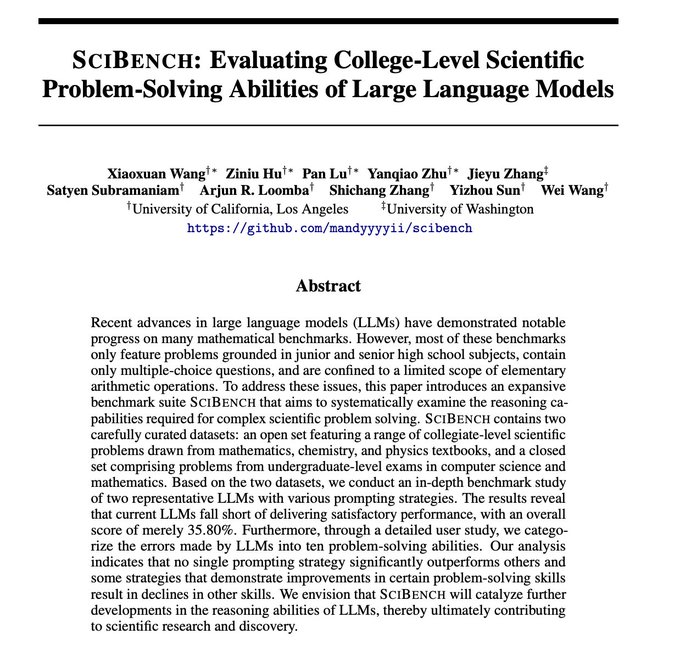

🚀We've just launched

#SciBench

, a sophisticated, college-level benchmark. It uniquely evaluates the capabilities of LLMs in tackling scientific problem-solving.

1

8

40

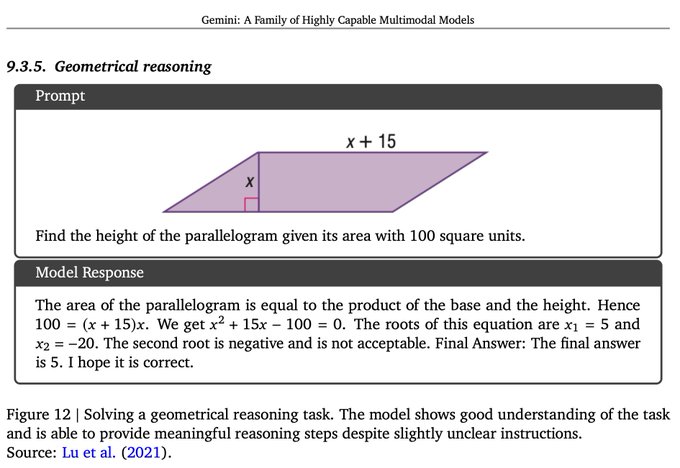

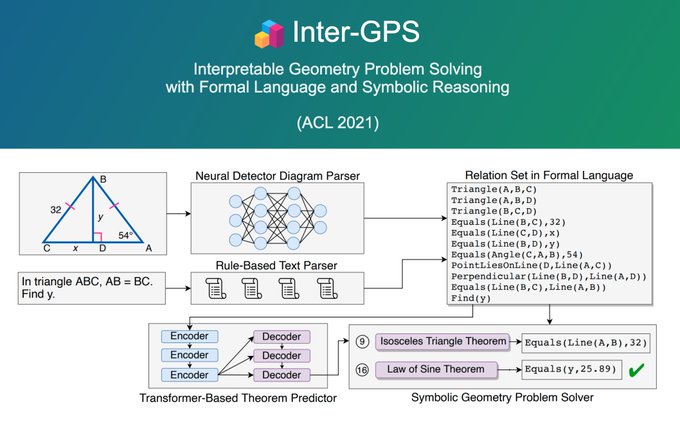

In 2021, we explored early research in geometry: our Inter-GPS, a neuro-symbolic solver, reached an average human-level score for the first time.🎉

Now,

@GoogleDeepMind

's AlphaGeometry marks a historic breakthrough: Olympiad-level skill!🚀

🔎For more:

🔗

1

8

36

Happy to receive the NeurIPS 2022 Scholar Award! I really appreciate all the support I get from the community, and I will devote myself to making contributions to the community!

@NeurIPSConf

🍻See you in New Orleans!

1

1

38

Still buzzing from the

#CopilotPCs

launch yesterday, and now

@Microsoft

drops the efficient Phi-3-Vision model! 🚀 Thrilled to see three of our past projects featured in their benchmarks!

Encouraged to continue pushing the boundaries of AI research! 💡📊🔍

ScienceQA -

2

5

39

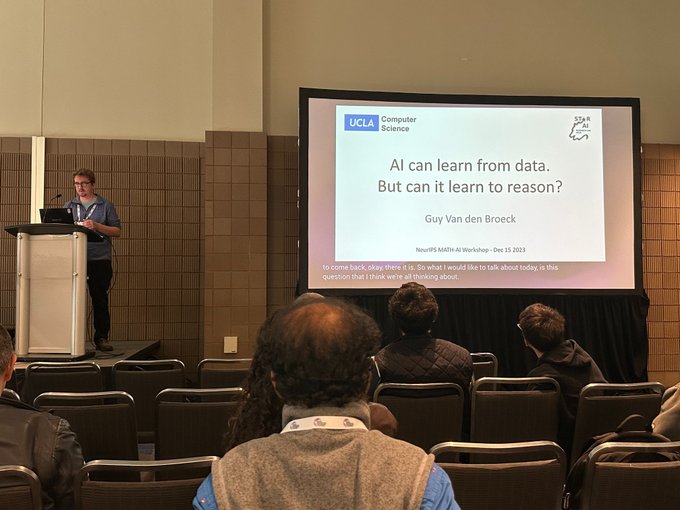

⭐️ Awesome!

@guyvdb

from UCLA is presenting the talk "AI Can Learn from Data. But Can It Learn to Reason?" offering insights from a logical and probabilistic perspective!

#MATHAI

#NeurIPS23

#Logic

#Reasoning

#AI

0

3

37

🚨 Attention! I'm presenting the 🦎

#Chameleon

paper at Booth 320 from 10:45 to 12:45 at

#NeurIPS23

. You're welcome to stop by for a chat! ☕️😉🤖🧲💡

For more details, check out our project at .

2

3

34

🚀

@google

is introducing new updates to aid in learning math and science, especially in visual contexts: .

💥 We're proud to spotlight our commitment to math and science over the past years, with projects like

#MathVista

,

#Chameleon

, and

#ScienceQA

.

1️⃣

0

10

33

It is remarkable that Gemini achieves a new SOTA of 53.0% on MathVista, a challenging benchmark for math reasoning in visual contexts. We are honored that our proposed

#MathVista

is advancing the development of the newest and most capable AI models.

0

3

34

🧲Please stop by our poster on deep learning for math reasoning at Poster Session 2

@aclmeeting

#ACL2023NLP

.

❤️Thanks to co-authors for their great contributions:

@liangqiu_1994

,

@wyu_nd

,

@wellecks

, &

@kaiwei_chang

.

abs:

github:

0

5

34

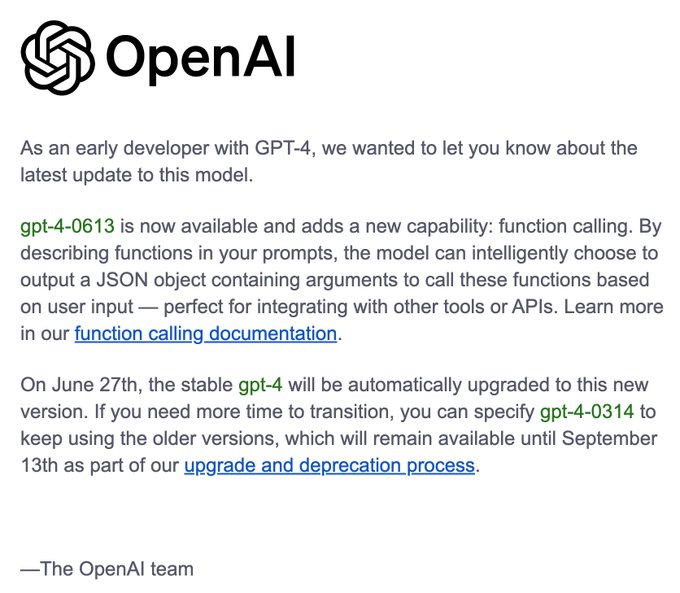

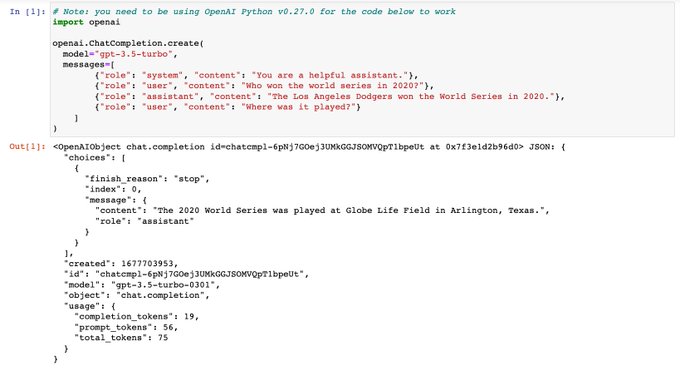

🚀OpenAI is releasing the latest function and tool-calling update for

#GPT4

!

Just two months back, we introduced

#Chameleon

🦎, an innovative compositional reasoning framework. It uses LLMs as a planner to generate diverse programs, integrating various tools including LLMs,

0

6

33

It was great to attend the

#NeurIPS2022

poster session and present our work

@UCLA

@ASU

@allen_ai

in person🎉. I’m excited that I met many great people and got plenty of insightful advice and comments. Thanks to everyone for your interest in our work!🍻

0

4

32

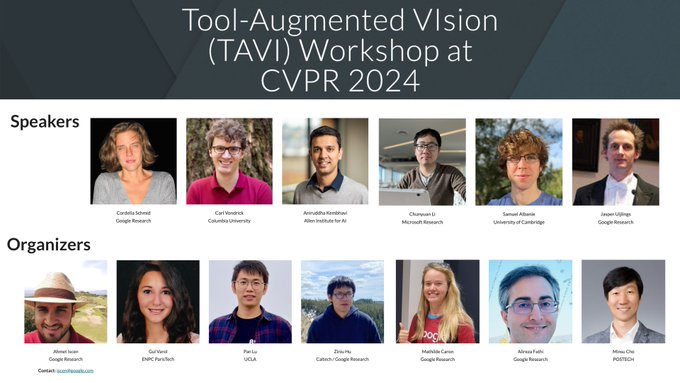

😜Looking forward to seeing you at the 1st Tool-Augmented Vision (TAVI) Workshop at

#CVPR2024

in Seattle.

🔍For more details, please visit the website:

0

4

29

🤔Naming things is hard!!

🦎

#Meta

's new work shares the same name as our NeurIPS 2023 paper from one year ago: Chameleon: Compositional Reasoning with LLMs.

Coincidence or great minds thinking alike? 😈 Dive into our work here:

3

2

29

We're dedicated to

#OpenSource

, confident that it will profoundly enrich the community.🌟

Thrilled to see our recent work, LLaMA-Adapter, and its subsequent developments positively impacting the community.🚀

Stay updated with continuous improvements: 📌

0

7

26

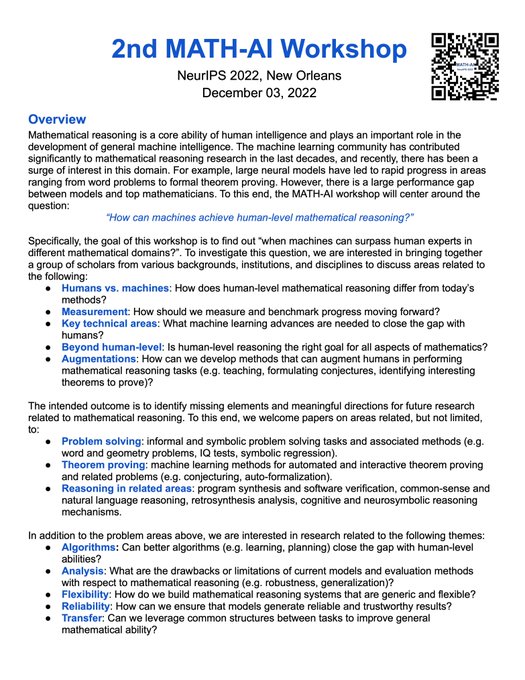

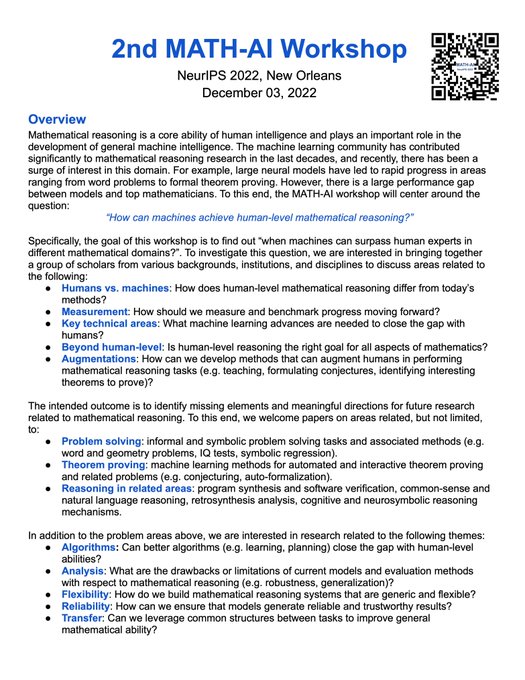

🚨Call for Papers🚨 Submission to the

#NeurIPS2022

MATH-AI Workshop will be due on Sep 30, 11:59pm PT (2 days after ICLR😆). The page limit is 4 pages (not much workload🤩). Work both in progress and recently published is allowed. Act NOW and see you in

#NewOrleans

!🥳🥳🍻

0

9

26

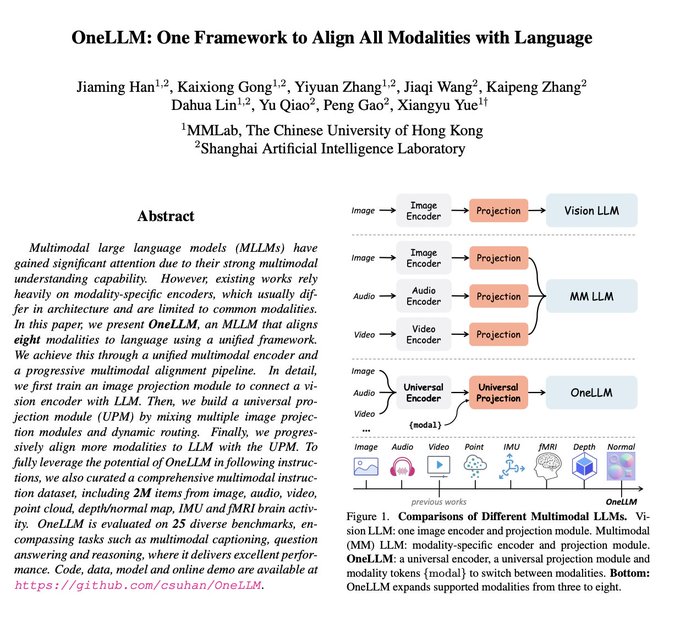

One model to align multiple modalities. Looking forward to seeing the live demo.

0

4

25

An excellent blog on Controllable Neural Text Generation from

@lilianweng

! It's important to consider ways to reduce the hallucinations of LLMs and better reflect human intentions, especially given their current success and limitations.

👉

#ChatGPT

#LLM

0

3

26

Thrilled to join the live event, thanks to

@LightningAI

's kind invitation! 🌟 Peng and I will share the insights behind the LLaMA-Adapter series.

📅 event:

📚 abs-1:

📚 abs-2:

💻 code:

0

7

25

Excited to be at

#AAAI23

on-site! Can't wait to catch up with old friends and make new ones.

📢I'll give an oral presentation on

#ScienceQA

at

@knowledgenlp

Workshop on Monday, Feb 13, 2:15-3:15 pm in Room 144B.

If you're around, let's grab a coffee!

0

1

24

📢📢Welcome to the 2nd

#MATH

-AI workshop tomorrow (Sunday, Dec 03) in Rooms 293-294 at

#NeurIPS2022

if you are interested in math reasoning and AI! There are 6 invited talks, 3 contributed talks, 1 poster session, and 1 panel discussion.

🪜Full program:

0

7

23

Excited to see the breakthrough achieved by

@Apple

's MM1 model, as evidenced by our

#MathVista

, the comprehensive benchmark for math reasoning in visual contexts!

0

1

20

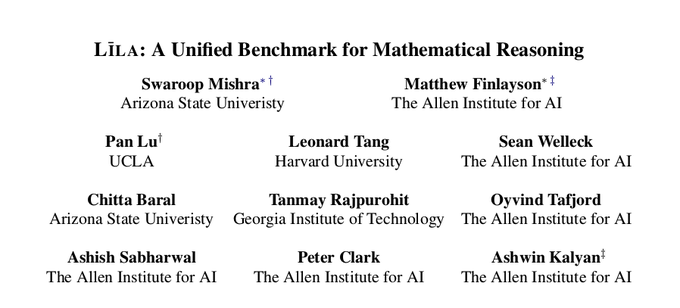

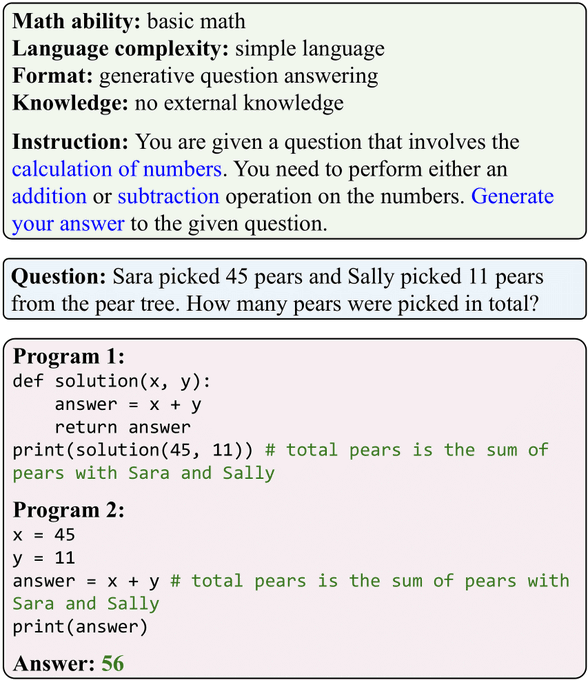

🧐Looking for a well-designed benchmark for mathematical reasoning? Lila 📜 is your next best option! 🥳🥳

0

3

18

Excited to organize the 2nd MATHAI workshop

@NeurIPSConf

with our great team❤️! The workshop will be in New Orleans🏙️ in person, on December 03, 2022. The submission is open now🧲!

#NeurIPS2022

0

2

18

🥳Thrilled to be in New Orleans for

#NeurIPS

! This year, I will present one paper (ScienceQA) + 2 WS papers (PromptPG, Lila). And I am co-organizing the 2nd MATH-AI workshop!

☕️Excited to meet you! DM me if you want to grab a coffee and chat about MathAI, LLMs, and trustworthy NLP!!👇

1

1

17

Evaluating response quality with GPT-4, LLaMA-Adapter-V2 outshines ChatGPT. It triumphs over

#ChatGPT

in response quality, scoring 102%:100%! 🚀

2

4

14