Elias Bareinboim

@eliasbareinboim

Followers: 14,204 · Following: 567 · Media: 133 · Statuses: 2,312

Professor of Causal Inference, Machine Learning, and Artificial Intelligence. Director, CausalAI Lab @ Columbia University.

New York, NY

Joined January 2010


Interested in Causal Inference & Reinforcement Learning? Consider attending my @icmlconf tutorial on the basic principles & tools of Causal Reinforcement Learning (CRL). I’ll discuss many new & pervasive learning challenges/opportunities within CRL. Link:


The WHY-21 workshop "Causal Inference & Machine Learning: Why now?" is currently accepting submissions, . Our goal is to bring CI & ML researchers together to discuss the nextgen AI! (joint w/ @yudapearl, Y. Bengio, T. Sejnowski, @bschoelkopf) @NeurIPSConf


The WHY'21 workshop "Causal Inference & Machine Learning: Why now?" will take place this Monday at #NeurIPS2021. Our goal is to bring CI & ML researchers together to discuss the nextgen AI! Program (joint w/ @yudapearl, @bschoelkopf, Y Bengio, T Sejnowski)


1/2 Thanks to the 5k new followers in this past year! To celebrate, two announcements -- First, we are beta-testing a tool called ‘Fusion’, which offers an easy-to-use way of doing causal inference from 1st principles (see #bookofWHY). Subscribe here: .


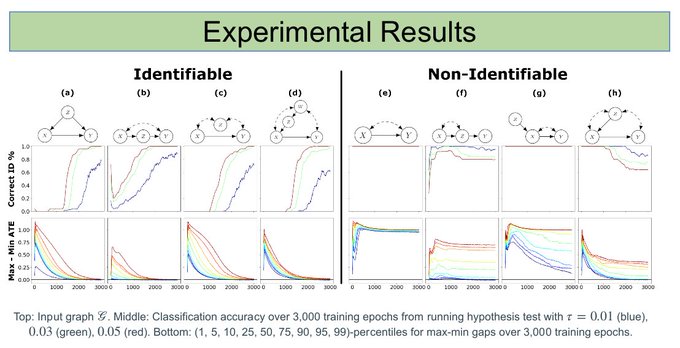

1/6 If you are interested in causal inference & machine learning, I am excited to share some of the latest work of the causal artificial intelligence group that will appear at @NeurIPSConf this year. We welcome you to stop by and chat with us at the following times… #NeurIPS2021


Our work on general identifiability was just selected for the UAI-19 Best Paper Award (1 out of 450)! The paper provides the conditions necessary to reason with experimental distributions; e.g., one can combine two dists, do(X1) & do(X2), to answer queries about the joint effect, do(X1, X2).

UAI 2019 best paper award to research on Causal Inference: "General Identifiability with Arbitrary Surrogate Experiments" by Sanghack Lee, Juan D. Correa and Elias Bareinboim (@eliasbareinboim). #UAI2019.


Hi Judea, thank you for your kindness and for sharing your wisdom & friendship during this long journey.

I feel humbled and blessed to have the opportunity to study & research the foundations of causal inference and AI with you.

Congratulations to @eliasbareinboim for getting tenure at Columbia University. Simultaneously, congratulations go to Columbia University for securing its leadership in next-generation AI.

This advancement strengthens causal inference research with an important academic

1/2


@jonathanrlarkin

Hey Jonathan, cool to see you interested in causality! :)

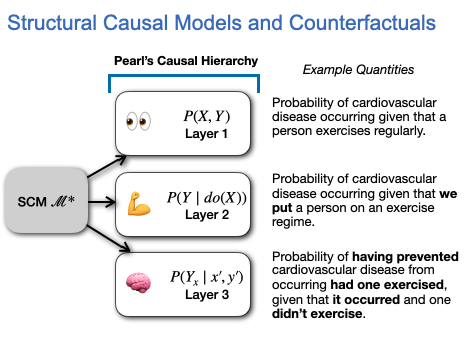

I would say that the starting point for causal researchers is that we are NOT useful in tasks that reside in layer 1 (L1) of Pearl’s hierarchy, i.e., purely predictive tasks (). I believe the


1/3 Just made available the video & slides for my #ICML2020 “Causal Reinforcement Learning” (CRL) tutorial: . CRL combines the strengths of Causal Inference (CI) & RL to solve novel/practical decision-making problems that neither approach can tackle alone!


1/6 If you are interested in causal inference & machine learning, I am excited to share some of the latest work of the causal artificial intelligence group that will appear at @NeurIPSConf this year. We welcome you to stop by and chat with us at the following times… #NeurIPS2020


The call for papers of our causal inference and machine learning symposium is out -- "Beyond Curve Fitting: Causation, Counterfactuals, and Imagination-based AI," which will happen this spring at Stanford. Details: @yudapearl @bschoelkopf @CsabaSzepesvari


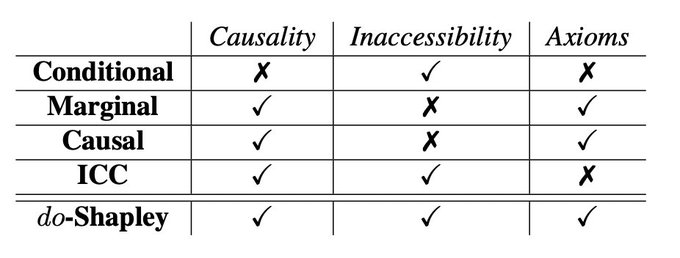

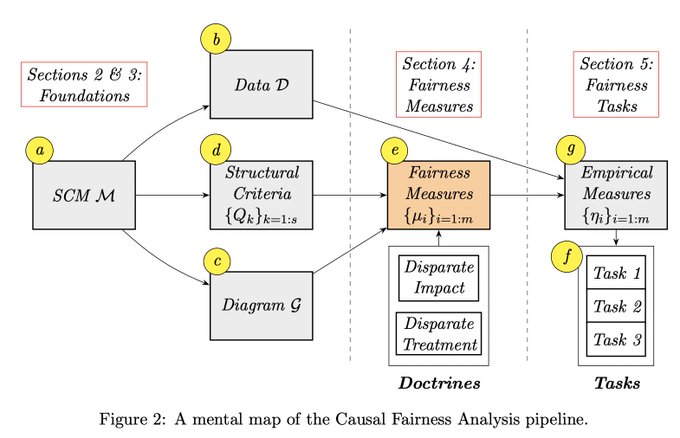

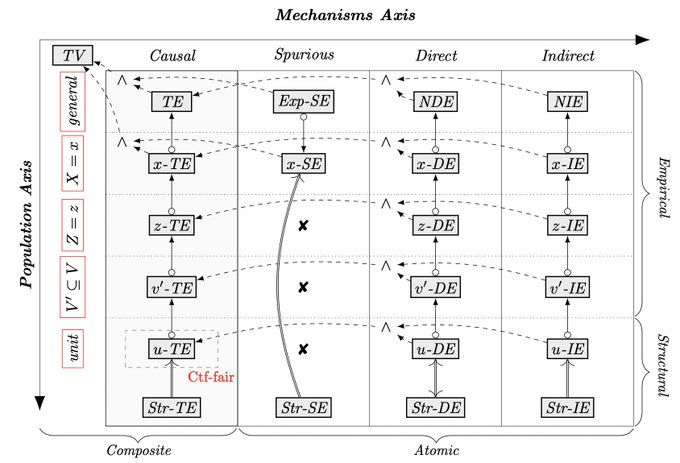

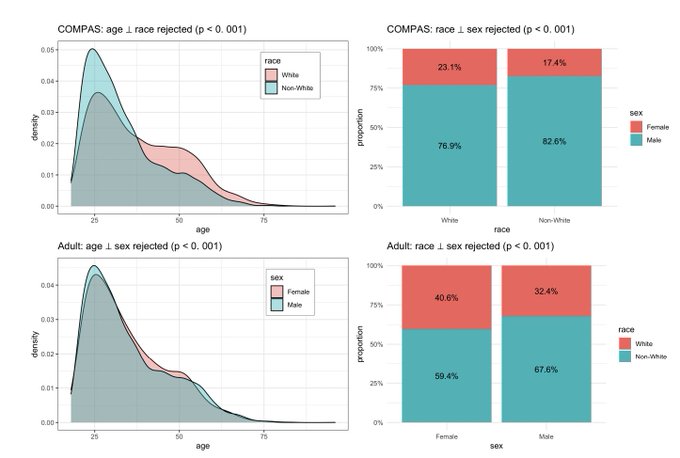

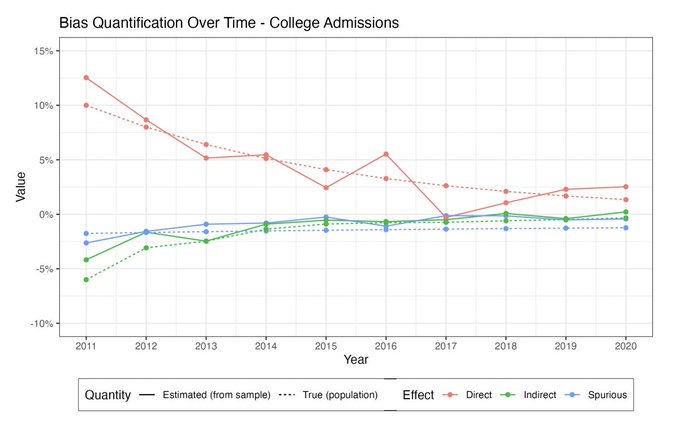

Happy to share our tutorial's video on "Causal Fairness Analysis" at @FAccTConference this year (joint work w/ Drago Plecko & @junzhez): , and the slides: . Special thanks to the organizers, @tiberiocaetano & @zacharylipton.


New piece on causal inference & the future of AI at the #MITreview -- "What AI still can’t do" by @BrianBergstein -- including comments by @yudapearl, @bschoelkopf, @rlmcelreath.


The slides of Judea's talk - "The Foundations of Causal Inference, with Reflections on ML and AI" - this week at the #WHY19 are available, see ; more coming soon.


The WHY-19 will be happening from Mar/25-27 @ Stanford. The theme this year is "Beyond Curve Fitting: Causation, Counterfactuals, Imagination-based AI". We have great speakers, including J. Pearl, Y. Bengio, K. Imai, J. Ioannidis. Don't miss!! #bookofwhy


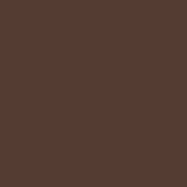

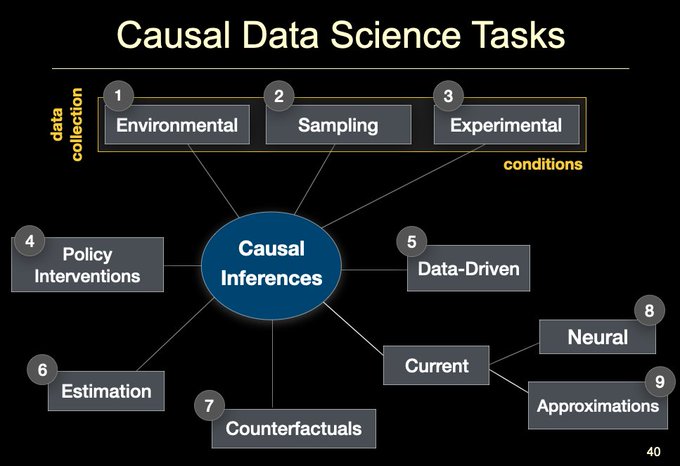

For those interested in my "causal data science" talk at @MIT last week, here is the link to the slides:


@tdietterich @yudapearl @roydanroy

Thanks, Tom. The video, slides, and other resources are available on the causal RL website:


2/2 Due to server constraints, be patient; access will be 'raffle' style. Second, we created a YouTube channel w/ causal inference videos. So far we have @yudapearl's keynote @ WHY-19 & my lecture on causal data science @Columbia. Watch, subscribe & share:


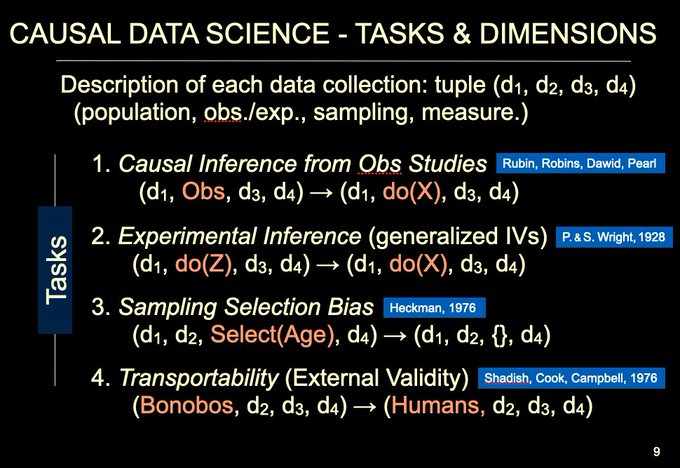

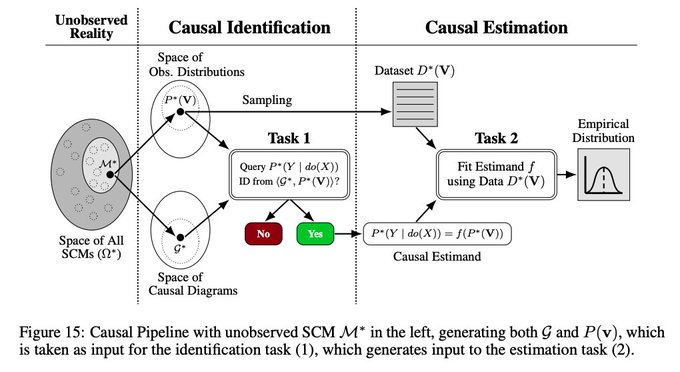

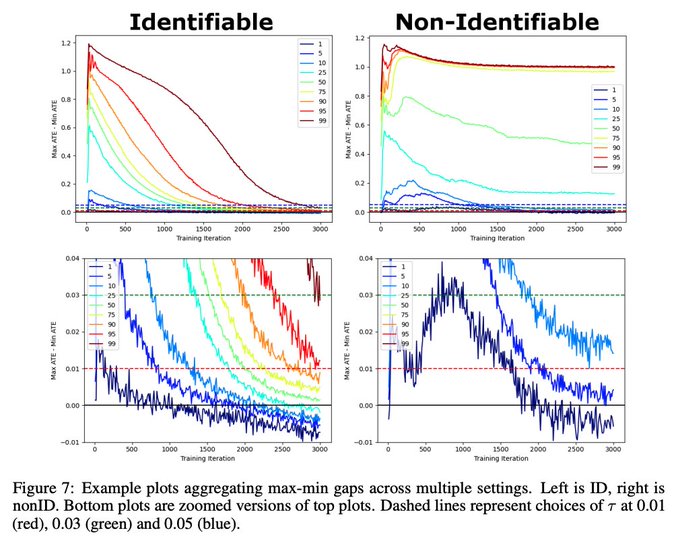

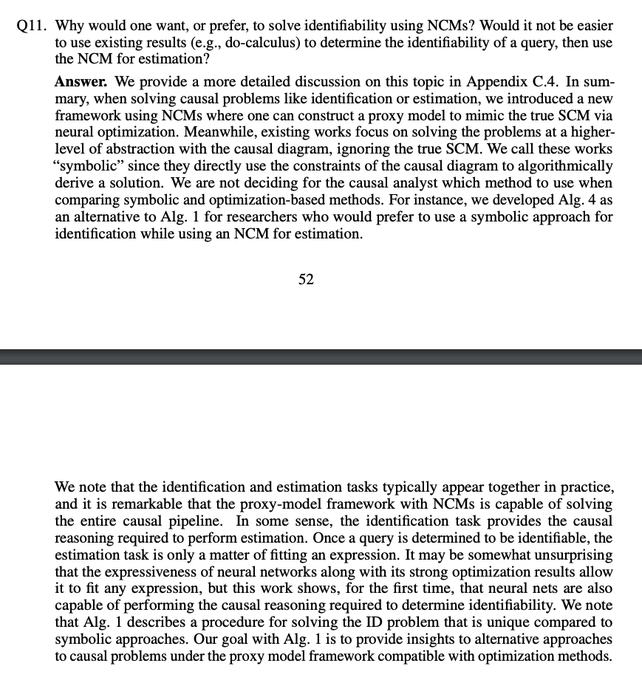

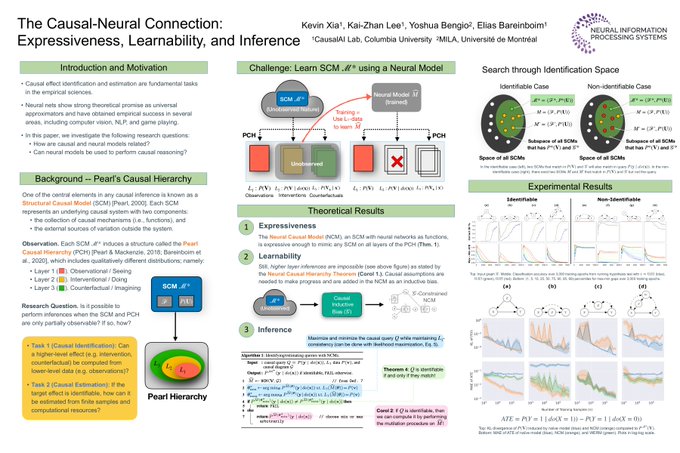

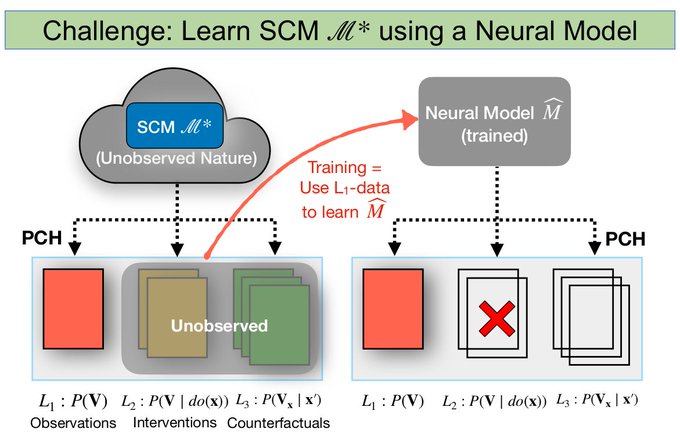

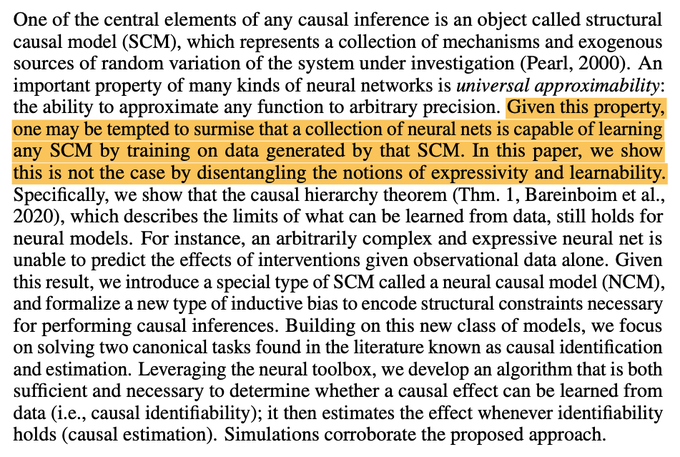

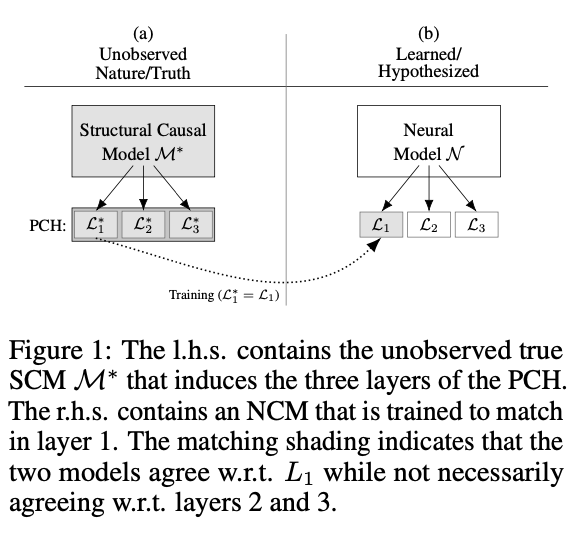

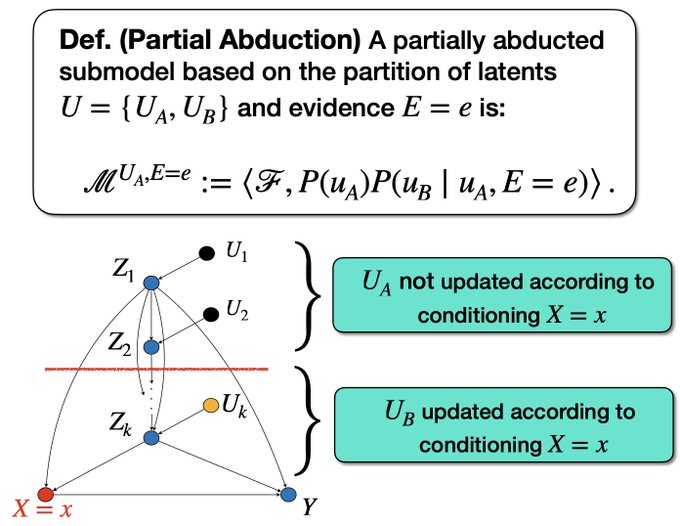

Neural causal models were introduced in + the 1st general algorithm to perform causal inference entirely *inside* a neural net! There is indeed a tradeoff bt symb. & OPT-based methods for ID, elaborated in App C4, p 43; see also FAQ-Q11 @ylecun @GaryMarcus @johnmark_taylor

Bottom line:

Identification = Symbolic,

Estimation = Deep Learning.

More details:

Also:


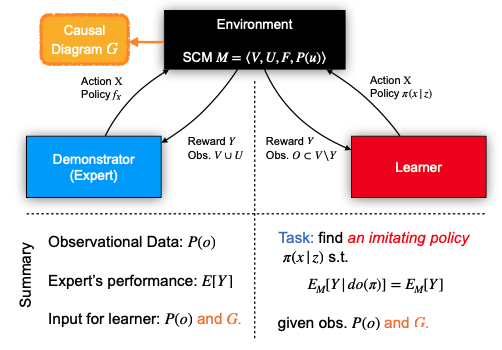

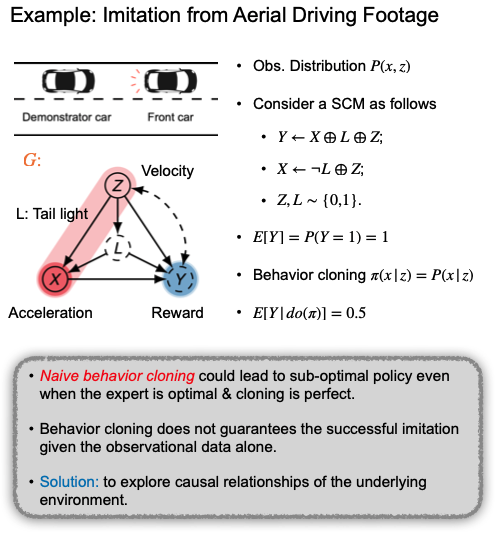

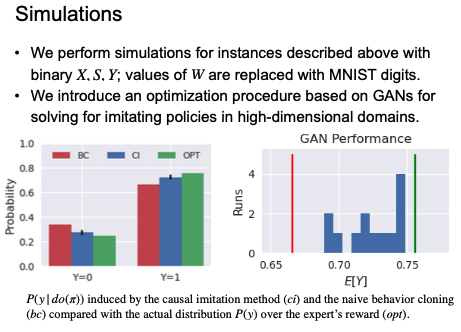

1/6 If you are curious about how causality & imitation learning are related, check our latest #NeurIPS2020 paper (w/ @junzhez @danielkumor): “Causal Imitation Learning with Unobserved Confounders,” & stop by our oral presentation later today (09:15 EST).


The slides of the WHY-19 talks are now available on our website, see: . Thank you, @yudapearl @PHuenermund @murat_kocaogIu @tdietterich @tobigerstenberg @MariaGlymour


The call for papers for the Journal of Causal Inference's special issue on the data-fusion challenge -- combining observational and experimental data -- is out! I will be co-editing it with @mark_vdlaan. Please consider submitting your best work:


Our paper “Causal Inference and Data-Fusion in Econometrics” is finally out, . We discuss recent results in Causal AI in the context of Econometrics. It has been a pleasure to work w/ @PHuenermund, who wrote a nice tweet-thread explaining the paper (below).

Finally, @eliasbareinboim and I are able to share a working paper that we've been working on for quite a while now:

"Causal Inference and Data-Fusion in Econometrics" 🚨

#DAG #CausalInference #Econometrics #DataScience #MachineLearning #AI

1/


We are developing a tool called ‘Fusion’ that is fully compatible w/ the #BookOfWHY, following the discussion in Ch. 10. Fusion offers an easy-to-use way of doing causal inference & fusion from 1st principles, incl. do-calc. If interested, subscribe here . @yudapearl

@wiredmau5 @yudapearl

What do you think about DoWhy and CausalImpact? Are there any tools that you would recommend for when you are ready to put what you have read into practice? Thanks! #Bookofwhy


I will be talking today at MSR's Frontiers in Machine Learning 2020 -- "On the Causal Foundations of Artificial Intelligence (Explainability & Decision-Making)" -- . If you are attending the event, I would be glad to chat more. @amt_shrma @emrek


I'll be talking about causal inference & data fusion next week at Harvard, see the link below. If you are around, consider stopping by. I'll be covering topics such as transportability & generalizability as discussed in this PNAS paper . See you!

@HiTSatHarvard Seminar Series:

"Causal Inference and Fusion"

Elias Bareinboim, PhD @eliasbareinboim

Purdue University, Dept of Computer Science @PurdueCS

Thursday, March 21, 2019

10:00-11:00 Harvard Medical School, WAB563

#HiTSSeminar


If you are around Palo Alto, I’ll be speaking at the Stanford Business School (@StanfordGSB) about “Causal Data Science”, i.e., how to make transparent & principled causal inferences from data. When: Mon Oct/7, 1:10 - 2:30pm; Where: Rm G101, Gunn Bldg. @StanfordMed @StanfordEng


"Purely evidence-based policy doesn’t exist" -

Nice essay by UChic-Econ, Lars Peter Hansen (@UncertainLars); similar to points repeatedly raised by J. Ioannidis ( ) & @yudapearl (#BookOfWhy) on other fronts. @PHuenermund @analisereal


If you are in Boston, I’ll be speaking at the @MIT graphical models workshop on “Causal Data Science”. When: Today 9:30 am; Where: MIT Bldg 2 (Math). I'll be around for a few hours after the talk; drop me a line if you are interested in talking more about CDS. @mitidss @MIT_CSAIL


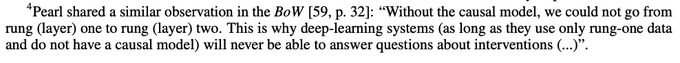

Regardless of RL, which has interesting connections w/ CI (), Deep & Causal modes of reasoning are connected in a fundamental way, as recently discovered: . I believe this starts addressing Judea's concerns, as quoted in footnote 4.


Agree, my take on generalization related to causal knowledge: . The foundations are pretty stable but there have been some newer results since I wrote this around 2014. Thanks @yudapearl for the inspiration & partnership. Summary:


I'll be talking about causal inference & data-fusion at MIT next week; see details . If you are around, consider stopping by. I'll be covering topics such as generalizability & transportability, following our PNAS paper … See you!

How can we handle the biases that emerge when piecing together multiple datasets collected under heterogeneous conditions? Join us next Monday (May 2) for an IDSS Distinguished Seminar with @eliasbareinboim on Causal Inference and Data Fusion.


Nice blog post () by @an1lam discussing some common misconceptions about Causal Inference & summarizing some of what he learned in my course this Spring. It was nice having you around, Stephen, thanks!


@dustinvtran @zacharylipton @CsabaSzepesvari @GaryMarcus @earnmyturns @yudapearl @ShalitUri

Sorry for the self-citation, but a lot is happening - e.g., off-policy method thr. causal reason.: ; Where to intervene? ; Counterfactual bandits: ; Learn causal model from do-dist():


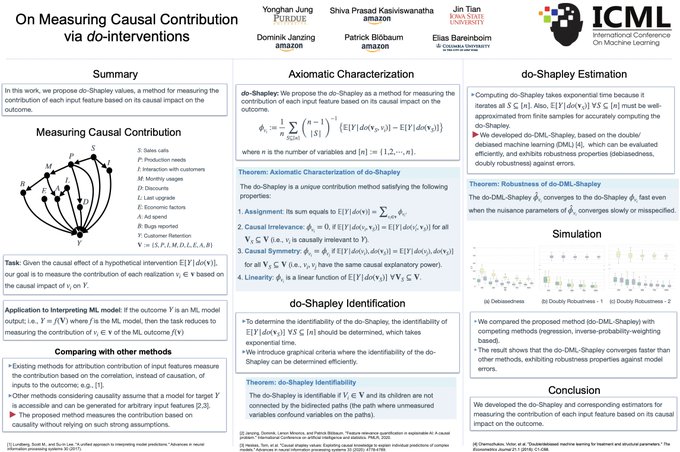

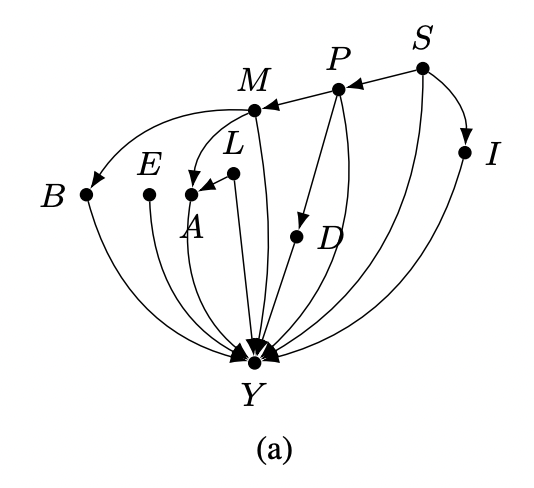

If you are at ICML, check our paper (w/ @YonghanJung & Jin Tian) on the first family of Double-ML estimators for any identifiable effect computable from observational data (i.e., equiv. class of DAGs):

When: July/23, 12-2 am (EST),


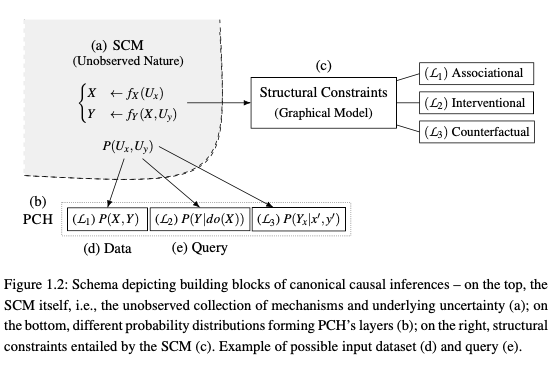

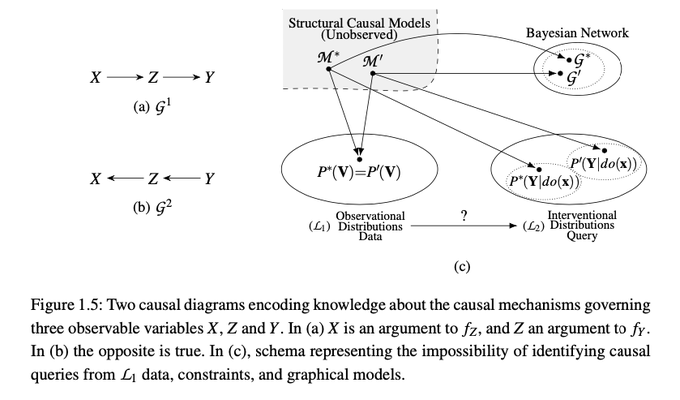

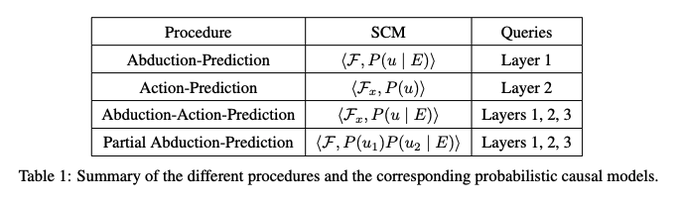

1/ The words “model” & “diagram” seem to be overloaded & shouldn't be conflated, which we clarified in our new chapter (). i) Model (top left, Fig 1.2) = A structural causal model (SCM) is a collection of mechanisms underlying a system & it’s always there.

@yudapearl

One thing I missed in "The Book of Why" was on how causal models come to be. Most of the discussion in the book already assumed some kind of model/diagram. Many "curve-fitting" ML practitioners I talked to struggle especially on this part and are therefore skeptical about it.


My paper with Pearl on causality & big data is available online - Causal inference and the data-fusion problem #PNAS


One way of attaching causal meaning in regression is thr Instrumental Variables (Wright, 1928). In a new paper w/ @analisereal @danielkumor, we unify the literature on linear identification & develop a method for finding Instrumental Cutsets (general. IV)

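A minimal simulation sketch of the classic single-instrument case may make the IV idea concrete. This is Wright's IV ratio, not the Instrumental Cutsets method from the paper. It assumes a hypothetical linear model with an unobserved confounder U affecting both X and Y, and an instrument Z that affects Y only through X; all coefficients are illustrative. The regression slope of Y on X absorbs the confounding, while the ratio cov(Z, Y) / cov(Z, X) recovers the causal coefficient.

```python
import random


def cov(a, b):
    """Sample covariance of two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / (n - 1)


def simulate(n=100_000, beta=2.0, seed=0):
    """Hypothetical linear SCM: Z -> X -> Y, plus a hidden U -> X and U -> Y."""
    rng = random.Random(seed)
    zs, xs, ys = [], [], []
    for _ in range(n):
        u = rng.gauss(0, 1)                       # unobserved confounder
        z = rng.gauss(0, 1)                       # instrument, independent of U
        x = z + u + rng.gauss(0, 1)
        y = beta * x + 3.0 * u + rng.gauss(0, 1)  # true effect of X on Y is beta
        zs.append(z)
        xs.append(x)
        ys.append(y)
    return zs, xs, ys


zs, xs, ys = simulate()
ols_slope = cov(xs, ys) / cov(xs, xs)  # confounded: tends to 3.0 in this model
iv_ratio = cov(zs, ys) / cov(zs, xs)   # IV estimate: tends to beta = 2.0
print(f"OLS slope: {ols_slope:.2f}  IV ratio: {iv_ratio:.2f}")
```

With these assumed coefficients, var(X) = 3 and cov(X, Y) = 2·3 + 3·1 = 9, so the population regression slope is 3, overstating the true effect of 2 by 50%.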

Thanks for sharing your thoughts, Amit. Recall, just to add some clarity in terms of context, my comment regarding @ylecun & @yudapearl's posts is neither about generative nor about deep learning versus causal; those are pacified issues in the literature. In other words, we now

So from this perspective, I see the value of both @ylecun and @eliasbareinboim viewpoints.

True that we cannot learn causal agents simply from observing the world, since observed data is usually confounded.


Carlos Cinelli (@analisereal) just gave a wonderful talk about external validity, transportability, and generalizability in causal inference, which is at the core of what CI is all about. The slides are available here: .


For those who are curious, this is the paper the cute baby is learning about, "Estimating Causal Effects Using Weighting-Based Estimators" (). The task is to allow the estimation of identifiable expressions that go beyond the usual backdoor/IPW cases.

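For orientation, here is a minimal sketch of the baseline the paper goes beyond: the usual backdoor/IPW case. This is not the paper's weighting-based estimator. It assumes a hypothetical model with one binary covariate Z that satisfies the backdoor criterion for X → Y, uses made-up probabilities, and computes everything exactly from the observational joint. The adjustment formula Σ_z P(Y=1 | x, z) P(z) and the inverse-propensity-weighted sum Σ P(z, x, y) · y / P(x | z) agree, while the naive conditional P(Y=1 | X=1) does not.

```python
from fractions import Fraction as F
from itertools import product

# Hypothetical model with Z -> X, Z -> Y, X -> Y (all binary; numbers made up).
P_Z = {0: F(6, 10), 1: F(4, 10)}
P_X1_GIVEN_Z = {0: F(2, 10), 1: F(7, 10)}
P_Y1_GIVEN_XZ = {(0, 0): F(1, 10), (0, 1): F(3, 10), (1, 0): F(5, 10), (1, 1): F(8, 10)}


def bern(p1, value):
    """Probability that a binary variable with P(=1) = p1 takes `value`."""
    return p1 if value == 1 else 1 - p1


# Observational joint P(z, x, y), keyed by (z, x, y).
JOINT = {
    (z, x, y): P_Z[z] * bern(P_X1_GIVEN_Z[z], x) * bern(P_Y1_GIVEN_XZ[(x, z)], y)
    for z, x, y in product((0, 1), repeat=3)
}


def prob(z=None, x=None, y=None):
    """Marginal probability of the event that fixes the given variables."""
    return sum(
        p for (zz, xx, yy), p in JOINT.items()
        if (z is None or zz == z) and (x is None or xx == x) and (y is None or yy == y)
    )


def backdoor(x):
    """Adjustment formula: sum_z P(Y=1 | x, z) P(z)."""
    return sum(prob(z=z, x=x, y=1) / prob(z=z, x=x) * prob(z=z) for z in (0, 1))


def ipw(x):
    """Inverse-propensity weighting: E[1{X=x} * Y / P(X=x | Z)]."""
    return sum(
        p * yy / (prob(z=zz, x=x) / prob(z=zz))
        for (zz, xx, yy), p in JOINT.items()
        if xx == x
    )


print(backdoor(1), ipw(1))         # 31/50 31/50
print(prob(x=1, y=1) / prob(x=1))  # 71/100 (naive, confounded by Z)
```

Exact fractions are used so the agreement between the two formulas is an identity rather than a numerical coincidence; with sampled data each would become an estimator with its own variance.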

Thanks for writing the piece, @erichorvitz! I think "causal inference" should be put front and center since, as part of the AI community, we could help provide sound foundations for many challenges (including safety, equity, robustness, transparency, and understanding). Happy to

"Now, Later, and Lasting: 10 Priorities for AI Research, Policy, and Practice," forthcoming in CACM, with

@conitzer @SheilaMcIlraith @PeterStone_TX #AI100 @CACMmag @TheOfficialACM @StanfordHAI @PartnershipAI @WHOSTP @CarnegieMellon @UofT @CIFAR_News


Thanks for sharing this. Somewhat curious perspective by on causality. Apparently, he believes that causality is just one among "hundreds of different things that deep learning doesn't do well" & his example of another is "explainability"! Thoughts?


Insightful note by investor @RayDalio on the necessity of understanding cause-effect relations for robust decision-making & the insufficiency of machine learning (~3 mins): . We do have a language & tools to encode this understanding today! @yudapearl


1/3 This seems to be a nice extension of the causal-neural connection work () for GNNs, but I haven't fully read it (congrats, @kerstingAIML & team!). Still, we answered precisely this question in Q11 in the FAQ (p. 52), also attached for your convenience.


@ylecun @yudapearl

> "You don't necessarily need RL to train a causal world model." (...) "Only SSL from off-line observation data and planning."

If I understand what you are saying, in addition to problems of scale that we can leave aside for now, your proposal assumes that the way the offline


I believe what you are looking for is called "causal inference" (CI) - every problem I found in RL is solved from 1st principles by tools developed within the CI framework. For a few cataloged examples, see , ,


Can 'Causal Data Science' be helpful for the future of the Amazon Forest? This idea was first articulated by @juanccas (@wef, @EBPgenome) in a thoughtful, new piece that just appeared at the Exponential View, @PierreGentine @runge_jakob @yudapearl


Thanks for your interest, @haschyle; I just posted the slides here: . The talk was recorded and I expect the video to be available in the near future, so stay tuned. @StanfordGSB


Nice rebuttal to Sutton's note by @wellingmax. The folks doing causal inference may be excited to read the note since we learn case after case that there's no such thing as model-blind causality. Certainly worth reading to see how causality fits the larger conversation in ML.


I'll be talking about causal inference and data-fusion this Monday at Columbia. If you are around, consider attending.

@eliasbareinboim will present "Causal Data Science: A general framework for data fusion and causal inference" as part of the Distinguished Lecture Series on Monday, April 1 at 11:40am in CSB 451. #DataScience #causalinference


1/2 I don't disagree but my feeling from interactions at NeurIPS this year is that there's a deeper phenomenon going on. Folks want to do CI since they feel it's important, incl. because of your new book, but they haven't spent the time & energy learning what CI is really about.

@ngutten @jsusskin

Interesting viewpoint. Trouble is, regression is such a small part of CI that to say "To do CI with regression requires an extra step" is almost like saying: "To do CI with algebra requires an extra step". It's better to say "Do CI first, add NN if needed" @ylecun #Bookofwhy


@ylecun @yudapearl

Yann, what is the difference between what you call "causal prediction" and computing next-state probabilities in a typical reinforcement learning (RL) setting?

As we elaborated through our work on Causal RL (), there are exciting things coming from RL, but


Hi Judea, I just learned about Danny from your tweet. His work is really groundbreaking and he is a unique human being, very generous, humble, and curious. I have some memories and will share one. We first met in person when I was interviewing here at Columbia about 5 years ago.


1/4 Broadly, because estimating a cond. prob. P(Y | X) is easier than a joint one, P(Y, X, Z), when X & Z are high-dimensional. Indeed, this observation led to (non-causal) graphical models in the 1980s, including Bayesian nets (ie, non-causal DAGs), Markov random fields & so on.

@VC31415 @Chris_Auld @Sander_vdLinden @GordPennycook @AdamBerinsky @lkfazio @DG_Rand

What would the point of such a factorization be? Why would one, say, prefer estimating a conditional expectation to an unconditional one?

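The arithmetic behind the dimensionality point can be sketched quickly. Assuming n binary variables (an illustrative choice), an unrestricted joint needs 2**n - 1 free parameters, while a factorization into conditionals P(v_i | pa_i) needs one number per parent configuration of each node. The chain structure below is a hypothetical example, not taken from the thread.

```python
def joint_params(n: int) -> int:
    """Free parameters of an unrestricted joint distribution over n binary variables."""
    return 2 ** n - 1


def factored_params(parent_counts: list[int]) -> int:
    """Free parameters of prod_i P(v_i | pa_i) when every variable is binary.

    A node with k binary parents needs P(v_i = 1 | pa_i) for each of its
    2**k parent configurations.
    """
    return sum(2 ** k for k in parent_counts)


n = 30
chain = [0] + [1] * (n - 1)    # hypothetical chain V1 -> V2 -> ... -> V30
print(joint_params(n))         # 1073741823
print(factored_params(chain))  # 59
```

Each conditional is a small, low-dimensional estimation problem. Nothing here is causal yet: the same counting applies to any Bayes net, which is why the tweet describes these models as (non-causal) graphical models.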

For the folks following the thread, the disentanglement of the assumptions and conclusions is essential in data science. After months of discussions & going around, Ivan’s clean & crisp analysis sheds light on a pervasive issue of transportability. Thanks for sharing this, Ivan.

In this post and multiple others, @f2harrell says that a generally correct way to analyze RCTs with binary outcomes is to fit a logistic regression. One of the main claims is that this leads to "one-number" effect measures that are "highly transportable".


1/12 Brdly spk, assuming one can infer the effect of an intervention when experimental data matching exactly this intervention is available is not surprising, causally-speaking. Why? There's no cross-rung inference, but a typical function approx exercise. This is Judea's main...

@yudapearl @GaryMarcus @moultano

What precisely do you mean by "mathematical impossibility"? Causal graph can be inferred if observations are sampled using different random interventions. Such inference can be done using DL . Then CI can be done on top using another DL model


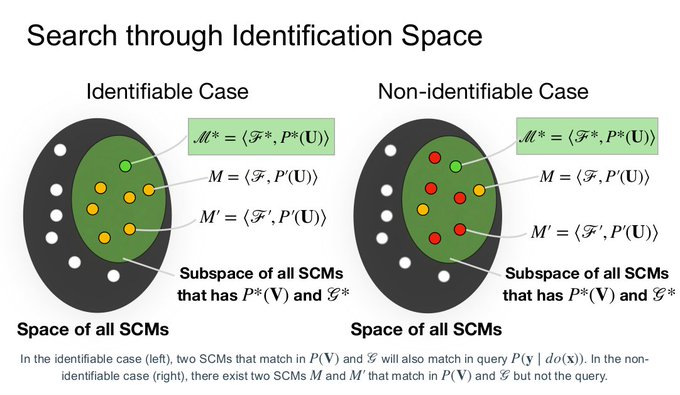

We have an example written to answer this issue; see p. 28, example 10 (), and summarized in the diagram below. We can have different Bayes nets generating the same obs. data (P(V) ) & that naively would entail different do-distributions P(Y | do(X)).

@julianschuess

But people do this all of the time. They fit a statistical model to the data (a markov net over a DAG), and do "causal" calculations. Is there an interpretation, especially given that there is fundamentally no way of verifying from the data if the model is causal or not?

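A two-variable toy version of this phenomenon can be checked exactly. It is not example 10 from the paper. Model A assumes X → Y; Model B assumes Y → X, with its parameters chosen by Bayes' rule so that both generate the identical observational joint P(X, Y). The numbers are hypothetical. Because the graphs differ, the interventional query P(Y=1 | do(X=1)) differs.

```python
from fractions import Fraction as F

VALUES = (0, 1)


def bern(p1, value):
    """Probability that a binary variable with P(=1) = p1 takes `value`."""
    return p1 if value == 1 else 1 - p1


# Model A (X -> Y), hypothetical parameters.
p_x1 = F(1, 2)
p_y1_given_x = {0: F(1, 5), 1: F(4, 5)}
joint_a = {(x, y): bern(p_x1, x) * bern(p_y1_given_x[x], y) for x in VALUES for y in VALUES}

# Model B (Y -> X), parameters obtained from joint_a by Bayes' rule.
p_y1 = joint_a[(0, 1)] + joint_a[(1, 1)]
p_x1_given_y = {y: joint_a[(1, y)] / (joint_a[(0, y)] + joint_a[(1, y)]) for y in VALUES}
joint_b = {(x, y): bern(p_y1, y) * bern(p_x1_given_y[y], x) for x in VALUES for y in VALUES}

assert joint_a == joint_b  # same observational distribution P(V)

# P(Y=1 | do(X=1)): cut the edges into X and set X = 1.
do_a = p_y1_given_x[1]  # in A, Y's mechanism reads X, so this equals P(Y=1 | X=1)
do_b = p_y1             # in B, X is downstream of Y, so Y keeps its marginal
print(do_a, do_b)       # 4/5 1/2
```

No amount of observational data distinguishes the two models here; only the assumed graph decides which do-distribution is correct.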

Thank you, Judea! We will keep your offer open for an indefinite duration. :)

Speaking of advertising for a job or a postdoc, here is one from @eliasbareinboim

I do not know what could be more "innovative" than research on Causal AI. If I was qualified I would go for it.


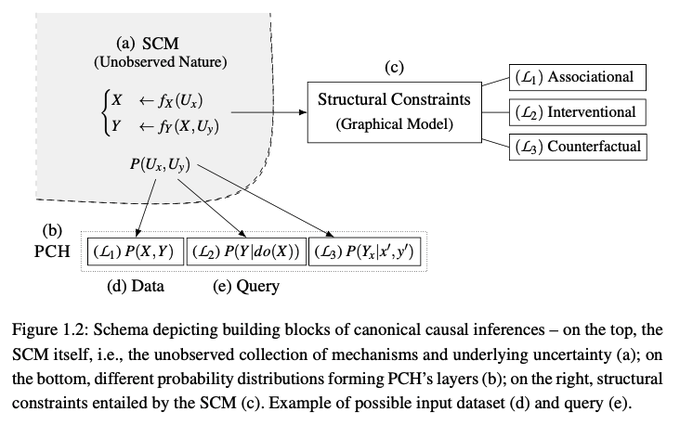

Thanks! Lang. L1 (association), L2 (intervention), L3 (counterfactual) are increasingly complex & higher-order lang. include the lower ones. Sec 1.3 formalizes the expressiveness issue; Theorem 1 (p. 22) shows the containment is strict; for intuition, see examples 7-9 (~p. 27).

@PHuenermund @yudapearl @eliasbareinboim

Great paper. It is not clear to me why L3 questions are separated from L2. It seems that L2 is a special instance of L3. L2 is done using do() notation, but this is abandoned when doing L3.

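To see concretely why L3 is not contained in L2, here is a small toy construction. It is a standard-style illustration, not necessarily one of examples 7-9 in the paper. It assumes binary X, Y with independent fair-coin exogenous variables U_X, U_Y, the mechanism X := U_X, and two hypothetical mechanisms for Y: Model 1 sets Y := U_Y, Model 2 sets Y := X XOR U_Y. Both give P(Y=1 | do(X=x)) = 1/2 for every x, yet the counterfactual P(Y_{X=1} = 1 | X=0, Y=0), computed by abduction, action, and prediction, is 0 in Model 1 and 1 in Model 2.

```python
from fractions import Fraction as F
from itertools import product

# Exogenous noise (assumption): U_X, U_Y independent fair coins.
NOISE = [((u_x, u_y), F(1, 4)) for u_x, u_y in product((0, 1), repeat=2)]


def f_x(u_x):
    """Mechanism for X in both models: X := U_X."""
    return u_x


def f_y_model1(x, u_y):
    """Hypothetical Model 1: Y := U_Y (Y ignores X)."""
    return u_y


def f_y_model2(x, u_y):
    """Hypothetical Model 2: Y := X XOR U_Y."""
    return x ^ u_y


def interventional(f_y, x):
    """L2 query P(Y = 1 | do(X = x)): replace X's mechanism by the constant x."""
    return sum(p for (_, u_y), p in NOISE if f_y(x, u_y) == 1)


def counterfactual(f_y):
    """L3 query P(Y_{X=1} = 1 | X = 0, Y = 0) via abduction, action, prediction."""
    # Abduction: keep the noise values consistent with the evidence X = 0, Y = 0.
    posterior = [((u_x, u_y), p) for (u_x, u_y), p in NOISE
                 if f_x(u_x) == 0 and f_y(f_x(u_x), u_y) == 0]
    mass = sum(p for _, p in posterior)
    # Action + prediction: set X = 1 and recompute Y under the same noise.
    return sum(p for (_, u_y), p in posterior if f_y(1, u_y) == 1) / mass


for name, f_y in [("Model 1", f_y_model1), ("Model 2", f_y_model2)]:
    l2 = [str(interventional(f_y, x)) for x in (0, 1)]
    print(name, "do-probabilities:", l2, "counterfactual:", counterfactual(f_y))
```

The two models agree on every observational and interventional distribution over X and Y, but disagree on the counterfactual, so L2 data alone cannot settle this L3 query; this is the kind of separation the Causal Hierarchy Theorem formalizes.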

Everyone wants data-driven smth, sure, but Judea's perspective is technical, rooted in the Causal Hierarchy Theorem (CHT) (p. 22, ). The CHT says that for almost any causal model, the layers of the hierarchy do not collapse (examples 7-9 are illustrative).

@yudapearl

It’s unlikely that data-driven ML will be replaced. Rather it will be augmented by causal modeling. And I bet that even causal modeling will eventually become data-driven itself in the form of causal generative models.


Following @causalinf's request, here are some of @yudapearl's personal memories from his early days, which were taken out of the #bookofwhy: . It's still unpublished material but authorized for your enjoyment. More to come. #bookofwhy


The goal shouldn't be "good in-distribution generalization" but extracting the proper invariances to work in the real world, whose distribution rarely matches the sample's (changing conditions). In causality, this task is known as statistical transportability,

@yudapearl

If I were to think of a reason, it’d be that a good chunk of ML is about getting good in-distribution generalization (yes, “curve-fitting”). The lack of a sound causal treatment doesn’t bite that hard in this setting.


@tdietterich @hardmaru

I would just add that even though not all causal invariances are learnable from interactions, some of them are. I think the most promising setting is reinforcement learning, where interventional capabilities are available (e.g., , ).


@ccaballeroh10 @yudapearl @ylecun @sirbayes

The terminology game is indeed complicated and usually arises when we attempt to make interdisciplinary claims, but point well taken.

To focus on the substance, my suggestion for those interested in learning more is to first:

(1) understand what control theory has accomplished,


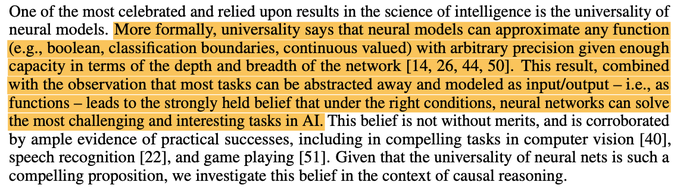

Thanks for sharing your thoughts, K. The universality of NNs does NOT help causal reasoning. In general, it's not that a task would be "harder," but impossible to solve. I am sharing below a recent paper that investigates this issue from first principles,


@ildiazm

It's surprising to hear @f2harrell saying that; it seems the opposite of how things evolved. I am attaching an intro written in Stat Sci ~10yr ago on threats vs. assumptions in the ctx of RCT's external validity, where transportability theory started.


For those interested in causal inference & around NYC, I'll be talking about causal data science & modeling today (Nov 21) at CUIMC. Time: 1:00 pm - 2:30 pm. Location: Hammer Health Sciences Building, 701 West 168th Street, Room # LL-106 @ColumbiaMSPH @ColumbiaMed @DSI_Columbia


4/5 Tue 6:30 pm (EST) “On Measuring Causal Contributions via do-Interventions”, with @YonghanJung, D. Janzing, J. Tian, S. Kasiviswanathan. Link: .


The wisdom in this line is quite remarkable & largely unknown by most folks outside the field (& some reviewers inside :)). My 2 cents: this is one of the first instances where the requirement of having a model was relaxed after understanding it seriously:

2/2 Along a similar vein, I was asked to retweet the last line of my slides in the Why-19 symposium . Gladly; it reads: "Only by taking models seriously we can learn when they are not needed." And I still vouch for it. #Bookofwhy


Hi Damien, thanks for raising the issue. I am not sure I fully buy into the "camps" comparison, with all due respect, and I would add that most folks I know who are doing causal inference research are not focused on the worst-case scenario but on systematic "understanding," which


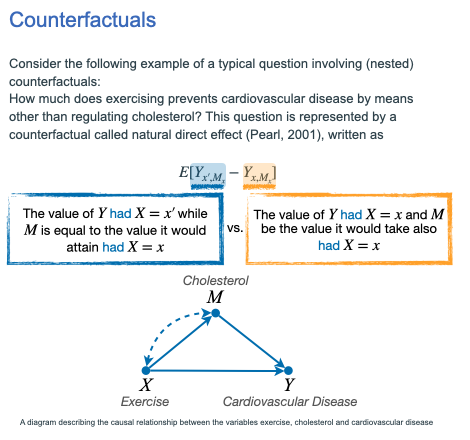

6/6 Wed 7:30 pm (EST) “Nested Counterfactual Identification from Arbitrary Surrogate Experiments”, with Juan Correa & @sanghack. Link: .


Scientific progress happens but at a slower pace than it should by any reasonable standard (see, eg, ). I volunteer to help, perhaps talking w/ some of the agencies' leaders? Reviewers are extra conservative; perhaps they need enlightened leadership? @tdietterich


1/3 From Hume (footnote 2, ): “Nature has kept us at a great distance from all her secrets, & has afforded only the knowledge of a few superficial qualities of objects; while she conceals from us those powers & principles, on which...


Nice & informative introductory post on Causal Data Fusion! Congrats, @PHuenermund!

New post on @causal_science explaining the data fusion paradigm – an integrated and fully automatable framework from problem specification, to identification, to estimation. #CausalInference @yudapearl @eliasbareinboim #Causality #DataScience #ML #AI


@yudapearl's talk at CIFAR Machines & Brains workshop -- "Data versus Science: Contesting the Soul of Data-Science": . (Due to some Zoom issues, the synchronization of the video & slides was not perfect, apologies!!) @bschoelkopf @MILAMontreal @CIFAR_News


The parameters of the SCM are almost never identifiable from obs. data, check the Causal Hierarchy Thm (p. 22) & examples 7-9 (p. 24-26, ). Still, estimating effects using DAGs as proxies for the SCMs makes total sense, e.g., see .

@eliasbareinboim @deaneckles @pablogerbas

I missed the scope of this statement "so estimating/fitting the parameters makes no sense" -- is this specific to a paper or do you mean it never makes sense to estimate parameters using a DAG?


1/2 I would phrase it more precisely - NCM is a special class of SCMs used as proxies of the true SCM & where the functions are neural nets. IF one wants to solve an identification task, eg, yes, a search over the NCM space would be entailed. App. C4 (p 42) discusses this point.

@eliasbareinboim

If I understand correctly, the NCM approach is a numerical search over the space of neural networks?


Hey @andrewgwils, thanks for sharing your thoughts. I am not a radiology expert, but to address a broader, related point -- isn't the success of these methods in this setting due to the huge amount of annotated data available, coming from real, qualified radiologists? As far as I

@MelMitchell1 @geoffreyhinton

The whole point is he wasn't trying to be precise about the exact timescale. It's not 10 vs 5. It was more: "we should start changing the way we train radiologists, and those in professions where we can collect ample data and be greatly assisted by machine learning".


Hi @ylecun, thank you for bringing attention to this concerning picture of the current situation.

From my observation, many academics, myself included, might not be so closely monitoring the issue, and as a result, might be unaware that certain individuals or companies are

@tegmark @RishiSunak @vonderleyen

Altman, Hassabis, and Amodei are the ones doing massive corporate lobbying at the moment.

They are the ones who are attempting to perform a regulatory capture of the AI industry.

You, Geoff, and Yoshua are giving ammunition to those who are lobbying for a ban on open AI R&D.

If


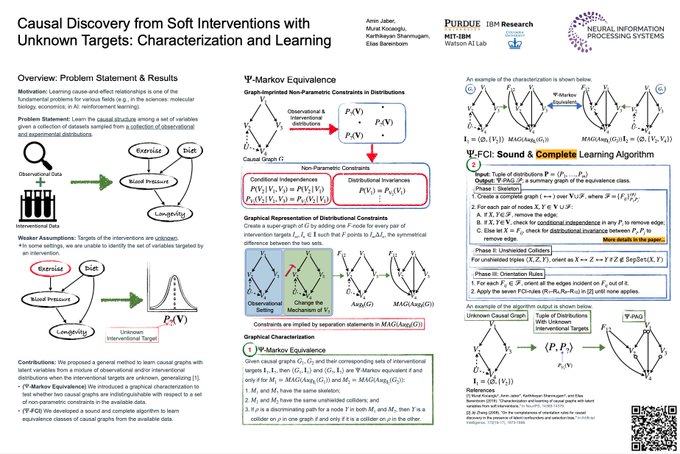

2/6 Wed 12:00 pm (EST) “Causal Discovery from Soft Interventions with Unknown Targets: Characterization and Learning”, with Amin Jaber, @murat_kocaoglu_, Karthikeyan Shanmugam. Link: .


2/5 Tue 11:45 am (Poster Session 1)

"Estimating Causal Effects Identifiable from Combination of Observations and Experiments"

(joint work with @YonghanJung, @ildiazm, and Jin Tian)

The task investigated in this paper is to develop a family of estimators for identifiable
