David G. Rand @dgrand.bsky.social

@DG_Rand

Followers: 16K · Following: 17K · Statuses: 11K

Prof @MIT - I've left X, you can find me on BlueSky at @dgrand.bsky.social

Cambridge, MA

Joined June 2012

@martinjanello I have in general left X, but did this post as a special occasion bc of Meta's announcement and the desire to get a message to the folks on X

@MichaelJLewisII @elonmusk @GordPennycook @zlisto @_mohsen_m Thanks!! We definitely aim to do rigorous, unbiased science

@MichaelJLewisII @elonmusk @GordPennycook @zlisto @_mohsen_m Ya agreed, I don't put much stake in the bot sentinel scores. They went into the paper mostly as a control variable...

@MichaelJLewisII @elonmusk @GordPennycook @zlisto @_mohsen_m We aren't able to verify those scores - but to the extent that a social media platform were to use them in the pursuit of a (politically unbiased) goal to reduce bots, our data indicate that Republicans would be much more likely to get suspended than Democrats

@SJDM_Tweets @Schropes @kobih You can access @tomstello_'s Qualtrics materials, video tutorial etc for integrating LLMs into Qualtrics here

@CaulfieldTim @jonathanstea @GordPennycook @srmarcon @BlakeMMurdoch @angie_rasmussen @doritmi These kinds of patterns still have the obvious alternative explanation of Trump supporters getting exposed to more false claims and therefore having more inaccurate beliefs. That is, a causal arrow from support to belief rather than the other way around

RT @RobbWiller: If you had to pick *1* measure of racial attitudes to predict white Americans' Trump support, we find opposition to anti-ra…

RT @oiioxford: NEW: According to Oxford and MIT researchers @_mohsen_m, @DG_Rand, and @cameron_martel_, social media users are more likely…

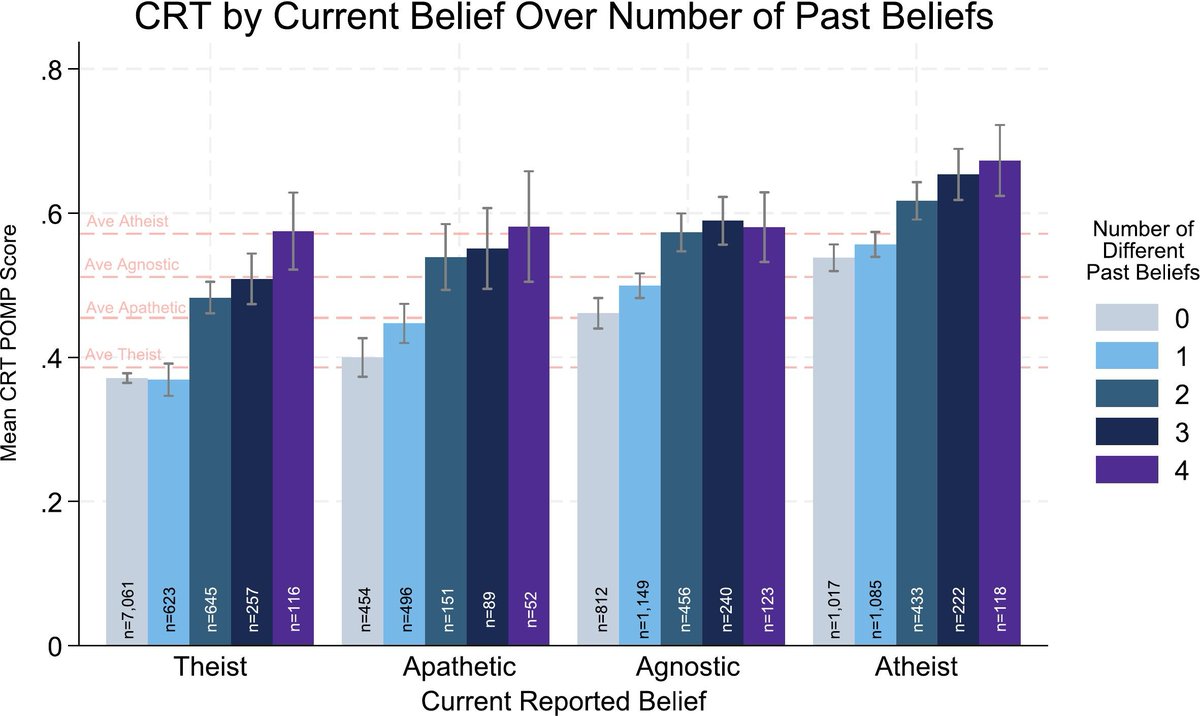

"On the role of analytic thinking in religious belief change: Evidence from over 50,000 participants in 16 countries" ✍️Michael N Stagnaro & @GordPennycook Key finding: Reflection is associated with belief change independent of the direction of change.

RT @CognitionJourn: "On the role of analytic thinking in religious belief change: Evidence from over 50,000 participants in 16 countries"…

RT @alineholzwarth: Are AIs the misinformation machines? Or are we humans the originals? In our latest episode of the Behavioral Design Pod…

RT @GordPennycook: Feel like passing the time while anxiously waiting for the election? I was on 3 fun podcasts recently #1: You Are Not S…

RT @davidmcraney: An interview with the scientists who created Debunkbot, an AI/LLM that reliably reduces belief in conspiracy theories via…

RT @notsmartblog: New episode — an interview with the scientists who created Debunkbot, an AI that reliably reduces belief in conspiracy th…

RT @kakape: So what have I learnt about #misinformation research? I tried to condense it into a list of the 5 biggest challenges the field…

RT @_JenAllen: Great article from @kakape about the five biggest challenges facing misinformation researchers highlighting some of our rece…

RT @CaulfieldTim: 5 biggest challenges facing misinformation researchers 1) Defining it 2) Everything is politica…
