Ajay Jain

@ajayj_

Followers: 6,104 · Following: 2,914 · Media: 67 · Statuses: 542

Co-founder @genmoai. Co-created denoising diffusion (DDPM), DreamFusion, Dream Fields. Ex Ph.D. @berkeley_ai, @googleai, @facebookai, @nvidiaai, @mit

San Francisco, CA

Joined July 2009

Pinned Tweet

Meet Replay. We've been hard at work on something exciting. Check out the video and share your creations made with @genmoai!

Text-to-3D synthesis with #dreamfusion: I typed in "A high-quality photo of a pineapple" and got this lovely 3D fruit!

This is a #dreamfusion model generated from the caption "a DSLR photo of a ghost eating a hamburger." 👻🤯 Do you think DreamFusion is a good cook?

Paper accepted to @CVPR 2022! Happy to have worked with amazing collaborators @BenMildenhall @jon_barron @pabbeel @poolio on Dream Fields, which synthesizes 3D objects from language descriptions.

Check out our new paper - we put NeRF on a diet! Given just 1 to 8 images, DietNeRF renders consistent novel views of an object using prior knowledge from large visual encoders like CLIP ViT.

w/ Matthew Tancik, @pabbeel 1/
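
One way a frozen image encoder can act as a prior is through an auxiliary loss that pulls the embedding of a view rendered from a new pose toward the embedding of an observed image. A minimal sketch, assuming the embeddings are already computed (the vectors below are hypothetical stand-ins, not CLIP outputs) and that the loss is (λ/2)·||φ(I) − φ(Î)||² on unit-normalized embeddings, which equals λ·(1 − cosine similarity). The names `normalize`, `semantic_consistency_loss` and `lam` are illustrative, not DietNeRF's code.

```python
# Sketch (assumption): embeddings are given; no CLIP model or NeRF renderer here.
import math
from typing import List, Sequence


def normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def semantic_consistency_loss(
    emb_observed: Sequence[float],
    emb_rendered: Sequence[float],
    lam: float = 1.0,
) -> float:
    """(lam / 2) * ||a - b||^2 for unit vectors a, b, i.e. lam * (1 - cos)."""
    a = normalize(emb_observed)
    b = normalize(emb_rendered)
    sq_dist = sum((x - y) ** 2 for x, y in zip(a, b))
    return 0.5 * lam * sq_dist


# Hypothetical 4-d embeddings for an observed image and a rendered novel view.
observed = [0.2, 0.9, -0.1, 0.3]
rendered = [0.25, 0.8, 0.0, 0.35]
print(semantic_consistency_loss(observed, rendered))
```

Because the loss only compares embeddings, it can be applied to renders from poses that have no ground-truth image.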

Check out #DreamFusion: our paper on AI-based text-to-3D generation! Just take a look at these synthetic robots 🤖🤖🤖

This draws on years of work from our fabulous team on diffusion models and neural rendering, and I'm so excited for what comes next.

Happy to announce DreamFusion, our new method for Text-to-3D!

We optimize a NeRF from scratch using a pretrained text-to-image diffusion model. No 3D data needed!

Joint work w/ the incredible team of @BenMildenhall @ajayj_ @jon_barron #dreamfusion

PixelCNNs generate images pixel-by-pixel in a fixed order. Can we choose the order? Yes! We propose Locally Masked Convolution: a simple, efficient operation for arbitrary order training+testing & more accurate likelihoods.

Paper w/ @pathak2206 @pabbeel 1/8
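
Under an arbitrary generation order, the set of already-generated neighbors differs from pixel to pixel, so a single shared causal mask is not enough. A minimal sketch, assuming a 3x3 kernel on a single-channel image: given an order (a list of (row, col) positions), build a separate mask for every location marking which kernel taps read strictly earlier pixels. The center tap is excluded, as for a first layer, so a pixel never sees itself. `local_masks` is a hypothetical helper; it only builds masks and does not perform the convolution.

```python
# Sketch (assumption): 3x3 kernel, one channel, strict masks (center excluded).
from typing import Dict, List, Sequence, Tuple

Pos = Tuple[int, int]


def local_masks(
    height: int, width: int, order: Sequence[Pos], k: int = 3
) -> Dict[Pos, List[List[int]]]:
    """Return a k x k 0/1 mask per pixel; 1 means that neighbor is generated earlier."""
    if sorted(order) != [(r, c) for r in range(height) for c in range(width)]:
        raise ValueError("order must be a permutation of all pixel positions")
    rank = {pos: t for t, pos in enumerate(order)}
    half = k // 2
    masks: Dict[Pos, List[List[int]]] = {}
    for (r, c), t in rank.items():
        mask = [[0] * k for _ in range(k)]
        for i in range(k):
            for j in range(k):
                nr, nc = r + i - half, c + j - half
                inside = 0 <= nr < height and 0 <= nc < width
                if inside and rank[(nr, nc)] < t:
                    mask[i][j] = 1
        masks[(r, c)] = mask
    return masks


raster = [(r, c) for r in range(3) for c in range(3)]
print(local_masks(3, 3, raster)[(1, 1)])  # [[1, 1, 1], [1, 0, 0], [0, 0, 0]]
```

With raster order this reproduces the familiar PixelCNN-style mask at the center pixel; reversing the order flips which neighbors are visible.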

Wow! DreamFusion has been given the Outstanding Paper award at #iclr2023! Huge congratulations to my co-authors @poolio @BenMildenhall @jon_barron, and thank you to the conference organizers and reviewers for the feedback and recognition!

New work on autoregressive generative models! We improve the expressiveness of your favorite continuous autoreg models like Trajectory Transformer, WaveNet and Image GPT with Adaptive Categorical Discretization. See below for code. Paper at #UAI2022
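
The tweet does not spell out the parameterization, so the following is only a sketch under an assumed reading: each dimension, scaled to [0, 1], gets a categorical distribution over bins whose widths are also learned. Bin k has width w_k and probability mass h_k (both from a softmax over hypothetical logits), giving a piecewise-constant density h_k / w_k inside that bin. `adacat_log_density` and its arguments are illustrative names, not the paper's code.

```python
# Sketch (assumption): adaptive-width bins on [0, 1] with piecewise-constant density.
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def adacat_log_density(
    x: float, width_logits: Sequence[float], mass_logits: Sequence[float]
) -> float:
    """Log density of x in [0, 1] under learned bin widths and bin masses."""
    if not 0.0 <= x <= 1.0:
        raise ValueError("x must lie in [0, 1]")
    if len(width_logits) != len(mass_logits):
        raise ValueError("need one width logit and one mass logit per bin")
    widths = softmax(width_logits)  # bin widths, sum to 1, so bins tile [0, 1]
    masses = softmax(mass_logits)   # probability mass per bin, sums to 1
    left = 0.0
    for k, (w, h) in enumerate(zip(widths, masses)):
        right = left + w
        # The last bin also takes x == 1.0 and absorbs float round-off.
        if x < right or k == len(widths) - 1:
            return math.log(h) - math.log(w)  # density h / w inside bin k
        left = right
    raise AssertionError("unreachable")


print(adacat_log_density(0.3, [0.0, 1.0, -0.5], [0.3, -0.2, 0.8]))
```

Narrow bins with large mass give sharp peaks, which is how a small number of bins can still model concentrated values.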

Very cool! @pess_r ported our Denoising Diffusion models from TensorFlow to PyTorch and made an awesome web demo cc @hojonathanho @pabbeel

Modern generative models feel like powerful alien technologies that crash landed on Earth. Yet, they’re based on surprisingly simple concepts. I’m glad to have contributed to the popularization of diffusion models with our 2020 DDPM paper, and am so excited for progress to come.

Meet @GenmoAI. We help you create media in the formats you need to tell your stories. Try Genmo today with hilarious and immersive text-to-video generation 🎬

Controllability is a major problem for generative models, so it's hard to find the exact result that you're looking for in the latent space. Today, we announced Replay Camera Controls to allow creators to take the reins on generative video.

Text to 3D with Dream Fields! Come find @BenMildenhall @jon_barron and myself at poster spot 86a at #CVPR2022 today from 10 am-12:30 pm CDT. Paper and code: .

I’ll be at NeurIPS this year in New Orleans. Excited to cheer on my collaborators @AleEscontrela @AdemiAdeniji during the Wednesday morning poster session. In VIPER, we use video generative models to train reinforcement learning agents. Come find us at poster 1412 or DM to meet.

We’ll be presenting DietNeRF at #ICCV2021 tomorrow at 9 AM EDT! Come chat or watch our talk offline at . Our code is also now available at 🥳

Our V2 text-to-image generator is out. V2 tends to produce much more coherent and attractive images out of the box. Try it:

Cats munching on some snacks. Made with Genmo Replay text-to-video v0.1

Last week, I presented Locally Masked Convolution for Autoregressive Models at #UAI2020. It was a great conference with insightful conversations! You can read our paper at , or see the talk at . Joint work with @pabbeel @pathak2206

We build some crazy infrastructure at Genmo to improve user experience. Today, we released streaming previews. Right after submitting a video to our GPU cluster, Genmo sends users renders of their AI videos. It's super fun to play with. Try queuing up to 4 videos at a time and

Thanks @shaneguML! My Twitter profile photo was generated with Score Distillation Sampling. We can also sample images by optimizing 2D Fourier Feature Net weights, and we tried some early experiments using Mitsuba 3 as the differentiable renderer.
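
Score Distillation Sampling treats the image (or NeRF, or network weights) as parameters θ of a differentiable generator x = g(θ) and, per the DreamFusion paper, updates θ with E_{t,ε}[ w(t) (ε̂(z_t; y, t) − ε) ∂x/∂θ ], skipping the Jacobian of the diffusion model. A toy sketch, assuming a 1-D "image" x = θ and, in place of a pretrained text-to-image model, the exact noise predictor for a Gaussian data distribution N(μ, s²) under a variance-preserving schedule α_t = cos(πt/2), σ_t = sin(πt/2). The names `eps_hat` and `sds_toy`, the schedule, w(t) = 1 and all constants are illustrative assumptions.

```python
# Toy sketch (assumption): a 1-D "image" x = theta and an analytic Gaussian
# noise predictor stand in for a real renderer and a pretrained diffusion model.
import math
import random


def eps_hat(z: float, alpha: float, sigma: float, mu: float, s: float) -> float:
    """Exact E[eps | z] when x ~ N(mu, s^2) and z = alpha * x + sigma * eps."""
    return sigma * (z - alpha * mu) / (alpha**2 * s**2 + sigma**2)


def sds_toy(mu: float = 3.0, s: float = 0.1, steps: int = 2000,
            lr: float = 0.05, seed: int = 0) -> float:
    """Run SDS-style updates on theta and return its final value."""
    rng = random.Random(seed)
    theta = 0.0  # x = g(theta) = theta, so dx/dtheta = 1
    for _ in range(steps):
        t = rng.uniform(0.02, 0.98)  # skip the noise-free and pure-noise ends
        alpha = math.cos(math.pi * t / 2)  # alpha^2 + sigma^2 = 1
        sigma = math.sin(math.pi * t / 2)
        eps = rng.gauss(0.0, 1.0)
        z = alpha * theta + sigma * eps  # diffuse the current "render"
        # SDS step with w(t) = 1: (eps_hat - eps) * dx/dtheta; nothing is
        # backpropagated through eps_hat itself.
        grad = eps_hat(z, alpha, sigma, mu, s) - eps
        theta -= lr * grad
    return theta


print(sds_toy())  # settles close to mu = 3.0
```

In expectation the update is proportional to (θ − μ), so θ drifts toward the mode of the data distribution; swapping `theta` for image pixels or network weights changes only ∂x/∂θ.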

Great work! Few/single image conditioned NVS is fundamentally a generation problem, and multiview priors are an important research direction.

Excited to announce our work on novel view synthesis with diffusion models! Our model can lift a single 2d image into 3d.

Joint work w/ @wchan212 @rmbrualla @hojonathanho @taiyasaki @mo_norouzi

Fast multicloud data transfer is going to be important for synthesis and representation learning: need to move TB of data quickly and cheaply to the accelerators. Looking forward to trying this and great work @_parasj!

Very exciting progress on high-resolution image generation through denoising diffusion probabilistic models, a collaboration with @hojonathanho @pabbeel

New paper on diffusion probabilistic models with @ajayjain318 & @pabbeel:

Likelihood-based generative model with SOTA FID=3.17 on unconditional CIFAR10, and ProgressiveGAN-like quality on 256x256 LSUN & CelebA-HQ (sometimes generates dataset watermarks!)

@geoffreyhinton @MasoudMaani Google didn't invent diffusion models (but made plenty of progress on them)

Text to pixel art with denoising diffusion! Can you guess the captions used to generate these vector graphics? #genmo

Text to image to NeRF to mesh to image to image 🤯

Text-to-3D-to-Image)! I ran the output of DreamFusion through #stablediffusion #img2img. This workflow would be amazing for generating artwork with precise control over the subject. #dreamfusion

Replay FX gives generative AI videos very cool kaleidoscopic effects🛟🌸

We introduced a library of six customizable effects on our website. FX come with controllable sliders to adjust their strength and playback speed

Check out our paper

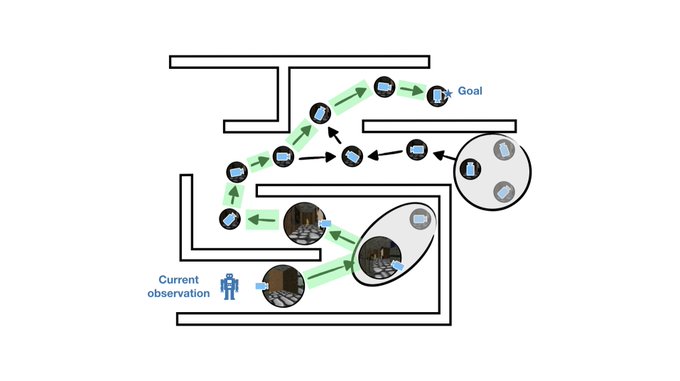

@NeurIPSConf

2020: Sparse Graphical Memory for Robust Planning. Allows an RL agent to build, abstract and plan over a memory of previous observations, really helping with robustness of long-horizon navigation.

New paper coming up at

@NeurIPSConf

- Sparse Graphical Memory for Robust Planning uses state abstractions to improve long-horizon navigation tasks from pixels!

Paper:

Site:

Co-led by

@emmons_scott

,

@ajayj_

, and myself.

[1/N]
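
Once past observations are stored as nodes of a graph, the planning part reduces to a shortest-path search over that memory. A minimal sketch, assuming a hand-built memory graph with hypothetical node names and edge costs (for example, estimated steps between observations). It shows only planning with Dijkstra's algorithm, not how Sparse Graphical Memory builds or sparsifies the graph; `plan` and `memory` are illustrative names.

```python
# Sketch (assumption): the memory graph and its edge costs are given.
import heapq
from typing import Dict, List, Optional, Tuple

Graph = Dict[str, List[Tuple[str, float]]]


def plan(graph: Graph, start: str, goal: str) -> Optional[List[str]]:
    """Return the cheapest sequence of waypoints from start to goal, or None."""
    dist = {start: 0.0}
    prev: Dict[str, str] = {}
    frontier = [(0.0, start)]
    while frontier:
        d, node = heapq.heappop(frontier)
        if node == goal:
            path = [goal]
            while path[-1] != start:
                path.append(prev[path[-1]])
            return path[::-1]
        if d > dist.get(node, float("inf")):
            continue  # stale queue entry
        for nxt, cost in graph.get(node, []):
            nd = d + cost
            if nd < dist.get(nxt, float("inf")):
                dist[nxt] = nd
                prev[nxt] = node
                heapq.heappush(frontier, (nd, nxt))
    return None


# Hypothetical memory: nodes are stored observations, edges are estimated costs.
memory: Graph = {
    "obs_a": [("obs_b", 2.0), ("obs_c", 5.0)],
    "obs_b": [("obs_c", 1.0), ("obs_d", 4.0)],
    "obs_c": [("obs_d", 1.0)],
    "obs_d": [],
}
print(plan(memory, "obs_a", "obs_d"))  # ['obs_a', 'obs_b', 'obs_c', 'obs_d']
```

A sparser memory means fewer nodes to search and fewer unreliable edges for the agent to follow between waypoints.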

Great work @Michaelvll1 @_parasj and friends! A nice approach for combining local reasoning over a graph with global self-attention. The GNN may be learning a positional embedding based on local graph structure.

"Representing Long-Range Context for Graph Neural Networks with Global Attention" by @_parasj @Michaelvll1 et al. at #NeurIPS2021

Strong results on graph classification when combining GNN --> Transformers.

PDF:

Happy holidays!

This is a great application of DDPM by @cnxhk, and it's impressive that only 6 iterations are needed during sampling. Generative models should scale with data complexity rather than dimensionality!

New paper on contrastive learning for programming languages with @_parasj @tianjun_zhang @pabbeel @mejoeyg and Ion Stoica! ContraCode learns similar representations for equivalent but differently implemented JavaScript programs using compiler-based data augmentations #ml4code
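
Contrastive objectives of this kind score an anchor embedding against one positive (another augmented variant of the same program) and several negatives (other programs), then apply cross-entropy on those scores. A minimal sketch of the generic InfoNCE loss, assuming embeddings are already computed; the vectors, the temperature of 0.07 and the names `cosine` and `info_nce` are hypothetical, and this is not ContraCode's training code or augmentation pipeline.

```python
# Sketch (assumption): generic InfoNCE on precomputed embeddings.
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def info_nce(anchor: Sequence[float], positive: Sequence[float],
             negatives: List[Sequence[float]], temperature: float = 0.07) -> float:
    """-log softmax probability of the positive among {positive} + negatives."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))  # stable log-sum-exp
    return log_z - logits[0]


# Hypothetical embeddings: two variants of one program vs. two unrelated programs.
variant_1 = [0.9, 0.1, 0.0]
variant_2 = [0.8, 0.2, 0.1]
others = [[0.0, 1.0, 0.0], [0.1, 0.0, 1.0]]
print(info_nce(variant_1, variant_2, others))  # small: the positive dominates
```

Minimizing this loss pushes equivalent programs together and unrelated ones apart, regardless of surface-level differences introduced by the augmentations.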

Chat with us tomorrow at #NeurIPS2020 during the 9-11 AM PST poster session! You can check out the talk or teleport to our poster from . We're in Town A0, Spot B0.

@junyanz89 @ndrewLiu Cool! Wonderful to see more progress on few shot NeRF. You might enjoy DietNeRF, one of our works that approaches the overfitting problem with another auxiliary loss:

It was a pleasure to catch up with @profjoeyg earlier this week on the Generating Conversation podcast. Joey was a fantastic mentor and collaborator at Berkeley. Check out the interview below.

Latest interview from @profjoeyg is out!

We chatted with @ajayj_, who's the co-founder of @genmoai.

The conversation touches on the history of diffusion models 🖼️, some awesome demos 🎥, and the tech behind Genmo 🤖. Check it out!

Come talk with @hojonathanho and me about DDPMs at #NeurIPS2020! We're in the poster session now, until 11 AM PST. A link is available at . Paper: .

I had a wonderful time interning with @RaquelUrtasun in 2018, and highly recommend this opportunity to interested students.

Research internships are now available at @Waabi_ai. All year long, with duration of 3-12 months. Available in both Canada and the US. Join the team at the forefront of innovation in #SelfDrivingCars!

Apply:

So smooth!

Playing around with motion @altfortomorrow

COOL!

I just tried @genmoai on three images. It did great and it's free.

I put all three together in a short vid👇 I sped it up 2X

#ai #AIArtCommuity #aiART #aianimation #animation #midjourneyV6 #digitalart #motionGraphics #art

We're at the UAI virtual poster session now. Come find @qiyang_li and me at Poster Session II (c), poster spot G5. Joint work with @pabbeel at @berkeley_ai.

Congrats @AxSauer and collaborators!

LMConv improves PixelCNN++ (@TimSalimans et al.) CIFAR10 likelihoods to 2.89 bpd by averaging over multiple orders (with one set of parameters). At test time, generate coherent image completions by choosing a maximum context order: just sample the missing pixels last! 5/8
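
If "averaging over multiple orders" means a uniform mixture over K orders that share one set of weights (our assumption), the ensemble log-likelihood is log((1/K)·Σ_k p_k(x)), computed stably with log-sum-exp, and bits per dimension divide the negative log-likelihood in nats by D·ln 2, with D = 32·32·3 = 3072 for a CIFAR10 image. A minimal sketch, assuming the per-order log-likelihoods are already computed (the numbers below are made up for illustration, not results). It also builds the completion order described above: observed pixels first in raster order, missing pixels last. All function names are hypothetical.

```python
# Sketch (assumption): uniform mixture over orders; per-order log-likelihoods given.
import math
from typing import List, Sequence, Set, Tuple

Pos = Tuple[int, int]


def ensemble_log_likelihood(per_order_ll: Sequence[float]) -> float:
    """log((1/K) * sum_k exp(ll_k)) in nats, via a stable log-sum-exp."""
    m = max(per_order_ll)
    k = len(per_order_ll)
    return m + math.log(sum(math.exp(ll - m) for ll in per_order_ll)) - math.log(k)


def bits_per_dim(log_likelihood_nats: float, num_dims: int) -> float:
    """Convert a log-likelihood in nats to bits per dimension."""
    return -log_likelihood_nats / (num_dims * math.log(2))


def completion_order(height: int, width: int, missing: Set[Pos]) -> List[Pos]:
    """Observed pixels in raster order, then the missing pixels last."""
    raster = [(r, c) for r in range(height) for c in range(width)]
    return [p for p in raster if p not in missing] + [p for p in raster if p in missing]


D = 32 * 32 * 3  # dimensions of one CIFAR10 image
lls = [-6300.0, -6250.0, -6280.0]  # made-up per-order log-likelihoods (nats)
print(bits_per_dim(ensemble_log_likelihood(lls), D))
print(completion_order(2, 2, {(0, 1)}))  # [(0, 0), (1, 0), (1, 1), (0, 1)]
```

By Jensen's inequality the mixture log-likelihood is never below the average of the per-order log-likelihoods, which is why averaging orders can only help under this reading.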

PixelCNNs (@avdnoord et al. 2016) introduced a convolutional inductive bias with parallel training, useful for generating images in raster scan order and fitting latent priors (e.g. VQ-VAE). SPN (@jacobmenick et al.) proposed a variant to support a different subscale order. 7/8

I'm sharing this since we've made a big update to our paper from last year, with lots of new robustness experiments, insights and open questions. Co-authored with @_parasj @tianjun_zhang @pabbeel @mejoeyg and Ion Stoica. 3/3

@ShirazAkmal The ghost model above is an exported mesh (GLB file), rendered in

I also have a bunch loaded up in Blender, planning to play around :)
