Marvin Schmitt

@MarvinSchmittML

Followers: 1,152 · Following: 609 · Media: 264 · Statuses: 1,941

PhD student @ELLISforEurope ( @Uni_Stuttgart + @AaltoUniversity ) | Generative Modeling + ProbML + Amortized Inference + UQ | prev. CS + Psychology @UniHeidelberg

Joined August 2021


@tcarpenter216

Machine learning scientist here.

I use the same trick to get rich with poker ♣️♥️♠️♦️

1. Play 649,739 hands privately at home.
2. Go to the casino's poker table.
3. Go all-in in the first round.
4. Get a guaranteed royal flush (odds 649,739 : 1).

Casinos hate this easy trick! 🔥
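The odds in step 4 check out for a single deal: there are 4 royal flushes among C(52, 5) = 2,598,960 five-card hands. A short Python check, assuming one five-card hand dealt from a freshly shuffled 52-card deck (no draws, no community cards), also spells out why the "trick" is the joke: deals are independent, so the hands played at home do not change the probability for the next hand.

```python
from fractions import Fraction
from math import comb

# Number of distinct five-card hands from a standard 52-card deck.
total_hands = comb(52, 5)

# There is exactly one royal flush per suit, so four in total.
p_royal = Fraction(4, total_hands)

# Odds against = unfavourable : favourable = (1 - p) / p.
odds_against = (1 - p_royal) / p_royal

# Deals are independent, so the next hand still has probability p_royal.
# Even over 649,740 independent hands, the chance of seeing at least one
# royal flush is only about 1 - 1/e.
n_hands = 649_740
p_at_least_one = 1 - (1 - float(p_royal)) ** n_hands

print(total_hands)               # 2598960
print(p_royal)                   # 1/649740
print(odds_against)              # 649739
print(round(p_at_least_one, 3))  # 0.632
```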


@lies_and_stats @tcarpenter216

Because I want you all to sign up for my free newsletter, which is really just a sales funnel into my $1,399 masterclass.


1. Many consider brms to be one of the best pieces of statistical software out there.

2. Being Paul's PhD student for the last 3 years, I know for sure that his writing skills are next-level.

Combining 1 and 2, this book is bound to be great.

For the nerds: p(great | 1, 2) = 💯


I am excited to start a new chapter: Today is my first day as a PhD candidate in @paulbuerkner's research group for Bayesian Statistics at @SimTechStuttga2.

I'm extremely grateful for this amazing opportunity and full of joy & curiosity about the future in this awesome team!


Do you want to create a personal website but don't know where to start?

My latest blog post is perfect for you.🚀

Learn how to create a website with #Quarto and my simple template to get started in no time!

👇 Check it out and create your website now.


Our paper on consistency models for simulation-based inference has been accepted at #NeurIPS2024!

Thanks to a fantastic team: Valentin Pratz (co-lead), Ullrich Köthe, @paulbuerkner, @StefanRadev13

📄 Preprint:

🔁 Updates, code, and thread follow soon™


I am at AISTATS this week to present our paper on Meta-Uncertainty in Bayesian Model Comparison.

Happy to chat and meet new folks!

#AISTATS2023 @aistats2023 @aistats_conf


@RamosCejudo @AJThurston

… and a psychological Easter egg.

Look at the irregularity at 95: if people manage 95, they surely want to hit 100.


We have pre-released the {ggsimplex} R package. It is a ggplot2 extension for point and density plots in the probability 2-simplex (triangle), useful for visualizing posterior model probabilities in #Bayesian #Statistics.

👉 Find out more in the blog post:
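A point in the probability 2-simplex is a vector of three posterior model probabilities that sum to one, drawn inside a triangle whose corners stand for "all mass on one model". A minimal Python sketch of the usual barycentric mapping to 2D coordinates, assuming an equilateral triangle with corners at (0, 0), (1, 0) and (0.5, √3/2); this illustrates the geometry only and is not {ggsimplex}'s implementation or API (the function name is hypothetical).

```python
import math

# Corners of an equilateral triangle; corner k represents "all mass on model k".
# This placement of the corners is an arbitrary illustrative choice.
CORNERS = [(0.0, 0.0), (1.0, 0.0), (0.5, math.sqrt(3) / 2)]


def simplex_to_xy(probs):
    """Map three model probabilities (summing to 1) to 2D plotting coordinates."""
    if len(probs) != 3:
        raise ValueError("expected probabilities for exactly three models")
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must be non-negative and sum to 1")
    # The point is the convex combination of the corners, weighted by probs.
    x = sum(p * cx for p, (cx, _) in zip(probs, CORNERS))
    y = sum(p * cy for p, (_, cy) in zip(probs, CORNERS))
    return x, y


# Certainty in model 1 lands on its corner; equal probabilities land on the centroid.
print(simplex_to_xy([1.0, 0.0, 0.0]))
print(simplex_to_xy([1 / 3, 1 / 3, 1 / 3]))
```

Because the mapping is a convex combination, every valid probability vector lands inside the triangle, and distances to a corner reflect how much mass the other two models receive.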


Our paper "Meta-Uncertainty in Bayesian Model Comparison" got accepted at #AISTATS 2023! Special thanks to the best coauthors + mentors @StefanRadev13 & @paulbuerkner!

The preprint is on arXiv; the camera-ready version will follow:

#AISTATS2023 #ML #AI


I'm spending 6 months as a visiting researcher in the Probabilistic ML group at @AaltoUniversity 🇫🇮

Excited to meet and collaborate with inspiring people!

Thanks to Aki Vehtari (@avehtari) for hosting me, and to @ELLISforEurope, @SimTechStuttga2, and @Cyber_Valley for mobility funding.


@ZetaOf1 @Julius_Ktxt

I can somewhat understand it from a mathematician's perspective. Assignment and equality aren't the same:

x = x+1 ⁉️

vs.

x <- x+1 ✅


@FanLiDuke

And of course the infamous "I'm humbled by xx" (also for awards).

Which makes no sense at all, because winning an award literally means you beat everyone else. How can that humble you lol


@ClaireLunde @AcademicChatter

When my son came to a conference with me this year, we turned my poster into a giant paper airplane afterwards ✈️


"JANA: Jointly Amortized Neural Approximation of Complex Bayesian Models" accepted at

#UAI2023 @UncertaintyInAI 🚀

TL;DR: Amortized simultaneous likelihood + posterior approximation in simulation-based inference.

📚 Paper:

🌐 Code:


Ever wondered whether your extreme evidence for a model is reproducible in a replication study?

We propose a method to predict the posterior model probabilities on new data with a novel framework: Meta-Uncertainty.

The best thing: It's applicable to virtually any analysis!

Posterior model probabilities are measures of uncertainty, but are also uncertain themselves. In our new preprint, @MarvinSchmittML, @StefanRadev13, and I take a deep dive: We introduce Meta-Uncertainty and explore ties to overconfidence and reproducibility:


Thank you so much for the shoutout!

The brand-new BayesFlow version based on Keras 3 is available on the dev branch. We'll merge to main when we're feature-complete.

Questions, feedback, and contributions are always welcome!

Here is the Discourse forum:


🚨 BayesFlow can reliably detect Model Misspecification and Posterior Errors in Amortized Bayesian Inference 🚨

In a paper with @paulbuerkner, Ullrich Köthe, and @StefanRadev13, we tackle a major problem in simulation-based inference w/ neural nets! 🧵👇


Working with Paul is the best thing that ever happened to me in my professional life. It's hard to overstate how grateful I am that he is my PhD supervisor.

If you want to apply to this position and have any informal questions: My DMs are open!


I'll be presenting our paper "Meta-Uncertainty in Bayesian Model Comparison" at today's AISTATS poster session.

👉 Let's chat at poster #125 this afternoon!

🌐 Project website:

#AISTATS2023 @aistats_conf @aistats2023


This is the most exotic application of our BayesFlow library for amortized Bayesian inference so far:

🕊️ An agent-based model of birdsong learning on real data 🕊️

Authors: @oporornis, @aalbina, R Alexander Bentley, David Guerra, @MasYoungblood

🔗 Link:


@PR0GRAMMERHUM0R

Nothing wrong here.

According to naming conventions, there’s a HelloWorld class with a constructor that takes a string argument.

In this line of code, an instance of HelloWorld is initialized with the argument "print".

Problem? 🥸


@stevain

This animation makes me feel uneasy.

For my mental sanity, please show the *de*noising process next time 👀


✨ I had a blast on the @LearnBayesStats podcast

🧪 We talked about my research on amortized Bayesian inference (= neural nets for fast Bayes)

💭 I'm passionate about this promising field, but many open questions about reliability remain

🎧 Tune in and let us hear your thoughts!

📢 Episode 107 is Now Available!

✨ In this episode, we dive deep into the world of amortized Bayesian inference with @MarvinSchmittML

🎧 Tune in now to gain insights:


I am excited to announce the first publication of our new industry-academia collaboration.

Together with great co-authors @tcarpenter216 and @ZetaOf1, we explore the impact of graduate education on subjective well-being.

Link to full pre-print (YouTube):


@arthurturrell @neilgcurrie

Definitely {purrr}: it makes R feel a bit like Python via functions like partial and map.
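For readers who know only the R side of that comparison, the Python idioms in question are `functools.partial` (fix some arguments of a function, get a new function back) and the built-in `map` (apply a function to every element), which play roughly the roles of `purrr::partial()` and `purrr::map()`. A minimal sketch; the `scale` helper is hypothetical and only there for illustration.

```python
from functools import partial


def scale(x, factor, offset=0.0):
    """Hypothetical helper: linear transformation factor * x + offset."""
    return factor * x + offset


# partial() fixes the `factor` argument and returns a new callable.
double = partial(scale, factor=2.0)

# map() applies the callable to every element and returns a lazy iterator,
# so we wrap it in list() to materialise the results.
values = [1.0, 2.5, 4.0]
doubled = list(map(double, values))
print(doubled)  # [2.0, 5.0, 8.0]
```

One design difference worth knowing: `purrr::map()` always returns a list, whereas Python's `map` is lazy, hence the explicit `list(...)`.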


A great summary of amortized Bayesian inference with neural networks for folks with an MCMC background (e.g., Stan or PyMC).

Thanks @MikeLwrnc! ✨


The sun is setting over Levi and @bayescomp has ended.

Thanks to all attendees for the great conversations and talks. And a special thank you to the organizers for making this awesome conference possible!

#BayesComp2023


On my way to the @ELLISRobustML workshop in beautiful Helsinki 🇫🇮☀️

I will present our paper on data-efficient amortized Bayesian inference with self-consistency losses (ICML 2024) in today's poster session.
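The intuition behind a self-consistency check rests on Bayes' rule: rearranged, it gives log p(y) = log p(θ) + log p(y | θ) − log p(θ | y), and the left-hand side does not depend on θ. So if an approximate posterior q(θ | y) is exact, plugging different θ values into the right-hand side must always return the same number, and any spread signals an error. The sketch below illustrates only that idea, under loud assumptions: a toy conjugate Gaussian model (θ ~ N(0, 1), y_i | θ ~ N(θ, 1)) where the exact posterior is known in closed form, a deliberately shifted posterior as the "bad" approximation, and hypothetical function names. It is not BayesFlow's API or the paper's training objective, where the posterior would be a neural approximation.

```python
import math
import random
import statistics

PRIOR_VAR = 1.0  # theta ~ N(0, PRIOR_VAR)        (toy assumption)
NOISE_VAR = 1.0  # y_i | theta ~ N(theta, NOISE_VAR) (toy assumption)


def normal_logpdf(x, mean, var):
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)


def log_prior(theta):
    return normal_logpdf(theta, 0.0, PRIOR_VAR)


def log_likelihood(y, theta):
    return sum(normal_logpdf(yi, theta, NOISE_VAR) for yi in y)


def exact_posterior(y):
    """Closed-form posterior mean and variance of this conjugate model."""
    post_var = 1.0 / (1.0 / PRIOR_VAR + len(y) / NOISE_VAR)
    post_mean = post_var * sum(y) / NOISE_VAR
    return post_mean, post_var


def log_marginal_estimates(y, post_mean, post_var, thetas):
    """log p(theta) + log p(y | theta) - log q(theta | y), one value per theta."""
    return [
        log_prior(t) + log_likelihood(y, t) - normal_logpdf(t, post_mean, post_var)
        for t in thetas
    ]


def self_consistency_penalty(y, post_mean, post_var, thetas):
    """Variance of the log-marginal estimates across theta; 0 when self-consistent."""
    return statistics.pvariance(log_marginal_estimates(y, post_mean, post_var, thetas))


random.seed(1)
y = [random.gauss(0.7, 1.0) for _ in range(20)]
thetas = [-1.0, 0.0, 0.5, 1.0, 2.0]

mean, var = exact_posterior(y)
good = self_consistency_penalty(y, mean, var, thetas)
bad = self_consistency_penalty(y, mean + 0.5, var, thetas)  # deliberately wrong posterior
print(f"exact posterior: {good:.3g}, shifted posterior: {bad:.3g}")
```

Note that the penalty needs no ground-truth parameters for the observed data set, only the prior and likelihood, which is what makes this kind of check attractive when simulations are scarce.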


1/2

We carry that to the extreme in our Meta-Uncertainty framework for Bayesian model comparison.

We simulate data from each competing model and perform full model comparisons on each simulated data set. This approximates the *sampling distribution* of model comparison results.
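The simulation loop described in this tweet can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the Meta-Uncertainty framework itself: two nested models for Gaussian data (M0: mean fixed at 0; M1: mean θ with a N(0, 1) prior; unit noise variance in both), equal prior model probabilities, and a closed-form Bayes factor so that each "full model comparison" is cheap. All names and settings are hypothetical.

```python
import math
import random
import statistics

N_OBS = 30       # observations per simulated data set (assumption)
PRIOR_VAR = 1.0  # prior variance of theta under M1 (assumption)


def simulate(model, rng):
    """Draw one data set from M0 (theta = 0) or from M1 (theta ~ N(0, PRIOR_VAR))."""
    theta = 0.0 if model == 0 else rng.gauss(0.0, math.sqrt(PRIOR_VAR))
    return [rng.gauss(theta, 1.0) for _ in range(N_OBS)]


def posterior_prob_m1(y):
    """p(M1 | y) with equal prior model probabilities.

    For this model pair the log Bayes factor has a closed form:
    log BF10 = -0.5 * log(1 + n * tau2) + S^2 * tau2 / (2 * (1 + n * tau2)),
    where S = sum(y) and tau2 = PRIOR_VAR.
    """
    n, s = len(y), sum(y)
    shrink = 1 + n * PRIOR_VAR
    log_bf10 = -0.5 * math.log(shrink) + s * s * PRIOR_VAR / (2 * shrink)
    return 1.0 / (1.0 + math.exp(-log_bf10))


def sampling_distribution(model, n_sims=2000, seed=0):
    """Repeat the full model comparison on many data sets simulated from `model`."""
    rng = random.Random(seed)
    return [posterior_prob_m1(simulate(model, rng)) for _ in range(n_sims)]


for true_model in (0, 1):
    pmps = sampling_distribution(true_model)
    deciles = statistics.quantiles(pmps, n=10)
    print(
        f"data from M{true_model}: mean p(M1|y) = {statistics.mean(pmps):.2f}, "
        f"10%-90% range = [{deciles[0]:.2f}, {deciles[-1]:.2f}]"
    )
```

Reading the two summaries side by side shows how much the posterior model probabilities themselves vary when the same comparison is repeated on fresh data from each model, which is the sampling distribution the thread refers to.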


I am presenting a poster at @bayescomp today.

Come by and let's chat about why we have to think extra hard about model misspecification when we perform amortized Bayesian inference.

#BayesComp2023


Had a great time hosting a tooling session about amortized simulation-based inference at the ELLIS Doctoral Symposium.

So many amazing tooling sessions from inspiring colleagues. It was a pleasure working with all of you!

@ELLISforEurope @FCAI_fi #EDS2023 #ELLISPhD


How can we use deep neural networks for Bayesian posterior inference? ✨

What's the difference to a Bayesian Neural Network (BNN)? 👀

Tune in to episode #107 of the Learning Bayesian Statistics podcast for more about Deep Learning ⚭ Bayes 🎧

Catch the full episode below 👇


Our paper on misspecification in amortized Bayesian inference was awarded an Honorable Mention at the German Conference on Pattern Recognition 2023 (@gcpr2023).

Thanks to my great co-authors Paul Bürkner (@paulbuerkner), Ullrich Köthe & Stefan Radev (@StefanRadev13)!

Link👇


Are there any tips or best practices for collaborative writing in Quarto?

I'm looking for an experience that is similar to Overleaf for LaTeX. My fallback option is git.

pinging @quarto_pub @MatthewBJane @SamanthaCsik @rappa753 @ivelasq3 @melvanbussel

#QuartoPub #Quarto


@eleafeit

Here's a great paper from my group that disentangles the question:

TLDR: The approximation algorithm is one part of the model; the other parts are (1) the *probabilistic joint model* and (2) the data to fit.


I will give a talk about Reliable Amortized Bayesian Inference at the Bayes on the Beach conference in Australia next week.

Let's catch up if you're there!

@QUTDataScience #BotB2024


I had a great time at #AISTATS2023 with many interesting talks and conversations!

Big thanks to the organizers @aistats_conf @aistats2023 for putting together such a memorable event in oh-so-beautiful Valencia 🏝️


I'm teaching an intro workshop on Scientific Python this week 🐍

The target audience has a background in statistics, R, and cognitive modeling.

Which topics would you like to see covered in such a workshop? Any tips?

#rstats #python #AcademicTwitter


I'm currently preparing an article for the experimental track of @JournalOVI.

That means: The article is a Quarto document on GitHub, deployed via GitHub Pages. You can add collapsible paragraphs, callouts, Shiny widgets, videos, ... the sky is the limit.

It's an absolute pleasure!


The PyVBMC documentation is divine.

I'm rebuilding the entire documentation of the BayesFlow library for amortized Bayesian inference, and I'm constantly drawing inspiration from the great docs that @AcerbiLuigi and @BobbyHuggins16 put together for PyVBMC 👏


I crafted this in pure matplotlib a few weeks ago. It looks simple at first, but it drove me crazy. It's a combination of:

- uncertainty bands or whiskers, depending on the data stream
- broken x-axis
- zoom-in with larger markers and grid

🫠🫠🫠


Great hands-on tutorials on amortized Bayesian model comparison with #BayesFlow 🤩

We just released a tutorial series on performing approximate Bayesian model comparison with #BayesFlow, illustrated with cognitive models. 1/2


@DavidKButlerUoA

By regrouping into a 5*N rectangle + x:

- blue fits snugly into blue
- orange fits snugly into orange
- result is a 5*N rectangle (didn't count N)
- the red dot is left over

-> total number is 5N + 1, which is not divisible by 5

(never had to count beyond 5, hope that's ok)


@stephenjwild @rmkubinec

We've got you covered.


@tcarpenter216

I love Statistical Rethinking, but it might be too advanced for folks with no previous experience.

To get some exposure, I would recommend online courses or videos rather than textbooks. There are heaps of offerings across platforms like Coursera.


@rmkubinec

If you were to ask me again and again, the probability of me saying that I am a Bayesian (or something more extreme), even if I were a Frequentist, is 5.3%.

Therefore, I was not able to reject my null hypothesis that I am a Frequentist at an α-level of 5% 🤷


I presented our work on data-efficient amortized Bayesian inference via self-consistency losses at the @ELLISRobustML workshop 🇫🇮☀️

Great crowd and interesting discussions!

Joint work with @desirivanova, Daniel Habermann, Ullrich Köthe, @paulbuerkner, and @StefanRadev13.


@JimGrange

If this means that I never ever ever ever have to code a Drift Diffusion Model myself again, I'll pay any amount of money for ChatGPT Plus 😅 @StefanRadev13


@_AlvinChristian

I don’t know what you *mean*, that’s a pretty *average* machine learning method.


Congrats again, Paul! This is so well-deserved 🥳

In 2020, @paulbuerkner was the first independent #jrgl to start in SimTech. 3 years and a research group of 5 doctoral researchers later, he is about to leave SimTech to become a Full Professor for Computational Statistics at @TU_Dortmund. Congrats and all the best! 🥳👏


Pushing the envelope of Bayesian surrogate modeling & simulation-based inference 🚀

Excited to finally release the pre-print of this new project with @StefanRadev13, Valentin Pratz, @uPicchini, Ullrich Koethe, and @paulbuerkner.

👉Check out BayesFlow at


Excited to kick off the semester with my class on statistical inference at @HS_Fresenius. The home office (aka lecture hall) is geared up and ready!


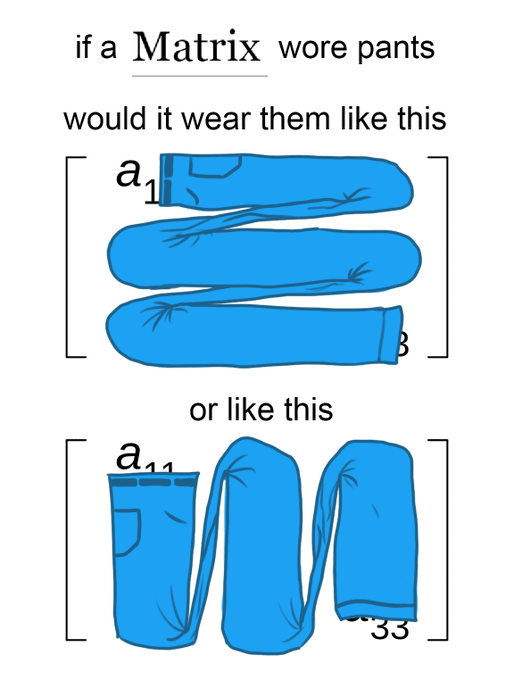

Update:

Thanks to the great stats community on x dot com the everything app, my post found its way to the person who took that exact photo yesterday. I just mindlessly hit "save photo" on my phone and couldn't find it anymore today.

Credits to: @yahrMason

@MarvinSchmittML

Yes, took this photo yesterday, I knew the internet needed it immediately when I turned the page


beautiful poster though

That hasn't been the Google logo since 2015...

I think @aistats_conf needs to update their banner files! 😂😂

(Pic stolen from a separate thread by @shakir_za)
