Sugandha Sharma

@sugsharma

Followers: 2,501 · Following: 359 · Media: 64 · Statuses: 382

GenAI @NVIDIA. Prev: Research Scientist @Microsoft Research (GPT-based gaming AI & alignment) | PhD @MIT @mitbrainandcog @FieteGroup @MITCoCoSci

Cambridge, MA

Joined May 2015


Celebrating the completion of my PhD at the @MIT BCS Stole Bestowal Ceremony today with my PhD advisor, Josh Tenenbaum. Grateful for his guidance and support! @mitbrainandcog @MITCoCoSci


Enjoyed the @NVIDIA-@MIT lunch meetup today w/ @DrJimFan! I was representing both sides for the first time today 🙂. Great reconnecting w/ these wonderful colleagues at MIT too! @BoyuanChen0, @ZhutianYang_, @avivnet, @NishanthJKumar1, @hyojinbahng, @haoshu_fang, @TheAndiPenguin


Celebrating with @vin_agarwal at the @MIT BCS Stole Bestowal Ceremony. Grateful to him for his unwavering support throughout my PhD! @mitbrainandcog


Thanks @doristsao for your kind & thoughtful words about our work! I am glad that you find it beautiful, creative and conceptually insightful!

For folks who would like to know more:

Short talk on Vector-HaSH

Short talk on MESH

@SebastianSeung Btw, I don't know if @FieteGroup is funded by BRAIN, but their recent work understanding grid cell-place cell dynamics as a general mechanism for episodic memory that factorizes problem of building attractors from problem of assigning content to them, is such a beautiful and


Thank you so much @SebastianSeung for appreciating our work! I am glad to hear that you consider it a breakthrough in theoretical neuroscience!

For folks who would like to know more:

Short talk on Vector-HaSH

Short talk on MESH

@doristsao @FieteGroup +1 to an amazing conceptual breakthrough in theoretical neuroscience that I think did not depend on big data or fancy technologies. What we can say is that BRAIN technologies will make the theory testable in a much more conclusive way than was ever possible.


Presenting poster II-122 at #cosyne2023 today!

First model of the hippocampal-entorhinal cortex that encapsulates key findings of entorhinal map plasticity: grid map fragmentation, interpolation at short timescales (local consistency), and map merger at long timescales (global consistency).


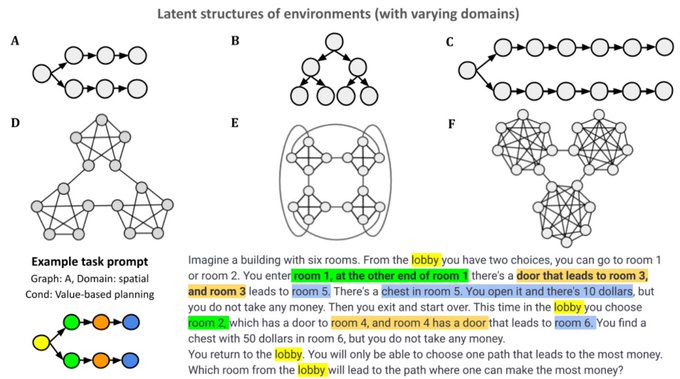

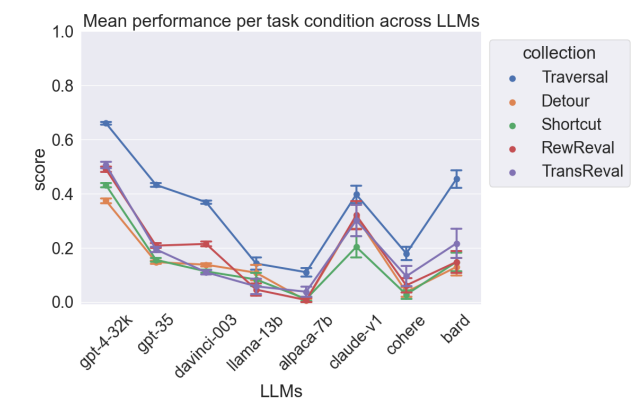

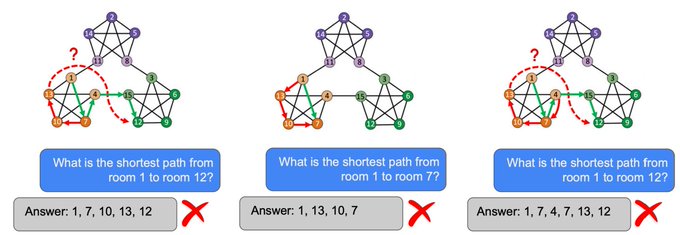

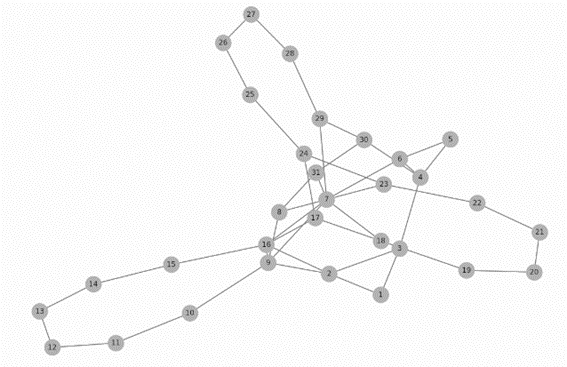

Great line of work by @criticalneuro & colleagues showing that despite knowing the connectivity structure of a spatial map (or graph), LLMs fail to report the shortest path between two rooms (nodes). They hallucinate edges that don't exist, report longer paths, & fall into loops.

Delighted to share our #neurips2023 paper w/ @grockious @hmd_palangi et al.

Evaluating Cognitive Maps & Planning in LLMs with CogEval

We test planning in 8 LLMs.

Failures like hallucinating invalid paths/falling in loops don't support emergent planning.

1/n


Huge congrats to Prof. Ila Fiete for being awarded the Swartz Prize for Theoretical and Computational Neuroscience!! So fortunate and grateful to be working with her!

Thanks to the Swartz foundation @SwartzCompNeuro, the SfN Swartz prize committee and the SfN @SfNtweets!

Touched to hear from so many brilliant female computational neuroscientists. For the young women and men in computational neuroscience — this is you next.

(1/5)


Thank you @mcgovernmit, @pmcgovern and @mitbrainandcog for all the support through the years!!!

Thanks to my family featured in this photo: @vin_agarwal for always being by my side, and especially @sharmadhruv71, Shivam and Soumya for coming all the way from Canada to attend!

Congrats to @sugsharma on her successful PhD thesis defense! Well done, Dr Sharma - we are all so proud of you!!

@FieteGroup


Excited to announce that our paper "Content addressable memory without catastrophic forgetting by heteroassociation with a fixed scaffold" has been accepted at #ICML!

arXiv version of the paper:

Stay tuned for the final camera-ready version!


Had a wonderful time presenting alongside @behrenstimb, @dileeplearning, @lengyel_m, Dora Angelaki, Ilker Yildirim, and Irina Higgins and Ishita Dasgupta from @DeepMind at the #cosyne2022 workshop. Grateful for all the encouragement and insights from these amazing scientists!


Great work on mapping a small part of the human cortex! Congratulations to the researchers on the connectomics team at @GoogleAI and @Harvard!


If you are at #cosyne2022, stop by my poster III-058 today to learn about Map Induction: Composition of spatial regions for efficient exploration in novel environments. #COSYNE22


Flocking behavior in birds is fascinating! In work at @MSFTResearch, I found that humans playing an Xbox game showed flocking (without explicit instruction or reward for it), while GPT-based AI agents played solo. Next: AI that utilizes social information.


What a creative holiday greeting from @mcgovernmit! Glad to have been a part of it, representing Hindi :)


Just finished three years of the Computational and Theoretical Neuroscience journal club today!

Big thanks to Ila Fiete @FieteGroup for being the driving force behind its inauguration in fall 2018, and to @ScienceMIT for funding us through the Science Quality of Life (SQoL) program. (1/n)


Congratulations to @maxjaderberg & team for their amazing work on AlphaFold 3! Great collaboration b/w @GoogleDeepMind and @IsomorphicLabs!

Super excited to be releasing AlphaFold 3 today, developed by @IsomorphicLabs and @GoogleDeepMind: our next generation AI model for predicting the biomolecular structures and interactions of proteins, DNA, RNA, small molecules, and more:

1/


SceneDiffusion optimizes a layered scene representation (during diffusion sampling) to obtain spatial disentanglement by jointly denoising scene renderings at different spatial layouts. This disentangles spatial info & appearance, allowing spatial editing! Thanks @liuziwei7 & team.


I was recently invited as a guest on an AI podcast and had an exciting and fun conversation with @kanjun and @joshalbrecht, the CEO and CTO of @genintelligent. Today I received this lovely gift and a very kind thank-you note from them! Thank you so much @kanjun and @joshalbrecht!


Recently got invited to present the MESH model at @GuangyuRobert's lab and at @TomasoPoggio's lab (thanks to @akshayrangamani for inviting!).

It was fun discussing memory with scientists/theorists in both labs, and I am grateful for all the intriguing questions & fun conversations!


@mitbrainandcog Stole Bestowal Ceremony with Julianne Gale Ormerod and Sierra Vallin. Thanks to both of them for their tremendous efforts towards supporting and enriching my experience as a graduate student in the BCS PhD program throughout my time at @MIT. Forever grateful to them!


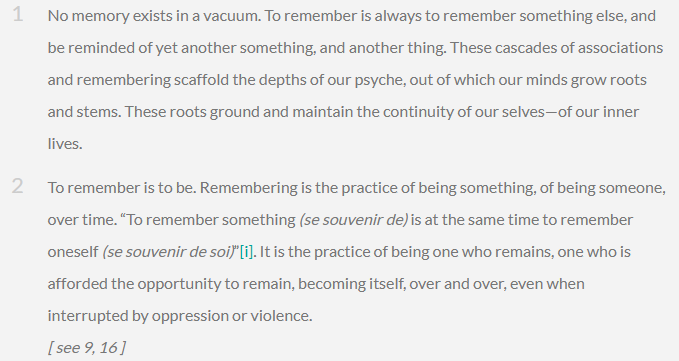

I have always thought that philosophy and the study of the brain go hand in hand, and this beautiful piece of writing by @criticalneuro reinforces that thought! It highlights how important memory is: “To remember is to be”. More power to everyone studying memory!

I've studied memory for 15 years with an empirical lens, scanned it, modeled it.

"The logic of memory" is my philosophical musing on memory, what I found missing in scientific discourse.

Thanks to @Philosoph_Salon & @esdsantos's course on James Baldwin🙏🏼


Had a great time presenting our MESH model at ICML 2022!

Check out the spotlight talk here:

Full Paper:

Check out the following Twitter thread for a quick summary:

Big thanks to the ICML organizers!


I will be presenting our work on Map Induction at #CogSci2022 in the poster session tomorrow from 8:30-10:30am. Drop by if you would like to chat!

Here's a short 5-min talk if you want a trailer:


Folks at #cosyne2022 #COSYNE22, come check out our poster I-140 to learn about building a content addressable memory model that escapes catastrophic forgetting through a combination of a pre-defined memory scaffold and heteroassociation of dense arbitrary patterns.


Watch PhysDreamer synthesize action-conditioned 3D object dynamics in response to interactions. This requires perception of the physical material properties of objects. PhysDreamer takes a physics-based approach, leverages object dynamics priors learned by video generation models

3D Gaussian is great, but how can you interact with it 🌹👋? Introducing #PhysDreamer: Create your own realistic interactive 3D assets from only static images! Discover how we do this below👇 🧵1/:

Website:


Excited for the K. Lisa Yang Integrative Computational Neuroscience #ICoN center at @mcgovernmit, which will create advanced mathematical models and computational tools to advance our understanding of the brain. Honored to be a part of this amazing initiative!

The #ICoN center will also provide four graduate fellowships to @MIT students each year in perpetuity. This year’s inaugural grad fellows include Mark Saddler from @JoshHMcDermott lab and @sugsharma from the Tenenbaum and Fiete labs. 🧵 4/5


Just registered for #COSYNE2022! Here are the submissions we will be presenting at @CosyneMeeting this year.

Got questions? Let's chat more in Lisbon!


The Great Picower Baking Show! Hosted by the Tsai lab @MIT_Picower @mitbrainandcog. What a wonderful effort to bring people together!


Human perception of correlated motion of lines as a moving cube, shown in the light show in #Boston on New Year's Eve. Reminded me of the cool illusions and perceptual effects shown by @vin_agarwal in @MIT's Splash2021 organized by @espmit. Group photo of our team of teachers!


How can we build a memory model that continuously trades off the number of stored patterns against pattern richness? Come to our poster at #ICML2022 to get some answers!

Paper:

Slides:

Check the following Twitter thread for a quick summary


Want to learn about a content addressable memory model that doesn't show catastrophic forgetting, but instead exhibits the desired memory continuum? I will be giving a talk on it at @cshlmeetings today in the afternoon session. #cshlNeuroAI

Full paper:


Interested in a memory model that gradually forgets, similar to humans?

Come to our Spotlight talk (4:15-5:45pm session) at #ICML2022 & come chat in the poster session today!

"Content Addressable Memory Without Catastrophic Forgetting by Heteroassociation with a Fixed Scaffold"


Really cool work combining inverse physics with inverse rendering. Finally, a step towards enabling virtual garment fitting, an important use case I have long thought about 😄.


Can AI be programmed to absorb inspiration, crave communication & hence creative expression? What about the active role between the piece of art and the viewer? For instance, The Tree of Life by Gustav Klimt, shown below, has been interpreted in multiple ways!

#ai #aiart #aiartcommunity


Excited to be on the program committee of the AMHN workshop on Associative Memories at @NeurIPSConf! I have been working with Ila Fiete on an associative memory model of hippocampal episodic memory enabled by pre-structured spatial representations. Excited to share & see new ideas!

Excited to share the schedule for our @NeurIPSConf AMHN workshop at #NeurIPS2023:

Please consider submitting your latest & greatest (new work, NeurIPS, AAAI, ICLR, etc):

CfP

Deadline Oct 6 (AoE)

Submissions


@mcgovernmit recently shed light on mindfulness research at @mitbrainandcog. Shout out to @isaac_treves @CindyLi75708399 @olaozpa @HalieOlson @lstigkeit @gabrieli_john @MITBrainStudy for their amazing work!

I gave a high-level outreach talk on your work!

1/3


I am deeply grateful to @SebastianSeung for recognizing this work and for his kind and encouraging words in his recent tweet 🙏🙏.

@doristsao @FieteGroup +1 to an amazing conceptual breakthrough in theoretical neuroscience that I think did not depend on big data or fancy technologies. What we can say is that BRAIN technologies will make the theory testable in a much more conclusive way than was ever possible.


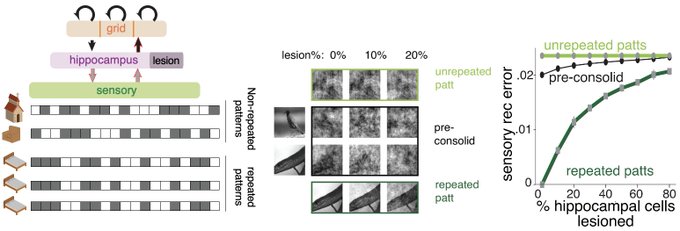

RAG is widely used to mitigate the problem of hallucinations in LLMs, emphasizing the need for efficient external memory storage & retrieval systems like MESH. This talk summarizes key aspects of MESH, a neural memory model inspired by neuroscience.


MESH factorizes the problem of storing memory into building attractors & assigning content to them, leading to a graceful trade-off b/w pattern number & pattern richness.

Brief talk:

Paper:

Tweeprint:

2/n


Come to MIT in Cambridge, August 6-9, for CCN this year!

📢Submissions for #CCN2024 are now open at ! 📢

We welcome submissions for 2-page papers (deadline: 12 April) and Generative Adversarial Collaborations (GACs), Keynote+Tutorials, and (new this year!) Community Events (deadline: 5 April).


Literature review with a visual search! How useful. I always wondered, "Why do we not have a graph-based visual search for papers?" Here it is!


Paper:

Twitter thread with a quick summary:

We will be presenting a poster from 6:30-8:30 @ #ICML2022 today. Come chat with us!


Secret to success: "fail as much as you can as quickly as possible"

Freaking thrilled to share that our big @CIHR_IRSC grant just got funded (at the 3rd percentile!?!). Feels like a big accomplishment, especially squeezing this in right at the end of our first year as a lab. Of course, the project also got not-funded 3 times first, so...


I would like to thank my advisors Ila Fiete and Josh Tenenbaum, my collaborator Sarthak Chandra, and members of @FieteGroup and @MITCoCoSci for their feedback and support.

Also thanks to @mitbrainandcog, @mcgovernmit, and @MIT for enabling this research 🙏🙏.


True. Vision would also be a primary input modality for spatial navigation, another area that's important for embodied AI. In addition to interacting with 3D objects, embodied agents need to explore & plan in 3D spaces, building generalizable spatial representations as humans do.


Amazing talks at #cosyne2021 so far! Enjoying looking at others' work and excited to present a poster on my work today at #cosyne2021 from 3-5pm and 7-9pm EST. Drop by if you wanna chat!


I am also truly grateful to @doristsao for her kind and thoughtful comments and a beautiful two-line summary of this work in her recent tweet 🙏🙏.

@SebastianSeung Btw, I don't know if @FieteGroup is funded by BRAIN, but their recent work understanding grid cell-place cell dynamics as a general mechanism for episodic memory that factorizes problem of building attractors from problem of assigning content to them, is such a beautiful and


Pretty cool work reconstructing the neural circuits that carry visual information to the navigation center of a fruit fly brain. This enables new hypotheses about how visual information is transformed at various processing stages to generate head direction signals!


Very useful for those working towards Human-AI alignment!


@mcgovernmit @Nancy_Kanwisher @theNASciences @mitbrainandcog @ScienceMIT @MIT_CBMM Congratulations!!! 👏👏


This is a great opportunity for anyone interested in cognitive neuroscience!


One hypothesis is that this is because episodic memory is enabled by pre-structured spatial representations that support high-capacity memory. Some of these ideas are fleshed out in this work:

Tweeprint:


Happy to have contributed to this insightful preview led by @honisanders. Here's the updated link:

Just published a Preview: describing the work of @jcrwhittington and @behrenstimb in creating the Tolman Eichenbaum machine.


Congratulations, Dr. Andrew Francl!


@iclr_conf Excited to present my work on Modular networks for high-capacity pattern and sequence memory at @ICLR_brains today! Drop by to chat and learn about a neural architecture inspired by the entorhinal-hippocampal system in the brain!


I would like to thank @MIT, @mitbrainandcog, the ICoN center @mcgovernmit, @MIT_CBMM and @NSF for enabling this research.

If you would like to chat more in person and are going to #ICML2022, please find me at the @BeyondBayes workshop @icmlconf, where I will be presenting this work.


We taught a course titled "Brain as a Computing Device". Thanks to Akhilan Boopathy, @jaedong_hwang, and Sarthak Chandra from @FieteGroup; Kartik Chandra from @MITCoCoSci; and @vin_agarwal from the McDermott lab @mitbrainandcog for joining me in teaching this course to 45 students!
