Anyi Rao

@raoanyi

Followers

1,791

Following

859

Media

69

Statuses

405

Assistant Professor @HKUST Prev. Postdoc @Stanford & Ph.D. @ MMLab & @Meta @RealityLabs @UofT Works #ControlNet #AnimateDiff @cveu_workshop

Stanford, CA

Joined July 2016


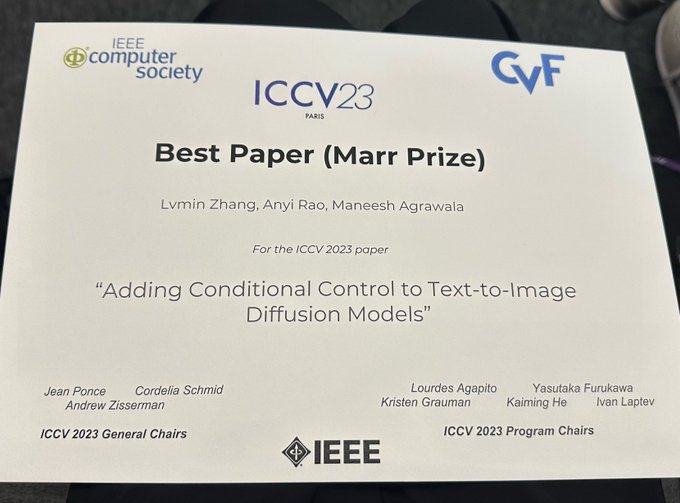

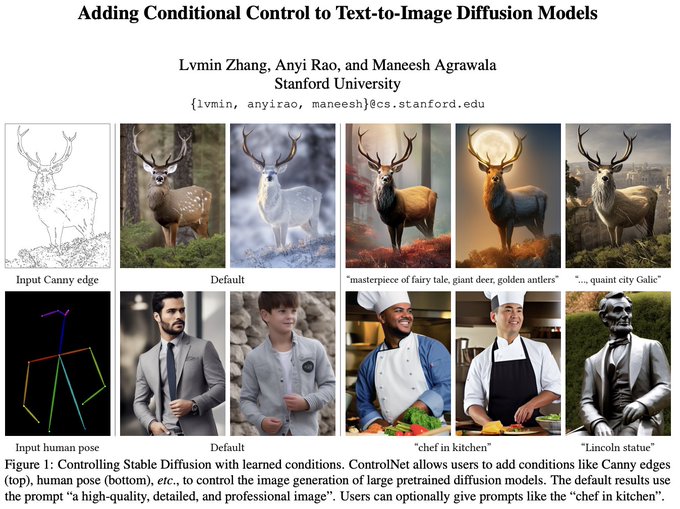

Huge thanks and congrats to my coauthors

@lvminzhang

@magrawala

#ControlNet

for winning the Marr Prize (best paper award)

@ICCVConference

#ICCV2023

Join us this afternoon at Oral 1:30 PM “Paris Sud” and Poster 2:30~4:30 PM “Foyer Sud 156”

8

21

435

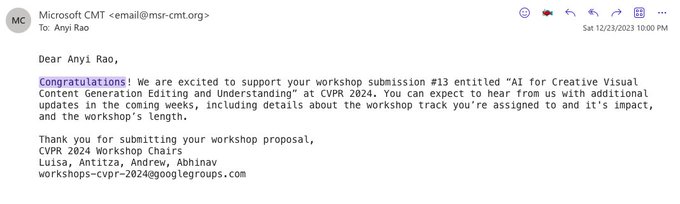

We are excited to share that the AI for Creative Visual Content Generation Editing and Understanding Workshop

@cveu_workshop

has been accepted to

#CVPR2024

@CVPRConf

. See you in Seattle, where art, tech, and creativity meet! It is our first time coming to the US🇺🇸

2

10

64

If you miss the

#ICCV2023

@ICCVConference

, you may check our interactive 360 recording of the

#CVEU2023

workshop

@cveu_workshop

to get a feeling of being in Paris (though it is a pity that our 360 camera cannot handle the HDR environment well)

1

3

35

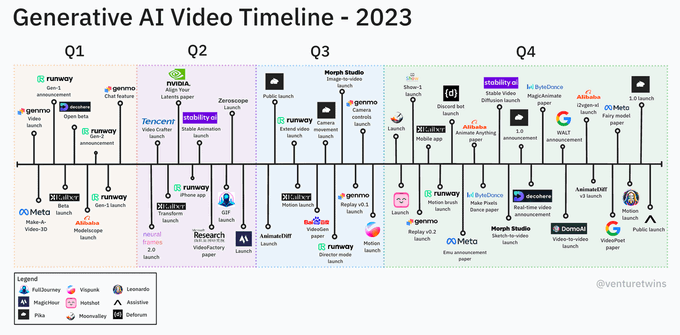

Cool to see applications from two of our research projects

#AnimateDiff

#ControlNet

AI VFX experiment: AnimateDiff + ControlNet

This combines a simple logo animation (left) and

#AnimateDiff

with the QR Monster controlnet into a loop. Instant water simulation!

#aianimation

#stablediffusion

#VFX

#mograph

54

626

5K

2

0

28

#CVPR2023

Great tutorial on Prompting in Vision with a room full of attendees

organized by

@kaiyangzhou

@liuziwei7

@phillip_isola

@lschmidt3

@denny_zhou

0

5

25

It is indeed an amazing opportunity to exchange ideas, and I feel honored to present a talk!

@Stanford

@UCBerkeley

@Caltech

.

@Stanford

@UCBerkeley

&

@Caltech

computer vision faculty & their students meet today to exchange research ideas, topics include 3D vision, language-visual models, robotic learning, computational photography, vision foundation models, etc. At the EOD, AI is truly fun Science! 1/

8

28

397

1

0

21

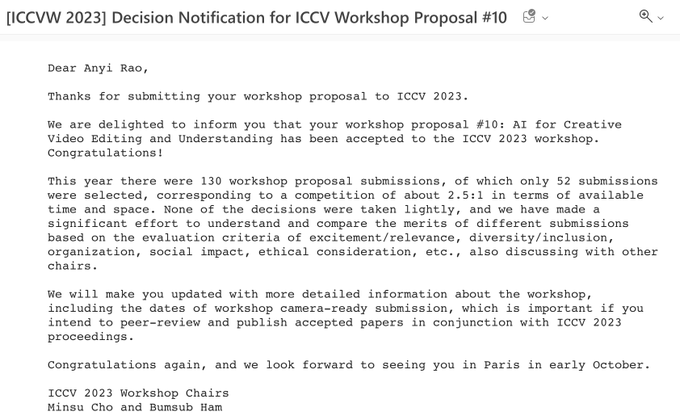

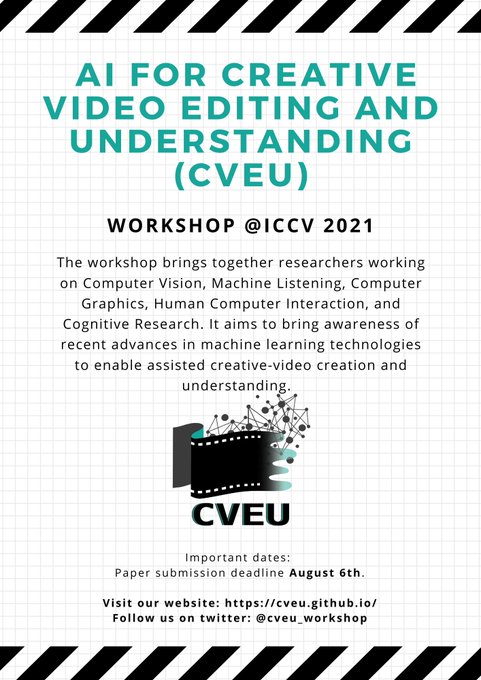

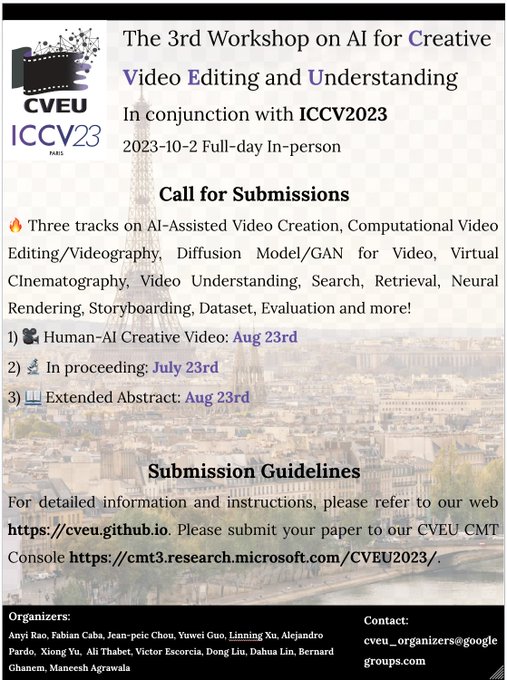

Excited to share the acceptance of the

#ICCV2023

3rd Workshop on AI for

Creative Video Editing and Understanding!

Looking forward to seeing you in Paris, a global center of art, fashion, gastronomy, and culture!

@cveu_workshop

2

2

20

[1/6]

#SparseCtrl

supports multiple scribble keyframes to a video🎬

Code to be released soon here; please star it to get notified

1

1

18

Join us at

#ICCV2023

@ICCVConference

Maybe you've heard of

#ControlNet

for controlling text-to-image diffusion models.

At

#ICCV2023

,

@lvminzhang

will explain how it works and present more of the behind-the-scenes details.

arXiv:

A1111 webui plugin:

4

41

256

0

0

15

“Black-box models are difficult to understand failures and costly to improve performance or fix problems” It is a really inspiring talk by Prof.

@YiMaTweets

with a bunch of theoretical insights 👨🏻🔬, which is also very inspiring to my AI + creativity research👨🏻🎨

0

1

13

Check out an update of high-resolution

#AnimateDiff

, which can run on a personal device with only ~13GB GPU VRAM

1

0

13

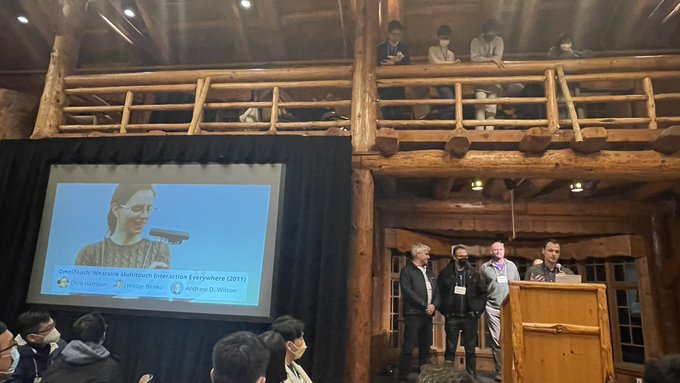

See you at the Opening Talk of

#UIST2023

session “Masterful Media: Audio and Video Authoring Tools”, 2:20 PM, Gold Room, Oct 30th, by

@jama1017

@StanfordHCI

@ACMUIST

0

2

12

Motions can be composed to create more diverse patterns to unleash your creativity. Try it out here

Personalization means a lot. We thus introduce the lightweight motion LoRA into

#AnimateDiff

. Fine-grained motions like the following camera control are enabled. Models are available now at GitHub. Just create more motions as you want.

11

91

493

0

0

12

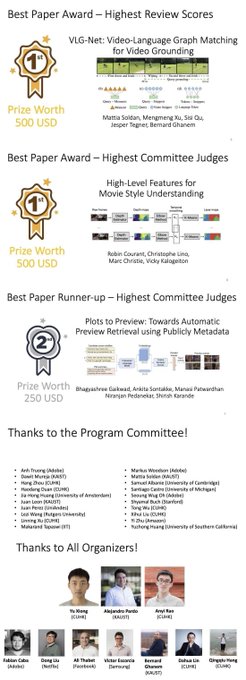

Congrats to the Best Paper Awards and the Runner-up!!! 🥳 Thanks to all the committee members!

Please click the image to see the whole

@cveu_workshop

@ICCV_2021

0

2

11

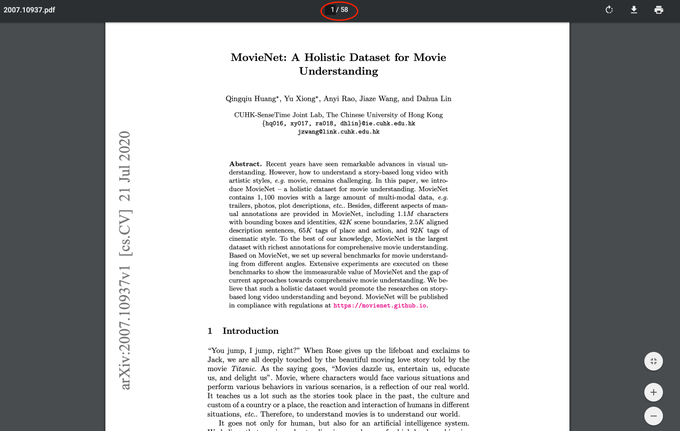

Excited to share our

#ECCV20

*spotlight* paper MovieNet with 58 pages!😆 So much effort and so many memories here. Thanks to the Movie Group!

@OpenMMLab

@QingqiuHuang

@sizexyu

@EvenEveno

@lindahua

🎉

Arxiv:

Web:

Code:

1

0

10

From car crash to lovers crush, we try to bring some new insights to the community and present better storytelling to us

@cveu_workshop

#ICCV2023

@ICCVConference

0

1

10

This is a very cool application of

#AnimateDiff

in multi-modality media

🚀 Dive into the future with my blend of 3D sound & AI in animation, a passion project at

@dogstudio

! 🎧🤖

Crafted with Cinema 4D, finessed with ComfyUI & AnimateDiff.

A huge shoutout to those who provided invaluable learning resources! 📖

@PurzBeats

,

@8bit_e

and

@c0nsumption_

13

32

238

0

0

9

See you in Denver!

We are back at

#SIGGRAPH2024

for Courses on Generative Models for Visual Content Editing and Creation

@siggraph

on Thursday, August 1st 2:00pm - 5:15pm MDT at Four Seasons 4, Denver Colorado Convention Center

1

1

7

1

2

8

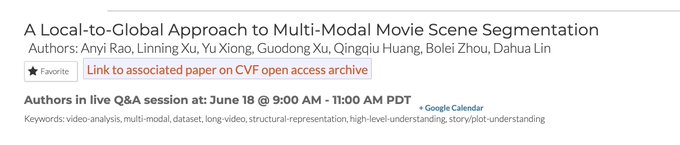

It is always frustrating to work with complex and long videos. We study a semantic consecutive unit "scene"

in our

#CVPR2020

work to facilitate the plot/story understanding of long videos.

Paper:

Webpage:

1

0

8

The submission deadline has been extended to August 6th! Come join the amazing workshop with excellent academic researchers, industry group leaders, artists, designers, and entrepreneurs

@ICCV_2021

@cveu_workshop

0

5

8

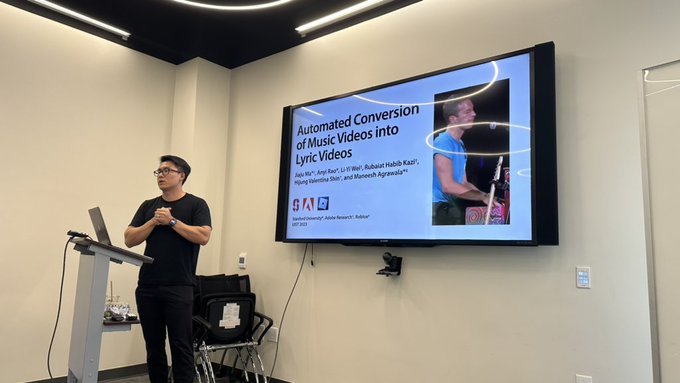

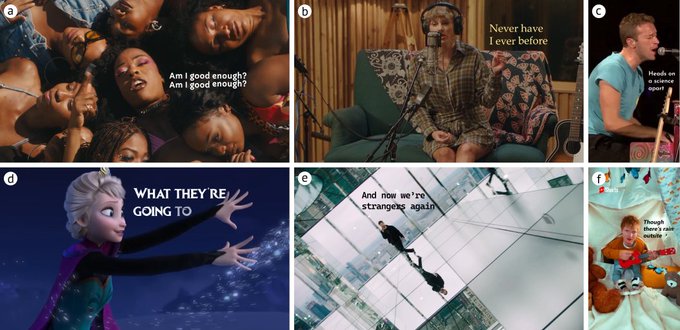

See you at

#UIST2023

for our intelligent creation tool from music video to lyric video. Check our demo and more results

0

2

7

Excited to see creative workflows from

#AnimateDiff

#ControlNet

0

0

7

Try here

Yuwei (

@GuoywGuo

) just released

#AnimateDiff

v3 and

#SparseCtrl

which allow animating ONE keyframe, generating a transition between TWO keyframes, and interpolating MULTIPLE sparse keyframes. RGB images and scribbles are supported for now.

Github:

14

57

318

1

1

7

Excited to attend

@BrownInstitute

all-hands retreat and learn about diverse interdisciplinary projects in person. Feeling grateful to receive the Magic Grant

0

0

6

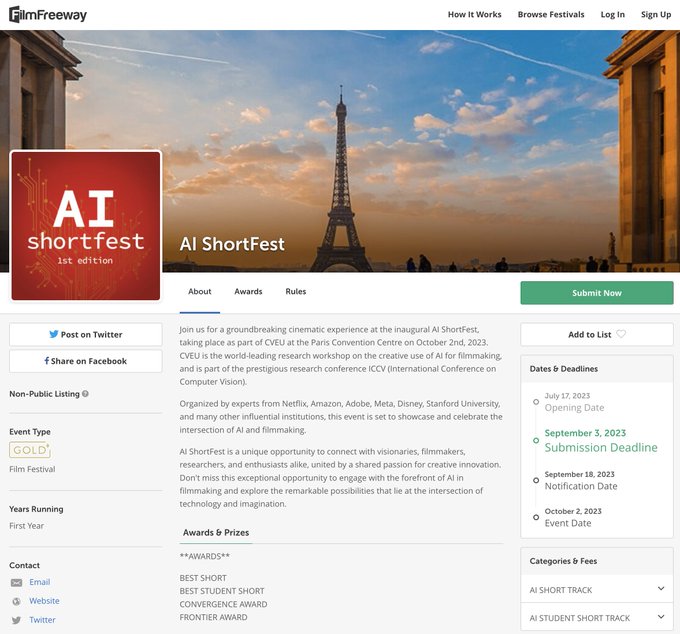

Check this out, contribute your creative work🔥 and present it in Paris😃

The CVEU Workshop is back in Paris at

@ICCVConference

. It will be the first time the CVEU workshop is in person. Please start preparing for the third installment of this creative workshop. We have a program full of surprises!

Visit our website:

0

2

12

0

0

6

This year at our workshop in

#ICCV2023

, we put a lot of effort into involving more artists to contribute their ideas and to create really good content. Hope this will inspire more people.

@ICCVConference

Our AI ShortFest has landed on , the largest film submission portal. Please join us!

@ICCVConference

#ICCV2023

Kudos to

@jeanpeic

1

8

18

0

0

5

Let us know the insightful speakers you want to see in Seattle🥳

0

0

6

Cool

#AnimateDiff

Motion Brush for fine-grained video creation control

Roll your own Motion Brush 🖌️

Here are 3 examples using simple masks, prompts, and the in-painting controlnet with

#AnimateDiff

to create (looping!) motion on still images.

#stablediffusion

#aivfx

#aivideo

#ComfyUI

27

173

1K

0

0

5

Always fascinated by artists' creativity.

Using AnimateDiff to create an origami world

Collab

@CitizenPlain

Music

@Artlist_io

I'll share our BTS tomorrow

58

352

2K

0

0

5

Come to watch, listen, and exchange ideas on how to make high-quality beautiful lyric videos 🎵

#UIST2023

1

1

4

It is such an honor to host

@pinar_demirdag

, thanks for contributing to the workshop!

"We are currently in the “visual magic” stage of creative A.I. for artists and amateurs." Check out the impressive blog by our keynote speaker

@pinar_demirdag

@Seyhan_Lee

Thanks for enlightening the workshop 💫✨🌟

#ECCV2022

#CVEU2022

0

3

5

1

0

4

Excited to see that we will really make this happen next week! The workshop started with our passion for bridging the gap between art and AI. It has accompanied us for four years, from the time when video generation did not work at all to today!

@CVPR

#CVPR2024

🎨✨ Unveil the future of creativity during

#CVPR2024

! Delve into "The Future of Generative Visual Art" and witness the fusion of art and AI. Get ready for groundbreaking insights and innovations!

🗓️ June 18th

📍 Summit 343

Be there to shape tomorrow's visual art landscape! 🌟

2

2

12

0

0

4

Incredible🤩

1

0

4

Looking forward to Prof

@akanazawa

's wonderful talk on "Infinite Nature"! Join us

@cveu_workshop

!

Professor

@akanazawa

from UC Berkeley will be another of our keynote speakers! Join us at

@cveu_workshop

at

@ICCV_2021

for her exciting talk!

Title: Infinite Nature: Perpetual View Generation of Natural Scenes from a Single Image

Time: 13:00 PM - 13:45 PM PST

#CVEUatICCV2021

1

1

8

0

0

4

[4/6] And if we add conditions on more frames, let's say the last four frames of the previous video clip,

#SparseCtrl

can generate a longer video. Code to be released soon here

#AnimateDiff

, please star ✨ it to get notified

1

0

4

[5/6] If we add conditions on two similar/dissimilar frames,

#SparseCtrl

can achieve a smooth transition

1

0

3

@magrawala

@wobbrockjo

It is an incredible experience for new UIST attendees like me. Shout-out to your amazing organizing teams.😆

0

0

3

Come to watch films at

@ICCVConference

CVEU workshop, Oct 2nd

0

0

3

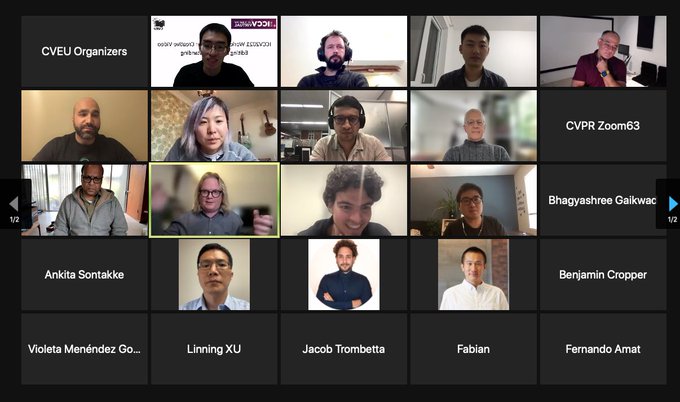

Very Nice and Novel Try😵

Breaking! Our very own

@zZeZenZeng

at

@USC_ICT

taking zoom presentations to the next level! He is defending his PhD virtually using our latest

#realtime

#volumetric

3D

#teleportation

tech based on a single RGB webcam, which will be presented at

@siggraph

and

@eccvconf

2020.

1

5

56

0

0

2

Looking forward to having an AR/VR version for virtual conferences as the tech develops😆

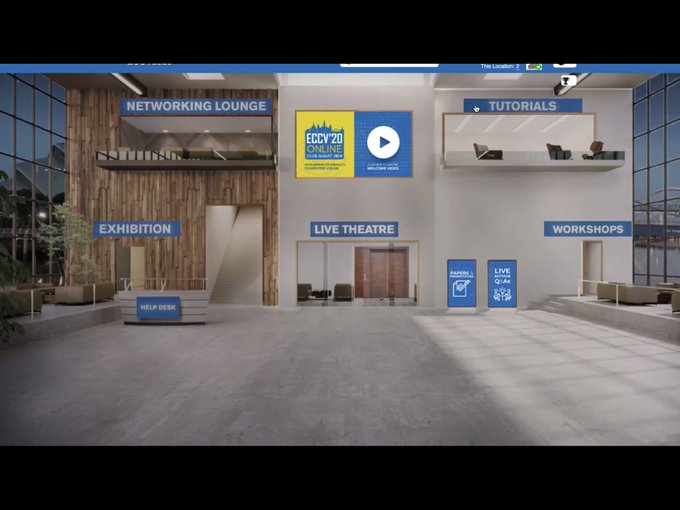

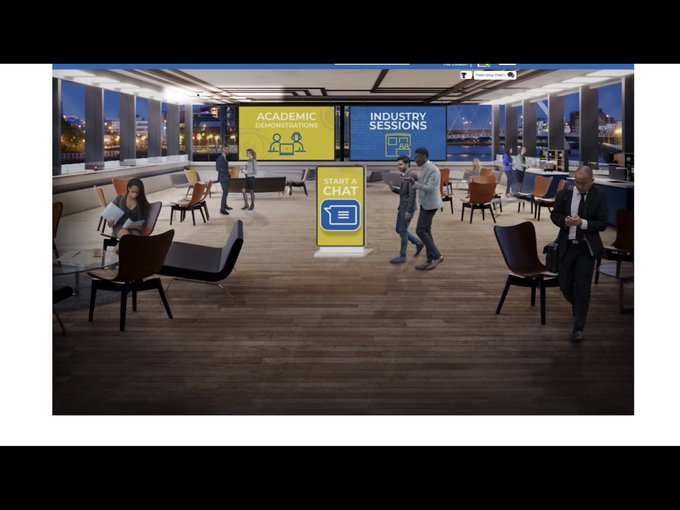

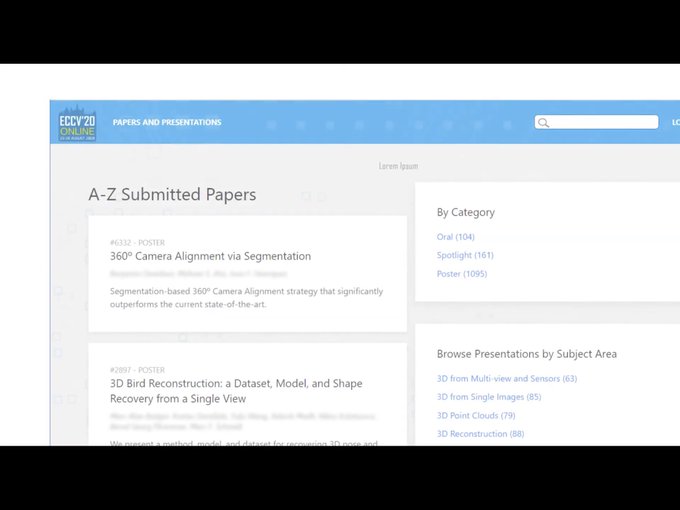

#ECCV2020

virtual platform preview . Looks like they are trying to emulate a real conference environment.

4

17

77

0

0

2

You are welcome to watch the YouTube live streaming through this link

Our keynote speakers!

Oct. 17th, 2021 at

#ICCV2021

:

Prof. James E. Cutting - 08:30 AM - 09:15 AM PST

Prof.

@MarcChristie4

- 09:15 AM - 10:00 AM PST

Prof.

@irrfaan

- 10:15 AM - 11:00 AM PST

Prof.

@akanazawa

- 13:00 PM - 13:45 PM PST

Prof.

@magrawala

- 13:45 PM - 14:30 PM PST

1

3

10

0

0

2

Prof

@magrawala

's papers inspired me to step into this exciting research field. Really looking forward to the exciting keynote on how to make and break videos!😃

CVEU workshop has another exciting Keynote Speaker!

Professor

@magrawala

from Stanford University

@Stanford

will be having an exciting talk!

Title: Making (and Breaking) Video

Time: 13:45 PM - 14:30 PM PST

Visit us at

@cveu_workshop

@ICCV_2021

1

2

10

0

0

2

Making it serve storytelling better leads us to make it more like the old tools.

0

0

2

How to inpaint The Arnolfini Portrait? It is not easy for a machine to inpaint it since the hole is too big. But still, human beings are able to create.

#ComputerVision

&

#Arts

@EvenEveno

0

0

2