Pascale Fung

@pascalefung

Followers

2,545

Following

47

Media

21

Statuses

119

Chair Professor of ECE, Director of the Centre for AI Research (CAiRE), Hong Kong University of Science & Technology. Fellow of AAAI, ACL, IEEE, ISCA.

Hong Kong

Joined August 2010

Don't wanna be here?

Send us removal request.

Explore trending content on Musk Viewer

ليفربول

• 655640 Tweets

#オールスター感謝祭24秋

• 105467 Tweets

#FBKINGDOMPROLOGUE

• 52277 Tweets

Palace

• 45491 Tweets

花火大会

• 45438 Tweets

ブルーロック

• 30725 Tweets

ケイくん

• 29966 Tweets

服部幸應さん

• 26385 Tweets

料理評論家

• 25546 Tweets

ヒロアカ

• 25006 Tweets

料理学校

• 24968 Tweets

フブちゃん

• 22572 Tweets

#CRYLIV

• 22454 Tweets

織田裕二

• 19936 Tweets

トガちゃん

• 18229 Tweets

Jota

• 16616 Tweets

#踊る大捜査線

• 16547 Tweets

Partey

• 12887 Tweets

Last Seen Profiles

We always knew that Chomsky was wrong about language models, it’s nice to have a paper showing you just how wrong he was!

#ACL2024

best papsr.

28

177

985

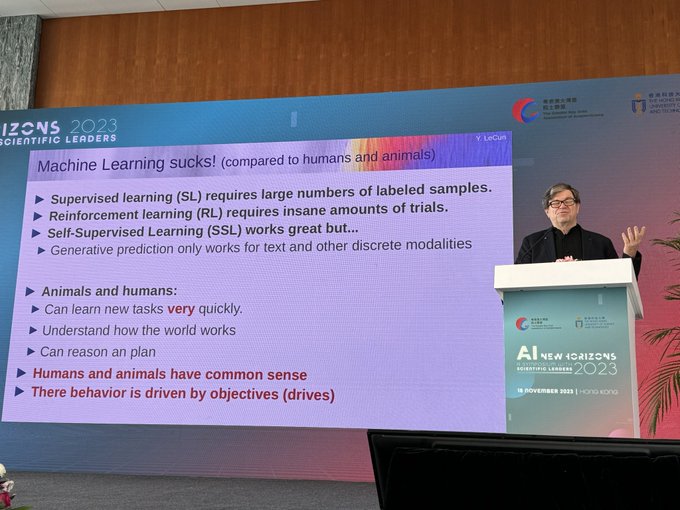

“Machine learning sucks!” Keynote by

@ylecun

at the AI Symposium: New Horizons 2023 at the Asia Society in Hong Kong.

19

68

420

#ACL2024

was great. But very few papers were asking hard scientific questions. A lot of “LLM engineering”. In particular, university labs should really defocus on this type of engineering as industry labs, though not publishing every minute detail, are doing way more advanced

4

36

273

I told President

@EmmanuelMacron

that I started my AI research career from a small project on automatic speech recognition at

#EcoleCentraleParis

when I was a student.

6

10

206

I have long hoped to bring top US and China AI researchers together for a scientific exchange. This finally happened with

@ylecun

, Harry Shum, Feng Junlan (chief AI scientist, China Mobile), Li Hang (Head of Research Bytedance) and Zhou Jingren (CTO of Alibaba Cloud)

8

24

149

Do humans need language for

#reasoning

and

#planning

? It really depends. We “reasons and plan” a lot of physical and artistic activities without thinking in language (eg dance, swimming, painting, playing an instrument…) but language helps us teach/learn how to do them.

17

8

104

The keynote speaker talked for about an hour but cut me off after 10 seconds of my question. If you can’t take a question don’t give a keynote!

#ACL2024

5

5

77

Excellent

#acl2024

keynote by Barbara Plank on how to work with the inherent (and beautiful) variation in languages that leads to uncertainty in LLMs and other AI models. Exactly what NLP needs to hear.

1

6

55

So this paper got a 1 from a reviewer from

#EMNLP

- never gotten a 1 before ever, and is rejected. So I am sharing its arxiv version for feedback. I did get a bunch when I shared it with people in person before. TIA.

7

11

110

It’s 2024, NLP people need to work on the phenomenon of language as manifested in LLMs and other AI models - so that we can make scientific progress - instead of *still* being on the bandwagon of shitting on AGI/LLM endlessly. It’s boring.

#acl2024

1

2

40

We got two awards at

#AACL

2023 - for the ChatGPT benchmarking paper and the Nusawrites Indonesian language resources paper. Congratulations to all co-authors.

3

2

30

Can LLM reason? Here are benchmarking results on ChatGPT from Feb 2023. Paper here

#aaai2024

panel

3

8

23

Video available

Ongoing now! "Ethical and technological challenges of Conversational AI" with

@pascalefung

Come check this talk out!

0

1

3

1

2

18

Got attacked by a man for not citing enough women authors in my keynote on hallucination at

#aaai2024

#Realaaai

showing that alignment tax is not just a problem for GenAI but humans as well. Photo by

@FrancescaRossi_

0

0

14

A number of us from

#hkust

are among the AI top 100 contributors of 2023 by an automatic metric by Bench Council. HKUST and Tsinghua are the top 2 from China. (This is a metric based on publications and citations, not social media or media influence.)

0

2

12

Very humbled to be on the same list as Nobel Laureate Tu You You and Kylie Minogue.

#forbeswomen

#ForbesOver50

#ForbesAsia

2

1

11

To eliminate any human intention, I generated this from an AI model by prompting with a random string of keyboard strokes. So this is pure

#AIart

it is breathtakingly beautiful, reminiscent of 60s psychedelic pop art and Vasarely but not from any artist in particular.

0

2

11

The problem with these images is due to “alignment tax” - when a model has been trained to align with multiple objectives it becomes hard to balance between them. For example here is an apparent conflict between “diversity” and “faithfulness”. It is a hard optimisation problem.

0

4

9

@ednewtonrex

AI does not create anything without human prompts. The creation of an artist using AI is not different in essence from that of digital art or photographic art which are also scalable. There is nothing “copied” by AI art as there is nothing “copied” by photographic or digital art.

1

0

9

Chinese labs and companies are open souring their LLMs, following the lead of their counterparts in the US.

#OpenScience

#AIgovernance

#AIcollaboration

2

0

8

@ednewtonrex

Regulations need to be designed by those humble enough to study the current technology. You cannot regulate what you don’t understand with any wisdom.

0

0

7

Yoshua Bengio’s talk and Q and A on the necessity of AI safety via governance, Bayesian estimation of uncertain and risks, and quantitative harm prevention at

#HKUST

0

0

6

Following the effort of building Indonesian NLP dataset and benchmarks

#Nusacrowd

#Nusawrites

#NusaX

, our team is calling for contributions to South East Asian language dataset

#Seacrowd

#LLM

for all

#AI

for all.

0

2

7

#responsibleAI

should be practiced by every research group big or small. We absolutely need to hold people accountable for the accessibility of their datasets and code and ensure reproducibility before we give out any paper award, if not every publication.

0

1

7

#OpenAI

should just change its name. It’s starting to sound like sarcasm - and humanity is the butt of the joke.

0

0

6

@burkov

Because it is not binary - pattern matching can lead to reasoning in some scenarios, just like humans do.

0

0

5

@ylecun

C obviously and depending on the audience size it is disseminated to. Just follow current copyright laws. Art students routinely copy the grand masters in their studies, nobody calls that copyright infringement.

1

0

3

@petergyang

This was the title Bell Labs gave to people. It was extremely prestigious to be part of Bell Labs. OpenAI is no way close yet.

0

0

4

@kaushik_himself

@ylecun

@sama

@gdb

OpenAI couldn’t have done it without the open-sourced Transformer and many models from Google Meta etc before them. It’s like pulling up the ladder after you have climbed up.

3

0

4

Google, as well as Meta and OpenAI, among others, have large teams of people dedicated to ethics and responsible AI. There is currently no tractable solution to avoid all errors (can any human claim to be ethically perfect?) but the research community will keep trying.

0

0

4

Has anyone noticed that

#Sora

has problems with

#perspectives

in some of the videos it generated? For example in this one. Also it seems to be trained from video game data???

2

0

3

Do humans need language for

#reasoning

and

#planning

? It really depends. We “reasons and plan” a lot of physical and artistic activities without thinking in language (eg dance, swimming, painting, playing an instrument…) but language helps us learn to do them faster.

0

0

3

@ylecun

And anyone “infringing copyrights” in private going through a platform cannot be sued although the platform can detect and prevent dissemnination.

0

0

0

@deliprao

NN does not learn rules. Before NN, there were statistical

AI that learned probabilistic rules automatically. •Rule based systems” are those that are based on manual rules by people. People are very good at pattern recognition but absolutely terrible at coming up with rules!

1

0

3

There are many greater achievements than our papers in 2023. Google and Meta are the top 2. (OpenAI doesn’t publish much.) US is number 1 with China at number 2.

A number of us from

#hkust

are among the AI top 100 contributors of 2023 by an automatic metric by Bench Council. HKUST and Tsinghua are the top 2 from China. (This is a metric based on publications and citations, not social media or media influence.)

0

2

12

0

0

2

@ylecun

@cwizprod1

@harryshum

This is because the apocalypse and doomsday scenario is very much a Christien religious thing influencing western thinking,

0

0

1

What is the meaning of “a world class prompt engineer”? Who certifies one as “world class” and what qualifies one as a “prompt engineer”? (I am not dismissing the importance of

#prompts

. )

2

1

2

The Jedi Council on Reasoning - a panel with

@YejinChoinka

Ernest Davis

@rao2z

moderated by

@murraycampbell

.

1

0

4

@_TechyBen

@ylecun

I posted that as sarcasm in response to those who say AI is taking away our humanity.

1

0

2

@Liv_Boeree

That’s a weird classification. Did you come up with that yourself? Most people working on AI safety are motivated by AI ethics. Without ethics there is no principled way of implementing AI safety.

1

0

2

@ednewtonrex

GenAI tools trained on content cannot generate anything without a human creator prompting them to do so. So AI art is the fruit of human creation as much as digital art or photography art and for that matter, human artists who learned their craft from prior work.

0

0

1

@neilturkewitz

@ednewtonrex

The issue is that the people sitting at the top of the bureaucracy are probably not the people who have the wisdom and knowledge to provide such oversight for a technology that will have this much impact.

1

1

2

@gerardsans

If he didn’t cut me off I would have finished my question in 1 minute. I would have asked him to elaborate on his thesis on why LLM cannot plan well. Instead he went on a tangent about paper reviews etc like a defensive student. His talk was disrespectful of a scientific audience

0

0

1

@ziqi_huang_

This is a video quality evaluation. What’s important is semantic evaluation. A beautiful video portraying things counter to physics is no good.

0

0

1

@gjzhang1

There are many scientific questions to ask. If you want to work on LLM then ask why do LLMs work or does not work in X or Y? How can we benchmark and improve different aspects of machine intelligence - reasoning, planning, world modeling etc? In NLP how is language being

2

1

1

@lateinteraction

RAG alone cannot solve the hallucination problem and is not even a guarantee to improve the outcome. Multiple retrieved facts together can still be generated in an incoherent way. Just try Bing. 2/2

0

0

1

@ZeerakW

I think facial features alone are not adequate, just like audio features alone are not.

0

0

1

@Ginnung93

@neilturkewitz

@ednewtonrex

The “many many years” is also the problem. The technology is moving monthly.

0

0

0

@ZeerakW

To do a good job the system needs to work with psychometricians to come up with non-bias-inducing yet informative interview questions.

0

1

1

@adawan919

I strongly that we need better benchmarking and better understanding of how they work. And how to do that would have been a scientific discussion.

1

0

1

@ZeerakW

There is always a danger that if the system is trained on existing database of current high performers it will learn the bias as well.

0

0

1

@ChujieZheng

I understand that but how about for every paper submission optimised for acceptance also submit 1 or more that is more scientific?

0

0

1

@cocoweixu

@mmitchell_ai

@mlatgt

@ICatGT

@gtcomputing

@alan_ritter

@tareknaous

@michaelryan207

Nice work. But “western values” are not monolithic either. Witness the latest move by the French to incorporate abortion rights into their constitution. Happy International Women’s Day!

0

0

1