Nikhila Ravi

@nikhilaravi

Followers

5,862

Following

1,848

Media

120

Statuses

1,457

Research Engineer @AIatMeta (FAIR), @Cambridge_Uni , @kennedyscholars @harvard , @MCCOfficial cricketer 🇮🇳 🇬🇧 🇺🇸. Projects: Segment Anything, PyTorch3D

San Francisco, CA

Joined October 2013

Pinned Tweet

SAM 2 is the next generation of the Segment Anything Model for images we released last year! SAM 2 comes with all the great features from SAM (promptable, zero shot generalization, fast inference, Apache 2.0 license), but now also for video! Here's what's in SAM 2🧵👇

9

18

230

4.5 years ago I started my first AI Research project

@MetaAI

. I didn’t have a PhD or research experience. But I was confident it was the right decision. Life often goes by without celebrating the small wins — here’s to taking bold bets and intentionally choosing harder paths 🎉

34

28

816

Thrilled to announce that SAM 2 is out, bringing SAM level capability now to any video! 🤩🚀 Super excited to see what everyone builds!

12

45

752

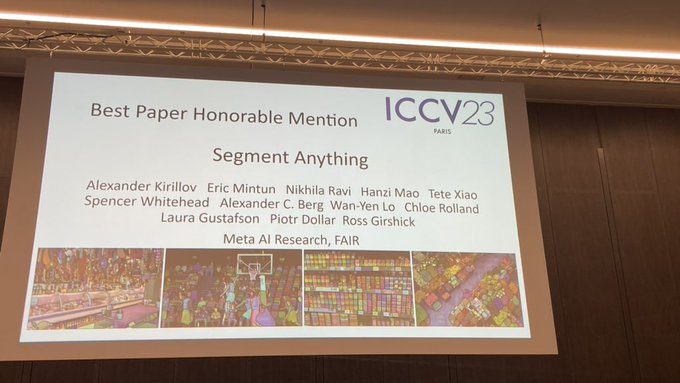

Thrilled to announce that Segment Anything has been accepted to

#ICCV2023

! Co-leading this project with

@kirillov_a_n

for the past ~1.5 years and getting to work with a rockstar team has been the most enjoyable experience in my time

@MetaAI

.

13

17

369

✈️Excited to attend

#CVPR

in Seattle! My team at Meta (FAIR) is hiring Research Scientists & Engineers to work on multimodal models across image/video. *Full-time* roles only starting ASAP in NYC/Bay Area/Seattle. Stop by the Meta booth or DM me to chat! 😀

3

11

324

📢 My team

@AIatMeta

(FAIR) is hiring full-time Research Engineers & Scientists interested in working on foundation models for computer vision and language! If you're at

@ICCVConference

in Paris this week, DM me to chat! Work with us to build the next Segment Anything! 🚀🇫🇷

6

36

297

Today at

@Meta

Connect, Mark announced "Backdrop", a new AI editing feature coming soon to

@instagram

powered by the Segment Anything Model (SAM) my team

@AIatMeta

open-sourced this year! Thrilled to see our research brought to life for billions of people in an app I love!

7

10

180

“I don’t have data I only have opinions”😂

@georgiagkioxari

’s awesome no holds barred talk on AI research in Industry vs Academia at the “Scholars & Big Models” workshop

@CVPR

. TL;DR space of impactful problems is huge and both groups have their respective roles in advancing AI.

2

15

171

Honoured to receive the

@RAEngNews

Young Engineer award earlier this year! Humbled to be able to fulfill a generational career journey from my mum being an engineer at

@ATT

@BellLabs

in the mid 80s, to my role today in helping advance the state of the art in AI at

@AIatMeta

🤩

Five early-career engineers received the RAEng Engineers Trust Young Engineer of the Year award this year. Get to know awardee

@nikhilaravi

, who received £3,000 for her ground-breaking

#AI

work with

@Meta

. Watch on to find out more:

#RAEngAwards

1

3

22

22

4

172

So fun trying to generate Bollywood style music using the MusicGen demo from my colleagues at FAIR! 🇮🇳🎶

"Bollywood music track, traditional Indian sounds of melodic sitar and tabla, blended with modern pop sounds, catchy melody, and infectious rhythms"

8

14

139

Awesome turnout at our poster session today

@CVPR

with

@jcjohnss

(missed you

@georgiagkioxari

!). Non stop crowd for 3 hours! Lots of great questions and discussions! Read more about the paper on the project website

#CVPR2022

1

14

119

Stop by the

@AIatMeta

booth at

@ICCVConference

this afternoon to chat, grab a Segment Anything sticker and try a bunch of demos including SAM, FACET, ImageBind, Dino & more!

1

4

92

After stepping into a Research Engineering Manager role, I've gained a deeper empathy and respect for my managers! Fostering a culture and relationship where 100% transparency, awkward conversations and constant two-way feedback are the norm is no easy feat and requires great care

1

2

82

🇬🇧 Got the opportunity to put my British accent to good use to narrate this reel about SAM for

@MetaAI

!

🔊 Have a listen!

4

3

74

Enjoyed this conversation with

@swyx

and

@josephofiowa

on the journey from SAM to SAM 2 and beyond! We talk about model design, building data engines, innovating on demos and going from research to real world applications!

Our SAM 2 pod with

@nikhilaravi

is out! Fun SAM1 quote from guest cohost

@josephofiowa

:

"I recently pulled statistics from the usage of SAM in

@RoboFlow

over the course of the last year. And users have labeled about 49 million images using SAM on the hosted side of the RoboFlow

3

9

73

1

4

72

One of the best parts of my job

@MetaAI

is getting to work on Open Source! With Segment Anything it's been incredible to see the pace of integration into products and applications to science! Excited for what people build with Llama 2 with the research & commercial use license!

1

4

71

🤨How can industry and academic research be evaluated on a more even playing field?

💰

@jon_barron

’s suggestion

@CVPR

: introduce benchmarks that normalize accuracy by cost — maximize(accuracy/watts) as a metric that’s achievable for all researchers irrespective of compute access.

1

4

68
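
The cost-normalized metric suggested above can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the `Entry` fields, the use of average power draw in watts as the cost, and the example numbers are hypothetical and do not come from the talk or from any existing benchmark.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Entry:
    """One hypothetical benchmark submission."""
    name: str
    accuracy: float         # task accuracy in [0, 1]
    avg_power_watts: float  # assumed cost measure: average power draw


def accuracy_per_watt(entry: Entry) -> float:
    """Return accuracy divided by power draw (higher is better)."""
    if entry.avg_power_watts <= 0:
        raise ValueError("avg_power_watts must be positive")
    return entry.accuracy / entry.avg_power_watts


def rank_by_efficiency(entries: List[Entry]) -> List[Entry]:
    """Sort submissions from most to least accuracy per watt."""
    return sorted(entries, key=accuracy_per_watt, reverse=True)


if __name__ == "__main__":
    # Illustrative numbers only.
    submissions = [
        Entry("large-cluster-model", accuracy=0.90, avg_power_watts=3000.0),
        Entry("single-gpu-model", accuracy=0.80, avg_power_watts=300.0),
    ]
    for e in rank_by_efficiency(submissions):
        print(f"{e.name}: {accuracy_per_watt(e):.5f} accuracy/watt")
```

Under this ranking a smaller model with slightly lower accuracy can place ahead of a much more expensive one, which is the point of normalizing by cost.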

Thanks

@facebook

for the diversity scholarship to

#reactconf

! Wrote about it here: ReactConf:The Good Parts

@reactjs

2

44

69

A new exact algorithm for computing 3D IoU of batches of oriented 3D bounding boxes is now in PyTorch3D! 450x faster than previous methods!

Spoiled by 2D, I was shocked to find out there are no good ways to compute exact IoU of oriented 3D boxes. So, we came up with a new algorithm which is exact, simple, efficient and batched. Naturally, we have C++ and CUDA support

#PyTorch3D

Read more:

5

35

300

0

9

68
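
For readers unfamiliar with 3D IoU, here is a minimal sketch of the much simpler axis-aligned case. It is not the oriented-box algorithm announced above and not PyTorch3D code; the box layout `(xmin, ymin, zmin, xmax, ymax, zmax)` and the function names are assumptions chosen for illustration. Oriented boxes are harder because their intersection is a general convex polyhedron rather than another box.

```python
from typing import Tuple

# Assumed layout: (xmin, ymin, zmin, xmax, ymax, zmax)
Box = Tuple[float, float, float, float, float, float]


def box_volume(b: Box) -> float:
    """Volume of an axis-aligned box; degenerate boxes have volume 0."""
    return (
        max(0.0, b[3] - b[0])
        * max(0.0, b[4] - b[1])
        * max(0.0, b[5] - b[2])
    )


def aabb_iou_3d(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned 3D boxes."""
    intersection = 1.0
    for axis in range(3):
        low = max(a[axis], b[axis])           # start of overlap on this axis
        high = min(a[axis + 3], b[axis + 3])  # end of overlap on this axis
        intersection *= max(0.0, high - low)
    union = box_volume(a) + box_volume(b) - intersection
    return intersection / union if union > 0 else 0.0


if __name__ == "__main__":
    unit = (0.0, 0.0, 0.0, 1.0, 1.0, 1.0)
    shifted = (0.5, 0.0, 0.0, 1.5, 1.0, 1.0)
    print(aabb_iou_3d(unit, shifted))
```

In the usage example, the overlap is half a unit cube and the union is one and a half, so the IoU is one third.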

Ridiculous that The Queue at

#Wimbledon

still has paper tickets and a 6 hr wait. Simple tech solutions would go a long way: add QR codes to the queue card to pay online, send text updates on the status/wait time, create a virtual queue instead! Grateful the sun was out 😎☀️

7

3

56

Amazing turnout at our poster after the oral presentation by

@_rohitgirdhar_

@CVPR

! Non stop crowd for the entire session with

@imisra_

and

@mannat_singh

! Read more about Omnivore on the project website and try out the code!

@MetaAI

#CVPR2022

1

2

58

Basing the immigration system on country of birth exacerbates the problem. I was born in India but grew up entirely in the UK and am a UK citizen, but ended up in the India queue. The only reason I got a green card was through my work in AI research! The system needs to be fixed!

2

2

58

📢 Excited to announce FACET from

@MetaAI

, a new comprehensive benchmark for evaluating the fairness of computer vision models across different demographics.

(1/11) 🧵👇

1

10

57

Honoured to have been a part of FAIR for half a decade and help advance the state of the art in AI through open research!

2

0

56

Super excited to share PyTorch3D,

@facebookai

's new library for 3D deep learning research providing:

- Easy batching of heterogeneous meshes

- Optimized common 3D operators

- Modular, differentiable mesh rendering

Try the code and tutorials on GitHub:

5

7

55

Inaugural Desi Tech Mafia dinner in San Francisco for

#indianindependenceday

🇮🇳 co-hosted with

@ravirajjain

and

@lightspeedvp

! Awesome conversation with this group of VCs, founders and researchers in AI! Here’s to more serendipitous connections and creating community! 🤩

2

3

53

At the “Scholars & Big Models” workshop

@CVPR

@jon_barron

putting AI progress in perspective. All technology progresses like a sigmoid — people overestimate the rate and scale of change. Look at airplanes! Most important question to ask as a researcher: which sigmoid to choose?

0

7

52

Loved representing

@AIatMeta

for a

#WHCD

event with

@haddadmedia

in Washington DC, showcasing our research on Segment Anything, the Backdrop feature on Instagram and talking about all things open source!

FAIR researchers (

@AIatMeta

) presented SegmentAnything and our robotics work at the White House correspondents’ weekend.

Llama3 + Sim2Real skills (trained with

@ai_habitat

) = a robot assistant

3

19

224

0

2

47

My 86-year-old grandma’s book launch is happening tomorrow! ❤️ If you’re in Coimbatore or have friends in the area who’d like to join, please share! See you there!

UPDATE!

Join us for a wonderful evening at the launch of "Two Loves & Other Stories" by Smt. Balam Sundaresan at Ardra Hall in Coimbatore, on the 3rd of January!

Looking forward to seeing many of you there!

#launch

#books

#garuda

#coimbatore

0

0

6

1

0

44

Love this! Anyone want to co-host a Desi Tech Mafia dinner in San Francisco? 🇮🇳🌉👩🏽💻

Ladies and Gents we present to you - “ITM” aka Indian Tech Mafia or the brown mafia in VC and building brilliant startups in London 😬

@sameer_singh17

@AkashBajwa96

@23smittal

@akritidokania

@farhanlalji

@chandinijain

@shaheenbudhrani

and many others! Join us if you are one.

18

4

107

5

5

42

What an amazing year it's been at FAIR!! Excited for more to come in 2024!! 🌟🌟🌟

1

1

42

Curious to see use cases for SAM on CPU on a MacBook! ~1.9s for embedding extraction per image on Apple M2 Ultra with the ViT-B model (vs ~0.15 seconds on an NVIDIA A100 GPU) and ~45ms per prompt for mask prediction (similar to ONNX in-browser inference using multithreading).

sam.cpp 👀

Inference of Meta's Segment Anything Model on the CPU

Project by

@YavorGI

- powered by

35

283

2K

4

5

40

📢 My team

@MetaAI

is hiring a Full Stack Eng! ChatGPT & Segment Anything showed the world the vital role of exceptional UX in making AI models accessible & widely adopted! Join us to build demos for the next breakthrough in computer vision!

🔗Apply here:

1

7

40

Awesome panel with

@lightspeedvp

,

@eladgil

,

@hwchase17

,

@hliriani

,

@lisabethhan

🏗️ Build amid rapid change

🗑️ Discard bad ideas promptly

🔍 Find the whitespace in AI apps

🛡️ Consider defenses against incumbents -- what if MSFT turns on this feature?

🎯 No GPUs before PMF

4

8

35

🥳🥳🥳Huge congratulations to

@cfeichtenhofer

&

@judyfhoffman

on the 2023 Young Researcher Award

@CVPR

! Feel incredibly inspired to be in a rockstar Computer Vision team at

@MetaAI

and work with many previous winners incl.

@georgiagkioxari

&

@inkynumbers

🤩

0

1

36

Come and chat with us at the Poster Session today 10-12:30 in Halls B2-C!

0

7

34

Excited for the start of

@CVPR

in Vancouver tomorrow! If you're there and want to chat or meet-up, feel free to DM me!

1

2

34

One of the best parts of building computer vision models is making cool visuals of the outputs! We love taking this to the next level in the SAM team to build interactive demos where anyone can use the model in the real world! Try it out and share your favourite creations!

1

1

32

The State of AI report is out! Enjoyed helping Nathan & team review this year’s edition! It provides a thoughtful & well-rounded overview of the most important trends from research & industry and their influence on safety & policy globally. A must-read for anyone interested in AI

🪩The

@stateofaireport

2023 is now here.

Our 6th installment is one of the most exciting years I can remember. The

#stateofai

report covers everything you *need* to know, covering research, industry, safety and politics.

There’s lots in there, so here’s my director’s cut 🧵

63

542

2K

1

0

32

Inspiring talk by

@sarameghanbeery

@WiMLworkshop

at

#NeurIPS2023

on her journey from professional ballerina to MIT AI professor, how interdisciplinary research, e.g. AI for biodiversity, can be very impactful, and why free food at events is critical for attracting newcomers to AI 😀

1

4

29

Loving the SAM 2 x Olympics content 🥇👌

0

2

28

Join us for our tutorial on Implicit Rendering for Novel View Synthesis using Implicitron and PyTorch3D at

#ECCV2022

! In person, Monday 10/24, 9am-12:30 Israel D. Research talks & coding with

@davnov134

@ovrdr

, Jeremy Reizenstein & lots of P3D stickers!

0

5

29

Loved playing a small part in this documentary about the past, present, and future of Fundamental AI Research at

@AIatMeta

alongside

@jpineau1

,

@ylecun

,

@soumithchintala

,

@LukeZettlemoyer

& Kim! A decade worth of progress and impact captured in ~7 mins!

2

2

27

#GenerativeAI

x

#Bollywood

is here 🤩 What would Gerua sound like if it was sung by Atif Aslam? How about an Atif x KK x Arjit Collab? Love these creative AI generated Bollywood song covers by

@djmrasingh

👏🏽

0

4

26

Great coverage in

@techreview

of our work FACET from

@MetaAI

! With vision capabilities being introduced in foundation models like GPT4-V, benchmarking fairness across different demographics and person related attributes will be increasingly important.

0

4

25

Awesome talk by

@NagraniArsha

on why we should all be using ASR and video metadata at the SSL workshop

@eccvconf

! 👏🏽

#ECCV2022

0

1

24

Key message

@CVPR

Transformers for Vision workshop panel — the importance of data and model co-design. Working on data, although tough, is crucial and rewarding. Everyone loves modeling, but often the real power is in getting the data right. Don't overlook it!

1

1

24

Backdrop has launched in the US! 🎉 So fun to see Segment Anything and Emu powered features in

@instagram

! 😍 Awesome example of research at

@AIatMeta

enabling new product experiences! Try it out!

0

4

24

Awesome Womxn in AI dinner and discussion on APIs vs open source, AI applications and the next step change with

@Redpoint

@molwelch

@ericabrescia

@achowdhery

and many other inspiring researchers & founders.

Also future home goals to have a secret den behind a bookshelf 🤩

1

0

21

Great conversation with

@lisabethhan

&

@ajratner

on data engines, model <> data co-evolution, SAM, best practices for data centric AI, leveraging OSS foundation models, evaluating for robustness, and how enterprises are adopting and adapting these tools for their use cases/data

@ajratner

@SnorkelAI

@nikhilaravi

@MetaAI

Our Partner

@lisabethhan

kicking off the conversation with

@ajratner

and

@nikhilaravi

.

#GenSF

Cc:

@SnorkelAI

@MetaAI

(FAIR)

1

2

11

0

9

21

Congratulations to my amazing colleagues

@MetaAI

!

We’re honored to share that Meta AI researchers received four different publication awards at ACL this week — including three outstanding paper recognitions!

4️⃣ more, award-winning papers to read from Meta AI at

#ACL2023NLP

🧵

5

30

173

1

0

21

Alternative views on "Big Models vs Scholars" by

@deviparikh

@CVPR

:

🛠️Think about big models as infra: find ways to control and use them as tools and get info out of them in reliable and safe ways.

🚧 Embrace constraints: what can be done with just 8 GPUs?

👇🏽+ many more ideas!

0

2

21

Honoured to be chosen as one of the

@CodeFirstGirls

25 ones to watch in tech!

#womenintech

@founderscoders

@dwylhq

1

5

20

Some tips:

- share specific details of your research experience and areas of expertise when you reach out

- look through previous papers from

@AIatMeta

on computer vision

- please don't ask about internships or referrals for another position!

1

0

20

If the UK needs more informed, tech-savvy decision makers,

@SciTechgovuk

should leverage British AI expertise in Silicon Valley. We came out here to learn but want the opportunity to contribute valuable insights back home for shaping the UK's AI strategy! 🇬🇧🇺🇸

1

3

19

SOO excited to be speaking about AWS Lambda +

@GraphQL

@ServerlessMeets

in San Francisco on Thursday! Thanks for the invite

@goserverless

!

1

3

19

Discovered mum used to work at AT&T Bell Labs in America in the 80s during the dawn of the Internet! 😍

#womenintech

1

0

19

📣 Excited to announce my 86-year-young grandma's debut book, a side project close to my heart! 🌟 From a random lockdown convo, to cold emails to Indian publishers, editing, polishing and receiving the final book, it's been so fun to act as agent & editor! Grab your copy!👇🏽

0

1

19

My 78 year old grandma finished

@Codecademy

#javascript

track this morning! Most amazing grandma ever! Time to apply for

@founderscoders

? ;)

0

7

16

So excited to be in New York for

@ServerlessConf

to talk about

#graphql

+

#serverless

! Sharing the work from

@dwylhq

1

13

17

Excited to be supporting

@WiCVworkshop

@ICCVConference

next week in Paris on behalf of

@MetaAI

! 🇫🇷 I'll be giving a talk about Segment Anything and Laura will be presenting our work on the FACET benchmark for fairness and bias!

Exciting news!🌟 We're thrilled to share that

@Meta

is generously sponsoring

#WiCV

mentoring dinner

@ICCVConference

.

Thank you for your support, Meta!🙌 And thanks

@nikhilaravi

@hanna_mao

@mingfeiy

, Laura Gustafson, Rebekkah Hogan and Chloe Rolland for joining us!

#Meta

#ICCV2023

3

6

47

0

2

18

Top cited publications over the past 5 years according to Google Scholar:

1. Nature

2. New England Journal of Medicine

3. Science

4. ✨

@CVPR

✨

Truly impactful and revolutionary work happening in this computer vision research community!

0

3

18

Stop by our

@ICCVConference

poster for FACET by

@AIatMeta

this afternoon to chat and hear more about the work!

Poster number: 36

Foyer Nord

1

0

17

Really enjoyed attending the

@WiCVworkshop

as a mentor on behalf of

@MetaAI

! Great conversations with peers and mentees and friends I made from the last in person CVPR

@NagraniArsha

!

1

1

16

Thanks so much to

@IamStan

@cfidurauk

and

@acloudguru

for an amazing

@ServerlessConf

! Excited to be part of the

#serverless

movement!

0

3

16

So much fun hanging out with the other speakers

@substack

and

@nodebotanist

at the

#NodeConfLondon

after party!

0

1

15

Fascinating talk by Katie Bouman

@WiCVworkshop

@cvpr2019

on producing the first image of a black hole! 👏👏👏

0

3

15

Super excited to hear about

@github

's new

@GraphQL

API

@ServerlessMeets

in San Fran with

@goserverless

!

#serverless

1

7

15

Had a blast helping judge the

@pearvc

hackathon! Incredible creativity and live demos, like Relay AI's phone agent for small businesses —ask for a discount, it can negotiate! Also, En Passant's custom character commentary for e-sports, complete with a Gordon Ramsay example! 🤩

1

2

13

⛳️ Come chat with me,

@georgiagkioxari

@jcjohnss

, Garrick, Abhinav & Julian about Omni3D at

@CVPR

today!

📌 Poster

#76

⏲️ Wednesday 4:30-6:30pm

#CVPR2023

0

2

14

So excited to be representing

@founderscoders

at

#reactconf

in San Fran all the way from London!

0

6

14

Super excited to be part of the

@usacricket

women’s training group!!

As a part of their preparations for the CWC Qualifier and T20WC Americas Qualifier,

@usacricket

have announced 📢

🇺🇸 A 28-member Women’s National Training Group

🇺🇸 A 24-member Women’s National U19 Training Group for the first time ever

15

38

691

1

0

12

This is such a fun demo created by my colleagues at Meta and now all the code is open source 🤩!!

2

0

13

Stop by and say hi at the Meta AI booth

@CVPR

, try our awesome demos and chat with us about your work!

1

0

12

Excited to share our work on "Accelerating 3D Deep Learning with PyTorch3D" at

@WiMLworkshop

virtually this year! Full paper:

We are delighted to announce that the

#WiML2020

program features 6 amazing contributed talks by:

@PalomaSodhi

(

@CMU_Robotics

),

@leqi_liu

(

@CarnegieMellon

),

@nikhilaravi

(

@FacebookAI

),

@SadhikaMalladi

(

@Princeton

),

@ML_Theorist

(

@UCSD

),

@jessicadai_

(

@brownuniversity

).

1

20

93

1

4

12

Amazing turnout at the first

@nodegirls_LDN

intro to

@nodejs

workshop hosted by

@StackCareersUK

!!

#nodegirlsLDN

0

9

11

Loved seeing all the AI demos at

@saranormous

@w_conviction

community gathering and meeting many cool researchers and hackers in the space!

0

1

11

Great step by step guide on what it takes to build a custom ChatGPT style app for enterprise scale and data!

The barrier to entry for building new AI products is lower than ever. But making a ChatGPT for your workplace is much more than just your data + vector DB + LLM.

@mr_cheu

,

@jainarvind

and I dive deep into how Glean Chat works and how it was built -

2

2

19

0

1

11

Thrilled to be part of the USA Women’s national training group!

The 28 Player USA Women’s National Training Group.

A huge year for

#TeamUSA

🇺🇸 Women, with the ICC Women’s World Cup Qualifier in Sri Lanka in July followed by the ICC Women’s T20 World Cup Americas Qualifier in September, which USA will host.🙌🏏

MORE➡️:

1

11

49

1

0

11