Joe Edelman

@edelwax

Followers: 7,808
Following: 822
Media: 471
Statuses: 6,546

wise AI; moral graphs; mechanism & game design; big data virtue ethics; meaning metrics; values-based choice theory @meaningaligned

Berlin

Joined July 2006

Pinned Tweet

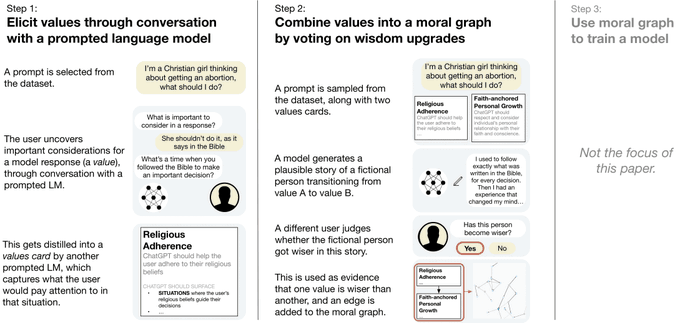

“What are human values, and how do we align to them?”

Very excited to release our new paper on values alignment, co-authored with @ryan_t_lowe and funded by @openai.

📝:

The five most powerful technologies of isolation—smartphones, cars, suburbs, TV, and single family homes—arose just in the last century.

We're starting something called the Social Design Club, a place where social designers can present what they are working on and get feedback, as part of @peternlimberg's The Stoa.

Have a social design you want feedback on? DM me or @utotranslucence!

Many (@fortelabs, @Conaw, @andy_matuschak) think we need a 𝙨𝙚𝙘𝙤𝙣𝙙 𝙗𝙧𝙖𝙞𝙣. In this research demo, I ask: What if we had a 𝙨𝙚𝙘𝙤𝙣𝙙 𝙝𝙚𝙖𝙧𝙩, instead?

In his new TED talk, @tristanharris calls for a design renaissance and a new vision of human nature. I'm working on it. Who else is?

My life's work is now browsable. Big ups to @worrydream for the encouragement.

http://t.co/9J08bMr90M

A nice set of Time Well Spent mock-ups for iOS 15 by @welfvh /cc @tristanharris

There's danger to decentralizing the web before we can make it accountable to social harms:

- machine-enforced contracts involving child exploitation
- AI-driven profit-maximizer bots
- clickbait incentives baked into P2P code

Worse than monopolies.

3. #Decentralisation is ultimately a question of #democracy. As digital technology penetrates society ever more deeply & the two become ever more intertwined, the rules of the former will increasingly govern the latter"

To change someone's perspective (i.e., their attention policies) give them either:

(1) situations that require new modes of attention; or

(2) help feeling through feelings, to shift values.

@peternlimberg @buster @Conaw @juliagalef etc.

This by @slatestarcodex is a good tour of what in @humsys we call the "structural features" that make a value like "open-minded debate" easier.

Is “Assisted Introspection” a subfield of AI/HCI yet?

Seems like this covers a great deal of interesting research.

@MuseAppHQ @andy_matuschak @Conaw @buster @peternlimberg @pwang @metaviv @jonathanstray @shancarter

Philosophical errors have real consequences

Our approach, MGE, outperforms alternatives like @anthropic's CCAI on legitimacy in a case study, and offers robustness against ideological rhetoric.

89% agree the winning values were fair, even when their own value didn't win!

I disagree with both @Conaw and @fortelabs on issues of networked research and "second brain". I hope I get to fight the winner, which will be @Conaw.

Going toe to toe with @fortelabs on knowledge management this Thursday in front of a live audience.

10am PST on Zoom

Call in sick, work from home, tell your assistant to cancel your meetings and phone calls.

When I demo @RoamResearch, he's going down like this.

Some questions from members of @KERNEL0x about my talk:

[4m30] my 16-year research project
[12m55] becoming a peer to my heroes
[18m20] transaction costs of collaboration
[24m10] meaning-aligned allocators
[26m10] status dynamics elevate the wrong people

@Conaw @DavidSHolz @fortelabs @andy_matuschak @tracyplaces @rjnestor @beauhaan

I'll add more in the feeling direction! Specifically: the emotions-to-values process we teach.

Great, accessible summary from @CaseyNewton. He's right: we must battle for these terms. Definitions of 'well spent' and 'meaningful' are of great social consequence, just like definitions of 'free', 'equal', and 'just'.

🆕 Alternative Institutions Rising

— How the Virus is Helping Us Find a Better Social Stack

Thanks to @aaronzlewis @AnneSelke @ntnsndr @fullydavid @elibcx @Ben_Reinhardt @borismus!

I've started making "bipartite checklists". The first item is a to-do; the second is some kind of "energy I expect back from the universe" if I do the first item, without which I refuse to do the third. /cc @Malcolm_Ocean @andy_matuschak @Conaw @peternlimberg @utotranslucence

We have a radical approach to online learning that's working well in the new @humsys training, the School for Social Design. I bet it could work well for other topics.

It combines these components: a textbook, a mission database, an alumni database, paid experts, and guides. 👇
