Nayan Saxena

@SaxenaNayan

Followers

3,018

Following

509

Media

59

Statuses

313

Teaching machines how to think, express, and create – one model at a time.

Toronto, Ontario

Joined October 2021

Pinned Tweet

Amazing article on our recent work by

@kevinroose

from The New York Times (

@nytimes

), this quote captures the essence well:

"Eventually, if you take advantage of the web, the web will start shutting its doors."

1

4

12

✨ Introducing ToDo : Token Downsampling for Efficient Generation of High-Resolution Images ! With

@Ethan_smith_20

&

@aningineer

, we present a training-free method that accelerates diffusion inference up to 4.5x while maintaining image fidelity.

1

9

72

🌟 Excited to finally share that

@LeonardoAi

has been acquired by

@Canva

! Our work on Phoenix, the best image generation foundation model they've seen, played a key role. Special kudos to

@Ethan_smith_20

,

@advadnoun

,

@aningineer

,

@sami_ede

, Martin Bell, and the entire team!

🚀 Exciting news: Welcome,

@LeonardoAi_

! 🚀

Since 2013, Canva's mission has been to empower everyone to design. Today, we're thrilled to join forces with Leonardo AI, a leader in generative AI. 🎉

Leonardo’s team and tech will boost our AI capabilities, enhancing our products

120

106

441

6

11

68

✨ Excited to announce Phoenix, a new foundational image generation model! Over the past few months, I've worked with the amazing

@LeonardoAi_

research team, training and bringing it to life. Huge kudos to

@Ethan_smith_20

,

@advadnoun

,

@aningineer

,

@sami_ede

& the entire team!

2

11

62

The stuff I get to work on as part of my job sometimes feels unreal ! Working on AI art is really satisfying and delving into the technical details has been quite insightful ✨

Here is a preview of what is next to come within Dream by

@WOMBO

🌈

5

4

59

The resemblance is uncanny! Amazing work by

@GaryMarcus

and

@Rahll

highlighting the plagiarism issue in generative AI.

5

6

52

This is amazing, the new xLSTM is parallelizable, with improved exponential gating. Strong results on large-scale language modeling.

2

2

39

✨ Structured completions using LLMs may have finally been solved and that too 100x faster with 10,000x less overhead !

Amazing work by the awesome team

@RysanaAI

. Been following their work since last year, and super happy to see how far they have come.

5

3

34

Bittensor is a great example of decentralized AI: peers rank each other by training networks that learn the value of their neighbors, and high-ranking peers are monetarily rewarded with additional weight in the network ($TAO):

Not going to beat centralized AI with more centralized AI.

All in on

#DecentralizedAI

Lots more 🔜

253

325

2K

3

4

28

March in a nutshell:

- CEO of

@inflectionAI

resigns and joins

@Microsoft

- Creators of Stable Diffusion resign from

@StabilityAI

- President of Vietnam Resigns

- Prime Ministers of Ireland, Bulgaria & Haiti Resign

- First Minister of Wales Resigns

4

3

27

🎉 ToDo: Token Downsampling is now on Hugging Face Spaces. Try it out!

Thanks to

@aningineer

for putting it together, and

@Gradio

for sharing our work 🤩

1

4

23

✨ Excited to share 2 short papers on Neural Architecture Search! To appear as student abstracts in the AAAI proceedings.

In this work, with Robert Wu &

@JainRohan16

, we explore:

- Better pre-optimized search space generation.

- Vulnerabilities with the search space design.

(1/3)

3

7

23

FlashAttention-3 is out, with better efficiency at long context lengths and H100 utilization up from 35% to 75%. It is also 1.5x-2x faster, with FP8 numerical error benchmarked 2.6x lower! Great work by

@tri_dao

and team.

1

1

21

Loving

@getairchat

by

@naval

. Once the floodgates open, I would love to invite some of you to the platform!

2

1

19

Humane AI pin was

@TIME

Magazine's best inventions for 2023 without the product even being tested/reviewed. You really can't make this stuff up.

0

0

13

🚀

@GoogleAI

just revealed Gemini. The GPT-4 competitor comes in 3 models — Ultra, Pro, and Nano.

This is the FIRST multimodal AI to outperform humans on the MMLU, scoring >90%.

Report from

@GoogleDeepMind

:

🧵 Here's everything you need to know:

1

2

11

📃Paper here:

Also available for you to try on Gradio Spaces:

0

6

12

Special shoutout to

@parshantdeep

,

@paul_bridger

@_sshahid_

, and

@CafeSamosaBlue

who've been working really hard to push the boundaries of what is possible in bringing the next AI art revolution!

0

0

12

@Ash_Stewart_

@maystansstuff

Lmao I managed to get one shot

0

0

11

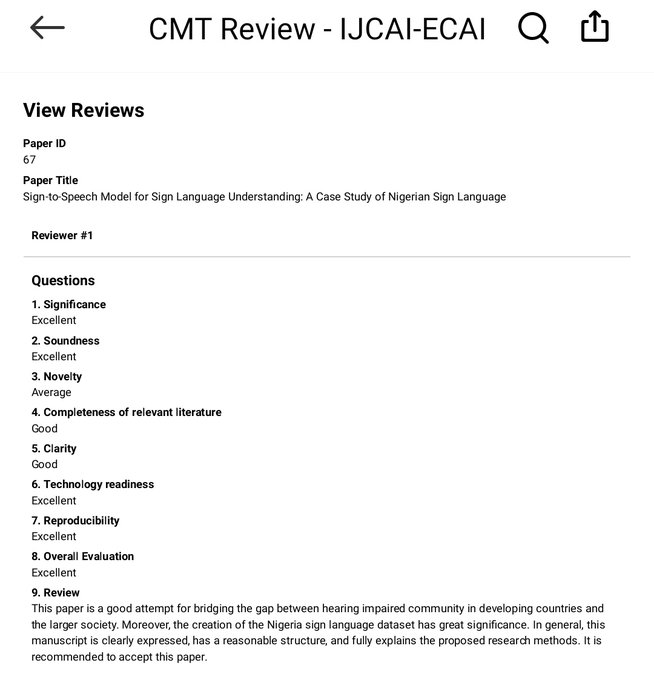

Happy to share that our work, led by

@steveddev

, outlining a pioneer dataset for Nigerian Sign Language (5000 images), has been accepted as an oral paper to the 31st International Joint Conference on Artificial Intelligence!

Check out the preprint here :

My research journey until recently has been haphazard at best: independent work in a not-so-research-inclined climate while leaning heavily on

@ml_collective

resources[PTO]

Excited to post that this work got accepted + oral at IJCAI-ECAI 2022, AI for Social Good Track!🥳 1/

16

8

62

1

3

11

Bullish on

@cognition_labs

, but the inflated valuation with no VC ROI in sight is scary. I hope a few bad examples don’t set the precedent for the next AI winter and hinder long term growth. Even

@facebook

was valued at 8.5MM, inflation-adjusted, in its first year by

@peterthiel

.

1

0

11

Proud to have worked on this with some exceptional co-authors:

@ShayneRedford

@RobertMahari

@ArielNLee

@campbellslund

@CaimingXiong

@luis_in_brief

@BlancheMinerva

@hanlinliii

@daphneipp

@sarahookr

@jad_kabbara

@alex_pentland

@didaoh

@naana_om

@TobinSouth

and many more!

1

1

9

Absolutely thrilled about Prof. Avi Wigderson's Turing Award – a true genius in computational theory. Sharing lunch with him

@HLForum

last year was unforgettable. His profound insights on non-determinism were incredibly inspiring.

🏆 We're thrilled to announce the recipient of the 2023

#ACMTuringAward

: Avi Wigderson! Wigderson is recognized for his foundational contributions to the theory of computation. Join us in celebrating his incredible achievements! Learn more here:

@the_IAS

12

250

799

0

0

9

AI researchers at Intel are definitely onto something. I wish their foundry business and other verticals were as promising…

0

0

7

This work would not be possible without the amazing feedback from George-Alexandru Adam (

@uoft

) & Kenyon Tsai (

@VectorInst

)

Special thanks to

@savvyRL

,

@jasonyo

& the entire

@ml_collective

community for their continued support, insightful discussions and compute grant🙂

(2/3)

1

0

7

Check out

@steveddev

's presentation on 2nd December

@MasakhaneNLP

!

The talk will focus on the development of a pioneer dataset for Nigerian Sign Language (5000 images), and outline key results from our preprint :

📢

@MasakhaneNLP

is delighted to host

@steveddev

to present his fantastic work bringing the benefits of

#NLProc

to more people

@AiDisability

#Diversity

#Inclusion

#Accessibility

Please join our slack to get zoom links at

2

10

26

2

2

5

So it seems like

@trsohmers

the ex-director of technology from

@GroqInc

went ahead and made his own inference box,

@positron_ai

, which debuted at NeurIPS.

Llama 2 70B performance:

Batch 1 → 480 tokens/sec/user

Batch 8 → 1,280 tokens/sec/batch

(160 tokens/sec/user)
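The per-user figure above follows from dividing the batch total by the batch size. A minimal sketch of that arithmetic, using only the numbers quoted in the tweet (the function and variable names are hypothetical, chosen for illustration):

```python
def per_user_throughput(batch_tokens_per_sec: float, batch_size: int) -> float:
    """Tokens/sec each user sees when a batch's total throughput is shared evenly."""
    return batch_tokens_per_sec / batch_size


# Figures quoted in the tweet for Llama 2 70B.
batch1_per_user = 480.0   # batch 1: tokens/sec/user
batch8_total = 1280.0     # batch 8: tokens/sec/batch

batch8_per_user = per_user_throughput(batch8_total, 8)

# Batching raises total throughput but lowers what each user sees.
aggregate_gain = batch8_total / batch1_per_user      # total vs. batch 1
per_user_ratio = batch8_per_user / batch1_per_user   # per-user vs. batch 1

print(f"batch 8 per user: {batch8_per_user:.0f} tokens/sec")
print(f"aggregate gain over batch 1: {aggregate_gain:.2f}x")
print(f"per-user speed relative to batch 1: {per_user_ratio:.2f}x")
```

Going from batch 1 to batch 8 trades per-user speed for aggregate throughput: the box serves more tokens in total while each individual user gets a third of the batch-1 rate.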

1

0

5

Thanks

@mozilla

for featuring our work and supporting the open future of the web!

Can

#AI

training undermine the Open Web?

The Data Provenance Initiative thinks so.

The

#DataFuturesLab

awardee has found that blunt restrictions from the websites that feed popular training datasets could have an impact on the data-economy⤵️

0

14

38

0

0

5

@Ethan_smith_20

Kinda similar but I ran a bunch of experiments on depth augmented activations so basically modifying the activation function as you go deeper into the network. For instance changing the angle within ReLU. Saw very marginal training improvements on toy networks.

1

0

4

“AI will not replace developers”

-

@thomasdomke

, CEO of GitHub

It was an absolute pleasure listening to

@ashtom

talk today about the future of AI and the future of work at

@CollisionHQ

1

0

4

Had a lovely time reading through The Little Book of Deep Learning by

@francoisfleuret

. Definitely recommended for people who want a handbook that covers everything you need to know in ~100 pages!

0

1

4

@steveddev

Congratulations Steven! I am sure this is the first of many amazing papers to come :)

1

0

4

@savvyRL

@peterdavidfagan

@andrey_kurenkov

@k_saifullaah

@steveddev

@cchoi314

Thank you too for organizing this !

0

0

3

This is literally from 2018. I was there in the audience. I am not sure why people on LinkedIn and Twitter are hyping up this retinal myopathy use-case from 5 years ago.

Sundar Pichai has unveiled a revolutionary

#healthcare

technology that employs

#AI

and eye scans to predict cardiovascular events with remarkable accuracy. This new development could potentially eliminate the necessity for CT scans, MRIs, and X-rays, enabling doctors to obtain a

203

1K

4K

2

0

4

What a wonderful group of people ! Truly grateful to

@ZEISS_Group

for sponsoring us for this opportunity to spend a week in Germany and meet various laureates.

#HLF23

Great to meet all the Abbe grant holders of

@HLForum

as well as the program managers of

#CZS

for lunch. It was particularly interesting to learn about the connection to

@ZEISS_Group

and

@SCHOTT_AG

, how the foundation operates or the career path of those working at

#CZS

.

#HLF23

0

2

8

0

0

3

This approach performs on par or better when compared to previous approaches like ToMe while being closer to the baseline in image quality and fidelity.

1

1

2