Max Aifer

@MaxAifer

Followers

2,498

Following

2,161

Media

58

Statuses

401

Theorist @NormalComputing . Thermodynamic computing for energy-efficient AI.

New York, NY

Joined July 2021

Pinned Tweet

A thread on our new paper Thermodynamic Bayesian Inference

250 years later, Bayes’s theorem is still the gold standard for probabilistic reasoning. But for complicated models it’s too hard to implement exactly, so approximations are used. For example, the complexity of Bayesian

11

119

995

Some good points made here. Some of them are not specific to any one company and more about thermo computing in general, so I’ll try to respond to those:

3

4

76

Thermo computing will absolutely lead to advantages in efficiency but we need to be careful about making promises like “trillions of times less energy” without a clear justification

The "Brain" @Extropic_AI is developing is one where each thermodynamic neuron learns a complex probability distribution, encoding it in an energy potential

Allowing the fastest possible learning path, using trillions of times less energy and operating millions of times faster

8

13

155

3

2

67

Our latest paper is about using thermodynamic computers for AI training. Check out @KaelanDon's thread!

1

9

62

Our team at @NormalComputing has done the *first ever experimental demonstration* of error mitigation for a thermodynamic algorithm!

3

8

59

A key question in thermodynamic computing is whether thermodynamic algorithms can be “derandomized”, or replaced by deterministic algorithms with similar performance. Efforts to answer this question will greatly benefit from the work of Avi Wigderson, who contributed massively to

7

14

55

Digital computers, prepare for rebooting! Thermo has arrived in San Diego. #IEEE #RebootingComputing

We have an exciting week ahead: Our work on #ThermoComputing will be featured at IEEE Conference on Rebooting Computing in San Diego.

I will present Thermo AI:

@MaxAifer will present Thermo Linear Algebra

4

24

142

1

4

45

I'm really curious about the implications for thermodynamic linear algebra. Maybe this method can be used to give an even larger speedup than the classical thermodynamic algorithms for linear algebra primitives.

Nathan Wiebe opens the simulation session at #SQuInT2023 explaining an “Exponential quantum speedup in simulating coupled classical oscillators”, starting with a reference to Grover’s *other* paper (w/Sengupta), “From coupled pendulums to quantum search”

0

4

40

2

3

40

Promising work. It seems that formulating diffusion models as equilibrium systems allows for a natural description of phase transitions. Curious to learn more about the significance of the critical exponents in this context.

1

1

38

Seems like people like the book club idea! The first book will be Solid State Physics (Ashcroft/Mermin). I'll set up a space for next Thursday to talk about chs. 1-4 (pp. 1-84 in my edition). Like or DM if you want to join, and I'll create a group chat.

3

1

36

The work also points to a parallel between thermodynamic computing and quantum computing, which can also accelerate solving linear systems (HHL algorithm).

@quantum_aram

1

0

34

Thermo linear algebra is not just a theory anymore…

We have exciting news to share 🔥

The team at @NormalComputing has performed the first thermodynamic linear algebra experiment. This is an experimental follow-up to our theory paper that came out in August. The details are in this blog, see thread below

14

106

620

0

2

31

Like Sam, thermo computing is back!

For more on thermodynamic computing, take a look at our prior work. Big thanks to the team @Sam_Duffield @gavincrooks @thomasahle @ColesThermoAI #ThermoComputing

0

0

20

0

1

27

Yes, analog design is hard. It can be done through careful simulation and prototyping. Circuits with linear dynamics are easier than nonlinear ones like neural networks. At @NormalComputing we’ve come up with useful algorithms that only require linear dynamics

1

1

26

Great question. The short answer is that the minimal amount of energy needed depends on how fast you want to invert the matrix. If you don’t mind waiting a long time for the result, you could theoretically make the required energy arbitrarily small. In other words there is a trade

@MaxAifer Ah, so you have to put energy into the system. Do you theoretically know for simple examples like a matrix inversion how much energy must be dissipated in terms of heat? I’ve heard that for Carnot engines you can calculate the maximum efficiency

0

0

1

1

1

22

Great to see @NormalComputing on the list!

Download EE Times' Silicon 100, our prestigious annual compilation of electronics and semiconductor startups that are shaping the future. #silicon100 #eetimes

1

4

9

3

5

20

Huge huge thanks to coauthors @KaelanDon @MaxHunterGordon @thomasahle @dan_p_simpson @gavincrooks @ColesThermoAI!!

3

0

17

Our PRL is finally out!

What's the cost of synchronizing two quantum systems? Fresh off the press with @MaxAifer and Juzar Thingna:

0

5

24

1

1

17

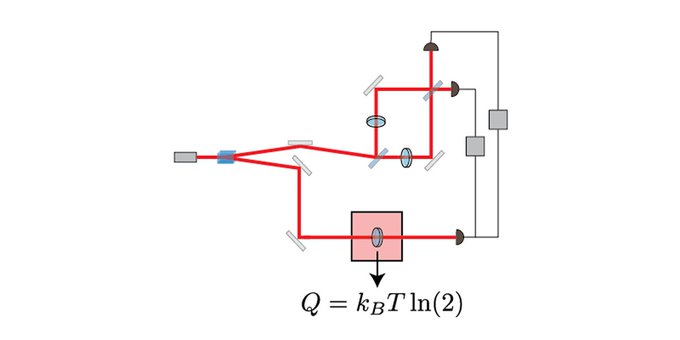

My second published paper, which explores the thermodynamic limits of optical information processing!

The second law of thermodynamics and Landauer's erasure principle are formulated for noisy optical polarizers, providing fundamental new advances on the thermodynamic description of quantum communication devices.

@quthermo_comp

0

8

50

0

1

15

Many future directions to go in... maybe "thermodynamic parallelism" can even explain how @sama works at OpenAI and Microsoft at the same time 🤔

0

0

15

My second paper ever! How much heat is dissipated in erasing quantum information stored in light? Many thanks to coauthors @quthermo_comp and @NathanMMyers1!

2

2

12

One thought on this: For a machine where one program can get swapped out for another (eg a computer), the length of a program tells us something about the complexity of the resulting behavior. This is because useful behaviors roughly get Shannon coded to short programs in a good

0

0

13

Is it easier to guess the end of a story from the beginning, or to guess the beginning from the end?

@stokhastik

are talking about this question and its relevance to AI and thermodynamics, feel free to join!

1

3

10

According to @stephen_wolfram, the second law of thermodynamics is really a statement about the computational limits of humankind. Will thermodynamics and computational complexity theory eventually be unified into a single theory?

yes

28

no

7

wat

6

see results

10

1

0

10

Looks like the most popular topic is the article “computational foundations for the second law of thermodynamics” by @stephen_wolfram so we can use that as the starting point for the space tonight

2

2

8

Thanks to Avi Wigderson (winner of the Turing Award) for this cool example, and to @thomasahle for bringing it to my attention!

0

2

9

Have really enjoyed being part of this impressive work led by @johnathanchewy, very exciting things happening in physics-inspired ML

Bridging principles between physics and AI will result in new ideas that work well. We present our work on neural CDEs and continuous-time (CT) U-Nets. Our ideas are inspired by @PatrickKidger's work. Find our paper here: and see 🧵 for a quick summary.

5

28

168

0

0

8

To coauthors Denis Melanson, @KaelanDon, @gavincrooks, @thomasahle, and @ColesThermoAI, thanks for your amazing work on this!

0

0

8