Emily Li

@EmilyLiJiayao

Followers

503

Following

713

Media

30

Statuses

186

@acadiaai , @zfellows_ | cs @ @carnegiemellon | prev research @modern_ai , ml @ evolution_devices |

San Francisco, CA

Joined May 2022

Pinned Tweet

Super excited to introduce 🌳Acadia (@AcadiaAI) Playground, an interpretable data exploration tool to understand your evaluation data’s quality and help unlock insights into model performance using AI!

🧵

12

21

148

super thrilled to join the @Contrary squad as a VP and work with so many brilliant & fun ppl!

0

0

26

from last minute late night ideas to fruition, the beautiful Figma offices to the inspiring ppl. true thanks @hackclub and the Assemble team for making things happen! #assemble22 #sf

2

0

19

not tagged but excited to have worked on this @AGIHouseSF!

3/ Hierarchical semantic clustering

Clustering scheme that generates an interconnected hierarchy that links ideas together into a single post

Consolidate your notes into a blog post

🥇First place

@JvNixon

@_nathanmarquez_

@zvhgpyxqtnys

1

2

25

2

0

9

wanna play no-contact hologram style Tic Tac Toe? check out HoloTicTacToe open-sourced at (initially built for Assemble workshop @hackclub)

1

0

7

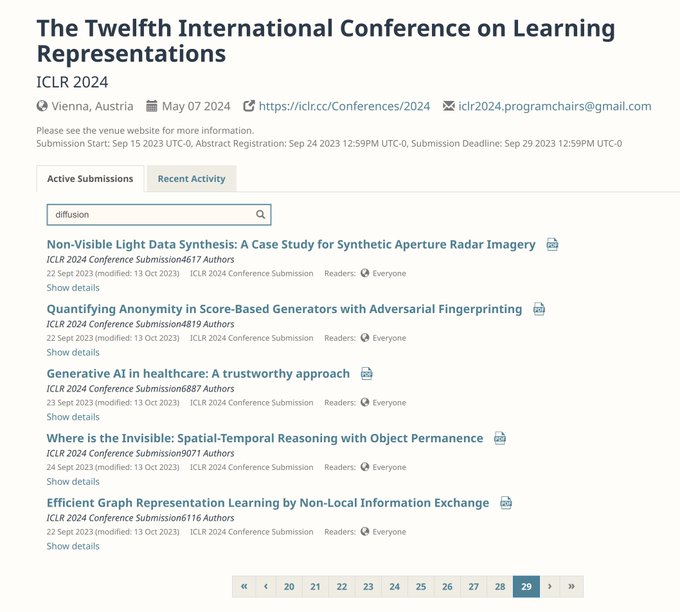

Here's a new SOTA text-to-image eval metric that's much better at complex compositional reasoning than current ones (e.g. CLIPScore, PickScore)!

We also show that it generalizes to video/3d evaluation + released a comprehensive t2visual meta-eval metrics benchmark.

Great to have

0

1

6

@AcadiaAI Playground is multimodal! We used it to analyze

🖼️ Winoground (VLM image caption matching task)

💻 HumanEval (LLM code generation task)

More details coming soon :)

3/6

1

0

4

and so happened to be neighbors without ever knowing!! you’re cooler 🩷

love meeting online twitter friends irl, makes the world feel so small 🩷

@EmilyLiJiayao

you’re so cool!!

1

0

8

0

0

4

@AcadiaAI Playground can also be used for:

- Cross-comparing various models to find the best model for your use case

- Identifying and targeting weaknesses in your dataset distribution (such as duplication or misrepresented categories) to inform better data curation

4/6

1

0

3

200 on clip is crazy 😱. there’ll probably be a lot more on nerfs / 3d vision once 2d vision is solved (alr feels like it has by gpt4v but opensource still has a long way to go)

0

0

2

demo day was awesome. cv has always been extremely interesting to me but I had never witnessed first-hand how inspiring it can also be for others until today, esp its real world applications that bridge imaginative sci-fi with reality. 🦾

#gangstaminecraft

0

0

3

@itsandrewgao

yea and i wonder how much of it is scaling parameters/more training data vs consequential architecture improvements

0

0

2

@itsandrewgao

the swin transformer for example. also, although naive attention’s work is on the order of n^2, multi-headed attention/parallelizability makes the span closer to linear or log n.

0

0

2
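A rough sketch of the work-versus-span distinction in the reply above, under an idealized counting model that is an assumption here, not a measurement: every multiply-add costs one unit, processors are unlimited, and sums are done as balanced tree reductions. The function names and the factor of 2 (scores plus the weighted sum over values) are illustrative choices.

```python
import math

def attention_work(n: int, d: int) -> int:
    """Multiply-adds for the n x n score matrix plus the weighted sum over values."""
    return 2 * n * n * d

def attention_span(n: int, d: int) -> int:
    """Longest dependency chain, assuming unlimited processors and tree reductions."""
    dot_product = math.ceil(math.log2(d))    # reduce d products into one score
    softmax_sum = math.ceil(math.log2(n))    # reduce n exponentials per row
    weighted_sum = math.ceil(math.log2(n))   # reduce n weighted values per output entry
    return dot_product + softmax_sum + weighted_sum

for n in (128, 1024, 8192):
    # Work grows quadratically in n; span grows only logarithmically in this model.
    print(n, attention_work(n, d=64), attention_span(n, d=64))
```

In this model, doubling n quadruples the work but adds only two steps to the span.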

@YiMaTweets

hmm feels like it's more former ⊆ latter. classification/recog. are discriminative tasks whose objective is to learn the conditional prob distribution P(Y|X) aka decision boundaries, which can be derived from generative models that learn a joint distribution P(X,Y) where we sample from

1

0

2
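A minimal sketch of the point in the reply above: once a joint distribution P(X, Y) is known, the conditional a classifier needs, P(Y | X), follows by normalizing over the labels. The joint table and the helper names below are hypothetical, chosen only so the arithmetic is easy to check.

```python
from fractions import Fraction

# Hypothetical joint distribution P(X, Y) over a binary feature X and a binary label Y.
joint = {
    ("x0", "y0"): Fraction(3, 10),
    ("x0", "y1"): Fraction(1, 10),
    ("x1", "y0"): Fraction(1, 5),
    ("x1", "y1"): Fraction(2, 5),
}

def conditional_y_given_x(joint, x):
    """P(Y | X = x) = P(x, Y) / sum over y of P(x, y)."""
    marginal = sum(p for (xi, _), p in joint.items() if xi == x)
    return {y: p / marginal for (xi, y), p in joint.items() if xi == x}

def classify(joint, x):
    """Pick the most probable label under the derived conditional."""
    cond = conditional_y_given_x(joint, x)
    return max(cond, key=cond.get)

print(conditional_y_given_x(joint, "x0"))
print(classify(joint, "x0"), classify(joint, "x1"))  # y0 y1
```

The reverse direction does not work: the conditional table alone does not determine the marginal over X, so the joint cannot be recovered from it.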

@akbirthko

awesome, this was what i was leaning towards. but in this case, what is the point of even having different heads if their end result is concatenated together anyways b4 the linear layer? don't the q, k, v operate independently between the different hidden dims anyway?

1

0

2
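One way to look at the question above, as a toy sketch rather than a definitive answer: each head applies its own softmax, so two heads can attend to different tokens, while a single head over the same dimensions produces one blended weight vector. The vectors below are hypothetical, and plain slicing stands in for the learned per-head projections.

```python
import math

def softmax(xs):
    m = max(xs)                                # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(q, keys):
    """Softmax over scaled dot products between one query and every key."""
    scale = math.sqrt(len(q))
    return softmax([sum(a * b for a, b in zip(q, k)) / scale for k in keys])

# Hypothetical 4-dim query and two keys: dims 0-1 favor key 0, dims 2-3 favor key 1.
q = [1.0, 1.0, 1.0, 1.0]
keys = [[4.0, 4.0, 0.0, 0.0],
        [0.0, 0.0, 4.0, 4.0]]

single = attention_weights(q, keys)                        # one head over all 4 dims
head_a = attention_weights(q[:2], [k[:2] for k in keys])   # head restricted to dims 0-1
head_b = attention_weights(q[2:], [k[2:] for k in keys])   # head restricted to dims 2-3

print(single)   # the single head splits its attention evenly
print(head_a)   # this head puts almost all weight on key 0
print(head_b)   # this head puts almost all weight on key 1
```

The dot product sums over hidden dims before the softmax, so in the single-head case the dims do not act independently; the per-head split is what keeps the two patterns separate until concatenation.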

@HaoliYin

I've actually thought about this b4 haha! I feel like generating an accurate and robust 3d mesh/point cloud/surface is a pretty difficult and unsolved problem.

1

0

2

currently playing with @runwayml's gen-2 video gen models -- definitely something going on

"A baker pulling freshly baked bread out of an oven in a bakery"

send in some prompts👇

1

0

2

@MarioKrenn6240

Due to the influx of papers, and bc it's rare for any AI researcher to have read every single paper in their respective subdomain, there're undoubtedly lots of overlapping "novelties." So even just having a systematic approach for tracking defs and training paradigms would be helpful

0

0

2

@tengyuma

@HongLiu9903

@zhiyuanli_

@dlwh

@percyliang

@StanfordAILab

@stanfordnlp

@StanfordCRFM

@Stanford

pytorch compatibility would encourage usage!

1

0

1

@gdb

increase in RPD limits; random server errors occur at times; browser version feels like it’s much more willing to describe; log probs would be great!

1

0

1

@O42nl

actually, W_Q and W_K don't have to be square matrices, they just have to be d_model x d_k, and W_V has to be d_model x d_v. d_k doesn't have to equal d_v, but by convention it does, right?

1

0

1
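A small shape check for the reply above, as a sketch with hypothetical sizes: W_Q and W_K are d_model x d_k, W_V is d_model x d_v, and d_k is deliberately set different from d_v to show the products still line up. The helpers are plain-Python stand-ins, not any library's API, and the softmax is omitted because it does not change shapes.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    assert len(a[0]) == len(b), "inner dimensions must match"
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def transpose(m):
    return [list(col) for col in zip(*m)]

def shape(m):
    return (len(m), len(m[0]))

# Hypothetical sizes chosen only for illustration; note d_k != d_v.
n, d_model, d_k, d_v = 5, 8, 3, 4
rng = random.Random(0)

X = rand_matrix(n, d_model, rng)
W_Q = rand_matrix(d_model, d_k, rng)
W_K = rand_matrix(d_model, d_k, rng)
W_V = rand_matrix(d_model, d_v, rng)

Q, K, V = matmul(X, W_Q), matmul(X, W_K), matmul(X, W_V)
scores = matmul(Q, transpose(K))   # (n, n): only requires Q and K to share d_k
out = matmul(scores, V)            # (n, d_v): d_v is free to differ from d_k

print(shape(Q), shape(K), shape(V))  # (5, 3) (5, 3) (5, 4)
print(shape(scores), shape(out))     # (5, 5) (5, 4)
```

The only hard constraint visible here is that Q and K share d_k, since their dot product forms the scores.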

@HaoliYin

@alexfmckinney

i say try the former, if not good enough then the latter, we def have stronger text embedding models than vision. also i'm interested to see how close CLIP img encoder embeddings are to img->description->CLIP text embeddings, perhaps that could be a finetuning objective for CLIP

1

0

1

why is this soo true...is definitely something that wastes a lot of my time

0

0

1

simple math shows that training @MetaAI's llama would have cost anyone ~$4 mil according to A100's global pricing of $4/hr/GPU: 504 hrs × $4 × 2048 GPUs. and it is only 65B params

1

0

1
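The back-of-the-envelope estimate above, written out as a sketch. It uses only the three figures quoted in the post; the function and constant names are illustrative.

```python
# Figures as quoted in the post.
TRAINING_HOURS = 504        # wall-clock hours (504 = 21 * 24)
PRICE_PER_GPU_HOUR = 4      # USD per A100 per hour
NUM_GPUS = 2048

def training_cost(hours: int, price_per_gpu_hour: int, num_gpus: int) -> int:
    """Total rental cost in USD: GPU-hours times the hourly price."""
    return hours * num_gpus * price_per_gpu_hour

cost = training_cost(TRAINING_HOURS, PRICE_PER_GPU_HOUR, NUM_GPUS)
print(f"GPU-hours: {TRAINING_HOURS * NUM_GPUS:,}")  # GPU-hours: 1,032,192
print(f"Estimated cost: ${cost:,}")                 # Estimated cost: $4,128,768
```

That comes to roughly $4.13 million, which matches the "~$4 mil" figure.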