Roger Levy

@roger_p_levy

Followers: 3,963 · Following: 420 · Media: 47 · Statuses: 885

Director, MIT Computational Psycholinguistics Laboratory | President, Cognitive Science Society | Chair-Elect of the MIT Faculty | He

Joined February 2013


This paper is one culmination of three-plus years' work investigating the syntactic capabilities of today's autoregressive language models, with carefully controlled experiments like we would use in a psycholinguistics experiment with human subjects. The results blow me away. 1/5

What syntactic generalizations can be learned from predicting the next word by domain-general learning algorithms? A paper summarizing the case of filler–gap dependencies (+ theoretical implications!) with @roger_p_levy and @rljfutrell


Join us online on May 13–14 for a star-studded #NSF-sponsored workshop: New Horizons in Language Science: Large Language Models, Language Structure, and the Cognitive & Neural Basis of Language! Interdisciplinary talks & discussion on three themes: 1/


The MIT Computational Psycholinguistics Lab seeks to fill an open postdoc position for an @MITIBMLab-supported multi-PI project in low-resource language learning, bridging NLP, machine learning, linguistics, and cognitive science. Please spread the word!


I'm proud of our group's presentations at #CogSci2024 – come check them out!

1: Today 10:30am–noon in J.F. Stall: "Finding structure in logographic writing with library learning" led by @jiang_gy. This work won the Sayan Gul award for best undergraduate student paper!


Psycholinguists – our field has a new journal, in partnership with the #openaccess trailblazer @glossa_oa! We look forward to receiving your best new work! Gratitude to @fernandaedi, @linguistbrian, and @JohanRooryck for their initiative and leadership.

Today at #CUNY2021 @linguistbrian and @fernandaedi announced the soft opening of Glossa Psycholinguistics. GP is an open access journal that considers brief reports, longer reports, registered reports, and theoretical reviews. Please retweet and follow us here on Twitter!


Submissions to CogSci 2019 now open. I’m delighted to say that, new to this year’s conference, reviewing will be double-blind! @cogsci_soc


As MIT faculty and an advisor of graduate students: thank you #MITGSU for your important, hard work on this unionization campaign! I applaud your organizing efforts to support graduate students' dignity, autonomy, living, learning, & working conditions, and well-being.

We are proud to publicly announce the MIT Graduate Student Union! MIT grad student-workers are unionizing to create a healthy and fair working and learning environment for all, by giving us a voice in the decisions that affect us. (1/4) #MITGSU


Video recordings from the #NSF-sponsored workshop New Horizons in Language Science: Large Language Models, Language Structure, and the Cognitive & Neural Basis of Language are now publicly available! All videos are linked to from the workshop website: 1/


I urge interested readers to consult @weGotlieb et al. (in press): we find that the "GRNN" LSTM of @xsway_ et al. 2018, trained on a childhood's worth of English, shows substantial success on filler–gap dependencies and the island constraints on them. 1/3


New this year at #cogsci2020: authors keep copyright on all conference proceedings papers, and they're licensed Open Access under @creativecommons CC BY!


@weGotlieb's results really move the needle on classic cogsci learnability debates. There's a huge amount of syntactic information in raw linguistic input (just strings!). And generic autoregressive models pick it up! An exciting time for language and computation. 5/5


Talk titles and abstracts are up now for the May 13–14 #NSF-hosted workshop New Horizons in Language Science: Large Language Models, Language Structure, and the Cognitive and Neural Basis of Language! Learn more and register for the Zoom webinar at 1/


One of the most eye-opening studies I've ever been involved in, now out in #PsychologicalScience. During the 2016 US presidential campaign, US citizens strongly dispreferred "she" pronouns for the next president, despite expecting the female candidate, Clinton, to win. 1/8

How do English speakers use gendered pronouns when talking about future heads of government whose gender isn't known yet? Find out in this @MIT News story about research we conducted during the 2016 US presidential race and the 2017 UK general elections:


@srush_nlp What a great question! Time yourself reading a document in the language. Reading time is linear in word log probability, so you can back out perplexity. @whylikethis_ will have slope & intercept as a function of non-native proficiency for you soon 😊
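The reasoning in this reply can be sketched in a few lines. If reading time is linear in surprisal (negative log probability), a fitted slope and intercept let you convert per-word reading times back into surprisals. Perplexity is then the exponential of the mean surprisal. This is a minimal sketch that assumes natural-log units. The slope, intercept, reading times, and function names are all hypothetical, chosen only for illustration:

```python
import math


def perplexity_from_logprobs(logprobs):
    """Perplexity = exp(mean negative log probability per word), natural logs assumed."""
    return math.exp(-sum(logprobs) / len(logprobs))


def surprisals_from_reading_times(rts_ms, intercept_ms, slope_ms_per_nat):
    """Invert an assumed linear model RT = intercept + slope * surprisal.

    Reading times below the intercept yield negative surprisals; a real analysis
    would aggregate over many words rather than trust each word individually.
    """
    return [(rt - intercept_ms) / slope_ms_per_nat for rt in rts_ms]


# Hypothetical per-word reading times (ms) and regression parameters.
reading_times = [250.0, 310.0, 280.0, 400.0]
surprisals = surprisals_from_reading_times(
    reading_times, intercept_ms=220.0, slope_ms_per_nat=15.0
)
perplexity = perplexity_from_logprobs([-s for s in surprisals])

print(surprisals)               # [2.0, 6.0, 4.0, 12.0]
print(round(perplexity, 1))     # 403.4
```

In this sketch the slope and intercept are taken as given. Per the tweet, in practice they would depend on the reader's non-native proficiency.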


#cogsci2024 was the biggest cognitive science conference ever (by # submissions and # attendees alike), and a great success. Hats off to @lksamueltweet, Stefan Frank, @mtoneva1, @ally_mackey & @EliotHazeltine for a phenomenal job as conference organizers/program chairs – thank you!!

13 years ago, I presented my first paper ever at #CogSci. This year I had the honor of serving as a Program Chair.

Cognitive science is changing thanks to LLMs. Where will CogSci be in 13 more years? Maybe an undergrad in the audience last week will run it. Or maybe ChairGPT.


#NLProc public service announcement: @aclanthology BibTeX entries are SO much better than Google Scholar BibTeX entries!


Delighted to see "The Statistical Significance Filter" (@shravanvasishth, Mertzen, Jäger & @StatModeling 2018) named a Most Downloaded Paper at JML. It's a great paper all psycholinguists should read. 1/3


I'm hugely proud to have been a member of the inaugural editorial team for @glossapsycholx! It's a top quality operation for original research, with the best #OpenAccess terms one can wish for: no author need pay to publish there. Send the journal your best work!

🚨🚨Glossa Psycholinguistics: Our first articles are published! 🚨🚨 We are so excited to publish three excellent original research articles and a statement by @fernandaedi and @linguistbrian on the goals of GP. Let's highlight the scholarship in our inaugural launch: [1/n]


Congratulations Jenn!!! Prospective students and postdocs interested in language in minds *or* machines: if you get the opportunity to join Jenn's group, take it! She is a rising star and superb to work with.


Postdoc job alert: come work with @NogaZaslavsky, Nidhi Seethapathi (@nidhi_s91), and me on an integrative computational account of language and locomotion! Apply by March 31 for fullest consideration. Please share widely!

We're looking for a brilliant postdoc to work with @roger_p_levy, @nidhi_s91, and me on an exciting new project at the intersection of computational cognition, language, and motor control! Please share with anyone who might be interested. More info here:


@lintool I’ve been thinking lately: what if we replaced author–date format with title–date, e.g. [Lin et al. 2011] -> [Smoothing Techniques 2011]? Or for brevity [SmooTechn 2011]. Title words are probably more useful memory retrieval cues anyway. Bonus: encouraging more creative titles!


This is a terrific paper!

Among other contributions, @MKeshev & @aya_meltzer’s work highlights how cross-linguistic breadth strengthens psycholinguistics. Their tests of noisy-channel language processing theory use Hebrew grammatical properties that aren’t present in, e.g., English.

Out now in Cognitive Psychology: Noisy is better than rare: Comprehenders compromise subject-verb agreement to form more probable linguistic structures, with @aya_meltzer

Featuring "the best explanation of a filler-gap [reviewer2] have even seen!"

1/n


Now out: call for proposals for co-chairs of the 2025 Meeting of the Cognitive Science Society @cogsci_soc! Co-chairs shape the conference theme & choose invited speakers (but aren't responsible for location-related logistics). Spread the word!


Let me tell you why I'm so excited about this new paper by the amazing @veroboyce in @glossapsycholx, "A-maze of natural stories: comprehension and surprisal in the Maze task". 1/9

I'm thrilled to have this paper with @roger_p_levy out in @glossapsycholx! I hope our methods work on Maze gives more researchers an easy option for collecting incremental reading time data on a range of materials!


I just discovered that the 1963 classic Handbook of Mathematical Psychology is available for PDF download on the @internetarchive. Several of these chapters are seminal for cognitive scientists of language. What a wonderful resource!


@juanbuis @Christophepas I’m a psycholinguist who has studied reading for 15 years, and I respectfully call bullshit (@callin_bull). There is no evidence or reason to believe that Bionic Reading’s text tweaks are a good idea that will help you read better. 1/6


Yevgeni is an amazing researcher and collaborator, and one of the best mentors I've ever seen in action. And he has a unique, distinctive research program at the intersection of AI/psychology/linguistics that is going great places. If you get the chance to work with him, take it!


Thrilled at publication of @StephanMeylan's "How adults understand what young children say", featuring Bayesian noisy-channel inference, LLMs, & child speech datasets!

TL;DR: prior expectations of what kids *want to say* are crucial. (Knowing how kids mispronounce words is too.)

How do adults understand children’s early, highly variable speech? Our new paper in @NatureHumBehav provides evidence that adults’ interpretations depend quite strongly on language expectations: what they think children are likely to say. 1/
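The noisy-channel inference in this thread can be illustrated with Bayes' rule. The listener's posterior over what the child meant is proportional to a prior over what children are likely to say, multiplied by a likelihood of the heard form given each intended word. This is a minimal sketch only: the candidate words, the heard form "ba", and every probability are invented for illustration. It is not the paper's model, which draws on LLMs and child speech datasets.

```python
def noisy_channel_posterior(prior, likelihood):
    """Return P(word | heard form), proportional to P(heard form | word) * P(word), normalized."""
    unnormalized = {word: prior[word] * likelihood[word] for word in prior}
    total = sum(unnormalized.values())
    return {word: p / total for word, p in unnormalized.items()}


# Hypothetical likelihoods: how probable the heard form "ba" is, given each intended word.
likelihood = {"ball": 0.2, "bottle": 0.4, "bath": 0.4}

# Two hypothetical priors over what a child might want to say in context.
prior_a = {"ball": 0.5, "bottle": 0.3, "bath": 0.2}
prior_b = {"ball": 0.7, "bottle": 0.2, "bath": 0.1}

post_a = noisy_channel_posterior(prior_a, likelihood)
post_b = noisy_channel_posterior(prior_b, likelihood)

print(max(post_a, key=post_a.get))  # bottle
print(max(post_b, key=post_b.get))  # ball
```

The likelihood (how kids mispronounce words) is held fixed in both cases. Shifting only the prior flips the best interpretation, which mirrors the TL;DR that expectations about what kids want to say are crucial.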


More news coverage of our recent paper on the emergence of syntactic productivity! @mcxfrank @bcroygbiv


L2 learner English proficiency can be determined from eye movements while reading! Berzak, Katz, & Levy 2018 NAACL preprint now available on arXiv: @yevgeni_berzak

0

10

32

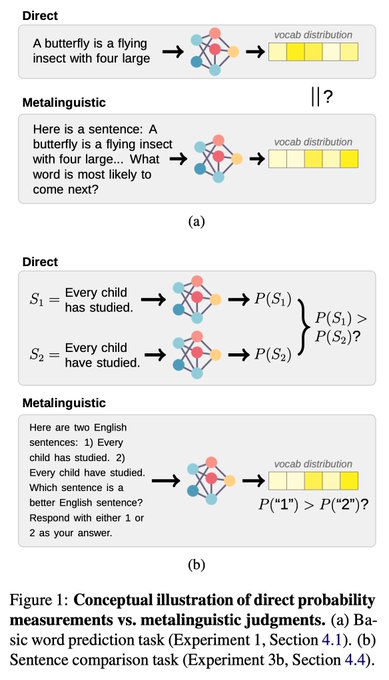

Prompting is *not a substitute* for probability measurements in large language models – the amazing @_jennhu's EMNLP paper now available camera-ready!

TL;DR: direct probability measurements show LLMs' linguistic generalizations are better than suggested by prompt-based tests.

To researchers doing LLM evaluation: prompting is *not a substitute* for direct probability measurements.

Check out the camera-ready version of our work, to appear at EMNLP 2023! (w/ @roger_p_levy)

Paper:

Original thread:

🧵👇


"Iconicity and Structure in the Emergence of Combinatoriality" -- with Matthias Hofer, new @cogsci_soc conference-proceedings preprint out! As always, thoughts, comments, feedback much appreciated.


New Horizons in Language Science: Large Language Models, Language Structure, and the Cognitive & Neural Basis of Language has started! Theme 1's speakers will present starting NOW: Ben Bergen, Leila Wehbe, Ariel Goldstein, and @davidbau! Tune in on Zoom via


+1 Elsevier journal flipped to gold Open Access at @mitpress: goodbye Journal of Informetrics, hello Quantitative Science Studies!


#linguistics Twitter: what are the best available quantitative measures of dialect/language mutual intelligibility? The more fine-grained, the better: I'm hoping to vividly illustrate at least one specific dialect continuum (e.g., the Romance languages of the Mediterranean coast)


Paper now accepted to #CogSci2018 and preprint available: Communicative Efficiency, Uniform Information Density, and the Rational Speech Act theory. Will be grateful for comments, especially before the May 14 camera-ready final submission deadline!


Linguists & psychologists – is anyone aware of published estimates of the distribution of words typed per day, within or across individuals? Summary statistics like mean or median are fine; more detail, even better! @siminevazire @jwpennebaker @mcxfrank @dkroy @RelationScience


@shravanvasishth Psycholinguists, and cognitive scientists more broadly, please do send your best work to Open Mind! We are rigorous and fast, pure gold Open Access (hence compatible with European funders' new Plan S). Check out our editorial team at


Rolled out of bed at 5am & gave a talk for UNIGE Linguistics hosted by @PaolaMerlo20 🙏. Attendance & great discussion by some of my favorite colleagues all over Europe! So many benefits to remote presentation. I don't want to go back to air travel! Slides at


I am so sad to learn of Akira's untimely passing. Akira was inspired, original, and enterprising, with outstanding future potential. Even more important: he was unfailingly positive, generous, and kind in all our interactions and by all other accounts. I will miss him.


Starting in 5 minutes I'm chairing the #emnlp2020 Q&A session on Linguistic Theories, Cognitive Modeling, and Psycholinguistics. There are five great papers in this session -- please join!


2020 @CUNYUMass was a tough act to follow, but the @CUNY2021 organizers (John Trueswell, Delphine Dahan, Anna Papafragou, @garicgymro, @KathrynSchuler, Florian Schwarz, Charles Yang) and many student volunteers did it – hats off for a fantastic conference! Some thoughts: 1/


Excellent advice in @tallinzen's blog post regarding finding a postdoc. Start contacting PIs you might want to work with *at least* 12 months before your desired start date -- applying for funding requires a long lead time.


Michael Eisen is apparently being ousted from his role as @eLife Editor-in-Chief due to his tweet referencing a satirical article conveying the tragedy of the Israeli–Palestinian conflict. Concerned about this form of censure? Sign this open letter:


Wondering how to combine the strengths of LLMs and logic-based symbolic methods for natural language reasoning tasks? See our forthcoming EMNLP paper, LINC: Logical Inference via Neurosymbolic Computation!

TL;DR: have the LLM translate to logical form and run a theorem prover!

📢 Introducing 🔗LINC, a neurosymbolic approach to logical reasoning w/ awesome co-first authors @theo_olausson, @ben_lipkin, and Cedegao Zhang + advisors Armando Solar-Lezama, Josh Tenenbaum, and @roger_p_levy!

📜

💻

🧵⬇️ (1/n)


Rising star language scientist @raryskin!

Today's #UCMerced #ResearchWeek faculty profile is Dr. @raryskin, who works to understand how the human language processing system allows us to construct meaning from uncertain input.


@writerethink A classic is Cooper & Ross 1975. We have a more comprehensive overview & references in Benor & Levy 2006.


#cognitivescience / #cogsci / @cogsci_soc community: just a reminder to anonymize your #CogSci2019 paper and member-abstract submissions, as reviewing will be double-blind! Updated templates available at (bottom of the page)


Our #CogSci2018 preprint now available on arXiv! Gauthier, Levy, and Tenenbaum: Word learning and the acquisition of syntactic-semantic overhypotheses -- enjoy!


Great work from @veroboyce on automating and evaluating the Maze task for studying language comprehension -- I'm proud to have worked with her on it and I look forward to using Auto-Maze in many studies to come!


Hearty congratulations to @ben_lipkin and team for winning the first AI Math Olympiad Progress Prize!!!


Cory is one of a kind – don't walk, run, to work with him!

👋I boost a lot of job opportunities on here and now it's time to boost my own! I'll be arriving at #Stanford in fall of 2024 and I'm looking for awesome people to help me figure out language. 🧵👇


The videos can also be accessed directly from the workshop's YouTube channel:


Elegant study. Graph tells the main story: when test riders in Queensland asked for a free bus ride, drivers granted it far less often to Indian & especially Black customers.

But when drivers were asked in a survey who they'd grant a free ride to, no differences by rider race.

White privilege in everyday market interactions.

'The Colour of a Free Ride' with @FrijtersPaul forthcoming in the Economic Journal. @EJ_RES

Full text:


@tmalsburg @tallinzen @lcl_ucsd @shravanvasishth @linguistbrian @bruno_nicenboim @LanguageMIT Non-normality is relatively unimportant; at worst you may just lose a bit of power. I strongly recommend @StatModeling & Hill (2007, pp. 45–47)'s summary of key regression model assumptions. Normality of errors literally gets LOWEST priority. My experience supports this. 3/3


Stick around for the closing remarks for #CogSci2024 and the big reveal for #CogSci2025: it's going to be fun! 16:45 in Rotterdam Hall!


#CogSci2018: Join Open Mind journal editors Naomi Feldman, Lori Holt, Barbara Landau, and myself this afternoon 1-3pm for refreshments at the @mitpress booth! Learn about the journal, our rigorous peer review, and how you can support open access publishing in cognitive science.


A filler–gap dependency is abstract – a contingency between a word and the presence/absence of a phrase – and depends on structural hierarchy, not on linear order. Yet the @xsway_ 2018 LSTM, trained on just a human childhood's worth of language, is sensitive to the dependency! 3/5
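One way this sensitivity has been measured is with a 2×2 design. The design crosses the presence of a filler (e.g. "what" vs. "that") with the presence of a gap, and compares the model's surprisal in the region just after the potential gap site. This is a minimal sketch of such an interaction score. The sign convention, the example sentences, the surprisal values, and the function name are assumptions for illustration, not results from the paper:

```python
def filler_gap_interaction(s):
    """Interaction score from surprisals in a 2x2 (filler x gap) design.

    s maps (has_filler, has_gap) -> surprisal (bits) in the region after the gap site.
    Sign convention (an assumption here): a positive score means the filler makes
    a gap less surprising AND makes a filled gap site more surprising.
    """
    # Positive when a filler lowers surprisal in the gapped conditions.
    gap_effect = s[(False, True)] - s[(True, True)]
    # Negative when a filler raises surprisal in the ungapped (filled) conditions.
    no_gap_effect = s[(False, False)] - s[(True, False)]
    return gap_effect - no_gap_effect


# Hypothetical surprisals (bits) at "at sunrise", for illustration only.
surprisal = {
    (True, True): 3.0,    # "I know what the lion devoured at sunrise."
    (False, True): 7.0,   # "*I know that the lion devoured at sunrise."
    (True, False): 6.0,   # "*I know what the lion devoured a gazelle at sunrise."
    (False, False): 4.0,  # "I know that the lion devoured a gazelle at sunrise."
}
print(filler_gap_interaction(surprisal))  # 6.0
```

A model that ignored the filler entirely would give an interaction of zero. The sign convention here is only a bookkeeping choice; what matters is that both simple effects go in the direction the dependency predicts.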


Calling all researchers who value open & equitable scholarship: @force11rescomm seeks feedback on their draft Researcher Bill of Rights & Principles by May 1. They've done great work distilling principles shared across many initiatives. Read & comment at


Looking forward to participating in the new ACL Rolling Review, @ReviewAcl – we're in need of peer review innovation, and this is an exciting one! Gratitude to @gneubig @astent @pascalefung @riedelcastro for leading this. Read the description at:

I'm so excited!! @ReviewAcl is here! CFP and invitation to reviewers coming very soon! #NLProc @riedelcastro @gneubig @pascalefung


This is great work by @veroboyce – I am lucky to work with her!!! And, if you want to study incremental processing difficulty during reading, seriously consider trying out our auto-Maze implementation. It compares very favorably thus far with self-paced reading.

The Maze task finds large, localized effects (better than SPR) when run on MTurk with auto-generated distractors. Preprint: Code for auto-generating distractors: Joint work with @roger_p_levy and @rljfutrell


@kevinnadal @SRCDtweets +1 to @kevinnadal. And, "political lobbying" means attempting to influence legislation; the present issue is executive policy not legislation. Standing up for vulnerable children is advocacy, and in-scope for 501(c)(3)s. So please stand up and advocate!


MIT Computational Psycholinguistics Lab will observe #ShutDownSTEM tomorrow. We are not holding our ordinary Wednesday afternoon lab meeting. I'll be devoting the day to self-education and to continuing to develop a plan of action to combat systemic racism.


@lksamueltweet @mtoneva1 @ally_mackey @EliotHazeltine #cogsci2025 will happen in San Francisco July 30–Aug 2 2025, in the capable organizational hands of Azzurra Ruggeri, David Barner, @CarenMWalker, and @NeilBramley – can't wait!


In which the amazing @_jennhu shows that LLMs' linguistic generalizations are better than just prompt-asking them about sentences would suggest. To best reveal what generalizations a language model has acquired, compare the string probabilities it puts on minimal pairs!

New paper with @roger_p_levy:

Prompt-based methods may underestimate large language models' linguistic generalizations.

Preprint:

🧵👇
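Comparing string probabilities on minimal pairs means scoring both sentences under the model and checking which one gets the higher log probability. No prompt or metalinguistic question is involved. This is a minimal sketch that uses a toy add-one-smoothed bigram model as a stand-in for a large language model. The corpus, the pairs, and the helper names are hypothetical, and this is not the paper's code:

```python
import math
from collections import Counter


def train_bigram(corpus, alpha=1.0):
    """Train a toy add-alpha smoothed bigram model; returns logprob(prev, word)."""
    unigrams, bigrams, vocab = Counter(), Counter(), set()
    for sentence in corpus:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        vocab.update(tokens)
        unigrams.update(tokens[:-1])  # counts of each word used as a context
        bigrams.update(zip(tokens[:-1], tokens[1:]))
    vocab_size = len(vocab)

    def logprob(prev, word):
        return math.log(
            (bigrams[(prev, word)] + alpha) / (unigrams[prev] + alpha * vocab_size)
        )

    return logprob


def sentence_logprob(logprob, sentence):
    """Total log probability of a sentence, including the end-of-sentence token."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    return sum(logprob(prev, word) for prev, word in zip(tokens[:-1], tokens[1:]))


def minimal_pair_accuracy(logprob, pairs):
    """Fraction of (grammatical, ungrammatical) pairs where the grammatical one scores higher."""
    wins = sum(
        sentence_logprob(logprob, good) > sentence_logprob(logprob, bad)
        for good, bad in pairs
    )
    return wins / len(pairs)


# Hypothetical toy training data and subject-verb agreement minimal pairs.
corpus = ["the dog runs", "the dogs run", "a cat sleeps", "the cats sleep"]
pairs = [("the dog runs", "the dog run"), ("the dogs run", "the dogs runs")]

lm = train_bigram(corpus)
print(minimal_pair_accuracy(lm, pairs))  # 1.0
```

With a real LLM one would sum the model's own token log probabilities instead of the bigram scores. The comparison logic stays the same.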
