Rishabh Anand 🧬

@rishabh16_

Followers: 4,992

Following: 1,365

Media: 534

Statuses: 7,806

multiplying matrices @NUSingapore • geometric DL + generative modelling for proteins, RNA, and drug discovery @Cambridge_CL 🛠

Singapore

Joined June 2017

Pinned Tweet

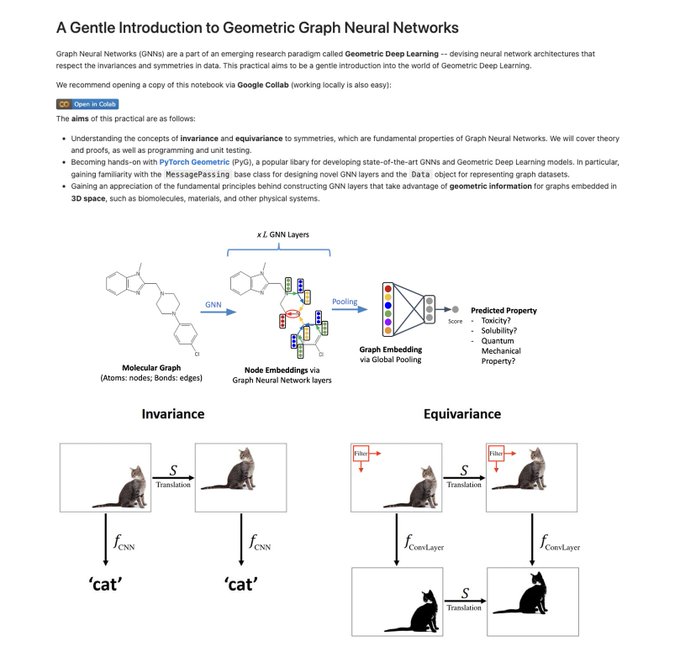

🚨 My Graph Deep Learning extended tutorial has a new home!!!

It's finally up on my personal website:

Do RT, share, and tag your GDL buddies if you find it useful 🙌🏻🙌🏻

10

303

1K

🚨NEW WEEKEND READING🚨

I'm publishing "Graph Neural Networks for Novice Math Fanatics" – a primer on the math behind GNNs using colourful drawings & diagrams

(I hope this becomes the definitive guide to GDL for those hoping to enter the field!!!)

10

232

1K

My two contenders:

1) Why LayerNorm is so helpful in Attention and what it’s actually doing under the hood

2) Explaining the oversquashing phenomenon in GNNs

Really cool papers with superrr approachable math/theory 🙌🏻

6

79

592

Hey 👋🏻

So a lot of you reached out to learn how to train your own models on *ALL* 8 cores of a Colab TPU using @PyTorch XLA

Not many good resources out there, so I wrote a super easy 8-step tutorial on doing exactly this:

Share if useful :D

7

142

582

There’s a lot of math involved in current generative models. This is my favourite in-depth blog series about it all by @ludiXIVwinkler:

5

46

386

Happy Lunar NY 🎉

Just read @PetarV_93's "Everything is Connected". I spent most of 2022 studying Transformers for graphs and this paper intuitively connects most of what I've learned!

🔗:

Here's an executive summary if you haven't caught it yet!

1/9

7

65

367

Shampoo now has a good official Pytorch implementation by FAIR (MAIR??) 🎉

(cc @_arohan_ and friends)

0

35

206

Geometric DL is super hot right now. Want a front-row seat to what’s going on?

Then check out this repo NOW to learn about the many mechanisms that power the latest and greatest geometric models!!!

4

12

97

Hey everyone 👋🏻

Yet another interesting paper ⬇️😲

Here's my unofficial PyTorch implementation of the Involution operator introduced in the paper:

(Of course, it's a pip-able wrapper in true @lucidrains fashion!)

Note: TF wrapper coming veryyyy soon 🏎

1

22

95

Super lit article by @hardmaru and team from Brain. It involves using more focused (attentive) RL agents. Results include lighter and smarter models in pixel-based envs

Recommend the read :O

1

17

90

🚨NEW PROJECT🚨

I built a PyTorch wrapper (<10 LOC) of "TokenLearner" by @ryoo_michael, @m__dehghani, & others from @GoogleAI.

You can plug-and-play with ViT or any Transformer model!!

Check it out:

✨Install with pip✨

pip install tokenlearner-pytorch

3

26

83

Yo 👋🏻

Neural Additive Models (NAM) by @agarwl_ et al. was one of my fav papers of 2020 and I've been meaning to implement it for a while!

My pipable NAM PyTorch wrapper:

Paper:

You can install it like so:

pip install nam-pytorch

0

12

80

Yo!

I'm very close to releasing "Long Short-Term Memories", a weekly podcast series where the hosts are 2 conversational ML agents talking to each other about random stuff powered by @huggingface DialoGPT models 😱🙌🏻

Super excited and can't wait to show it to y'all 🎉

3

6

77

I'm in the UK!! 🇬🇧

Excited to join @pl219_Cambridge's lab at Cambridge as a visiting research student for the next one year! I'll be working on geometric deep learning and protein dynamics alongside @chaitjo and others

If you're here / London, I'd love to chat over ☕️ :D

8

1

67

Wew some good news 🥳

Super excited to tell y'all I'm now a writer for @gradientpub, a leading AI/ML research magazine from @StanfordAILab 🌲

I'll be writing in-depth articles about interesting research from recent papers, mainly in the RL area

Can't wait to get started!

1

3

66

Ever since GeoLDM dropped, I've started believing that training gen models on stuff (esp molecules) in latent space makes so much more sense than directly on its constituent features/quantities like coords, atom IDs, etc

Really neat results!! 👇🏻

Excited to share that our paper, A Latent Diffusion Model for Protein Structure Generation has been accepted by LoG 2023 @LogConference!

Our LatentDiff achieves ~88× faster speed than FrameDiff and is ~247× faster than RFdiffusion, and the performance gap is small.

3

20

178

1

13

60

Read the paper in greater detail and I'm fascinated by how (relatively) simple the underlying GNN architecture is for a *generative model*.

The community seems to love e3nn-style GNNs for generative setups coz of the extra expressiveness.

Surprised this still works so well :O

1/n: We are excited to share that our paper on Chroma, a general purpose diffusion model for proteins, is out today in @Nature!

A couple of my favorite highlights in the 🧵below 👇

15

317

1K

1

5

59

Meet @drfeifei ✅

Super inspiring talk about the progress in vision systems/benchmarks and how it drives better understanding of the real world. Thank you for visiting NUS!

2

0

55

Hey 👋🏻

Here's my PyTorch wrapper around the Attention Free Transformer by @zhaisf, @nitishsr, et al. that aims to linearly approximate the expensive dot product operation 🏎

You can even pip install the "AFT-Full" Attention layer:

pip install aft-pytorch

4

18

54

I’ve deployed 3 websites on @Netlify just this week for school projects, and there hasn’t been a single moment where I’ve felt mentally drained

They really out here solving the webapp deployment crisis ✨✨✨

Couldn’t be more grateful for it existing omg 😭

4

4

54

Combining DDPMs with Transformers has been done in other areas too!! Really cool work by @Kevin_E_Wu @KevinKaichuang @avapamini et al. combines the two to generate plausible protein structures. It diffuses over dihedral & bond angles

Paper:

2

9

45

Super cool tutorial and hands-on activities on GNN expressiveness by @ffabffrasca @beabevi_ and @HaggaiMaron at @LogConference!!

1

8

45

Wew GNNs have finally entered HF territory :D 🙌🏻

🔔 New modality on @huggingface's hub: Graphs! 🎇

If you want to experiment, we already have 25 datasets and a model... and we're looking forward to seeing what else the community will add!

Ping me if you need a hand to upload your artifacts 🤗

5

63

326

0

2

41

@deliprao @jonasgeiping @tomgoldsteincs

While Cramming was a good read, this one is an equally good paper with similar lessons (minus the scaling laws stuff):

Learned quite a bit when I was playing around with a few NLP projects early this year

2

6

42

✨✨OMGOMGOMGOMG @ykilcher covered my graph augmentation library in his ML news episode!!!

🗃Library repo:

📹 Youtube video:

Means so much!! I can finally rest 😩🙌🏻🤝🤘🏻

3

5

41

🚨🚨Some news:

@chaitjo and I are pleased to announce our work, "Recent Advances in Deep Learning for Routing Problems", has been accepted to the @iclr_conf Blog Post Track '22 🥳

We thank the reviewers and readers for their invaluable feedback!

🚨 New blogpost alert, co-authored with @rishabh16_:

"Recent Advances in Deep Learning for Routing Problems"

Read on if you are interested in Graph Neural Networks, Combinatorial Optimization, and exciting applications in their intersection! 👇

2

26

155

5

4

38

Check out this really cool library by @tyleryep1 that summarises your PyTorch models in a really nice way 😲

0

8

36

Don't think I got the memo but I have a strong feeling @DynamicWebPaige was behind this and I'm alllll for it :DDD

1

3

37

@thegautamkamath

I made a 1-hour "backprop bootcamp" video about it for the class I was teaching this semester and it received some great reception from the cohort (mostly Year 1/2 undergrads)!

2

2

35

🔥🎉 It’s an honour to be a recipient of the Gradient Prize 2022! It was super fun writing this extended piece on self-driving car policy around the world and its implications.

Appreciate the editorial team’s deliberation!

Check it out here 👇🏻👇🏻

@mmbronstein

Our first runner up is @rishabh16_ on self-driving cars. Our judges commented that "engaging with Disengagement elaborates on many of the policy issues that make it difficult to evaluate these systems on real roads."

1

0

11

4

4

36

Yo wot literally everyone I know from Canada on Twitter is apparently working at @CohereAI now lol

We love to see it 🙌🏻🙌🏻👏🏻👏🏻

2

3

36