Robert Long

@rgblong

Followers

6,788

Following

987

Media

2,486

Statuses

15,194

not saying today's ML systems are sentient, but this is not a reliable argument form.

once you realize that neural activity is a series of ion channels opening and closing, the question "are humans sentient" becomes fairly easy

43

120

1K

very fun genre

here is a capybara that’s getting more and more spiritually enlightened

8

50

507

1/ Could AI systems be conscious any time soon?

@patrickbutlin and I worked with leading voices in neuroscience, AI, and philosophy to bring scientific rigor to this topic.

Our new report aims to provide a comprehensive resource and program for future research 🧵

32

104

382

Here's @davidchalmers42 trying to discuss the hard problem with Tom Bombadil, with limited success.

12

16

197

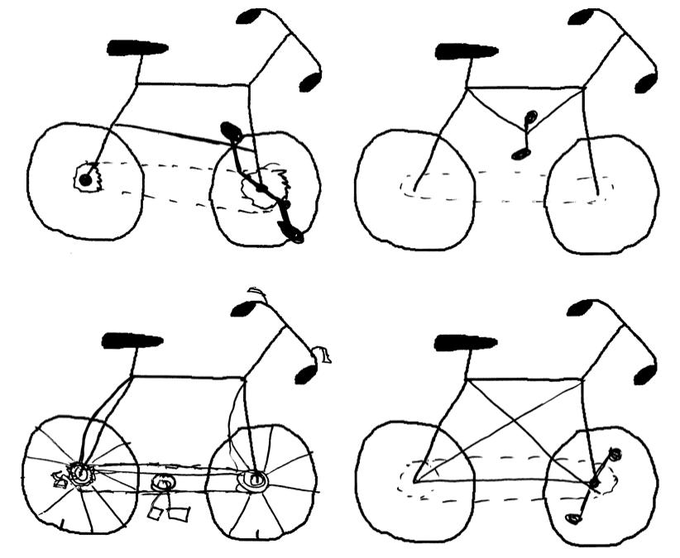

It depends on the domain. I asked DALL-E to draw a diagram of WWII airplanes highlighting where they need extra armor, and it did a great job

5

12

173

When I first became vaguely aware of the jhanas from randomly coming across @nickcammarata tweets, I figured they couldn’t possibly be a real thing.

But no, they seem like an incredibly well attested phenomenon and, as many have pointed out, wildly understudied

4

3

121

@nabeelqu

early covid had Islam vibes too, I still think about this tweet

People are

-washing hands several times a day

-social distancing

-using bidets to replace toilet paper

-getting 0 interest rates

-praying more

-calling on their elders

-covering their body, head to toe, to prevent exposure

Welcome to Islam my sisters & brothers!

#coronavirus

6

17

151

3

6

114

A thread of my reactions to @davidchalmers42 recent talk “Are Large Language Models Sentient?”

tl;dr the talk hit upon all of the key issues; but I have quibbles with how the evidence was organized, and have a somewhat different way of approaching the question 🧵

5

19

113

the Compliment Deficit:

"There is this enormous compliment deficit in the world....Almost everybody's friends know a whole lot of really good things about them, that the person doesn't know about themselves." - @michael_nielsen

3

15

103

I have an article in the latest issue of @asteriskmgzn, about lessons from the history of animal cognition for how we talk and think about AI systems today

6

24

93

when Gimli says "He was twitching because he's got my axe embedded in his nervous system" how does he know what a nervous system is

did Gimli go to 20th century medical school?

10

1

92

Thread of key quotes from a pair of 2015 posts by Sam Altman which outline his views on superhuman AI and regulation

I posted in part because I'm seeing a lot of speculation about Altman's views and motives which are wildly implausible, given this history

4

14

87

I'd totally wear an Our World In Data t-shirt with this Venn diagram on it

@MaxCRoser

The example Max uses in the post is child mortality. But it is true of almost all of the problems we cover on @OurWorldInData.

Communicating that all three of these statements are true at the same time is one of the most challenging parts of our job.

5

47

178

4

8

83

@ben_j_todd

I'm hearing reports that one third of the ToddGPT team has resigned, say they plan to announce their own more safety-focused project later today

0

1

71