Karandeep Singh

@kdpsinghlab

Followers: 10,278 | Following: 2,664 | Media: 3,021 | Statuses: 18,596

Jacobs Chancellor’s Endowed Chair @UCSanDiego @InnovationUCSDH. Chief Health AI Officer @UCSDHealth. Creator of @Tidierjl #JuliaLang. #GoBlue. Views own.

Joined September 2009

Pinned Tweet

I have been an advocate of better transparency in AI reporting, both for research and for the broader public.

Really appreciated the opportunity to contribute to the new TRIPOD+AI guidelines to address the “how”:

NEW PAPER out today in @BMJ_latest

TRIPOD+AI: reporting recommendations for studies developing or validating prediction models for use in healthcare that use #machinelearning methods

#ArtificialIntelligence #AIstandards #OpenAccess

Please share 🙏

My lab is moving to #JuliaLang, and I’ll be putting together some R => Julia tips for our lab and others who are interested.

Here are a few starter facts. Feel free to tag along!

Julia draws inspiration from a number of languages, but the influence of R on Julia is clear.

Some professional news: during my time on the faculty at @UMich, I have learned from great students, colleagues, and mentors.

Next year, I’ll be joining @UCSanDiego as the Joan and Irwin Jacobs Chancellor’s Endowed Chair of Digital Health Innovation and Chief AI Officer for @UCSDHealth.

The DeepMind team (now “Google Health”) developed a model to “continuously predict” AKI within a 48-hour window with an AUC of 0.92 in a VA population, published in @nature.

Did DeepMind do the impossible? What can we learn from this? A step-by-step guide.

Thank you to the folks who have shared or commented on our paper. I know the paper is being used by some to dunk on Epic. Rather than piling on, I want to provide a clear-eyed view of what we found, what it means, and what I would suggest to Epic (& other model devs) going forward.

Study suggests that the Epic Sepsis Model poorly predicts #sepsis; its widespread adoption despite poor performance raises fundamental concerns about sepsis management on a national level.

I’m excited to join @UCSanDiego @UCSDHealth @InnovationUCSDH!

I pledge to work with colleagues and patients to improve the experience of receiving and delivering care, and to help advance the science of using AI towards better, faster, and more accessible care.

Me, on Google: How do I do this R thing in Julia?

Google: Not so fast. First, you’re going to need to scroll past 10 pages of stuff by @juliasilge.

In case you coincidentally happen to be looking for alternative ways to learn #rstats and tidyverse because your current learning platform is busy ... *checks notes* ... suing your interactive development environment, may I suggest this series of 73 YouTube lecture videos:

Our @umichDLHS #LHS610 #rstats Exploratory Data Analysis course lectures have been online-only all semester.

Want to follow along? Here’s what we’ve covered thus far...

- R + tidyverse
- dplyr, tidyr, ggplot
- shiny docs
- tidymodels
+ more...

@OscarBaruffa

As data scientists get older, they slowly, molecule by molecule, begin to merge with all the datasets they’ve ever worked with until one day, they leave behind the mere shadow of an inner join.

👋 🧹📊 TidierPlots.jl for #JuliaLang

A 100% Julia implementation of #rstats ggplot2. Powered by AlgebraOfGraphics.jl, Makie.jl, and Julia’s meta-programming capabilities, TidierPlots.jl is an R user’s love letter to data visualization in Julia.

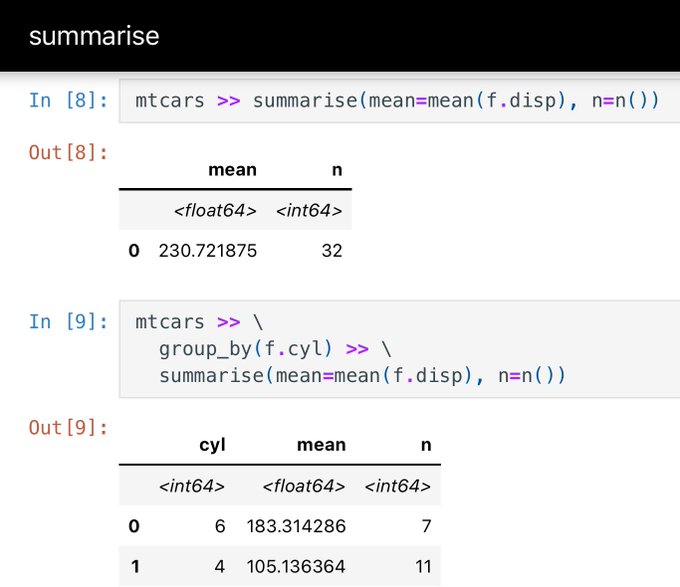

Introducing Tidier.jl for #JuliaLang:

A 100% Julia implementation of the #rstats {tidyverse}. Powered by the DataFrames.jl package and Julia’s meta-programming capabilities.

Still a work in progress.

Here's a quick tour of the highlights.

Students: We are happy to move to Julia, but can you put together some resources for us to learn the tidyverse equivalent in Julia?

Me: Give me a month to prepare some “resources.”

***frantically re-creating tidyverse in Julia***

@matloff

What can I say. Clinicians are just Bayesians with really strong priors about their expected p-values.

Why does a proprietary sepsis model “work” at some hospitals but not others?

Is it generalizability? Measurement? Intervention? Patient population? Margin for improvement? Resource constraints?

Working with a team led by @_plyons, we looked at a 9-hospital network.

A story.

Factors Associated With Variability in the Performance of a Proprietary Sepsis Prediction Model Across 9 Networked Hospitals in the US (JAMA Internal Medicine)

@WashUi2db @OHSUPulmCCM @umichDLHS

Tidier.jl 1.0.0 is now on the #JuliaLang registry.

It’s 𝘧𝘪𝘯𝘢𝘭𝘭𝘺 a meta-package. It re-exports TidierData.jl, TidierPlots.jl, TidierCats.jl, TidierDates.jl, and TidierStrings.jl.

I’ll be giving a talk on implementing predictive models at @HDAA_Official on Oct 23 in Ann Arbor. Here’s the Twitter version.

Model developers have been taught to carefully think thru development/validation/calibration. This talk is not about that. It’s about what comes after...

.@elonmusk

Hospital recruitment

“An MD is definitely not required. All that matters is a deep understanding of biology, biochemistry, pharmacology, physiology, pathophysiology, genetics, social dynamics, life and death, and an ability to implement this in clinical practice.”

8 months later and still haven’t turned back.

We still have existing projects in R and Python, but the syntax, tooling, and developer experience in Julia are so smooth that it’s hard to go back.

Language interoperability is great, and it’s nice to have a fast glue language.
