Jacob Schreiber

@jmschreiber91

Followers: 4,897

Following: 1,126

Media: 435

Statuses: 4,875

Visiting Scientist @impvienna, incoming prof @UMassGCB. Previously, @StanfordMed, @uwcse. Studying genomics, machine learning, and fruit.

Vienna, Austria

Joined March 2017


@TheBcellArtist

They probably go places that will pay them appropriately for their skills. Paying post-docs under $70k is common but obscene in most fields, given how critical they are.


Thrilled to announce that I'll be joining the incredible researchers at @IMPvienna for a year as a visiting scientist and then joining @UMassChan as an assistant professor in Genomics+CompBio in 2025!

At both places, I'll be continuing my work on deep learning + genomics.


@CT_Bergstrom

This entire time I knew in the back of my mind that you were a person but, because I've only seen you on Twitter, I just assumed you were a benevolent bird sharing your vast knowledge of biology with us. Illusion shattered by the picture in this article. :(


@naomirwolf @BillGates

As a researcher at U of Washington, I remember when @BillGates walked into my lab and said "Stop working on this, we must work on vaccine microchips!" and we dropped all our grant-funded work immediately. We would've gotten away with it too, if you didn't point it out on Twitter.


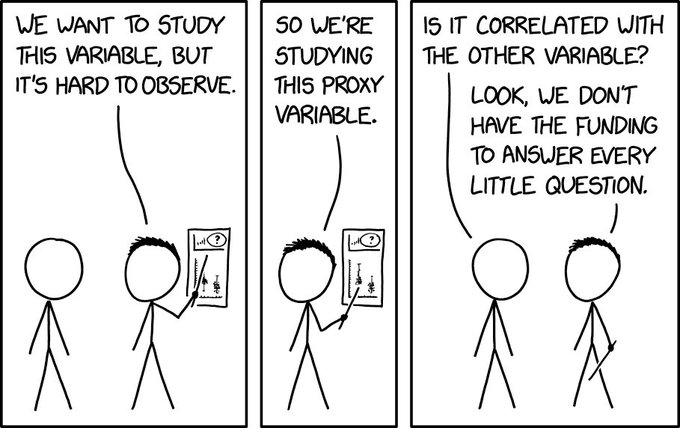

CS/ML people venturing into biology frequently assume that the data they're given is clean and that all the upstream processing steps have been figured out. This is absolutely not the case.

I would encourage CS/ML people to really look into the gritty details like this.


Finally out in @NatureRevGenet: Navigating the pitfalls of applying machine learning in genomics! w/ @seawhalen et al.

Our key point: you MUST evaluate your models in the same setting you want them to be used or they might not actually work in practice.

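One concrete reading of that point, assuming the goal is to predict activity in cell types the model has never seen: hold out whole cell types rather than random (cell type, locus) entries, so nothing from a test cell type leaks into training. A minimal numpy sketch; the function name and the cell-type labels are hypothetical.

```python
import numpy as np

def split_by_cell_type(cell_types, test_cell_types):
    """Return boolean (train, test) masks that keep whole cell types together.

    If the model will be applied to cell types it has never seen, every
    measurement from a test cell type must be excluded from training.
    """
    cell_types = np.asarray(cell_types)
    test_mask = np.isin(cell_types, list(test_cell_types))
    return ~test_mask, test_mask

# Hypothetical example: one row per (cell type, locus) measurement.
cell_types = np.array(["K562", "K562", "HepG2", "HepG2", "GM12878", "GM12878"])
train_mask, test_mask = split_by_cell_type(cell_types, {"GM12878"})
print(train_mask.tolist())  # [True, True, True, True, False, False]
```

A random split over rows would usually put some GM12878 measurements in training, which would make performance look better than it would be on a truly new cell type.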

A bit ago, I got a grant from @NumFOCUS to rewrite pomegranate from the ground up using a PyTorch backend. The goal was to increase speed, decrease code size, and lower the barrier to writing custom components or integrating with PyTorch. The results have been incredible so far.


Found out last night that @NumFOCUS funded my proposal to rewrite #pomegranate from the ground up using @PyTorch as the backend! Need to train massive HMMs using multiple GPUs, or want a mixture of negative binomials as part of your neural network? Watch this space!


pomegranate v1.0.0 has been released! This major release is a complete rewrite using @PyTorch to replace the Cython backend.

Same great probabilistic models, now WAY faster, GPU support, fewer installation issues, and easier to extend.

Check it out! 1/


This fiasco is exactly why I read ML papers in genomics with such a critical eye, and try to write about pitfalls as much as I can.

Genomics data is COMPLICATED and ML methods are eager to please. It's easy to mess up, and when you do, you'll appear to get good performance.


Computational biology is becoming the same thing. So many papers and talks I see recently are benchmark-driven, not science-driven.

Uncovering something scientifically interesting is seen as an optional final step if you want to get into a top journal, not a key motivation.


At the beginning of 2018 at an @ENCODE_NIH meeting, the idea for the ENCODE Imputation Challenge was born: an open contest to predict genome-wide genomics experiments given fixed train/test sets and encourage development of large-scale imputation methods.

warning: drama 🧵

1/


Happy to share new work on a pitfall you can fall into if you train ML models to predict across cell types. TL;DR, always compare your predictions to the per-locus average activity, it's a hard baseline to beat!

@uwescience @uwgenome @uwcse @EncodeDCC

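A minimal sketch of that baseline, assuming signal for one assay is stored as a (training cell types × loci) array: predict a held-out cell type by averaging each locus across the training cell types, and only trust a model that beats this. The toy numbers, the stand-in model output, and the helper names are made up for illustration.

```python
import numpy as np

def per_locus_average_baseline(train_tracks):
    """Predict a held-out cell type as the mean signal at each locus.

    train_tracks: array of shape (n_train_cell_types, n_loci) holding one
    assay's signal in each training cell type.
    """
    return np.asarray(train_tracks, dtype=float).mean(axis=0)

def mse(y_true, y_pred):
    """Mean squared error between two 1-D arrays."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))

# Toy values: 3 training cell types x 4 loci, plus one held-out cell type.
train = np.array([[0.0, 5.0, 1.0, 8.0],
                  [1.0, 7.0, 0.0, 9.0],
                  [0.5, 6.0, 0.5, 7.0]])
test = np.array([0.2, 6.5, 0.4, 8.5])
model_pred = np.array([0.0, 4.0, 1.0, 6.0])  # stand-in for some model's output

baseline = per_locus_average_baseline(train)
print(baseline.tolist())                             # [0.5, 6.0, 0.5, 8.0]
print(mse(test, model_pred) < mse(test, baseline))   # False: the model loses
```

The baseline is hard to beat because many loci behave similarly across cell types, so simply knowing "this locus is usually active" already explains much of the signal.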

After several months of work, I'm excited to announce the first release of torchegranate, my @PyTorch rewrite of pomegranate!

torchegranate is faster, more readable, better tested, and easy to extend.

Try it out with `pip install torchegranate`! 1/


after a wild 6 years in grad school, tomorrow i get to find out what life is like after defense. i will report back.

@AcademicChatter


@michaelhoffman

No one will use your computational method outside your group, unless it's for basic data processing, so you'd better be prepared to do all the legwork of applying it all the way to scientific discovery, because no one else will.


Last week was my last at @uwgenome. Today, I start a post-doc with @anshulkundaje at @Stanford! When I took the position I imagined there would be more pomp and circumstance than logging out of one server and logging into another...


Sometimes I feel like using @numba_jit is cheating. I was concerned that an analysis was taking too long, at ~40 minutes per file, so I just slightly rewrote and jitted the function and now it takes 7 seconds.

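The function from that analysis isn't shown, so here is a hypothetical stand-in illustrating the general pattern: write the hot loop as plain Python over numpy arrays and decorate it with numba's `njit`. The fallback import is an assumption added so the sketch still runs without numba installed; in that case the function simply stays un-jitted (and slow).

```python
import numpy as np

try:
    from numba import njit  # compiles the decorated function on first call
except ImportError:
    # Assumed fallback so the sketch runs without numba: leave the function as-is.
    def njit(func):
        return func

@njit
def window_sums(signal, width):
    """Sum of every length-`width` window (assumes 1 <= width <= len(signal))."""
    n = signal.shape[0] - width + 1
    out = np.zeros(n)
    total = 0.0
    for i in range(width):          # first window
        total += signal[i]
    out[0] = total
    for i in range(1, n):           # slide: add the new value, drop the old one
        total += signal[i + width - 1] - signal[i - 1]
        out[i] = total
    return out

signal = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
print(window_sums(signal, 2).tolist())  # [3.0, 5.0, 7.0, 9.0]
```

Explicit loops like this are slow in the interpreter but compile to tight machine code under numba, which is where large speedups of the kind described above tend to come from.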

Just released our preprint on apricot, a Python package implementing submodular selection for machine learning! It efficiently finds subsets of data that are representative of the whole space. Check it out!

@uwescience @uwcse

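apricot implements several submodular objectives, and facility location is a standard one. The sketch below is NOT apricot's API: it is a from-scratch numpy version of greedy facility-location selection, assuming similarity is a shifted negative squared Euclidean distance, meant only to show what "a subset representative of the whole space" means concretely.

```python
import numpy as np

def facility_location_greedy(X, k):
    """Greedily pick k row indices of X maximizing facility location.

    f(S) = sum_i max_{j in S} sim(i, j). Here sim is the negative squared
    Euclidean distance shifted to be non-negative (an assumption; any
    non-negative similarity works).
    """
    X = np.asarray(X, dtype=float)
    sq_dists = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    sim = sq_dists.max() - sq_dists
    best = np.zeros(X.shape[0])  # how well each point is covered by the current subset
    selected = []
    for _ in range(k):
        # Marginal gain of adding candidate j: sum_i max(0, sim[i, j] - best[i]).
        gains = np.maximum(sim - best[:, None], 0.0).sum(axis=0)
        if selected:
            gains[selected] = -np.inf  # never select the same point twice
        j = int(np.argmax(gains))
        selected.append(j)
        best = np.maximum(best, sim[:, j])
    return selected

# Two well-separated toy clusters of three points each.
X = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1],
              [10.0, 10.0], [10.1, 10.0], [10.0, 10.1]])
print(facility_location_greedy(X, 2))
```

On these two clusters the greedy pass picks one point from each: once one cluster is covered, points in the other cluster offer by far the largest marginal gain. Because facility location is monotone submodular, this simple greedy procedure comes with the classic (1 - 1/e) approximation guarantee.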

Finally, after ~6 years of work, this is published!

Thanks to all my co-authors and the participants of the challenge for seeing this through.



Literally everyone studying gene regulation using transcription instead of protein abundance.

@lkpino


In this episode of @bioinfochat, I interview @lkpino about the limits of mass spec measurements and how proteomic measurements can be integrated with genomic measurements. Every time I talk to her, I learn a ton!


I think it says something about my experiences in academia (and I doubt I'm alone) when I'm shocked to get reviews back that, although they will require a lot of work to address, are generally supportive and provide constructive feedback.

@AcademicChatter


After delaying my commencement by two years due to the plague that ravages this land, I'm finally a real doctor! With @thabangh


It's always fun to fail to make basic connections about your data as a computational person.

me: so, this sample is labeled "healthy" but are we sure the person is healthy?

@anshulkundaje: well, it's a heart sample, so they're dead


My @uwcse @uwescience thesis is now online! Check it out if you want to learn about my work with Avocado, imputing >30k genomics experiments, and ordering future experiments. I also wrote a 2-page tl;dr overview:


Just found out about `.numpy(force=True)` for @PyTorch tensors and it's life-changing. Never touching `.detach()` again.

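For anyone who hasn't seen it: in recent PyTorch releases, `Tensor.numpy(force=True)` detaches the tensor from the autograd graph and moves it to CPU memory before converting, which is what the usual `.detach().cpu().numpy()` chain does by hand. A small example with a toy tensor:

```python
import torch

# A tensor that is part of an autograd graph.
x = torch.ones(3, requires_grad=True)
y = x * 2

# Calling y.numpy() here raises an error because y requires grad.
# The classic workaround spells out each step:
arr_old = y.detach().cpu().numpy()
# force=True does the detach (and the copy to CPU, if needed) for you.
arr_new = y.numpy(force=True)

print(arr_new)  # [2. 2. 2.]
```

Note that `force=True` may copy data, so unlike the default `.numpy()` the result is not guaranteed to share memory with the tensor.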

In our latest episode of @bioinfochat, we talk with @Avsecz about research in academia vs. industry, Enformer, and deep learning libraries! Great to hear about the work directly from the source. Hope other people enjoy our conversation!


Super excited to be joining the amazing team at @JOSS_TheOJ as a topic editor for bioinformatics and machine learning. If you wrote a great software tool that supported amazing research, write it up and send it my way! Good software deserves more recognition in research.


@timrpeterson

The biggest problem I've seen in biotech is people who don't understand their data and lose years just learning bias. I'm not sure that getting rid of people with domain knowledge will solve this.


Glad to see that the @numpy review article is out! The package has had a massive effect on the adoption of Python and the development of the entire ecosystem.


@nomad421 @MicrobiomDigest

That's what I tell my advisor when he asks me to get a second paper out of my postdoc.


pomegranate v0.9.0 released! The main focus was on adding missing value support for model fitting / structure learning / inference across all models. Read more about it here:

@uwescience @uwcse @NumFOCUS


On Thursday (4:40am PST, ugh) I'm giving a talk at #ISMBEECCB2021 #MLCSB2021 on five pitfalls to avoid when applying ML to genomics data! Although conceptually simple, they can be extremely difficult to identify in practice if you don't know what to look for. 1/


After 6 years of challenges, setbacks, successes, and corgi viewings, I've scheduled my thesis defense. It always seemed so far away until suddenly it was here. I know that I wouldn't have made it without a support network.

@AcademicChatter #AcademicChatter


Once again I accidentally fed a similarity matrix to UMAP instead of a distance matrix. @leland_mcinnes implemented the best warnings for when this happens: your plot looks like a creature whipping you for being wrong.

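The fix is to convert similarities into distances before handing the matrix to UMAP as a precomputed metric (in umap-learn, `UMAP(metric="precomputed")`). A minimal numpy sketch for cosine similarity, where 1 - similarity is a common choice of distance; the helper names and the toy points are hypothetical.

```python
import numpy as np

def cosine_similarity_matrix(X):
    """Pairwise cosine similarity between the rows of X."""
    X = np.asarray(X, dtype=float)
    unit = X / np.linalg.norm(X, axis=1, keepdims=True)
    return unit @ unit.T

def similarity_to_distance(sim):
    """Turn cosine similarities in [-1, 1] into distances in [0, 2]."""
    dist = 1.0 - np.asarray(sim, dtype=float)
    np.fill_diagonal(dist, 0.0)      # each point is at distance 0 from itself
    return np.clip(dist, 0.0, None)  # clear tiny negative values from rounding

X = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
dist = similarity_to_distance(cosine_similarity_matrix(X))
print(np.round(dist, 3))
```

Passing `sim` itself would tell UMAP that the most similar points are the farthest apart, which inverts the neighborhood graph and produces exactly the kind of bizarre embedding described above.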

Proud to finally release Avocado! Avocado is a deep tensor factorization model that imputes epigenomic signal better than previous work, and its latent factors yield better ML models on genomics tasks than the data it was trained on.

@uwescience @uwcse


@jxnlco

The GZIP paper is going to cause new researchers to independently rediscover kernel methods

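A minimal sketch of the idea, assuming "the GZIP paper" refers to the gzip plus k-nearest-neighbor text classifier: the normalized compression distance (NCD) between two strings is computed from gzip-compressed lengths and fed to a nearest-neighbor vote. NCD plays the role of a hand-built similarity between inputs, which is the same role a kernel plays. The helper names and toy texts are hypothetical.

```python
import gzip
from collections import Counter

def compressed_len(text: str) -> int:
    """Length in bytes of the gzip-compressed UTF-8 encoding of text."""
    return len(gzip.compress(text.encode("utf-8")))

def ncd(a: str, b: str) -> float:
    """Normalized compression distance: small when a and b share structure."""
    ca, cb = compressed_len(a), compressed_len(b)
    cab = compressed_len(a + " " + b)
    return (cab - min(ca, cb)) / max(ca, cb)

def knn_predict(query: str, train_texts: list[str], train_labels: list[str], k: int = 1) -> str:
    """Majority label among the k training texts closest to query under NCD."""
    distances = [ncd(query, t) for t in train_texts]
    nearest = sorted(range(len(train_texts)), key=lambda i: distances[i])[:k]
    return Counter(train_labels[i] for i in nearest).most_common(1)[0][0]

train_texts = ["the cat sat on the mat " * 10, "0123456789 " * 20]
train_labels = ["animals", "digits"]
print(knn_predict("the cat sat on the mat and the cat slept " * 5, train_texts, train_labels))
```

Concatenating two texts that share substrings compresses almost as well as either text alone, so their NCD is small; unrelated texts gain little from being compressed together.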

Regretting coming to #RECOMB2022. Most people aren't wearing masks, coughing and sneezing are near-constant in the audience, and someone I know has already gotten COVID. Who would feel safe sitting in the audience of this? Talks are good, though.


The paper just dropped on @biorxivpreprint. Give it a read, and let us know what you think!

Our main point: doing genomics work correctly is HARD. Please don't just use data you find on the internet without knowing how it was processed. 18/


My @SciPyConf talk, "apricot: Submodular optimization for machine learning," is online! Learn about a principled way to reduce massive data sets down to representative subsets that are widely useful. Also, #GossipGirl.

Thanks @uwescience for support!


Excited and proud to receive the @acm_bcb 2020 best paper award for my work on making zero-shot imputations across species! Like most work, this would not have been possible without my co-authors. Here is a thread summarizing the paper:


Roman and I just released a new @bioinfochat episode! This time, we interview @drklly about @calico, Basenji, and how machine learning models can be used to help us understand the functional consequences of genetic variation.


@rasbt

Okay okay, I'll turn my GTX 1080 Ti off and stop training GPT-5 if that's what the nation wants.
