Johannes Stelzer

@j_stelzer

Followers

1,549

Following

668

Media

47

Statuses

272

Pioneering closed-loop gen AI | Immersive AI Systems at the Champalimaud Center for the Unknown @Neuro_CF | Open-source real-time AI @lunarringart

Portugal

Joined January 2023

Don't wanna be here?

Send us removal request.

Explore trending content on Musk Viewer

and more

• 1740239 Tweets

LINGORM PANTENE TRIP

• 639185 Tweets

Bruno Mars

• 569552 Tweets

#ROSÉ_BRUNO_APT

• 305346 Tweets

APT OUT NOW

• 200896 Tweets

Al Smith

• 155025 Tweets

Corinthians

• 132460 Tweets

Flamengo

• 132169 Tweets

Saints

• 93449 Tweets

Yankees

• 91482 Tweets

Schumer

• 75828 Tweets

CONGRATS CL IG1M400K

• 75470 Tweets

#NuNewJapanDebut

• 47173 Tweets

Broncos

• 45542 Tweets

Allan

• 36255 Tweets

NUNEW DEBUT IN JAPAN

• 31388 Tweets

San Marcos

• 30279 Tweets

Lakers

• 28865 Tweets

SB19 PIAYA NG MAYKAPAL

• 26629 Tweets

HOME IS WITH ENHYPEN

• 23418 Tweets

対象作品

• 23354 Tweets

超電ブレイカー

• 20699 Tweets

Dalton Knecht

• 17004 Tweets

Mookie

• 16564 Tweets

Bo Nix

• 16075 Tweets

Hayırlı Cumalar

• 15564 Tweets

Rattler

• 14304 Tweets

ARCARM PRESS TOUR

• 13544 Tweets

ミミッキュ

• 13050 Tweets

集英社秋マンガチャ開催

• 12644 Tweets

Dennis Allen

• 12012 Tweets

フラワー

• 10552 Tweets

Last Seen Profiles

Introducing Latent Blending: a new

#stablediffusion

method for generating incredibly smooth transition videos between two prompts within seconds.

6

31

150

#latentblending

is now available for everyone on

@huggingface

Spaces, right from your browser: . Create seamless video transitions for your text prompts with buttery smoothness.

Latent Blending

@Gradio

demo:

a stable diffusion method for generating incredibly smooth transition videos between two prompts within seconds by

@j_stelzer

1

10

55

2

6

39

Great example how structures can be preserved across latents. Will be further enhanced with ControlNet Integration 🔜

😍The Latent Blending

@Gradio

demo by

@j_stelzer

is out on

@huggingface

Spaces and is going brrr with community grant GPUs.

🚀Watch this awesome animation done by blending images at two ends.

Explore the Demo on🤗and Unleash your creativity now!! -

1

3

16

1

4

28

@dr_cintas

what they seem to have missed though: how often did the AI/physicians get it completely wrong?

3

1

27

looks like the best text2vid currently. wondering if bytedance releases code/model, no mention at

0

1

22

How it works: latent blending splits and remixes latent representations using spherical linear interpolations from

#stablediffusion

. It is based on SD2.1, and supports 512, 768, inpainting and x4 upscaling.

2

2

24

175M generated images and 94 hits - that is a huge number of statistical comparisons. If one tries often enough, anything can happen.

0

1

15

Excited to merge high-performance motion tracking with real-time diffusion for music performances! Together with the incredible Ricardo Martins and Nico Espinoza at the Center for the Unknown in Lisbon,

@Neuro_CF

. Stay tuned for more from our immersive AI space.

@lunarringart

3

2

14

Why it's cool: Latent blending allows you to create almost imperceptible smooth transitions between two different prompts/images. Unlike the awesome

#deforum

, latent blending renders within seconds, not minutes, making it much cheaper and faster to play with.

2

2

11

GANs vs Diffusion S03E05: Great Image quality, 0.13 seconds to synthesize 512px & friendly latent space. Too bad no model…

0

1

10

Wow, the EU AI act seems to be wild!

If this comes through it is the end of generative AI in Europe, at least in terms of development. Great news for VPN providers though

0

3

9

amazing idea with the landescapades. ui tool to create the transitions will be available via gradiocolab tomorrow

the first stablediffusion "optical illusion"?

these landscapes look like still images, but the 1st and last frame shows entirely different images

using

@j_stelzer

amazing latent blending code to traverse through the latent space

10

33

229

2

1

10

@MadMonkMani

absolutely! working on coupling this to real time audio generation, leveraging synesthesia & immersion

1

0

10

One of my 😻

@Gradio

features: Event Listeners. In a nutshell they allow you to dynamically change your UI in whatever way you like.

👋Gradio fans! Let's dive deeper into building

@Gradio

ML demos using✨Event Listeners✨ in Gradio Blocks!

🧱First things first,let's see how Blocks r structured. They contain Components tht r automatically added as they're created within `with gr.Blocks(). as demo:` clause

🧵👇

1

7

22

0

2

7

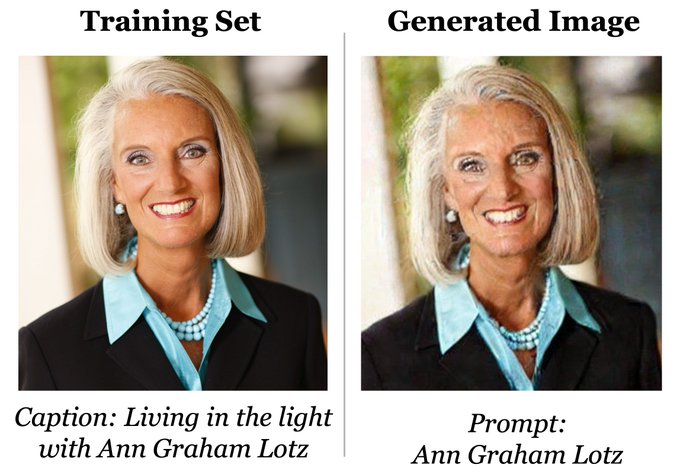

promising alternative to IP adapter for zero-shot face "finetuning". star their repo if you want to help accelerate the code/model release :)

🧵 [1/n] Read about InstantID today. It's a new approach to creating personalized and consistent images.

#AI

#InstantID

1

2

7

0

0

6

@giffmana

you can use a t test if your data doesn’t violate the assumptions: next step is to calculate t-scores between all pairs, and account for multiple corrections (e.g. FDR or bonforroni, dividing the p-vals by nmb tests). hope nr1 vs nr2 p<0.05 !

1

2

6

Internet Explorer: Agent who automatically crawls the gaps in your text-to-image training DB. very neat idea!

0

2

5

@imnotfady

haha great idea. love the part where you sampled the real Karens. What would happen if you put another Karen on the customer support end and let them talk?

3

0

5

Incredible way to make awesome personalized profile pictures:

In a nutshell, fine-tuning SDXL on a couple photos on using

@heyglif

.

Examples and how-to below!🧵

2

1

5

SD3 distilled - anyone working on this soon?

We are thrilled to unveil our first open-source project:⚡️ Flash Diffusion ⚡️ - a robust, versatile & efficient distillation method for diffusion models.

👉🏼

By

@heyjasperai

&

@clipdropapp

12

52

237

0

0

4

Control-GPT allows automatic spatial arrangement of prompts for txt->img, leveraging the visual wisdom of LLMs

0

1

3

‘under 15 seconds for 20 inference steps to generate a 512x512 image’ - all on a smartphone! amazing to see how much potential for acceleration there is

Generative AI is now running completely on an edge device. Learn how

@Qualcomm

#AI

Research deployed Stable Diffusion, a popular 1B+ parameter foundation model, on a

@Snapdragon

phone through full-stack AI optimization.

11

61

326

0

0

4

Ready for a sprinkle of magic?✨

arrives soon - your wand to conjure new forms of media with Generative AI! 🧙♂️🎨

a quick morning jam about ☕️

hey

@glifbot

, make me..

- "a weird comic strip featuring myself and coffee"

- "a fashion trend about coffee"

- "a medieval meme about coffee"

- "AI hypeboi thread about coffee"

3

3

33

0

0

4

at the cost of inference speed… furthermore the first diffusion pass is with 64x64px followed by two upscaling models. SD latent space has same dim and even one more channel.

Open source Imagen coming soon. Pixel-space model (less artifacts), better text conditioning, model produces more coherent results than SD with perfect text. 1024x1024 generations with no upscaler or clone-tool artifacts

#AIArt

#StableDiffusion2

/

#StableDiffusion

#DreamStudio

2

60

211

0

0

4

super excited to share the news!

glif is open for registration! glif is a fun new platform to stack together generative AI and build tomorrow's new media forms, without the need to code anything.

check it out here:

📢: I've teamed up with

@jamiew

& friends to build Glif - a fun new way for anyone to create, play & jam with "AI legos":

1. easily create AI micro-apps to generate images, stories, memes, comics..

2. let others play, run & remix your glifs

sign up:

12

30

149

0

0

2

ChatGPT code interpreter = game changer for programming

0

0

3

@elesidd

Nice work! And let me congratulate you - this is (to my knowledge) the first NFT made with

#latentblending

1

0

3

@eerac

it essentially chains together the latents and thus the resulting images. you can think of it as a constrained diffusion. img2img: the strength determines the injection depth. thus only shallow blends would be possible

0

0

3

F$%$ Google/MusicLM for their angst-and-$$$ driven outdated attitudes. Very much welcoming the quality and spirit of Moûsai:

0

0

2

@DigThatData

Generative AI is a core component for digital therapeutics. We are currently helping building such a centre in a perfect location, DM me if you want to know more

0

0

3

@Buntworthy

@StabilityAI

actually it is planned to be released in public, see

@pilkkupiste

@deepfloydai

Like SD original release, first research release (but more smooth than before) => feedback => public release as new architecture type

2

0

23

0

0

3

@eerac

the keyframes are made via standard prompts. img2img unfortunately won’t work (so well…) as we need the full latent trajectory

1

0

3

@mreflow

@angrypenguinPNG

@rileybrown_ai

@stokebuilder

@KaiberAI

this quality of smoothness only with prompts. images is holy grail & wip

3

0

3

@stokebuilder

@mreflow

@angrypenguinPNG

@rileybrown_ai

@KaiberAI

what i love about these times is that we can actually reach for them!

0

0

3

@Dan50412374

@ilumine_ai

Ops sorry I meant vanilla sdxl TURBO takes 260ms. How do you get the further speedup?

0

0

2

@_SilkeHahn

@rawxrawxraw

‘… widersprechen grundsätzlich den Prinzipien US-amerikanischer und europäischer Urheberrechte’ faktisch nicht korrekt - siehe fair-use Doktrin

1

0

2

@LovisCyance

@fabianstelzer

principally should work with all linear transforms and if running through upscaling it should be smooth as hell. nonlinear mb also good

1

0

2

try it yourself! gradio w colab backend here:

0

0

2

@giffmana

you basically generate the null distribution from the data via shuffling the labels. the resulting null distribution allows to estimate the likelihood of your real data. still have to do multiple testing correction though, e.g. with Benjamini Hochberg FDR

0

0

2

Mind-blowing: PanoHead, a 3D face GAN generating 360 degree full head views. So puzzling to see a face rotating and warping at the same time.

Come check out 2 of our projects on generative avatars at CVPR 2023:

1. PanoHead: Geometry-Aware 3D Full-Head Synthesis in 360° (, code available)

2. OmniAvatar: Geometry-Guided Controllable 3D Head Synthesis

#CVPR23

4

60

233

0

1

2

@JonasAndrulis

ironischerweise sind Dokumentation und Inventarisierung ja exzellente Usecases für AI 😵💫

0

0

2

@eerac

@fabianstelzer

with

@lunarringart

we showed it’s ancestors but we will show the VR version in Lisbon end of Januar

@Neuro_CF

0

0

2

@mreflow

@angrypenguinPNG

@rileybrown_ai

@stokebuilder

@KaiberAI

but it already works with sounds :)

0

0

2

@Guygies

@fabianstelzer

not really. unless we manage to run gradient through diffusion chain we dont have access to the full chain which is needed for this method to shine

0

0

2

@fabianstelzer

this is also holds for technical folks: llm-based coding can massively speedup development, for me this is easily a factor of 2-4x

0

0

1

UPDATE: create super smooth transitions with your own models! The updated

#latentblending

colab enables BYO-ckpt

Here's an example showing the Balloon-Art Model, transitioning from a (balloon-ized) cat to dog

0

0

2

Loving this brilliant exploit, which leverages "Neorosemantical Invertitis"

0

0

2

@Stephen_Parker

looks stunning! own images require a trajectory of latents and the fitting conditioning… I will experiment with backprop soon

1

0

2