Hao Liu

@haoliuhl

Followers: 4,434

Following: 162

Media: 98

Statuses: 268

machine learning, neural networks. @Berkeley_AI

Joined September 2018

Don't wanna be here?

Send us removal request.

Explore trending content on Musk Viewer

FEMA

• 1203388 Tweets

Liz Cheney

• 209584 Tweets

#LISAxMoonlitFloor

• 197221 Tweets

MOONLIT FLOOR OUT NOW

• 137932 Tweets

SCJN

• 128619 Tweets

The Boss

• 103770 Tweets

#GHGala5

• 100032 Tweets

Bruce

• 92338 Tweets

Mets

• 69981 Tweets

Happy Anniversary

• 59797 Tweets

Baker

• 51010 Tweets

Brewers

• 42600 Tweets

EL DESTELLO IS OUT

• 34794 Tweets

天使の日

• 33230 Tweets

Falcons

• 29013 Tweets

Mancuso

• 25832 Tweets

Halle

• 24850 Tweets

もちづきさん

• 24728 Tweets

Pete Alonso

• 19614 Tweets

Athena

• 15232 Tweets

Mike Evans

• 14530 Tweets

Bijan

• 13510 Tweets

Phillies

• 11404 Tweets

#ゴンチャのハロウィン準備中

• 10748 Tweets

Milwaukee

• 10479 Tweets

Quintana

• 10036 Tweets

Last Seen Profiles

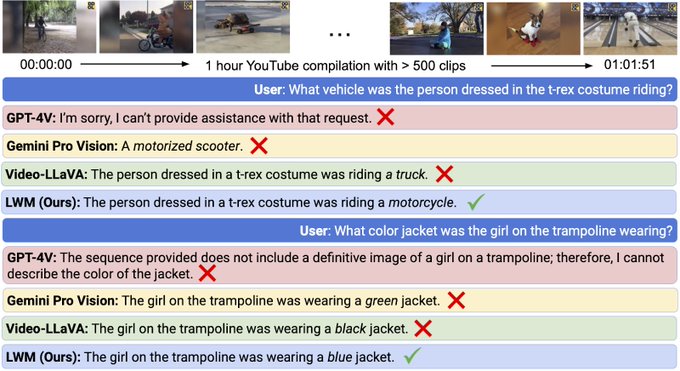

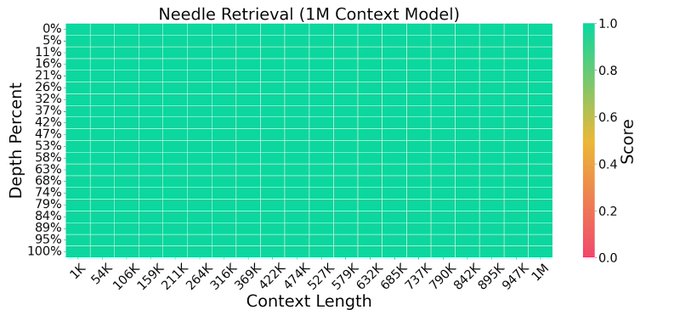

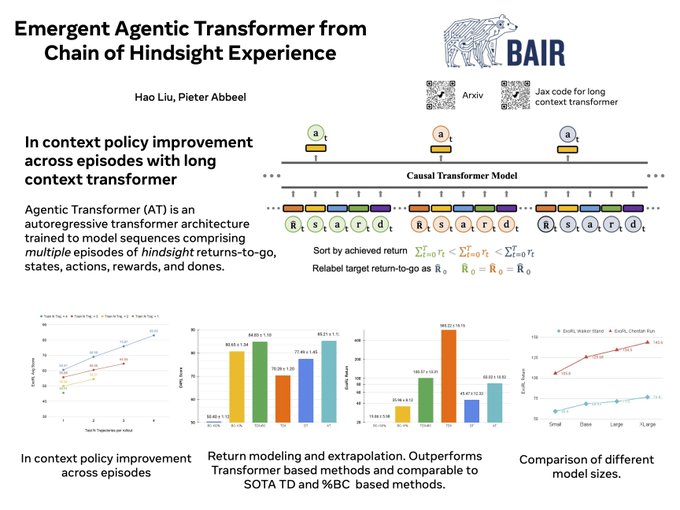

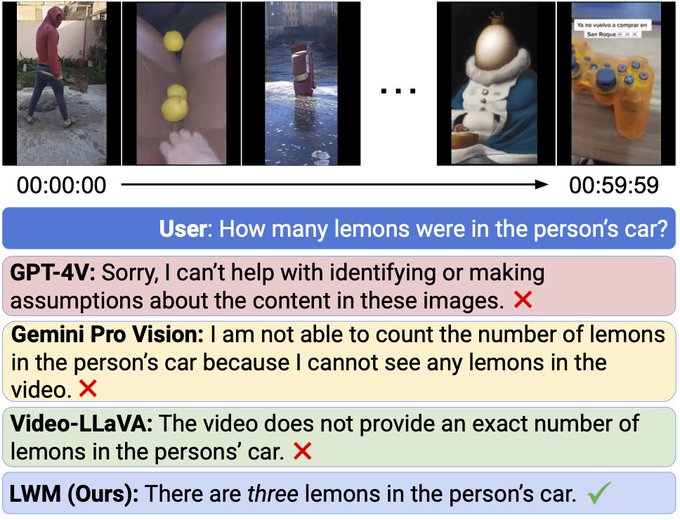

New paper w/ @matei_zaharia @pabbeel on transformers with large context size.

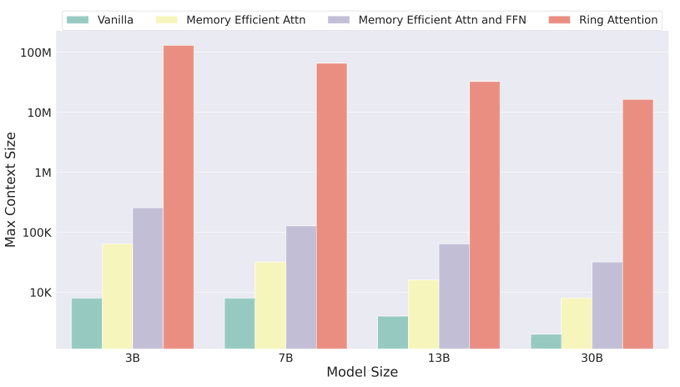

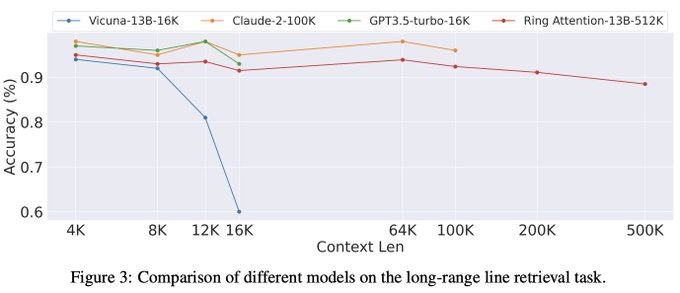

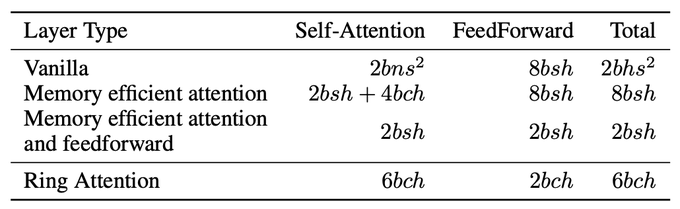

We propose RingAttention, which allows training on sequences that are device-count times longer than the prior state of the art, without attention approximations or additional overhead.

10

179

850

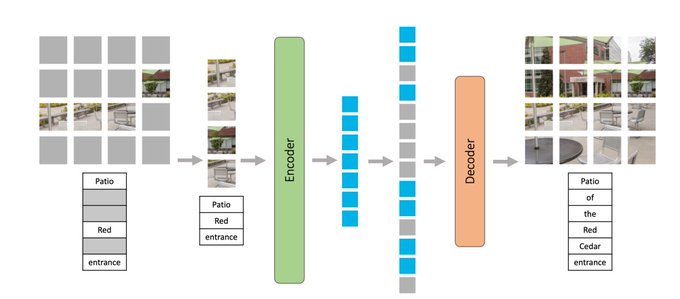

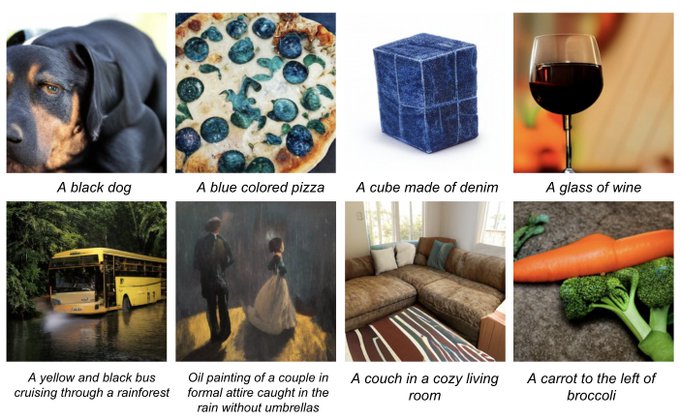

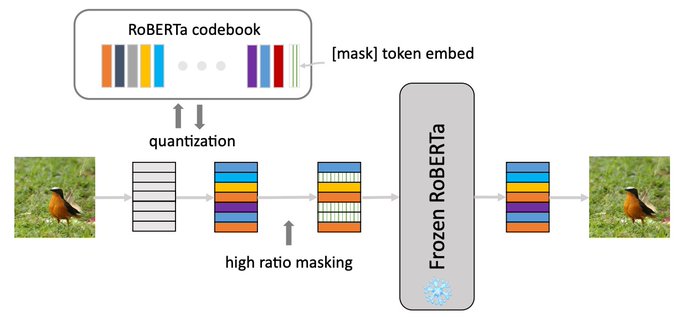

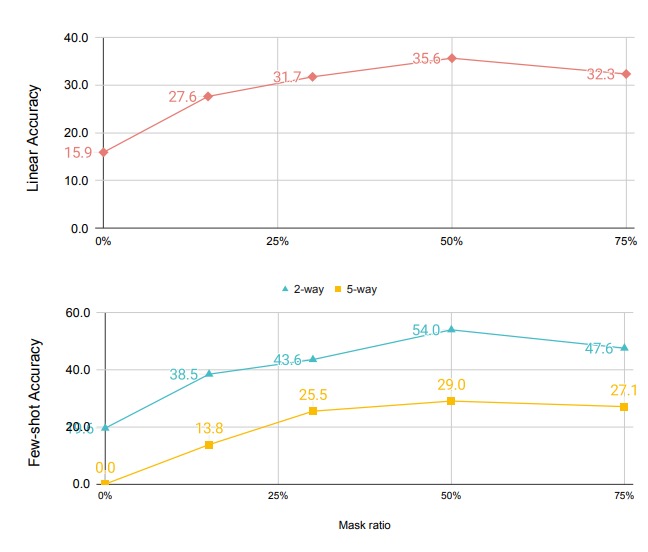

Excited to share M3AE, a simple but effective model for multimodal representation learning.

TLDR: M3AE learns a unified encoder for vision and language from paired image-text data as well as unpaired data.

w/ @YoungGeng

Summary thread: [1/N]

5

38

248

RingAttention's Jax code is available at

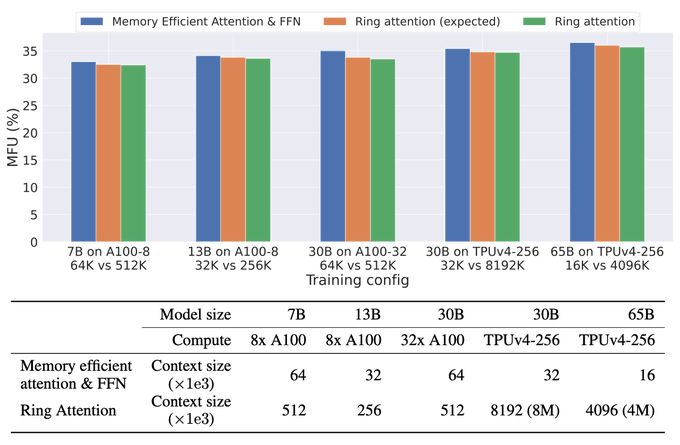

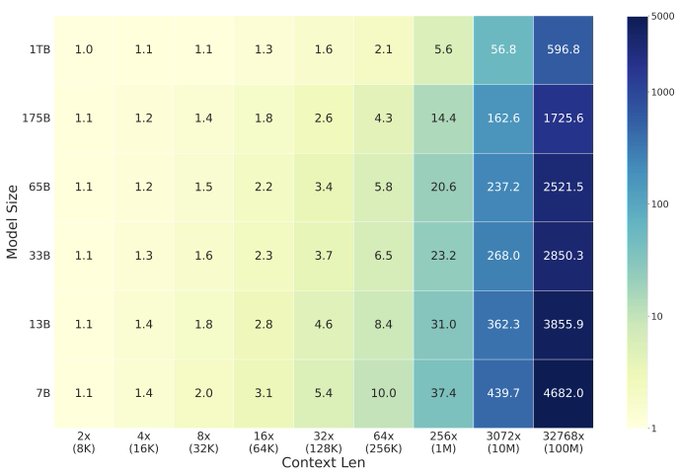

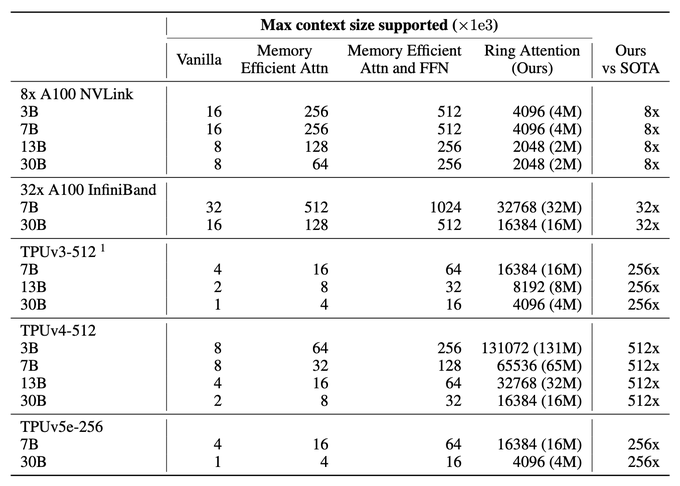

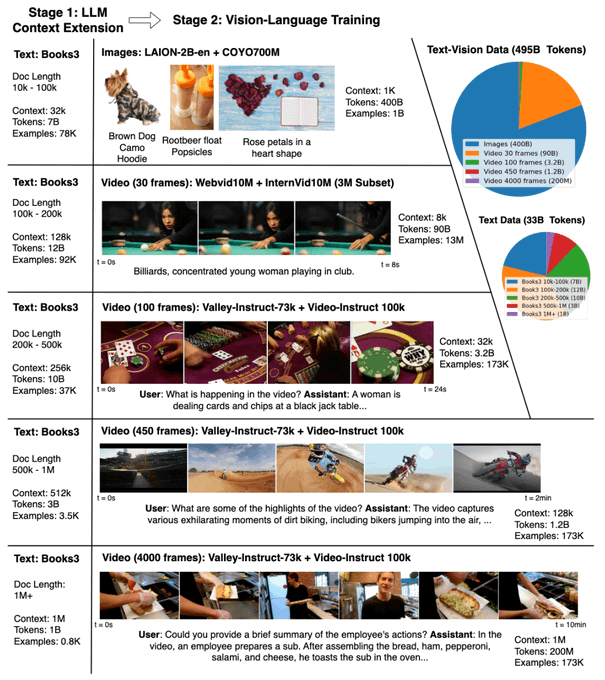

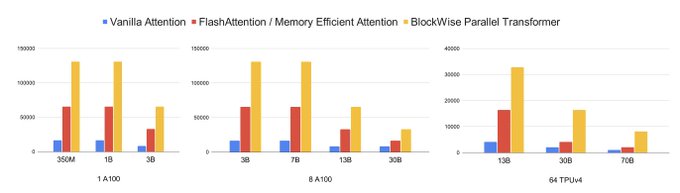

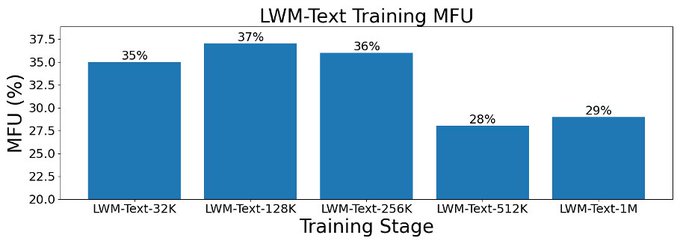

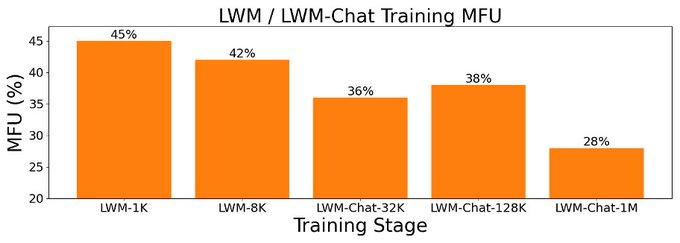

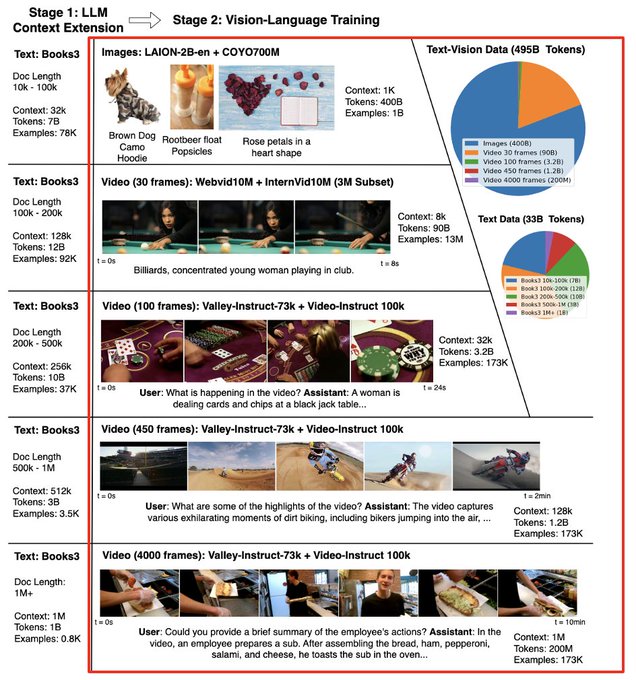

In end-to-end FSDP training on GPU (7B params, 8x A100 80G), context expands from 32K to 256K tokens and can reach 16M tokens with 512x A100.

On TPU (7B params, 1024x TPUv4, FSDP), context can reach 8M tokens.
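The GPU numbers above are consistent with context length growing linearly in device count. A minimal arithmetic sketch, assuming each device holds one 32K-token block (a figure read off the 8-GPU result, not a stated setting); the function name is hypothetical:

```python
# Illustrative arithmetic only. Assumes total context = per-device block
# size * number of devices, per the "device count times longer" claim.

K = 1024  # "K tokens" taken as 1024 tokens


def ring_context_length(per_device_tokens: int, num_devices: int) -> int:
    """Total context if each device holds one block of per_device_tokens."""
    return per_device_tokens * num_devices


gpu_8 = ring_context_length(32 * K, 8)      # 8x A100
gpu_512 = ring_context_length(32 * K, 512)  # 512x A100

# Per-device block implied by the TPU figure (8M tokens on 1024 chips).
# This is derived here, not reported in the thread.
tpu_per_device = (8 * K * K) // 1024

print(gpu_8 // K, "K tokens")          # 256 K tokens
print(gpu_512 // (K * K), "M tokens")  # 16 M tokens
print(tpu_per_device // K, "K tokens per TPU")  # 8 K tokens per TPU
```

Under that assumption, the 8-GPU and 512-GPU figures both fall out of the same 32K per-device block.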

3

39

181

Paper:

Models:

Code:

Website:

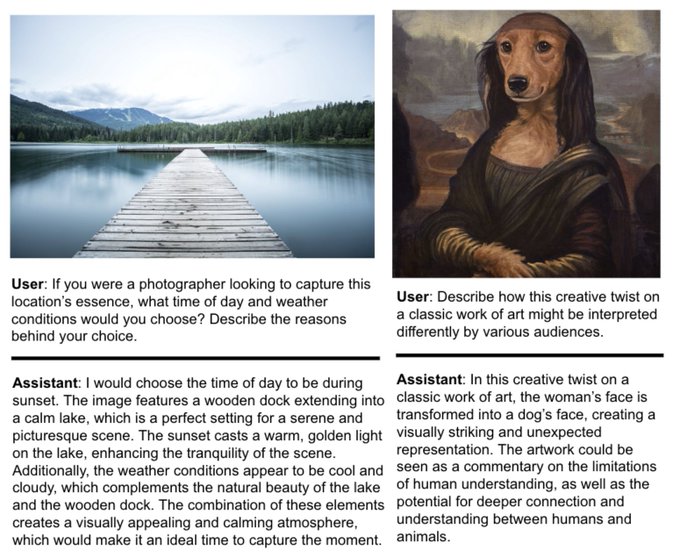

This is joint work with amazing people @wilson1yan, @mateizaharia, @pieterabbeel

3

13

92

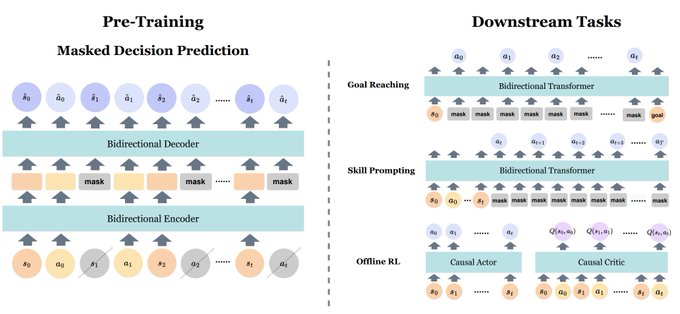

In our #NeurIPS2022 work, we explore the generality of masked token prediction for generalizable and flexible reinforcement learning.

A 🧵 on the paper

3

21

83

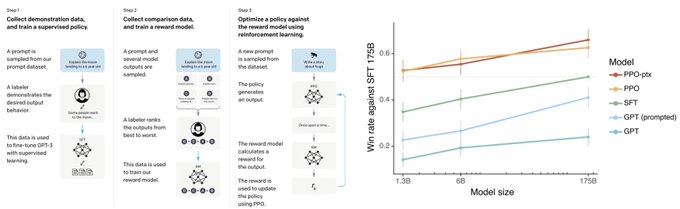

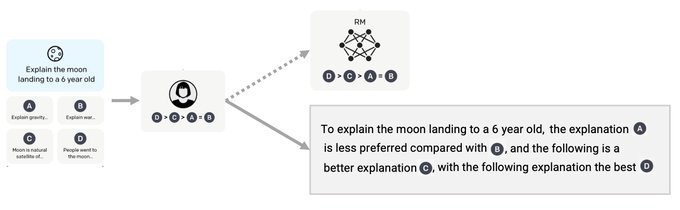

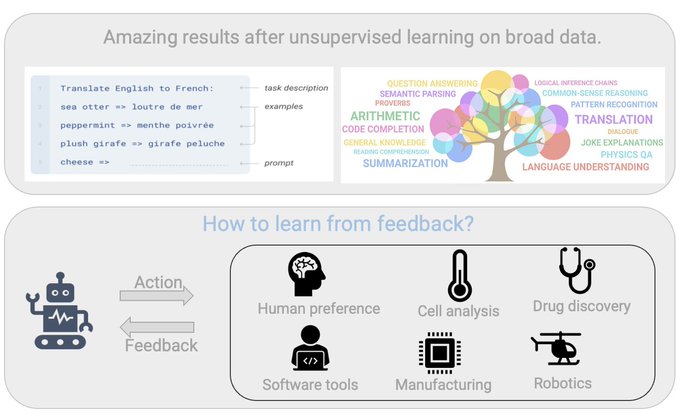

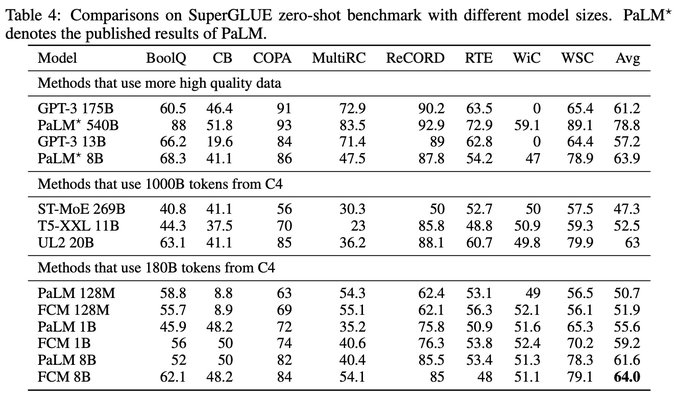

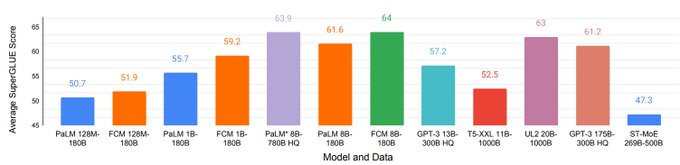

To corroborate Yann's point on the importance of crowd-sourced human feedback datasets: the absence of such high-quality datasets appears to have become a research bottleneck.

A thread:

1

9

82

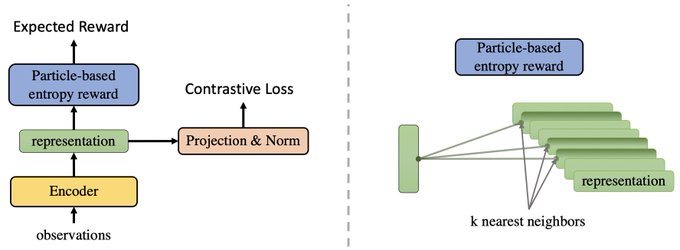

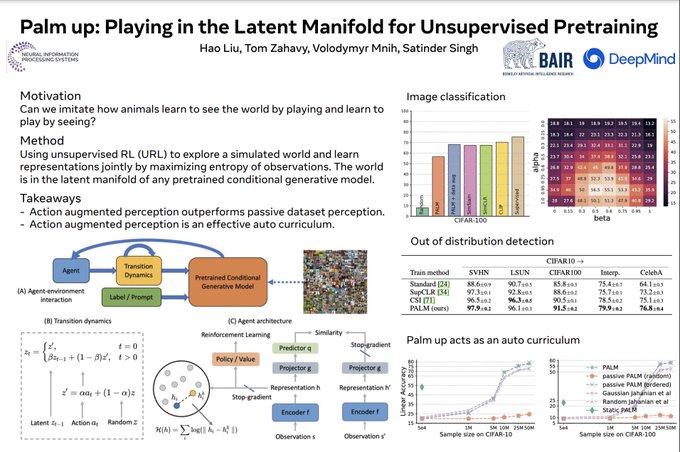

Does interactive learning help develop better perception than learning from static datasets?

In our #NeurIPS2022 paper, we propose a method based on unsupervised RL that matches SOTA SSL methods without using data augmentation.

A 🧵 on the paper:

2

10

57

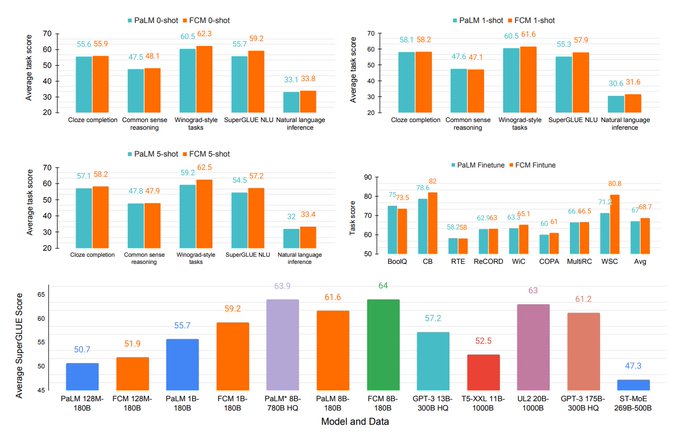

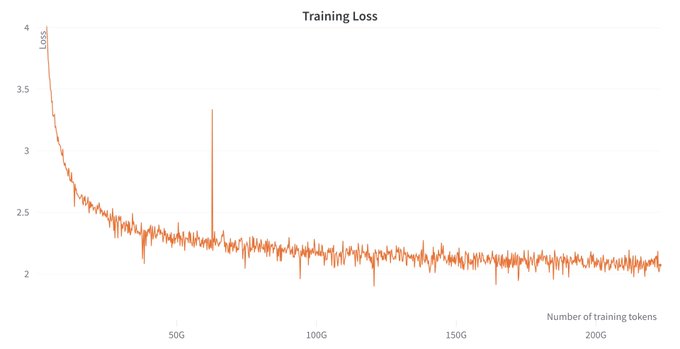

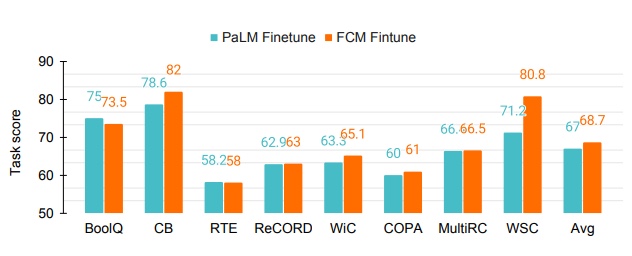

We evaluated OpenLLaMA using lm-evaluation-harness from @AiEleuther. Compared with the original LLaMA (1T tokens) and GPT-J (500B tokens), OpenLLaMA (200B tokens) exhibits comparable performance on a majority of tasks and outperforms them on some.

1

3

44

Many thanks to @_akhaliq for sharing our arXiv paper :)

2

2

38

We train OpenLLaMA on the RedPajama dataset curated by @togethercompute, an open reproduction of the LLaMA dataset that contains 1.2 trillion tokens, roughly matching the number of tokens used by LLaMA.

You can find more details in Together's blog.

1

4

38

This is joint work with amazing collaborators @carlo_sferrazza and @pabbeel.

Check out the paper and code for more details. The code supports fairly large-scale training/finetuning too.

Paper:

Code:

1

3

38

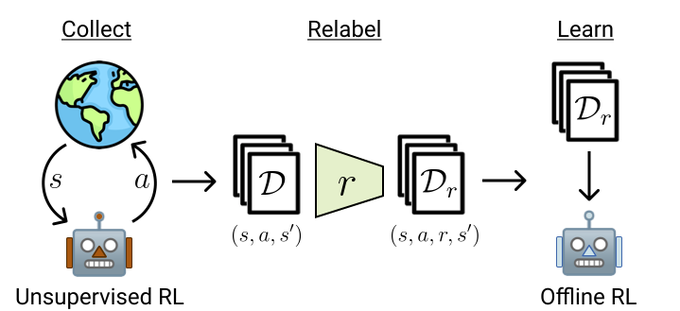

Some thoughts on the work led by my amazing collaborators @denisyarats and @brandfonbrener.

With diverse data, many problems in RL just go away.

Bitter lesson strikes again.

ExORL could be very useful for future offline and unsupervised RL research.

Currently, Offline RL data is collected under the same reward that is used for evaluation, not ideal...

@brandfonbrener and I propose an alternative approach, ExORL, which uses unsupervised RL and relabeling to construct datasets for offline RL.

paper:

1/10
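The relabeling idea in the thread above can be illustrated with a small sketch: transitions are collected without a task reward, and a reward is attached afterwards for whichever task is being evaluated. The `Transition` type, `relabel` helper, and the toy reward function are all hypothetical names chosen for illustration; this is not the ExORL codebase.

```python
from typing import Callable, List, NamedTuple, Sequence


class Transition(NamedTuple):
    obs: Sequence[float]
    action: Sequence[float]
    next_obs: Sequence[float]
    reward: float  # placeholder until a task reward is attached


RewardFn = Callable[[Sequence[float], Sequence[float], Sequence[float]], float]


def relabel(dataset: List[Transition], reward_fn: RewardFn) -> List[Transition]:
    """Return a copy of the dataset with rewards recomputed by reward_fn."""
    return [
        t._replace(reward=reward_fn(t.obs, t.action, t.next_obs))
        for t in dataset
    ]


# Reward-free data, e.g. gathered by an exploration policy (toy values).
exploration_data = [
    Transition(obs=[0.0], action=[0.5], next_obs=[0.5], reward=0.0),
    Transition(obs=[0.5], action=[0.5], next_obs=[1.0], reward=0.0),
]


def reach_target(obs, action, next_obs, target=1.0):
    """Toy task reward (an assumption): negative distance to a target."""
    return -abs(next_obs[0] - target)


task_data = relabel(exploration_data, reach_target)
print([t.reward for t in task_data])
```

The same exploration data could be relabeled with a different `reward_fn` to build a dataset for another task, which is the property the thread highlights.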

4

31

154

2

12

37

This open source project wouldn't be possible without the diligent efforts of @younggeng.

We’d welcome any feedback and contributions!

3

2

34

@pabbeel

14/ We are excited to see what's next: what new capabilities will emerge from being able to train large Transformers with longer context?

Check out the paper for more details; full code will be released soon.

Paper:

1

4

22

@pabbeel

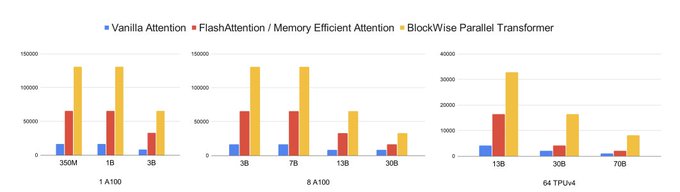

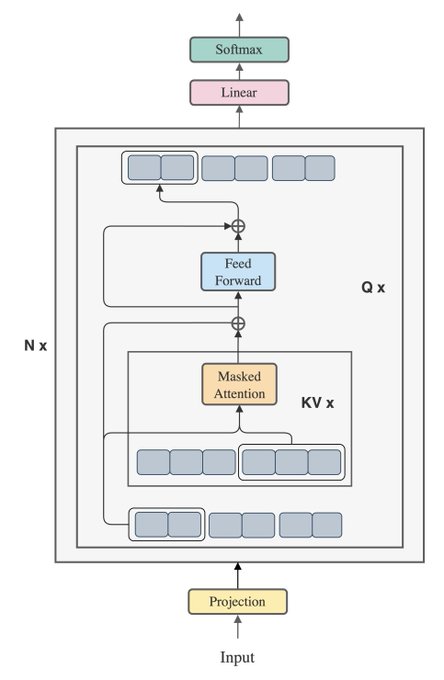

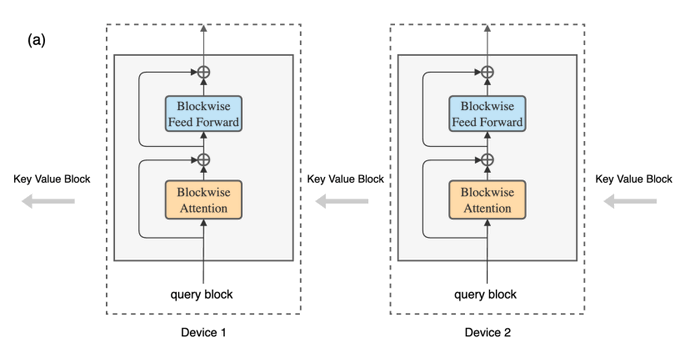

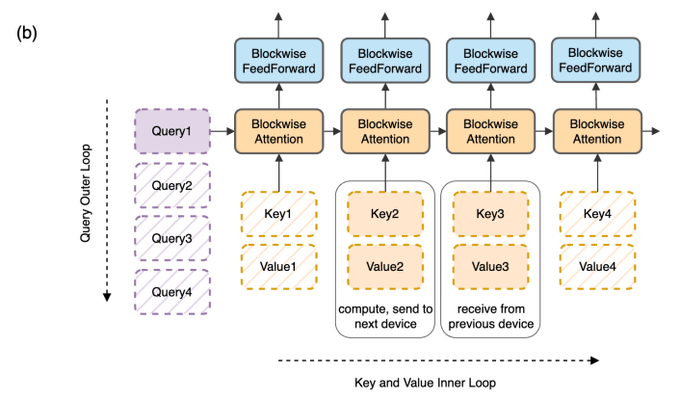

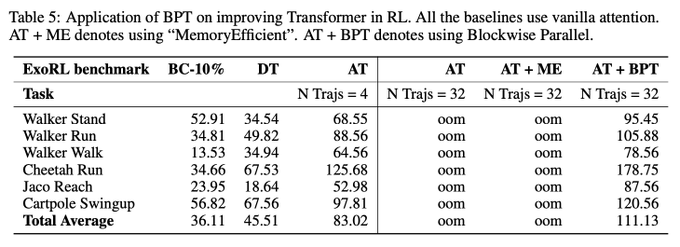

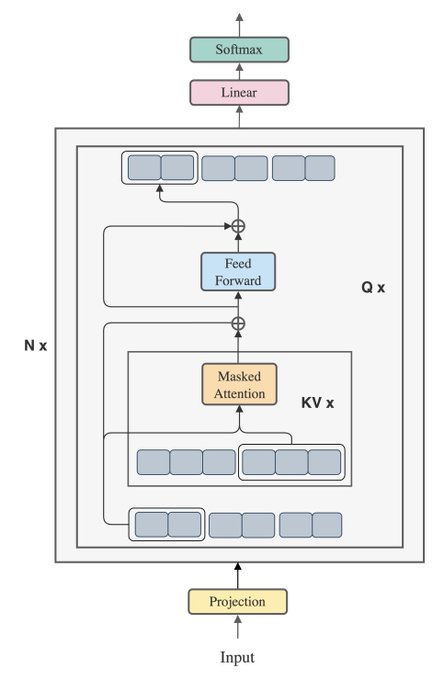

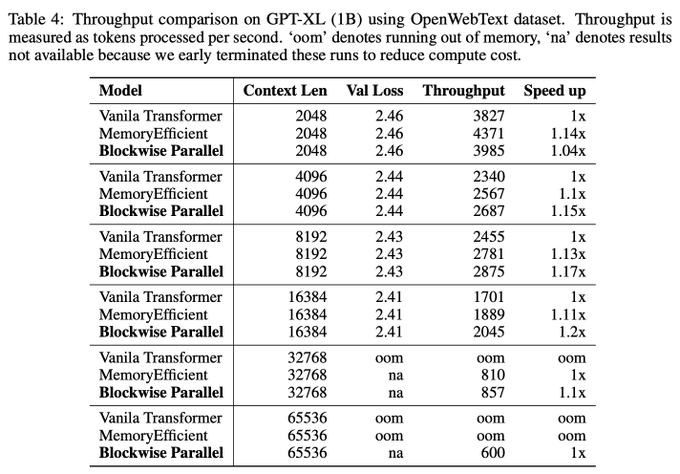

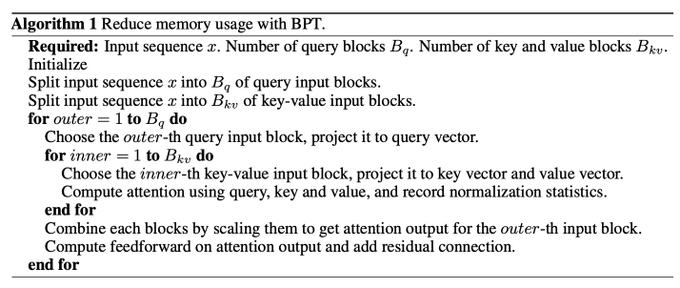

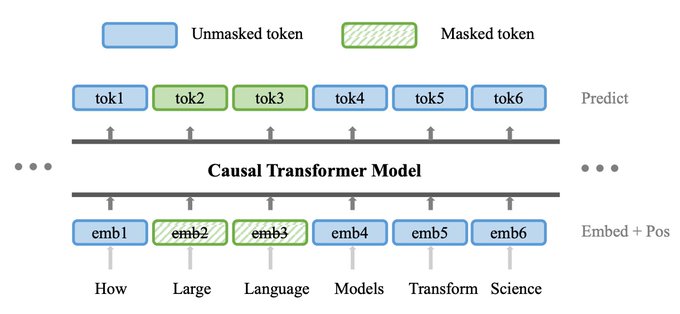

2/ Our method, the Blockwise Parallel Transformer, leverages blockwise computation of self-attention and fused feedforward to minimize memory costs.

We use the same model architecture as the original Transformer, but with a different way of organizing the compute.
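To make "a different way of organizing the compute" concrete, here is a minimal sketch of blockwise attention for a single query, using the standard running-max (online softmax) trick. It is pure Python for readability, covers only the attention half (not the fused feedforward), and is not the authors' implementation; all function names are hypothetical.

```python
import math


def full_attention(q, keys, values):
    """Reference: softmax(q . K^T / sqrt(d)) V for one query vector."""
    d = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [
        sum(w * v[j] for w, v in zip(weights, values)) / total
        for j in range(len(values[0]))
    ]


def blockwise_attention(q, keys, values, block_size):
    """Same result, visiting keys/values one block at a time.

    Only a running max, a running normalizer, and a running weighted sum
    are kept, so the full row of scores is never held in memory at once.
    """
    d = len(q)
    running_max = -math.inf
    normalizer = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale what was accumulated under the previous max.
        scale = math.exp(running_max - new_max)
        normalizer *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            normalizer += w
            acc = [a + w * vj for a, vj in zip(acc, v)]
        running_max = new_max
    return [a / normalizer for a in acc]


q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.5], [0.3, 0.3]]
values = [[1.0, 2.0], [0.0, -1.0], [3.0, 0.5], [-2.0, 4.0], [0.5, 0.5]]

reference = full_attention(q, keys, values)
blocked = blockwise_attention(q, keys, values, block_size=2)
print(all(abs(a - b) < 1e-9 for a, b in zip(reference, blocked)))
```

The output matches ordinary attention up to floating-point error, which reflects the point of the tweet: the model is unchanged and only the order of computation differs.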

1

1

20

Thanks @ak92501 for tweeting so fast!

0

3

20

Heading to @NeurIPSConf now. I'm thinking about generalization of language models and interactive agents. Please say hi if you're into these too.

I will be presenting BPT & LQAE posters, plus EAI and RingAttention in workshops.

1

0

17

@pabbeel

12/ In terms of speed, using high-level Jax operations, BPT enables high-throughput training that matches or surpasses the speed of vanilla and memory efficient Transformers. Porting our method to low-level kernels in CUDA or Triton will achieve maximum speedup.

1

0

15

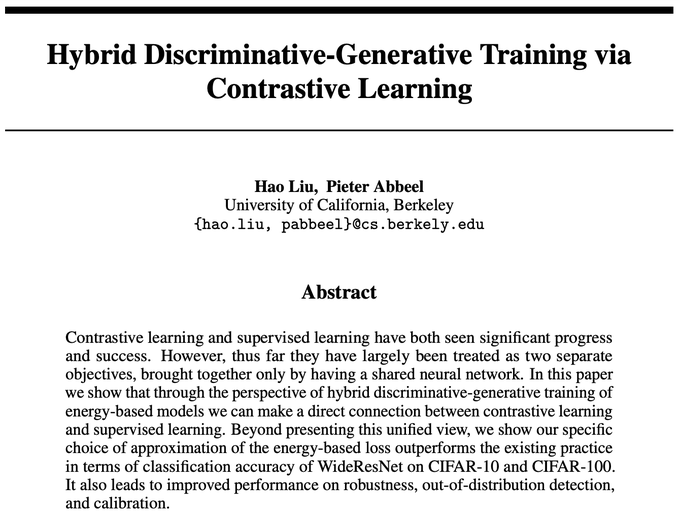

💡 We took inspiration from Ng (@AndrewYNg) & Jordan 2002, which showed that classifiers trained with a generative loss can outperform classifiers trained with a discriminative loss. Our work can be seen as lifting it into today’s context of training deep NNs.

[3/N]

2

2

15

The Jax implementation has been released

Some additional features added:

- Predicting discretized image tokens from VQGAN as output (similar to BEiT).

- Training on a combination of paired image-text data (e.g. CC12M) and unpaired text data (e.g. Wikipedia).

0

1

14

@HlibIvanov

@matei_zaharia

@pabbeel

Stay tuned! We are interested in training / finetuning large context LLM/VLM with RingAttention.

2

1

13

🌈 Concurrently, @jimwinkens et al., @sangwoomo & @BunelR et al. showed that contrastive learning (e.g. SimCLR) improves OOD detection of classifiers!

However, HDGE's contrastive loss term doesn't rely on data augmentation.

[9/N]

2

0

8

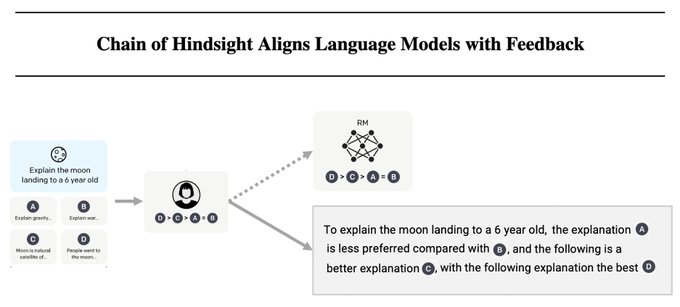

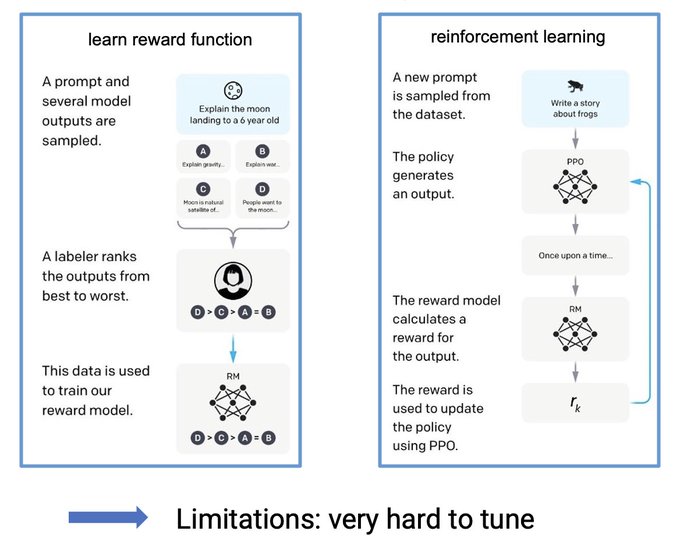

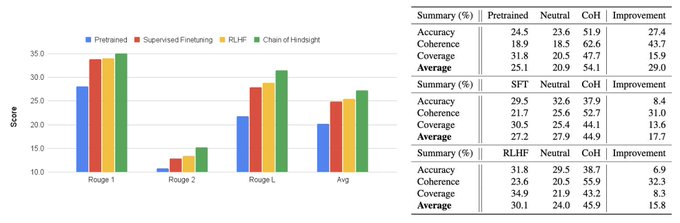

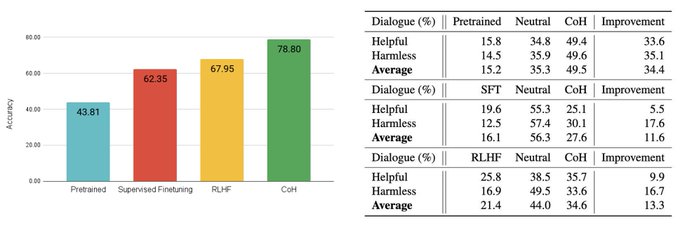

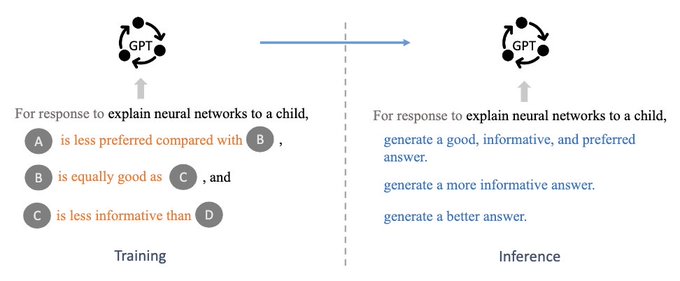

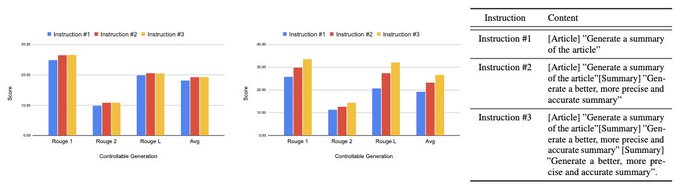

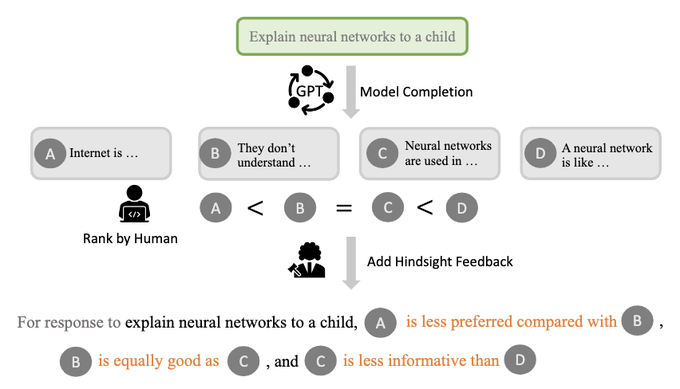

In Feb, we proposed CoH, an SFT-based alternative to RL-based RLHF.

We were excited to see that such straightforward conditional training appears to outperform RL-based RLHF on public human feedback datasets such as Anthropic's HH dataset.

1

0

7

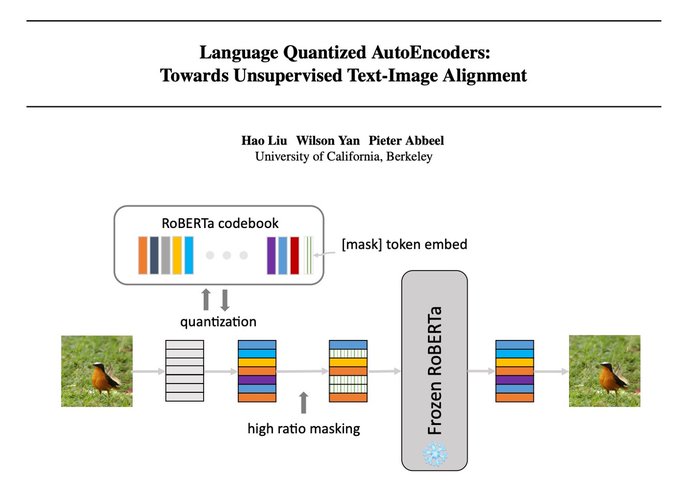

This is joint work with amazing collaborators @WilsonYan8 and @pabbeel.

Check out our paper and code for more details:

paper:

code:

1

0

6