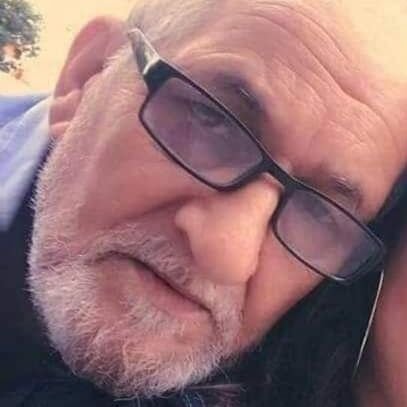

Damien Henry

@dh7net

Followers: 6,864

Following: 930

Media: 528

Statuses: 3,942

Cofounder @ClipdropApp, acquired by @heyjasperai. AI x Images @googlearts. Created Google Cardboard @Google

Paris

Joined September 2009

Someone asked me recently why the Paris AI ecosystem is so 🔥 these days.

On the surface, it looks like Paris became a major #AI center overnight, but it didn't.

It took time and didn't appear by accident.

Here is a story that started more than 10 years ago.

👇

This code is so beautifully written, it almost hurts.

😍 Scraping + Analysis: 158 lines

😍 Server: 77 lines

😍 HTML: 50 lines

😍 JS: 61 lines

😍 CSS: 127 lines

Total: 396 lines for a full app!

Congrats @karpathy 🙏🙏🙏

Was cleaning my basement today due to the #confinement and found the very first iterations of the Cardboard #VR headset.

#NeurIPS2018 is over! Time to wrap up!

In this thread I'll share what I found most interesting in the field of ML and creativity.

Everything below is worth your time, IMO!

The best thing about ML is simplicity.

This paper explains how to create a speech-to-text system that works for all languages, without labelled data.

It's fascinating to see that this task can be achieved with a simple concept.

@YayaChanArtist

Thanks for sharing our tool!

It was originally made for portraits. Here is how it works:

Very proud to work with @NASA and release a new @googlearts experiment today.

We used NLP to make a semantic map of the concepts extracted from NASA image metadata.

Check it out:

@Piles0fPeaches

This was done by the @clipdropapp team!

We explained how it works here:

@izbubbles

Fun fact: this tool was created for portraits initially!

Here is how it works:

And that's it!

That's how you can create pictures like these:

With the app, you can transform your portraits into extraordinary visuals. 🪄 💫 ✨

Photo by Léa Genoud & @clipdropapp

Hey, if you want to generate video based on next-frame prediction, I published my code: #MadeWithMagenta

I'm so glad I have the chance to work with @WayneMcGregor, using machine learning to build a choreographic tool that learns from his archive:

GAN Demystified: WTH do they learn? An article from @henddkn, whom I had the chance to meet during #NeurIPS2018.

Edit:

This thread is gaining popularity, so I'll make more content like this one.

Follow me or @clipdropapp if you are interested.

#Dalle, #StableDiffusion, etc. are amazing at imagining things like avocado chairs, but they can't draw specific objects.

This is a major blocker for anyone who wants to use this tech to advertise a real product.

But this will change.

👇 This thread explains how.

If there is still someone in the room who doesn't understand why computational photography is a game changer, here is more proof ;-)

Today I'm thrilled to launch ✨

A free tool to remove objects and defects from any picture.

💻 Try it:

🤖 Code:

⬆️ PH:

🥰 100% free & open-source thanks to @clipdropapp

@JungleSilicon

AR can be a distraction, too.

But simple headphones augment reality and are just amazing.

Very proud of this new feature we are launching today!

🔥🔥 Today we at @clipdropapp are launching our 💡 image relighting #AI application 💡

The app lets you apply professional lighting to your portrait images 📸 in real time ⚡

Try it now! It's free 🙂

#photography #MachineLearning

I'm now COO of :-)

I have the privilege to work with the two very best co-founders you can think of: @cyrildiagne & @jblanchefr

I couldn't be more excited by the idea of helping people through machine learning. There are so many things waiting to be done!

Hey! There is an open call for an artistic residency with @somersethouse about ML, with mentoring from @googlearts and @memotv:

Today we released 5 new experiments with the amazing @bgirschig @carolinebuttet @jblanchefr @jonathan_tanant @onemorestudio @romaincazier @vogler_voice and more talented people at the @googlearts lab.

Please have a look!

Very few people know that just after the #deepdream storm that @zzznah initiated, he continued to refine the technique and produced crazy beautiful artwork while the world was overrun by puppy eyes.

I feel incredibly lucky and grateful to have this one at home. 😊

Joelle Pineau talked about reproducibility in reinforcement learning. She shared a checklist for anyone who wants to submit to #NeurIPS2019 and help solve the reproducibility crisis.

In 2015, @ylecun created FAIR Paris (Facebook AI Research, now Fundamental AI Research).

Yann LeCun is recognized as one of the three inventors of deep learning as we know it today.

So deep learning was at least one-third French from the beginning!

(The other two are Canadian.)

@invissivni

@Piles0fPeaches

Great to see it's working on anime too!

More info here:

@MasonStormAI

@mo1ok

Was it the visual or was it the music?

In my experience, music is what triggers emotions.

Video without audio never does.

@kohaku__0

Fun to see @clipdropapp used there!

Here is more info about how it works:

I have seen this picture a couple of times today.

Each time, IT MAKES ME SO HAPPY!!!

This picture is *Perfect*.

Here's the moment when the first black hole image was processed, seen through the eyes of researcher Katie Bouman.

#EHTBlackHole #BlackHoleDay #BlackHole

(via @dfbarajas)

Following @karpathy's recommendation, I just read "Understand".

I loved it.

While most SF authors optimize their writing for video adaptation, Ted Chiang uses the medium of written language to convey much more than any video could.

Any recommendations for other books like this?

The @googlearts team was also busy keeping the lab open while most of us were stuck at home.

With @zachlieberman @onemorestudio @cyrildiagne and so many more!
