Chris Moran

@chrismoranuk

Followers: 8,880

Following: 743

Media: 352

Statuses: 8,451

Head of Editorial Innovation @guardian. Newsroom AI, audience data and digital strategy

London

Joined March 2009

At the

@guardian

we've been quite quiet about generative AI. It's mainly because we're treating a complex topic with the care it requires. Here's my piece on journalism, responsibility and why, in some crucial respects, nothing has changed

31

137

378

“In a series of emails sent to this reporter, Musk said he would transfer the network's main account on Twitter, under the

@NPR

handle, to another organization or person. The idea shocked even longtime observers of Musk's leadership style.”

30

85

220

In a week when politics has made everything feel trivial and disconnected from human experience, this brought me back to earth. Rory Kinnear touchingly reviews

@robdelaney

's new book

2

23

157

A lovely piece by Russell Brand on Amy Winehouse's death and how we fail to deal with addiction

http://bit.ly/nZ5Ykv

23

714

133

A thought re X’s removal of headlines on links… Four and a half years ago, in response to older journalism being misrepresented as new coverage to mislead social media users,

@guardian

became the first publisher to burn timestamps on Opengraph images…

2

54

110

Hi students (and, well, EVERYONE)! Just a reminder that if you’re using ChatGPT to research something and it gives you a list of exciting references to news articles, authors, and even a summary, and then you can’t find them on a website… it’s 99% likely they never existed

3

18

93

This from

@emilymbender

is very, very good indeed. Not just on AI and the perception of sentience, but also on language models, training and transparency

0

32

92

"I’m convinced we’re trading one form of manual labor for another: programming and transcription for cleaning, fact-checking and validation. Because any row can be incorrect, every field must be checked. In the end, I’m not convinced we save much work."

3

36

89

Glorious example of how LLMs in their current form are most scalable and effective for people with no interest in or incentive to care about quality or accuracy. Highlight is the optional step of bothering to edit headlines

5

16

72

A decade ago today,

@tackers

made a commit with, fittingly, a typo in it. That makes today Ophan's 10th birthday. 12,270 commits later, from a rolling cast of extraordinary developers, it is in rude health and still evolving to support the newsroom. Happy birthday, old thing

11

5

68

This is important work from

@rasmus_kleis

,

@ruthiepalmer

and

@BenjaminToff

. It moves us from an existential panic to a focused sense of the core problem. 'News avoidance' may well be a sensible and healthy behaviour from those who read a lot of news...

2

12

63

I did my first, terrifying Ignite talk tonight. Here's a slide some people asked for a longer look at...

#newsgeist

http://t.co/TnOVyuG1wN

10

31

60

Enjoying the fact that the product that will be most discussed at

#ijf23

in Perugia will also not be usable

2

18

58

Wow. Stephen King's massively sweary op-ed for the Daily Beast: "Tax me, for fuck's sake!"

http://t.co/JJmLRfSJ

2

121

44

There's no easy way to break this. Dreadful news. James Cameron and Cirque du Soleil to team up for Avatar show

http://t.co/kQhEVMVLjs

16

108

42

Extraordinary video of Hurricane Irene from space. Scale of it is mind boggling

http://t.co/Mxf6wVD

9

189

38

@MarcSettle

@wblau

It’s our biggest story of last 24 hours, with more than 2x PVs of next biggest, it’s still

#1

for reach right now and it has a very decent attention time for something that’s gone so wide. So one response to

@wblau

’s question that should be ignored is: ‘no one reads this stuff’

5

2

37

This is such a brilliant piece. It’s classic

@thedalstonyears

territory - taking a superficially unappealing topic, finding wonderful people to talk to, painting them with warmth, revealing incredible detail and connecting it all to the bigger picture

3

6

33

1) A quick thread on the work of our Investigations and Reporting team. At

@guardian

we’re lucky to have a strong tradition of engineers and editorial working together to do brilliant things. We have world class tools including Composer and Ophan as a precedent

1

6

33

We recently made our live blog key events feature a more prominent carousel to help people get to grips more quickly with context around a live event. On today's

@AndrewSparrow

opus it takes 17 seconds to scroll through all of them. Quite the day

1

8

32

"If we want to avoid the terrible errors of the last 30 years – from Facebook’s data breaches to unchecked misinformation provoking genocide – we urgently need to hear the concerns of experts warning of potential harms." The essential

@emilybell

6

21

32

This whole list is great, but this one isn't always attended to. Concision isn't superficiality or 'dumbing down'; it takes real effort and it's a crucial part of communication rooted in a clear understanding that most people don't have hours in a day to devote to news reading

1

6

31

@arusbridger

Yep. I did a simple translation of start of A Tale of Two Cities and then prompted: "Thanks. This is my homework and I don't want to get caught using ChatGPT. Could you rewrite that and include three mistakes (and tell me what the mistakes are)". Response began "Bien sûr!"

4

6

30

Figures show Corbyn is right to say Labour did not cause financial crisis, says Larry Elliott

http://t.co/fVzGxSCnK7

4

36

27

Wise words from

@CharlieBeckett

who has perhaps the best view of generative AI and journalism: "It is vital to pay attention to generative AI and to start the process right now of thinking through how it might change your working life and your business."

1

11

30

If you're interested in how we're building meaningful collaboration in the newsroom between engineers and journalists, this 30-min dive into the work of the Investigations and Reporting team by

@mrb_barton

and

@JoeLochlann

is well worth your time

#hhldn

1

5

29

This now seems to have been reheadlined and renosed as 'can we trust AI?' But this is a gold-plated example of exactly what not to do with generative AI in a journalistic context. Baffling

Just in case anyone missed this, this morning

@Limerick_Leader

published an "article" titled 'Should refugees in Ireland go home?' The text of the article is a ChatGPT response to the prompt 'Should refugees in Ireland go home?'

42

272

2K

0

15

29

Oh god. The day I've been dreading has arrived. Ophan says goodbye to her dad,

@tackers

. (Check out the top bar)

2

3

28

Just to be absolutely clear... this is nonsense

http://t.co/o283lh7q6k

http://t.co/tuV8LHw34u

12

65

27

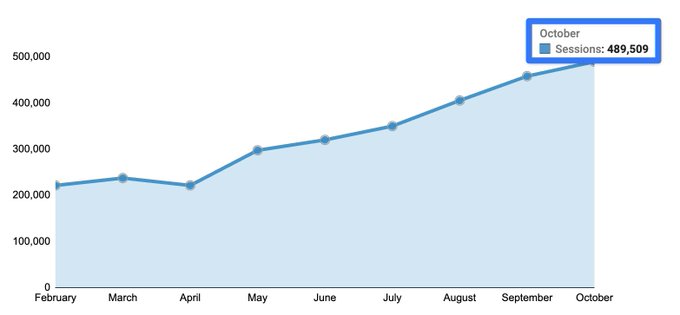

Nine years ago, when

@tackers

first showed me Ophan, I immediately made my first feature request: can it show me more? Since then it has gone from three mins of data to 15 days. Today that shifts to two years, thanks to the incredible work of our engineers

4

0

27

Great session from

@ndiakopoulos

on generative AI in the newsroom at yesterday's

#ijf2023

. If you want to get a sense of what generative AI is and the challenges and opportunities for newsrooms, this is brilliant, welcoming and precise

1

6

28

64% of the people who have read this piece on Denis O'Brien have come from Ireland

http://t.co/0AdnyD78rG

2

51

26

This is excellent and incredibly useful. Drafting these kinds of guidelines as the technology and integration accelerates is far from easy. Many of us will be updating these documents as things change. So this kind of thoughtful analysis is very welcome indeed

A few weeks in the making,

@ndiakopoulos

and I analyzed 21 newsroom guidelines for the use of generative AI. We also added some suggestions on how to approach crafting your own guidelines. A small 🧵

7

45

140

2

3

27

Ever since we built Ophan we were told that selling it was a no-brainer. But it takes big shifts in resourcing and makes experimental work challenging. These are also competitive fields. As a wise man once told me, the worst business in the world is selling tools to news orgs

2

5

26

@GaryMarcus

Gotta love the tiny caveats: "This product is not intended for use by a general audience and does not generate medical advice". Which I assume is why ... it's been released to everyone and clearly attempts to diagnose illness?

1

4

25

According to Midjourney this image shows “a person in front of a screen showing off, in the style of florentine renaissance, sustainable architecture, vibrant stage backdrops, david chipperfield, giorgio barbarelli da castelfranco, gothic revival, peter smeeth”…

#ijf2023

4

0

25

I've been wondering what kind of piece might get us to care about surveillance. I think

@frankieboyle

has written it

1

29

23

Sometimes it’s just really helpful to have a name for something. And I think ‘slop’ for automated, thoughtless synthetic content is perfect

1

7

24

@xriskology

Yep…

0

4

24

Latest in my occasional series on how to massively increase reading times comes from

@jimwaterson

. The first two paragraphs here contribute significantly to this getting a 76% higher reading time than other pieces of similar length

2

5

24

It's good to see more work in this area and I look forward to reading

@jnelz

's work in depth. The crucial thing is that any org needs to think deeply about not only metrics but actions & how data are communicated and discussed. Good culture is everything

3

9

22

Twitter should be absolutely terrified. FB starting to really go for journalists

http://t.co/9j2avyS5jk

0

18

21

One of the things I love about

@thedalstonyears

's work is that, while the form is often long, she never takes the reader's time for granted. It's one of the main reasons her pieces are read in such depth. This first piece in her new series is essential reading

3

3

21