Artsiom Sanakoyeu

@artsiom_s

Followers: 3,781

Following: 668

Media: 383

Statuses: 2,777

Staff Research Scientist @Meta Generative AI. PhD in Computer Vision @ Heidelberg University. @Kaggle Competitions Master (Top-50 worldwide).

Zürich, Switzerland

Joined December 2016


We have released the code and weights for our

#CVPR2023

paper "Avatars Grow Legs: Generating Smooth Human Motion from Sparse Tracking Inputs with Diffusion Model"!

code:

abs:

project:

The demo is below:

4

53

312

Lol. Dude, it's not the model that takes 100 MB, but an extra thing that they train on top of a 1B-parameter model!

Don't distribute fake news

8

12

188

Really nice introduction to the hyped Diffusion Models by

@lilianweng

. With (almost) all necessary theory packed inside.

0

20

154

Check out our new

#CVPR21

paper!

Discovering Relationships between Object Categories via Universal Canonical Maps

In collaboration with FAIR (

@NataliaNeverova

, P. Labatut,

@davnov134

and A. Vedaldi)

🌐

▶️

📝

2

23

134

Our paper "Avatars Grow Legs" (CVPR 2023) is out!

TL;DR: Fast diffusion models to generate full-body motions based on head and hands tracking inputs.

Will release the code in a few days.

2

16

100

To learn more about our 3rd place solution for the

@Kaggle

@LyftLevel5

"Lyft Motion Prediction for Autonomous Vehicles" competition, read my blog post:

0

18

99

My new video on self-supervised representation learning (also easy to understand for beginners). I explain CliqueCNN which builds compact cliques for classification as a pretext task and I discuss other self-supervised learning approaches.

@itsbautistam

3

19

95

Last week I gave a talk at the Heidelberg SIAM chapter:

"Identification of Humpback Whales using Deep Metric Learning".

I talked about our recent CVPR'19 paper and about the Humpback Whale Identification challenge at

@kaggle

.

Slides:

0

36

89

Cool work from

@facebookai

!

It can generate an image of the input text in any style, given an example of the reference style. The architecture looks similar to StyleGAN, but instead of noise, every normalization layer is conditioned on the encoded style vector.

1

13

77
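The tweet above says every normalization layer is conditioned on an encoded style vector. A minimal sketch of that general idea follows, in plain Python. It is not the architecture from the @facebookai work: the function name `conditional_norm` and the projection weights `w_scale` and `w_shift` are hypothetical, and a simple linear map from the style vector to a per-channel scale and shift is assumed.

```python
import math

def conditional_norm(features, style, w_scale, w_shift, eps=1e-5):
    """Normalize each channel, then scale and shift it with values
    predicted from the style vector (hypothetical linear projections).

    features: list of channels, each a list of floats
    style:    encoded style vector, a list of floats
    w_scale, w_shift: one weight row per channel, same length as style
    """
    out = []
    for c, channel in enumerate(features):
        mean = sum(channel) / len(channel)
        var = sum((x - mean) ** 2 for x in channel) / len(channel)
        std = math.sqrt(var + eps)
        # Scale and shift are linear functions of the style vector,
        # so the same features are rendered differently per style.
        gamma = 1.0 + sum(w * s for w, s in zip(w_scale[c], style))
        beta = sum(w * s for w, s in zip(w_shift[c], style))
        out.append([gamma * (x - mean) / std + beta for x in channel])
    return out

features = [[1.0, 2.0, 3.0], [4.0, 4.0, 8.0]]
style = [0.5, -1.0]
w_scale = [[0.0, 0.0], [1.0, 0.0]]  # assumed "learned" weights
w_shift = [[0.0, 0.0], [0.0, 2.0]]
styled = conditional_norm(features, style, w_scale, w_shift)
```

With these assumed weights, the first channel is only normalized, while the second is rescaled and shifted according to the style vector.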

Check out our new

#CVPR20

paper on Transferring DensePose to Animals

In collaboration with FAIR (V. Khalidov, A. Vedaldi and

@NataliaNeverova

)

🌐

▶️

📝

1

19

71

I will present our

#CVPR2020

paper on Transferring Dense Pose to Animals

Today at 10am PDT / 7PM CET.

Join Q&A

🌐

📝

0

24

69

Dense pose for animal classes with transfer learning

@facebookai

blog post about our

#CVPR20

paper.

🌐 Blog

📝 Paper

1

17

64

In 1 hour at

#NeurIPS2022

I will be presenting VisCo-Grids, a grid-based surface reconstruction method incorporating Viscosity and Coarea priors.

Joint work at

@MetaAI

with

@AlbertPumarola

,

@YarivLior

,

@alitabet

and

@lipmanya

Details in the thread 🧵

1

15

63

I'm happy to announce that our team (me,

@KonevSteven

, K. Brodt) was awarded 3rd place within the Waymo Motion Prediction Challenge 🥳

Task: predict trajectories of the agents for 8 seconds into the future.

📜Technical report

We also released our code ↓

3

11

60

Presenting our work "Re-ReND: Real-time Rendering of NeRFs across Devices"

#ICCV23

We show how to bake a NeRF onto a mesh with rich view-dependent textures, which allows rendering at 100-1000 FPS on different devices without loss of quality.

Visit our poster:

ID: 3760

Foyer Sud - 140

1

8

59

I wrote a blog post which briefly explains the SMAL model for fitting 3D shapes of animals to RGB images.

Based on the paper “3D Menagerie: Modeling the 3D Shape and Pose of Animals”, CVPR 2017

@silvia_zuffi

@Michael_J_Black

🌐

2

13

58

Happy to share that we got 1/1

#NeurIPS2022

papers accepted this year from our small team in Reality Labs Zurich!

Working on the camera-ready version and will upload it to arXiv soon. Small spoiler: it's on learning implicit 3D shape representations for shape reconstruction.

1

0

51

Our team (me,

@ppleskov

and

@shakhrayv

) finished 10th (out of 2131 teams) in the Humpback Whale Identification challenge on

@kaggle

.

Special thanks to

@odsai_en

community for fruitful discussions!

6

14

49

🔥Fresh drop - Mixtral-8x22B!

As usual,

@MistralAI

stays true to their style by simply leaving a magnet link to a torrent with the weights of their new model. Nice trolling!

The new model is a Mixture of Experts Mixtral-8x22B:

- Model size: 262 GB (I assume the weights are in

3

4

40

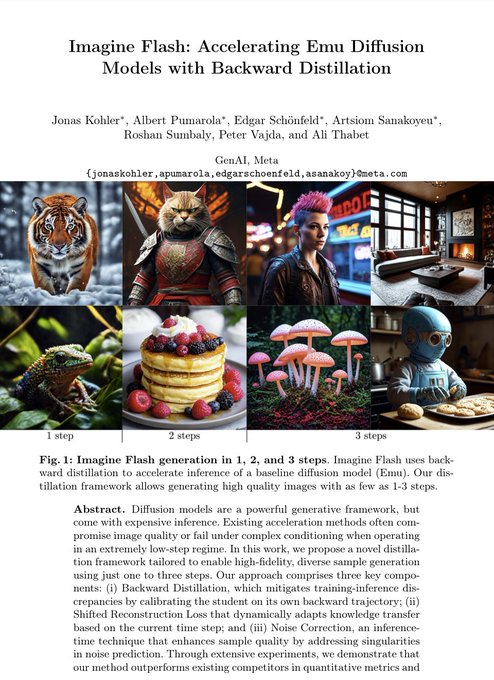

Mark

@finkd

is talking about our Imagine Flash right here. I won't lie, it feels really good when your CEO speaks about your work this way 🙂

2

1

39

My YouTube video explaining HOW to EARN $6000 BY WINNING A KAGGLE AUTONOMOUS DRIVING COMPETITION.

The video is on our 3rd place solution for the

@Kaggle

@LyftLevel5

Motion Prediction for Autonomous Vehicles competition.

🛠️

🎬

0

13

39

Best Paper award

#iccv19

: SinGAN

I really liked their results on the task of super-resolution!

2

5

40

Our paper was accepted as oral at ECCV 2018!

"A Style-Aware Content Loss for Real-time HD Style Transfer"

Artsiom Sanakoyeu*, Dmytro Kotovenko*, Sabine Lang (

@lang254

) , Björn Ommer

Project page:

Source code is coming soon.

0

16

38

I'm delighted to share that I was selected as an outstanding reviewer at NeurIPS for the second time in a row!

#neurips2020

2

0

37

Our

#CVPR2020

paper on DensePose for animals got covered in the weekly AI newsletter of ,

@AndrewYNg

's AI education startup

1

9

37

I was promoted to the rank of Expert Reviewer by

@icmlconf

. This is nice and gives some extra motivation to keep the high quality of reviews!

0

0

34

Some nice stylization results on style transfer from our work "A Content Transformation Block For Image Style Transfer",

#CVPR2019

. More results are on the project page

🌐

▶️

📝

1

7

31

PULSE: Self-Supervised Photo Upsampling via Latent Space Exploration of Generative Models

#CVPR2020

Upsample a photo by finding a suitable latent vector in a pretrained StyleGAN

0

9

31
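The idea in the tweet above (search the latent space of a fixed generator for an output that matches the low-resolution photo once downsampled) can be illustrated with a toy sketch. Everything here is an assumption for illustration: `generate` is a tiny hand-written linear stand-in for a pretrained generator, `downsample` averages pixel pairs, and the search is plain gradient descent with finite differences rather than the procedure used in the PULSE paper.

```python
def generate(z):
    """Toy stand-in for a pretrained generator: 2-D latent -> 4 'pixels'."""
    return [z[0], 0.5 * z[0] + 0.5 * z[1], z[1], 0.5 * z[0] - 0.5 * z[1]]

def downsample(hr):
    """Average neighbouring pixel pairs: 4 pixels -> 2 pixels."""
    return [(hr[0] + hr[1]) / 2.0, (hr[2] + hr[3]) / 2.0]

def loss(z, low_res):
    """Squared error between the downsampled output and the low-res input."""
    down = downsample(generate(z))
    return sum((d - t) ** 2 for d, t in zip(down, low_res))

def find_latent(low_res, steps=2000, step_size=0.5, h=1e-4):
    """Gradient descent on the latent; the generator itself stays fixed."""
    z = [0.0, 0.0]
    for _ in range(steps):
        grad = []
        for i in range(len(z)):
            z_plus = list(z)
            z_plus[i] += h
            z_minus = list(z)
            z_minus[i] -= h
            # Central finite difference approximates d(loss)/d(z[i]).
            grad.append((loss(z_plus, low_res) - loss(z_minus, low_res)) / (2 * h))
        z = [zi - step_size * g for zi, g in zip(z, grad)]
    return z

# Build a low-res target from a known latent, then recover a matching one.
true_z = [1.0, -2.0]
low_res = downsample(generate(true_z))
found_z = find_latent(low_res)
high_res = generate(found_z)  # the "upsampled" result
```

The key design point is that only the latent vector is optimized, so every candidate output stays within what the generator can produce.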

Our style transfer on steroids at ICCV19!

"Content and Style Disentanglement for Artistic Style Transfer"

We learn subtle variations of styles and disentangle style from content

Project page:

Video:

#ICCV19

3

16

30

Happy to share that our GCPR'19 paper was selected for an oral presentation!

"Semi-Supervised Segmentation of Salt Bodies in Seismic Images" : 1st place solution at TGS Salt Identification Challenge

@kaggle

@TGScompany

Paper:

#GCPR19

#kaggle

0

7

26

Check out our recent work at Meta GenAI on accelerating diffusion models by caching.

0

0

23

It's such a big honour to be selected as one of the best

#Neurips2019

reviewers!

Moreover, I will get a free conference registration! Awesome!

@hugo_larochelle

2

0

22

Novel View Synthesis of Dynamic Scenes With Globally Coherent Depths From a Monocular Camera

#CVPR2020

@JaeShinYoon2

This is pretty cool! The model can do space-time navigation and bullet time effect!

🔗

1

5

22

Attending

#CVPR2023

tutorial Efficient Neural Networks: From Algorithm Design to Practical Mobile Deployments

organized by

@SergeyTulyakov

@Snap

0

2

21