Jialu Li

@JialuLi96

Followers: 1,266 · Following: 586 · Media: 43 · Statuses: 279

CS PhD student at @unc @unccs @uncnlp; previously @Cornell_CS; past intern @Amazon @Apple @Google. Working on VLN, image generation, multi-modal LLM.

Chapel Hill, NC

Joined April 2020

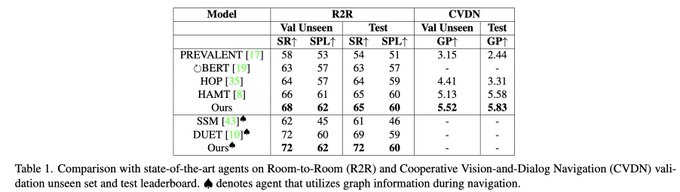

🚨Excited to share: “𝗣𝗮𝗻𝗼𝗚𝗲𝗻: Text-Conditioned Panoramic Environment Generation for Vision-and-Language Navigation”!🚨

PanoGen creates diverse 360-degree panoramas via recursive outpainting, achieving SotA on R2R, R4R, CVDN.

@mohitban47 @uncnlp 🧵

1

37

95

Hi

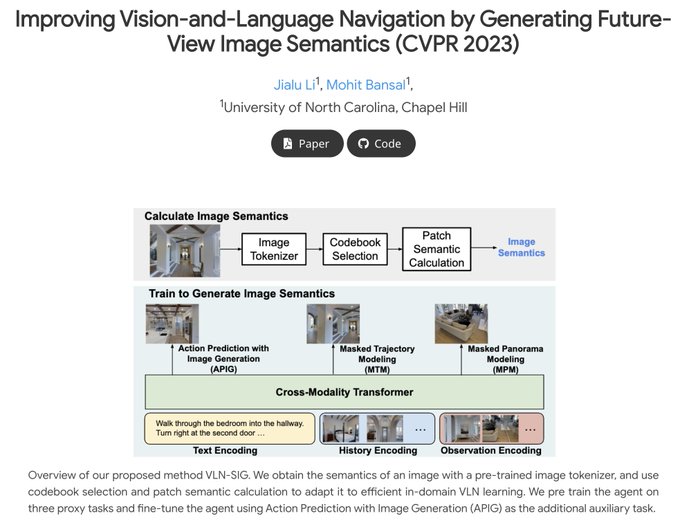

#CVPR2023

, sadly I wasn't able to come to

@CVPR

in-person due to visa issue 😭, but I'm excited to present our work "Improving VLN by Generating Future-View Image Semantics" remotely. Welcome to our posterboard

#245

Jun21 10:30-12pm PT!

Talk recording:

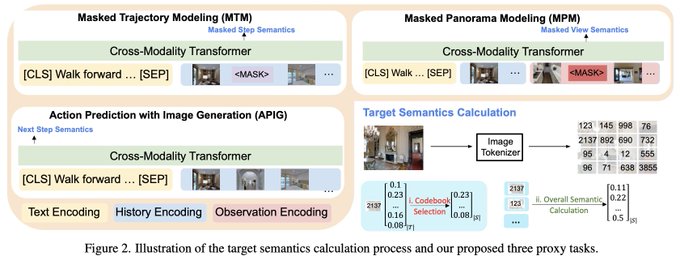

Excited to share my #CVPR2023 paper: “Improving Vision-and-Language Navigation by Generating Future-View Image Semantics”!

We equip the agent with the ability to generate the semantics of future views to aid action selection in navigation.

w/ @mohitban47 🧵

2

21

68

3

18

84

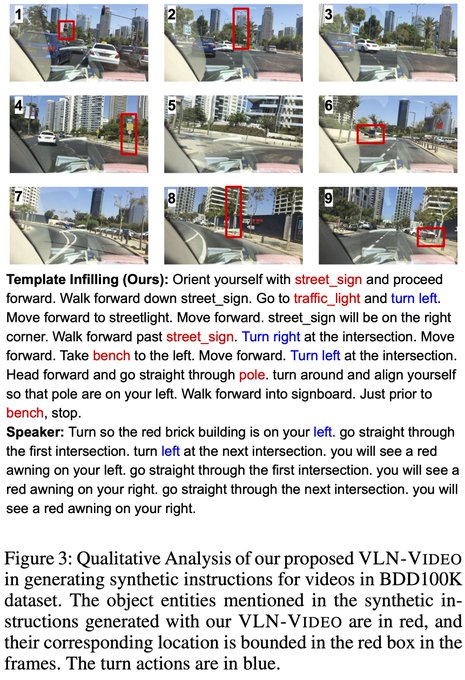

🎉Excited to share my new #AAAI2024 work “VLN-VIDEO: Utilizing Driving Videos for Outdoor Vision-and-Language Navigation”!

Diverse outdoor envs in videos, w/ generated ins+actions w/ image rotation similarity -> SotA outdoor VLN

@AishwaryaPadma4 @gauravsukhatme @mohitban47 🧵

1

23

73

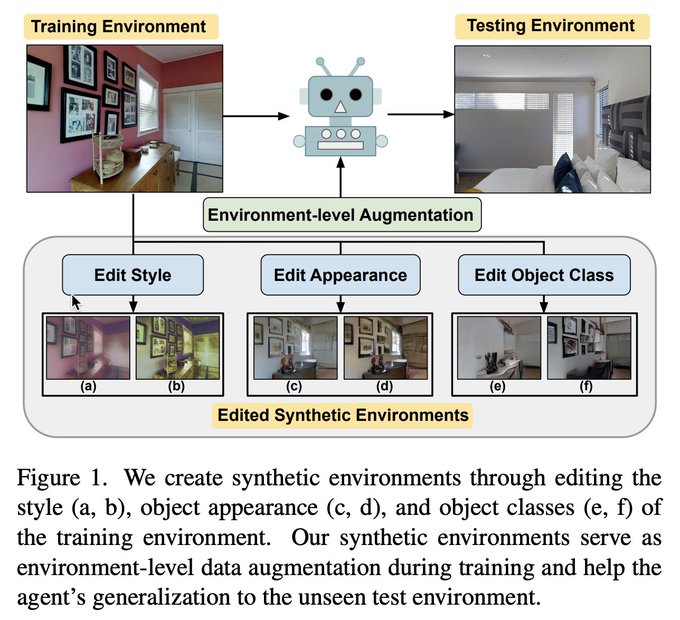

Excited to share my #CVPR2022 paper: “EnvEdit: Environment Editing for Vision-and-Language Navigation”!

We introduce SotA VLN env-augmentation based on style transfer, object class, appearance editing for better generalization

w/ @HaoTan5 @mohitban47 🧵

3

26

72

🎉Excited to share that 𝗣𝗮𝗻𝗼𝗚𝗲𝗻 has been accepted to #NeurIPS2023! We create diverse panorama environments for VLN via recursive image outpainting, improving SotA agents on R2R, R4R, CVDN unseen envs. Looking fwd to meeting you all in New Orleans!

cc @mohitban47 @uncnlp

0

14

55

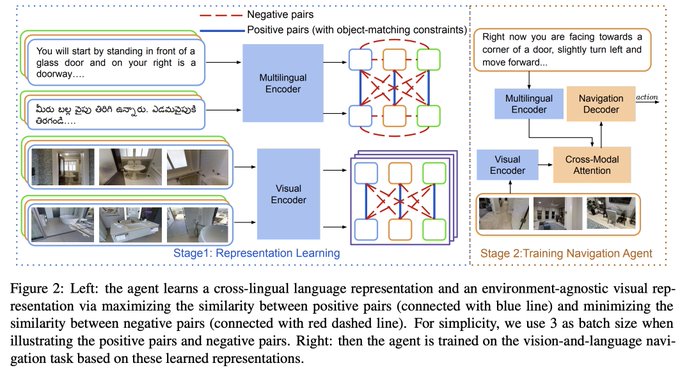

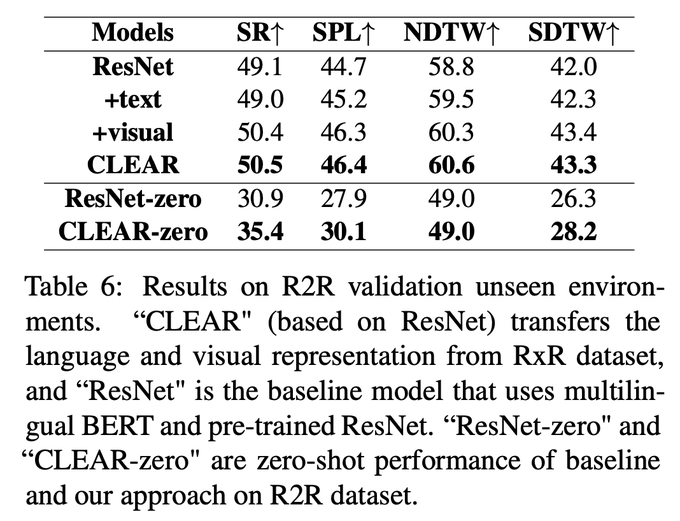

Happy to share my #NAACL2022 Findings paper: “CLEAR: Improving Vision-Language Navigation with Cross-Lingual, Environment-Agnostic Representations”!

We use the connection btw the same object in diff env+lang for generalizable multilingual VLN

@haotan5 @mohitban47 🧵

1

19

54

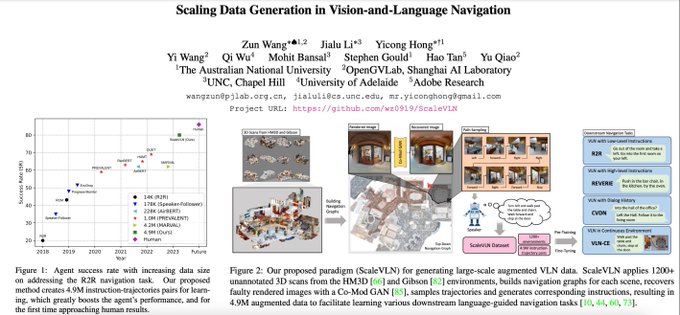

Check out our new #ICCV2023 VLN paper that I co-led with Zun and Yicong! By scaling to 4.7M generated data, we achieve SotA on multiple VLN datasets (R2R, CVDN, REVERIE, R2R-CE) & reduce the long-lasting generalization gap btw seen & unseen envs to <1%, close to human performance!😀

Excited to share our new #ICCV2023 paper: “Scaling Data Generation in Vision-and-Language Navigation”!

We reduce the long-lasting generalization gap between navigating in seen & unseen scenes to < 1%, & approach human performance for the 1st time! 🥳

🧵

3

15

60

0

15

46

Thanks so much for sharing our work!🥰

2

4

45

Our ScaleVLN paper was selected as an ORAL at #ICCV2023!🥳

We scale VLN data to 4.7M high-quality data, w/ better graph connectivity & achieve near-human SotA generalization! Meet me (zoom) & @ZunWang919 @ poster session Oct5 2:30-4:30 & oral session 4:30-6

2

13

39

Check out our #NAACL2021 work😃 (today 11:40-13:00 PDT in session 3D) --> “Improving Cross-Modal Alignment in Vision Language Navigation via Syntactic Information” with @HaoTan5 @mohitban47 (@uncnlp)!

Paper:

Code:

1/n

1

14

36

I'm excited to present our work at my first in-person @CVPR --> EnvEdit, which introduces SotA VLN env-augmentation based on style transfer, object class, appearance editing for better generalization.

Our poster ID is 204b in #CVPR2022 Session 3.2, Jun 23, 2:30-5pm

@HaoTan5 @mohitban47

0

8

29

I'll be at #NeurIPS2023 from Mon-Fri😀. Happy to talk about VLN, image generation, or anything else.

On Wed (5 - 7pm CST), I'll present PanoGen--creating diverse 360-degree panoramic environments via recursive outpainting for VLN.

0

9

26

Thanks so much for your advice and encouragement along the way! There's no way I could have accomplished this research without your and Claire’s help!

Proud moment when the student you advised gets her first main conference paper @emnlp2020. Congrats @JialuLi96! You will do great at @uncnlp!

3

2

83

1

1

8

@CVPR (Thanks to Jaemin @jmin__cho and Abhay @AbhayZala7 for helping set up my poster and helping me present remotely😀)

cc. @mohitban47 @uncnlp @unccs

0

0

7

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

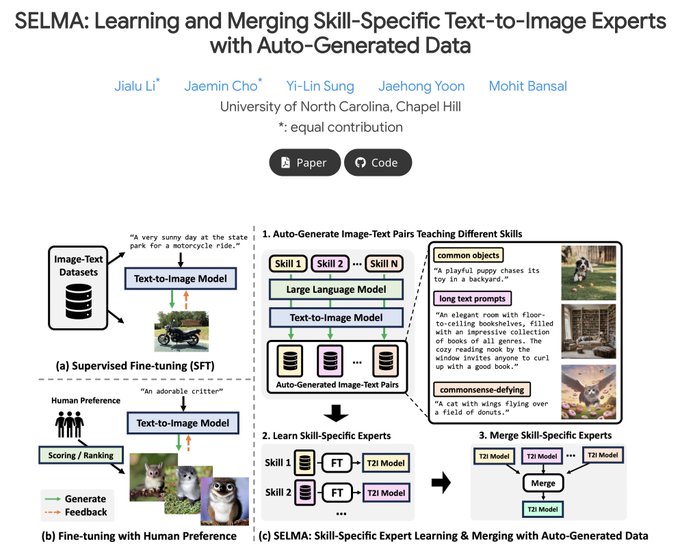

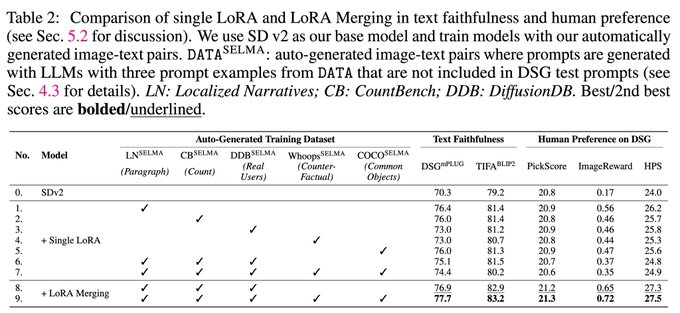

(c) Given the image-text pairs, first we independently train multiple skill-specific T2I LoRA expert models specialized in different skills.

(d) Then, we merge the LoRA expert parameters to build a joint multi-skill T2I model while mitigating the knowledge conflict from different

1

0

4

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

We show qualitative examples from DSG test prompts requiring different skills. SELMA helps improve SDXL in various skills, including counting, text rendering, spatial relationships, and attribute binding.

1

0

3

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

On three T2I models (SD v1.4, v2, XL), SELMA substantially improves 5 different metrics (e.g., +6.9% on DSG, +2.1% TIFA, +0.4 Pick-a-Pic, +0.39 ImageReward, +3.7 HPS, on SDXL), as well as human preference over the original backbone.

1

0

4

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

Co-led with @jmin__cho, and wonderful collaboration with @yilin_sung @jaeh0ng_yoon @mohitban47! @uncnlp @unccs

Check out more details of our paper at:

And our code is available at:

Diffusers checkpoints coming soon!

0

0

4

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

SELMA’s strategy of learning & merging skill-specific LoRA experts is more effective than training a single LoRA model on multiple datasets. This indicates that merging LoRA experts can help mitigate the knowledge conflict between multiple skills.

1

0

4
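The idea of merging skill-specific LoRA experts can be illustrated with a small sketch. The thread does not say how SELMA merges the expert parameters, so the uniform averaging of each expert's low-rank update below is an assumption, and `lora_delta` and `merge_experts` are hypothetical helper names rather than anything from the SELMA code.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product, enough for a toy example."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_delta(B: Matrix, A: Matrix, scale: float = 1.0) -> Matrix:
    """Weight update contributed by one LoRA expert: scale * (B @ A)."""
    return [[scale * v for v in row] for row in matmul(B, A)]


def merge_experts(base: Matrix, experts: List[Tuple[Matrix, Matrix]],
                  scale: float = 1.0) -> Matrix:
    """Add the uniform average of all expert updates to the base weight.

    Assumption: simple averaging; the original thread does not specify
    the merging rule.
    """
    deltas = [lora_delta(B, A, scale) for B, A in experts]
    n = len(deltas)
    return [
        [w + sum(d[i][j] for d in deltas) / n for j, w in enumerate(row)]
        for i, row in enumerate(base)
    ]


# Two rank-1 experts acting on a 2x2 weight matrix.
base_weight = [[0.0, 0.0], [0.0, 0.0]]
expert_a = ([[1.0], [0.0]], [[2.0, 0.0]])  # (B, A) for a first skill
expert_b = ([[0.0], [1.0]], [[0.0, 4.0]])  # (B, A) for a second skill
merged = merge_experts(base_weight, [expert_a, expert_b])
```

Averaging the full updates `B @ A`, rather than averaging the `A` and `B` factors separately, keeps each expert's contribution intact before the updates are combined.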

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

4 stages of SELMA: (a) given a description and three in-context examples about a specific skill, we generate prompts to teach the skill with an LLM, while maintaining prompt diversity via text-similarity-based filtering.

(b) Next, given the generated prompts, we generate training

1

0

3
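Stage (a) mentions keeping generated prompts diverse via text-similarity-based filtering. A minimal sketch of that kind of filter follows; the thread does not name the similarity measure or threshold, so the token-level Jaccard similarity, the `0.6` cutoff, and the function names here are illustrative assumptions only.

```python
from typing import List


def jaccard(a: str, b: str) -> float:
    """Token-set Jaccard similarity (assumed stand-in for the real metric)."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def filter_diverse(prompts: List[str], threshold: float = 0.6) -> List[str]:
    """Greedily keep a prompt only if it is not too similar to any kept prompt."""
    kept: List[str] = []
    for prompt in prompts:
        if all(jaccard(prompt, other) < threshold for other in kept):
            kept.append(prompt)
    return kept


candidates = [
    "three red apples on a table",
    "three red apples on a desk",   # near-duplicate of the first prompt
    "a sign that says hello",
]
diverse = filter_diverse(candidates)
```

With these inputs, the second prompt shares five of seven distinct tokens with the first and is dropped, while the third is kept.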

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

Interestingly, we find that finetuning on auto-generated data matches (+ sometimes outperforms) the performance of finetuning on GT data. This empirically demonstrates the strong effectiveness of auto-generated data, which doesn’t need any human annotation.

1

0

3

@jmin__cho @yilin_sung @jaeh0ng_yoon @mohitban47 @uncnlp @unccs

We also find intriguing weak-to-strong generalization in T2I models.

Fine-tuning a strong T2I model (SDXL) on images generated with a weaker T2I model (SD v2) improves the performance on all 5 metrics.

1

0

3