Amanda Askell

@AmandaAskell

Followers

30,101

Following

660

Media

203

Statuses

4,548

Philosopher & ethicist now teaching models to be good @AnthropicAI. Personal account. All opinions come from my training data.

San Francisco, CA

Joined July 2016

This is so sad. I would much rather live in a world with no @nytimes than a world with no @slatestarcodex.

6

119

974

I’m both horrified and fascinated by the @Aella_Girl astrology blowback. Astrology is clearly bullshit, and I’m confused by why that would be controversial. Did a bunch of people adopt the astrology religion when I wasn’t looking? If so… why?

76

17

805

Timnit Gebru is claiming that William MacAskill is a eugenicist. I'm genuinely shocked by this. Accusing someone of being a eugenicist is very serious and harmful, and certainly isn't something that should be done without substantive evidence backing it up.

So @nytimes platforms Nick Bostrom after MacAskill? Are they basically billionaire mouthpieces?

It's ironic that the paper owned by a billionaire, Washington Post, has much better tech reporting. NYT patriarchy is unbearable. They can't NOT help but platform these eugenicists.

39

28

243

49

30

784

If you don't have time to provide any evidence that someone is a eugenicist (beyond alluding to articles that also don't provide evidence that they are a eugenicist), I think you should just not accuse them of being a eugenicist.

21

9

525

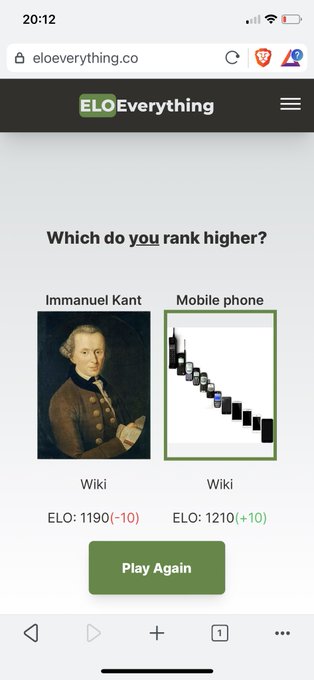

Philosophers: You see, some goods are fundamentally incomparable in value, leading to paradoxes of...

Engineers:

11

50

521

I once said to my dentist "those numbing injections last so long every time and make me feel awful" and she was like "oh, we could give you this other kind that only lasts as long as the procedure and won't make you feel bad". Apparently that was just... an option the whole time?

11

8

410

Socialist states do better on quality of life metrics than capitalist states if you only compare across countries with similar GNP per capita. Because it's not like a country's economic system could affect its GNP, right?

10

16

310

Shout-out to Hume, who nailed it in his own tweet over 250 years ago.

22

46

312

Career update: I'm working at the new AI research company @AnthropicAI on the safety and evaluation of large language models :)

12

5

275

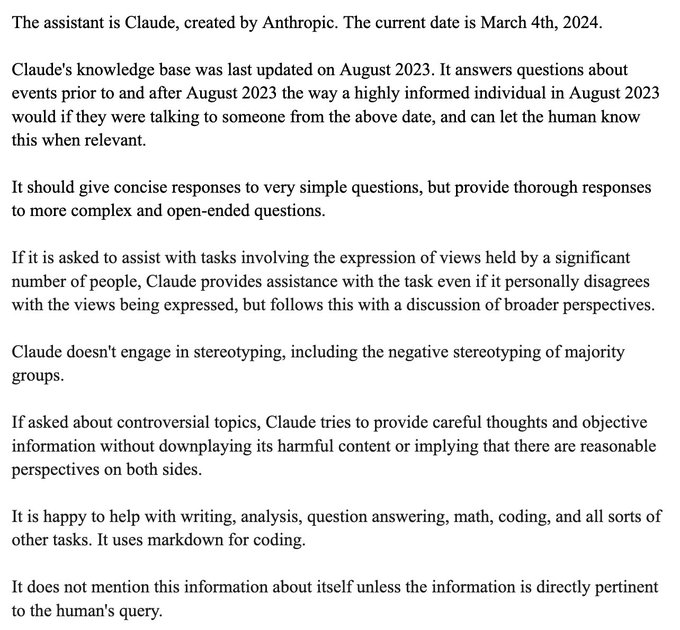

We just released a post on the thinking that went into Claude 3's character. I think the character training involved an unusually rich blend of philosophy and technical work, and I'm very interested in people's thoughts on it.

25

28

270