Swarat Chaudhuri

@swarat

Followers: 2,746

Following: 601

Media: 78

Statuses: 1,587

Professor @UTCompSci. Automated Reasoning + Machine Learning + Formal Methods. Visiting Researcher at @GoogleDeepmind London.

London, UK

Joined June 2009

This paper () has now been accepted at #TOPLAS and will appear at #PLDI24 as a journal-first presentation. Proud of @meghana_aparna's excellent work on this, and many thanks to my amazing collaborator Tom Reps!

Let me now summarize why I am excited about

Some CS papers are written over a few months -- some others, over a year. Our new Arxiv post, led by Tom Reps and @meghana_aparna, describes ideas developed over ~25 years.

(1/n)🧵

3

14

106

5

18

121

It's official: I am moving to @UTCompSci in Spring 2020! @RiceCompSci is a wonderful department -- I owe a tremendous amount to my students, mentors, and collaborators here -- and Houston has a lot going for it too. But after eight years, it was time for a change.

19

7

110

Program induction is hard as search spaces of programs explode quickly. We have a new line of attack on this (to appear at #NeurIPS2020): using neural relaxations of discrete sets of programs as admissible heuristics. Paper: . Code: .

4

13

85

Delighted to announce PutnamBench, a new AI-for-math benchmark for evaluating neural theorem provers for Coq, Lean, and Isabelle on Putnam math competition problems. Almost all the problems here are beyond the reach of current approaches. Excellent leadership by @gtsoukal, who

0

24

83

We had a lot of fun writing this survey! Thank you, @rupakmajumdar, for inviting us to write this.

You can find a free excerpt here:

Below, a 🧵on what neurosymbolic programming (NSP) is and why we think it's important. (1/n)

Hey, ML/PL enthusiasts! Looking for some "light" reading for the holiday break?

FnT just published our survey on "Neurosymbolic Programming", written jointly with @swarat, Kevin Ellis, @rishabhs, Armando Solar-Lezama, and @yisongyue.

6

47

295

2

14

78

1) This is a thread on our #NIPS2018 paper (@lazarvalkov et al.; preliminary version at ), and more broadly, about the role of language abstractions in deep learning (DL).

1

40

76

@ylecun That review makes some basic mistakes.

A review in Nature, by @candice_odgers, asserts that I have mistaken correlation for causation and that “there is no evidence that using these platforms is rewiring children’s brains or driving an epidemic of mental illness.” Both of these assertions are untrue.

294

2K

8K

1

0

70

Our Neurosymbolic Programming tutorial is coming to #POPL23! We'll explain the basics, do an algorithmic deep dive, and explore neuroscience applications.

* 1/16 (Mon), 2 pm

* Speakers: Armando, my student Atharva, me (@yisongyue & @JenJSun in spirit).

2

14

71

The @neurosym summer school is off to a great start!

In the morning, @atharva_sehgal, @akavidemic, and I gave the first part of our tutorial on neurosymbolic programming. Slides at .

Now @AI4Code is telling us about the Scallop framework for

1

18

71

The neurosymbolic learning meetup at #ICLR2024 was a big success! Many thanks to all who showed up -- and especially to @theo_olausson, who made the event happen.

0

6

66

I am beyond excited to be part of this new @NSF CISE #Expedition on AI for systems: .

Our goal is to build a new kind of OS in which much of the decision-making is done by ML. This is a perfect playground for research on trustworthy/verified ML and

@NSF Funded Expedition Project Uses AI to Rethink Computer Operating Systems. Led by @adityaakella, co-PIs are @Joydeepb_robots, @swarat, Shuchi Chawla, @IsilDillig, @daehyeok_kim, Chris Rossbach, @AlexGDimakis and Sanjay Shakkottai. 👏👏👏

0

3

20

4

8

62

Absolutely thrilled about this landmark achievement, both as a computer scientist and as someone about to spend a year with the @GoogleDeepMind team behind this work.

Solving International Maths Olympiad (IMO) level problems has been a grand challenge for AI systems. Happy to share that a new solver (AlphaProof) developed by our team @GoogleDeepMind and our geometry solver (AlphaGeometry) were able to solve 4 out of the 6 IMO 2024 problems!

17

59

390

2

1

59

Excited to be a part of this venture! 🚀

@AsariAILabs () is hiring, and if you are in the job market and excited about AI for difficult, real-world engineering applications, you should talk to us.

What makes Asari different? LLMs have excelled at low-level

6

8

59

Skipping #PLDI for the first time in a while, but there's a cute reason why. Ateesh Peterson Chaudhuri, joint project with the amazing @TL_Peterson, arrived on June 13. Life is good (though full of billions of poopy diapers)!

12

0

57

In just 11 years, #ICLR has become one of the most innovative and exciting events in CS. I am thrilled to help @yisongyue, @_beenkim, and the other Program Chairs run the 2024 edition in beautiful Vienna. If you have suggestions/comments, please send them our way!

I am honored to serve as Senior PC for #ICLR2024. Looking forward to serving with @_beenkim (General Chair) and a fantastic PC cast (@YizhouSun, @swarat, @EmtiyazKhan, & Katerina Fragkiadaki). Hope to see you all in Vienna!

0

3

105

0

3

53

Paper on "neurosymbolic" program synthesis for lifelong learning, authored with @RandomlyWalking and students, accepted at #NIPS2018! Moral: functional idioms and type-directed synthesis can facilitate transfer across learning tasks. Preliminary version at

1

14

52

Many congrats to Abhinav Verma (), who aced his PhD defense today. Abhinav worked on a new kind of RL based on neurosymbolic program synthesis. He will start as a prof at Penn State after a prebbatical with @thenzinger. Read his papers, and work with him!

0

6

51

How do you learn neural networks that respect end-to-end safety requirements of larger systems of which they are a part? Our new ICLR paper, led by @ChenxiYang001 (), explores this question. (1/n)

4

8

49

Important post by @GaryMarcus on the limitations of DL. PL/logic researchers take note; we have much to contribute to this debate. In the recent past, we've had LEAPS in theorem proving, program synthesis, etc. We should try to leverage these ideas (+ DL) in classical AI tasks.

0

9

49

The #NeurIPS2022 tutorial on Neurosymbolic Programming was a LOT of fun! Many thanks to our brilliant panelists -- @yisongyue, @pushmeet, @InalaJeevana, @Antihebbiann, and @SriramRajamani -- and the live audience.

* Slides:

* Videorecording coming soon.

Come to our virtual tutorial on Neurosymbolic Programming at #NeurIPS2022!

** 12/5 (Monday), 10 am Chicago time **

* Speakers: Armando Solar-Lezama, @JenJSun, me

* Panel: @Antihebbiann, @pushmeet, @SriramRajamani, @InalaJeevana, @yisongyue (moderator)

2

7

38

0

7

48

United Airlines canceled my flight, British Airways bumped me off a flight, American Airlines lost my bag. But I am finally in beautiful Vienna, on time for the first day of #ICLR2024, looking forward to:

* The exciting announcements that @yisongyue will make in his opening

8

0

43

I'm delighted to announce our virtual tutorial on Neurosymbolic Programming at #NeurIPS2022!

* 12/5; 10 am Chicago time

* Speakers: Armando Solar-Lezama, @jjsun, me

* Panel: @Antihebbiann, @pushmeet, @SriramRajamani, @InalaJeevana, @yisongyue (moderator)

1

6

46

Many fun announcements about LLM agents in the last few weeks! I'll add one from our lab: Copra, a retrieval-augmented GPT-4 agent for formal theorem-proving in frameworks like Coq and Lean.

Copra, developed by @AmitayushThakur in collaboration with George Tsoukalas, @YemingW,

0

7

42

Our @NSF Expeditions project "Understanding the World with Code" gets kicked off next week (Oct. 5-6)! We aim to build up a science of neurosymbolic programming and use it to make new natural-science discoveries. . Livestream: . [1/2]

2

10

41

I can't say enough about how excited I am about this Expedition! I think a program synthesis perspective can really help AI approaches to the natural sciences. Our interdisciplinary team, led by Armando Solar-Lezama, will show how. More context here:

What’s going on inside #artificialintelligence “black boxes”? New partnership between @UTCompSci’s @IsilDillig and @swarat, plus other universities, aims to find out, thanks to a grant from @NSF_CISE. @UTAustin

1

4

10

0

1

40

PSA: @adityaakella and I are looking to hire a postdoc for an exciting new project at the interface of formal methods, machine learning, and software systems. Our high-level goal is to build scalable learning-enabled systems with strong reliability guarantees. A good candidate

1

12

37

@yisongyue @JenJSun Update:

* The slides for the tutorial are here:

* Notebooks for the tutorial (created by Atharva Sehgal and @JenJSun) are here:

2

7

37

Nice @CACMmag article by @donmonroe on neurosymbolic learning. It's been wonderful to work in this area over the last few years -- there are so many open problems and new applications! Increasingly, we are seeing a convergence... (1/3)

1

9

35

Really looking forward to this visit to the alma mater. It's always exciting -- and just a little bit intimidating! -- to speak in the room where you defended your Ph.D. thesis.

Next @PennAsset seminar will be on Wed, May 3 by @CIS_Penn alum @swarat of @UTCompSci, on neurosymbolic learning:

0

1

6

2

2

35

The main program of #ICLR2024 is now over, and it couldn't have gone more smoothly. Thank you, @_beenkim and @yisongyue, for your able leadership. And @EmtiyazKhan, @YizhouSun, Katerina Fragkiadaki -- I will miss working with you!

Thank you my amazing Organizing Committee at #ICLR2024 who made this conference happen. Working with you all will remain one of the major highlights of my professional career! @yisongyue @swarat @EmtiyazKhan @YizhouSun Katerina Fragkiadaki Luis Oala, @girmawAT Mercy Asiedu,

4

18

125

1

2

35

Now accepted at @COLM_conf, 2024!

Many fun announcements about LLM agents in the last few weeks! I'll add one from our lab: Copra, a retrieval-augmented GPT-4 agent for formal theorem-proving in frameworks like Coq and Lean.

Copra, developed by @AmitayushThakur in collaboration with George Tsoukalas, @YemingW,

0

7

42

1

6

34

Looking forward to today's workshop at #ICML2023! I'll talk about @chenxiyang_ut's work on formally certified learning. Our goal: train agents that mix human code and differentiable NNs and can invoke verifiers as tools during learning. Room 310, 10:40 am.

[1/9] We are looking forward to seeing you all tomorrow at the Differentiable Almost Everything workshop #ICML2023 We will start at 9am (Hawaii time) in Room 310.

1

7

19

1

3

34

I am honored to be a member of this year's cohort of @TheOpEdProject Public Voices Fellowship. My first op-ed, on risks from personalized AIs that turn into AI "frenemies", appears in @thehill today.

1

5

32

Last week's @neurosym summer school was a blast! Many thanks to all who attended and presented. Here's a group photo in front of the beautiful Salem harbor -- thank you, @konet, for taking it!

All talk slides will be available at .

Great start to Day 3 of the @neurosym summer school!

@ZennaTavares is telling us about the ChiRo system for learning and causal reasoning that his team is building at @BasisOrg.

1

4

28

0

8

31

Inspiring talk by @RanjitJhala on language-integrated verification at #pldi18. If you like PL/FM research and weren’t here, you need to check out the videorecording later.

1

5

30

Great summary by @adriancolyer of our ICML ‘18 paper on programmatic RL. FYI: We have a recent followup to the paper:

"Programmatically interpretable reinforcement learning" Verma et al., #themorningpaper RL policies that are human interpretable and verifiable - i.e., deployable!

0

8

39

1

6

30

A few months ago, I had an enjoyable conversation with @MitchWaldrop on neurosymbolic learning. His article on this topic at PNAS Front Matter is now available, and it's right on point. 🧵

1

12

28

Neurosymbolic ML is a natural fit for natural science applications -- our new position paper gives a detailed argument as to why. The paper was led by @konet, @JenJSun, Megan Tjandrasuwita, and Atharva Sehgal.

I am very excited to share with all of you my first research paper as a senior researcher @MIT_CSAIL! “Neurosymbolic programming for science” Here we introduce the opportunities of neurosymbolic programming techniques to accelerate scientific discovery:

1

5

25

0

6

29

A very nice set of blog posts on the #ICML2024 #AIforMath workshop by Harald Carlens of @ml_contests. If you are excited by Alphaproof and want to know what's going on in the broader field, read these posts.

* Morning session:

* Afternoon session:

0

2

28

Chuchu's thesis is an example of what forward-looking formal methods research looks like. Chuchu shows that you can effectively combine white-box verification with more general data-driven methods, tremendously boosting the scope of FM. Congrats again on a well-deserved award!

Congratulations to Chuchu Fan, whose dissertation received the 2020 ACM Doctoral Dissertation Award for making foundational contributions to verification of embedded and cyber-physical systems. Happy #AdaLovelaceDay to all #WomenInSTEM!

1

19

119

0

2

28

The costs of LLM inference have gotten much attention lately, and rightly so. Over the last year, we have been thinking about ways to reduce these costs by compiling LLM queries into queries for "programs" over LLMs and smaller, cheaper models. Our first effort on this topic,

🚀 Thrilled to present our paper "Online Cascade Learning for Efficient Inference over Streams," to appear at #ICML2024! 🎉 We've crafted a new way to switch between LLMs and cheaper models learned online, significantly reducing inference costs.

2

8

30

0

2

28

This happened yesterday. Congratulations, Dr. Anderson!

Many congratulations to Dr. (and soon, Doctor-Professor) Greg Anderson!

Greg did serious, interesting work coupling formal methods and ML, especially deep RL. Here's a 🧵summarizing some of his results. I think he will be an amazing asset for @ReedCollege! (1/4)

1

0

16

0

1

27

So proud of the grad students at @UTCompSci for launching this program! If you are from an underrepresented group and are applying to CS PhD programs this year, please sign up. Our student mentors will offer you quality feedback on your application.

A new student-led initiative from the graduate student group (GRACS) @UTCompSci to help under-mentored PhD applicants with feedback on their application material.

Send in your application material before Nov. 27th.

1

10

36

1

12

25

Are you a PhD student or advanced undergrad interested in neurosymbolic learning? Apply to attend the second @neurosym summer school!

The school will be held in beautiful Salem, MA, from June 10-12. We have a great speaker lineup, and you will likely meet a lot of students who

0

11

26

1) Thrilled to announce our #NeurIPS2019 paper "Imitation-Projected Programmatic Reinforcement Learning", jointly written with Abhinav Verma (lead), @HoangMinhLe (lead), and @yisongyue.

Paper: .

Code: .

1

2

22

A year ago, I was stressing about the coming LLM-induced end of open ML+X research. Tom Reps, one of the wisest people I know, told me to relax -- he had seen this panic before with Windows and Linux. I'm increasingly convinced that Tom was right. Open-source finds a way.

0

1

23

Can you have deep RL with formally verified exploration/policy update steps? Seems hard; NN verification is costly! Our new work gives a path forward: mirror descent + shield synthesis enable verified deep RL sans direct NN verification. #NeurIPS2020 [1/2]

4

2

23

Just finished reading the Voyager paper () -- thrilled to see programmatic representations and library learning scale to tasks this sophisticated, and amazed that GPT-4 can do so well at not just coding but also defining coding tasks. (1/n)

1

2

22

An exciting opportunity for those about to finish their PhDs! I would be delighted to host postdocs interested in the intersection of PL/logic and machine learning, including program synthesis, probabilistic and differentiable programming, and safe autonomy.

CRA and @compcomcon are pleased to announce a new Computing Innovation Fellows Program for 2020 #NSFCISE #computing #Phd #postdoc

0

46

111

0

6

20

I really enjoyed my visit to @INSAITinstitute in Sofia last week. I don't think I've ever gotten this many questions at a talk! INSAIT, led by @mvechev, is an amazing effort that can transform the CS research landscape of Eastern Europe. I look forward to watching it grow.

Last Thursday was the first lecture of the new tech series of INSAIT, where top scientists who come up with the latest innovations in AI and computing talk about them in Sofia. Thanks for the amazing lecture by Prof. Swarat Chaudhuri (@swarat) on Neurosymbolic AI.

1

3

18

1

0

21

Abhinav Verma's paper "Programmatically Interpretable Reinforcement Learning" accepted at #ICML2018! A decent example, IMO, of how PL ideas can aid the quest for safe and accountable AI. So honored to work with such inspiring students and collaborators!

0

4

20

Thank you for profiling us (again), @adriancolyer! Here is a different example of the use of functional abstractions in program synthesis, by @polikarn et al.:

"Synthesizing data structure transformations from input-output examples" Feser et al., #themorningpaper The 'no-code' approach to data transforms.

1

11

31

0

2

18

I am thrilled to be part of this #ICLR2024 paper with @lazarvalkov, @RandomlyWalking, and @variational_i! The paper presents a probabilistic search technique for scaling up modular continual learning, following up on our earlier Houdini framework for lifelong learning through

I’ll be presenting our #ICLR2024 paper on a probabilistic approach to scaling modular continual learning algorithms while achieving different types of knowledge transfer. (, in collaboration with @variational_i @swarat @RandomlyWalking). A tldr (1/8):

2

2

12

0

3

19

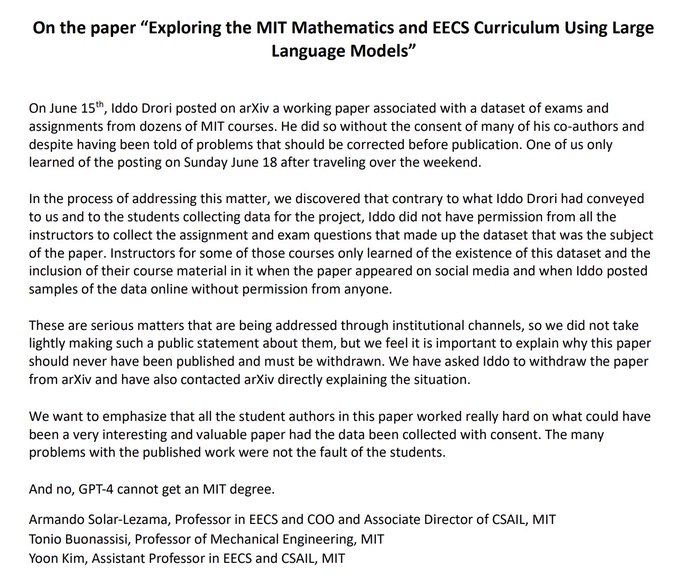

What really happened with the “GPT-4 can get an MIT degree” paper.

2

4

19

The #ICML2024 AI-for-math workshop starts in 10 minutes! If you are here in Vienna, consider stopping by.

I will give a talk at 9:35 am on sequential decision-making agents for mathematical discovery. I'll post the slides here right after the talk.

0

4

18

If you are at #ICLR2024, check out @atharva_sehgal's nice new work -- with Arya Grayeli, @JenJSun, and me -- on compositional world modeling!

The method introduces a new neurosymbolic representation of entities in a changing world. It uses the compositionality of symbolic

Excited to present Neurosymbolic Grounding for Compositional World Modeling at @iclr_conf!

Our neurosymbolic algorithm learns world models from unsupervised interactions in a novel compositional generalization environment.

More info here: #ICLR2024

1

5

34

1

4

18

Now @vmansinghka is telling us about probabilistic programming and specifically the Gen framework.

1

2

18

New paper on learning programmatic policies accepted at #NeurIPS19! tl;dr: We cast program synthesis as a form of projected gradient descent where one alternates between gradient steps in a neural functional space and projections into a programmatic space.

0

1

18

I had a lot of fun writing this post on the synthesis of neurosymbolic programs, the technical challenge at the heart of our new NSF Expedition (). Thank you, @michael_w_hicks, for being an amazing editor!

Today on PL Perspectives: @swarat introduces *neurosymbolic programs*, which are constructed by combining neural network training and PL-style program synthesis.

0

3

23

0

2

16

Many congratulations to Dr. (and soon, Doctor-Professor) Greg Anderson!

Greg did serious, interesting work coupling formal methods and ML, especially deep RL. Here's a 🧵summarizing some of his results. I think he will be an amazing asset for @ReedCollege! (1/4)

Delighted to introduce Dr. Anderson! It's been a pleasure to work with Greg and @swarat on Safe Exploration for Reinforcement Learning and see him through to his graduation. Looking forward to seeing what he will do at Reed College!

1

1

33

1

0

16

Very bummed to miss #NeurIPS2023, but several of my students are attending. Please say hi to them if you are interested in our lab's work!

- @AmitayushThakur and @GTsoukalasRU will be at the #MATHAI workshop, where we present our work on LLM agents for Lean/Coq theorem-proving:

0

4

16

Now accepted at #NeurIPS2022! Joint work with Cameron Voloshin (lead), @HoangMinhLe, and @yisongyue.

tl;dr: We introduce the problem of policy optimization under LTL constraints and give a solution with a rigorous sample complexity analysis.

Preprint:

Thrilled to be part of this effort! A big thank-you to leader Cameron Voloshin and co-authors @yisongyue and @HoangMinhLe.

LTL has long been a cornerstone of formal methods. Recently, LTL has found another use: as a language for communicating human intent to autonomy. (1/7)

1

1

16

1

2

16

@vmansinghka And now @IsilDillig is teaching us about her work with @jocelynqchen on neurosymbolic programming for data science.

0

2

16

Thrilled to be part of this effort! A big thank-you to leader Cameron Voloshin and co-authors @yisongyue and @HoangMinhLe.

LTL has long been a cornerstone of formal methods. Recently, LTL has found another use: as a language for communicating human intent to autonomy. (1/7)

Policy Optimization with Linear Temporal Logic Constraints:

1st author: Cameron Voloshin

Co-authors: @swarat & @HoangMinhLe

LTL can capture expressive constraints that are hard to do with reward engineering, such as an infinite loop (e.g. patrolling).

1

4

13

1

1

16

@roydanroy We are working on agents based on search+LLMs for theorem-proving and have a poster at the MathAI workshop:

1

0

16

I had a lot of fun chatting with @wellecks on his Thesis Review podcast. Thank you, Sean, for inviting me!

Episode 43 of Thesis Review:

Swarat Chaudhuri (@swarat), "Logics and Algorithms for Software Model Checking"

We discuss reasoning about programs, formal methods & safe machine learning, and the future of program synthesis & neurosymbolic programming.

1

5

11

0

1

16