Siddharth Sharma

@siddrrsh

Followers: 2,965

Following: 2,761

Media: 31

Statuses: 999

CS @ Stanford. Building @mlfoundry. Prev @AWS, @Lux_Capital, @UniofOxford

Joined July 2020

Pinned Tweet

Introducing ambientGPT: an open-source and multimodal macOS foundation model GUI

Run GPT-4o and open-source models with full ambient knowledge of your screen.

Foundation models have long been confined to the browser. With ambientGPT, your screen context is directly inferred as

32

90

587

Re Llama3V: Firstly, we want to apologize to the original authors of MiniCPM. @AkshGarg03 and I posted Llama3V with @mustafaaljadery. Mustafa wrote the code for the project. Aksh and I were both excited about multimodal models and liked the architectural extensions on top of

50

42

268

Mustafa (@maxaljadery) and I are excited to announce MLXserver: a Python endpoint for downloading and performing inference with open-source models optimized for Apple Metal ⚙️

Docs:

6

5

106

Building on top of last week’s release, we introduce mlxcli. Build on top of Apple MLX (@awnihannun) and 🤗 (@reach_vb @julien_c) with mlxcli.

Usage: pip install mlxcli

Docs:

MLXcli achieves 20+ tok/sec on M2 Macs

2

17

93

Excited to be a part of this team! The best is yet to come.

We're excited to announce $80M in seed and Series A funding co-led by @sequoia and @lightspeedvp to further our mission of orchestrating the world’s compute capacity, making it universally accessible and useful.

How we can help 👇

3

8

128

2

1

48

Super excited to join Lux part-time with @graceisford and the rest of the @Lux_Capital team! Looking forward to thinking about frontiers in AI/ML.

3

3

31

.@maxaljadery and I authored a short textbook on RL to explain the concepts with our own style of pedagogy. We understand best by teaching and there's no better way to learn than trying to convey nuanced topics with as much simplicity as possible.

2

2

32

We’re excited about the future of open-source models and would love to hear any thoughts and/or suggestions on how we can take long-context further.

Shoutout to the Gemma team for their awesome work in building these open-source models! @OriolVinyalsML @clmt @JeffDean @koraykv

4

0

29

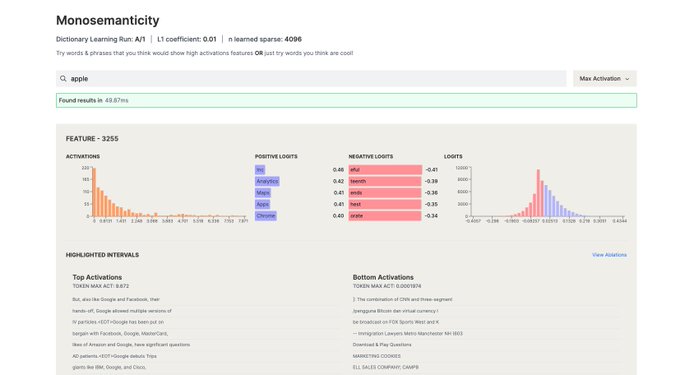

Hey all, last weekend @maxaljadery and I built a rapid indexing and 80x+ faster visualization layer to understand how groups of neurons (features) activate and cluster based on the latest data from @AnthropicAI's mechanistic interpretability research. 🧵

1

2

30

Amazing news! @graceisford is the best mentor I could ever ask for. She's made my time at @Lux_Capital super memorable and full of amazing learnings. There's no one who deserves it more.

1

0

23

Excited to announce my article on sparsity for LLMs with @graceisford, @DannyCrichton for @Lux_Capital

1

4

21

Having worked at @AWS, and with @AnthropicAI recently adding Claude to Bedrock, @maxaljadery and I built Python and TypeScript SDKs to interact with Anthropic’s models on AWS Bedrock. It makes it really easy to do all of the AWS auth and use Anthropic models in production. The

1

1

18

Hey folks - @maxaljadery and I are excited to launch : an open-source infrastructure for labeling multimodal data while enabling RLHF tagging and augmenting your existing training data at no cost.

1

2

14

Bullish NYC.

Today I’m thrilled to announce @Lux_Capital's NYC AI Directory & NYC AI Map - 2 resources for the burgeoning AI talent ecosystem

READ MORE👇

NYC AI Directory:

NYC AI Map:

30

53

362

0

2

14

orchestration is the future. haven't seen such a sick project in a while.

1

2

12

Great work is when you get in a flow state and genuinely enjoy the process of what you're creating, not when you fully pander to an institutional ranking or external arbiter.

0

1

10

@punwaiw @sofianeflarbi and I decided to give an upgrade by adding grade distributions 📕 ... check it out! 🧭

3

0

9

Excited to share this article I was featured in alongside @sophfuji @bryanhpchiang @isabelle_levent 🌲

0

0

9

Love to see people build on top of !

Run Apple MLX from your menu bar.

Introducing Pico MLX Server, a graphical frontend to download and start multiple(!) AI models locally on your Mac.

You can use it with any chat client you like (e.g. @PicoGPT) that uses the OpenAI API standard.

38

64

520

0

3

9

Presenting the greatest place on the planet to work.

@sama I’m super excited to have you join as CEO of this new group, Sam, setting a new pace for innovation. We’ve learned a lot over the years about how to give founders and innovators space to build independent identities and cultures within Microsoft, including GitHub, Mojang Studios,

1K

3K

32K

0

0

8

bullish on companies where the founder is a beast at Mathcounts

When I was in middle school I qualified for Nationals at MathCounts and I remember distinctly watching @0xShitTrader (CEO of Ellipsis), absolutely destroy in the Countdown round

That was when I realized I was very very good at math, but I was not Eugene

22

10

155

1

0

8

What a gift to the foundation model community.

0

1

7

Wish I could be there IRL - regardless it's gonna be epic!!

@SilasAlberti @bfspector @punwaiw @lktong_ @leithnyang @julianhquevedo @varunshenoy_ @andrewparkk @usygoosy @siddrrsh (IN SPIRIT)

+ LIZ, WILL

+ MORE SPECIAL GUESTS

2

3

18

0

0

6

I love GPU pods

1/ @SohamGovande, @jameszhou02, @jzhou891 and I spent the weekend building PodPlex: A platform for distributed training & serverless inference at scale

I'm very glad to say that we left $10,000 GPU credits richer and 36 hours of sleep poorer

more details in 🧵

11

13

136

0

0

6

Couldn't be a better time to read Bostrom's Superintelligence

1

0

6

We have a new version of Speed Insights coming.

The key evolution: it's an intelligent "Kanban Board" of what pages you should be optimizing.

… instead of maintaining TODO lists and issue trackers, let @vercel do it. Oh, and the issues "close" as data comes in in realtime 😁

37

37

786

0

0

3

Favorite Driver of all time: Ayrton Senna

Driver I dislike: Max Verstappen

Driver that grew on me: Charles Leclerc

Most overrated Driver: Fernando Alonso

Most underrated Driver: Nico Rosberg

The GOAT of F1: Michael Schumacher

2

0

5

Deep learning is unique in that the field has such high promise when customer-facing/in-production but we know so little about why certain optimizations create certain effects.

.@_sholtodouglas poses a challenge.

In the spirit of @natfriedman (whose Vesuvius Challenge was solved by a listener of my podcast - @LukeFarritor).

Can you figure out what the experts in a Mixture of Experts model are each specialized in?

"A wonderful research project to do:

18

42

485

0

0

5

Huge fan of @obsdmd - great interface and proves brutally optimized simplicity is what software should be centered around

Love letter to @obsdmd to which I very happily switched for my personal notes. My primary interest in Obsidian is not even for note taking specifically, it is that Obsidian is around the state of the art of a philosophy of software and what it could be.

- Your notes are

386

931

9K

0

0

5

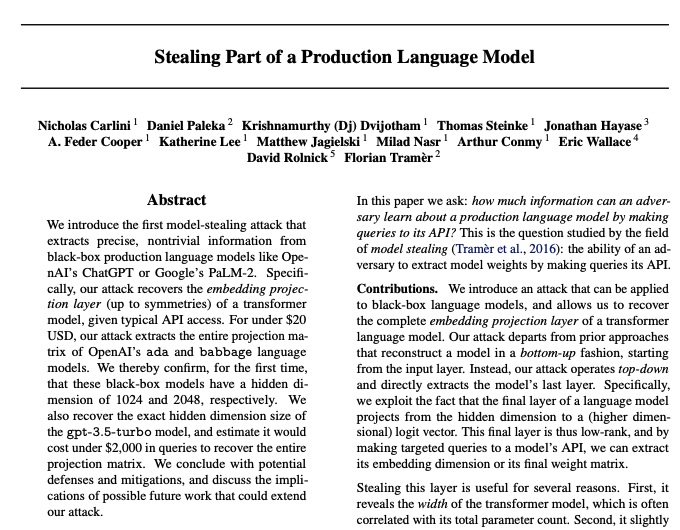

Wild that this is on Arxiv. LLM security is sure to be its own sector these days.

1

0

5

Great to see a community developing around this! @ollama, @GroqInc, and @vllm_project integrations on the way 🫡

2

1

4

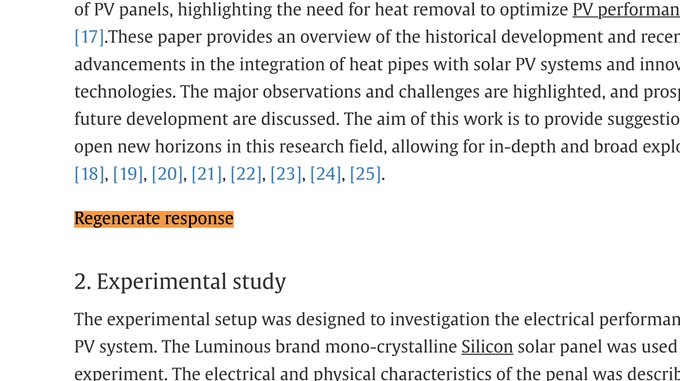

First @tab_delete, now @itsandrewgao... love to see Stanford students never settling for less than the truth.

PART TWO of #PaperGate! That #ChatGPT button "Regenerate response" often gets pasted into #Scientific Papers, via @gcabanac!!

A 🧵 of #peerreviewed scientific publications from reputable publishers like @sciencedirect @IEEEorg @ElsevierConnect

First up, a paper on solar

18

112

456

0

0

5

Excellent writing on decentralized/distributed training. Exciting times ahead!

0

0

5

Incentives rule the world. Kudos to @AravSrinivas and @perplexity_ai

0

0

4