Nebius

@nebiusai

Followers

2,218

Following

941

Media

149

Statuses

195

We provide high-end, training-optimized infrastructure for #AI practitioners. It's a boutique #GPU cloud with a great team and hyperscaler-grade UX.

Netherland

Joined June 2023

Don't wanna be here?

Send us removal request.

Explore trending content on Musk Viewer

ILL BE THERE TODAY

• 183182 Tweets

#DISEASEISCOMING

• 85552 Tweets

Racing

• 67769 Tweets

#GranHermanoCHV

• 66915 Tweets

Mel Gibson

• 66313 Tweets

Vikings

• 64863 Tweets

Luka

• 56585 Tweets

ILL BE THERE BY JIN

• 53744 Tweets

#IllBeThereOutNow

• 48544 Tweets

Rams

• 47900 Tweets

Romero

• 39822 Tweets

Klay

• 37206 Tweets

Ramon

• 32340 Tweets

連載マンガ

• 29463 Tweets

Yuri Alberto

• 24771 Tweets

DEAR KASIBOOK 28TH

• 24343 Tweets

Mavs

• 23040 Tweets

Puka

• 22884 Tweets

Memphis

• 19913 Tweets

Kupp

• 19603 Tweets

#MEGANACTII

• 19425 Tweets

Stafford

• 16399 Tweets

ジュニア

• 14998 Tweets

Wemby

• 14541 Tweets

Facemask

• 14156 Tweets

Darnold

• 13468 Tweets

Waldo

• 10905 Tweets

上げ底弁当

• 10555 Tweets

Last Seen Profiles

🎩 Optimizing inference with math: TheStage AI and its framework

The inference market has grown so significantly that inefficiencies between revenue and inference costs have emerged.

@TheStageAI

’s solution, which uses our infrastructure, is designed to

3

15

135

📣 Several weeks ago, our Product Director Narek Tatevosyan gave a talk at the

#TheAISummit

London. He shared an insider's perspective on the key steps, essential tools and challenges involved in training

#genAI

model from scratch. Watch the talk here:

2

18

80

Recraft

@recraftai

, recently funded in a round led by Khosla Ventures and former

@github

CEO Nat Friedman, is the first

#genAI

model built for designers. Featuring 20B parameters, the model was trained from scratch on Nebius AI. Here’s how:

5

6

69

💬 Join our next hands-on webinar on deploying a knowledge-based chatbot with

#RAG

in production! This implementation leverages

#opensource

tools and is powered by

@nvidia

H100 GPUs.

When?

May 16, 17:00 (GMT+2).

Register:

5

6

70

🧩Preserving knowledge in compact models:

#opensource

contributions of Unum x Nebius

To date,

@unum_cloud

has trained and open-sourced 4 models in partnership with us, all available on

@huggingface

for everyone to experiment with. Here’s the story so far:

1

3

63

🎬 We’ve filmed this video in Finland, the home of our

#datacenter

. Here, we built ISEG, the world's 16th most powerful

#supercomputer

— since then, we also constructed an 8,000-GPU supercluster. Take a look at our DC and learn more about hardware R&D:

1

5

51

Join our first meetup with

@mlopscommunity

on Thursday, April 11, in Amsterdam!

Filipp will cover infrastructure resilience of multi-node

#LLM

#training

, and Luka will discuss realtime standby energy waste prediction. Spaces are limited — register soon:

0

4

48

🌄 Unlocking the power of

#opensource

LLMs

Our expert team will unveil our

#inference

infra and share insights on navigating the token-as-a-service market. Register for the webinar for

#GenAI

builders, CTOs, PMs, data scientists and related roles:

7

7

53

Special offer for those training

#largemodels

: fight for the best price on your

#H100

cluster of 128+ GPUs! Till May 31, name your price for at least 14-day training and get a training-optimized infrastructure + free dedicated architect support as a bonus:

0

5

38

Just recently, we added

#Kubeflow

, a popular open-source platform providing an ML stack for

#K8s

. It consists of

@TensorFlow

,

@ProjectJupyter

notebooks, and other tools. You can deploy Kubeflow in your Managed K8s clusters on Nebius AI using this product:

2

3

37

🔄 Announcing Managed Service for MLflow in public preview

#MLflow

is a renowned industry tool that streamlines

#workflows

in the model dev cycle. We made MLflow more accessible to a broad audience of ML enthusiasts by providing it as a

#managed

solution:

3

3

33

🧪

#ML

experiments help you discover the most optimum model version for your specific use case. Read this article on our blog to learn about different types of

#experiments

and what you need to watch out for when conducting them:

0

5

20

🔥 Choosing

#storage

for

#deeplearning

: a comprehensive guide

Drawing from Nebius’ and our clients’ extensive experience, today’s guide by our own Igor Ofitserov aims to help engineers choose the most fitting storage solutions for deep learning:

0

3

17

No matter how ambitious your AI journey is - whether it’s a new

#LLM

, product based on

#Generative_AI

, or

#Computer_vision

- Nebius AI is here to help at every stage.

We are excited to have you join us!

0

3

17

Supporting CUDAMODE IRL Hackathon was thrilling. Our CBO

@RomanChernin

said a few words before we began — thanks to everyone for the follow-up discussions. Each researcher we spoke with was aware and involved in the field. It was nice seeing you, Andrej

@Karpathy

!

1

2

14

Thrilled to support CUDAMODE IRL Hackathon by

@Accel

,

@NVIDIA

and

@PyTorch

, featuring Andrej

@Karpathy

as a speaker! You can also apply for a spot.

11

0

10

🟢 Register among the first 50 to get your free

@TechCrunch

Disrupt ticket!

We’re sponsoring and giving away tickets. Just fill out this form among the first 50: . Note that the ticket will only be valid for the person whose name you indicated on the form.

6

0

13

Throwback to this past weekend, when we supported the HACK UK Hackathon hosted by

@a16z

,

@MistralAI

and

@cerebral_valley

. We provided teams with infra powered by H100 Tensor Core GPUs. Additionally, Boris Yangel, head of our LLM R&D team, participated as a judge.

0

3

11

✋ We’re at

@Ray_Summit_Live

in SF with our booth and a talk. Let’s meet up! Also, join us at the

#RaySummit

Lightning Stage today at 12:00 PM for a talk by our Senior Product Manager Aleksandr Patrushev. He will be discussing the work of our LLM RnD team.

2

3

10

Today is

#AIAppreciationDay

. Our marketing team wants to thank AI tools and their creators for the AI magic that amplifies our productivity.

Thanks a lot, dear

@recraftai

,

@Grammarly

,

@Runway

, and

@perplexity_ai

!

A special thank-you goes to

@Recraft

for illustrating our

2

1

10

🎨 We’re at the famous

@joinstationf

, a venue for

@xyz_paris

, sponsoring and participating with an inspiring talk by our own Rashid Ivaev. The French

#AI

community has always welcomed us warmly, which is especially important considering the opening of our GPU cluster in

#Paris

.

2

1

10

🔥 This Saturday, we’ll be supporting CUDA Hackathon in SF, providing H100 to each hacker:

It‘ll be great to have

@clattner_llvm

with us, the creator of LLVM, the Clang compiler and Swift.

@ashvardanian

will also speak at the event, joined by co-hosts

0

2

9

🏅 We are

#17

in TechRound’s AITech35 winners!

In the rating, the startup and tech magazine

@techrounduk

celebrates the most innovative

#AI

companies across UK and Europe. We’re proud to rank

#17

, alongside great companies like

@Kayrros

and

@Databricks

. Kudos to all the winners!

0

1

7

Here’s how to run

@Meta

Llama 405B with Nebius AI Studio API:

Our Studio allows

#GenAI

builders to use top

#opensource

models without facing the usual difficulties. The platform provides an API to run such models. The rest you can find out in the guide.

3

11

35

🏙️ In a rare public talk by our hardware R&D team, Igor Znamenskiy and Oleg Fedorov will discuss Nebius’ own server design and much more. We're especially proud to present this talk at

@OpenComputePrj

Summit. To attend, come to room 220C at Concourse Level at 1:30 PM on Oct 17.

5

1

9

🌌 Upcoming

@llmopsSpace

webinar: Taming AI or How we build the alignment pipeline

Speaking on July 11 will be Maksim Nekrashevich, our ML & LLM Engineer, accompanied by

@PhilipTannor

, CEO and Co-Founder at

@deepchecks

. Don't forget to register:

0

4

8

🦆 Our quAIck-quAIck is wishing the best of luck to all hackers at

@MistralAI

Paris Hackathon!

We equipped 45 teams with

@nvidia

#H100

#GPUs

, and our ML engineer Sergei Polezhaev will share useful tips on applying RLHF without labelers. Shout out to

@cerebral_valley

for hosting!

0

1

6

🔥

@icmlconf

has kicked off: come meet us!

ICML is underway — take a short break from discussing posters and come to our booth 202. Lots of ideas around require resilient infrastructure, that’s why we are here.

You can meet Boris Yangel, our Head of NLP, Sergey Polezhaev, ML

0

1

7

The latest episode of

@mlopscommunity

Podcast is live, featuring our ML Engineer Simon Karasik, who provided an intro to

#LLM

checkpoints, shared tips and tricks for handling them and choosing a storage for them.

Audio:

Video:

1

2

6

✋ Greetings from

@LDNTechWeek

! It was wonderful to discuss AI implementations into established products with such great companies as

@Grammarly

and

@canva

! If you’re also on site, let’s chat about partnerships and

#GPU

infrastructure.

0

0

6

🔥 Introducing Soperator, the world’s first fully featured

#Kubernetes

operator for

#Slurm

in open source

From the in-depth article about it, you’ll find out how the community tackled this before and the details of the architecture of our open solution:

0

1

6

Today, we’re in 🇬🇧London at Fully Connected 2024, organized by

@weights_biases

. At our booth near the VIP lounge, you can meet our Product Director Narek Tatevosyan and Engagement Manager Dmitry Levner, who'd be happy to discuss

#GPUcloud

solutions dedicated to your ML pipelines.

0

0

5

🎰 Today and tomorrow, we’re at the famous MGM Grand in Vegas, the home of one the

@Ai4Conferences

, joining more than 5K attendees! If you’re one of them, get in touch with our team to discuss your bottlenecks in building

#AI

#training

and/or

#inference

workloads:

0

1

6

🏋️ Weights & Biases Launch agent, an important tool for managing ML experiments, is now available on our Marketplace: . This marks the beginning of a broader tech partnership between Nebius AI and

@weights_biases

, with more outcomes on the way.

0

2

5

👨👩👧👦 Hello again,

@mlopscommunity

!

We’re once again supporting MLOps Community Meetup, while also participating with a talk. This time we’re at

@Techspace

Kreuzberg in Berlin, sharing the stage with

@JoannaStoff

and

@katjawittfoth

. Our Senior ML Engineer Sergey Polezhaev just

0

2

6

🌁 During the

#RaySummit

in SF, our Senior Product Manager Aleksandr Patrushev will give a talk on why every AI cloud provider needs an in-house

#LLM

team. Come to the Lightning Stage on Oct 2 at 12:00 PM.

Also, throughout the summit, you can visit our booth. See you there!

3

1

6

🚜 Learn more about TractoAI, our end-to-end solution for data preparation, exploration and distributed

#training

, powered by proven

#opensource

technology. In the image, you can see our product landscape. Take a detailed look at the implementation here:

0

0

5

We're at

#ICLR

, one of the most prestigious AI research conferences. Meet our ML engineers, ready to share LLM training techniques.

Many experts at ICLR are seeking new methods and also resources to implement them. We’re here to help. Come discuss your workflows at our booth 07.

1

0

5

📝 Read about Krisp’s experience with our platform

With Nebius AI,

@krispHQ

adopts

#Accent

#Localization

, a real-time technology that removes the accent from call center agent speech resulting in US-native speech.

Learn more on our blog:

0

0

5

🇬🇧 Today and tomorrow, we are at

#TheAISummit

London. Come say hi at booth 105! At 16:00 today, we will give a demo on building production-ready RAG chatbots.

Also, tomorrow at 11:55, our Product Director Narek Tatevosyan will give a talk on training a genAI model from scratch.

0

1

3

If you're attending

@EmergeConfHQ

in Yerevan, don't miss the talk by our CMO Anastasia Zemskova

@elsegreen_

. It will show you how conversations with some of the brightest minds in AI helped us build a platform that’s flexible, adaptable, and ready for whatever comes next.

1

0

5

We're continuing our exploration of our platform with our tech expert 😎

In this video, Khamzet Shogenov, a cloud solution architect, will discuss networking basics within the Nebius AI cloud platform.

#Network

#VPC

#NebiusAI

#machinelearning

#ai

0

0

5

🇩🇪 Today, the

@THWS_Presse

is hosting a hackathon focused on solving LLM-related tasks presented by

@DeutscheBank

. We provided participants with

@nvidia

#H100

#GPUs

and cloud solution architect expertise. Hope that the teams' quick experiments will lead to impressive results!

0

1

4

Thanks for choosing our platform! Great result!

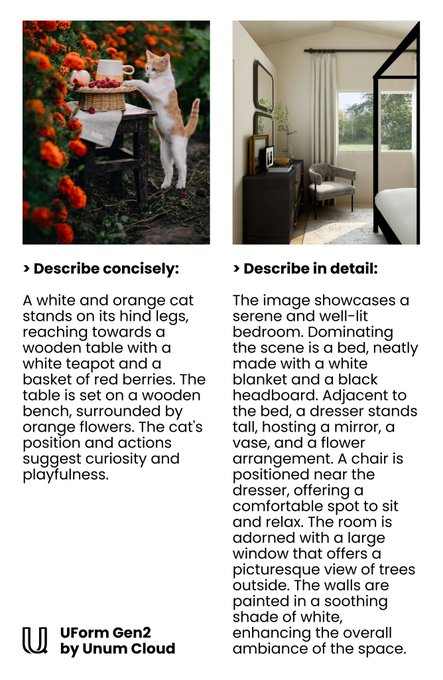

We are releasing a new tiny VLM 🎉

The model is smaller and much more accurate than our previous

@unum_cloud

"uform-gen" downloaded 100K times/mo

The new decoder is only 0.5 billion parameters and the model is already available on

@huggingface

🤗

🧵

2

17

83

0

0

4

🎛️ So why is Nebius AI the best for model training? Well, we’ve put lots of effort into understanding what ML engineers need from a GPU cloud. Here, we outlined everything we learned from our LLM R&D team,

@recraftai

,

@higgsfield_ai

and other clients:

2

0

3

Slurm 🆚 K8s: a comprehensive blog post

Our Cloud Solution Architect Panu Koskela's article compares the most popular options today for orchestration in model

#training

— Slurm and

@kubernetesio

, covering their origins,

#ML

adaptations and other factors:

0

0

3

🌕 JupyterHub: just released on Marketplace

#JupyterHub

is a multi-user server for Jupyter notebooks, providing an environment for data science, ML and scientific computing.

You can deploy the server with PyTorch and CUDA in your K8s clusters right away:

0

1

4

💰 Recently, our CFO Danila Pavlov wrote a piece on solving the

#GPU

#pricing

puzzle for

@TheStartupsMag

. He outlined the key tactics startups can use when selecting a GPU provider for training and resource-intensive inference. Read the article here:

2

1

4

⚖️ At the frontiers of physics and maths: exploring London Institute’s projects based on Nebius infra

Today’s guest post on our blog is written by

@Ananyo

Bhattacharya, Chief Science Writer at the London Institute for Mathematical Sciences

@London_Inst

. We are honored to host

0

2

4

We have a tradition at Nebius AI - Friday Cats when colleagues share pics of their pets. But our editor doesn't have any animals. So, she asked the AI to draw a cute cat.

Which one would you choose to share with your colleagues? 😺

@recraftai

@veedstudio

0

0

3

🎙️ We talk a lot about using our platform for model training, but don’t be mistaken — it is equally powerful

#inference

-wise. We're proud that

@higgsfield_ai

infers such impressive models with

#L40S

GPUs on Nebius AI. Appreciate your kind words,

@alexmashrabov

!

1

0

2

🇦🇹 Nebius AI at ICML: who to meet and where to find us

Here are the members of our team who will be at

@icmlconf

:

- Boris Yangel, Head of NLP

- Sergey Polezhaev, ML Engineer

- Levon Sarkisian, Cloud Solutions Architect Team Leader

- Aleksandr Patrushev, Senior Product Manager

0

0

3

Congrats to all four companies chosen by the EU to get supercomputing hours! And thanks to

@NomadicNarnian

at

@thenextweb

for covering the competition:

By the way, Nebius AI offers everyone — not just the EU's favored few — reasonable rates to access

0

0

2