Log10

@log10io

Followers: 164 · Following: 244 · Statuses: 363

Scaling reliable LLM apps with data management, robust evaluations & fine-tuning
GitHub: https://t.co/bRzXa0XyA6
Discord: https://t.co/FarXfemA6V

Joined May 2023

.@OpenBB_Finance transformed its AI-powered Copilot for financial analysts by integrating Log10's advanced observability tools. Improved accuracy, faster debugging, and user insights now ensure reliable performance, reducing churn and boosting confidence. Discover how observability is reshaping AI! 👉

Vibe checks are the way you get started building AI applications, but there comes a time when they're no longer a sufficient approach. Our Co-Founder and CTO, @NiklasQuarfot, shares the alternative and how Log10 changes the approach.

💡 Case Study Spotlight: See how Assort Health transformed patient experiences with Log10's observability tools!
🔍 Challenges:
- Complex scheduling rules
- Latency-sensitive AI
- Debugging inefficiencies
✅ Solutions:
- 5x faster debugging
- Real-time insights with Log10's dashboard
- Scalable observability for AI growth
Result? Frustrating calls turned into personalized care.
📖 Read the full story:

Your dev team needs an end-to-end solution. Look no further than Log10! Get a glimpse at how it works in this quick demo video with Log10 CEO and Co-Founder Arjun Bansal (@CoffeePhoenix).

Log10 CEO and Co-Founder Arjun Bansal (@CoffeePhoenix) outlines what AI apps need to break into healthcare:
📊 Better data sets
🔏 Data privacy (SOC2, HIPAA)
🔎 Role responsible for AI oversight
Here's how Log10 can help bridge the gap:
💡 Intuitive UI and workflow
✔️ Checkpoints for human review of evaluation models
📈 Continuously improving data quality

LLMs have a hard time reliably self-evaluating accuracy for a variety of reasons:
• Biases in models
• Tendency to prefer their own output
• Positional + verbosity bias
• Preferring outputs with more diverse tokens over accuracy
• Model output inconsistency
In this video, Log10 CEO and Co-Founder @CoffeePhoenix dives deeper into this subject.

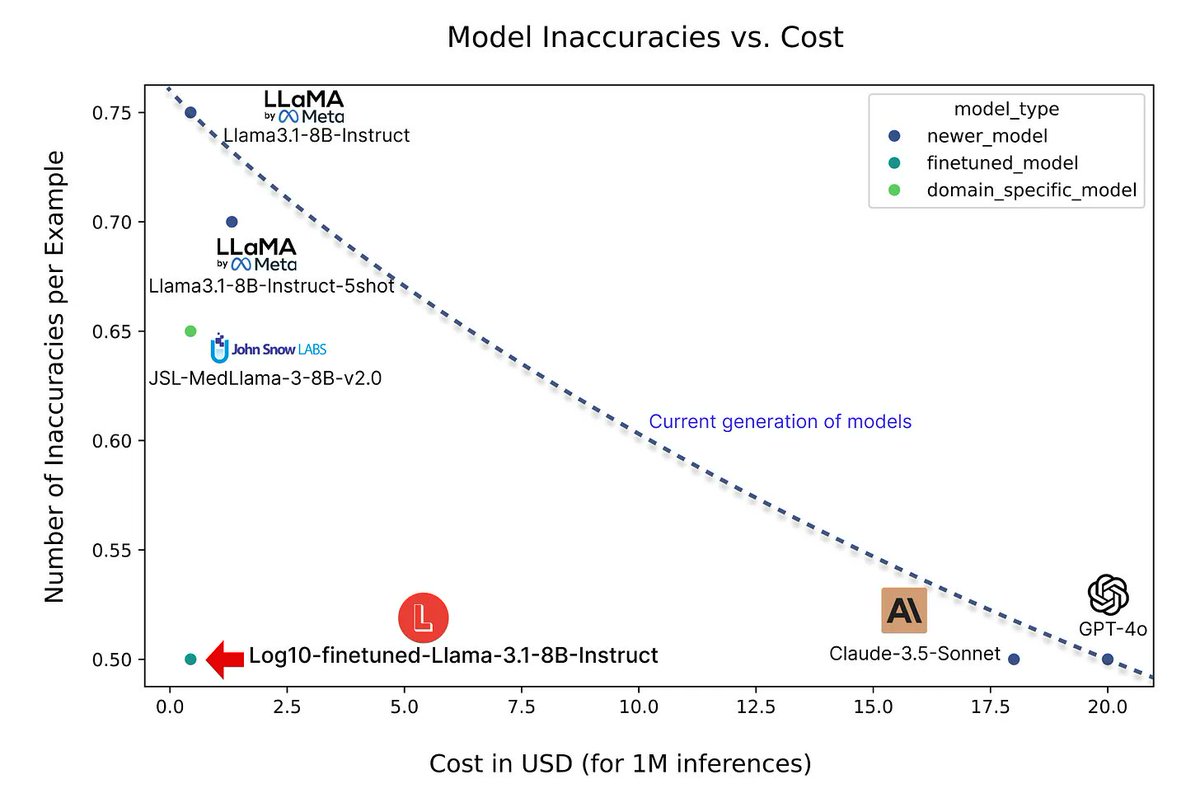

LLMs are transforming healthcare workflows by reducing provider burnout and streamlining administrative tasks. But many wonder whether they deliver the accuracy required to process complex medical text in high-stakes scenarios. In this blog, we evaluate their performance and highlight limitations.
⚡ Want a quick overview? Here are some key takeaways:
1️⃣ Accuracy Issues Remain: Human expert review of clinical text generated by GPT-4o and Claude 3.5 Sonnet uncovers consistent errors.
2️⃣ Common Error Patterns Result in Varying Risk: A recurring issue is LLMs' tendency to infer or fill in information based on contextual cues, producing inaccuracies whose severity depends on the context.
3️⃣ Fine-Tuning Is Still a Good Bet: Fine-tuned, open-source models match the accuracy of proprietary systems at a fraction of the cost.
4️⃣ LLM-as-Judge Falls Short: Automated evaluation is fast but prone to biases and inconsistencies, making human oversight essential for clinical tasks.
5️⃣ Innovation Is Key: Techniques like Latent Space Readout, developed at Log10, surpass LLM-as-Judge in accuracy, offering a more reliable solution for healthcare.
Why It Matters ⤵️
Clinical applications demand uncompromising accuracy while remaining cost-effective. LLMs show great promise, but achieving trustworthiness requires innovative methods and expert oversight.
Take a deeper dive here:

.@AssortHealth leveraged Log10 to supercharge their AI-powered call centers, enabling 5x faster debugging, smoother patient interactions, and precise prompt tuning.
💡 Key results:
➡️ Enhanced patient experience through natural, intuitive conversations.
➡️ Granular observability for scalable growth.
➡️ Rapid troubleshooting to meet real-time demands.
Learn how Log10 drives innovation in #healthcare automation while ensuring reliability and patient satisfaction:

Log10 CTO and Co-Founder, @NiklasQuarfot, shares insights on bridging the gap between traditional software engineering and LLM application development in this short video.

The Log10 #LLM Efficiency Suite: Accelerate development with #AI that scales expert review, detects errors in real time, and drives production-level accuracy.
Tuning ⤵️
As production feedback increases, the quality of curated datasets improves, creating a closed-loop system that allows for fine-tuning of prompts and models, resulting in greater application accuracy.

Understanding the brain can help develop #AI solutions! 🧠 Log10's CEO and Co-Founder, @CoffeePhoenix, explains how in this quick video. #LLM

Scale expert review, enable real-time error detection, and empower your team to reach production-level accuracy with Log10's #LLM efficiency suite!
Issue Triage ⤵️
Leveraging a real-time AutoFeedback accuracy signal, errors are automatically prioritized and queued for resolution. Engineers can debug and address these issues using the Log10 LLM IDE.
#AI #LLMOps

If you're a "traditional" developer, Log10 CTO and Co-Founder @NiklasQuarfot recommends two main things for preparing yourself to work in the world of non-deterministic LLMs and #AI:
• Think about making systems "mostly right"
• Use systems like our AutoFeedback to rapidly assess performance
#LLMOps #LLM

Have you ever wondered why there isn't a single solution for #LLM evaluation? 🤔 From companies having access to different data to evaluators requiring a lot of data to train, the reasons vary. Our CEO, @CoffeePhoenix, digs into the details in this short video. #LLMOps

Accelerate #AI development with the #LLM Efficiency Suite! Scale expert reviews, detect errors in real time, and achieve production-level accuracy, all with ease.
Monitoring & Alerts ⤵️
With a real-time AutoFeedback accuracy signal, establish quality threshold targets and gain clear insights into your application's performance. Receive alerts when quality dips below critical levels.
