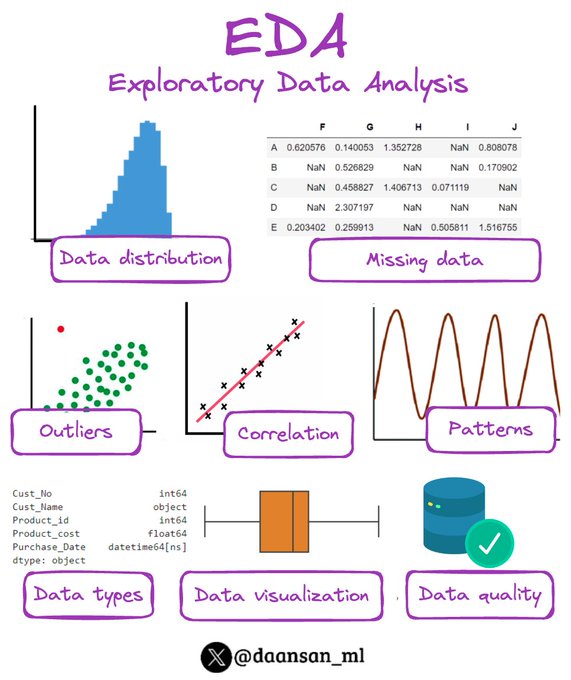

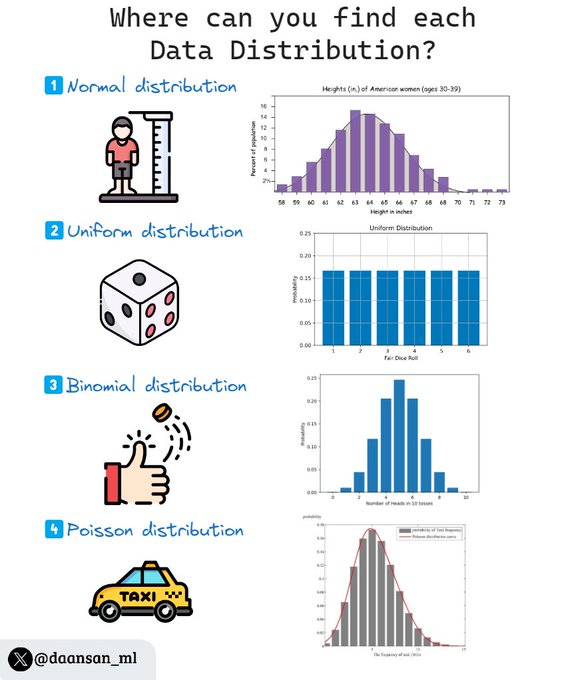

David Andrés 🤖📈🐍

@daansan_ml

Followers: 11,305

Following: 404

Media: 841

Statuses: 9,323

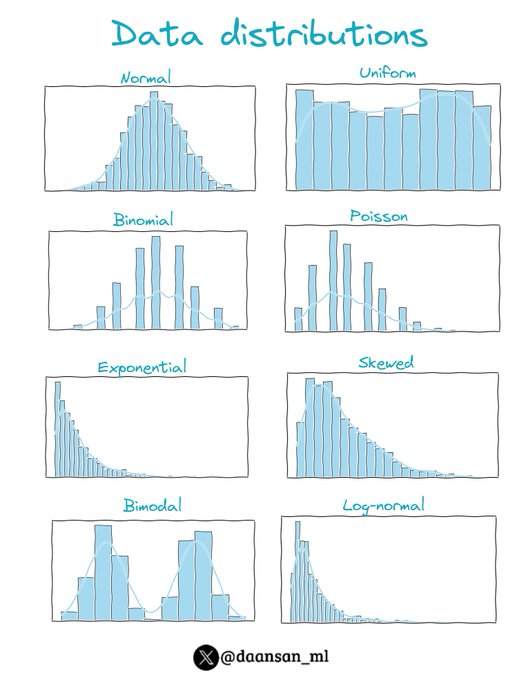

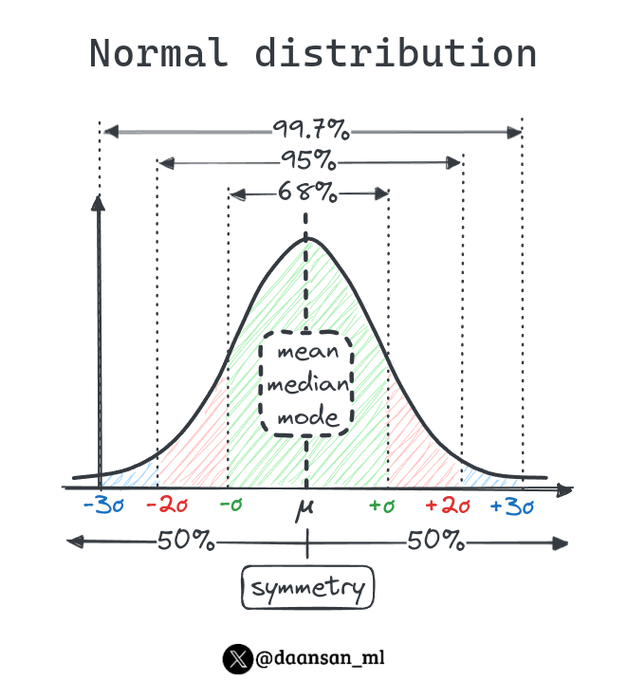

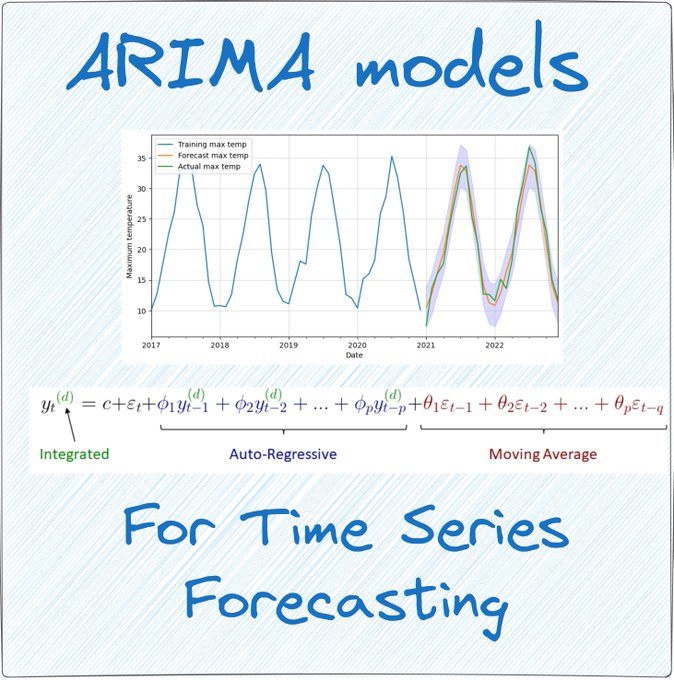

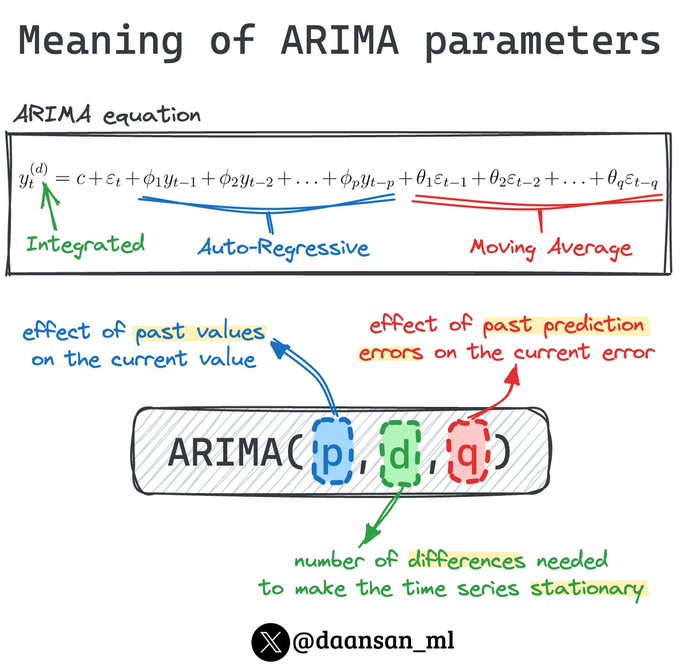

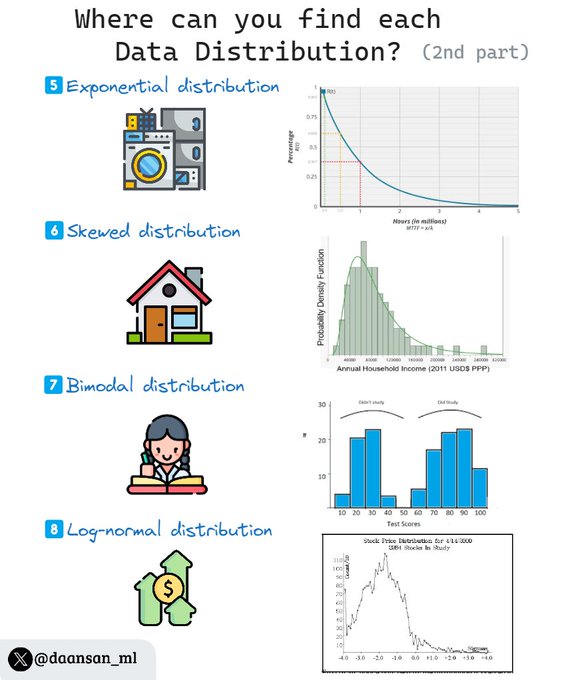

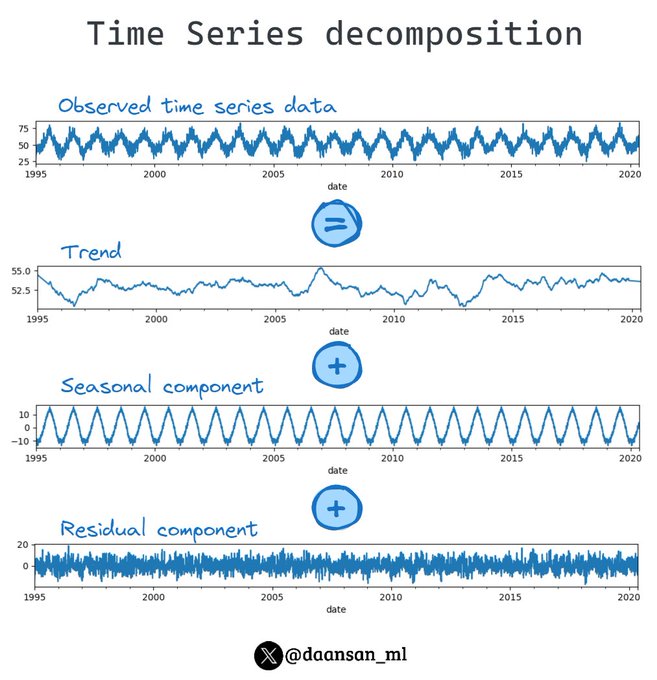

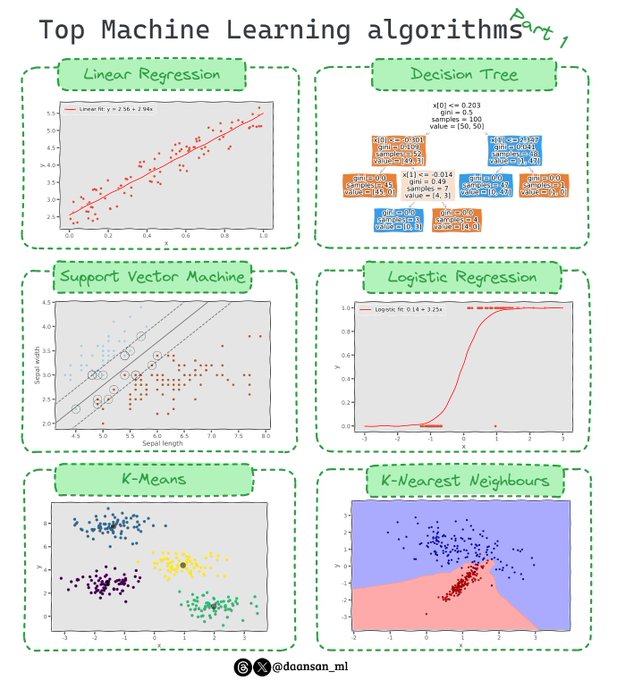

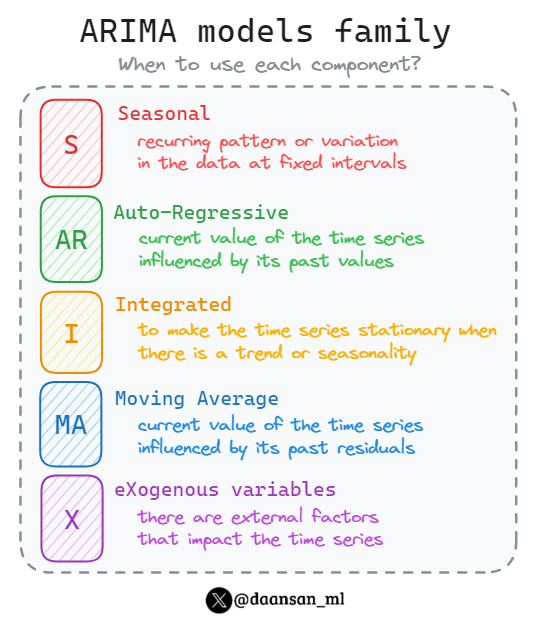

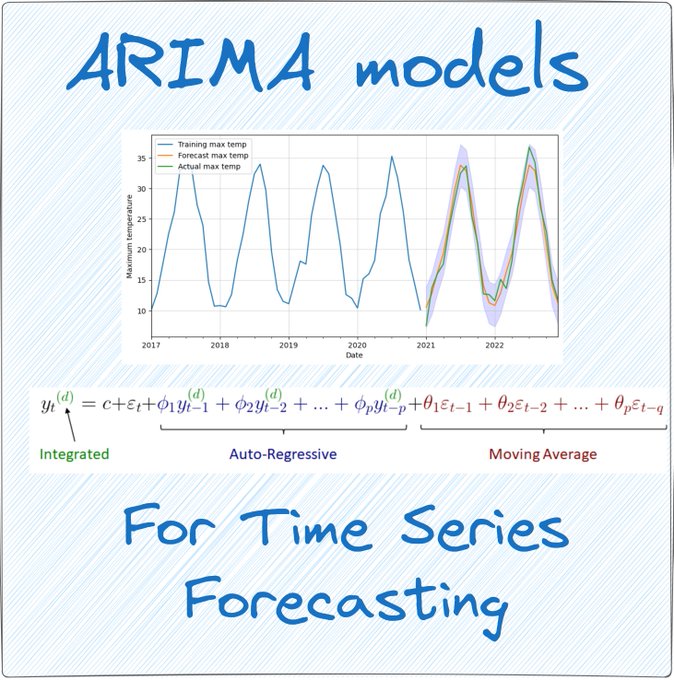

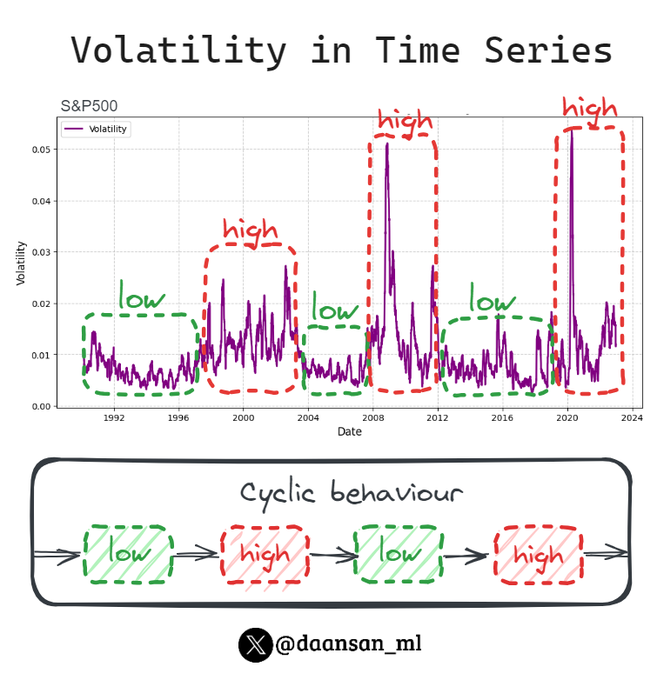

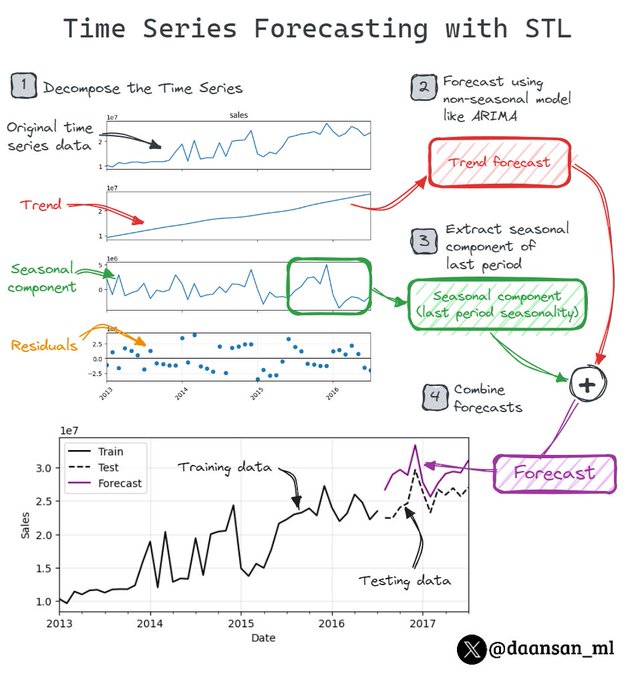

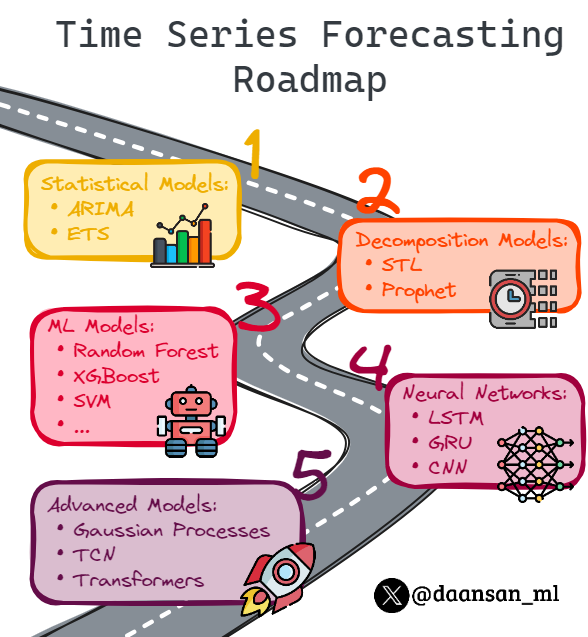

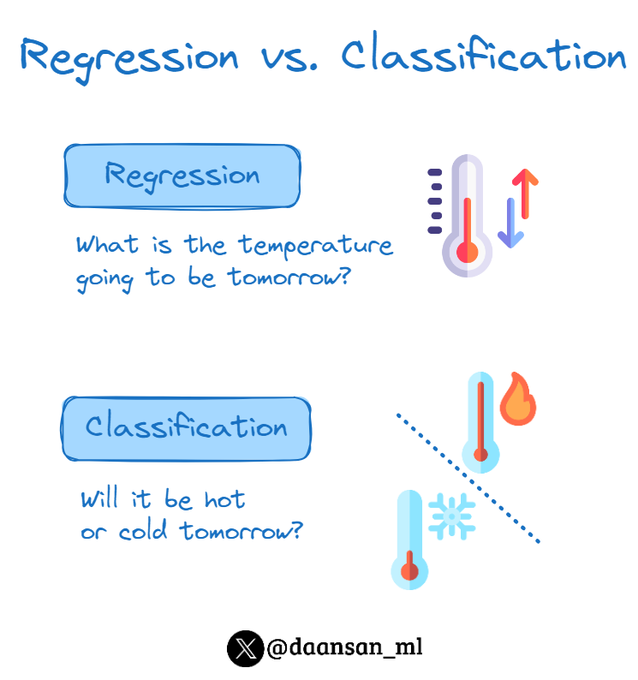

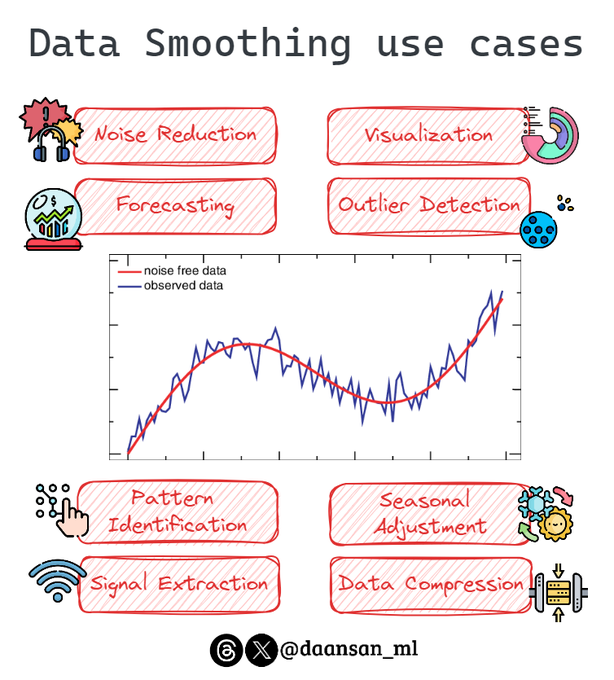

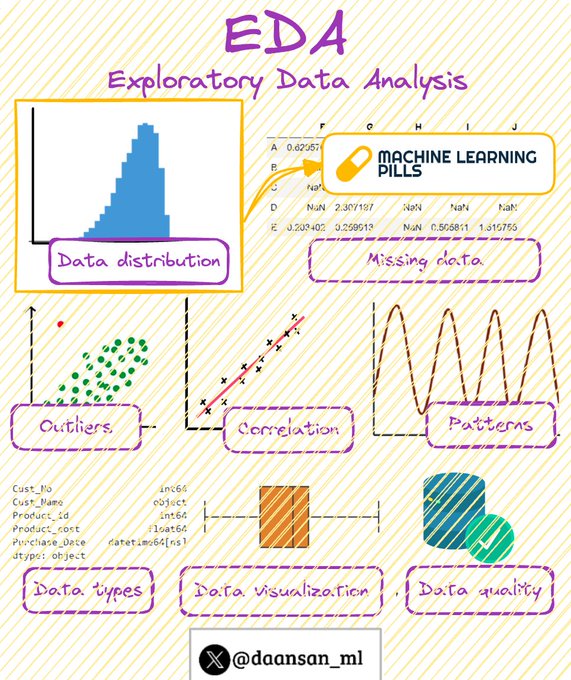

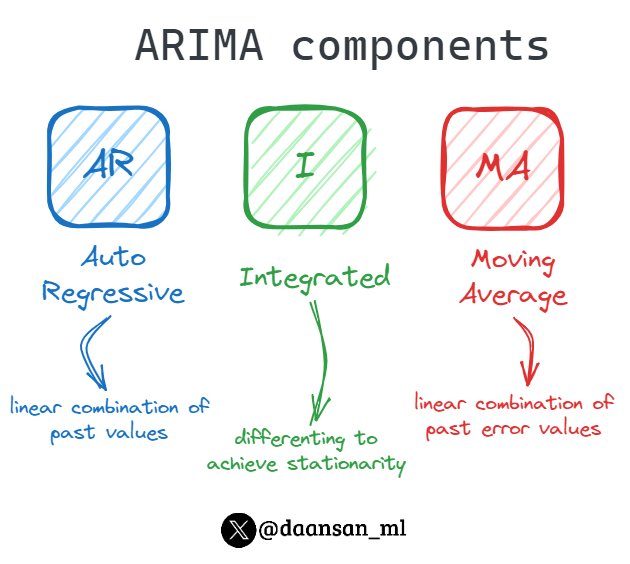

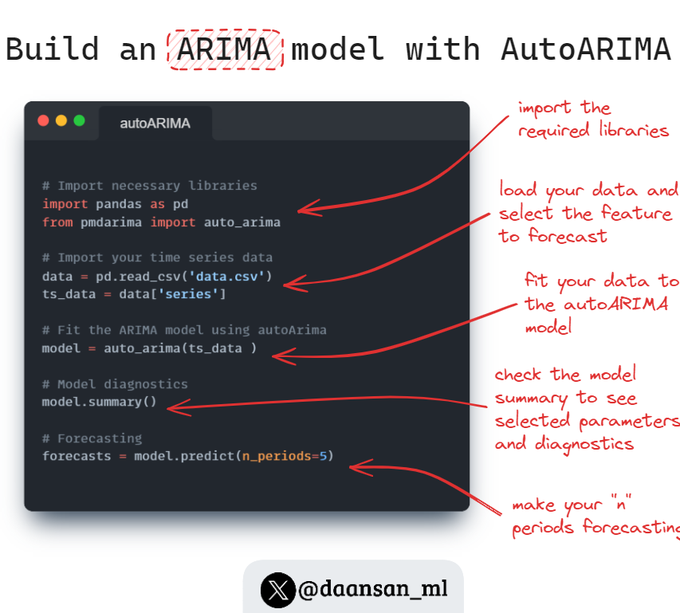

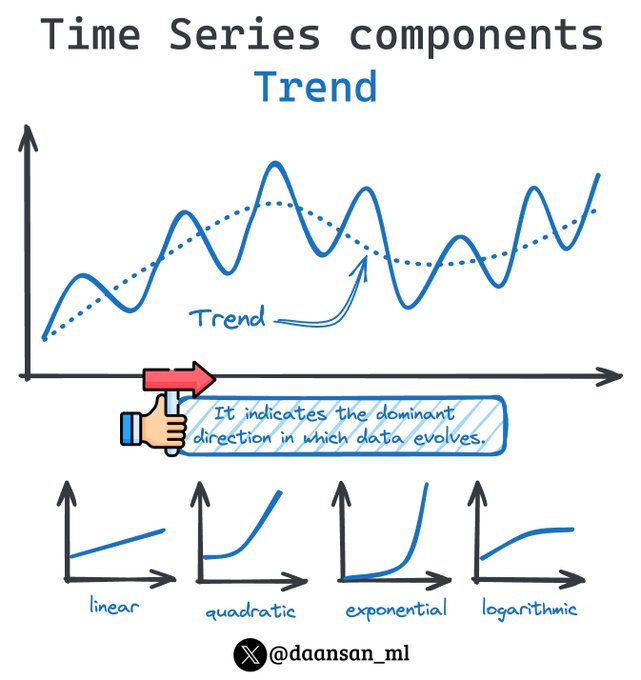

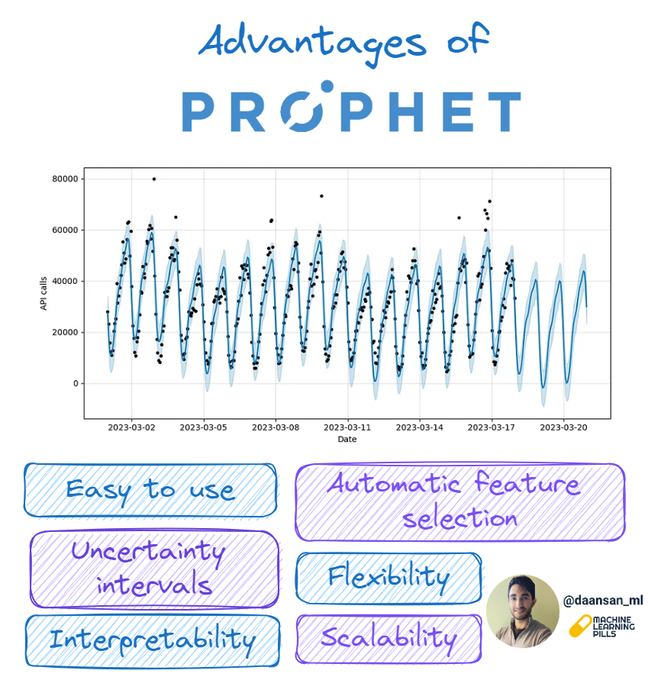

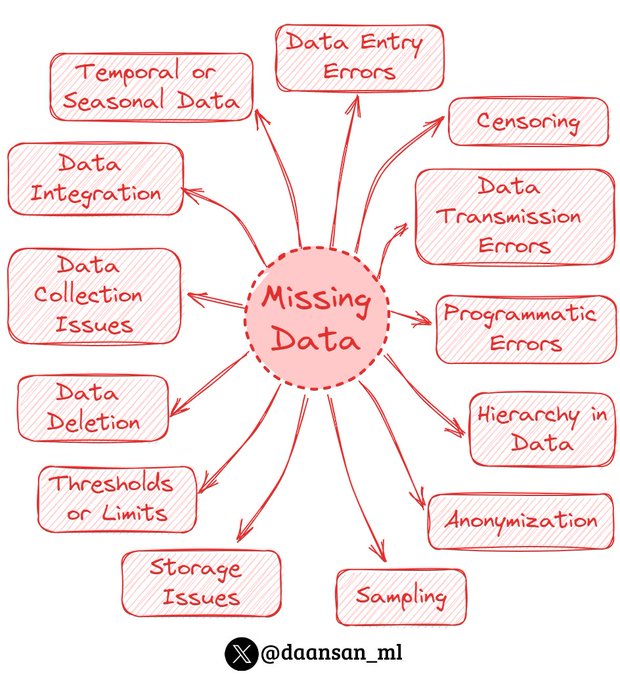

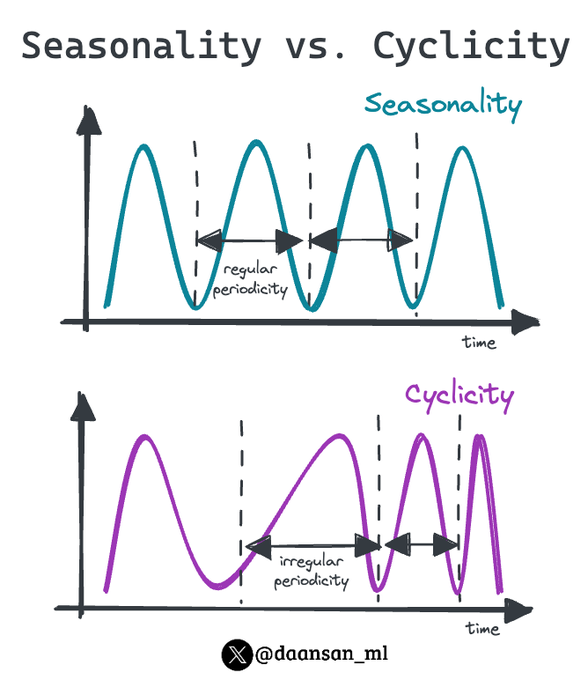

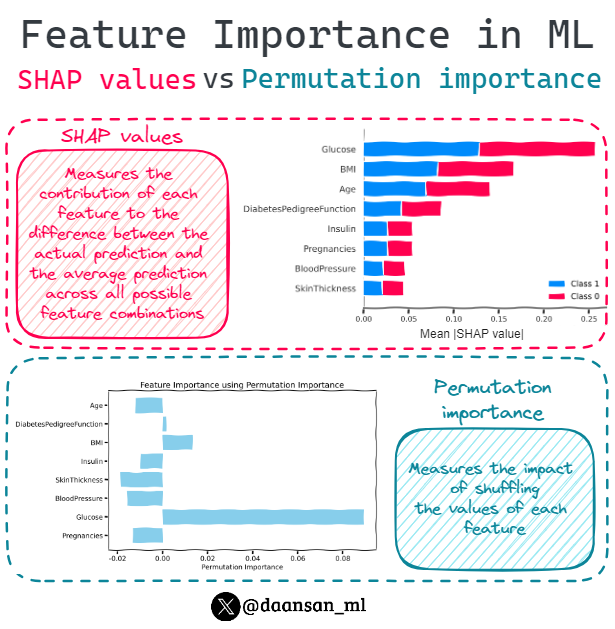

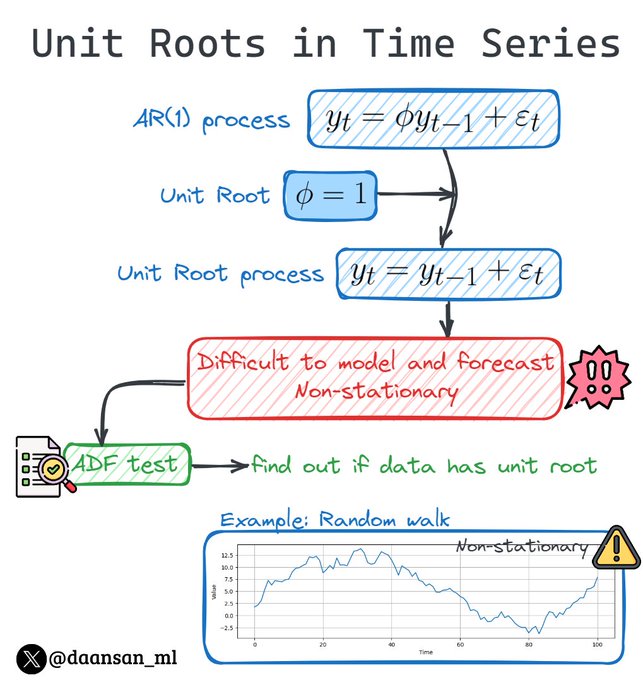

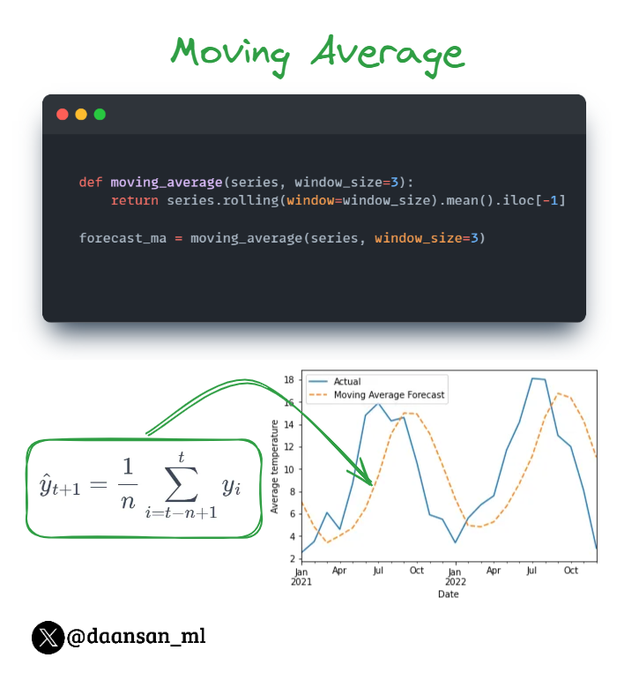

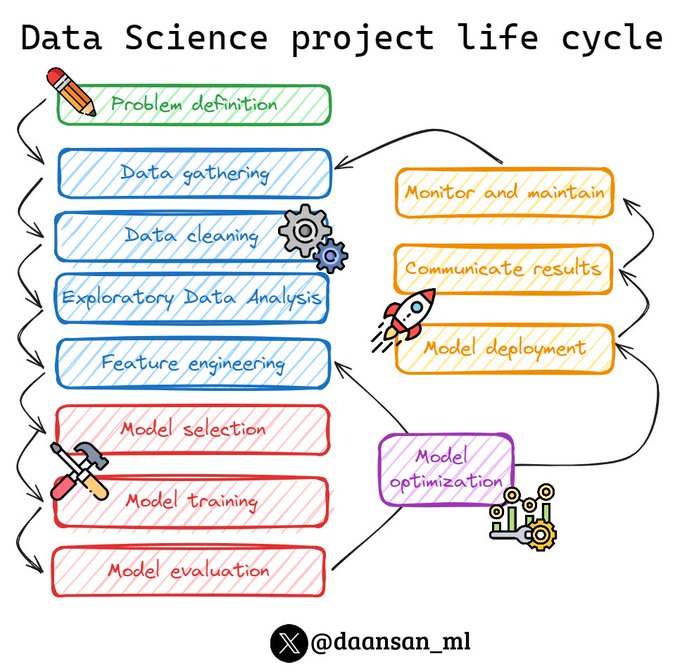

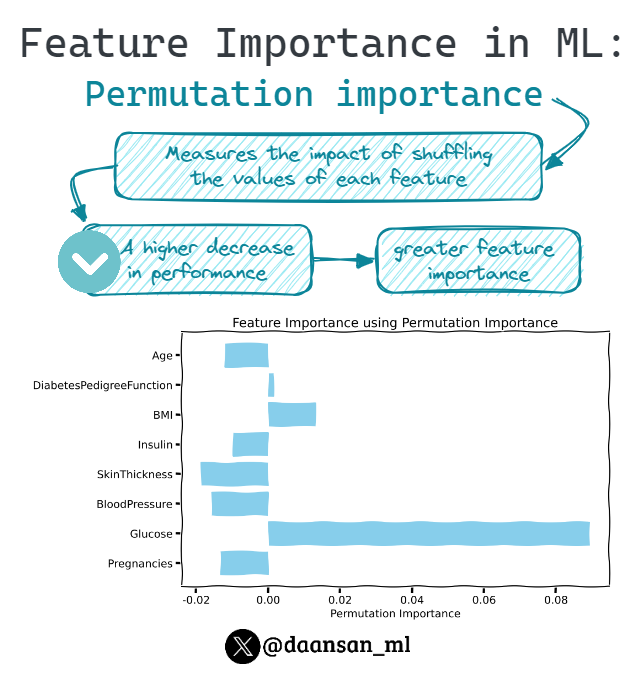

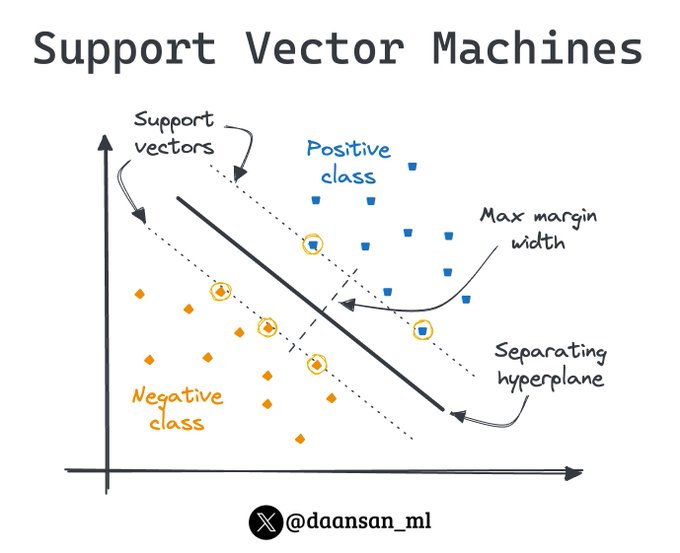

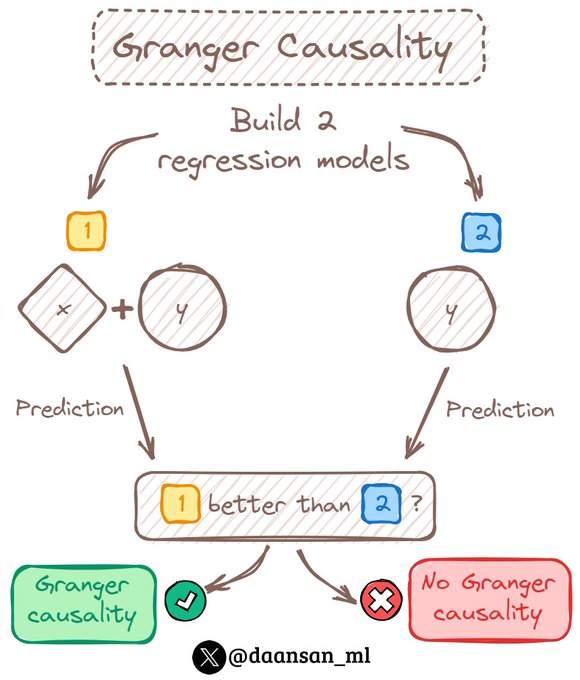

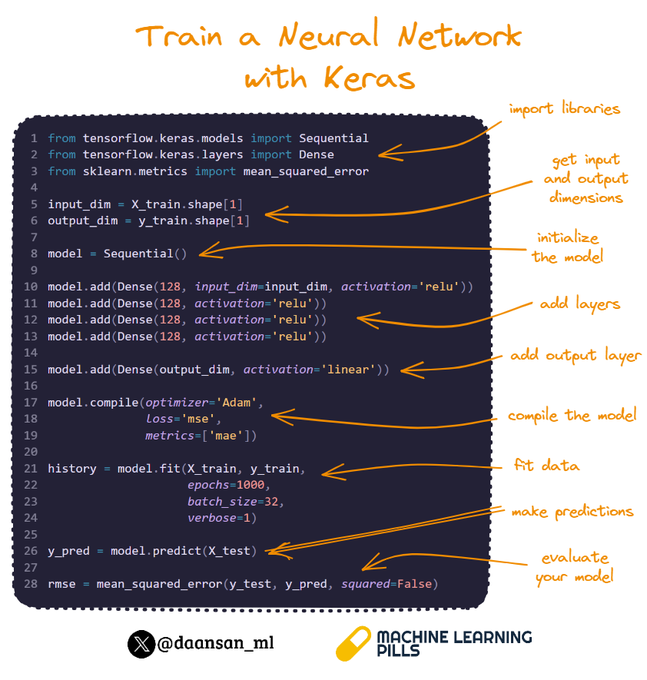

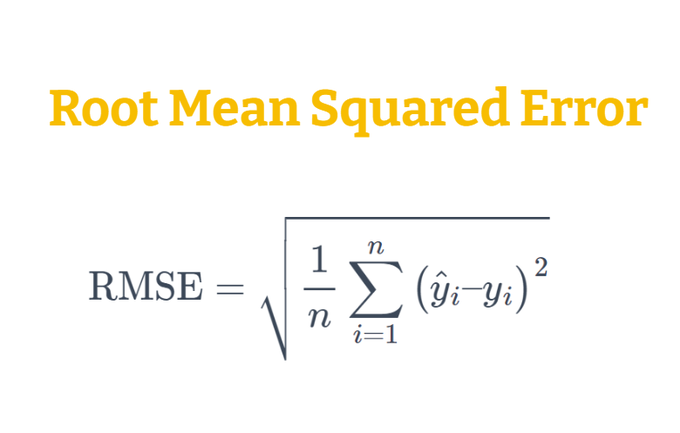

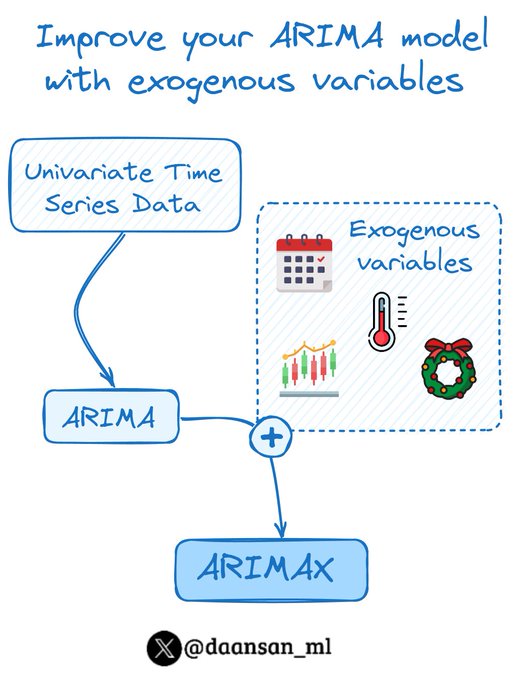

📈 I summarise Machine Learning, NLP and Time Series concepts in an easy and visual way • 💊Follow me in 👉 Inquiries: david@mlpills.dev

Spain

Joined May 2022


