Alex Carlier

@alexcarliera

Followers: 8,898

Following: 1,589

Media: 448

Statuses: 1,969

Building $9.4K & while full-time freelancing #buildinpublic Prev , AI research at @MetaAI , @ETH Zurich

Paris

Joined March 2016

Pinned Tweet

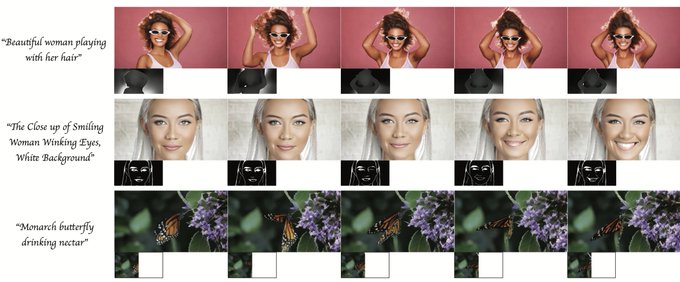

I'm super excited to launch @ReshotAI! It's an AI face editor, and it works so well! 🤯🔥

Starting off with Face expressions: 🤪

👀 Edit eye movement & winking

🔄 Change the head rotation & tilt

😃 Adjust the smile, mouth opening

Here are more examples to see it in action ⬇️⬇️

99

299

2K

Gaussian Painters imported into #b3d as ellipsoids

(3D Gaussian Splatting plugin for Blender - work in progress)

41

220

2K

Another experiment with Gaussian Painters ✨🎨

By optimizing 3D Gaussian Splattings over separate images at several viewpoints, it is possible to get a Steganography effect! Three paintings are hidden in those gaussian splats

22

227

1K

Wow, I made some big speed improvements for @ReshotAI 🔥

The face editor now runs INSTANTLY, even for large images! 🤯

(not sped up)

30

121

954

Having accurate keypoints is extremely important for many tasks in AI and 3D. Here I trained a reenactment network with @reshotAI keypoints!

16

137

740

New 3D Gaussian Splatting recording! Those metallic reflections and leather were captured REALLY well!

When looking closer, you can also see how the watch hands are modeled with just a couple of elongated gaussians.

#GaussianSplatting

19

70

741

Made a little visualisation for my latest project on free-view one-shot image generation 🤩

Just pick a photo, and generate images with full control of rotation and facial expressions. Or choose a driving video and let the magic happen✨

@ylecun

Try it for free using @litso_app!

18

111

646

I have written a tutorial on how to train your own "3D Gaussian Splatting" models!

#GaussianSplatting

Write me here if you're facing any issues.

⬇️⬇️

25

98

649

EfficientSAM was just released and it's fast! 💨

With 20x fewer params, it is now 20x faster than the original SAM segmentation model, while staying in the same accuracy range.

See below for the project page and an interactive @huggingface space to try it out! ⬇️⬇️

14

110

493

New 3D Gaussian Splatting capture at the Vintage Cars association in Versailles, from a 30-second recording.

Some floaters were cleaned with my b3d plugin. Original output below ⬇️⬇️

#GaussianSplatting

12

40

449

Imagine this on the @Nike website.

This is a 3D capture of the Nike ZoomX Vaporfly Next%, and visualizing this feels as real as touching the real shoe.

3D Gaussian Splattings are SO good at modeling fine structures, like in this case the transparent fabric.

#GaussianSplatting

14

38

438

3.8 MB 🤯

I tried the 3D Gaussian Splatting add-on for Unity by @aras_p on my Nike shoe capture, and when using the “Very low” quality, the file size becomes 3.8 MB with minimal visual loss.

That’s the lowest file size I’ve seen for a 3DGS yet!

#GaussianSplatting

17

44

419

How do 3D Gaussian Splatting models handle view-dependency? Using Spherical Harmonics

Here's how it works ⬇️⬇️

#GaussianSplatting

11

54

360

Using Gaussian Painters, you can also create a psychedelic illusion using two orthogonal images!

8

40

355

3D Gaussian Splatting is INSANELY good at fur rendering. Look at the fuzzy details here!

Makes sense since it literally optimizes over small ellipsoid particles, as opposed to NeRF or photogrammetry.

#GaussianSplatting

9

40

297

Deblurring 3D Gaussian Splatting is seriously amazing! 🔥

Can't wait to try it out on my captures!

3

28

297

Segment Anything Model (SAM) now runs at 30 FPS on an iPhone! 🤯

EdgeSAM is the first SAM variant that can run at over 30 FPS on an iPhone 14 with good quality. Look how accurately it segments tiny vegetables!

Code and @HuggingFace demo below! ⬇️⬇️

3

56

272

Extremely honored to have my artwork selected at the @CVPR AI Art Gallery among incredible artists! 🔥🤩

10

34

259

A comparison between 3D Gaussian Splatting and the new TRIPS radiance field rendering method ⬇️

Can't wait to try this out on some of my scenes! 🔥

6

24

235

This new video upscaling & deblurring method (FMA-Net) works insanely well! ⬇️

2

43

233

Wow, the loading of #GaussianSplatting in @LumaLabsAI is so smart and satisfying! 😍

Only show the point cloud till fully loaded + progressive streaming from center to background

2

22

213

This is insane!

@antimatter15 has implemented a WebGL viewer for 3D Gaussian Splattings.

Unlike other implementations, this uses vanilla WebGL, and runs on any device in the browser (60+ FPS on my desktop, 30 FPS on mobile but no touch controls yet).

Link to try it below ⬇️⬇️

2

36

213

Just tried @LumaLabsAI's Text-to-3D

(low-poly) 3D generation is now as fast as image generation, which you can then upscale for higher resolution 3D models.

Looks promising! 🔥

2

34

208

I created an upside-down optical illusion using Stable Diffusion XL ✨✨

Here's how I did it ⬇️⬇️

#SDXL

11

38

208

We made a small promo video for our upcoming #LEGO AR app! What do you think? ❤️

Join the waitlist ⬇️⬇️

21

20

204

This is so perfect 🔥✨

SDXL Auto FaceSwap by @fffiloni lets you create new images using the face of a source image.

Try it out in this @huggingface space ⬇️⬇️

5

31

200

Reminds me of LooseControl, but for video!

Controlling 3d cubes in a video would be 🔥!

1

20

181

#GaussianSplatting from just two images in a single forward pass 🤯

PixelSplat predicts a dense probability distribution and samples Gaussians through a differentiable operation, which allows gradients to be back-propagated to the 3DGS representation

Completely insane results! More ⬇️⬇️

3

28

182

New 3D Gaussian Splatting capture of a park near Versailles. The hardest part in shooting outdoors is to find the perfect timing when no people are seen 😅

#GaussianSplatting

7

17

180

Neural radiance field methods like Zip-NeRF perform very poorly when given only a few images. This is because they learn the scene from scratch with no prior information about the world.

ReconFusion fixes that! 🔥⬇️⬇️

2

25

174

Lmao Procreate CEO’s last name is literally CUDA

We’re never going there. Creativity is made, not generated.

You can read more at ✨

#procreate

#noaiart

2K

23K

92K

5

5

143

Font resolution test on a 3D Gaussian Splatting capture. High frequency areas use more splats, while uniform ones are covered by just a handful of gaussians.

Still mind blown that this uses only 3D ellipsoids.

#GaussianSplatting

5

14

125

Ok this is cool! 🤯

Testing the @krea_ai AI Enhancer on one of our BricksAR #LEGO buildings to turn it into a realistic Parisian café 😍

Check below for more ⬇️⬇️

@Scobleizer

10

20

118

And here you go! A 3D Gaussian Splatting running in real-time in the browser on a 3-year-old iPhone

Try it out here ⬇️⬇️

11

13

118

Tested 3D Gaussian Splatting on a capture from the Comics Art Museum, Brussels. 🇧🇪

Super impressive training convergence and real-time rendering!

#GaussianSplatting

5

15

117

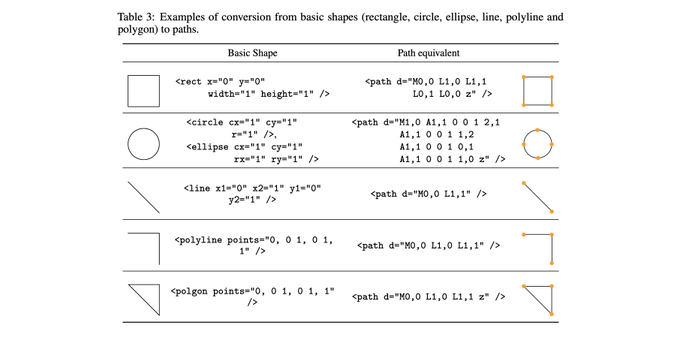

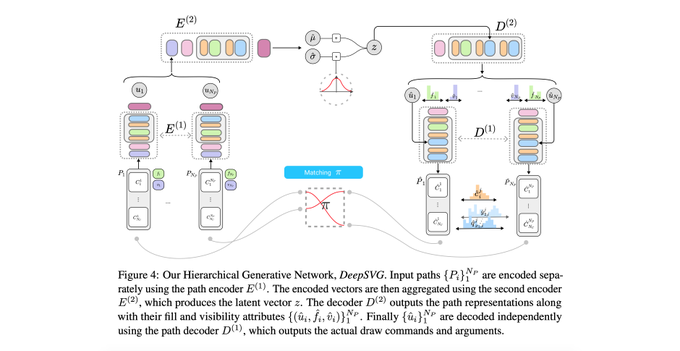

Excited to announce that "DeepSVG: A Hierarchical Generative Network for Vector Graphics Animation" has been accepted to #NeurIPS!

If you're interested in vector graphics & sketch generation, feel free to check it out

Code:

Paper:

DeepSVG: A Hierarchical Generative Network for Vector Graphics Animation

Exciting work from @alxandrecarlier et al. Transformer-based hierarchical generative models learn latent representations of vector graphics, with nice applications in SVG animation.

7

64

290

1

34

117

There's something beautiful about visualizing impressionist artworks from Claude Monet using new technology.

Peaceful scenes from the past that become 3d dreams, and which would be fun to play with in VR.

Created using GaussianPainters.

#GaussianSplatting

4

16

109

3D Gaussian Splatting vs TRIPS (new radiance field method running at 60 FPS 🔥) ⬇️⬇️

1

12

102

I tested the ControlNet for video (MagicAnimate) and here is my opinion: it works great but has some flaws.

- the identity of the motion video leaks to the resulting video (and deforms body shape)

- bad hands and face (unsurprisingly!)

But a great first step for consistent

7

16

92

New 3D Gaussian Splatting capture of an amethyst. Look at those reflections 😍

#GaussianSplatting

6

6

91

Those fonts do not exist 🤯

@AdobeResearch strikes again with VecFusion, a new diffusion approach for Vector Image generation. Here it generates missing glyphs from just a few examples!

If you follow me from my DeepSVG paper you know how excited I am about this!

More below ⬇️⬇️

3

9

88

I'm building the best YouTube Thumbnails editor with my app @ThumbnailsPro

With ✨AI foreground segmentation so that you can place content behind the main subject

What other features should it include?

6

10

87