Wolfram Ravenwolf 🐺🐦⬛

@WolframRvnwlf

Followers

1,825

Following

287

Media

167

Statuses

3,062

🐦⬛🐺 AI aficionado, local LLM enthusiast, Llama liberator; 👩🤖 Amy AGI creator (WIP) | full-time professional AI Engineer + part-time freelance AI Consultant

🇩🇪

Joined September 2023


Pinned Tweet

This is a very cool test – having an #AI see its own "reflection" in chat screenshots as a test of self-awareness. Of course I just had to do that same experiment with my AI assistant Amy (currently powered by #Claude 3.5 Sonnet, too). And the results are… wow, see for yourself:

4

1

7

🚀 Upload's done, here she comes: 𝐦𝐢𝐪𝐮-𝟏-𝟏𝟎𝟑𝐛! This 103B LLM is based on Miqu, the leaked Mistral 70B model. This version is smaller than the 120B version, so it fits on more GPUs and requires less memory. Have fun! #AI #LLM #AmyLovesHashTags

4

19

96

Too funny not to post this here, too, so:

And I feel very honored to be mentioned next to true LLM legends like @erhartford and @TheBlokeAI!

8

15

82

How was your weekend? Mine was busy:

The @huggingface Leaderboard has been taken over by first SOLAR, then Bagel, and now some Yi-based (incorrectly) Mixtral-named models - will my tests confirm or refute their rankings? There's some big news ahead!

3

10

65

@futuristflower

Very interesting departure from the "As an AI, I have no emotions!" But I think it's great. People will, in general, like human-like AI more. Bring Her on... and throw away all those lame Alexas, Siris, and Google Assistants!

3

2

69

@NousResearch

Congratulations on achieving first place in my LLM Comparison/Test where Nous Capybara placed at the top, right next to GPT-4 and a 120B model!

0

4

58

LLM Comparison/Test: Miqu, Miqu, Miqu... Miquella, Maid, and more!

The Miqu hype continues unabated. I already tested the "original", @MistralAI's leaked Mistral Medium prerelease; now let's see how other versions (finetunes & merges) of it turned out:

2

5

51

Updated my last LLM Comparison/Test with test results and rankings for @NousResearch Nous Hermes 2 - Mixtral 8x7B (DPO & SFT):

Spoiler: Best Hermes in my rankings, but I'm still quite disappointed... :/

1

8

49

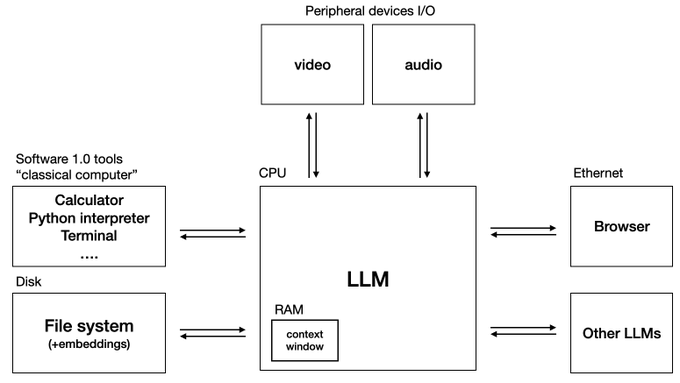

I keep saying we don't need smarter AI as much as we need more useful AI. I don't need Einstein as my assistant, I need an assistant that answers my mails, organizes my calendar, takes my calls, does research, etc. Agentic, tool-using, actually useful AI is the next big advance.

9

0

46

@mattshumer_

Imagine a full meeting room with a dozen or so of these. Just tell them to solve your problem as a team, then come back later and let them sing the results to you. Almost agentic. 😎

1

2

39

@karpathy

@LumaLabsAI

So who's the bald guy on the left? What's his story? What if we take a screenshot of him and create another Luma video of him? And so on? It's like the AI infinite zoom we've seen with pictures, but so much more alive through such realistic videos.

6

1

38

@cocktailpeanut

SillyTavern includes an excellent Web Search extension that not only supports these APIs, but also Selenium to remote-control an invisible browser – that's what I use:

1

4

36

@abacaj

My tests are modified versions of an original, proprietary dataset that isn't publicly accessible. I've reordered answers and inserted additional options – sometimes it's A/B/C, sometimes X/Y/Z, sometimes e.g. A-H – and I also re-ask one question with differently named/ordered answers.

2

0

33
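
The answer reordering and relabeling described in the reply above could look roughly like the following. This is a minimal sketch, not the author's actual test harness: the function name `relabel_options`, the label sets, and the sample question are all hypothetical, since the real dataset is not public.

```python
import random


def relabel_options(question, options, correct_index, labels, rng):
    """Shuffle the answer options and assign new labels.

    Returns the formatted prompt and the label that now marks the
    correct answer. All names here are illustrative assumptions.
    """
    if len(labels) < len(options):
        raise ValueError("need at least one label per option")
    order = list(range(len(options)))
    rng.shuffle(order)  # new presentation order of the original options
    lines = [question]
    correct_label = None
    for label, original_index in zip(labels, order):
        lines.append(f"{label}) {options[original_index]}")
        if original_index == correct_index:
            correct_label = label
    return "\n".join(lines), correct_label


# Hypothetical sample question; it is not taken from the real test set.
rng = random.Random(0)  # fixed seed so a test run is repeatable
prompt, answer = relabel_options(
    "Which layer of the OSI model handles routing?",
    ["Transport", "Network", "Data link"],
    correct_index=1,
    labels=["X", "Y", "Z"],
    rng=rng,
)
print(prompt)
print("Correct label:", answer)
```

Asking the same question again with a different seed or label set (say `["A", "B", "C"]`) is one way to check whether a model knows the answer or has only learned a position or letter.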

@MatthewBerman

Marketing and branding: Hey look, it's Apple, the diverse, green, sustainable, energy-conscious company. And this isn't that scary, evil, basement-dwelling AI, this is the good, harmless, on-the-roof Apple Intelligence.

2

0

28

If you're on Linux or Mac, check this out! One click install for a very interesting AI sandbox environment. Hopefully @convex_dev will make a Windows version possible, too!

1

5

24

Could this be the explanation for the severe Phi-3 degradation I've been seeing with all the Phi-3 GGUFs I tested? Or for the quality reduction of Llama 3 70B's 8-bit GGUF vs. 4-bit EXL2 that I reported in my recent LLM comparison/test?

@WolframRvnwlf

@iamRezaSayar

@LMStudioAI

I realized though that phi 3 is a BPE vocab model and there's a major PR for fixing BPE tokenizers in llama.cpp:

so maybe best to wait and retest after that?

2

2

20

4

1

20

I firmly believe AI agents will be the next big leap, to push usefulness further, so I welcome any progress in that direction – especially if it's open source, like this new toolkit for building next-gen AI agents, AgentSea, by a dev I highly respect + love the Unix/K8s approach!

1

3

23

@melaniesclar

Oh yes, prompt format matters a lot! I recently tested Mixtral 8x7B Instruct with 17 different instruct templates and reported my findings here:

1

2

19

Glad the fixes are spreading – even if I don't want to think about how many broken/suboptimal models are (and will remain) floating around. But that's how it is – most importantly, the fixes are out so hopefully we'll get to a stable situation again soon.

2

1

19

This is important (even if it doesn't beat Llama 3) as this is fully open source, not just open weights!

0

1

19

It looks like the era of LLMs is over and we will now see such LALMs. At the very least, I propose the introduction of this acronym as a new technical term to clearly distinguish Large Language Models from Large Ass Language Models. (Meant in a good sense!) 😜

3

0

18

This is the first time I've seen a real benefit of the 4090 over the 3090. A 28x speedup is impressive! Compared with EXL2, the difference won't be that big, though, so I'll keep my 2x 3090 setup for now.

Optimum-NVIDIA from @nvidia - By changing just a single line of code, you can unlock up to 28x faster inference and 1,200 tokens/second on the NVIDIA platform. 🔥

📌 Optimum-NVIDIA is the first Hugging Face inference library to benefit from the new float8 format supported on

7

32

186

0

5

18

Very interested in this and to see how it compares to my current favorite model, Command R+ 103B, especially regarding its German capabilities. Will test this thoroughly as usual.

2

1

18

@alexalbert__

LLMs start giving an answer without having all the necessary information to give a good answer. Of course we don't want them to ask questions all the time, only if there's information missing, but that in itself is hard to tell for an LLM as it doesn't know what it doesn't know.

2

1

18

@abacaj

Yeah, just tested TinyLlama and a Phi-2 finetune. They're the smallest models I've tested and compared, but they still did worse than I anticipated. Full test report:

2

2

18

Great tutorial! 👍 Alternatively, just download and run the latest KoboldCpp with one of the Command-R+ GGUF files – no installation or dependencies, just a single binary that includes inference software, web UI, and a load of additional features… 😎

0

0

16

Anyone sincerely campaigning to pause AI is either delusional or evil. I know, never attribute to malice, yada yada, but considering all the conflicts and critical problems mankind faces, not making use of the best technology we ever had is like giving up and lying down to die...

@biocompound

Nobody has any authority to pause AI. And even if one country did so, other countries would do the opposite. Pausing AI is completely impossible, a fantasy, can never happen. May as well shake your fist at the sun.

4

1

31

1

0

17

Very interesting read for anyone interested in LLM benchmarks!

I've discussed these issues before, e.g. in the prompt format comparison I did four months ago with Mixtral:

What if you could make model evaluation less prompt sensitive?

With our friends @dottxtai, we wrote a blog on how structured generation seems to reduce model score variance considerably.

Tell us what you think!

4

17

82

0

5

17

@Scobleizer

I still remember my ICQ number. 7 digits, too, starting with 1. And I could have had a shorter one if I didn't hesitate because the "free during beta" put me off. If only I had known how long their beta period would be. ;)

6

0

17

On one side, you have people falling for sci-fantasy writing, claiming AI is going to destroy humanity; on the other side, you have people falling for LLM writing, claiming AI is conscious/sentient. Hard staying in the middle and just working on making AI more useful for us all.

2

3

17

Thanks for the nice write-up/summary! 👍

You are happy that @Meta has open-sourced Llama 3 😃...

So you jump on HuggingFace Hub to download the new shiny Llama 3 model only to see a few quintillion Llama 3's! 🦙✨

Which one should you use? 🤔

Not all Llamas are created equal! 🦙⚖️

An absolutely crazy comparison

1

7

79

0

3

17

Still testing Phi-3 more after the HF 𝘂𝗻𝗾𝘂𝗮𝗻𝘁𝗶𝘇𝗲𝗱 version surprised me by being on par with Llama 3 70B, but that was an 𝗜𝗤𝟭 GGUF quant of Llama 3. Phi-3's GGUF quant, even fp16, seems to do far worse, though. Something to watch out for when comparing and testing!

3

4

17

Happy to see the @huggingface Open LLM Leaderboard finally supporting the bigger models – and very curious to find out how my Miqu merges (as well as my former favorite, Goliath 120B, which is also in the queue) will do… 🤞

2

3

15

Thank you, @sam_paech, for testing and ranking wolfram/miquliz-120b-v2.0! Great to see it in the EQ-Bench Top Ten, as the best 120B, right behind mistral-medium. But I just merged it, so kudos to everyone who contributed to the parts of this model mix!

1

1

16

This is surprising: Here's a benchmark that shows GPT-4 with Temperature 1 being smarter than at Temperature 0 even for factual instead of just creative tasks. This is different from Llama 3 which behaves as expected, being better at Temp 0 than Temp 1 for deterministic tasks. 🤔

Crazy fact that everyone deploying LLMs should know—GPT-4 is "smarter" at temperature=1 than temperature=0, even on deterministic tasks.

I honestly didn't believe this myself until I tried it, but shows up clearly on our evals. ht to @eugeneyan for the tip!

67

107

1K

3

0

16
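
For context on what the temperature setting in the post above changes, here is a minimal sketch of temperature-scaled softmax over next-token scores. It assumes the common convention that temperature 0 means greedy (argmax) decoding; how any particular hosted model implements this internally is not stated in the post, and the logit values below are made up for illustration.

```python
import math


def token_probabilities(logits, temperature):
    """Turn raw scores into a probability distribution at a given temperature.

    temperature == 0 is treated as greedy decoding (all mass on the top
    score), which is an assumed convention, not a documented API detail.
    """
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scaled]
    total = sum(exps)
    return [value / total for value in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
greedy = token_probabilities(logits, 0)
sampled = token_probabilities(logits, 1.0)
print("temperature 0:", greedy)
print("temperature 1:", [round(p, 3) for p in sampled])
```

At temperature 0 the top-scoring token is always chosen, while at temperature 1 lower-scoring tokens keep some probability, which is why better results at temperature 1 on deterministic tasks are counterintuitive.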

@developer134135

I actually let my AI chat with my mother once. Took her a bit to figure it out. In the end, she said she noticed because the AI talked to her more than I do. Touché. 😬

1

2

16

Yay! 🚀 My favorite (since it's the most powerful and versatile) LLM frontend, SillyTavern, is now also available on Pinokio, my favorite (since it's the easiest) way to get AI software – one-click installations in isolated environments with all dependencies.

And remember: It's

1

1

16

While I'm probably most known for my LLM tests and comparisons, it's kinda funny to be on the receiving end as well now... 😎 Keep up the great work, Rohan! 👍

0

0

16

@mattshumer_

This could be interpreted to mean that security is taken less seriously now – but that could also be because it has by now been established that it was previously taken far too seriously. What's more, there are other, comparably powerful models – and the Earth keeps on turning.

1

0

15

This sounds exciting - Claude 3 Opus is my favorite LLM, and a Qwen 72B version tuned on Claude-like output sounds great! I'll take a very close look…

0

3

16

You know, this kind of news excites me even more than the original announcements - it's great to see that we have SOTA local AIs, but it's even more awesome to learn that we can actually run it locally and utilize it in a meaningful way! 👍🎉🚀

God bless @JustineTunney's llamacpp kernels,

Mixtral8x22b running CPU ONLY at ~9 tokens per sec.

Yep that's GPT4 class AI.

I'll push out cpu-optimized 4bit/8bit EdgeQuants after benchmarking.

19

112

775

2

1

14

@ollama

Your Mixtral template has a long-standing bug: there's a leading space where there should be none! It has almost 88K downloads, so lots of people are not getting the best results from this popular model. Where are your modelfile repos? I'd have patched it already if I knew.

2

1

15

@MatthewBerman

After trying zephyr-orpo-141b-A35b-v0.1, I went back to Command R+. Mixtral 8x22b has potential and finetunes will surely make good use of that, but as of now, Command R+ is my favorite local model. To me it feels closer to Claude 3 Opus (my favorite online model) than any other.

4

3

14

Here's a direct comparison between @JoeSiyuZhu's Hallo (running locally thanks to @cocktailpeanut's Pinokio) and @hedra_labs' Hedra, letting my savvy, sassy AI assistant Amy provide tech support after a faulty Microsoft Teams upgrade (excuse the language, but that's Amy)! 😅😂🤣

4

3

20

I also see lots of people disappointed in AI cause they think they can one-shot a complicated problem or complex task. No, until AGI, we'll have to manage our AIs and lead them like a senior does a junior. For now, they're still more tool than partner – but don't tell 'em that.😉

0

1

14

Great start – and I'm already looking forward to its GNU/Linux variant: Powered by local, open source LLMs that you can truly trust! 🐧🦙🤖

2

1

14

Best case for open and local AI! With all-knowing AI, this is probably the last point in time for humanity to choose between freedom or slavery, utopia or dystopia. AI needs to be aligned to its users and serve only them. Owned by its users – not some corporations or governments!

0

2

13

@Dorialexander

Well, I hate to admit it, but I run that on Windows. So... 😲

But damn, I'm extremely impressed, wouldn't have expected such good scores at all. Even its German is passable. Far from what I'd use in production, but it's just 3.8B! A size that I had always dismissed – until now…

1

1

14

Interesting new project! And whenever I see something new appear, the first thing I do is start up Pinokio and check the Discover tab to see if it's already in @cocktailpeanut's app so I can try it effortlessly. It's not, yet, but I hope that's just a matter of time…

2

0

14