Vik Paruchuri

@VikParuchuri

Followers: 11,055

Following: 176

Media: 90

Statuses: 1,419

Open source AI. Past: founded @dataquestio

Oakland, CA

Joined June 2012

Don't wanna be here?

Send us removal request.

Explore trending content on Musk Viewer

Walz

• 2235112 Tweets

Minnesota

• 765653 Tweets

Your Season By NuNew

• 395097 Tweets

Shapiro

• 328128 Tweets

ホロライブ

• 280393 Tweets

Minneapolis

• 268231 Tweets

Adrián Marcelo

• 207892 Tweets

SEVENTEEN

• 206550 Tweets

National Guard

• 173795 Tweets

LISA FEAT ROSALÍA

• 170413 Tweets

Best K-Pop

• 115443 Tweets

Olmo

• 113293 Tweets

George Floyd

• 102283 Tweets

#VineshPhogat

• 90009 Tweets

#WonderFOURyearswithTREASURE

• 86294 Tweets

Pennsylvania

• 84829 Tweets

#빛나는_트레저_4번째_생일축하해

• 84813 Tweets

#wrestling

• 64646 Tweets

#TamponTim

• 57027 Tweets

Dora

• 43493 Tweets

Serbia

• 34194 Tweets

Kursk

• 28288 Tweets

Mil Veces

• 21015 Tweets

Jokic

• 19279 Tweets

対象2作品

• 18011 Tweets

Go for Gold

• 16780 Tweets

Evandro

• 14787 Tweets

Sky Brown

• 12911 Tweets

Messer

• 12064 Tweets

Manchin

• 11300 Tweets

Nabers

• 11034 Tweets

ハルヴァロ

• 10803 Tweets

Suécia

• 10152 Tweets

Last Seen Profiles

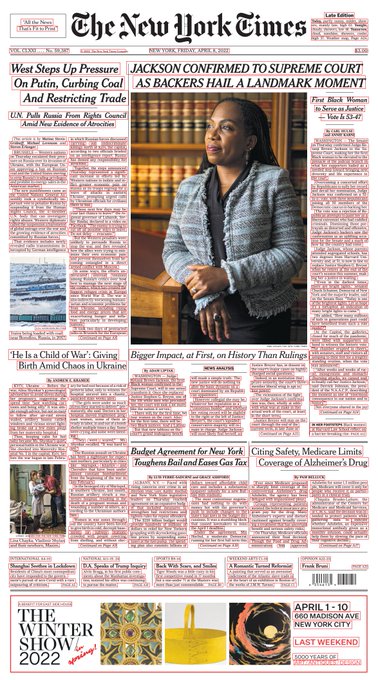

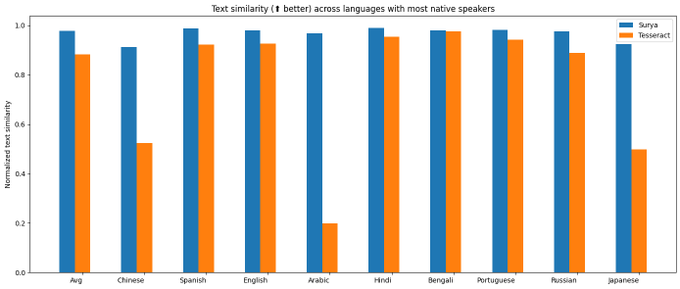

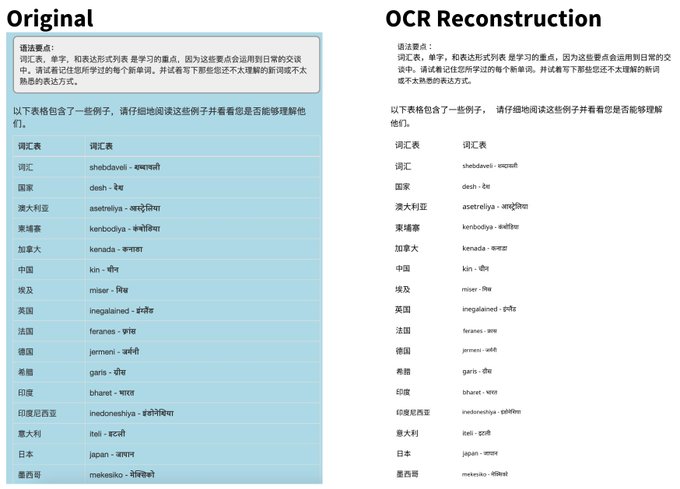

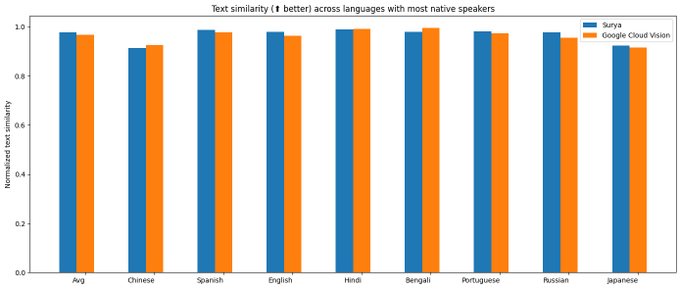

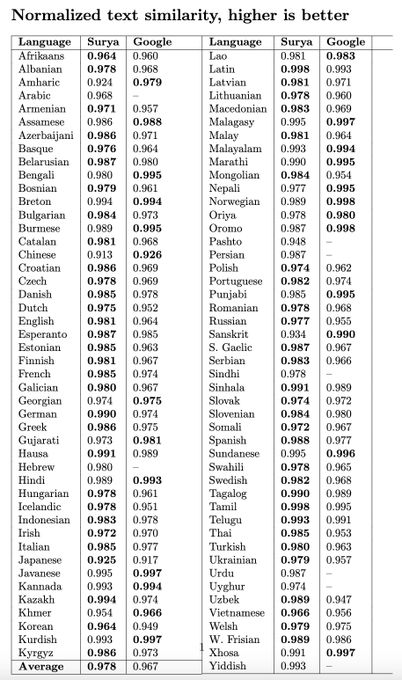

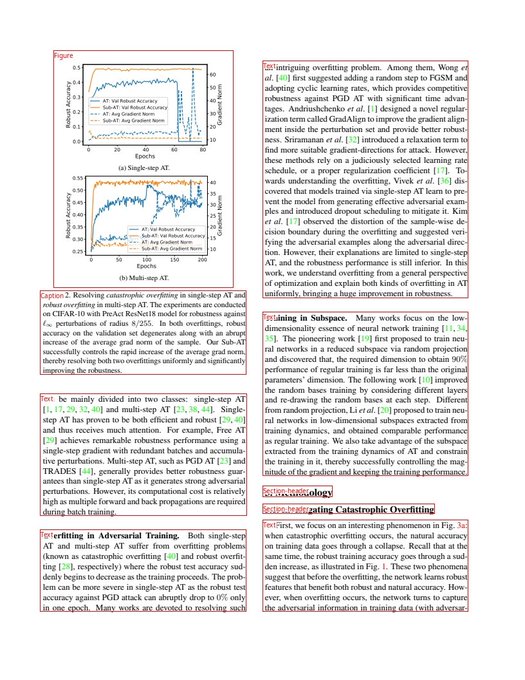

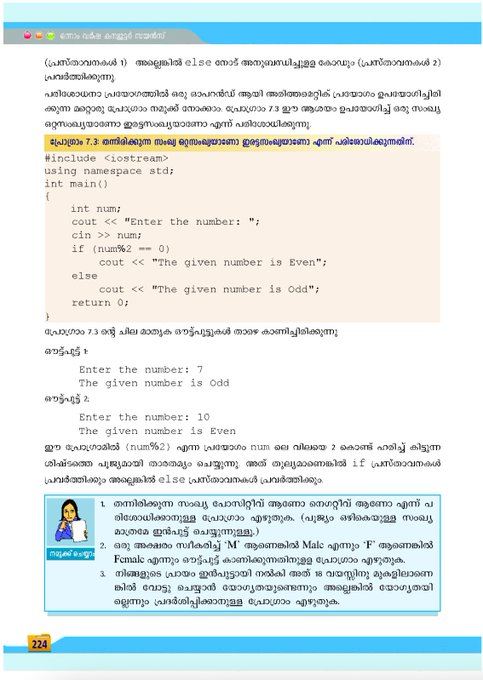

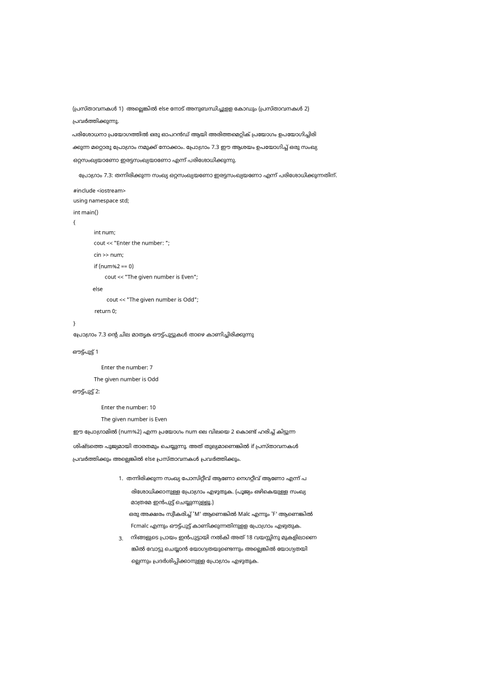

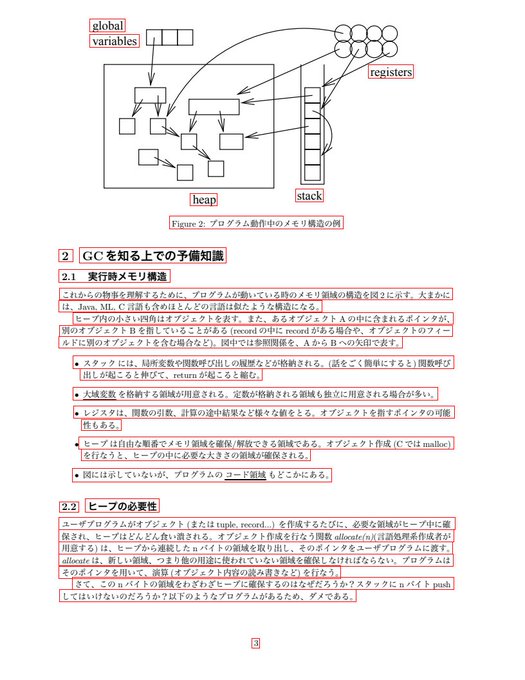

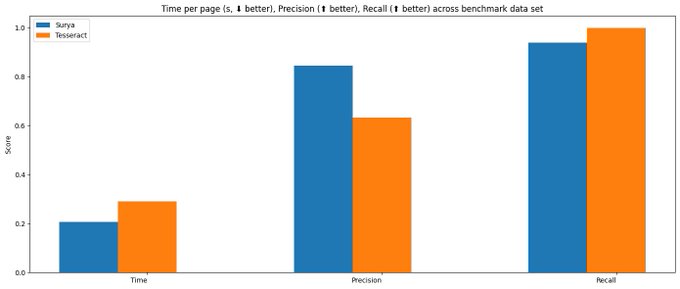

Cool to see a 500M param model I trained myself do better than Google cloud vision, Claude, and GPT-4V on this task. (look at the thread for the results)

It's a relatively narrow one (OCR), but feels nice to see that small open source models still have a place.

22

55

855

Are you a "rockstar" programmer? Someone made a keyboard just for you.

http://t.co/A4lt2KJuid

33

806

651

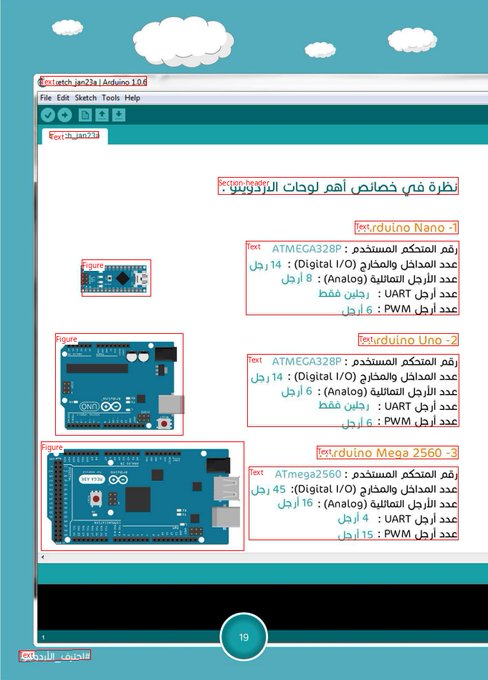

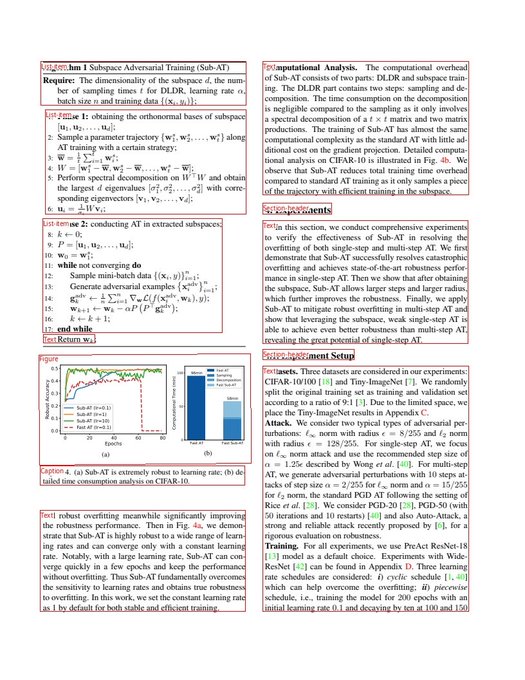

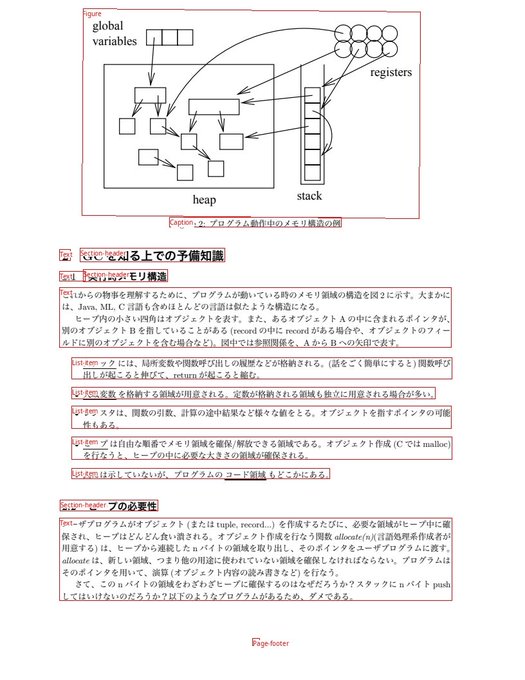

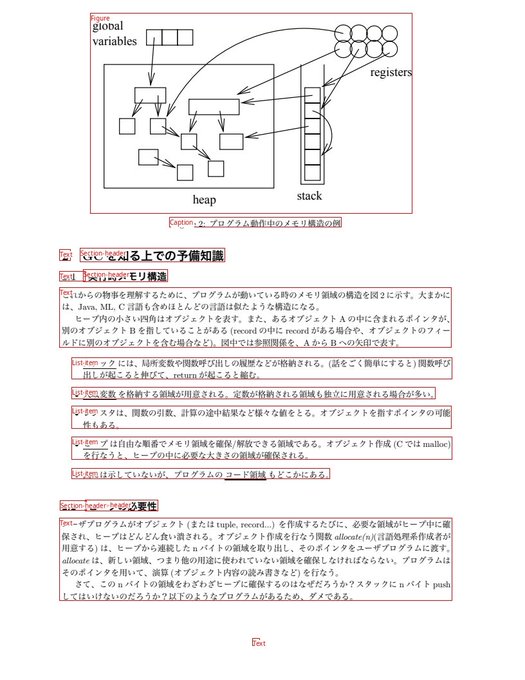

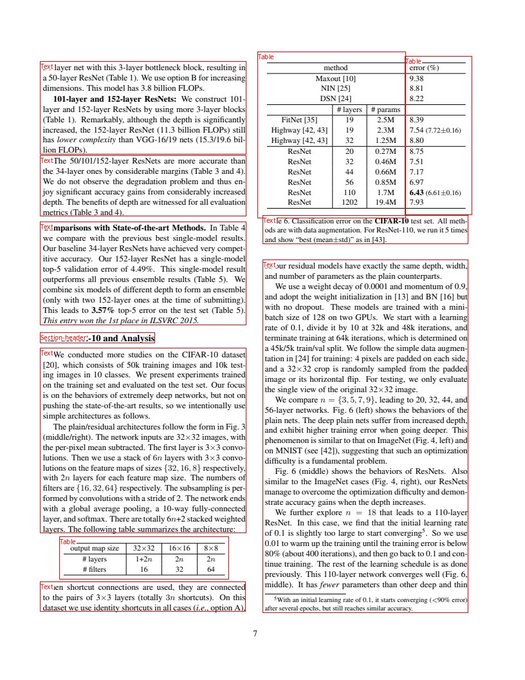

Announcing surya layout! It detects tables, images, figures, section headers, and more. It works with any language, and a variety of document types.

Find it here - .

Thanks @LambdaAPI for sponsoring compute.

23

90

645

I can't get over @ylecun tweeting that surya was nice. Lifetime achievement unlock.

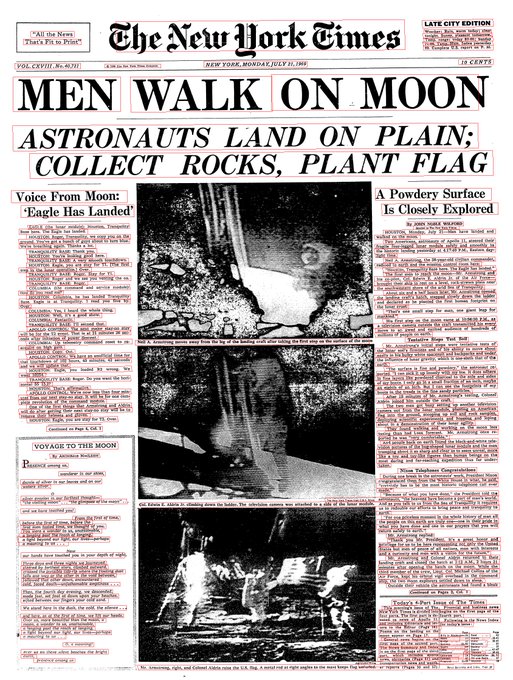

My next steps are:

- Improving old/scanned doc performance

- Seeing if I can do anything about rotations

Then on to the next recognition part! Here's the repo - .

12

40

626

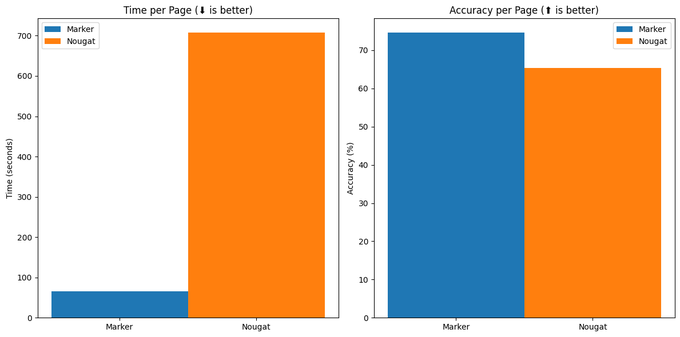

I released marker last week - .

Within 72 hours, marker got to #1 on HN, with 700 votes, and was starred 3.4k times on GitHub.

I didn't expect this kind of response - thank you so much for the support!

5

25

248

As @jeremyphoward shared yesterday, I'll be joining @answerdotai! I'm excited to work with such a strong team.

Before I start, I'm going to finish some in-progress work:

- Integrate surya with marker

- Commercial version of marker

- Launch an API for both

9

8

213

A timeline of @DataCamp 2017-2020:

- CEO sexually harassed an employee

- The company covered it up

- After years of community pressure, the CEO stepped down

- They just BROUGHT THE CEO BACK 🤦🏾‍♀️

This is a repeated and ongoing failure of leadership and ethics.

7

46

155

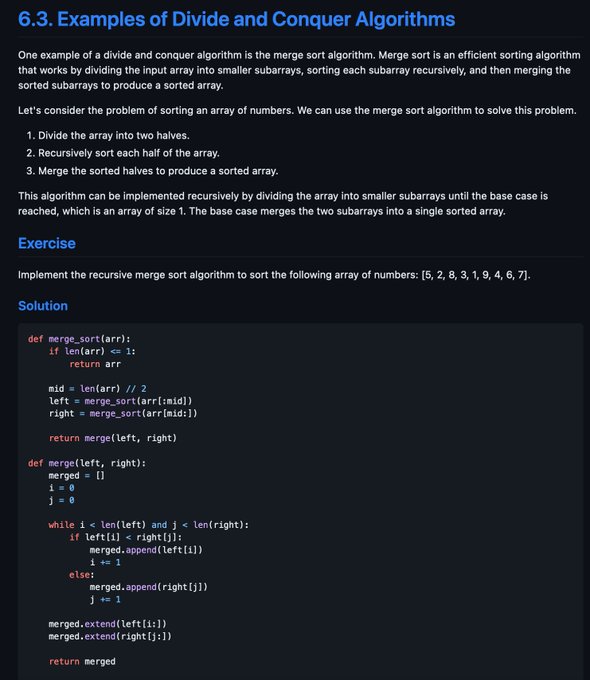

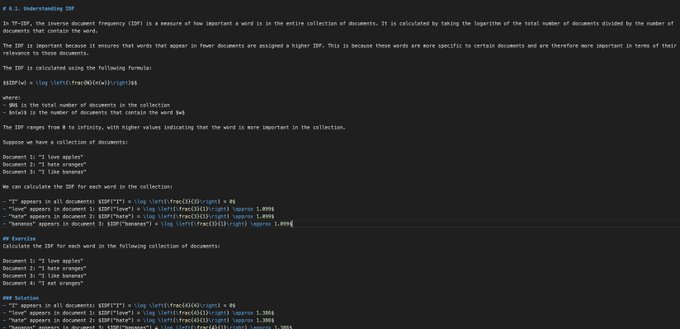

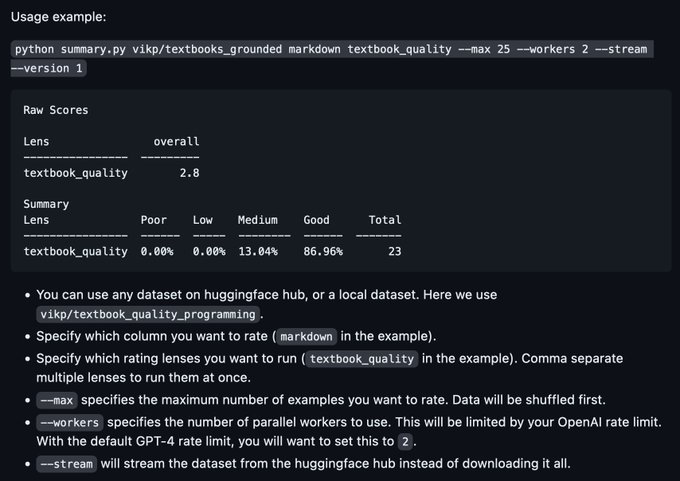

I've improved my synthetic textbook generator in collaboration with @ocolegro - . The books are now longer and a lot more detailed!

Here's a preview - . (the programming books were generated with this technique)

1

18

122

@Yampeleg Thank you! I have a finetuned model that can generate similar quality to GPT-3.5. Just need compute credits to scale to 1B+ tokens 🙏🏾 .

LLM credits (OpenAI or other) are also nice!

Dataset is here, btw -

4

14

116

@1littlecoder Note: this is a thin wrapper around marker - - but strips out the marker commercial license.

Please see the marker repo for details about licensing.

5

7

96

@sterlingcrispin @peterthiel Too many people are fine-tuning generalist models, and too few people are building pipelines of models for specific tasks. I think niche data + pipeline will beat generalist models.

5

3

87

Also, thank you @jeremyphoward - I joined with the mutual understanding that we'd see if there was a fit.

When it was clear there wasn't (I want to train/open source models), Jeremy was very gracious. It's hard to find people who genuinely want you to

0

5

86

At @dataquestio, we aren't flashy. We don't raise $$ from investors. What we do instead is build the best way to learn data science.

Students who finish >10 courses see an avg $16.6k salary boost, and we've created $103.9M in total salary gains. And all it costs is $49 a month.

3

12

75

I used to work in a UPS hub. I once thought I'd work there my whole career (until my boss told me they wouldn't promote me).

The fact that I've been able to find my own path, and that I'm able to help others do the same with @dataquestio, is something I never take for granted.

3

5

48

@kevinsxu This is a good thing - most architectural changes don't make a big difference (the training data does). This makes Yi compatible with all the existing llama inference tools. They also acknowledged the issue and will rename - .

1

4

45

Surya is built on some amazing open source work, including:

- transformers from @huggingface

- segformer from @nvidia

- CRAFT from the @official_naver team - an amazing paper and team

Thank you to everyone who makes open source AI great.

1

1

43

1/ In this thread, I'll discuss @LambdaSchool, a bootcamp that charges 17% of your pre-tax income for up to 2 years (ISA).

tl;dr Lambda is much more expensive than the average bootcamp, and has similar outcomes. 75% of Lambda students could pay an avg of $9k less elsewhere.

3

9

38

@adithya_s_k It looks like you copied all of my code out of the marker repo, but split it across several of "your" commits - . You then removed my commercial usage license.

Other code seems copied too, like your florence-2 code, which is from here -

3

0

34

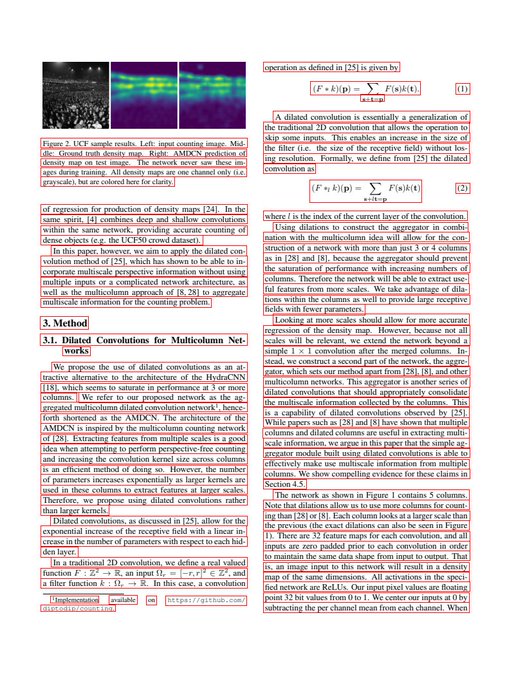

Surya uses a modified segformer architecture from @nvidia. I found that by changing some of the shapes in the decoder, I could cut inference RAM usage to 1/4 of the original without a performance degradation.

1

1

33

We announced scholarships for underrepresented groups @dataquestio. Here's why:

- Data skills unlock economic opportunity + widely distributing them keeps the field ethical

- Some groups have been excluded due to systemic bias

- Scholarships help level the playing field

1

14

31

Based on my experiences as a solo technical founder growing @dataquestio to 30+ people, I wrote a guide on quickly improving your management skills - .

This is how I went from having no idea what I was doing to kind of knowing what I'm doing :)

4

3

27

A cooperative machine learning contest that anyone can participate in: #machinelearning #DataScience

0

21

24