Suvidriel 🌸🐈🇫🇮 VTuber Tutorial Lady

@Suvidriel

Followers

10,553

Following

1,009

Media

664

Statuses

3,935

VTuber Dev striving to make VTubing more accessible | she/her | Twitch Partner | Dev of #VNyan | #SuviArt | 📧 business @suvidriel .com | Model: @mintexce

Helsinki, Finland

Joined June 2009

Pinned Tweet

Hewwo~ 💕

I'm Suvi, a variety streamer and software developer from Finland. Nice to meet you all~

🍪Twitch:

🍪YouTube:

🍪Discord:

🍪Art:

#SuviArt

10

33

342

Thanks to the addition of Spout2 to VTube Studio it's now very easy to get a 2D avatar in

#VNyan

through a Spout2 receiver prop and use most of the redeems just fine~

26

217

1K

Are you a 2D VTuber wanting to add some interactive redeems to your streams?

Did you know that

#VNyan

has supported VTube Studio, Inochi2D as well as face camera streams for almost 2 years already?

It's super easy to bring any 2D image to VNyan through the built-in Spout2 props.

10

158

1K

To celebrate the big UI/UX redesign milestone the

#VNyan

community helped me put together a showcase reel of some of the awesome things you can do with the app~

Enjoy~ 💕

#VTuber

#VTuberUprising

28

254

1K

As seen on stream yesterday, the next version of

#VNyan

comes with built-in Look at Camera so you don't have to make props for it anymore.

32

80

930

#VNyan

1.08 introduces the Pendulum Physics-system for creating eye wobbles.

The release also fixes several bugs and contains improvements to anti-aliasing and bloom.

Download:

18

131

854

Side by side comparison of the ARKit tracking in

#VNyan

using Web Camera and iPhone.

While it doesn't quite reach the same quality it's still way better than no ARKit at all

With some tweaking of the expression settings the quality could be improved even further

22

125

835

#VNyan

1.3.1 adds one of the popular community-created Node Graphs as a built-in feature to allow more expressive, Live2D-style body movement.

It also adds a new post-processing effect and a lot of quality-of-life features such as an object picker.

Enjoy~ 💕

33

128

828

Just tested LeapMotion 2 in

#VNyan

and I must say the tracking is way, way better and has a much wider range than in the first version.

The device was used with a neck holder.

21

86

735

#VNyan

1.1.5 introduces experimental Web Camera tracking with ARKit and simple blendshape support.

This finally makes VNyan a full-fledged VTuber app with its own tracking. Enjoy cuties~ 💕

24

134

674

This upcoming

#VNyan

feature may be of interest to all you 3D modeller types~

Camera Angle Blendshapes

The app tracks the angle between the avatar's face and the camera and applies it to 4 camera blendshapes, so you can change how your avatar's mouth etc. looks based on the angle.

27

81

601

Valentine's Day is coming soon so here's some wiggly heart blob throwables and droppables for

#VNyan

💕5 Colors

💕Sticky and non-sticky versions

Get them for free from my ko-fi:

#freeVTuberAssets

#VTuberAssets

4

71

522

The VR Tracking support in

#VNyan

is coming along nicely~ 💕

Still a lot of work and fine tuning of the tracking result left before public release though~

21

49

520

#VNyan

1.0.19 adds support for reading virtual and physical trackers from VMC-protocol. The trackers can then be linked to props.

It also adds several Leap Motion updates including Screentop-mode

16

50

482

Having worked on

#VNyan

for nearly 2 years, I keep forgetting we had support for certain features until someone requests them again.

For example, this lean-forward feature has been in the app for over a year. I've never used it myself, but now it's definitely going into the ASMR streams.

11

47

485

#VNyan's UI/UX rework is progressing nicely~

You'll be able to design and share your own themes~ 💕

20

60

483

The upcoming version of

#VNyan

will increase the shadow precision by quite a bit, to some degree even making contact shadows unnecessary.

This is a comparison video of the current mode with contact shadows vs the new one without 💕

11

51

482

Some new stuff coming to

#VNyan

soon~

Expression Mapper for toggling blendshapes based on a combination of other blendshape values.

Also various rain effects~

15

56

476

#VNyan

1.2.0 adds the long-awaited YouTube support to the application.

It also introduces a multitude of new features such as a built-in Look At-camera and various new post-processing effects.

The update and more detailed changes are available on the itch-page as always~ 💕

11

51

392

Testing #VNyan's Web Camera-based ARKit and head tracking~

Might possibly be included in the next release 💕

17

57

384

Hewwo~ 💕 I'm Suvi, a variety streamer and tutorial cat lady from Finland. Nice to meet you all~

🍪Twitch:

🍪YouTube:

🍪Discord:

🍪Art:

#SuviArt

11

42

348

#VNyan

1.2.6 brings several updates to the Bubble Shooter-node including stickiness and GIF-image support.

Other features include web camera hand mirroring and virtual camera background selector.

Enjoy~ 💕

9

59

352

Testing ARKit tracking through iFacialMocap, VTube Studio and MeowFace in

#VNyan

Both head position and rotation tracking working to some degree

10

27

350

#VNyan

1.2.2 introduces a lot of community requested features such as Virtual Camera-support and VMC-smoothing.

It also adds and updates Worlds that come with the app.

The update is up on itch as usual~ 💕

16

47

345

#VNyan

1.2.1 introduces a redone User Interface and Startup wizard to make the application easier for new users.

It also adds Modding/Plugin support to allow the creation of new features and custom Unity scripts 💕

8

40

328

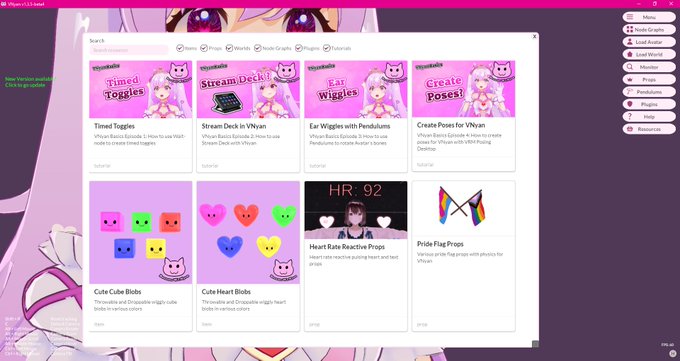

The upcoming version of

#VNyan

is going to add a resource browser for easy discoverability of assets for the app.

If you want your asset links added to the browser you can submit them in the discord already~ 💕

9

59

324

#VNyan

1.0.9 introduces experimental ARKit support through VTube Studio (iPhone), iFacialMocap and MeowFace.

The tracking can be combined with VMC-protocol as well.

Download:

13

47

305

#VNyan

1.0.16 introduces the new Expression Mapper that allows you to map for example ARKit expressions to blendshapes.

The new version also adds some IK fixes and a new rain-effect

Tutorial link is in the comments~ 💕

9

51

306

The LeapMotion-support in

#VNyan

will allow node graphs to react when the tracking status of each hand changes.

This allows, for example, swapping to some arm sway or similar automatically when hand tracking is lost.

12

37

289

#VNyan

1.0.13 finally introduces the experimental MMD motion-support for both humanoid and camera motions.

Motions that use IK are sadly not supported.

9

44

281

#VNyan

1.2.8 adds support for NSFW content creation with Chaturbate integration as well as support for Lovense Toys.

It also adds a couple of new post-processing effects, including Motion Blur.

Enjoy~ 💕

14

36

276

Since the video quality on this platform seems to be stuck at 270p, here's a screenshot showing the precision of the shadows in the next version of

#VNyan

Each finger casts a shadow correctly now even without contact shadows on

12

31

272

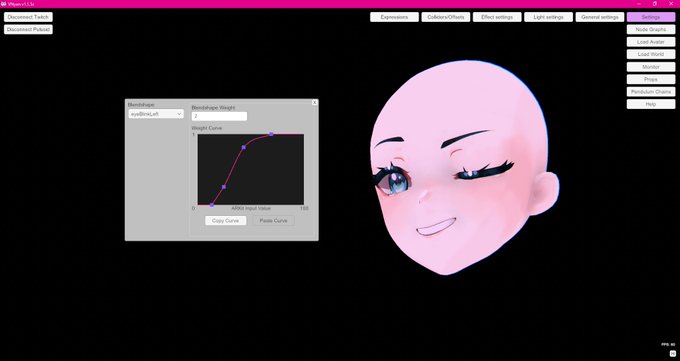

Testing curve-based ARKit Adjustment in

#VNyan

This allows configuring Web camera and Phone ARKit tracking to suit your face/specific needs 💕

17

28

266

Not sure if this needs to be said but

#VNyan

is not impacted by the new Unity price changes. The thresholds are very, very far away.

Of course, this kind of change is a huge loss of trust towards the engine and definitely encourages one to pick a different one for future projects.

6

31

265

The upcoming 1.4 version of

#VNyan

allows Plugin Developers to create new post processing effects for the app~

7

41

268

#VNyan

1.1.1 is mostly a bug-fix release, but it also introduces, for example, Feet Planting for ARKit tracking, which allows the avatar to float.

9

38

261

Audio Reactive shaders are coming to

#VNyan

soon through AudioLink-support.

This means that you'll be able to utilize AudioLink-capable shaders in Avatars, Worlds and props~

11

38

255

#VNyan

1.1.6 adds ARKit Adjustment settings to adjust blendshapes for both phone and web camera tracking

It also adds optimizations to VRM 1.0 performance in the app

9

32

236

I just released a small flag asset pack for

#VNyan

All the flags come with physics and there's also a Unity package for making your own flag assets.

You can get it for free from my ko-fi:

Enjoy~ 💕

5

60

234

#VNyan

1.1.0 introduces completely redone tracking layers, 4 vmc receivers, animation injection, camera angle blendshapes etc.

The update is big so I urge you to reserve enough time to reconfigure your settings before your next stream~ Please do not try to update mid stream~ 💕

9

43

226

Hewwo

#Vtuber

#ENVtuber

In today's tutorial we get into more advanced animation mechanics of VSeeFace to create animations that can be started through VMC protocol. This works perfectly with Twitch integrations etc.

#VTuberUprising

6

63

223

💕VNyan Launch & Partner Anniversary Subathon💕

NOV 12 AT 11AM UTC

Let's have comfy and fun times together~ and then release VNyan - the game changing 3D VTuber App~

Hope to see you there~💕💕

#ENVtuber

#Vtubers

#Vtuber

#VtuberUprising

12

73

226

#VNyan

1.3.5 introduces a new Resource Browser-feature allowing much easier discovery of various kinds of assets for the application.

It also adds a Cooldown-node that can help simplify a lot of the existing Node Graphs.

Enjoy~~ 💕

12

59

220

Cuties, a reminder~

#VNyan

is a free app and available only on my itch-page. If you see someone selling it on some random website then it's a scam. Please do not download it. Stay safe!

4

61

212

#VNyan

1.1.4 introduces Depth of Field-node for blurring the background. It also adds gamepad button/stick detection, Pose Injector and a lot more~

6

24

209

Testing VTuber setup with Rokoko Mocap Suit and Gloves with one Vive Tracker for positional tracking~

#madewithrokoko

#Vtuber

12

23

207