Richard Riley (R²)

@Richard_D_Riley

Followers: 12,765 • Following: 1,267 • Media: 236 • Statuses: 6,738

Prof of Biostats • BMJ Stats Editor • Books: "Prognosis Research in Healthcare" & "IPD Meta-Analysis: A Handbook ..." • Doctor Who ❤️❤️

Joined August 2016

Pinned Tweet

Five years pregnant with this … finally popped out today 🥳

Many thanks

@LesleyCRD

& Jayne Tierney for embarking on the journey with me - wonderful people to work with & learn from.

And to our amazing co-authors for guiding, correcting & challenging us - thank you 🙏

23

41

371

Personal news👇

In November, I will move to the University of Birmingham (

@unibirmingham

) as Chair of Biostatistics

Excited to work with a leading Biostats team in

@UoB_IAHR

@unibirm_MDS

& to continue our applied & methods work & courses in prognosis, prediction & meta-analysis

67

15

511

Using software modules or packages (within R, Stata etc) in your research?

Novel idea: reference them in your publications too!

These packages often take years of hard work.

Their creators deserve to be acknowledged

(indeed, their career progression depends on it)

#academia

13

67

329

📽️NEW VIDEO 📽️

"How to get your article rejected by the BMJ:

12 common statistical issues"

I discuss common stats issues we encounter at

@bmj_latest

Please check before you submit next article 🫣

Santa would check the list twice 🎅

Hope helpful 🙏

10

116

321

🎄NEW

@bmj_latest

Christmas article🎄

"On the 12th Day of Christmas, a Statistician Sent to Me..."

Fun & light-hearted, flags common issues the BMJ Stats Editors encounter during peer review

Hoping for a positive educational impact, so please share 🙏

4

120

252

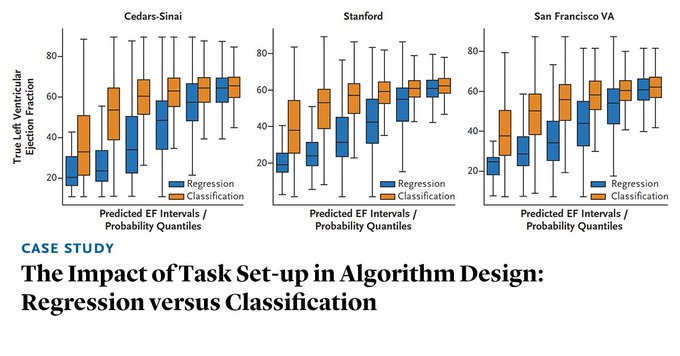

Important message below but infuriating

#AI

field has to 'discover' this rather than being guided by long-standing stats literature

eg,

by

@GSCollins

says:

'Categorising continuous predictors is ... inefficient & should not be used in model development'

AI models perform better when trained on underlying measurements rather than the associated diagnoses, because dichotomizing data loses information that is useful in training AI models. Read the Case Study by

@amey_vrudhula

, BS, et al.:

5

37

102

13

64

246

In case you missed it: our

@bmj_latest

paper on how to calculate the sample size required for developing a clinical prediction model (aimed at a broad audience)

8

77

249

I keep being asked to review 'urgent'

#COVID19

articles that identify optimal cut-points (e.g. for d-dimer levels). Time to repeat again: dichotomisation of continuous variables is biologically implausible, statistically inefficient, & against ethos of individualised prediction

9

58

219

So important for researchers to have quality reading time.

Being on top of the literature is an academic super power. Yet I fear most of us rarely get the chance to prioritise this. I increasingly don’t.

How do we find time during our working hours to read?

#AcademicTwitter

14

18

194

End of days

We should not be promoting this technology with words such as ‘biggest update’ or ‘simply’ or ‘analyse it for you’

Statistics & data science is a profession requiring substantial training at degree level(s) - there is rarely anything simple or automated about it

16

24

190

** Writing an academic text book - a thread **

I've written/edited a couple of textbooks in the last 5 years, & gained experience about the process & what worked well.

So 👇 I share 10 learning points to help others considering their own book project.

#AcademicTwitter

3

50

183

The

#academia

career bootstrap:

1) Get existing data (call it 'big', ignore quality)

2) Form Qs based on variables recorded

3) Analyse until 'novel' findings

4) "Sensitivity analyses did not change results"

5) Publish

6) Inform comms team & media

7) Repeat 1-6 until tenure/chair

3

30

174

Delighted to announce our new book,

*** Individual Participant Data Meta-Analysis: A Handbook for Healthcare Research ***

will be published by Wiley in May 2021.

Edited by myself,

@LesleyCRD

& Jayne Tierney.

More details below...

1/5

9

31

164

2002: print out articles & place in work bag to read later

2012: download article PDFs to read later

2022: use Twitter 'Bookmarks' to save links to articles to read later

Always: never actually read later

#Academia

2

11

162

**NOW PUBLISHED - with

@GSCollins

**

"Stability of clinical prediction models developed using statistical or machine learning methods"

So you've developed a fancy new model ... but have you checked instability of individual predictions?

You should!

1/3

2

43

159

Many thanks to

@NIHRcommunity

@NIHRresearch

@nihr

for supporting methodologists on their career pathway - humbled to receive this, & at same time as

@DrLauraGray

&

@GSCollins

too

Will continue to champion the importance of methodology & high research standards in coming years

Today,

@NIHRresearch

announced the research leaders receiving the prestigious NIHR Senior Investigator Award🏅

We couldn't be more pleased to congratulate our very own Professors

@drmelcalvert

&

@Richard_D_Riley

on this important recognition!

Read more:

5

7

38

27

11

162

***NEW PAPER***

"Minimum sample size for developing a multivariable prediction model using multinomial logistic regression"

Extends our work for continuous, binary & survival models

Led by Alex Pate &

@glen_martin1

1

30

143

"Is Medicine Mesmerized by Machine Learning?" - spend 10 minutes reading this excellent blog by

@f2harrell

on why stats methods remain crucial for risk prediction in healthcare; the ML field is too focused on classification & rarely examines calibration

1

61

142

📽️ NEW VIDEO 📽️

"Sample size calculations for external validation of a clinical prediction model"

- what sample size is required for a validation study aiming to precisely estimate model performance?

- 30 min broad overview 👇

#stats

#MachineLearning

4

50

141

ICYMI:

"On the 12th day of Christmas a statistician sent to me"

Our educational article in

@bmj_latest

Christmas 2022 issue

The recommendations are for life, not just for Christmas - so please share 🙏

Here's a one-page summary to pin to your wall

1

52

138

NEW BMJ GUIDANCE PAPER: Calculating the sample size required for developing a clinical prediction model - with collaborators

@MaartenvSmeden

@GSCollins

@joie_ensor

@Kym_Snell

@CarlMoons

@f2harrell

@glen_martin1

@hans_reitsma

4

45

137

Well written introductory article: "Overview of clinical prediction models" Provocative, though, that it is aimed at clinicians doing prediction model research who don't have time to read the books of

@f2harrell

or

@ESteyerberg

... 1/3

4

50

136

*NEW PAPER*

"Poor handling of continuous predictors in clinical prediction models using logistic regression: a systematic review"

@JClinEpi

thanks to Jie Ma,

@pauladhiman

&

@GSCollins

for leading this important paper showcasing current shortcomings

3

40

137

*** NEW PAPER ***

"Minimum sample size for external validation of a clinical prediction model with a binary outcome"

Many thanks to co-authors

@Kym_Snell

@GSCollins

@joie_ensor

@LucindaAArcher

@MaartenvSmeden

@TPA_Debray

Open-access⬇️

4

38

134

Really pleased to share the cover for our new book on IPD meta-analysis - hope you like it!

Asked my young daughter for her verdict.

She laughed out loud & said:

"It says 'willy' in the corner!"

Not the response I expected.

Apologies

@wileyinresearch

4

15

130

NEW: Minimum sample size for developing a multivariable prediction model PART II: binary & time‐to‐event outcomes. Big thanks to

@GSCollins

@f2harrell

@Kym_Snell

@CarlMoons

@DanielleBurke88

@joie_ensor

Calculate minimum EPV required based on three criteria

4

61

126