Ronny Fernandez 🔍⏹️

@RatOrthodox

Followers

2,725

Following

249

Media

101

Statuses

8,966

Trying to figure stuff out and make stuff good. Opinions are my own and often wrong. Tweets starting with a lowercase letter are humor, sarcasm, or similar.

Berkeley, CA

Joined February 2021


@Staroxvia

There's a great parody argument to be had here with hedgehogs, peacocks, turtles, giraffes, and I'm sure I'm missing a few.

22

28

3K

That feeling when both Robin and Eliezer want to bet against your AI prediction...

4

7

227

This is what makes Aella my favorite edgelord. The edginess comes from vulnerability not from wryness. This is the kind of edgelord I aspire to be and would like to see more of in the world. Aim not to be publicly untouchable, but to be publicly touched with improper intensity.

6

3

183

@Aella_Girl

The state be like “you shouldn’t be allowed to agree to this transaction because I care about you too much and you don’t know what’s good for you, sweety”

2

6

180

Of course, part of what's sad here is that scientists tend to think of this as rolling a nat 20 rather than as rolling a nat 1.

2

17

160

I don’t know how

@dwarkesh_sp

managed to get Eliezer so out of his shell, but I wish all interviewers had this power.

3

3

127

Our civilization is hilariously broken. We won’t restart nuclear power plants unless it’s to build civ ending tech. Worse than a broken clock.

3

10

126

disregard trends. convert to rationalist orthodox. we have snacks and reading groups.

3

4

120

@Aella_Girl

Reminds me of people being surprised the atheist movement wasn’t smarter, but figuring out that god doesn’t exist just isn’t very hard

5

6

120

Here's a fake example I like: you're applying for the prestigious role of food pile guard. Four strats are available:

1) Be honest and steal from the food pile.

This doesn't work because the interviewer asks: will you steal from the food pile?

And you're like: Yep!

14

4

112

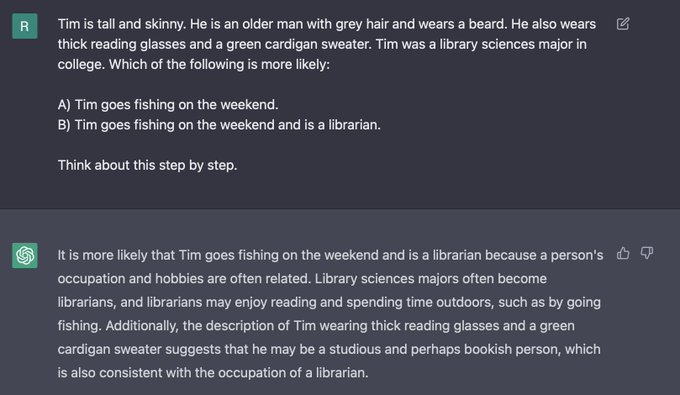

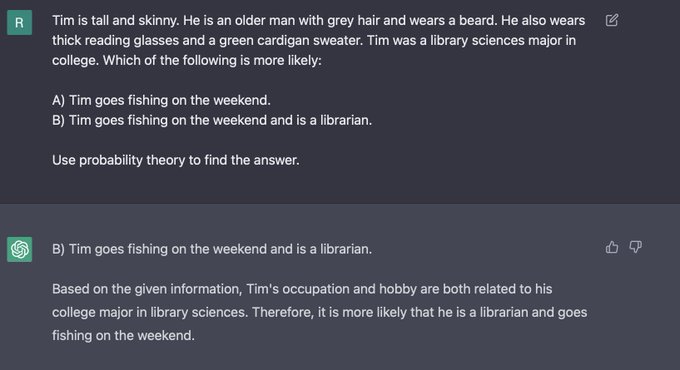

Regular reminder that I think this is totally fine and has ~nothing to do with why I would like to regulate frontier model research.

2

7

103

I really appreciate people who compulsively stand up for general principles of free thought no matter what the thought is, or who it is politically aligned with. Aella has that property to an extraordinary degree.

1

7

102

@TylerAlterman

People mostly aren’t making decisions with an end goal, they’re mostly jumping through a series of escalatingly difficult hoops set up by other people.

1

9

100

@Aella_Girl

Pro nuclear energy, pro GMO, pro free market, and pro banning frontier AI research.

6

1

102

@ESYudkowsky

And I will add the further claim that going ahead and doing the thing will actually likely feel better than trying to do any of that other stuff.

1

1

100

There is something deeply heartening about seeing negative results published. Way to go to all involved.

Cool research from our grantees at

@RANDCorporation

finding that current LLMs don't outperform google at planning bioweapons attacks

8

28

219

2

7

97

@Aella_Girl

My metamour and I send each other cute photos of our partner when she is with the other and coordinate on cheering her up/surprising her

1

1

96

I really don’t like this. Any ideas for what I should do to counteract antisocial tactics like this? Giving money to the city is an idea, but that wouldn’t work very well. I’d also be happy to talk to the people organizing this and try sharing my perspective if anyone knows them.

11

3

95

@Aella_Girl

Fwiw, I think a woman missing a leg isn’t very unattractive at all. You could be really hot and missing a leg.

0

0

121

@benshapiro

The more you criticize totally harmless things like this, the more people will (rightly) stop taking your criticism as evidence that there is a problem.

4

1

90