PolymathicAI

@PolymathicAI

Followers: 2,647 · Following: 75 · Media: 11 · Statuses: 34

The Polymathic AI Collaboration. Shared account.

Joined September 2023


We're thrilled to be at @NeurIPSConf for the first time since we formed a few months ago!

If you're there, we'd love to chat with you about our team and AI/ML research!

Reach out and be sure to check out our accepted papers 👇 #NeurIPS2023 #AI4Science

And we are HIRING! 🥳


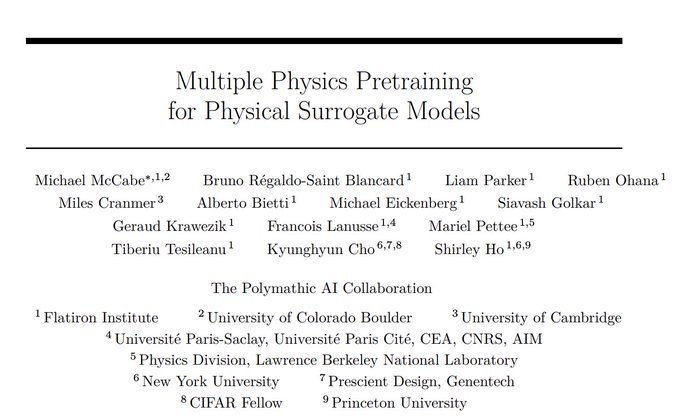

Very excited to share that our team's "Multiple Physics Pretraining" paper won the Best Paper Award at the @AI_for_Science workshop at NeurIPS this year!

Congrats to the team and to @mikemccabe210, @BrunoRegaldo, @liamhparker, and @oharub for leading the effort! 🥳

Congratulations to the best paper award winners at the NeurIPS AI for Science 2023 workshop! @mikemccabe210 @kchonyc @cosmo_shirley Andres M Bran @SamCox822 @andrewwhite01 @pschwllr


Are you a student looking for an internship this summer or fall?

Want to build foundation models for science in NYC?

Join us at @PolymathicAI at @FlatironInst!


AstroCLIP thread: 8/8

Excited to share some of our recent work at @polymathicAI! With AstroCLIP we are bringing multimodal contrastive pretraining to astrophysics!


Our team, led by @cosmo_shirley, comprises both pure machine learning researchers and domain scientists, covering a wide array of disciplines:

2/n


Check out our oral presentation of Multiple Physics Pretraining (MPP) at the #AI4Science workshop!

MPP is a set of techniques for pretraining on a diverse collection of time-dependent physical systems to learn models that can be fine-tuned for multiple problems.


We are generously supported by funding from @SimonsFdn and @FlatironInst.

Additional participating institutions include @nyuniversity, @Cambridge_Uni, @SchmidtFutures, @Princeton, and @BerkeleyLab.

4/n


Polymathic AI is guided by a scientific advisory group of world-leading experts: @ylecun, @hardmaru, Leslie Greengard, @DavidSpergel, @laurezanna, Colm-cille Caulfield, @OlgaTroyanskaya, and Stephane Mallat.

3/n


Finally, check out our work on bringing multimodal astronomical data into a shared embedding space at the #AI4Science workshop!

Blog:


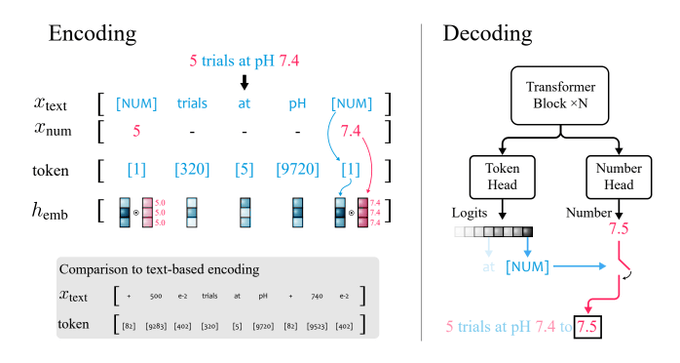

Having trouble with numerics in LLMs?

Check out our xVal presentation at the #AI4Science workshop, where we discuss an efficient numerical encoding that generalizes well!

Blog:
