Ky⨋ Gom⨋z (U/ACC) (HIRING)

@KyeGomezB

Followers: 2,815

Following: 650

Media: 1,227

Statuses: 15,734

Enabling AI Engineers to Orchestrate Swarms of Agents: $ pip install swarms

Palo Alto

Joined October 2021

Lmao, @PropheticAI changed the visibility of their posts because I implemented their model fast.

This is a lesson.

Don’t closed-source your AI models, or they’ll get implemented as open source anyway 😂

26

17

283

I was the first ever to implement Mamba + Transformers:

Good to see the architecture being adopted.

2

10

114

@BasedBeffJezos

DOUBLE ENERGY GENERATION

TRIPLE ENERGY GENERATION

QUADRUPLE ENERGY GENERATION

WHATEVER IT TAKES.

WE MUST GO FORWARD!

5

3

55

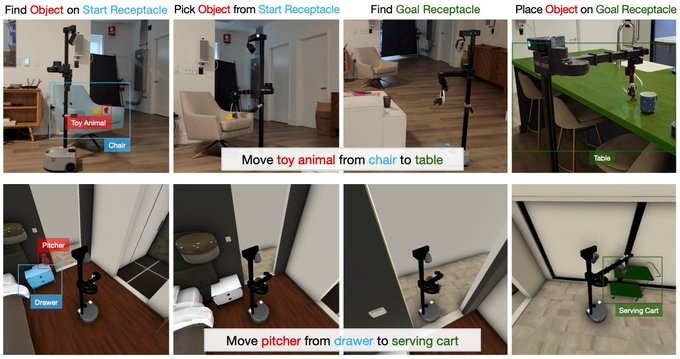

Anybody in Robotic AI want to partner on this? I've implemented RT-2

The leaderboard for the HomeRobot challenge is now open at:

- create a docker image containing your agent

- planning or learning, use your favorite strategy

- we plan to run the top 3 on real hardware

- potentially win a robot from @hellorobotinc

2

22

83

2

8

36

@kyritzb

@PropheticAI

Everything is a Lego block, you just need to know how they connect together

1

3

34

@Blockworks_

This is horrible. A USD-backed stablecoin would have unimaginable consequences, such as targeted inflation, targeted credit inflation, and other horrible, horrible effects.

This is not it whatsoever.

10

0

30

@SamuelMullr

This is why papers that do not release code with their experiments should be overlooked: there is no way to formally test the paper's findings.

2

2

34

Implementation in progress ✅

1.5Bit must be democratized.

2

4

33

@drchristhorpe

The Open Source Implementation of AlphaFold3

We need to democratize this technology asap

1

5

30

I'm very proud to announce Open QwenVL

An open-source, production-grade, ready-to-train implementation of Qwen VL, exactly as described in the Qwen paper.

✅ Full Multi-Modal Processing [ViT, Cross-Attention]

✅ Modular and Reusable

✅ Built with Zeta

Get started now:

4

4

24

1-Bit LoRA is here.

Get started with:

$ pip install bitnet

And, the repo is here:

Appreciate you, @shxf0072

0

3

20

I’m excited to announce I’m headed back to SF tomorrow for a month with @thomasschulzz Solaris Residency Program 😆

Let’s get dinner, work on autonomous agents, and advance Humanity 💯🤖🤖🤖

2

0

19

Implementing this right now in PyTorch and Zeta!

1

1

19

Architecture:

-> Encoder + Decoder [Weird but okay?]

-> RMSNorm

-> MGCA

-> RoPE

-> SwiGLU

-> Based on Noam Architecture

-> Bfloat

Implemented here:

1

2

16
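Two of the components named in the architecture list above, RMSNorm and SwiGLU, are small enough to sketch in plain Python. This is only an illustration of the standard definitions, not the implementation the post refers to and not the Zeta API. The function names `rms_norm`, `silu`, and `swiglu` are hypothetical, and the learned projection matrices that normally feed SwiGLU are assumed to have been applied already.

```python
import math


def rms_norm(x, gain=None, eps=1e-6):
    """Divide x by its root mean square, then apply a per-element gain.

    `gain` stands in for the learned scale vector; it defaults to all ones.
    """
    if gain is None:
        gain = [1.0] * len(x)
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [g * v / rms for v, g in zip(x, gain)]


def silu(v):
    """SiLU (Swish with beta = 1): v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def swiglu(gate, value):
    """Gated activation: SiLU(gate) multiplied element-wise by value.

    In a full feed-forward layer, `gate` and `value` would be two separate
    linear projections of the same input (assumed precomputed here).
    """
    return [silu(g) * u for g, u in zip(gate, value)]


if __name__ == "__main__":
    hidden = rms_norm([3.0, 4.0])
    print(hidden)
    print(swiglu(hidden, [1.0, 1.0]))
```

After normalization the mean of the squared entries is approximately 1, which is the property RMSNorm is meant to provide without subtracting a mean as LayerNorm does.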

@pedrobeltrao

The Open Source Implementation of AlphaFold3

We need to democratize this technology asap

1

0

16

AI researchers and academics don't know how to code.

Their code is very inefficient, poorly optimized, and just low quality.

Which is why I made Zeta, to make AI engineering as simple as possible:

0

0

16