Franck Pachot

@FranckPachot

Followers

15K

Following

47K

Media

6K

Statuses

35K

🥑 Developer Advocate ▝▞ YugabyteDB 🐘 PostgreSQL 🔸 AWS Data Hero 🍃 MongoDB Data Modeler 🅾️ Oracle Certified Master 𝕏posting to bsky/mstdn/lnkdn

Lausanne🇨🇭

Joined October 2012

The most useful thing I've read about microservices and databases is this article by @GaryStafford. Nothing is better than a Sakila example on PostgreSQL🤩.

5

139

546

If you want to know "What is SQL", don't ask @MongoDB. Normalization was not invented to reduce storage but for data integrity, and may require more space in tables and indexes. It is not about physical consideration. By the way, SQL is a language.

30

168

489

Do you know what #backward #compatibility means? You take a binary dump from 1986 and import it into Oracle 20c with one simple command and no additional tool🤓. Different character set, different OS, different DB version, but same table and data. This is @OracleDatabase

17

58

265

My next step: joining @Yugabyte in July. After 20 years as a consultant, with awesome years at @dbiservices, I'll move to a new challenge😎. Here are the reasons: #follow to know more about this #opensource #postgres-compatible 🌍🌎🌏 #distributed #database.

39

13

175

So, today, #monolithic is used as a pejorative word for software which:
- links its functions to be executed by one processor without unpredictable latency
- is clever enough to share memory among multiple processes
- can read/write to the same files from multiple nodes
🙄

12

41

139

France is going to #Lockdown2 and 2 out of 3 people interviewed on TV don't know how to wear a mask🤦♂️, even when publicly interviewed about covid. You can imagine what happens late in a restaurant, drinking with friends. #nosurprise🤷♂️

10

21

129

This is crazy. Oracle has a great free replacement for CentOS and nobody wants it because of the name of the company behind it. How can a company be so wrong in its marketing strategy that nobody wants their free product? 🤷@OracleLinux is really great.

CentOS replacement Rocky Linux 8.4 arrives, and proves instantly popular #linux #centos #rockylinux.

33

28

128

A few weeks ago @ludodba announced that he is joining the database group at @CERN. Now my turn ;) I'll join Ludo and the @CERN DBA team in September. And even if it is quite hard to quit the amazing @dbiservices company, I'm very excited by this new challenge

44

8

117

The most efficient ways to learn:
#community #sharing #meetups #workshops #conferences
Share / Explain👩🏫✍️🎙
Hands-on⌨👩💻
Meet 🍻🥤
Live demo 👩💻🖥️
Podcast / Video 📻📺
Books 📚📑
Slides session lecture🤳🎞️👏

2

30

117

If FRIENDS is a relationship between people, then REAL FRIENDS means that the foreign key is ENABLED and VALIDATED, right @MongoDB?

11

23

116

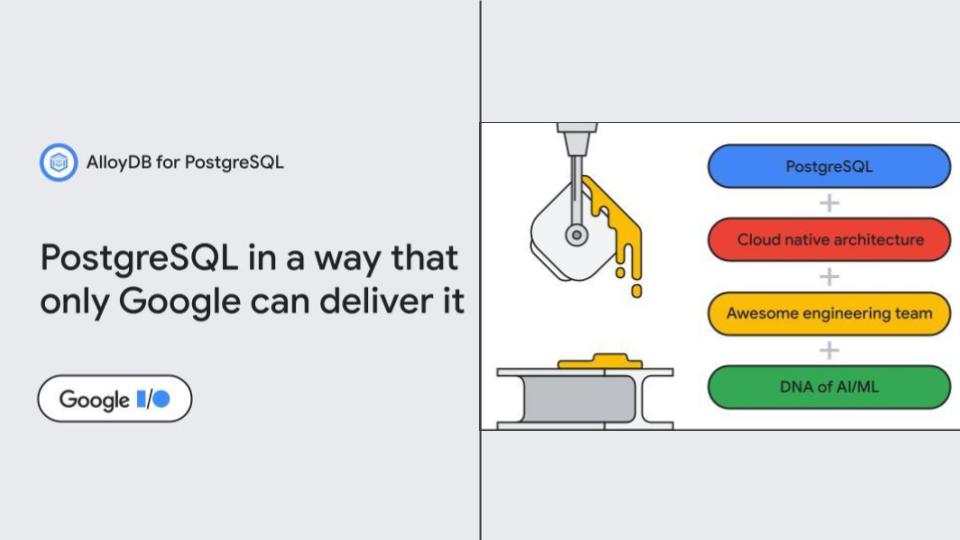

Interesting. Google has built its Aurora. Another proof that PostgreSQL compatibility is the new SQL standard.

Today at #GoogleIO, we’re thrilled to announce AlloyDB for PostgreSQL. Based on our decades of experience designing and managing database systems, it provides superior performance and scale for the most demanding enterprise workloads.

5

28

113

SQL databases can wait on conflict (often called pessimistic locking) or fail on conflict (optimistic) but to dequeue and be scalable, just skip on conflict 🤓.

If you are building a task scheduler, a ticket booking system, or a flash sale, one SQL clause you will be heavily utilizing is `SKIP LOCKED`. Let's explore this clause and learn what it is and how to use it. We need a pessimistic lock to ensure correctness when multiple.

3

4

115
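The three behaviours in the tweet above (wait, fail, skip) can be sketched without a database. This is a minimal in-memory analogy, not the SQL `SKIP LOCKED` implementation: the `Task` class, `dequeue_skip_locked`, and `complete` are hypothetical names, and a `threading.Lock` stands in for a row lock.

```python
import threading


class Task:
    """A queue entry; the lock plays the role of a row lock (illustrative only)."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.lock = threading.Lock()
        self.done = False


def dequeue_skip_locked(tasks):
    """Return the first pending task whose lock is free, with the lock held.

    A locked task is neither waited on nor treated as an error: it is skipped.
    Returns None when every pending task is currently locked or done.
    """
    for task in tasks:
        if task.done:
            continue
        if task.lock.acquire(blocking=False):  # non-blocking attempt: skip on conflict
            if not task.done:                  # re-check now that we own the lock
                return task
            task.lock.release()
    return None


def complete(task):
    """Mark the task as processed and release its lock (the 'commit')."""
    task.done = True
    task.lock.release()


tasks = [Task(i) for i in range(3)]
first = dequeue_skip_locked(tasks)   # takes task 0 and keeps it locked
second = dequeue_skip_locked(tasks)  # task 0 is locked, so it is skipped
print(first.task_id, second.task_id)
```

Replacing `acquire(blocking=False)` with a blocking `acquire()` would give the wait-on-conflict behaviour, and raising an exception when the non-blocking attempt fails would give the fail-on-conflict one.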

Here is an @OracleDatabase ORA-2020 for 2️⃣0️⃣2️⃣0️⃣ 🥳. And a New Year's resolution: don't forget to end your distributed transactions, even when you didn't modify any data.

3

17

109

A Key-Value "get" is in single-digit millisecond response time. No difference in #NoSQL vs. #RDBMS as long as the table is partitioned. Here 11ms to read an item from a 7TB table in @OracleDatabase. Because B*Tree #indexes maintain O(logN) and #hash #partitioning reduces it to O(1) 😎

6

20

107
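The O(logN) versus O(1) point in the tweet above can be illustrated with some arithmetic. This is a rough sketch under loud assumptions: a fanout of 200 entries per index block and partitions of about one million rows are made-up numbers, `btree_height` is a hypothetical helper, and the height stays constant only if the number of hash partitions grows with the data.

```python
def btree_height(rows, fanout):
    """Smallest number of levels h such that fanout**h >= rows (integer math)."""
    height, capacity = 1, fanout
    while capacity < rows:
        capacity *= fanout
        height += 1
    return height


FANOUT = 200                 # assumed index entries per block
ROWS_PER_PARTITION = 10**6   # assumed target size of one hash partition

for rows in (10**6, 10**9, 10**12):
    global_height = btree_height(rows, FANOUT)        # one index over all rows
    partitions = rows // ROWS_PER_PARTITION           # partition count grows with data
    local_height = btree_height(rows // partitions, FANOUT)  # one index per partition
    print(rows, global_height, partitions, local_height)
```

Under these assumptions the single index gets deeper as the table grows, while each per-partition index keeps the same height, because hashing the key picks the partition in constant time and the partition size is bounded.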

This is an @OracleDatabase .rpm accessible from everywhere without having to manually click the "I accept the Oracle License Agreement". Like just: yum install -y _ Huge thanks 🙏 to @GeraldVenzl for the long work on that 👏

2

38

107

You know why RDBMS are not #serverless? Because they add a buffer cache to reduce I/O. And a library cache to use less CPU. Warming up a #cache and keeping it between peaks of activity is actually a #feature. To use less resources and get higher and predictable performance.

5

19

99

Quickly find the bottleneck @nixcraft.

Wanna see which process is the bottleneck in your Linux command line chain - #pSnapper can help: Tar spending most of its time *waiting* writing output to STDOUT. Gzip is 100% on CPU and consumes as fast as it can. Gzip's CPU usage hits the bottleneck!

0

26

97

If you think that Year #2020 brings only bad things, there's something that will change your mind. 🎈Predicate Information is finally visible @OracleDatabase 20c #awrsqrpt 🙌 (Huge thanks to @vldbb and all who voted on 🙏)

4

21

92

Me to wife exactly one year ago: don’t worry this Ethernet cable through the corridor is just temporary to see if zoom connection is better 🤣 #stillThere

11

2

92

The beauty of @OracleDatabase MVCC implementation. You can:
- get data from the past (select … as of timestamp)
- query it with the past value of the key
- and still use index access to it 😎
It is like a time machine, implemented at block level (data blocks and index blocks)

3

23

84

A dump is not a backup. A dump is not a backup. A dump is not a backup. A dump is not a backup. A dump is not a backup.

@samokhvalov @ascherbaum @the_hydrobiont For your information, I use this tutorial from Digital Ocean to show my students they need to be very careful about any tutorial they can find on the internet regarding postgres. No, pg_dump is not a backup. It's a data export.

13

14

82

An RDBMS should allow:
create table demo (n int unique);
insert into demo values(1);
insert into demo values(2);
update demo set n=n+1;
Without error. I'll let you run this on @MySQL @mariadb @PostgreSQL @_sqlite_, then with commercial databases.

12

24

78
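The four statements from the tweet above can be tried locally with a small script. This is a sketch that uses SQLite only because it ships with Python's standard library; `run_demo` is a hypothetical helper, and whether the UPDATE raises an error depends on the engine and version, so the script reports the outcome instead of assuming one.

```python
import sqlite3


def run_demo():
    """Run the statements from the tweet and report what the engine did."""
    conn = sqlite3.connect(":memory:")
    conn.execute("create table demo (n int unique)")
    conn.execute("insert into demo values(1)")
    conn.execute("insert into demo values(2)")
    try:
        # After the statement completes, the values 2 and 3 are still unique.
        # An engine that checks uniqueness row by row may still reject it.
        conn.execute("update demo set n=n+1")
        outcome = "ok"
    except sqlite3.IntegrityError as exc:
        outcome = f"error: {exc}"
    rows = [n for (n,) in conn.execute("select n from demo order by n")]
    conn.close()
    return outcome, rows


outcome, rows = run_demo()
print(outcome, rows)
```

An engine that validates the unique constraint at the end of the statement leaves the table holding 2 and 3. One that validates after each row can fail on the first row it touches and leave 1 and 2 in place.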

When @OracleDatabase was used for the construction of @CERN LEP (large electron-positron collider), predecessor of LHC (large hadron collider), total data was 2GB. Today: 2PB in Oracle databases, with Maximum Availability Architecture. #AmazingTechnology.

0

28

83

@pioro @oraesque @brendantierney @RACMasterPM @floo_bar Ok, I didn't plan to make it public before DOAG, but we are there to share😀.

9

20

74

In 19c, any CDB, in any edition, without any option, can contain 3 PDBs. Even in Standard Edition you can clone, thin clone, refreshable clone, relocate online, unplug/plug, lockdown, throttle, isolate and #consolidate!

1

37

78

I'll talk about wait events at the @POUG_ORG workshop, and I'm thinking about an easy way to remember the wait event colors we see in grid control performance hub.

3

16

79

😢 To his team, his friends, his family. @vanpupi was an enthusiastic oracle community advocate, an always willing to help product manager, and a friend.

14

10

77

@MongoDB Real friends will patiently explain to n00b friends why relational model was invented. #consistency #security #abstraction #encapsulation #normalization #joins #multi-purpose model.

1

8

76

If I hear one more time that NoSQL semi-structured data stores are more agile than RDBMS, I'll write a blog post about SQL add column and Codd rule #9.

7

9

74
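The "SQL add column" point in the tweet above can be shown in a few lines. This is a minimal sketch using SQLite from Python's standard library; the `customer` table, its columns, and the `names` helper are hypothetical. Codd's rule 9 is logical data independence: a query written before the schema change should keep working after it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("create table customer (id integer primary key, name text)")
conn.execute("insert into customer (name) values ('Ada')")


def names():
    """A query written against the original schema; it never mentions email."""
    return [row[0] for row in conn.execute("select name from customer order by id")]


before = names()

# Schema change: existing rows are not rewritten by the application,
# they simply read NULL for the new column.
conn.execute("alter table customer add column email text")
conn.execute("insert into customer (name, email) values ('Grace', 'grace@example.com')")

after = names()  # the old query runs unchanged
emails = [row[0] for row in conn.execute("select email from customer order by id")]
print(before, after, emails)
```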

Finally an official @OracleDatabase XE (the free version) container image available without the need to log in: podman pull gvenzl/oracle-xe. A medium and large version for more features 👍. Thanks @GeraldVenzl

2

23

71

Sometimes joining two tables is cheaper than fetching from a single table. Do not try to reduce joins in a premature optimization attempt. What matters is how rows are stored and accessed. Not which table they belong to. That's how SQL works.

@houlihan_rick @vlad_mihalcea @databasestar The cost depends on many other things than being from one or many tables. Here is a simple example of printing 100000 "Hello World" from one or two tables, where the merge join is faster:

4

9

73

In @OracleDatabase 20c the ORACLE_HOME will be Read-Only - the feature introduced in 18c is now the only way to go

4

13

74

This place is 30 min walk from where I give the @dbiservices Oracle Tuning workshop tomorrow. Shouldn’t we just bring the laptops there? All labs are in the cloud.

6

3

72

You don't scale a SQL database with a distributed filesystem. You scale out a DB with a distributed DB. It has to distribute all read/write intents, locks, and transaction status. Monolithic DBs write this into a single machine (shared buffers in RAM).

@abreng01 @eatonphil Because it is a misconception that databases write their changes into files. They write to the shared buffers. This is what doesn't scale-out in monolithic databases: the shared memory. Scaling out the filesystem improves checkpoint and recovery time but doesn't distribute the DB.

1

5

68

It was the time of year when contributions are evaluated for the @Oracle ACE levels. There are always some questions about this, and some misconceptions, so I've written a blog post to explain what the @oracleace program is not:

6

19

70

😭 My demo on roundtrips between client and server takes 2x longer when the DB runs on Docker.
😲 Most of the CPU time wasted in docker-proxy (paravirtualisation spin lock slow path?).
🤔 This @Docker thing is a bad joke.
😡 Wasting my time with software delivered as docker image only

8

13

69

More than NoSQL vs. RDBMS, the big difference in database engines today is B*Tree vs. LSM-Tree indexing, which prioritize high-throughput reads or writes respectively👇

📚 Looking for some weekend reading? We published a two part blog series on #database storage engines for busy #developers. Check it out: . Part 1: The Basics. Part 2: Advanced Topics. #DistributedSystems #NoSQL #SQL #RDBMS.

2

9

69

#OOW17 Oracle XE 18c, and a new one each year (19c...), limited to 12GB but with all EE features (incl. basic compression).

9

41

68

@jdarrow @CacheFlush shutdown abort on the primary 🤔 The story doesn't tell if there is an FSFO observer to transparently fail over to the standby, ensuring application continuity 😜

3

11

67