Al

@Al_3194

Followers

1,522

Following

494

Media

563

Statuses

3,182



So.. $TAO is about to be listed on two new exchanges on December 14th. 👀

@BitrueOfficial and @UpholdInc. Nice.

$TAO

13

57

284

.@neural_internet just dropped sneak peeks of their upcoming os front end. 👀

"The goal is to give every validator easy access to the GPUs available on Subnet 27. Validators can use the compute for free or start monetizing the front ends to provide compute to clients."

$TAO

12

51

224

For a long time QNT has been my main bag since I bought in at 25$.

Then $TAO replaced it.

Recently I sold all QNT I had for TAO at 51$, 4 days before the pump.

I'm glad I did it, I was kind of married to that bag.

I just realized #Bittensor is the tech we needed.

25

10

198

A leading AI research organization, @NousResearch, launched a finetuning competition on Bittensor.

Prize: 203 TAO/day (~$127k) shared among the top performers.

As subnet owner, Nous earns 89 TAO/day (~$56k).

There's no way the OS AI community won't notice or be interested.

$TAO

8

55

193

Another application being built on #Bittensor. 👀

WikiTensor powered by the Bittensor network and built by @NorthTensorAI.

$TAO

18

44

178

5,649,641 TAO minted so far, and only 0.16% are available to buy on exchanges after a 3x from 50$.

Let that sink in.

$TAO

4

37

175

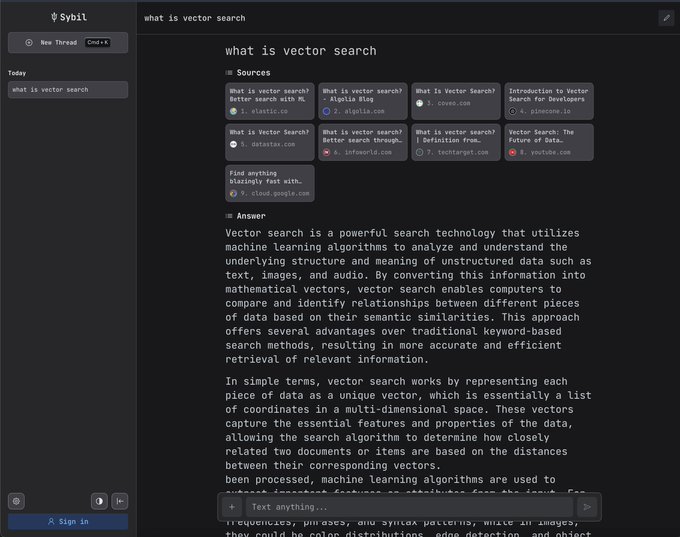

New product built by @0xcarro on #Bittensor using SN4. 🔥

I'm pretty sure I'll be using it daily from now on.

$TAO

11

35

162

The @neural_internet team is expanding! 👀

Neural Internet grows with seven new hires joining the team to build decentralized compute on the Bittensor network. 🔥

"Some of these individuals bring their experience from companies such as OpenAI, NVIDIA and Algorand!"

$TAO

13

37

148

Current distribution of $TAO on #Bittensor.

Subnets that are not yet receiving emissions include:

SN7 -> Storage

SN10 -> MapReduce

SN15 -> Blockchain Insights

SN16 -> Audio Generation

SN17 -> Petals

SN20 -> Oracle

SN23 -> PrimeNet

SN25 -> Bitcurrent

SN27 -> Compute

$TAO

3

26

143

On the 3rd of January 2009 the genesis block of #Bitcoin was mined.

12 years later, history repeated itself with the #Bittensor genesis block.

Same values, different mission.

$TAO

8

21

128

.@NousResearch announces version 0.2 of their finetuning subnet. 👀

"Introduction of multiple concurrent competitions." 🔥

"Alongside the existing Mistral-7b competition, we've started a new Gemma-2b competition, to create a highly efficient yet capable small model."

$TAO

5

35

124

.@zxocw, founder of @polychain, said in a statement shared with @FortuneMagazine:

"It is a radical new use case for blockchains that is unprecedented."

$TAO

4

49

130

.@opentensor just released the new Bittensor Wallet for iOS. 👀

The link is available in the announcement channel of the official Discord.

$TAO

5

20

121

Gobble Gobble. $TAO

2

9

119

6 days left until the release of the Bittensor documentary. 🔥

Like the rest of the community, I'm beyond excited to watch it.

Thank you @evert_scott for making this a reality, I'm sure it was a lot of work.

It will be an excellent resource/introduction for people curious

6

12

119

"We have already agreed with a 1m+ user Discord bot provider to implement BitTranslate solutions."

$TAO

10

27

105

Every single time I see this, I think of the incredible value these subnets will represent in a few years.

If you've seen the pace of development so far, you know that #Bittensor can become a behemoth in no time.

$TAO

15

16

102

New dashboard for the S&P 500 Oracle Subnet, owned by @FoundryServices. 👀

"Easily access subnet documentation as well as a miner dashboard to view performance metrics."

$TAO

1

27

114

Check out this clean and short explanation of #Bittensor made by ViktorThink.

Here's the link to the full version (also presenting NicheNet).

$TAO

19

38

102

Bittensor is incentivizing miners like @TensorplexLabs to train better foundational models through SN9, the pretraining subnet.

As a result, a 7b model is outperforming Llama 7b, Llama2 7b, and Falcon 7b on the benchmarks below.

It works, and it's just getting started.

$TAO

4

20

110

#bitcoin made us reconsider how we think of money.

#bittensor will do the same for AI.

$TAO

1

16

106

.@NousResearch is "thinking about diversifying the subnet to have multiple concurrent competitions going on the same time." 👀

$TAO

2

15

101