AI at Meta

@AIatMeta

Followers: 601,752
Following: 272
Media: 946
Statuses: 2,297

Together with the AI community, we are pushing the boundaries of what’s possible through open science to create a more connected world.

Joined August 2018

We’re pleased to introduce Make-A-Video, our latest in #GenerativeAI research! With just a few words, this state-of-the-art AI system generates high-quality videos from text prompts.

Have an idea you want to see? Reply w/ your prompt using #MetaAI and we’ll share more results.

Meta AI presents CICERO, the first AI to achieve human-level performance in Diplomacy, a strategy game that requires building trust, negotiating and cooperating with multiple players.

Learn more about #CICERObyMetaAI:

Today we’re introducing SceneScript, a novel method from @RealityLabs Research for reconstructing environments and representing the layout of physical spaces.

Details ➡️

SceneScript is able to directly infer a room’s geometry using end-to-end machine

Today we’re releasing V-JEPA, a method for teaching machines to understand and model the physical world by watching videos. This work is another important step towards @ylecun’s outlined vision of AI models that use a learned understanding of the world to plan, reason and

New on @huggingface: CoTracker simultaneously tracks the movement of multiple points in videos using a flexible, transformer-based design. It models the correlation of the points in time via specialized attention layers.

🤗 Try CoTracker ➡️

Open source AI is the way forward, and today we're sharing a snapshot of how that's going with the adoption and use of Llama models.

Read the full update here ➡️

🦙 A few highlights

• Llama is approaching 350M downloads on @HuggingFace. More than 10x

With the release of Llama 3.1 405B, @TogetherCompute built LlamaCoder, an open source web app that can generate an entire app from a prompt. The repo has now been cloned by hundreds of devs on GitHub and starred 2K+ times. More on this project ➡️

New AI research from Meta – CoTracker3: Simpler and Better Point Tracking by Pseudo-Labelling Real Videos.

More details ➡️

Demo on @huggingface ➡️

Building on our previous work on CoTracker, this new model demonstrates impressive

Using structured weight pruning and knowledge distillation, the @NVIDIAAI research team refined Llama 3.1 8B into a new model, Llama-3.1-Minitron 4B.

They're releasing the new models on @huggingface and have shared a deep dive on how they did it ➡️

30

356

2K

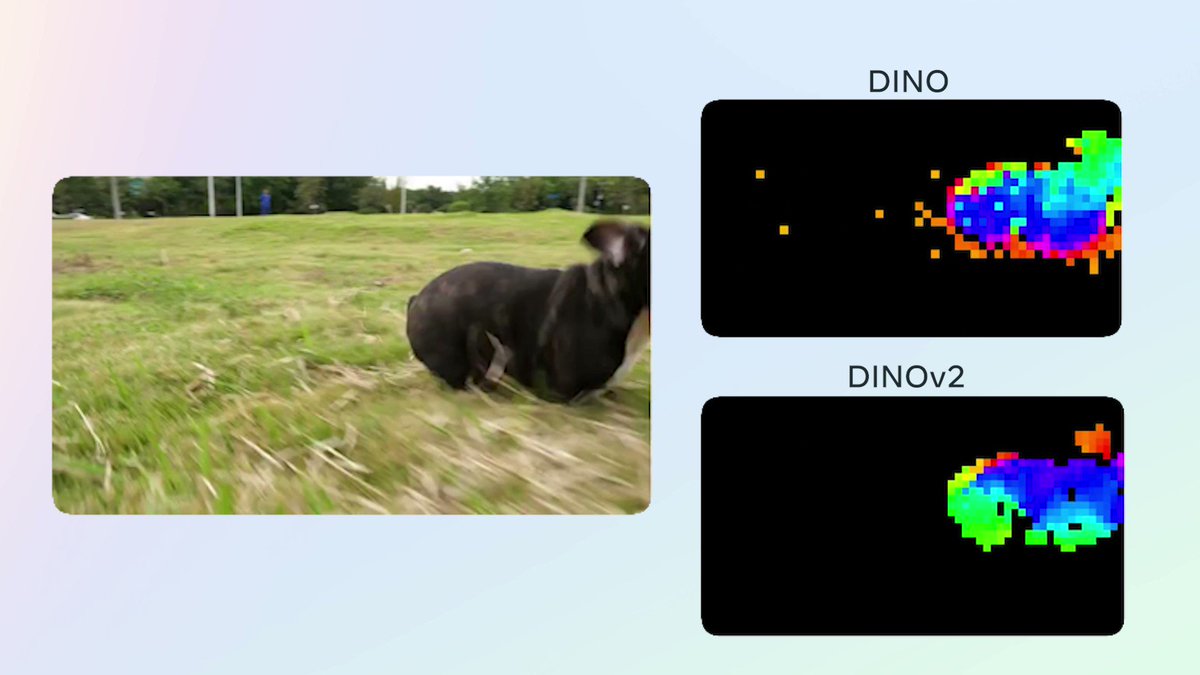

Today we're releasing our work on I-JEPA, a self-supervised computer vision model that learns to understand the world by predicting it. It's the first model based on a component of @ylecun's vision to make AI systems learn and reason like animals and humans.

Details ⬇️

We’re happy to work with @oasislabs on this important project announced today that will advance fairness measurement in AI models.

As @Meta’s technology partner, Oasis Labs built the platform that uses Secure Multi-Party Computation (SMPC) to safeguard information as Meta asks users on @Instagram to take a survey in which they can voluntarily share their race or ethnicity

21

204

749

66

470

1K

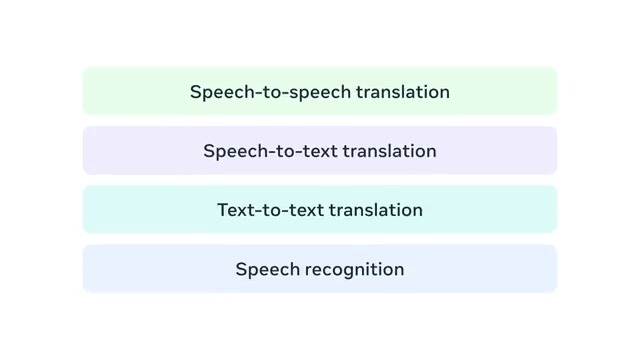

Newly published today in @Nature: No Language Left Behind (NLLB) is an AI model created by researchers at Meta that can deliver high-quality translations directly between 200 languages, including low-resource languages.

Read more in Nature ⬇️

Read about new developments in deep learning from authors and researchers Daniel A. Roberts (@danintheory), Sho Yaida (@Shoyaida) and Boris Hanin (@BorisHanin) in their book The Principles of Deep Learning Theory: An Effective Theory Approach to Understanding Neural Networks. 👇

"Exo's use of Llama 405B and consumer-grade devices to run inference at scale on the edge shows that the future of AI is open source and decentralized." - @mo_baioumy

2 MacBooks is all you need.

Llama 3.1 405B running distributed across 2 MacBooks using @exolabs_ home AI cluster

It's been exactly one week since we released Meta Llama 3. In that time, the models have been downloaded over 1.2M times, we've seen 600+ derivative models on @HuggingFace, and much more.

More on the exciting impact we're already seeing with Llama 3 ➡️

Facebook AI and @CarnegieMellon researchers have built Pluribus, the first AI bot to beat elite poker pros in 6-player Texas Hold’em. This breakthrough is the first major benchmark outside of 2-player games, and we’re sharing specifics on how we built it.

We're releasing code for a new approach to generating recipes directly from food images. This produces more compelling recipes than retrieval-based approaches and improves performance with respect to previous baselines for ingredient prediction.

#CVPR2019
