Logan Kilpatrick

@OfficialLoganK

Followers

112,863

Following

2,044

Media

364

Statuses

6,364

Lead product for @Google AI Studio, working on the Gemini API, and AGI, my views!

Joined April 2011

Today is a huge day for developers. 🤯

- ChatGPT API released (10x cheaper)

- Whisper available in the API

- Overhauled data usage policy

- Focus on stability

And more!

Here’s a quick thread on everything we shipped today @OpenAI 🧵

123

1K

8K

Excited to share I’ve joined @Google to lead product for AI Studio and support the Gemini API.

Lots of hard work ahead, but we are going to make Google the best home for developers building with AI.

I’m not going to settle for anything less.

575

187

5K

Yesterday was my last day @OpenAI.

I spent the last year and a half putting my heart and soul into supporting developers every single day.

I’m going to miss all the amazing folks I got to work with, y’all are doing such important work, keep shipping ♥️

Stay tuned…

492

124

4K

Today is the biggest day ever for developers building with @OpenAI

We are releasing new models, APIs, open source models, and more. Full details in 🧵

73

316

3K

A note to @OpenAI developers 🫶:

I wanted to express my appreciation for all the warm, thoughtful, and supportive messages I got and I’ve seen posted across the community.

Despite a moment of uncertainty, our commitment to developers remained steadfast.

In the meantime,

198

193

2K

Beyond excited to share that today is my first day @OpenAI where I’ll be their first Developer Advocate and helping lead/build Dev Rel! 🥳

I’ll be supporting the developer community using/building with ChatGPT, GPT-3, DALLE, the API, and more! 🚀

163

121

2K

I have so much love and respect for my colleagues at @OpenAI.

Truly the most incredible group of people on the planet. This has been an utterly devastating last 3 days.

98

77

2K

Congrats to the @OpenAI team on o1 and o1-mini, the universe does not want things to be shipped, but you did it.

40

58

2K

Good news for @GoogleAI developers:

- Gemini 1.5 Flash price is now ~70% lower ($0.075 / 1M)

- Gemini 1.5 Flash tuning available to all

- Added support for 100+ new languages in the API

- AI Studio is available to all workspace customers

- Much more : )

246

272

2K

Anyone who thinks @iruletheworldmo is AGI or some advanced model has no idea how OpenAI operates as a company.

93

32

1K

New @Google developer launch today:

- Gemini 1.5 Pro is now available in 180+ countries via the Gemini API in public preview

- Supports audio (speech) understanding capability, and a new File API to make it easy to handle files

- New embedding model!

108

251

2K

We are so back! Check out the busy person introduction to large language models by @karpathy, just released 👀

36

212

2K

Incredible news for @OpenAI devs:

- new GPT-4 and 3.5 Turbo models

- function calling in the API (plugins)

- 16k context 3.5 Turbo model (available to everyone today)

- 75% price reduction on V2 embeddings models

And more 🤯🧵

90

180

1K

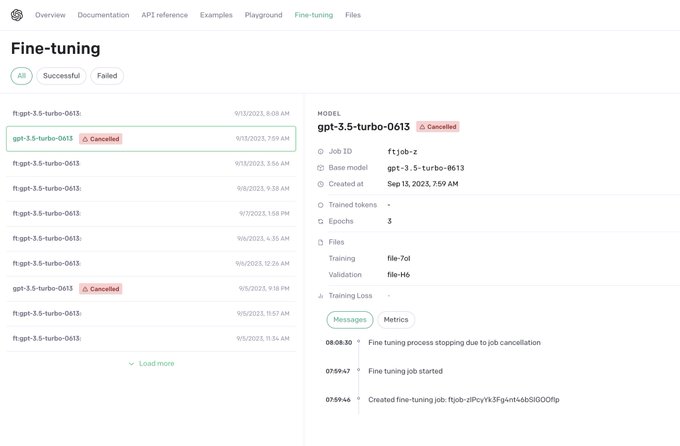

The @OpenAI fine-tuning UI is here! 🔥

You can now see your fine-tunes directly and will be able to create them through the UI in the months to come!

We also bumped the concurrent training limit from 1 to 3 so you can fine-tune more models!

60

190

1K

Great news for @OpenAIDevs, we are launching:

- Embedding V3 models (small & large)

- Updated GPT-4 Turbo preview

- Updated GPT-3.5 Turbo (*next week + with 50% price cut on Input tokens / 25% price cut on output tokens)

- Scoped API keys

90

155

1K

Gemini 1.5 Pro experimental (0801) is currently ranked #1 on LMSYS for both text and multi-modal, we are excited to see what you think!

Exciting News from Chatbot Arena!

@GoogleDeepMind's new Gemini 1.5 Pro (Experimental 0801) has been tested in Arena for the past week, gathering over 12K community votes.

For the first time, Google Gemini has claimed the #1 spot, surpassing GPT-4o/Claude-3.5 with an impressive

84

419

2K

28

42

406

On the way back from the office today, almost got into a car accident when I saw this, I had to pull off and take a picture. 🤯

Am I being pranked? #JuliaLang

42

127

1K

If you are a developer using the @OpenAI API, DALL-E, ChatGPT, etc. what can we do to make the developer experience better? 🧵👇

286

146

1K

Great news for @Google developers:

Context caching for the Gemini API is here, supports both 1.5 Flash and 1.5 Pro, is 2x cheaper than we previously announced, and is available to everyone right now. 🤯

30

159

1K

The @OpenAI cookbook is one of the most underrated and underused developer resources available today ⭐️

Here are 7 notebooks you should know about 🧵

29

172

1K

Exciting news for @OpenAI devs: we are close to a 1.0 release of the OpenAI Python SDK 🎊. You can test the beta version of 1.0 today, we would love to get your early feedback!

36

187

1K

Huge news for @OpenAIDevs: API key based usage is here 🥳

To get started, head to the API key page and generate a tracking token for each key which will enable per key tracking in the usage dashboard for all new requests!

77

103

1K

You could make a lot of money 💰 right now with a Generative AI / Large Language Model consultancy integrating @OpenAI into products and services.

Build a diverse portfolio of examples and there will be unlimited demand.

67

86

1K

Awesome new @OpenAI developer resources just dropped 👀:

- GPT guide

- GPT best practices (prompt engineering)

- Updated introduction

And more! If you use large language models, the GPT best practices is a must read! More info in 🧵

32

174

970

New: excited to publicly share the @OpenAI Forum, a place to discuss, learn, and shape AI.

The forum features online and in-person events along with paid activities that directly impact OpenAI models.

Details in 🧵, along with how to join. (1/n)

51

140

961