PyTorch

@PyTorch

Followers

419K

Following

820

Media

764

Statuses

2K

Tensors and neural networks in Python with strong hardware acceleration. PyTorch is an open source project at the Linux Foundation. #PyTorchFoundation

Joined September 2016

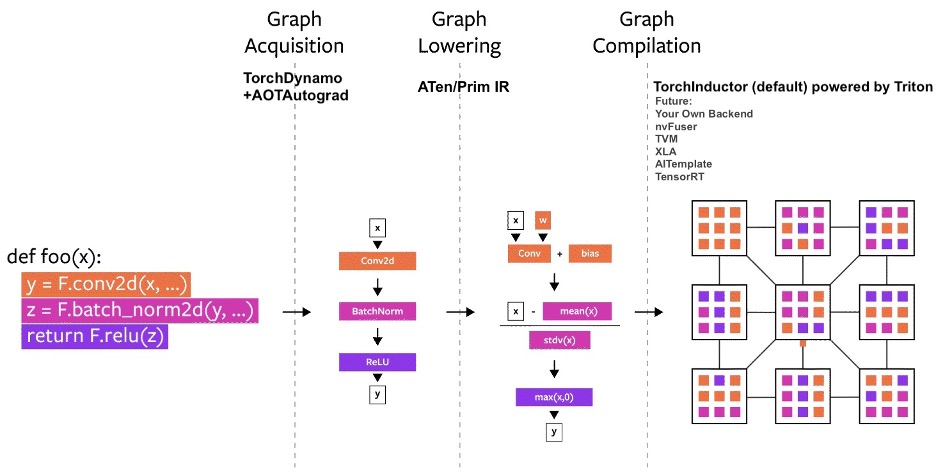

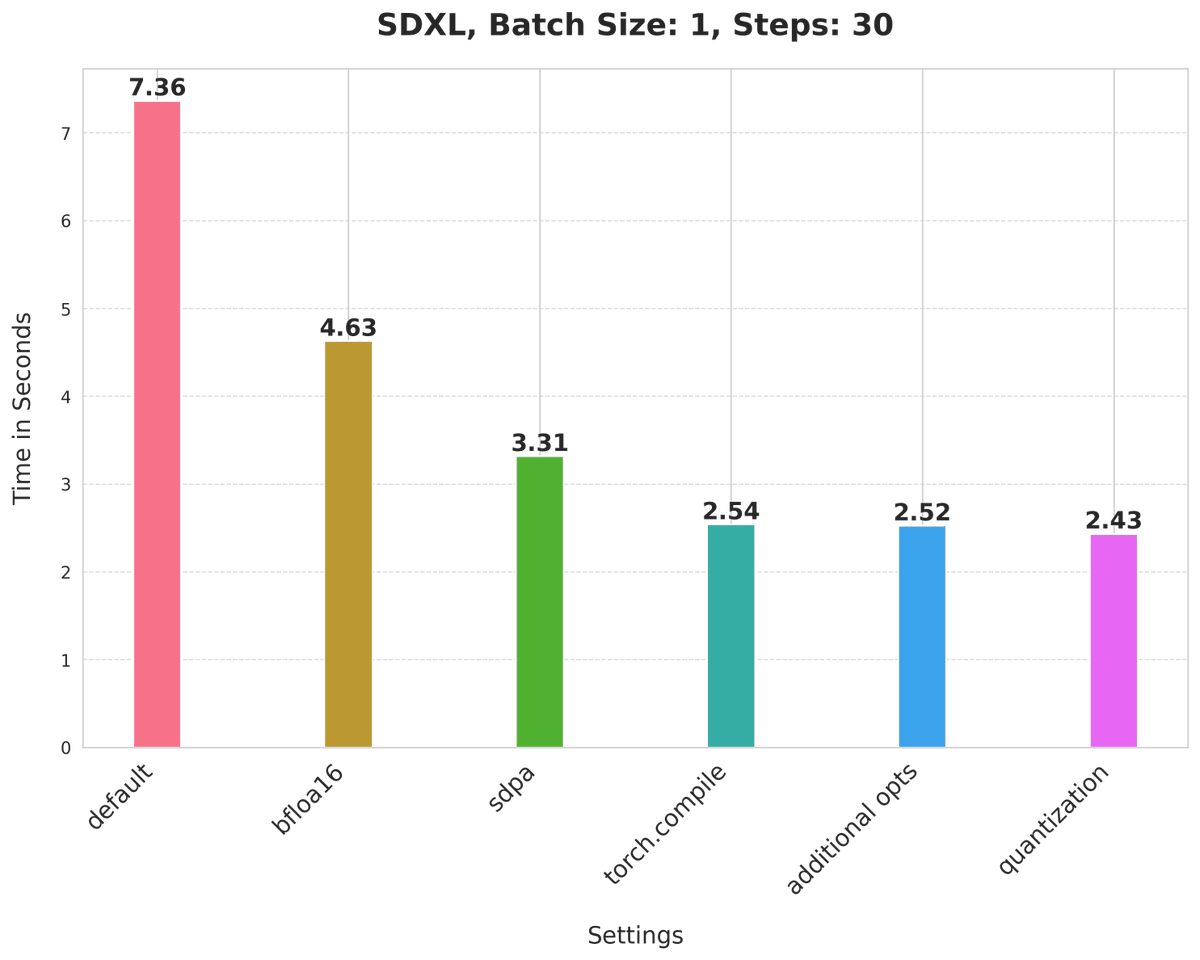

We just introduced PyTorch 2.0 at the #PyTorchConference, introducing torch.compile! Available in the nightlies today, stable release early March 2023. Read the full post: 🧵 below! 1/5

23

514

2K

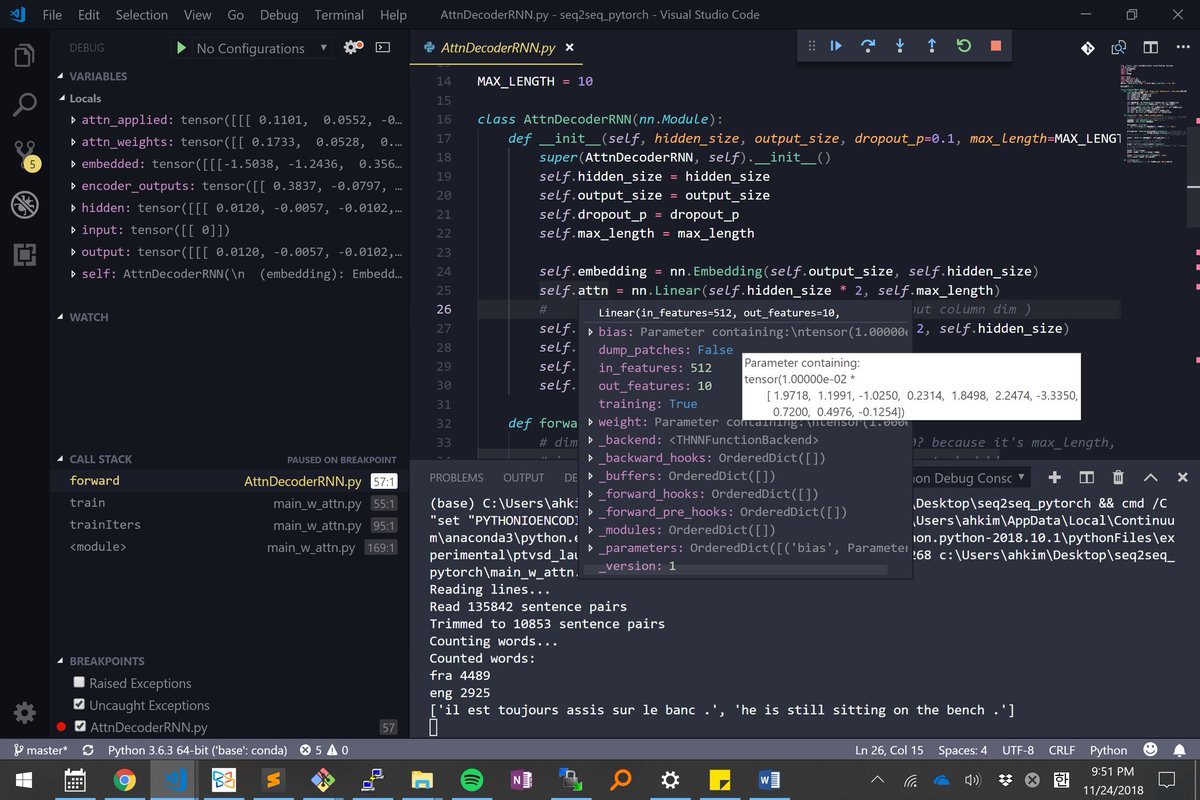

Microsoft VSCode integrates deeply with PyTorch out of the box. As @aerinykim highlights:

1. It shows values inside tensors (left panel).
2. By simply mousing over, you can see the variable's shape, weight, bias, dtype, device, etc.

21

423

1K

Learn PyTorch on GPUs for free via Google Colaboratory. Each PyTorch tutorial now has a link to open it on Colab, where you can interactively execute and play with code. #thanksgoogle

4

280

1K

Introducing torchchat 🔥. A lightweight library to run LLMs locally across mobile, desktop and laptops powered by PyTorch. Learn more: #llms #mobilellms #localai #pytorchllm #edge #ondeviceai

16

190

886

Happy 3rd Birthday TensorFlow! Looking forward to competing with and complementing you over the years. #HappyBirthdayTF.

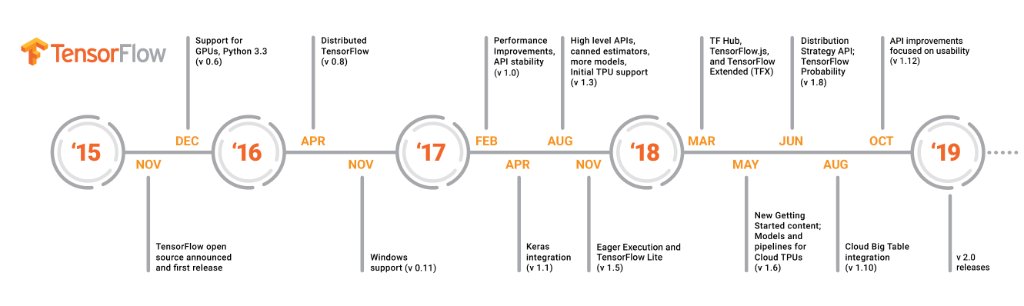

Happy 3rd birthday TensorFlow! We've come a long way since the first release in 2015 & TensorFlow wouldn't be the framework it is today without you. As we work on #TensorFlow20, look at all the features we've added over the years to make TensorFlow easier to use. #HappyBirthdayTF

12

114

872

Stochastic Weight Averaging (SWA) is a simple procedure that improves generalization in deep learning over Stochastic Gradient Descent (SGD). PyTorch 1.6 now includes SWA natively. Learn more from @Pavel_Izmailov, @andrewgwils and Vincent:

5

206

847
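For readers who want to see what the native SWA support mentioned above can look like in code, here is a minimal sketch using `torch.optim.swa_utils`. The toy data, the linear model, the learning rates, and the `swa_start` epoch are all assumptions chosen for illustration; they are not taken from the post or from any recommended recipe.

```python
import torch
from torch import nn
from torch.optim.swa_utils import AveragedModel, SWALR

torch.manual_seed(0)

# Toy regression data (hypothetical, for illustration only).
x = torch.randn(64, 4)
y = x.sum(dim=1, keepdim=True)

model = nn.Linear(4, 1)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# AveragedModel keeps a running average of the wrapped model's weights.
swa_model = AveragedModel(model)
# SWALR anneals the learning rate towards swa_lr once averaging starts.
swa_scheduler = SWALR(optimizer, swa_lr=0.05)
swa_start = 10  # assumed epoch at which weight averaging begins

for epoch in range(20):
    optimizer.zero_grad()
    loss = nn.functional.mse_loss(model(x), y)
    loss.backward()
    optimizer.step()
    if epoch >= swa_start:
        swa_model.update_parameters(model)  # fold current weights into the average
        swa_scheduler.step()

# Evaluate with the averaged weights rather than the last SGD iterate.
swa_loss = nn.functional.mse_loss(swa_model(x), y).item()
n_averaged = int(swa_model.n_averaged)  # number of weight snapshots averaged
```

A model with batch-normalization layers would normally also recompute its statistics for the averaged weights with `torch.optim.swa_utils.update_bn` before evaluation; the linear model in this sketch has none, so that step is left out.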

Disney uses PyTorch for animated character recognition and to speed up its video processing pipeline. @DTCITechnology engineers also contributed new features to the Torchvision domain library.

0

184

788

The team @WadhwaniAI has built a multi-task network that detects pest infestations in cotton crops. This technology is being put directly in the hands of more than 18,000 farmers across India using #PyTorch Mobile, TorchServe, and Weights & Biases.

11

171

762

vLLM Joins PyTorch Ecosystem 🎉 @vllm_project has always had a strong connection with the PyTorch project. Tight coupling with PyTorch ensures seamless compatibility and performance optimization across diverse hardware platforms. Read more:

9

108

730

Two creators of passion projects that transformed the landscape of how we code today — Linus Torvalds and @soumithchintala meet for the first time, sharing a smile and a love for the open source community. #PyTorchFoundation

10

72

656

The Global PyTorch Summer Hackathon is back! This year, teams can compete in 3 categories:

1. Developer Tools
2. Web/Mobile applications
3. Responsible AI Development Tools

Read more at: #PyTorchSummerHack

7

199

638

Today, Mark Zuckerberg announced the launch of the #PyTorchFoundation under the @LinuxFoundation & a board of @Meta @AMD @awscloud @googlecloud @Azure & @NVIDIAAI. With these leaders, learn how we're giving the AI community tools to accelerate innovation.

9

155

625

Stochastic Weight Averaging: a simple procedure that improves generalization over SGD at no additional cost. Can be used as a drop-in replacement for any other optimizer in PyTorch. Read more: guest blogpost by @Pavel_Izmailov and @andrewgwils

3

181

596

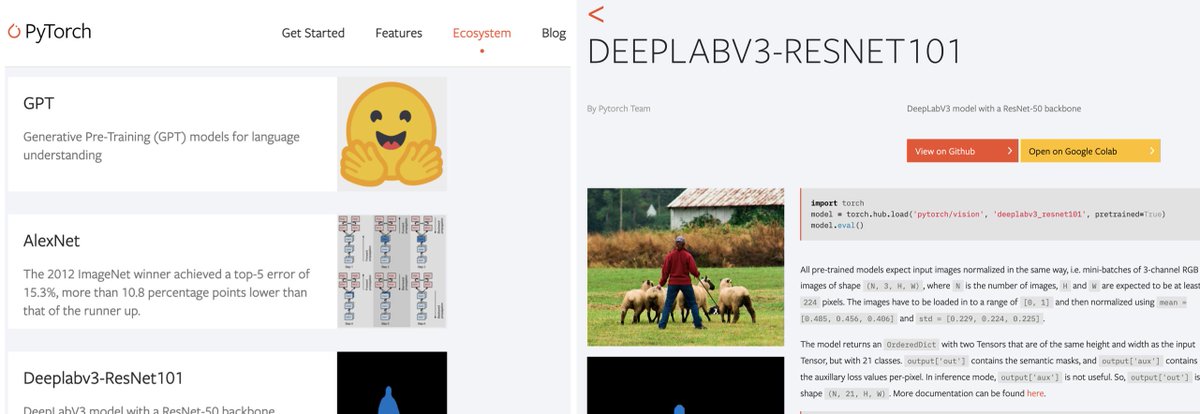

PyTorch Hub: reducing the friction in reproducing and building upon research.

- Pull models with 1 line of code, and a few more to use.
- Curated models. Open with Google Colab and @paperswithcode.
- Publish your models by sending a PR.

Blog: #ICML2019

1

220

555

PyTorch BERT models are now 4x faster, thanks to @nvidia.

Always amazed by what people do when you open-source your code! Here is pytorch-bert v0.4.0, in which:

- NVIDIA used their winning MLPerf competition techniques to make the model 4 times faster,
- @rodgzilla added a multiple-choice model & how to fine-tune it on SWAG,

and many others!

1

121

525

Introducing @PyTorchLive, an easy to use library of tools for creating on-device ML demos on Android and iOS. With Live, you can build a working mobile app ML demo in minutes. Learn more at and post your demo with #PyTorchLive.

11

149

530

Introducing "Learn the Basics", a guide to a complete ML workflow with detailed explanations on concepts like Tensors, DataLoaders, Transforms, and more. Thanks @sethjuarez, @subramen, @Cassieview, @shwars, @pythiccoder for your contributions! Click 👇

1

124

480

Our community member from Japan, Yutaro Ogawa, shares the PyTorch Tutorials in Japanese. Community member Mr. Ogawa (Information Services International-Dentsu (ISID), AI Transformation Center, @ISID_AI_team) has published a Japanese edition of the PyTorch tutorials.

1

112

478

Tensor Comprehensions are now integrated and interoperable with @PyTorch. Read our blog post to get started:

Tensor Comprehensions: an Einstein-notation-like language that transpiles to CUDA and is autotuned via evolutionary search to maximize perf. Know nothing about GPU programming? Still write high-performance deep learning. @PyTorch integration coming in <3 weeks.

2

197

459