Clay Bavor

@claybavor

Followers: 31,079

Following: 527

Media: 304

Statuses: 1,164

Co-Founder, Sierra

Mountain View, CA

Joined April 2009


This is what it would look like out the window of a 747 flying at 37k feet vs. an SR-71 vs. the New Horizons probe.

http://t.co/ChVsgK77Rl


We've taken what we've learned from Tango and are bringing AR to millions of Android phones, starting with Pixel and S8. Here's #ARCore.


This is what we're seeing through @NASA's new James Webb Space Telescope. Full version here:


So excited to announce the first Daydream standalone headset, the Mirage Solo from @Lenovo. It's simple to use, comfortable, and, with built-in positional tracking, incredibly immersive.


In case you were wondering, this is how a Super Star Destroyer and Manhattan compare in size.

http://t.co/FXBjQGGtuh

http://t.co/l0Opxzl0K7


It's one thing to read that a great white shark can be 18 feet long. It's another to see one up close in relation to the things around you. Introducing AR in Google Search. #io19


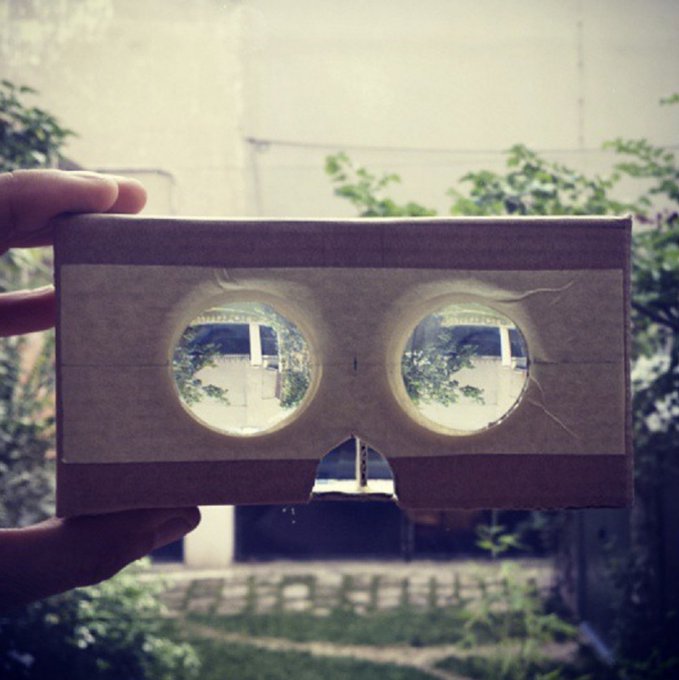

We built some neat technology that makes VR headsets "transparent," digitally. @googleresearch @googlevr


Really excited to have this start rolling out to Maps Local Guides. A big step toward overcoming the blue dot / "where am I, actually?" problem.

Check out an experiment that aims to improve the position and orientation accuracy of the little blue dot in Google Maps using global localization, a technique that combines Visual Positioning Service, Street View, machine learning and #AugmentedReality →


We had a lot of fun working on this one. :)

Who did it better? @childishgambino or our new Childish Gambino Playmoji? You decide. #pixeldanceoff #GRAMMYs


"Do the thing that can only be done in VR, not the thing that can also be done in VR." Sage advice for developers from @chetfaliszek.


Simulating Job Simulator at Google. We are absurdly excited to have @OwlchemyLabs joining us!


New version of ARCore rolling out today. Also, a preview of what's to come: persistent Cloud Anchors. Think of them as the save button for AR.

New #ARCore updates to Cloud Anchors and Augmented Faces enable more shared cross-platform AR experiences. Learn more →


Before AR/VR, I worked on apps like Gmail to help people be more productive at work. It’s one of many reasons I’m excited Glass is joining our team now. Today, we're launching Glass Enterprise Edition 2 to help businesses work better, smarter, and faster.


Portals between Scotland and San Francisco with #ARCore.

Prototyping portals... #arcore #portals #ar #prototype #experiments #unity #google #android #pixel #madewithunity #dev #design #interactive


"It was just me, and math, and Google." - Andy Weir, on how he wrote The Martian. cc: @andyweirauthor


Excited about the @TiltBrush launch on Oculus Quest today. Have at it!

Paint with an infinite canvas. Experience creativity, untethered. #TiltBrush is now available on #OculusQuest! ✨🎨🖌️ @OculusGaming
